Harness simplifies the transition from monolithic to microservices architectures by offering deployment strategies and Continuous Verification, enabling teams to deploy, test, and verify microservices independently and collectively, with a recent 'Barriers' feature ensuring consistent verification across all services.

Most organizations today are looking to break up their 'vintage' monolithic applications as they start to pursue Cloud, DevOps, and Continuous Delivery. In some respects, migrating to a microservice deployment architecture is a prerequisite for those initiatives.

This blog describes some of the deployment challenges around migrating from a monolithic to a microservices architecture.

The Monolithic Deployment Pipeline

Back in the good old days, monolithic apps might have had one or two artifacts and deployment pipelines were relatively simple like this one in Harness:

This pipeline has 1 artifact (Monolithic.war) and 4 stages {Development, QA, Manual Approval, Production}. Each stage (aka workflow) deploys the new artifact to its target environment, runs a series of tests, and then verifies all is well before proceeding to the next stage.

If one stage fails, the entire deployment pipeline fails and the artifact never makes it to production (thus saving your bacon). If the production deployment fails, Harness automatically rolls it back to the last working version (more saved bacon).
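The fail-fast behavior described above can be sketched in a few lines of Python. This is only an illustration of the control flow, not how Harness implements pipelines: `run_pipeline`, `stage_passes`, and the version strings are hypothetical names introduced for the example.

```python
from typing import Callable, List, Tuple

# The four stages of the monolithic pipeline described above.
STAGES = ["Development", "QA", "Manual Approval", "Production"]


def run_pipeline(
    artifact: str,
    stage_passes: Callable[[str, str], bool],
    last_good: str,
) -> Tuple[str, List[str]]:
    """Run the stages in order and stop at the first failure.

    Returns the version live in production afterwards, plus an event log.
    `stage_passes(stage, artifact)` is a hypothetical callback standing in
    for "deploy, test, and verify" in that stage.
    """
    log: List[str] = []
    for stage in STAGES:
        if stage_passes(stage, artifact):
            log.append(f"{stage}: ok")
            continue
        log.append(f"{stage}: failed")
        if stage == "Production":
            # The new artifact reached production, so restore the old one.
            log.append(f"Production: rolled back to {last_good}")
        # Any failure ends the pipeline; later stages never run.
        return last_good, log
    return artifact, log
```

A failure in QA leaves the last working version live and never touches production; a failure in production adds an explicit rollback step.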

Bottom line: It's relatively trivial to deploy, test, and verify monolithic applications.

This is all well and good for 2010. However, we now live in a world ruled by Containers and Kubernetes. Microservices are fashionable and EJBs are very much not.

Microservices Change Everything

Cloud-Native Applications are nothing more than a logical group of services, microservices, or functions.

The whole point of microservices is to break up application logic into small well-defined components that can be developed and deployed independently of each other, thus increasing the parallelism and productivity of teams.

Great, let's just have one deployment pipeline per microservice. Teams can then automatically trigger their own pipeline when a new artifact, build, or version exists. Job done?

Only it isn't, because in reality a microservices architecture has implicit dependencies between services. These dependencies make deployment pipelines, testing, verification, and rollback much more complex to manage.

Bottom line: It's trivial to deploy microservices, but it's massively complex to test, verify, and roll back microservices given their upstream and downstream dependencies.

What We Heard From Customers

Prior to using Harness, the customers we spoke with typically deployed many microservices in parallel to a given environment/cloud, and then manually verified the impact of each microservice independently and collectively. If one microservice deployment fails, customers might roll back one or all microservices related to the overall deployment.

At Harness, we automate this process and call it Continuous Verification. We allow customers to automatically verify the health (performance/quality) of each microservice independently as well as collectively, and if something fails we automate rollback locally or globally depending on the customer's preference.

Enabling Microservices Deployments With Harness

To help customers deploy their microservices, Harness offers several deployment strategies out-of-the-box:

- Basic (one service to one environment)

- Multi-Service (many services to one environment)

- Canary Deployment

- Blue/Green Deployment

- Rolling Deployment

Depending on your microservices architecture, teams, and circumstances there are several ways to deploy with Harness:

- Each microservice/team has its own deployment pipeline, and deployment pipelines can be chained to mirror dependencies

- One deployment pipeline with several multi-service deployment workflows for each stage (dev, QA, Prod)

- One deployment pipeline for each environment consisting of multiple parallel deployment workflows for each microservice; these pipelines can also be chained for Dev, QA and Prod.

For example, here is the last of these options, showing one pipeline with multiple microservice deployment workflows executing together as one parallel stage:

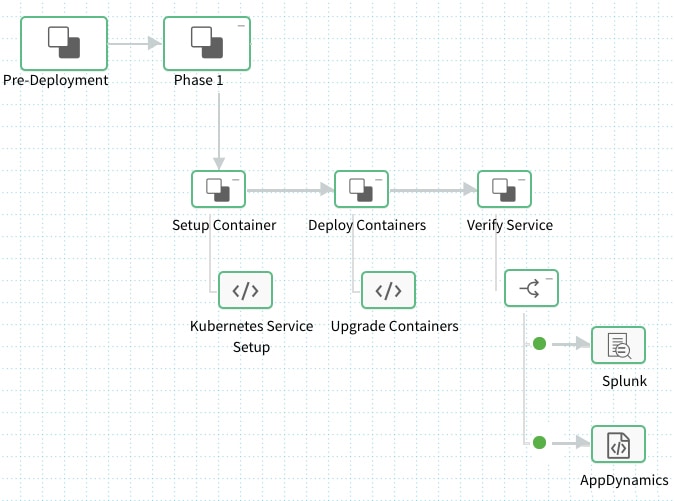

Each microservice executes its own deployment workflow in parallel. For example, here is what a typical microservices workflow looks like:

Each microservice artifact is first picked up, set up, and deployed using Kubernetes, and then each microservice is independently auto-verified using Splunk and AppDynamics by leveraging Harness Continuous Verification (machine learning-based verification). Harness has native verification support for the likes of AppDynamics, New Relic, Dynatrace, Splunk, ELK, Sumo Logic, Jenkins and so on.

Verifying Microservices Dependencies Post-Deployment is Hard

The above workflow works well for automating microservice deployment and independent verification, but it falls short for collective verification, because nothing guarantees that the other microservice deployments have completed successfully by the time verification starts.

It's possible you could start verifying one microservice while others are in mid-flight, so you're going to get inconsistent results unless all microservices deployments have completed and are behaving as expected in your environment. Once again, it's easy to deploy microservices but it's hard to verify them upstream and downstream.

Introducing 'Barriers' For Pipeline Flow Control

To overcome the above challenge, we recently shipped a feature called "Barriers" that lets customers control the flow of deployment pipelines so they can deploy and verify microservices independently, collectively, and safely.

Barriers basically block the execution of a deployment workflow until all other deployment workflows reach the same barrier. Once this happens, normal execution resumes.

If any deployment workflow fails or times out (does not reach the barrier), then Harness will automatically roll back by default. Rollback can be local to a specific deployment workflow or global across all deployment workflows in a pipeline. This allows customers to roll back one or all microservices during a failed deployment or verification.
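The same synchronization idea exists in most threading libraries. As a rough analogy only (this is not Harness code or configuration), the sketch below uses Python's standard `threading.Barrier` to model parallel workflows that wait for each other before verifying. The function `run_workflows`, the service names, and the "verified" / "rolled back" labels are assumptions made for illustration, and the sketch models only the global rollback case.

```python
import threading
from typing import Callable, Dict


def run_workflows(
    deploy_steps: Dict[str, Callable[[], None]],
    timeout: float = 5.0,
) -> Dict[str, str]:
    """Run one workflow per service; verify only if all reach the barrier."""
    barrier = threading.Barrier(len(deploy_steps))
    results: Dict[str, str] = {}
    lock = threading.Lock()

    def workflow(name: str, deploy: Callable[[], None]) -> None:
        try:
            deploy()
            # Block until every other workflow has also finished deploying.
            barrier.wait(timeout=timeout)
            outcome = "verified"  # collective verification would run here
        except threading.BrokenBarrierError:
            # Another workflow failed or timed out before the barrier.
            outcome = "rolled back"
        except Exception:
            # This deployment failed: break the barrier so peers stop waiting.
            barrier.abort()
            outcome = "rolled back"
        with lock:
            results[name] = outcome

    threads = [
        threading.Thread(target=workflow, args=(name, deploy))
        for name, deploy in deploy_steps.items()
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

If every deploy step succeeds, all workflows pass the barrier and proceed to verification. If one raises, or one takes longer than `timeout` to arrive, the barrier breaks and every workflow ends up in the rolled-back state.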

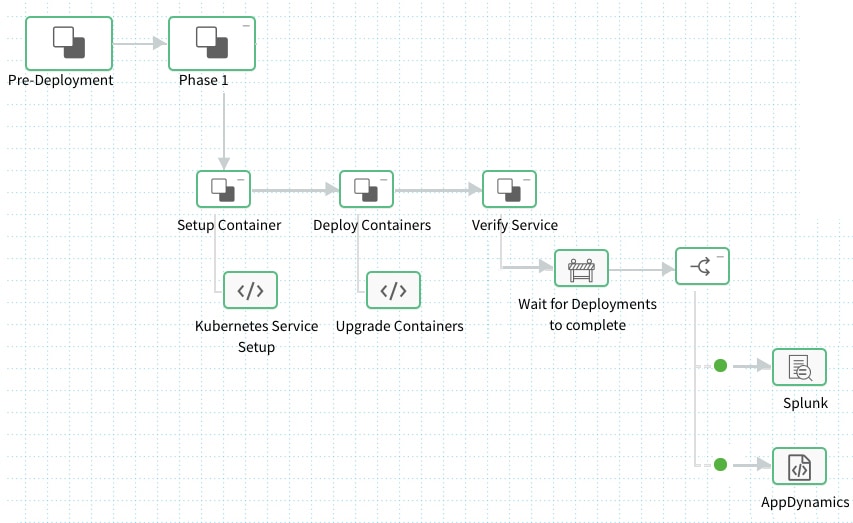

In the case of our microservices example, we can add a barrier "Wait For Deployments To Complete" to each of our microservice deployment workflows so that collective verification won't begin until all microservices have been deployed successfully. It would look something like this:

Our microservices deployment workflow now looks like this:

Our example pipeline would now execute many of the above workflows in parallel, one for each microservice. Once the "Deploy Containers" step completes, execution for each workflow would halt at our new barrier and wait until all other workflows have reached the same barrier. Once this happens, normal execution resumes and verification takes place, knowing that all microservices have been successfully deployed.

It's even possible to have multiple barriers layered in a deployment workflow or pipeline. For example, you might perform different types of deployment verification at different stages of a workflow. When you require consistency across microservices or workflows you simply add a new barrier to control the flow of execution.
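To make the layering concrete, here is a small Python sketch, again an analogy built on the standard library rather than anything Harness-specific. It places a barrier after every phase and records the order in which work happens. The function `run_layered`, the phase names, and the service names are hypothetical.

```python
import threading
from typing import List, Tuple


def run_layered(services: List[str], phases: List[str]) -> List[Tuple[str, str]]:
    """Run each phase for every service, with a barrier after each phase.

    Returns (phase, service) events in the order they were recorded.
    """
    # threading.Barrier resets once all parties pass, so one object can
    # serve as the barrier for every phase.
    barrier = threading.Barrier(len(services))
    events: List[Tuple[str, str]] = []
    lock = threading.Lock()

    def workflow(service: str) -> None:
        for phase in phases:
            with lock:
                events.append((phase, service))  # stand-in for real work
            # No workflow starts the next phase until all finish this one.
            barrier.wait(timeout=5.0)

    threads = [threading.Thread(target=workflow, args=(s,)) for s in services]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return events
```

Within a phase the services may finish in any order, but no event from a later phase is ever recorded before every service has completed the earlier one, which is the consistency guarantee that layered barriers are meant to provide.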

We had one customer roll out this new barrier feature last week so they can now deploy and verify 40 microservices to the same environment at the same time. If any microservice or verification fails, they automatically roll back. Try it for yourself with the Harness trial.

How do you deploy and verify tens of microservices today in production?

Cheers,

Steve.

@BurtonSays