Kustomize and Helm are essential tools for managing Kubernetes configurations and packages, respectively. They streamline deployment processes and reduce the complexity of handling many manifests across many clusters. Harness supports both, making Kubernetes deployments more efficient and simpler.

The Kubernetes ecosystem is still relatively new. Kubernetes first appeared on GitHub in the summer of 2014, and its 1.0 release arrived in the summer of 2015, so the pace of its advancements is still fresh in the minds of many. As with any piece of application infrastructure, operational tasks became important once substantial workloads started running on Kubernetes.

Kubernetes is built around resources, which are typically described in YAML manifests such as deployment.yaml. When experimenting with Kubernetes for the first time, you might deploy a single image, such as NGINX, and describe it in one manifest, e.g. a single deployment.yaml. Kubernetes is pluggable and its default behavior is easy to change. As a result, the number of resources (and YAML files) grows quickly for any substantial workload.
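For illustration, a single-manifest NGINX deployment like the one described above might look roughly like the following. The resource name `nginx`, the `app: nginx` label, the image tag, and the replica count are arbitrary example values, not taken from any particular setup.

```yaml
# deployment.yaml: a minimal, single-manifest NGINX Deployment (illustrative values)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx              # example name
  labels:
    app: nginx             # example label that other tooling could match on
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx           # must match the Pod template labels below
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
        - name: nginx
          image: nginx:1.25    # example image tag
          ports:
            - containerPort: 80
```

Applying it with `kubectl apply -f deployment.yaml` creates the Deployment. Each additional resource you need, such as a Service to expose NGINX or a ConfigMap for its configuration, typically adds another manifest.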

Two things are rising at once. The first is the number of manifests needed to deploy, for example in a microservice architecture. The second is the number of Kubernetes clusters to maintain and deploy to, each of which likely needs its own cluster-to-cluster differences (e.g. the networking stack). Together, they can easily overrun organizations with manifests. Enter two tools that tackle configuration management and package management, respectively, in the Kubernetes ecosystem: Kustomize and Helm.

What is Kustomize?

Kustomize is a configuration management tool for the Kubernetes ecosystem. Configuration management, as a core discipline, focuses on maintaining consistency across environments. As Kubernetes ushers in new paradigms and computing resources become more ephemeral, the number of Kubernetes clusters and environments keeps growing; even a cluster itself can be torn down and recreated. The beauty and the challenge of Kubernetes is that it can codify the operational tasks application infrastructure needs. More often than not, this increases the length and scope of Kubernetes manifests.

Kustomize is declarative and, in the project's own description, template-free. Rather than filling in placeholders, it works by transforming existing Kubernetes manifests. Base Kubernetes YAMLs are matched against Kustomize configuration (overlays and kustomization.yaml files) and patched according to the logic defined in those files. Many applications are moving to Kubernetes, and the clusters themselves also need maintaining. Kustomize helps achieve configuration management across both Kubernetes resources and the applications deployed to Kubernetes.

Kustomize Structure

Kustomize organizes changes to specific Kubernetes manifests around “where, what, and how.” The “where” is the base manifests, e.g. a deployment.yaml. The “what” is the overlays: small snippets of YAML describing a change, e.g. a replica count or volume mounts. The “how” is the kustomization.yaml files, which tie bases and overlays together.
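As a sketch of the “what,” an overlay that raises the replica count for a production environment could be a strategic merge patch like the one below. It assumes a base Deployment named `nginx`, since Kustomize identifies the resource to patch by `apiVersion`, `kind`, and `metadata.name`. The file path and the replica value are hypothetical.

```yaml
# overlays/production/replica-count.yaml (hypothetical path)
# A strategic merge patch: only the fields listed here change;
# everything else in the base Deployment is left untouched.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx        # identifies which base resource to patch (assumed name)
spec:
  replicas: 3        # the single change this overlay makes
```

The overlay's kustomization.yaml (the “how”) would point at the base and list this file as a patch. In recent Kustomize versions, that means a `patches:` entry with `path: replica-count.yaml`.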

Kustomize Files

Suppose you have not used Kustomize before but already have Kubernetes manifests (deployment.yaml, service.yaml, etc.) to use as a base. In that case, the kustomization.yaml files are where the Kustomize logic lives. Matching logic can target a specific resource in the base, e.g. by kind and name. More dynamically, it can match resources by the Kubernetes labels and annotations inside those base files.
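A minimal overlay kustomization.yaml might look like the following sketch. It assumes a hypothetical layout in which `../../base` holds the base manifests plus its own kustomization.yaml listing them. It uses a `patches` entry whose `target` selects resources by label rather than by name. The `patches` field with `target` selectors is available in recent Kustomize releases, including the versions bundled with recent kubectl. Older releases used `patchesStrategicMerge` and `patchesJson6902` instead.

```yaml
# overlays/production/kustomization.yaml (hypothetical layout)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization

# "Where": reuse the base manifests unchanged.
resources:
  - ../../base

# Place every resource in an example namespace.
namespace: production

# "How": apply an inline JSON 6902 patch to every Deployment
# carrying the label app=nginx, regardless of its name or file.
patches:
  - target:
      kind: Deployment
      labelSelector: "app=nginx"
    patch: |-
      # "add" sets the field whether or not the base already defines it
      - op: add
        path: /spec/replicas
        value: 3
```

Running `kubectl kustomize overlays/production` prints the patched manifests without applying them. `kubectl apply -k overlays/production` applies them to the cluster. Matching on a label keeps the overlay working even if resources are renamed, but only if the base manifests carry that label consistently.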

Pros of Kustomize

Kustomize is best compared against two alternatives. The first is the status quo of using no package or configuration manager at all. The second is Helm V2.

Using a templating engine or configuration management solution removes the burden of maintaining multiple copies of manifests that differ only in specific changes.

The status quo is to keep every variant of a manifest in source control. Small, nuanced differences then make it hard to tell, from file names alone, which manifest applies to which Kubernetes environment.

Since version 1.14, the Kubernetes command-line interface (CLI), kubectl, can run Kustomize natively (e.g. kubectl apply -k). Helm V2 requires an elevated-privilege pod named Tiller on your Kubernetes cluster. Kustomize, by contrast, can be up and running without that cluster dependency.

Cons of Kustomize

Like any technology, Kustomize has a learning curve and its own challenges when implementing and adopting it. Depending on the organization, the same flexibility that makes it useful can also be a deterrent. Kustomize ships with little convention out of the box and sticks closely to its core job of transforming manifests.

An end user can potentially change anything in a base manifest without explicit templating code. Because changes are matched by resource, label, or annotation, the end user needs detailed knowledge of what will change and where. Poorly crafted base YAMLs might lack the labels, annotations, or selectors that Kustomize files need to match against. Compared to Helm, Kustomize is not a full ecosystem (for example, it has no repository format of its own). Instead, it relies on source control management (SCM), such as a Git repository.

What is Helm?

Helm is viewed as the de facto package management solution for the Kubernetes ecosystem. Package managers focus on installing and uninstalling software packages. Helm streamlines the software installation lifecycle much as YUM/RPM/APT do in the Linux world, or Homebrew and Chocolatey do on Mac and Windows, respectively.

Helm was originally developed by Deis in 2015 and was showcased at the inaugural KubeCon. Organizations and vendors alike have widely adopted Helm as a mechanism for getting software on and off a Kubernetes cluster. Helm also defines a repository format, allowing Helm Charts to be shared and distributed rapidly. Several open communities host Helm repositories, and many vendors and projects host their own.

Helm Structure

Helm is built around Charts and Templates. Templates are combined with values, typically supplied in a values.yaml file or on the command line. Helm then renders them into valid Kubernetes manifests.
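To make the Template/values relationship concrete, here is a minimal sketch of a hypothetical chart's `values.yaml` and one of its templates. The chart layout, value names such as `replicaCount`, and the image tag are illustrative assumptions. `.Values` and `.Release.Name` are built-in objects that Helm exposes to templates.

```yaml
# values.yaml: default values for a hypothetical chart
replicaCount: 2
image:
  repository: nginx
  tag: "1.25"

# templates/deployment.yaml: Helm substitutes each {{ ... }} expression
# using the values above and built-in objects such as .Release
apiVersion: apps/v1
kind: Deployment
metadata:
  name: {{ .Release.Name }}-nginx
spec:
  replicas: {{ .Values.replicaCount }}
  selector:
    matchLabels:
      app: {{ .Release.Name }}-nginx
  template:
    metadata:
      labels:
        app: {{ .Release.Name }}-nginx
    spec:
      containers:
        - name: nginx
          image: "{{ .Values.image.repository }}:{{ .Values.image.tag }}"
          ports:
            - containerPort: 80
```

Running `helm template my-release ./my-chart` renders plain manifests locally, here a Deployment named `my-release-nginx` with two replicas. Passing `--set replicaCount=3` overrides the default without editing the chart. Unlike a Kustomize patch, only the fields the chart author exposed as values can be changed this way.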

Helm Charts

A Chart is Helm's primary packaging format. Because Charts are file-based and follow a convention-based directory structure, they can easily be stored in Chart Repositories. Charts are installed into and uninstalled from Kubernetes clusters. Similar to the image-to-container relationship, a running instance of a Chart is called a Release. Ultimately, installing a Chart means rendering its templates into Kubernetes manifests and applying them to the cluster.

When created, a Helm Chart must have a version number that follows semantic versioning. Charts can reference other Charts as dependencies, a core feature of any package manager. The more advanced features are Chart Hooks and Chart Tests. Chart Hooks let a Chart act at specific points in a Release’s lifecycle, such as before install or after upgrade. Chart Tests run commands against a Release to verify that it works.
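A Helm 3 `Chart.yaml` that declares a SemVer chart version and one dependency might look like this sketch. In Helm 3 (chart `apiVersion: v2`), dependencies are declared directly in Chart.yaml; Helm 2 charts used a separate requirements.yaml. The chart name, dependency, version constraint, and repository URL below are hypothetical placeholders.

```yaml
# Chart.yaml for a hypothetical chart (Helm 3 / chart API v2)
apiVersion: v2
name: my-web-app             # hypothetical chart name
description: Illustrative chart showing versioning and dependencies
type: application
version: 1.4.2               # chart version; must be valid SemVer 2
appVersion: "1.25.0"         # version of the packaged app; informational only
dependencies:
  - name: my-cache           # hypothetical dependency chart
    version: "~2.1.0"        # SemVer range: >= 2.1.0 and < 2.2.0
    repository: "https://charts.example.com/stable"   # placeholder repository
```

Running `helm dependency update` against the chart directory fetches a dependency version that satisfies the range into the chart's `charts/` directory before packaging or installing.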

Pros of Helm

Helm brings Kubernetes the same benefits that package managers bring outside of it. Helm provides an ecosystem and conventions for creating, sharing, and distributing instructions to install and uninstall software. That makes the software much easier to consume, which in turn increases its consumption.

Because Helm includes both a convention and a repository format, Chart creators can enforce templates and standards on consumers. DevOps or platform engineering teams can, for example, provide more complete Charts to their internal customers.

Cons of Helm

Many of Helm's cons are directed at Helm V2 and its introduction (and later removal, in Helm V3) of Tiller. Tiller was part of the Helm platform. It came out of the project’s integration with Google’s Deployment Manager and was designed to be a job runner, not too dissimilar to Cloud Foundry’s BOSH. In a nutshell, Tiller was the in-cluster portion of Helm that ran Helm's commands and Charts. Because Tiller had unfettered access to the Kubernetes cluster, it became a sore point for cluster security.

Until one of the final Helm V2 releases before Helm V3, Helm stored release information in Kubernetes ConfigMaps in plain text, and that information could include secrets and other sensitive values. This may have been acceptable for internal uses of Helm. As Helm Charts started to move to public repositories, however, the practice needed to change.

Lastly, Helm can be overkill for simple deployments. Helm brings its own complexity and acts as a release manager itself, which adds another layer to navigate. Organizations also want to standardize how they deploy and release. Migrating workloads that were easily deployed without Helm into Helm, purely for consistency, is taxing.

What Other Kubernetes Templating Tools Should I Look At?

Two main alternatives to Kustomize and Helm are Jsonnet and Skaffold.

Jsonnet/Ksonnet

Jsonnet is a templating language and engine. It takes an object-oriented approach to templating, which allows complex, relationship-based templates to be created. If you need replicas of something, simply create a new object and the backing template takes over. Ksonnet, created by Heptio, was built on Jsonnet specifically for Kubernetes. The team behind Ksonnet has since stopped supporting the project.

Skaffold

Skaffold is a more recent Kubernetes project from Google, and its scope is broader than that of Jsonnet, Helm, or even Kustomize. Because Skaffold has both build and deploy components, it is designed to be the single recipe a Kubernetes application needs. For the deployment phase, Skaffold can plug in Helm or Kustomize.

Improve Kubernetes Deployments with Harness

No matter your choice of Kubernetes deployment or templating methodology, Harness has your back. Whether you are new to Kubernetes or designing pipelines for the entire organization, Harness makes that journey much simpler.

Templating out Kustomize environments so users can pick which environment to deploy.

Harness has wide support for both Helm and Kustomize. Its abstraction model can deploy applications that are under templating control (e.g. a Helm or Kustomize deployment) as well as applications that are not.

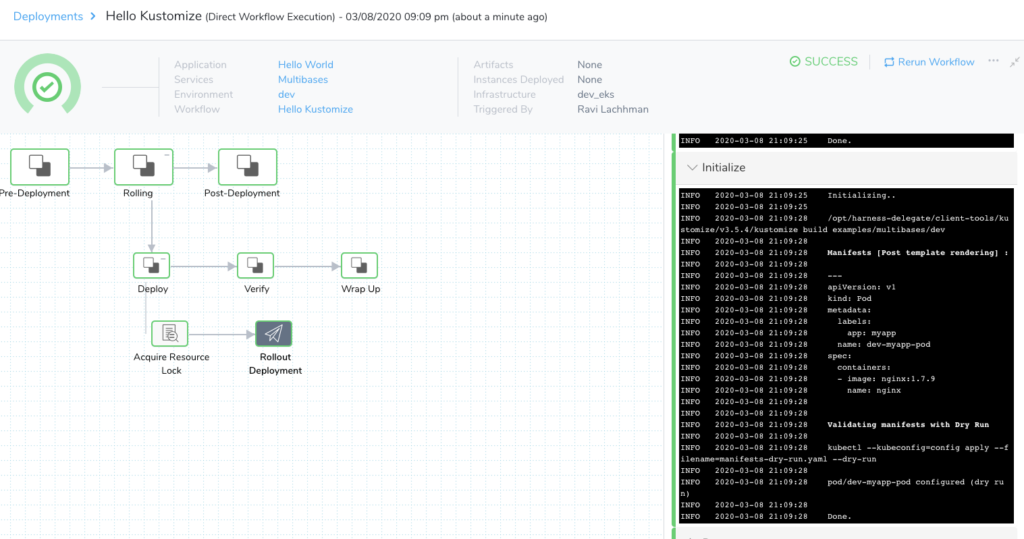

A successful Kustomize deployment with Harness.

Feel free to sign up for a Harness account to get started on, or supercharge, your Kubernetes journey!

Cheers,

-Ravi