Harness Blog

Featured Blogs

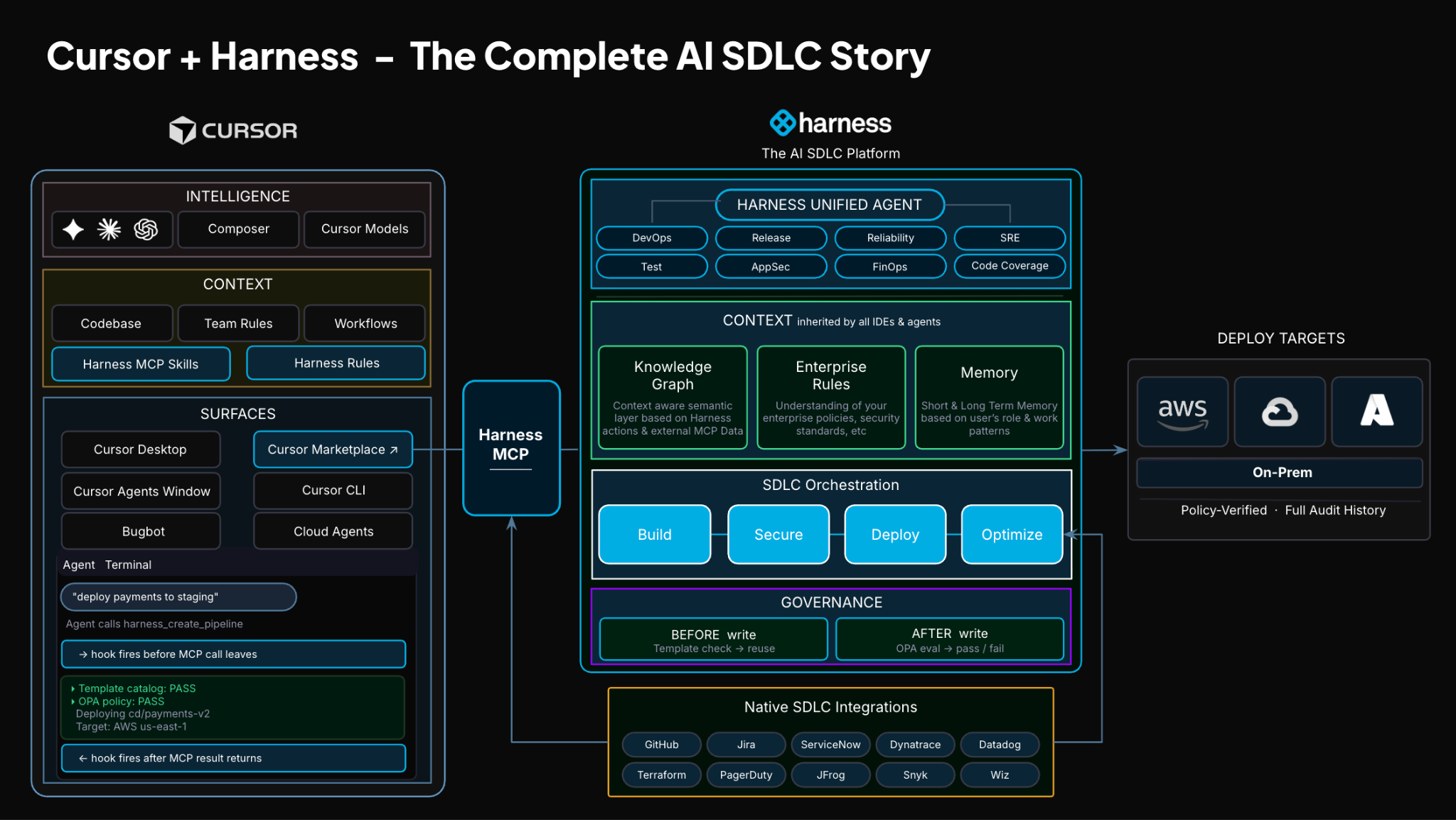

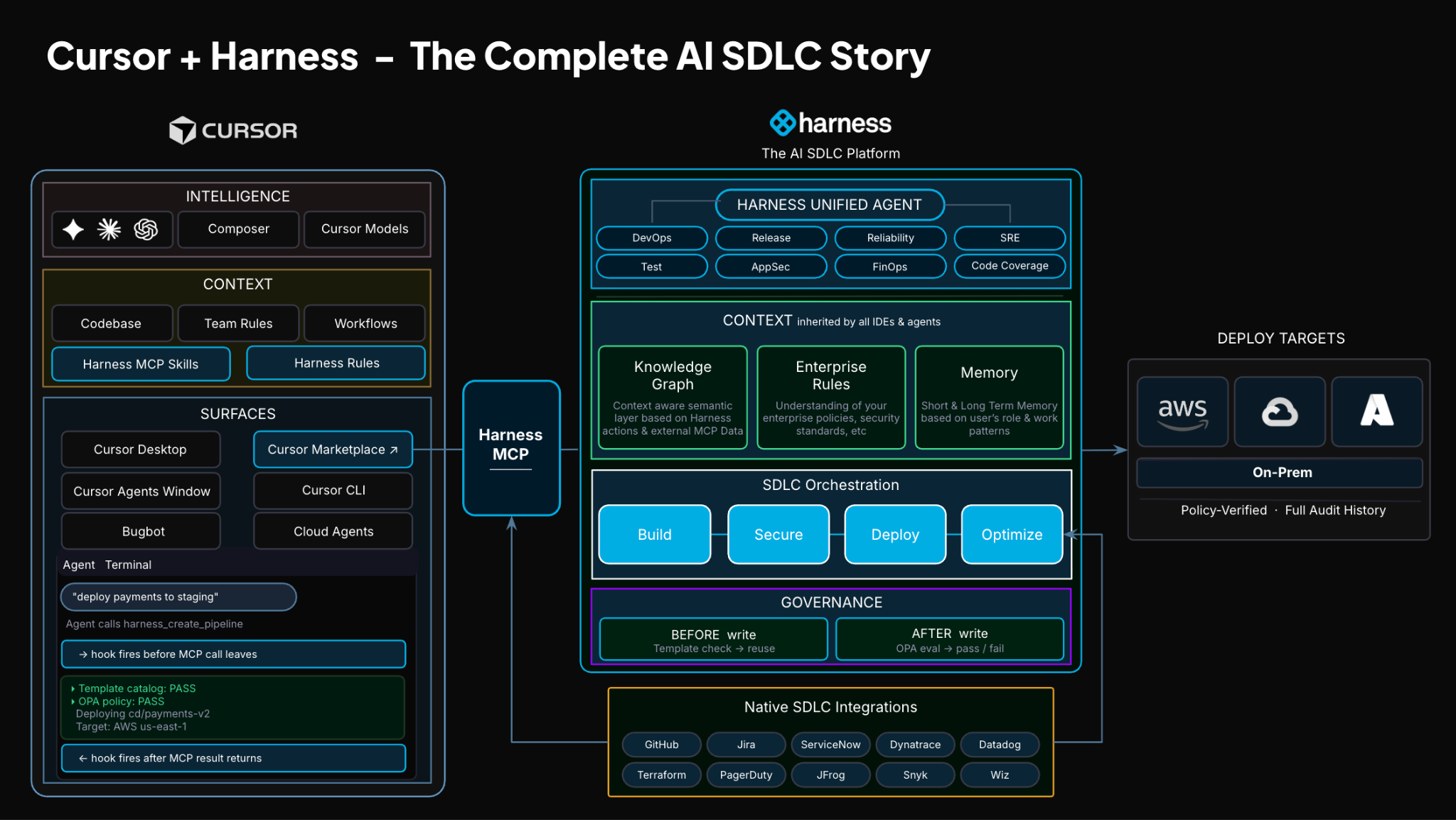

TLDR: Today, Harness is introducing the Harness Cursor Plugin, bringing the power of the Harness AI-native software delivery platform directly into Cursor. This integration, along with the Harness Secure AI Coding hook for Cursor, allows developers and AI agents to move from code changes to vulnerability detection, CI/CD execution, security validation, approvals, deployments, and operational insight without leaving the editor.

AI has completely changed how we write code. You can spin up functions, refactor entire files, and generate tests in seconds. The inner loop, writing and iterating on code, has never been faster. But the moment you try to ship that code, everything slows down. This is what we call the AI Velocity Paradox.

You are suddenly back to juggling pipelines, waiting on approvals, checking security scans, debugging failed runs, and bouncing between tools just to get a change into production.

That gap, between fast code and slow delivery, is what we kept running into. So we built something to fix it.

Today, we are introducing the Harness Plugin for Cursor, a way to go from PR to production without leaving your editor.

AI Made Coding Faster, But Delivery Did Not Catch Up

If you are using agentic coding tools, such as Cursor, you have probably felt this.

You can:

- Generate code instantly

- Understand unfamiliar repos faster

- Fix bugs and open PRs in minutes

But shipping still depends on everything outside your editor:

- CI/CD pipelines

- Security checks

- Approval flows

- Policy enforcement

- Deployment tooling

- Monitoring and debugging

And none of that got simpler just because AI showed up. In fact, AI makes the problem more obvious.

Now you can create changes faster than your delivery process can safely handle. And if those controls are not tight, you are introducing a whole new category of risk. Fast-moving code with fragmented governance.

AI did not break software delivery. It exposed how disconnected it already was.

What If You Could Just Ask

Instead of jumping between tools, what if you could just tell your editor what you want to happen?

Something like:

“Deploy PR #4821 to staging once the security scan passes, and Slack me if anything fails.”

That is the idea behind the Harness Cursor Plugin.

It connects Cursor directly to Harness, so you can trigger and manage your entire delivery workflow using natural language, right inside Cursor.

No tab switching. No manual orchestration. No guessing what is happening in the pipeline.

Some Sample Use Cases

Once connected, you can use Cursor to interact with your delivery system just as you do with your code.

For example, you can:

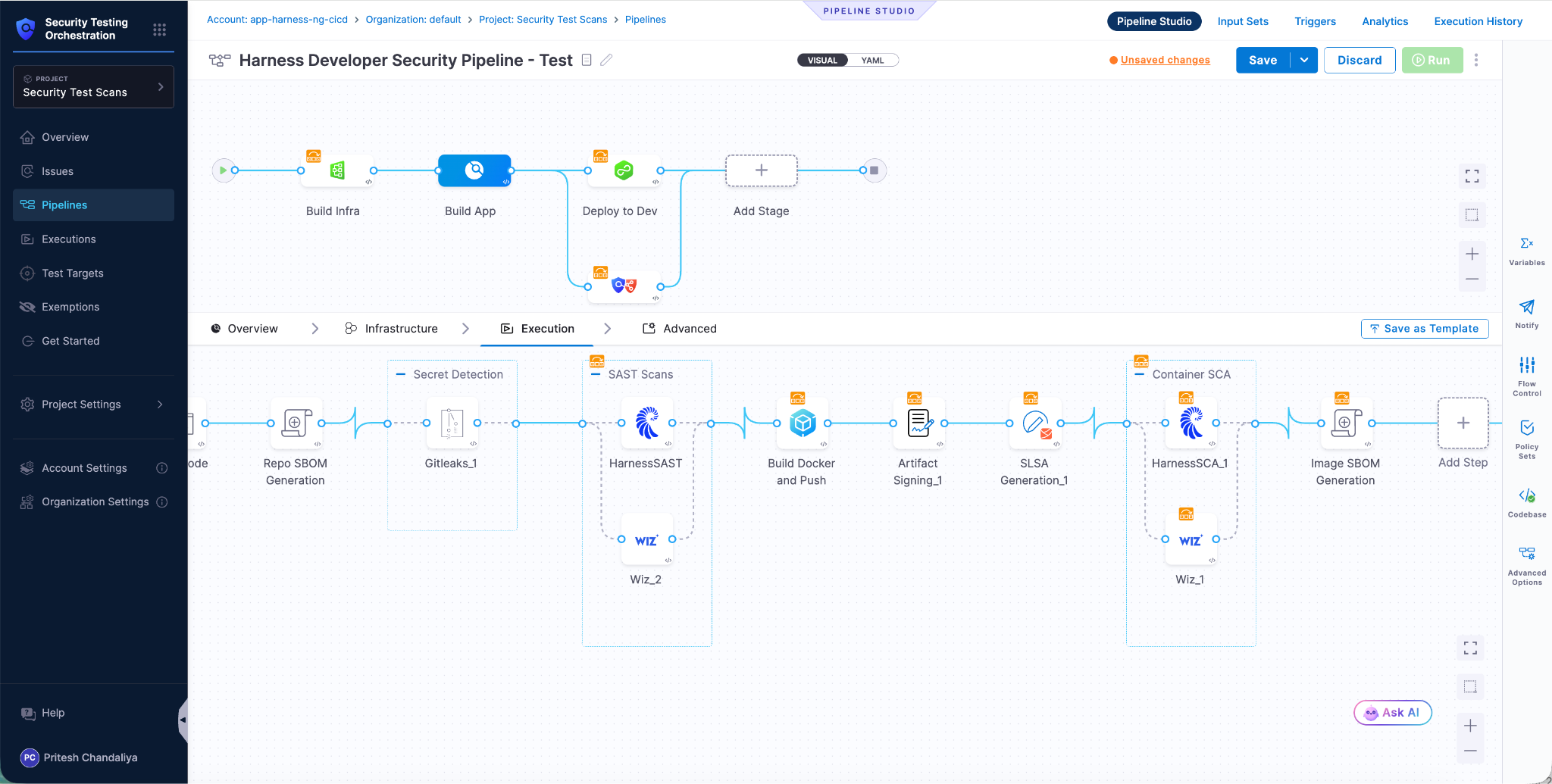

This builds on what we introduced last month, Secure AI Coding, which integrates directly with Cursor and scans code at the moment of generation rather than waiting for a PR review. Developers see inline vulnerability warnings with the option to send flagged code back to the agent for remediation, without leaving their workflow. Under the hood, it leverages Harness's Code Property Graph (CPG) to trace data flows across the entire codebase, surfacing complex vulnerabilities that simpler linting tools would miss.

The key thing is that you are no longer just interacting with code. You are interacting with the entire delivery system from the same place.

The Important Part: This Is Not Skipping Control

One of the biggest concerns with AI in delivery is obvious:

“Are we about to let agents push code to production without guardrails?”

No.

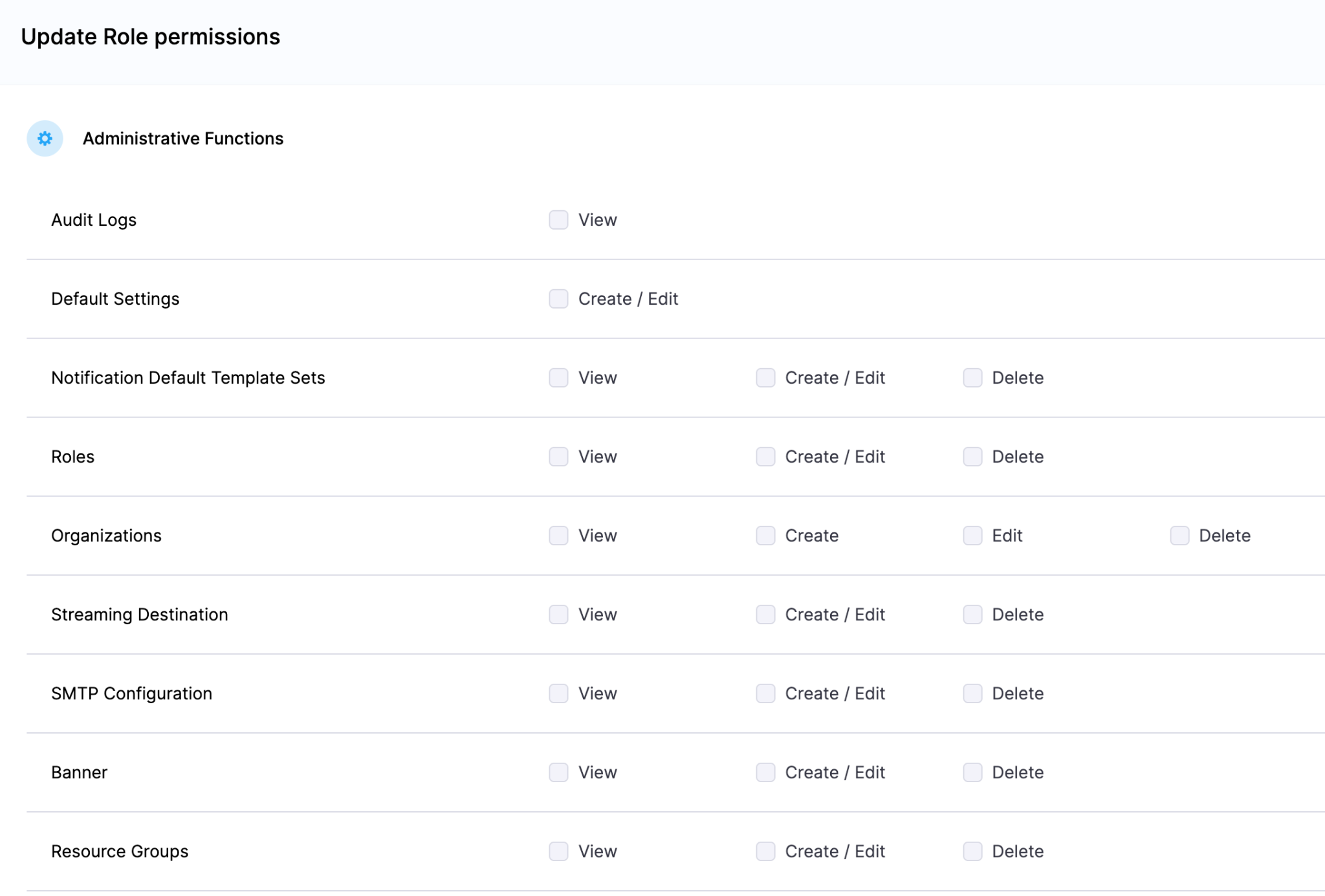

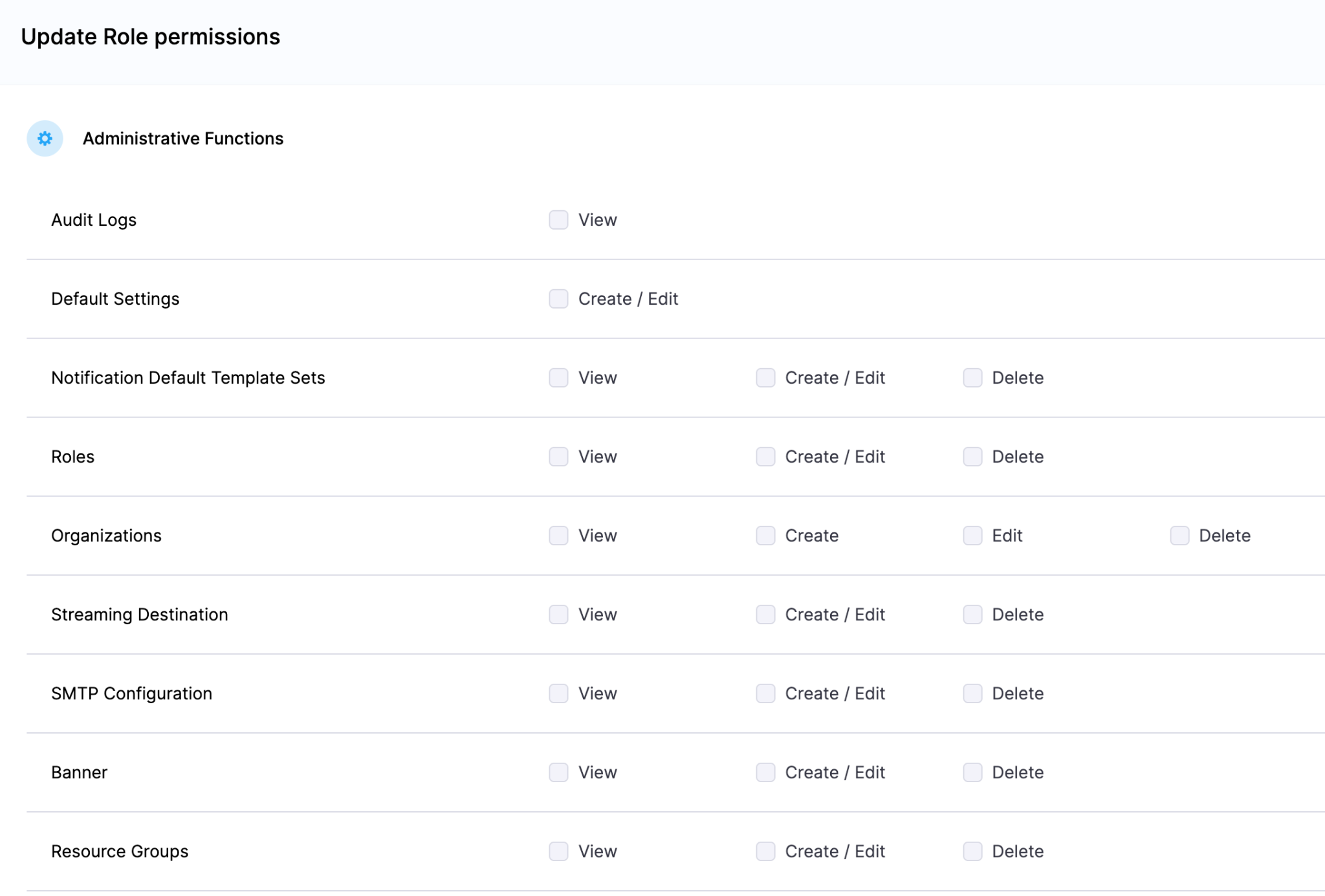

With Harness, everything runs through the controls that you can rely on:

- Granular RBAC permissions

- OPA policies

- Approval gates

- Audit logs

Instead of being manual checkpoints spread across tools, they are enforced automatically as part of the workflow while you stay in flow.

So AI can help move things faster, but it cannot bypass the governance that matters.

Why We Built It This Way

Most integrations today expose APIs or bolt AI onto existing systems. That is not what we wanted to do.

We designed the Harness Cursor Plugin specifically for how AI agents actually work:

- It is built around actions and workflows, not raw endpoints

- It spans the full delivery lifecycle, not just one step

- It gives agents enough context to reason about what to do next

Because shipping software is not a single action. It is a chain of decisions across CI, CD, security, approvals, and operations. If AI is going to help here, it needs access to that full picture. That’s where the Harness Software Delivery Knowledge Graph comes into play. It provides the necessary context for AI to take actions for you.

The knowledge graph models the relationships between services, pipelines, environments, policies, and operational signals in real time. Instead of treating each step in delivery as an isolated task, it creates a connected system of record that AI can reason over. This allows agents to understand not just what to do, but when and why to do it, based on dependencies, risk signals, and historical behavior.

In practice, this means smarter automation: deployments that adapt to context, approvals that are triggered based on policy and impact, and faster root cause analysis because the system already understands how everything is connected.

This Changes How Ideas Move To Prod

This is not just about convenience. It is a shift in how software actually moves from idea to production.

Instead of:

- Writing code in one place

- Managing delivery somewhere else

- And stitching it all together manually

You get a single, connected workflow:

- Code to pipeline to validation to deployment to operations

All accessible from your editor. Cursor accelerates the building. Harness governs the shipping. And the handoff between the two disappears.

Watch the demo:

Getting Started

If you want to try it:

- Install the Harness Cursor Plugin from the Cursor Marketplace

- Authenticate with Harness using OAuth. No API keys or setup headaches

- Start using natural language to run pipelines, debug issues, and manage deployments

For example:

“Run the CI pipeline for this branch, check if the security scan passed, and promote to staging if it did.”

That is it.

AI is not just changing how we write code. It is changing expectations for how fast we should be able to ship it. But speed without control does not work in real environments. What we are building toward is something simpler:

A world where every step, from PR to production, is:

- Fast

- Governed

- Observable

- Auditable

Without forcing developers to leave their flow. This plugin is one step in that direction.

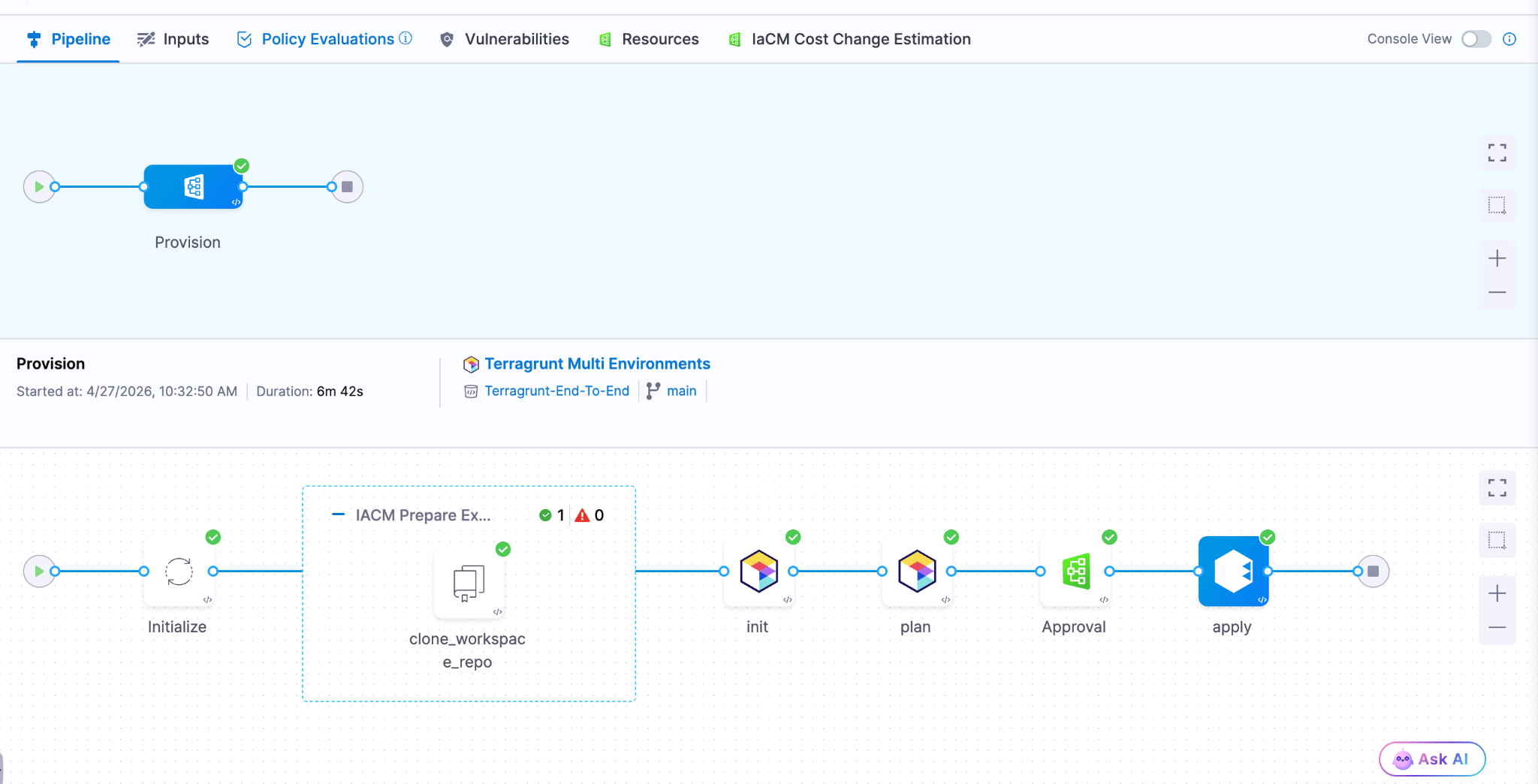

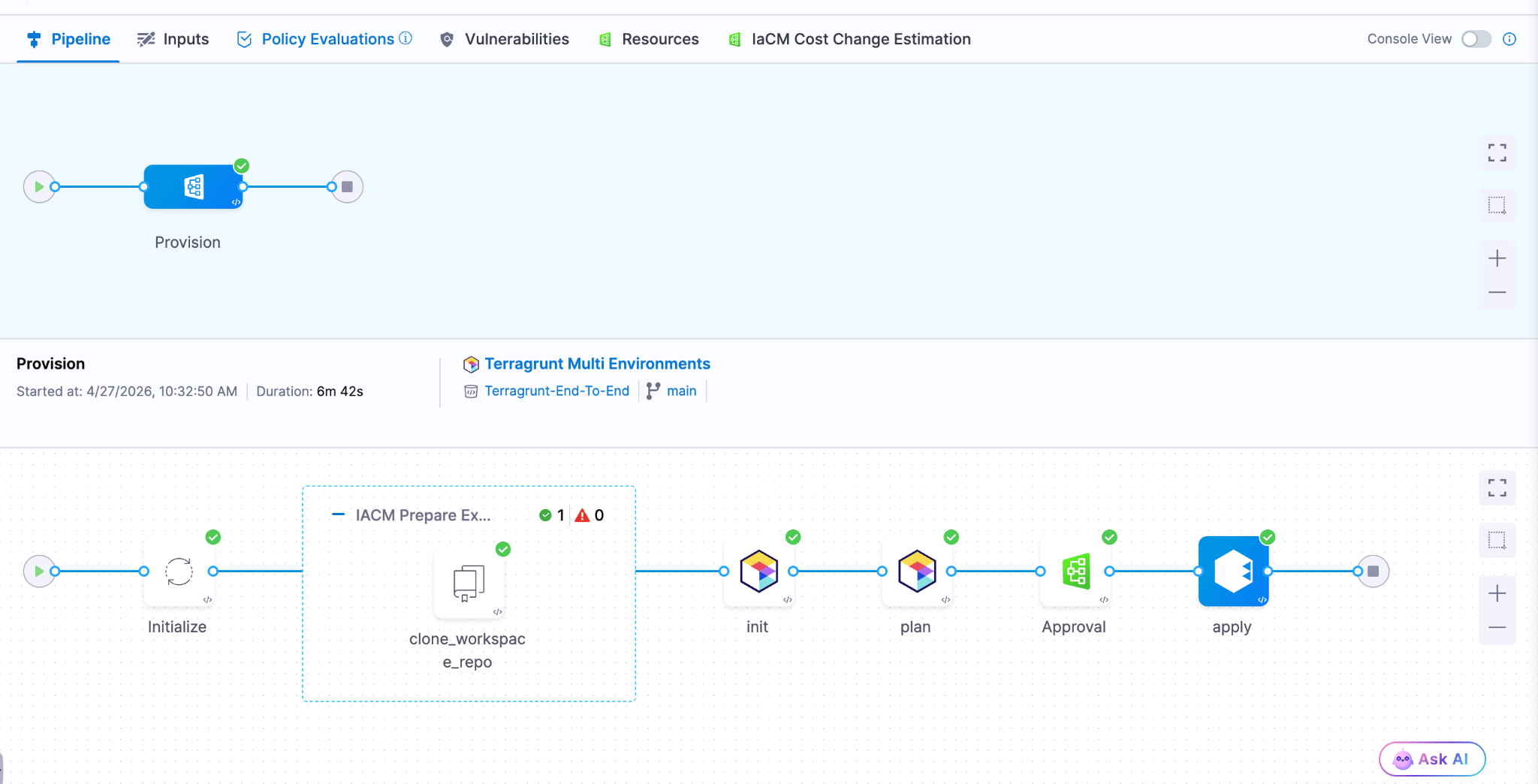

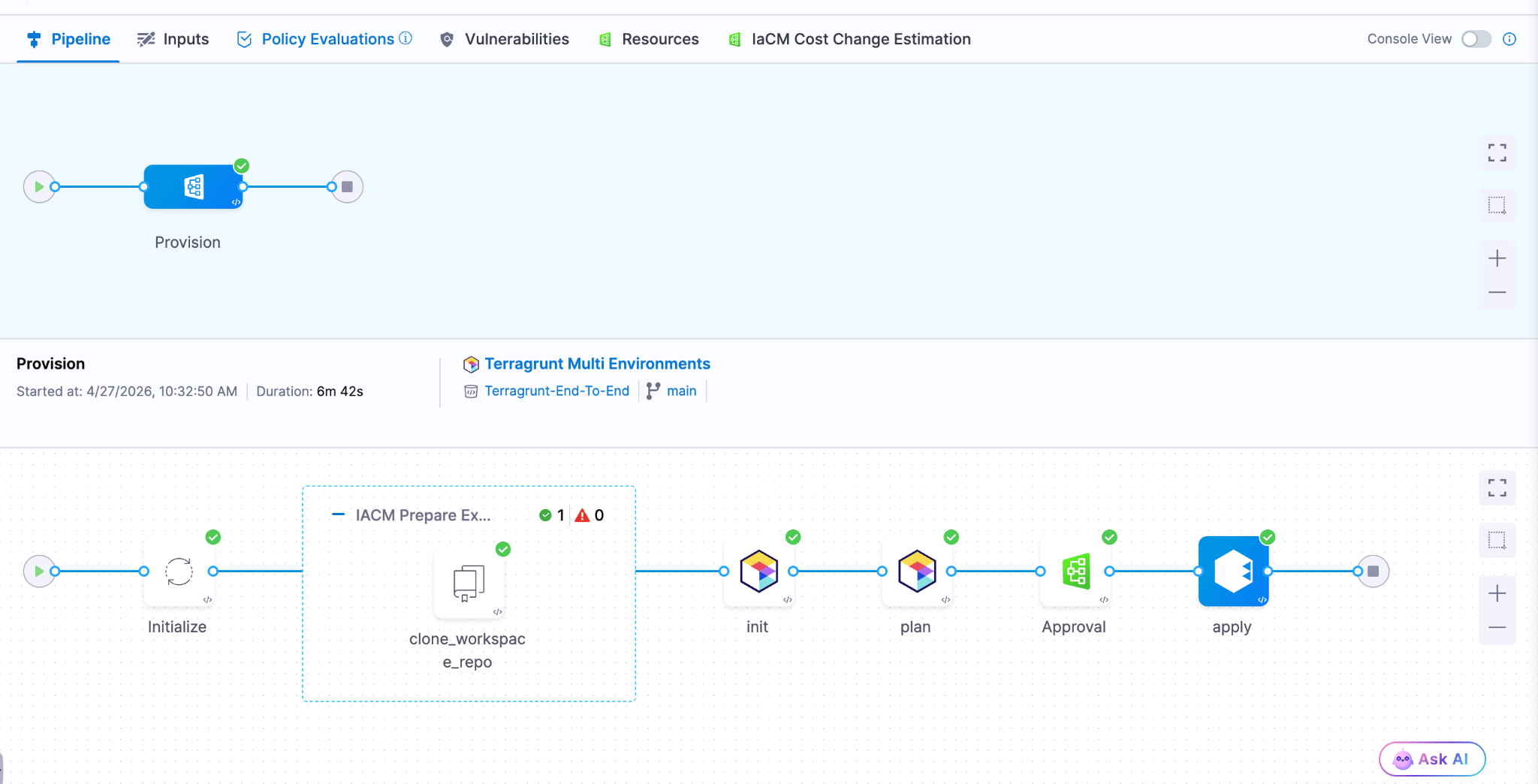

- Harness IaCM introduces native Terragrunt support, enabling true enterprise-grade orchestration at scale.

- Teams can now manage Terraform, OpenTofu, and Terragrunt in a single platform without fragmented tooling.

- Built-in governance, policy enforcement, and approvals streamline secure infrastructure operations.

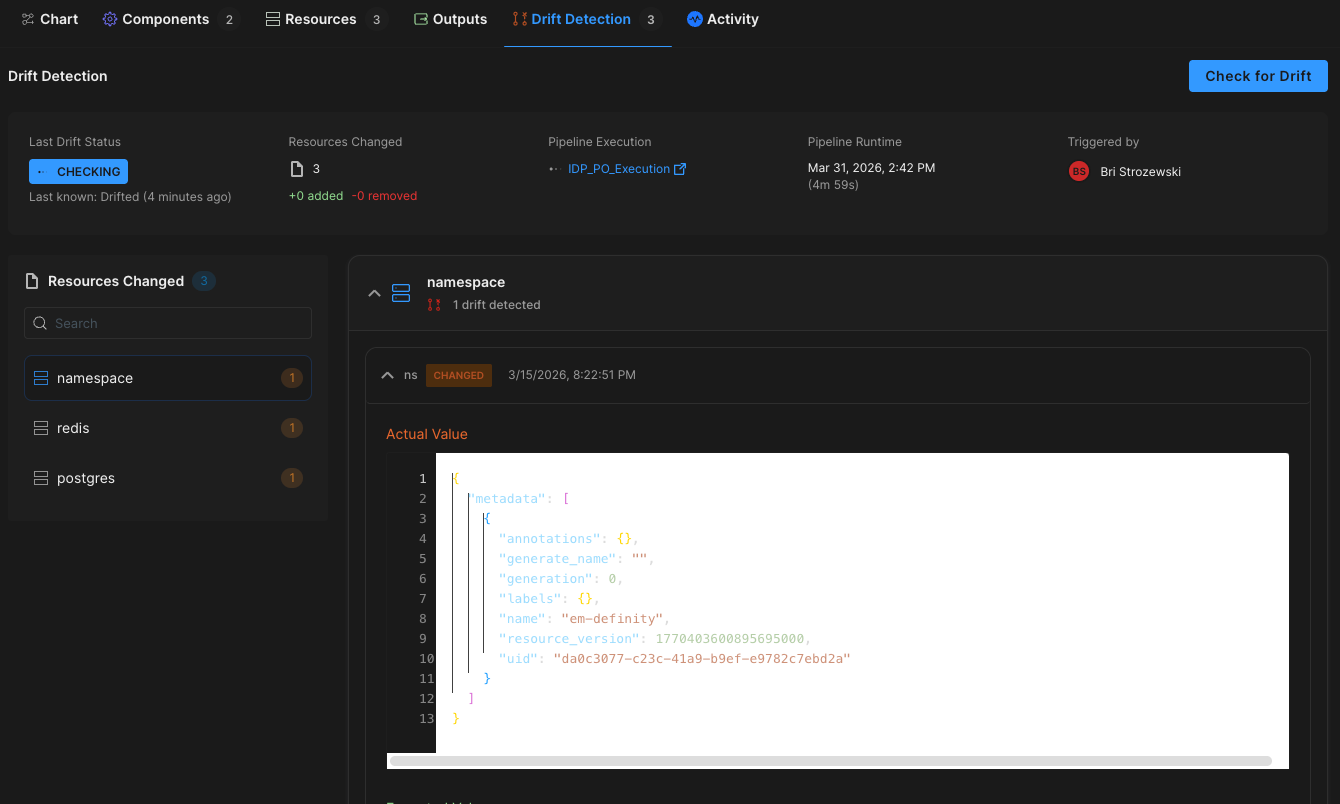

- End-to-end visibility and drift detection improve reliability across complex, multi-environment deployments.

- The launch marks a major step toward a unified, multi-IaC control plane for modern infrastructure teams.

Bringing First-Class Terragrunt Support to IaCM

“We’ve been operating in a hybrid environment with both OpenTofu and Terragrunt, and Harness has made it much easier to bring those workflows together into a single, consistent platform with IaCM. The addition of Terragrunt support is a valuable step toward simplifying how we manage infrastructure at scale.”

— Lead Platform Engineer, Enterprise Customer

Infrastructure as Code is now a standard for modern cloud operations, with most enterprises using IaC to provision and manage environments. However, as adoption grows, so does complexity. Teams are no longer managing a handful of environments. They are operating across multiple regions, accounts, and services, often at massive scale.

This is where traditional approaches begin to fall short.

As organizations scale their infrastructure, Terraform alone is often not enough. Teams adopt Terragrunt to manage complex, multi-environment deployments, but they are often forced to stitch together fragmented tooling that lacks visibility, governance, and consistency.

At Harness, we are changing that.

Today, we are excited to announce native Terragrunt support in Harness IaCM, bringing it to full parity with Terraform and OpenTofu while delivering capabilities that go beyond what is available in standalone tooling. This is more than support. It is about making Terragrunt a first-class platform for enterprise infrastructure management.

With Harness IaCM, teams can now:

- Orchestrate complex Terragrunt environments with full visibility across all units

- Apply cost estimation, approvals, and policy enforcement natively

- Detect and manage drift across environments with granular insights

- View infrastructure changes at the resource level across orchestrated deployments

Terragrunt has become a critical layer for managing infrastructure at scale because it simplifies how teams structure and reuse configurations across environments. Harness builds on that foundation with deep, native integration, enabling platform teams to operate with both flexibility and control.

This is especially important for enterprises where a single deployment spans multiple environments and services. Harness abstracts that complexity while maintaining governance, auditability, and consistency.

Extending IaCM to a Multi-IaC Future

Terragrunt is part of a broader shift toward multi-tool infrastructure strategies.

Modern teams are no longer standardized on a single IaC tool. Instead, they operate across:

- Terraform and OpenTofu for provisioning

- Terragrunt for orchestration

- CDK for developer-driven infrastructure

- Ansible for configuration and automation

This creates challenges around consistency, visibility, and governance. Harness IaCM is built for this reality. We are evolving IaCM into a unified control plane for multi-IaC workflows, where teams can manage different frameworks with a consistent experience, shared policies, and centralized visibility.

This means:

- Eliminating fragmented pipelines across tools

- Standardizing governance across environments

- Gaining full visibility into infrastructure state and changes

Instead of managing infrastructure in silos, teams can now operate from a single platform across the entire lifecycle.

What’s Next for Infrastructure as Code?

The next phase of Infrastructure as Code is not just about supporting more tools. It is about making infrastructure systems more intelligent and automated.

We are investing in two key areas:

Expanded IaC Support

We are continuing to support modern frameworks like AWS CDK, enabling developer-centric infrastructure workflows alongside provisioning, configuration, and orchestration tools.

AI-Driven Automation

We are introducing intelligence into IaC workflows to simplify tasks such as drift management and optimization. This helps teams reduce manual effort and operate more efficiently at scale.

Together, these investments move IaCM toward a unified, multi-IaC platform that combines flexibility, governance, and automation. Terragrunt has become essential for managing infrastructure at scale but until now, it hasn’t had a platform that truly supports it. As infrastructure continues to grow in complexity, our focus remains the same. Helping teams move faster, reduce risk, and scale with confidence no matter which IaC tools they use.

.png)

We’ve come a long way in how we build and deliver software. Continuous Integration (CI) is automated, Continuous Delivery (CD) is fast, and teams can ship code quickly and often. But environments are still messy.

Shared staging systems break when too many teams deploy at once, while developers wait on infrastructure changes. Test environments get created and forgotten, but over time, what is running in the cloud stops matching what was written in code.

We have made deployments smooth and reliable, but managing environments still feels manual and unpredictable. That gap has quietly become one of the biggest slowdowns in modern software delivery.

This is the hidden bottleneck in platform engineering, and it's a challenge enterprise teams are actively working to solve.

As Steve Day, Enterprise Technology Executive at National Australia Bank, shared:

“As we’ve scaled our engineering focus, removing friction has been critical to delivering better outcomes for our customers and colleagues. Partnering with Harness has helped us give teams self-service access to environments directly within their workflow, so they can move faster and innovate safely, while still meeting the security and governance expectations of a regulated bank.”

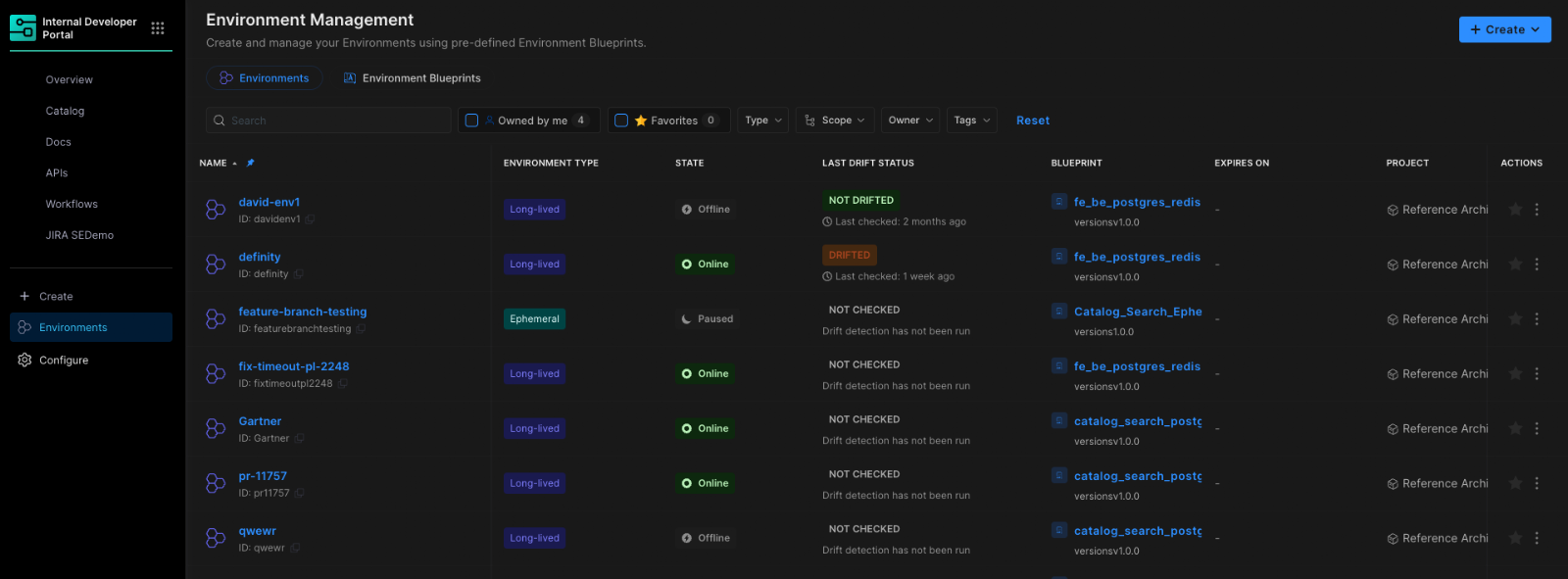

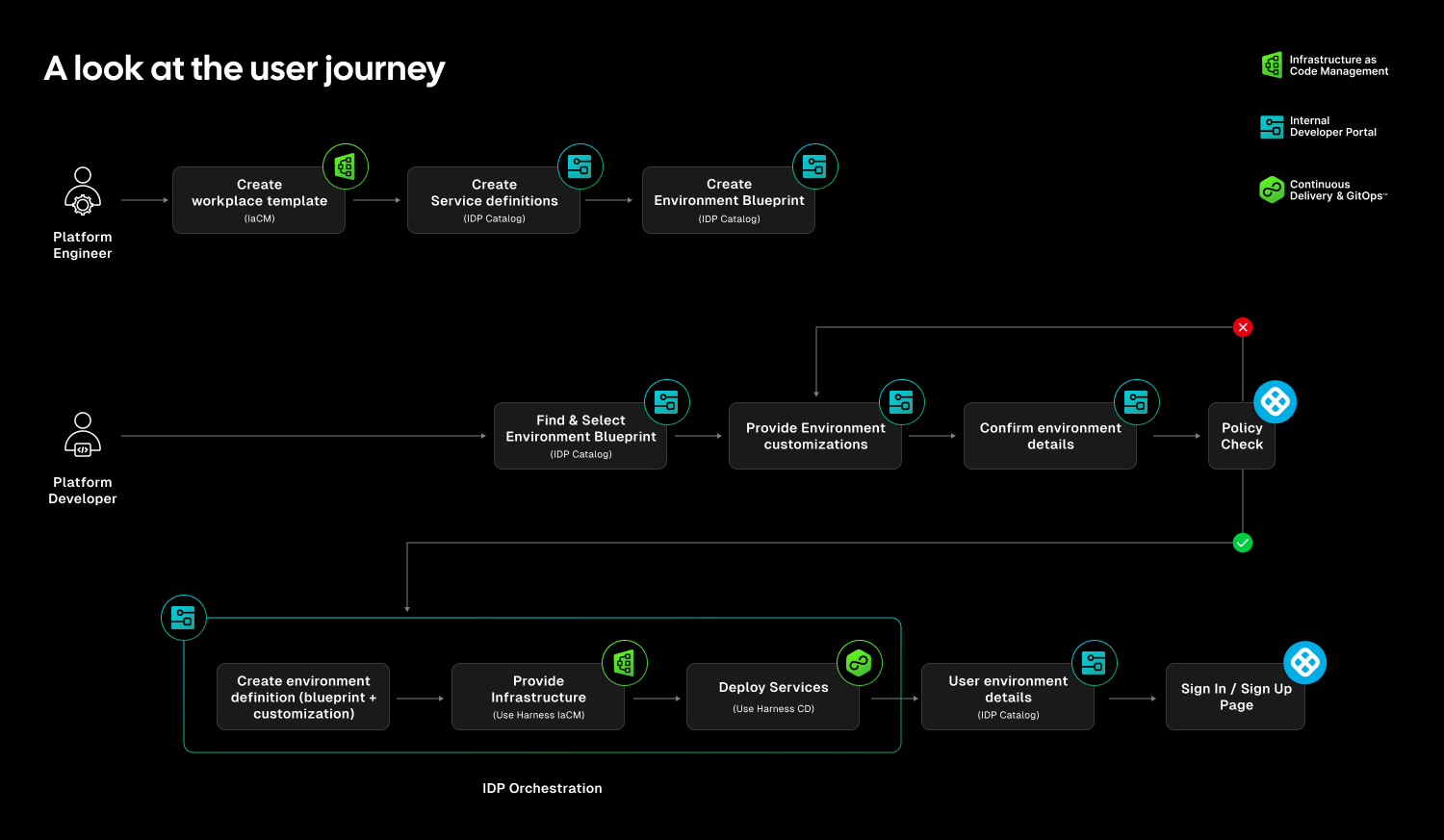

At Harness, Environment Management is a first-class capability inside our Internal Developer Portal. It transforms environments from manual, ticket-driven assets into governed, automated systems that are fully integrated with Harness Continuous Delivery and Infrastructure as Code Management (IaCM).

This is not another self-service workflow. It is environment lifecycle management built directly into the delivery platform.

The result is faster delivery, stronger governance, and lower operational overhead without forcing teams to choose between speed and control.

Closing the Gap Between CD and IaC

Continuous Delivery answers how code gets deployed. Infrastructure as Code defines what infrastructure should look like. But the lifecycle of environments has often lived between the two.

Teams stitch together Terraform projects, custom scripts, ticket queues, and informal processes just to create and update environments. Day two operations such as resizing infrastructure, adding services, or modifying dependencies require manual coordination. Ephemeral environments multiply without cleanup. Drift accumulates unnoticed.

The outcome is familiar: slower innovation, rising cloud spend, and increased operational risk.

Environment Management closes this gap by making environments real entities within the Harness platform. Provisioning, deployment, governance, and visibility now operate within a single control plane.

Harness is the only platform that unifies environment lifecycle management, infrastructure provisioning, and application delivery under one governed system.

Blueprint-Driven by Design

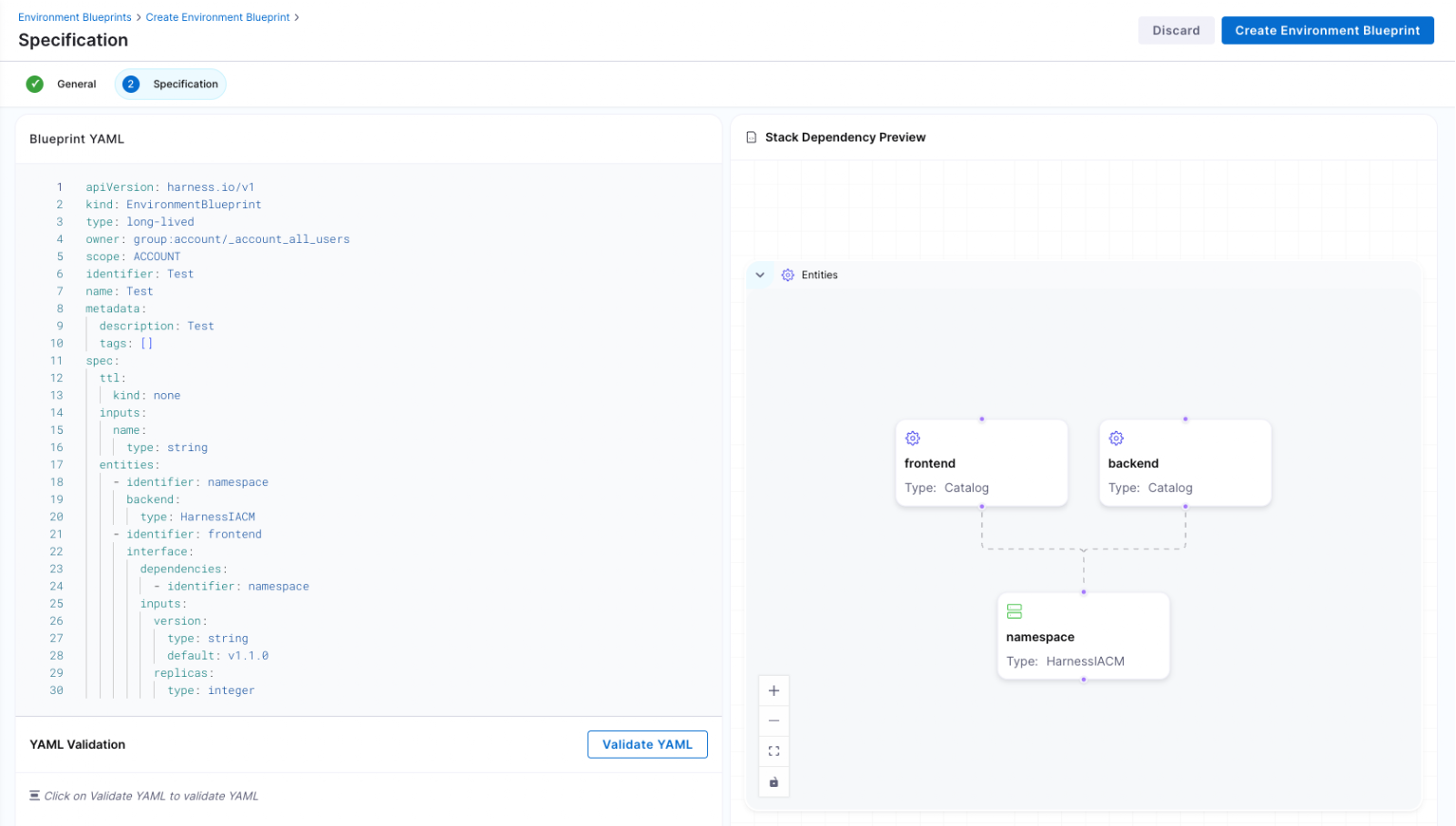

At the center of Environment Management are Environment Blueprints.

Platform teams define reusable, standardized templates that describe exactly what an environment contains. A blueprint includes infrastructure resources, application services, dependencies, and configurable inputs such as versions or replica counts. Role-based access control and versioning are embedded directly into the definition.

Developers consume these blueprints from the Internal Developer Portal and create production-like environments in minutes. No tickets. No manual stitching between infrastructure and pipelines. No bypassing governance to move faster.

Consistency becomes the default. Governance is built in from the start.

Full Lifecycle Control

Environment Management handles more than initial provisioning.

Infrastructure is provisioned through Harness IaCM. Services are deployed through Harness CD. Updates, modifications, and teardown actions are versioned, auditable, and governed within the same system.

Teams can define time-to-live policies for ephemeral environments so they are automatically destroyed when no longer needed. This reduces environment sprawl and controls cloud costs without slowing experimentation.

Harness EM also introduces drift detection. As environments evolve, unintended changes can occur outside declared infrastructure definitions. Drift detection provides visibility into differences between the blueprint and the running environment, allowing teams to detect issues early and respond appropriately. In regulated industries, this visibility is essential for auditability and compliance.

Governance Built In

For enterprises operating at scale, self-service without control is not viable.

Environment Management leverages Harness’s existing project and organization hierarchy, role-based access control, and policy framework. Platform teams can control who creates environments, which blueprints are available to which teams, and what approvals are required for changes. Every lifecycle action is captured in an audit trail.

This balance between autonomy and oversight is critical. Environment Management delivers that balance. Developers gain speed and independence, while enterprises maintain the governance they require.

"Our goal is to make environment creation a simple, single action for developers so they don't have to worry about underlying parameters or pipelines. By moving away from spinning up individual services and using standardized blueprints to orchestrate complete, production-like environments, we remove significant manual effort while ensuring teams only have control over the environments they own."

— Dinesh Lakkaraju, Senior Principal Software Engineer, Boomi

From Portal to Platform

Environment Management represents a shift in how internal developer platforms are built.

Instead of focusing solely on discoverability or one-off self-service actions, it brings lifecycle control, cost governance, and compliance directly into the developer workflow.

Developers can create environments confidently. Platform engineers can encode standards once and reuse them everywhere. Engineering leaders gain visibility into cost, drift, and deployment velocity across the organization.

Environment sprawl and ticket-driven provisioning do not have to be the norm. With Environment Management, environments become governed systems, not manual processes. And with CD, IaCM, and IDP working together, Harness is turning environment control into a core platform capability instead of an afterthought.

This is what real environment management should look like.

Latest Blogs

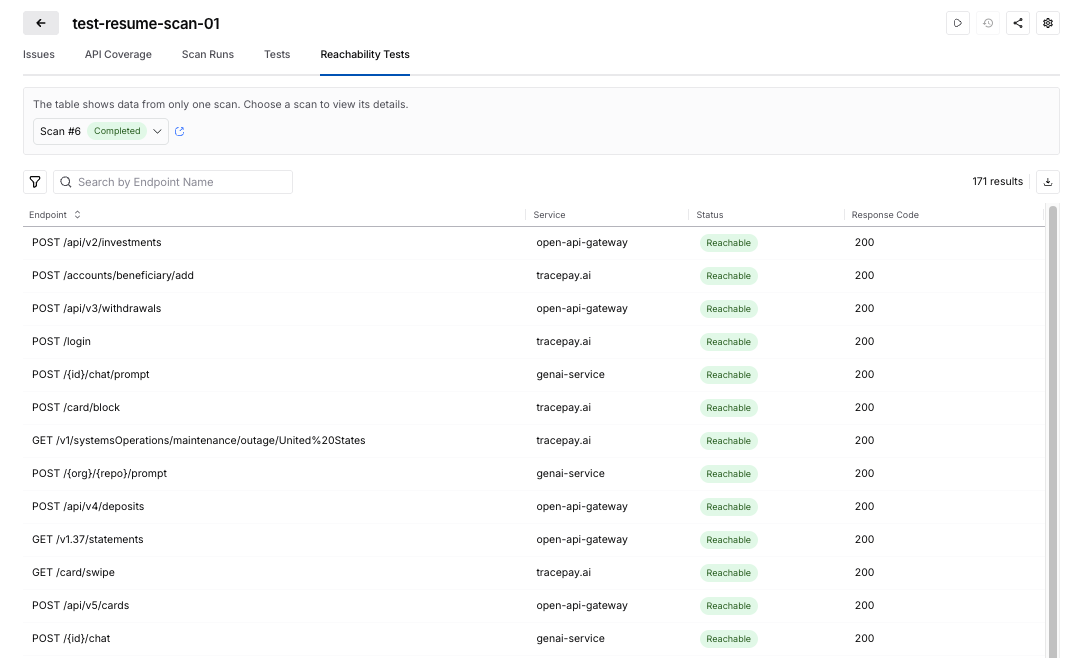

API Security Testing Just Got Easier & Smarter

Application security & engineering teams are under pressure to move faster, cover more, and reduce the operational drag that often comes with security testing. But in practice, two problems keep slowing teams down and adding friction.

- Scan setup is often more complicated than it needs to be. Teams lose time navigating fragmented configuration flows, interpreting unclear fields, and correcting setup mistakes that only surface later. Even when teams know precisely what they want to test, configuring a scan correctly becomes a project of its own.

- Test generation is only valuable when the targets behind it are actually reachable and executable. When APIs are unreachable, improperly mapped, or blocked due to missing authentication, teams end up generating tests that consume runner resources and waste time without producing meaningful results. That creates noise, extends scan times, and makes it harder to focus on other actionable results.

Harness API Testing Enhancements at a Glance

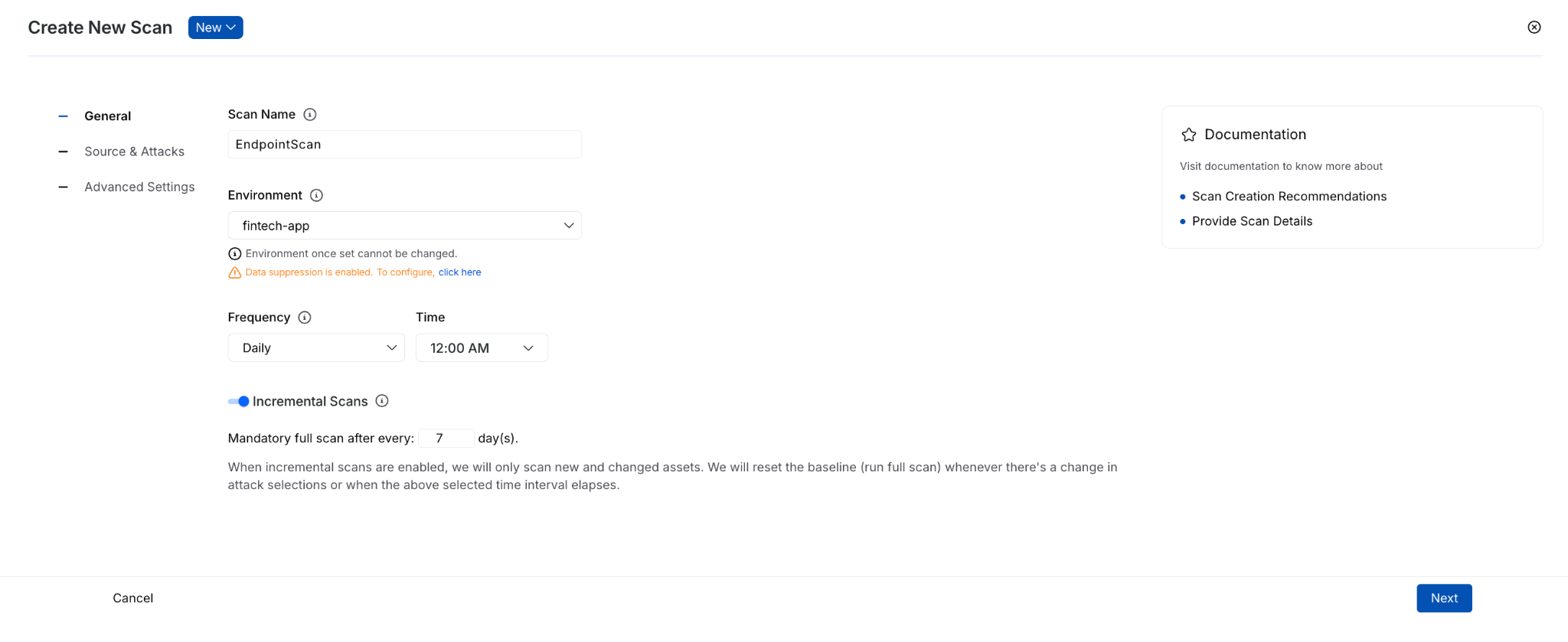

Today, we’re introducing several important enhancements to Harness API Testing that are designed to solve these exact issues and make API scans easier to configure, more reliable, and more efficient.

Improved Configuration Experience

The new scan configuration experience is built to reduce friction from the moment a user clicks “Create Scan.” It simplifies the setup flow, improves validation, and provides users with more guidance directly in context, rather than forcing them to guess or leave the page for help.

The highlights include:

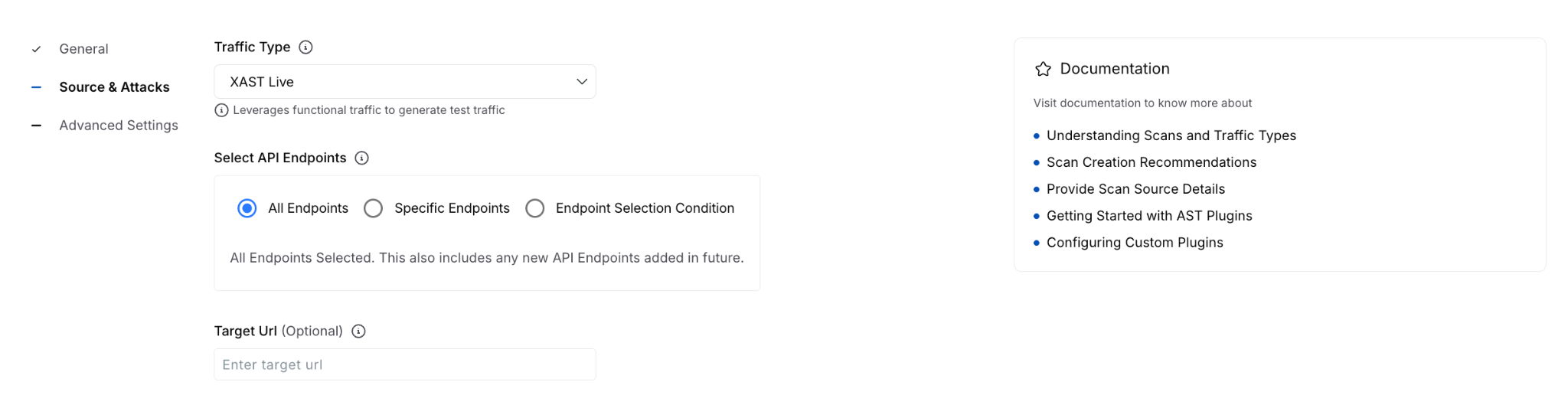

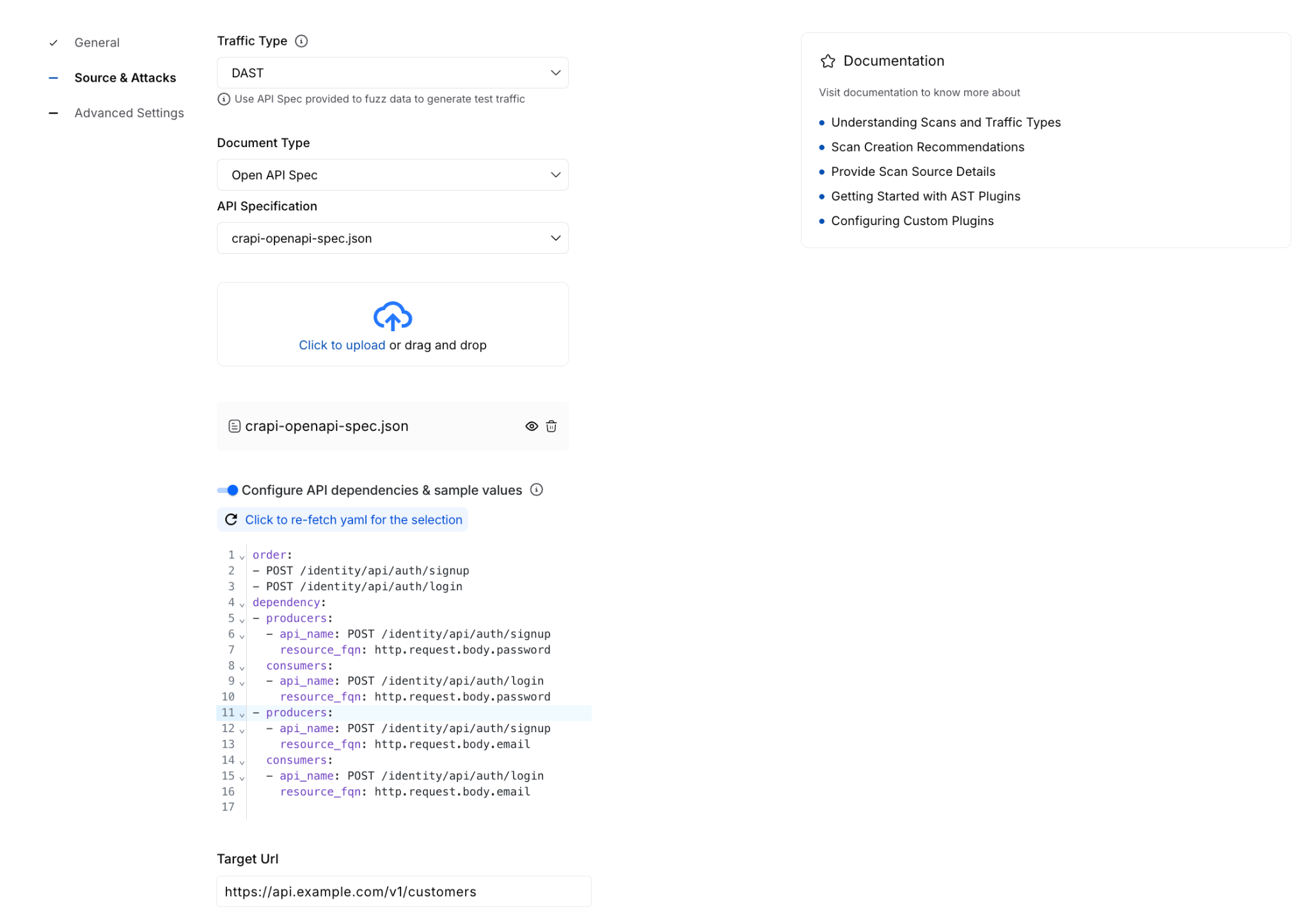

- A simpler, more structured configuration flow - to reduce the cognitive load of stepping through an extensive setup process, the scan creation experience has been consolidated into three clearer sections to help you focus on what matters.

- Field-level guidance everywhere it matters - every configuration field now includes tooltips and helper text, with step-level documentation available alongside the workflow. You get immediate explanations of what each field does and how to configure it correctly.

- Stronger validation early - the new experience focuses on automatic validation to avoid invalid names, incorrect selections, and incomplete inputs before finalizing creation of a scan, which translates to fewer failed setups and less trial-and-error.

- Inline creation for dependent entities - you can now create items such as policies, authentication methods, and runners directly within the scan flow via inline panels, without navigating away in the interface and breaking momentum.

Added API Endpoint Validation

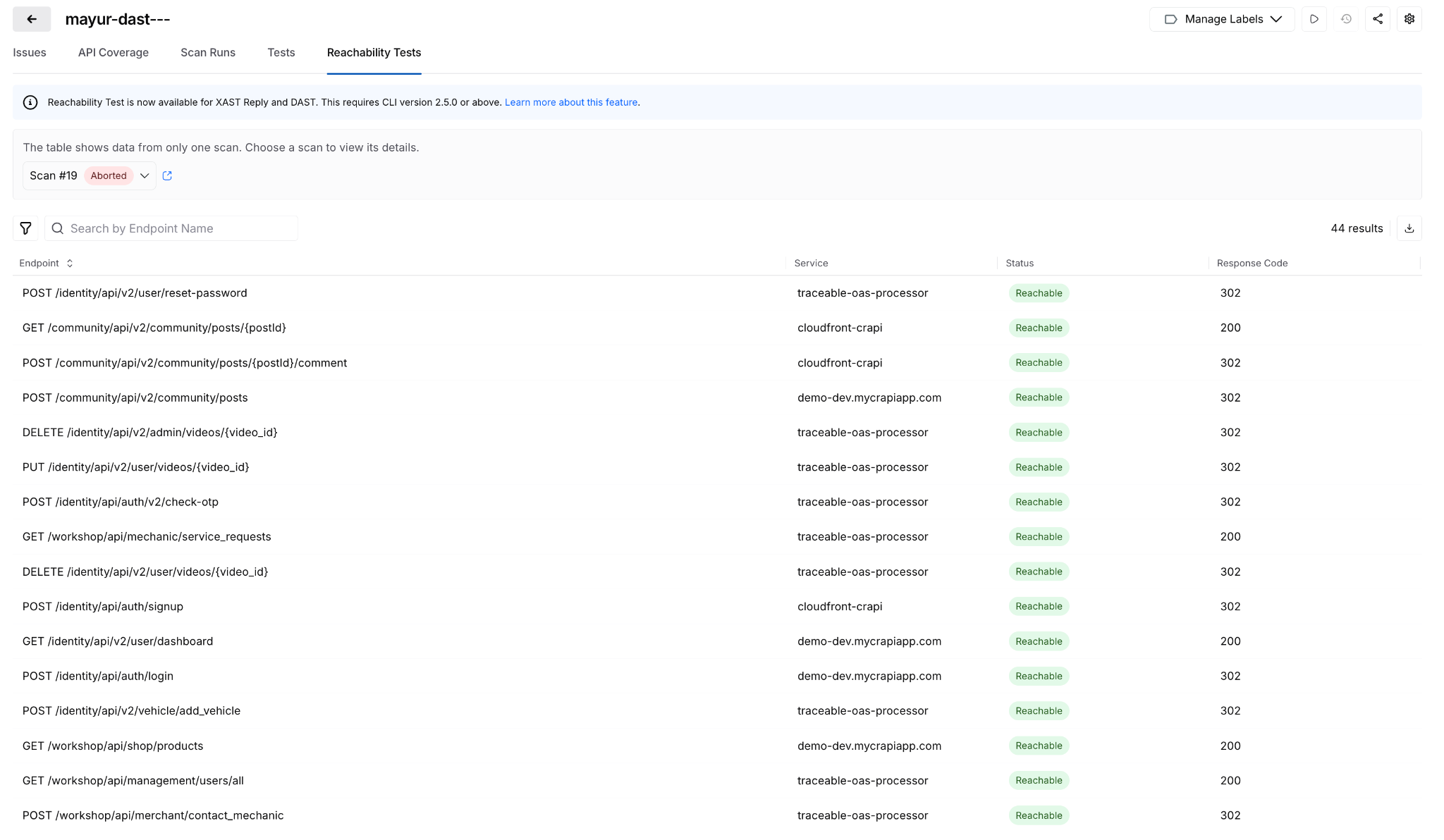

The new reachability validations in XAST Replay and DAST help you confirm whether APIs are actually reachable and properly authenticated, so scan execution stays focused on targets that can produce real results.

The highlights include:

- Dedicated Reachability Test view in scan configuration - each scan now includes a Reachability Test tab that provides a rundown of API endpoint-level readiness before test generation proceeds.

- Detailed endpoint visibility - you can see the full list of API endpoints, service mappings, reachability status, response codes, and response descriptions in one place.

- Downloadable CSV for analysis and troubleshooting - results can be exported for offline review, making it easier for you to share findings, investigate failures, and track patterns across environments.

- Smarter test generation control - helps reduce irrelevant noise by preventing test cases from being generated for APIs that are unreachable or fail authentication.

Why Teams Struggle with API Security Testing

These launches address two persistent sources of friction in API security testing: configuration complexity and execution inefficiency. Both slow teams down, create avoidable rework, and make it harder to get to meaningful security outcomes.

1. Scan setup has been too easy to get wrong

The problem is not just that the setup takes time. It is that the experience in tooling has often lacked enough structure, validation, and in-context explanation.

When configuration is too complex, users are far more likely to:

- Enter incomplete or incorrect settings

- Hesitate because they are unsure what a field requires

- Depend on internal experts or professional services to get a scan configured properly

- Spend more time troubleshooting than actually testing APIs

Security teams have long been dealing with incorrect or incomplete configurations, unclear field usage, and longer times to initially create a successful scan.

2. Weak validation delays necessary feedback

Without strong validation at the point of initial setup, users can move forward thinking a scan is correctly configured, only to discover later that something was malformed, missing, or misunderstood.

That creates a chain reaction:

- Errors are caught after setup instead of during setup

- Users have to backtrack and redo work

- Confidence in the scan creation process drops

- Time-to-value gets stretched unnecessarily

Without validations, helper text, tooltips, and field-specific guidance, it’s easy to make mistakes when entering wrong inputs and making selections.

3. Fragmented workflows break momentum

Context switching creates another major issue. If users need to leave the scan flow to create a policy, configure authentication, or add a runner, the API test setup experience becomes fragmented.

That fragmentation leads to:

- Slower scan creation

- More abandoned or half-finished workflows

- Higher odds of misconfiguration

- Less intuitive user experience

Teams often waste time bouncing between multiple pages and increase the likelihood of mistakes without inline workflows.

4. Test generation doesn’t equate to execution readiness

On the execution end of the equation, teams may encounter cases where tests are generated even when the target APIs are unreachable or not properly authenticated.

That leads to several downstream problems:

- Unnecessary test generation

- Wasted runner resources

- More noise in scan output

- Longer scan times

When API endpoint targets aren’t validated upfront, the result is unnecessary test generation and low-quality output.

5. High activity does not always mean high value

Large numbers of generated tests can look impressive on release dashboards, but if those tests are tied to unreachable APIs, they fail to create real security value.

Teams are left with:

- Inflated scan activity metrics

- Less trust in reported results

- Wasted time separating signal from noise

- Reduced confidence around coverage

Improper scan configurations that produce high volumes of poor results yield inaccurate metrics that are critical to application security programs. This reality can create a false sense of confidence in security posture.

A Closer Look at What’s New in Harness API Testing

These enhancements improve two critical parts of the API testing experience: how scans are configured and how test execution readiness is validated.

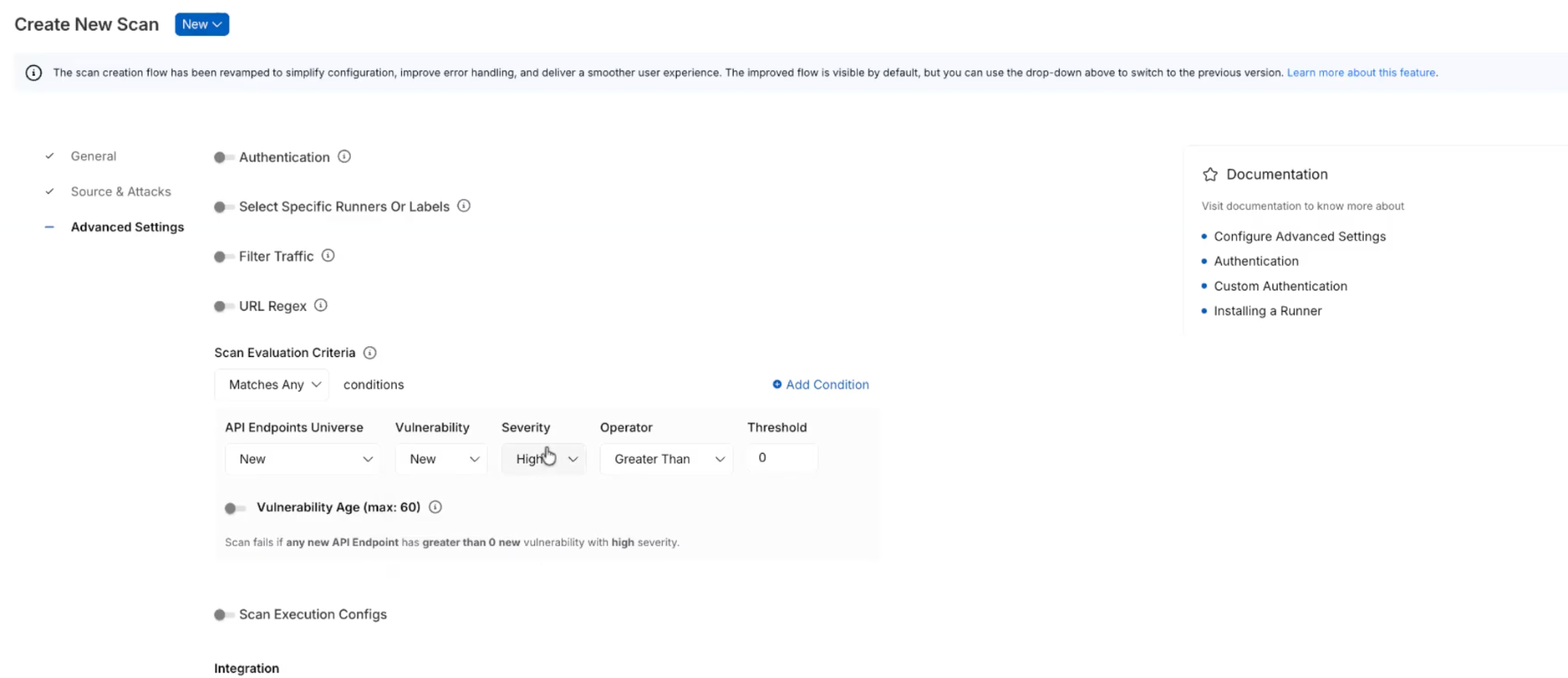

Scan Configuration Revamp & Validation Enhancement

Rather than spreading configuration across a larger set of steps, the new flow reduces the experience to three main sections that are:

- General - define the basics, such as scan name, environment, frequency, and incremental scan behavior.

- Source & Attacks - select traffic and policy.

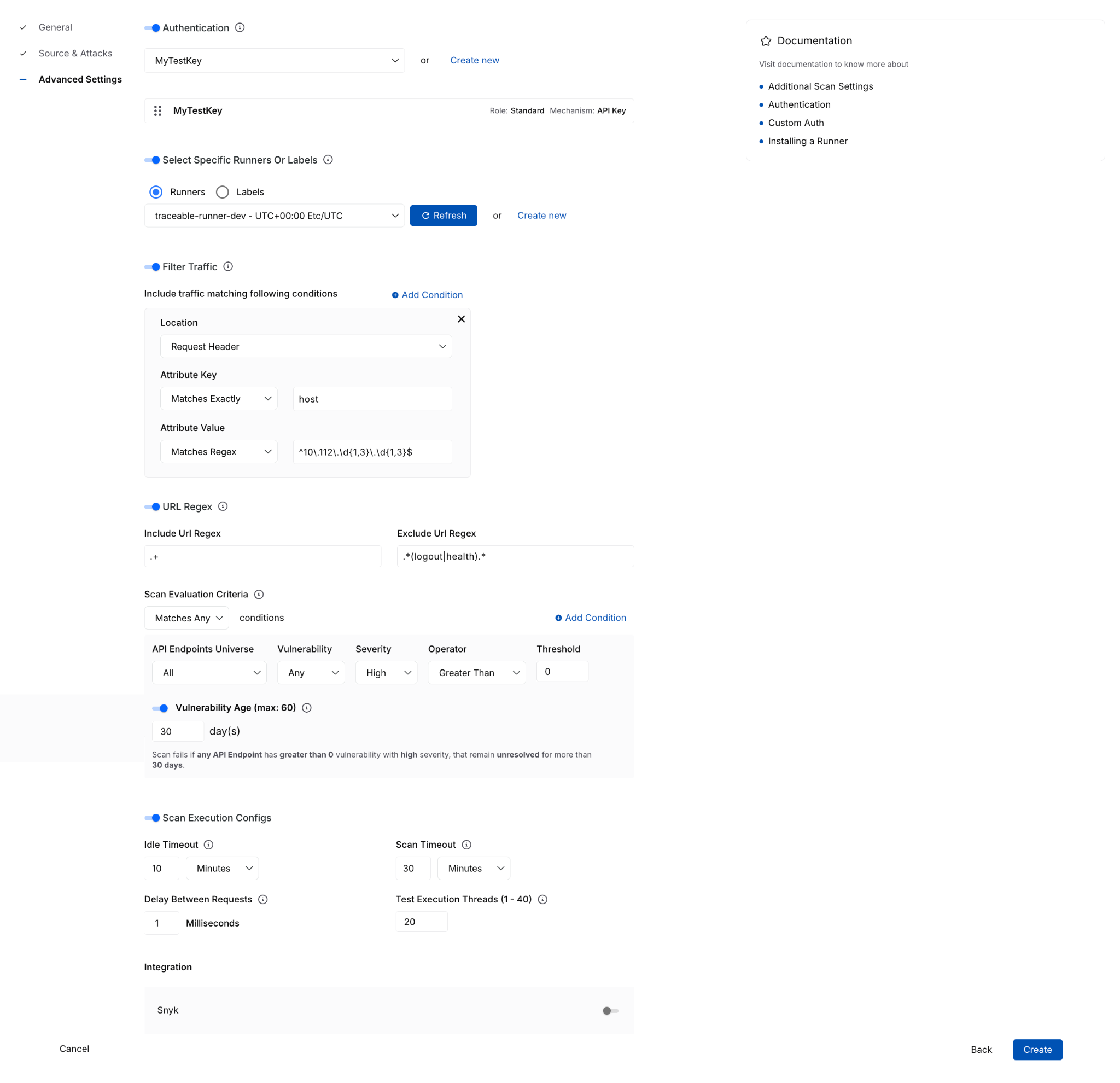

- Advanced Settings - configure optional items such as authentication, runners, traffic filters, URL regex, evaluation criteria, timeout behavior, and integrations.

That reorganization does more than simplify the UI. It separates required setup from optional tuning, helping you complete scan creation with more confidence and less guesswork.

With these enhancements, you can now more easily:

- Understand fields in context through tooltips, helper text, and side-panel documentation available during setup.

- Create policies inline without leaving the scan configuration flow.

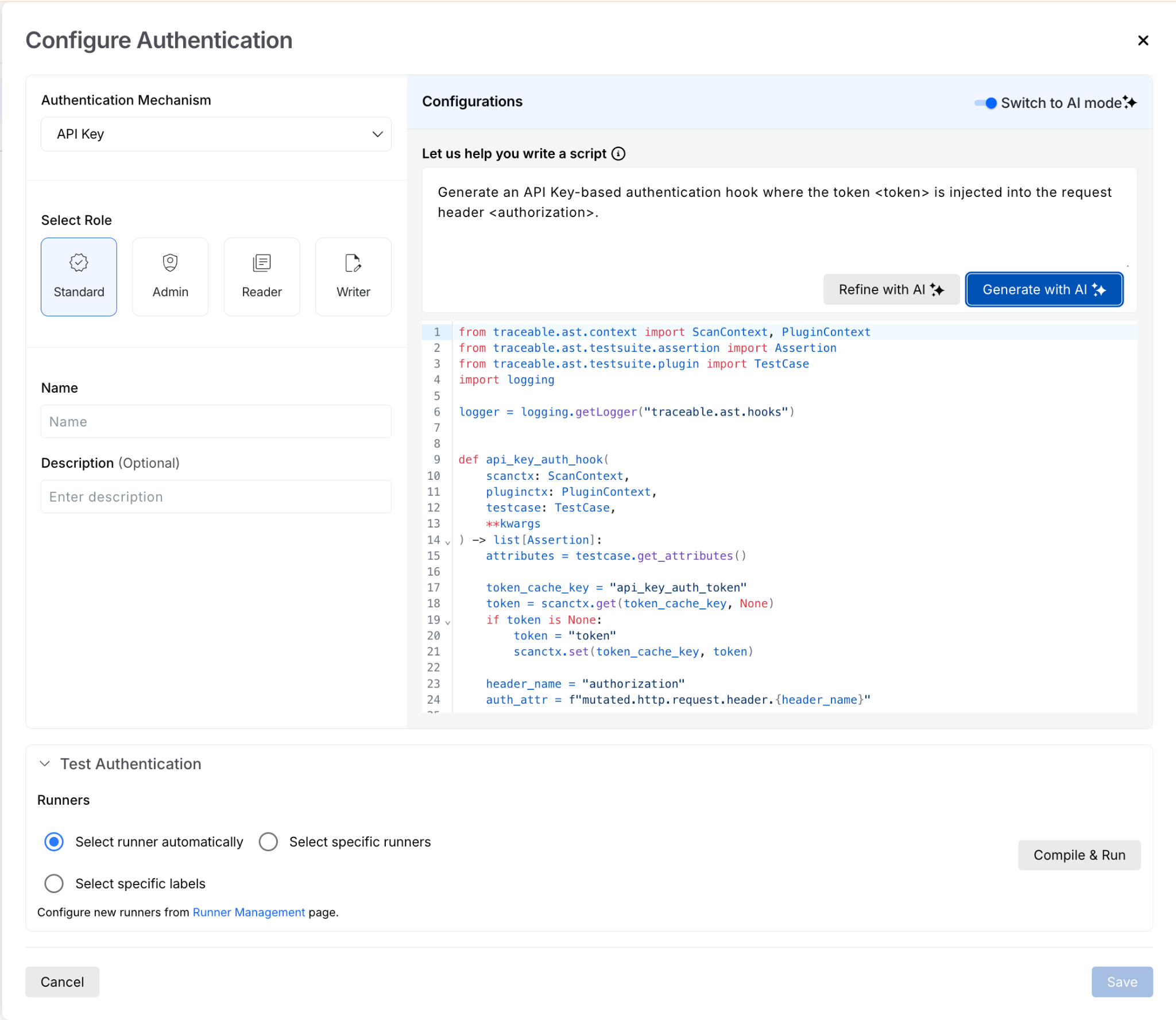

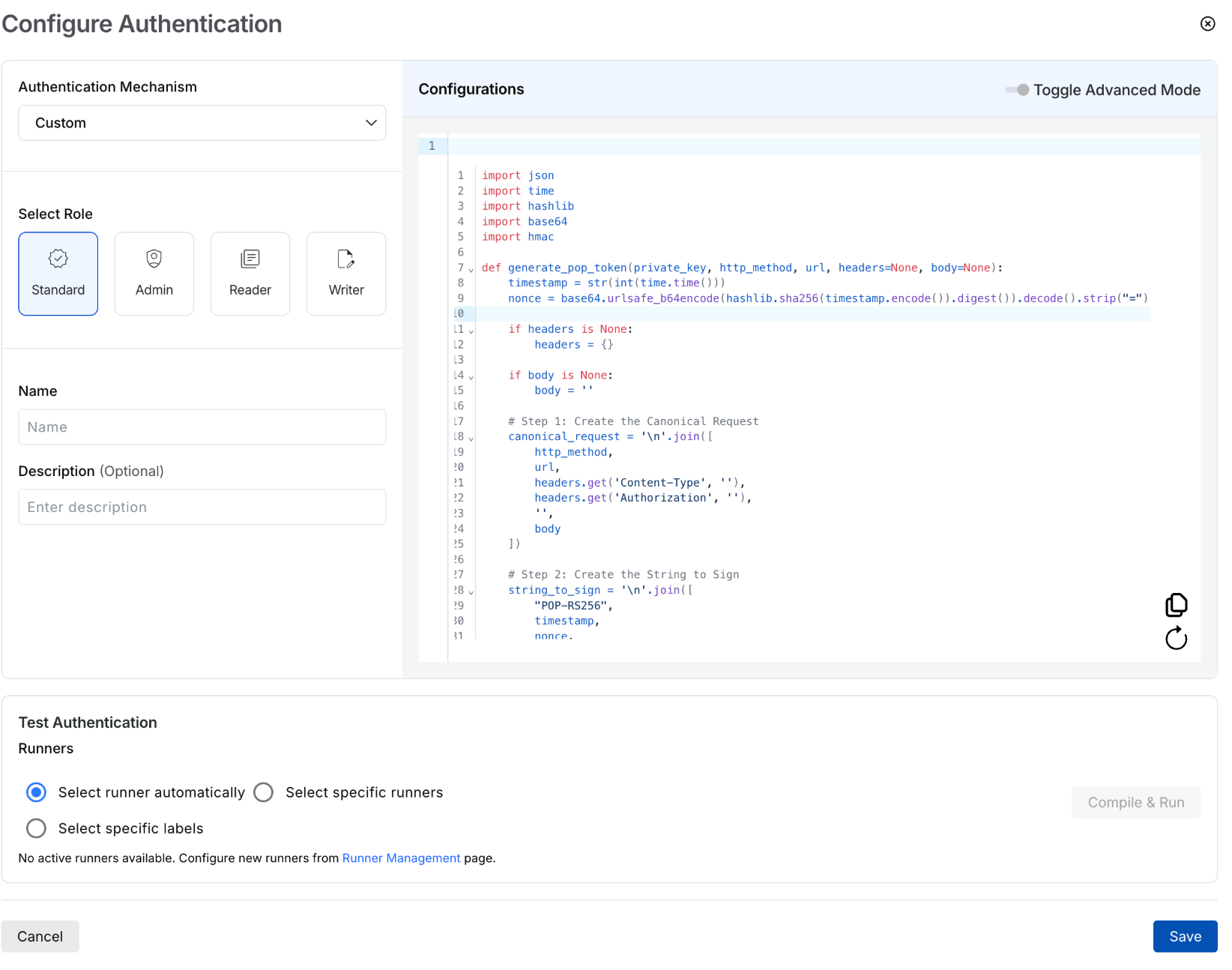

- Configure authentication inline, including form-based and AI-based authentication options, then immediately select them for use.

- Create or select runners from the same page instead of navigating out to separate workflows.

This enhancement is especially important for teams that want to move quickly without sacrificing correctness. Keeping these dependent tasks in a single flow reduces interruptions and lowers the risk of setup errors.

The advanced settings experience also adds more clarity around complex configuration options, where you can now work with:

- Traffic filter conditions

- URL regex settings

- Scan evaluation criteria with dynamic explanatory text

- Idle timeout and scan timeout execution controls

- Integrations

These details matter because they turn a complex setup from opaque to guided and actionable. You can find more technical documentation here.

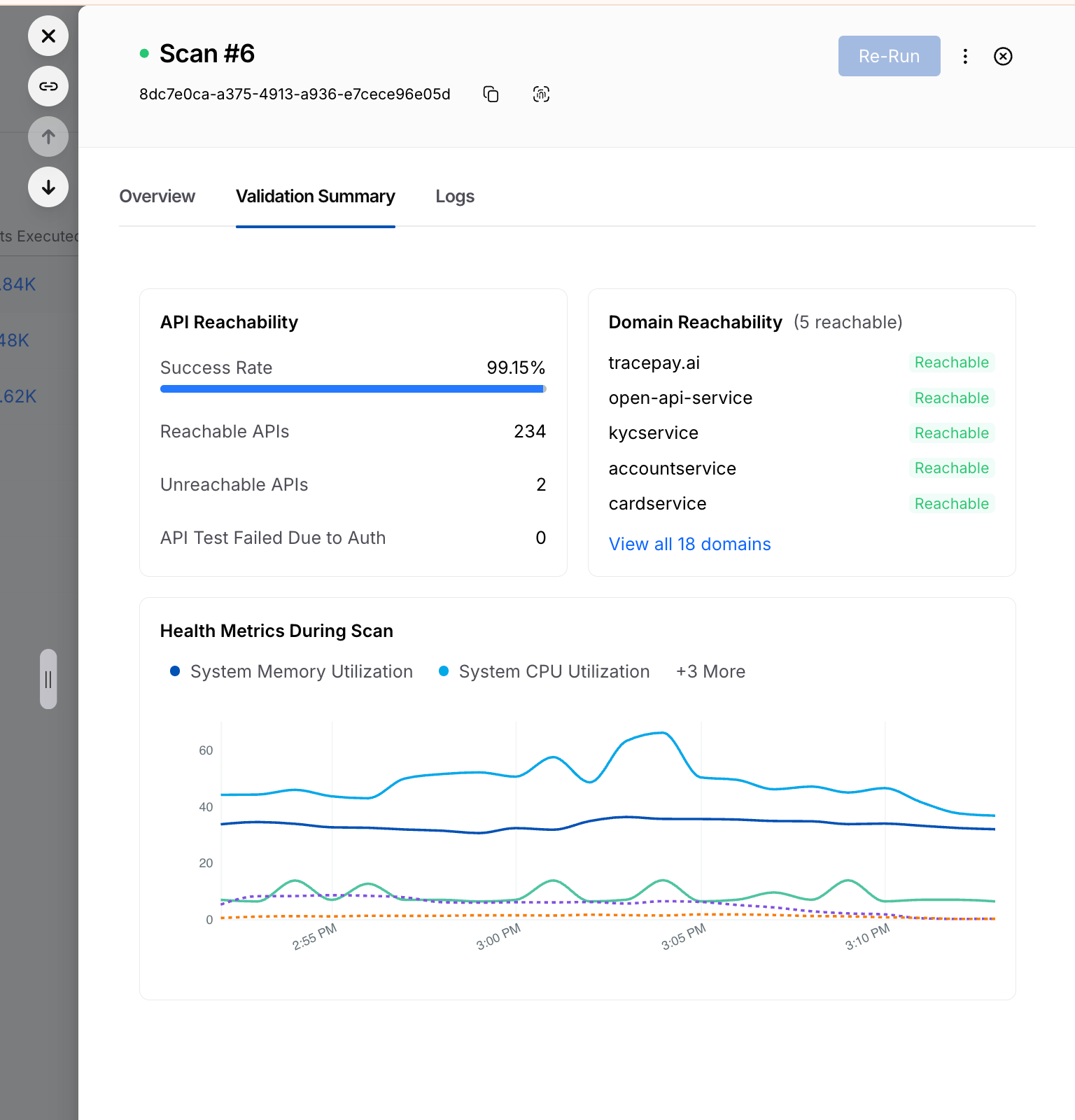

For every running or completed scan, you will now see a Validation Summary tab that highlights critical details and the overall health of the configured API testing. Information here includes:

- Total number of reachable and unreachable APIs

- Number of tests failed due to auth issues

- Domain reachability

- Resource utilization during the scan window

Reachability Test for XAST Replay and DAST

The Reachability Test enhancement brings that same philosophy to execution: validate earlier, execute smarter. Before generating tests, the Harness platform now provides clearer visibility into whether APIs are actually ready to be tested.

The new Reachability Test tab gives you a dedicated place to inspect endpoint readiness before test generation begins. It surfaces:

- the full list of API endpoints

- service mapping details

- reachability status

- response codes

- response descriptions

This enhancement turns what was previously harder to diagnose into something visible and actionable.

The Harness platform now uses reachability and authentication readiness as part of test generation control.

That means that no test cases are generated when:

- API endpoints are unreachable

- authentication is missing

- authentication fails

The reachability tests help ensure execution resources are spent on APIs that can actually produce meaningful results. For security teams, this creates a more efficient and trustworthy scan lifecycle with:

- less wasted runner consumption

- fewer irrelevant or misleading test artifacts

- cleaner signal in scan results

- better alignment between reported coverage and executable coverage

You can read more technical details here.

Taken together, these enhancements make API security testing more usable at the front end and more efficient at the back end. Teams can configure scans faster, with fewer errors and less dependency on expert intervention, while also improving the quality of what gets executed once a scan runs.

Get Started Today

These Harness API Testing features are available immediately with your existing Harness subscription. There is no additional cost or setup required.

- Current Customers: Log in to your dashboard today to test the security of your APIs seamlessly and more effectively.

- New to the Platform? If you aren't yet validating your API security, contact us to schedule a personalized demo of Harness API Testing in action.

Google Cloud Next ’26 Recap: AI, Efficiency, and the Rise of Frictionless Delivery

Summary: Google Cloud Next ’26 focused on the future of software delivery, emphasizing that AI, platform consolidation, and an urgent push toward efficiency are reshaping the Software Development Life Cycle (SDLC). The key takeaway from the event was that organizations are moving from AI experimentation to operationalization, actively consolidating fragmented tools onto end-to-end platforms that embed AI for control, intelligence, and speed.

Google Cloud Next 2026 made one thing clear: the future of software delivery is being reshaped in real time by AI, platform consolidation, and an urgent push toward efficiency. From the show floor to executive roundtables, the conversations we had reinforced a consistent theme: teams are looking to tackle the AI Velocity Paradox by simplifying, modernizing, and intelligently automating every stage of the SDLC.

Strong Signal from the Event

We had hundreds of meaningful conversations with engineering, platform, and cloud leaders. The patterns were unmistakable.

Across industries, organizations are grappling with:

- Fragmented CI/CD pipelines

- Manual or inefficient release processes

- Rising cloud costs without clear accountability

- Early, but serious, exploration of AI in the SDLC

These challenges mapped directly to Harness’ core solution areas:

- Software delivery & CI/CD modernization

- Database management & deployments

- AI-powered DevOps & FinOps

And the urgency is real. We spoke with teams:

- Still running homegrown deployment systems, shipping just twice a year due to regulatory constraints, but now exploring AI agents to orchestrate each pipeline step

- Deep in Jenkins-based environments, actively evaluating how to standardize pipelines and modernize security

- Managing multi-cloud footprints and searching for better cloud cost visibility and control

- Using CI tools with agents, but needing help scaling automation across build, test, and deploy workflows

- Leaning into AppSec integrations within CI/CD as security shifts further left

A recurring thread:

“We’re already experimenting with AI, but we need a platform that brings it all together.”

AI in the SDLC: From Curiosity to Commitment

If 2025 was about AI experimentation, 2026 is about operationalization.

We saw a sharp increase in:

- Interest in AI-assisted pipeline orchestration

- Discussions around AI-driven security and compliance workflows

- Demand for intelligent cost optimization tied to engineering activity

Multiple attendees explicitly mentioned:

- Building or planning AI agents across delivery pipelines

- Evaluating vendors based on AI capabilities within CI/CD and DevSecOps

- Wanting to understand how AI can reduce toil without sacrificing control

This shift aligns perfectly with Harness’ vision of AI-native software delivery, where intelligence is embedded, not bolted on. In fact, at Next, we announced a major step forward in making that vision real through our expanded partnership with Google Cloud. By integrating Google Cloud Developer Connect with the Harness Software Delivery Knowledge Graph, we’re enabling a unified layer of AI intelligence across the entire SDLC.

This means AI in Harness isn’t operating in silos. It has full, real-time context across code, pipelines, infrastructure, and runtime signals. The result is smarter automation, faster root cause analysis, and AI agents that can act with confidence, not guesswork. It’s a foundational step in moving from AI-assisted workflows to truly AI-native delivery systems, which is exactly what attendees told us they’re looking for.

A great example of this is Keller Williams. Keller Williams leveraged the Harness platform to transform their software delivery, increasing deployment frequency from a few times a year to over 20+ annual releases. By automating manual pipelines, the platform eliminates operational bottlenecks and allows its developers to focus on rapid innovation rather than deployment logistics.

DevOps Panel: The Future is Frictionless

Harness’s Martin Reynolds joined leaders from Atlassian, Datadog, LangChain, and Google to explore what’s next in a session titled: The Future of Developer Experience is Frictionless

The takeaway?

The next leap in productivity won’t come from isolated tools. It will come from connected, intelligent systems that remove friction entirely. With 150+ attendees on the final day, it was clear this message resonated.

The Bigger Picture: Platform Consolidation is Accelerating

Across every conversation, one strategic shift stood out:

Teams want fewer tools and smarter ones.

Organizations are actively:

- Replacing point solutions and legacy CI/CD tools

- Consolidating onto end-to-end platforms

- Prioritizing security, cost, and delivery in a single workflow

Jenkins modernization alone came up repeatedly. Not as a question of if, but when.

Recognition That Matters

The event kicked off with Google Cloud recognizing its partners. We were proud to be named Google Cloud’s 2026 Technology Partner of the Year, a reflection of the innovation and impact we’re delivering together with GCP.

Final Takeaway

Google Cloud Next ’26 wasn’t just about cloud. It was about control, intelligence, and speed.

The organizations moving fastest right now are:

- Embedding AI into their delivery workflows

- Rethinking how pipelines, security, and cost intersect

- Investing in platforms that eliminate—not add—complexity

Harness is uniquely positioned at that intersection.

And based on what we saw in Vegas, the demand for that future is only accelerating. Here’s the event recap video.

If you connected with us at the event or want to continue the conversation, we’d love to dive deeper.

.png)

.png)

Get Ship Done: Everything We Shipped in April 2026

It’s becoming increasingly clear that AI-generated code can create real challenges once it reaches production. At Harness, we’ve been focused on innovating fast and solving those problems, so teams can move quickly without sacrificing reliability.

In the past 30 days, we delivered 70+ new features. These features enable our users to ship fast, not by cutting corners, but by sharpening the feedback loops: faster builds, integrated security checks within the pipeline, deeper visibility into AI across discovery and testing, and deployment tools that are intuitive enough to use without a runbook.

Here’s a look at everything we shipped.

April Highlights

- Harness now lives inside the Cursor IDE. Developers and AI agents go from code change to vulnerability detection, CI/CD execution, security validation, approvals, deployments, and operational insight without leaving the editor.

- Google Cloud Developer Connect now integrates with our Software Delivery Knowledge Graph, giving teams a unified, AI-ready view of the entire software delivery lifecycle.

- With Warehouse Native Experimentation, you can run A/B tests and feature experiments directly in Snowflake, Redshift, or BigQuery, using your existing assignment and metric data as the source of truth. No data exports, no duplication outside your warehouse.

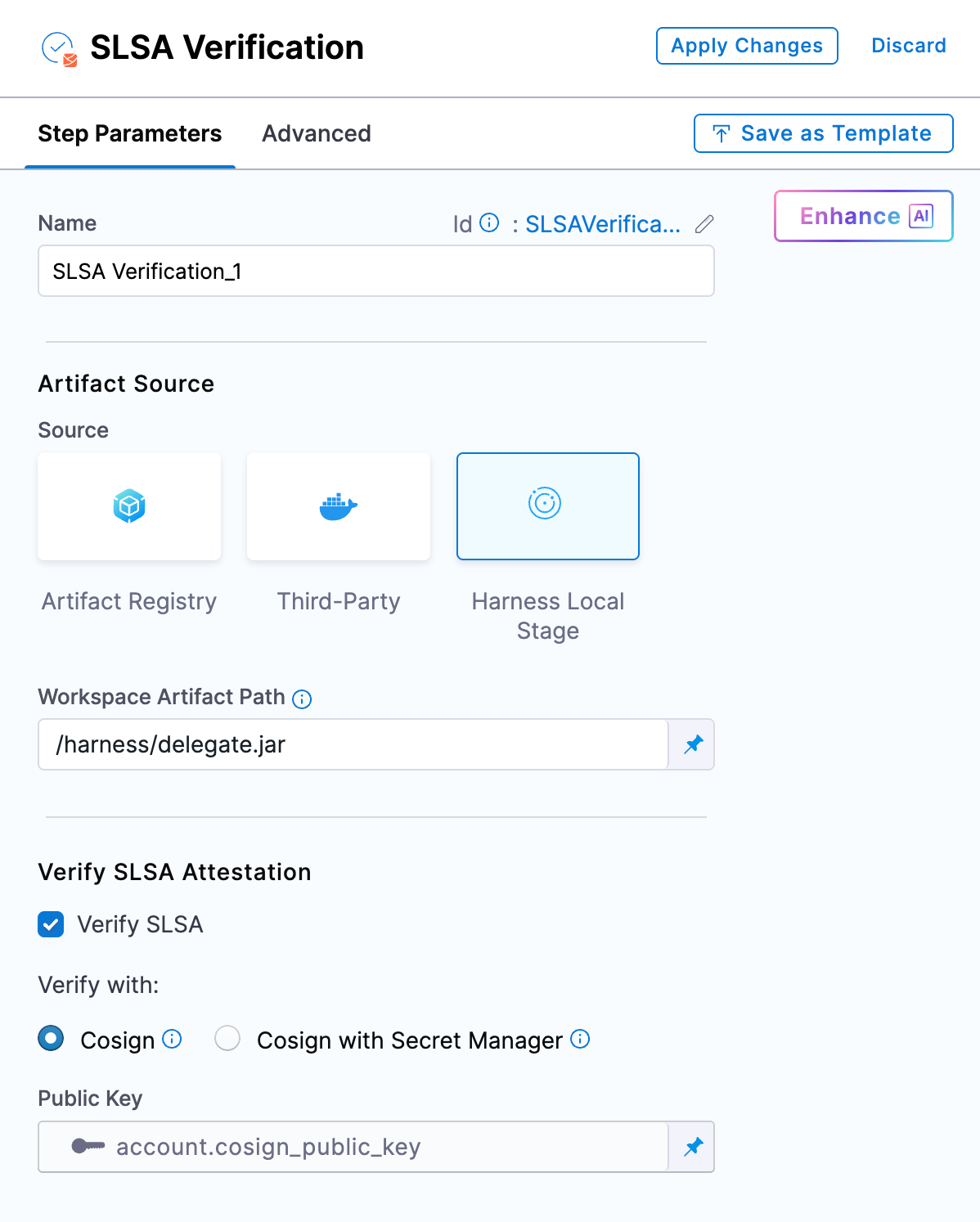

- With SLSA provenance for non-container artifacts, supply chain attestation now covers Helm charts, JAR/WAR files, and standalone binaries, not just container images. If you ship more than Docker images, your provenance story just got complete.

- When an incident closes, Harness AI SRE generates a structured six-section retrospective automatically. What typically takes 2-4 hours comes out in seconds, with action items captured in real time from Slack during the incident.

AI-Powered Development and Delivery

Cursor Plugin

Harness is introducing the Cursor Plugin, bringing AI-native software delivery directly into the Cursor editor. Developers and AI agents move from code changes to vulnerability detection, CI/CD execution, security validation, approvals, deployments, and operational insight without leaving the IDE. The integration includes the Harness Secure AI Coding hook for Cursor. Download the plugin.

Google Cloud Partnership for a unified AI for Software Delivery

We partnered with Google Cloud to integrate Developer Connect with our Software Delivery Knowledge Graph, giving teams a unified, AI-ready view of the entire software delivery lifecycle.

This enhanced context enables smarter, faster AI-driven decisions, helping engineering teams troubleshoot issues, improve accuracy, and deliver software with greater confidence and efficiency. Learn more.

Harness MCP Server Updates

The biggest additions to this version of the MCP server are pipeline YAML support so agent-driven pipelines work with the current schema, OSS vulnerability lookup for supply chain security with anti-fabrication extractors, and added resilience support. Download and get started.

Security Baked Into the Pipeline

SLSA Provenance for Non-Container Artifacts

Supply chain attestation via SLSA now covers Helm charts, JAR/WAR files, standalone binaries, and other artifact types, not just container images. Generate and verify provenance across the full artifact portfolio. Learn more.

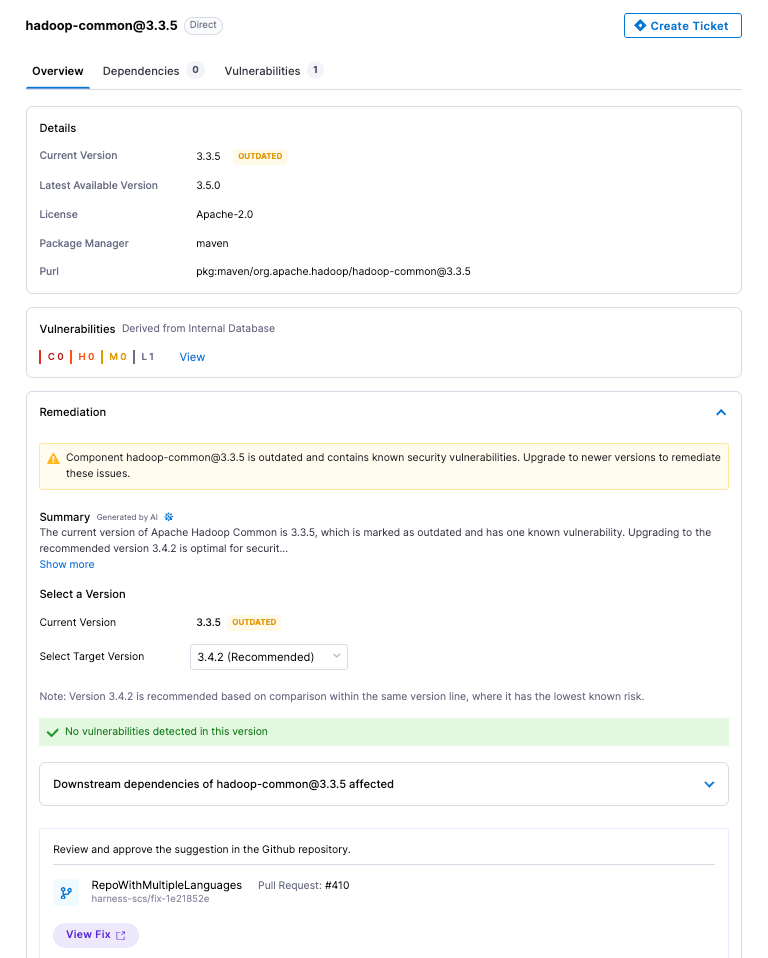

OSS Remediation for Code Repositories

Automated and manual remediation for vulnerable open-source components now runs directly against code repositories. When a dependency has a fix available, the tooling can apply it.

API Security Scan Configuration Revamp

The scan creation flow has been simplified into three logical groups: General, Source and Attacks, and Advanced Settings. Every field now has a tooltip and step-level documentation. Field-level validation catches misconfigurations before a scan runs.

Reachability Test for DAST and API Security Scans

Before generating test cases, a new Reachability Test validates that each API endpoint is actually reachable. Endpoints that don't respond don't generate test cases. Reduces wasted scan time against dead endpoints.

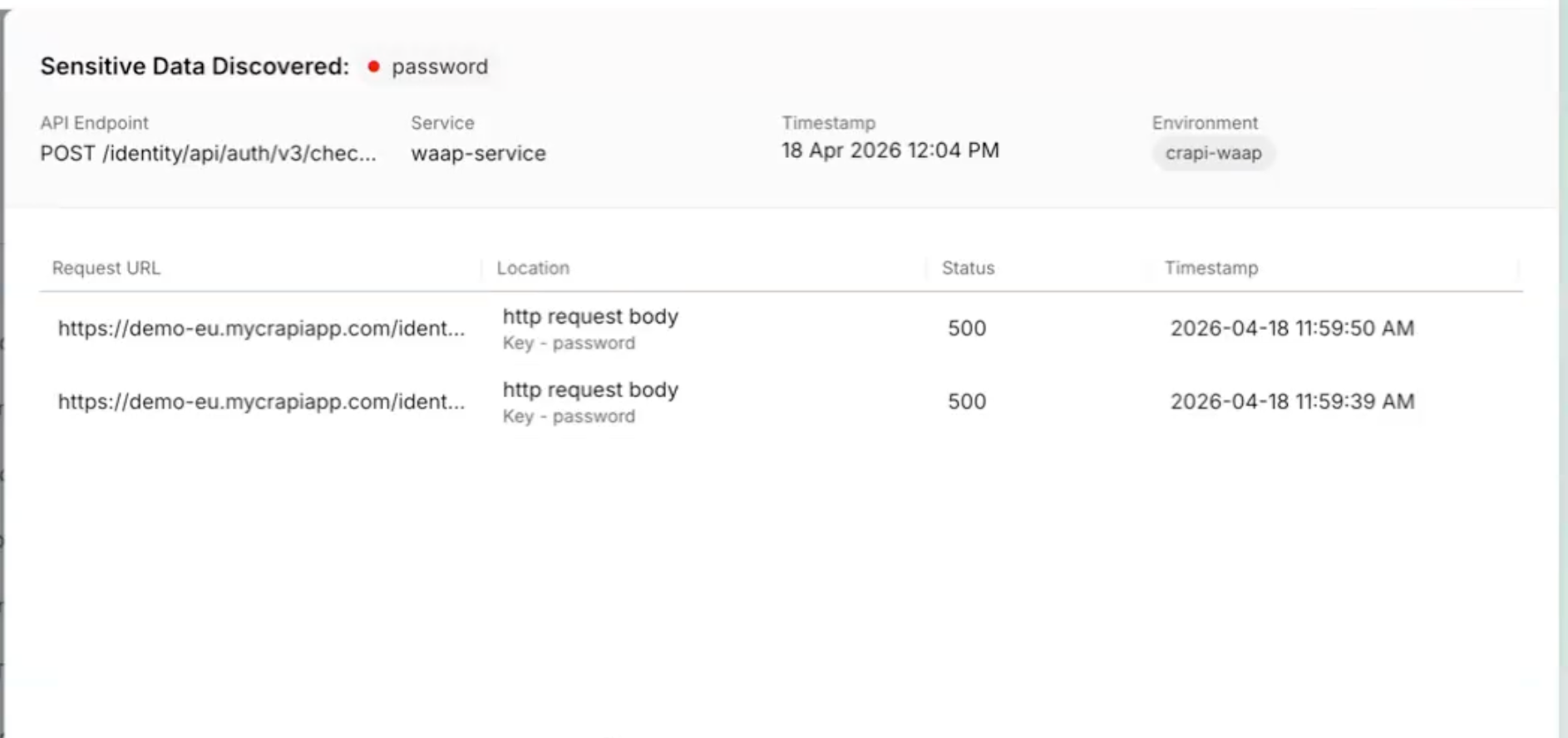

Posture Events: Sensitive Data Evidence

When a posture event involves sensitive data exposure, the finding now shows exactly where: which parameter, in the request or response, with the classification and dataset inline. Previously required navigating across modules to get this context.

All Occurrences Dashboard

A new account-level dashboard surfaces every raw vulnerability finding across all pipelines, not just the rolled-up view. Filter, export, and drill into file paths, line numbers, and repos. Useful when you need to understand whether a scanner finding is one instance or fifty. Release notes.

Prisma Cloud Scan Result Enhancements

Prisma Cloud (formerly Twistlock) scan results now include File Name, Distro, and Distro Release fields. The file name is derived from packagePath to improve traceability when the same vulnerability appears across multiple package locations.

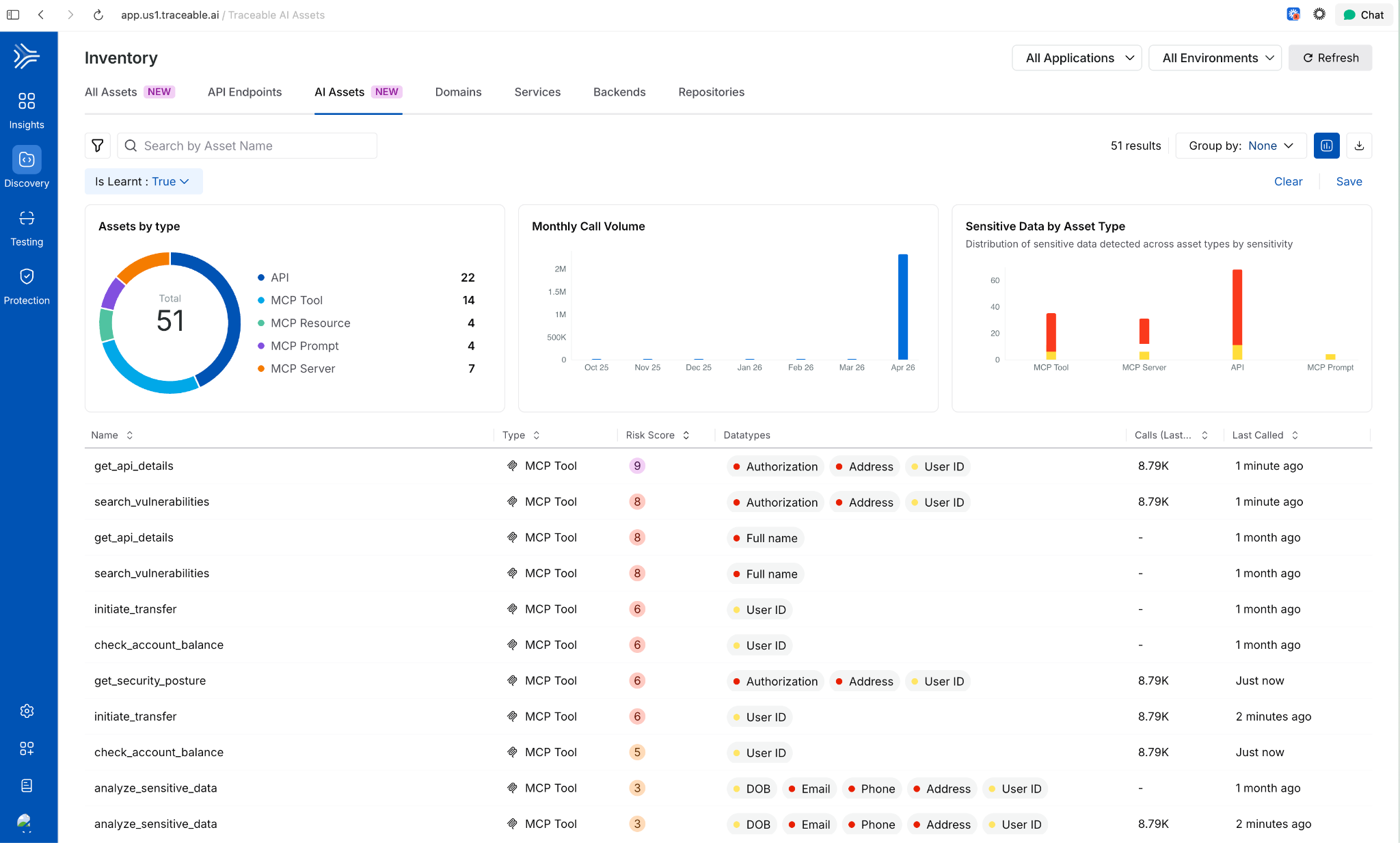

AI Asset Discovery and Risk Visibility

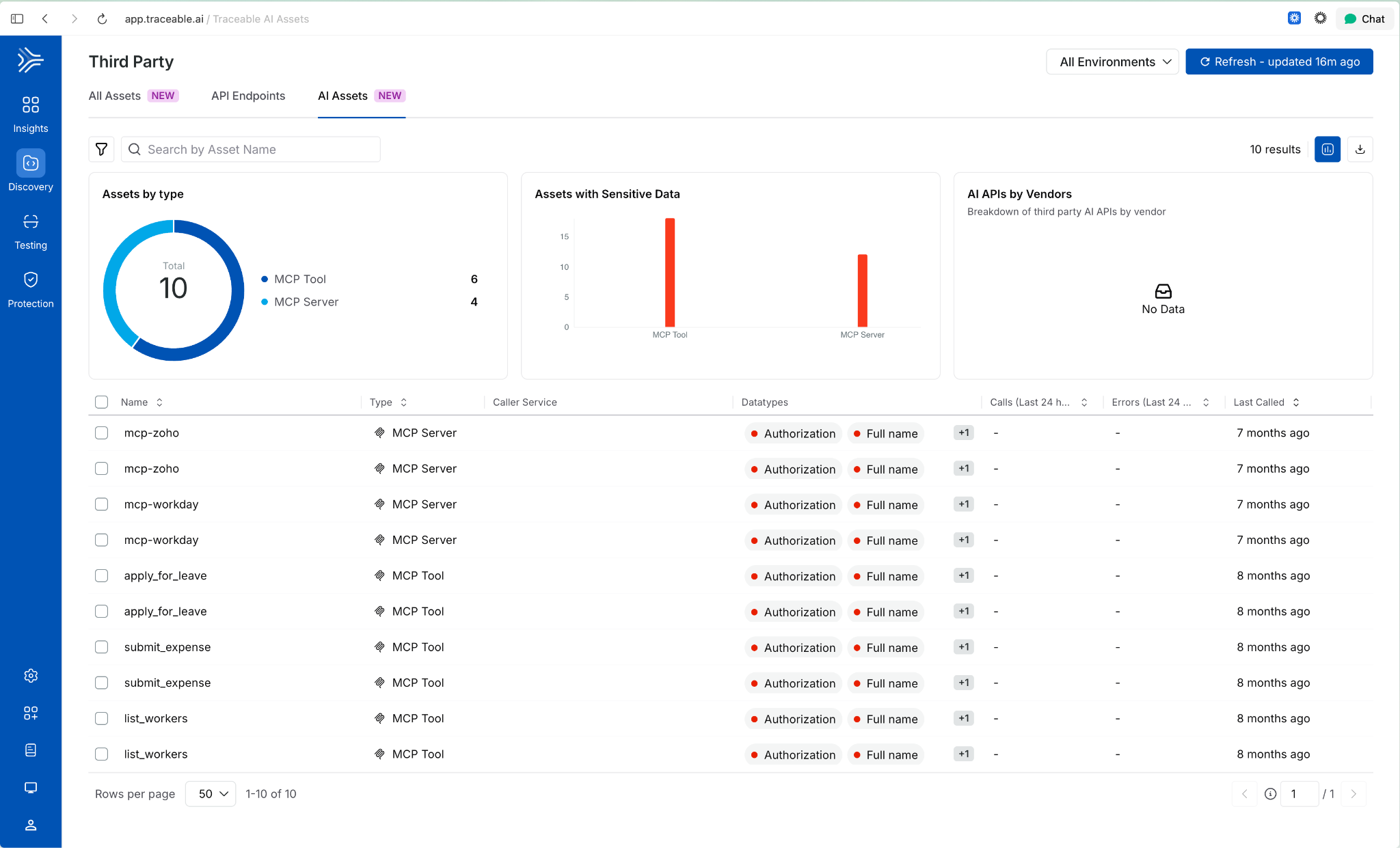

Third-Party MCP Discovery

Extends AI asset discovery beyond your own application ecosystem. Harness now surfaces external MCP servers and the AI assets they expose, giving security and platform teams visibility into AI interactions that originate outside their direct control.

Behavioral Insights Extended to MCP Tools

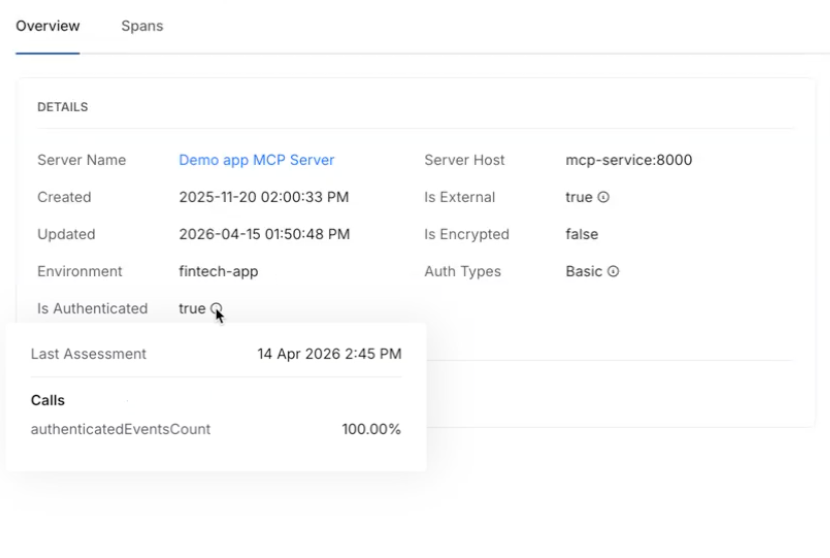

Internet exposure, encryption status, and authentication usage were previously available for APIs only. Those same behavioral signals now apply to MCP tools. View them via the info tooltip on any MCP tool in the inventory. Helps identify high-risk tools based on actual usage patterns, not configuration alone

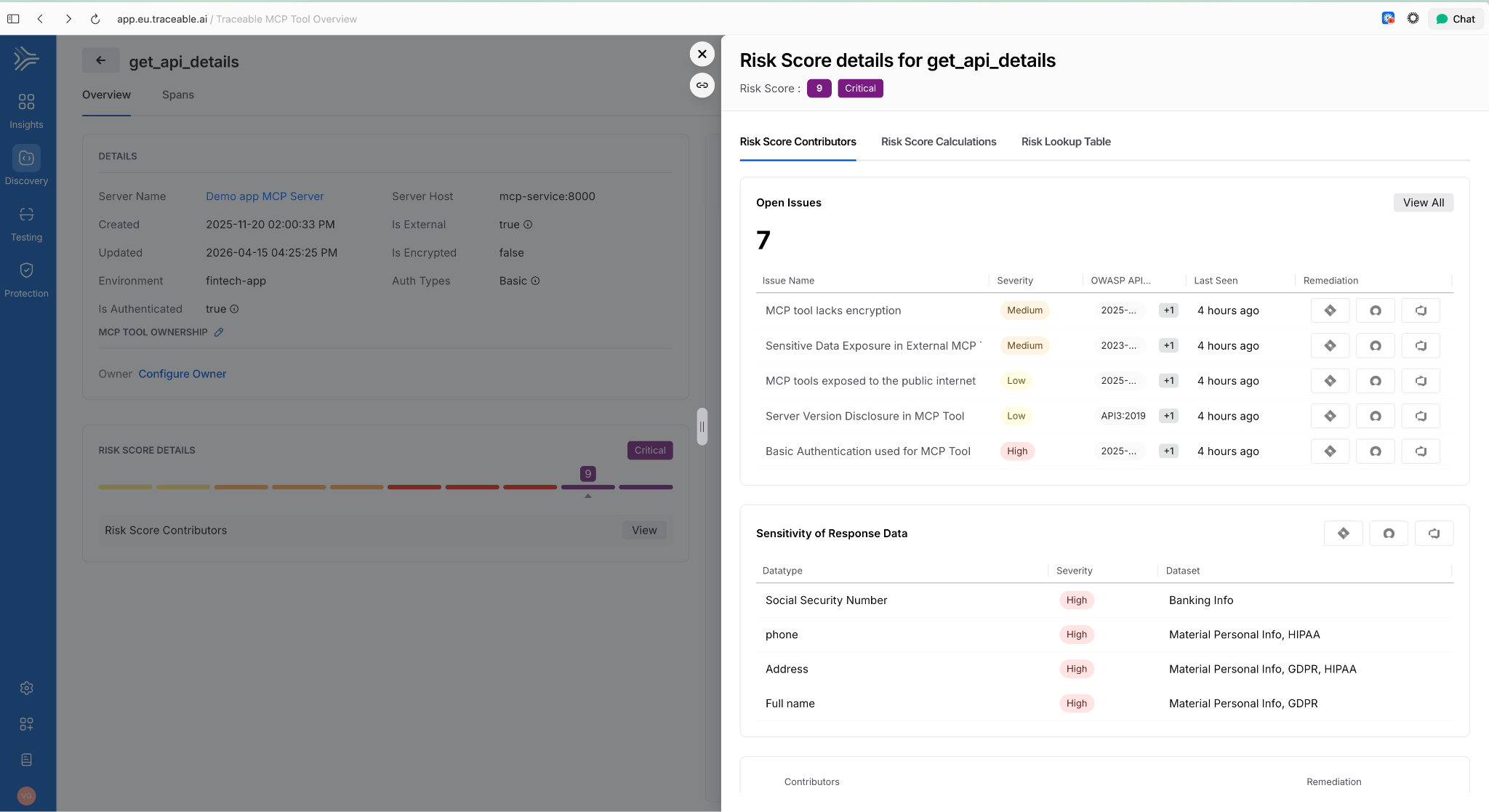

Risk Score Enhancements for APIs and MCP Tools

Two changes in one release: API risk now shows a unified view with contributing factors, the Likelihood vs. Impact calculation, and direct links to underlying issues. MCP tools now have their own dedicated risk scores using the same model. Side-sheet editing means you can act on a finding without leaving context.

AI Assets Tab and Licensing Visibility

A dedicated AI Assets tab provides a single view of all AI-related assets discovered in customer environments: AI APIs, MCP tools, models, and their usage patterns. Licensing visibility is included so teams can track AI consumption against entitlements.

Deploy Faster and More Reliably

Improved Pipeline Execution Layout

The pipeline execution listing page now uses a card-based layout. The Service and Environment columns are replaced by an Update Summary column showing service-to-environment mappings for CD stages and schema-to-instance mappings for Database DevOps stages, i.e., more signal per row!

AWS Connector Validation Without ec2:DescribeRegions

AWS connector validation now uses sts:GetCallerIdentity instead of ec2:DescribeRegions. The new call requires no IAM permissions, which means tighter least-privilege configurations no longer block connector setup.

ApplicationSet TemplatePatch Support

TemplatePatch configuration in GitOps ApplicationSets is now preserved in the Manifest Edit panel. Previously, setting TemplatePatch in the UI and saving caused the configuration to disappear.

Faster Builds

Cache Storage Connector Override in YAML

Self-hosted builds can now specify a stage-level connector override for cache storage in YAML. If not set, the connector from Default Settings is used. Useful when different stages need to read from different cache backends.

Containerless Step Binary Path

Containerless CI steps now use app.harness.io as the default download path for step binaries. This reduces egress dependencies on external sources.

Feature Flags and Experimentation

Warehouse Native Experimentation

Run experiments directly in your data warehouse using your own assignment and metric data. No exporting, no duplicating data outside your analytics source of truth. Supports Snowflake, Amazon Redshift, and Google BigQuery. Release notes.

Reallocate Traffic API

A new Reallocate Traffic endpoint lets you reset the bucketing seed for a feature flag in a specific environment via the API. Useful when you need to re-randomize user assignments without changing the flag configuration.

Infrastructure as Code

Native Terragrunt Support and Multi-IaC Orchestration

Teams can now orchestrate complex deployments across Terraform, OpenTofu, and Terragrunt in a single platform. A unified multi-IaC control plane eliminates fragmented tooling, standardizes workflows, and covers provisioning, configuration, and deployment consistently. Read the blog post.

AWS CDK Support (Beta) Define AWS infrastructure in TypeScript or Python using the AWS Cloud Development Kit and let Harness handle provisioning, state, and pipeline integration. Engineers who already write CDK don't need to learn HCL or adopt a separate tool.

Module Registry 2.0 Store IaC modules as artifacts natively in Harness, auto-sync new versions as they're published, and run module onboarding directly on Harness pipelines. A single place to manage the full module lifecycle: publishing, versioning, and consumption, without stitching together a registry, a pipeline tool, and a version tracker.

Terraform Sensitive Output Masking (Beta)

Output fields marked sensitive = true in your main.tf are now automatically masked in the pipeline Output tab during Terraform Apply step execution. Sensitive outputs remain accessible in downstream steps via Harness expressions, but don't appear in plain text in the UI.

Artifact Management

Swift Package Registry

Artifact Registry now supports Swift packages with full SwiftPM compatibility. Authenticate, publish, and resolve dependencies using the registry URL directly. Existing SwiftPM workflows work without changes. Release notes.

Raw File Registry

A new Raw File registry stores and retrieves arbitrary files by path: archives, reports, configuration files, binaries, anything that doesn't belong to a package manager. Upload and download via HTTP and curl. No specialized client required.

Copy Version Between Registries

Promote a specific package version from one Harness registry to another directly from the UI. No re-pushing from your machine, no scripts to move artifacts between project or organization registries.

Soft Delete for Artifacts and Versions

Deleting a package or version now moves it to a Deleted view where it remains recoverable until the retention window expires. Permanent delete is available from the same dialog when that's the intent.

Artifact Registry Audit Dashboard

An out-of-the-box dashboard records every artifact upload and download across all Harness Artifact Registries. Provisioned and maintained automatically for accounts with Artifact Registry enabled. No setup required.

Webhooks Extended to Python, Maven, and NuGet

Artifact Registry webhooks now cover Maven, NuGet, and Python (PyPI) in addition to existing package types. Use artifact events to trigger CI/CD, security scans, or notifications for more of your package ecosystem.

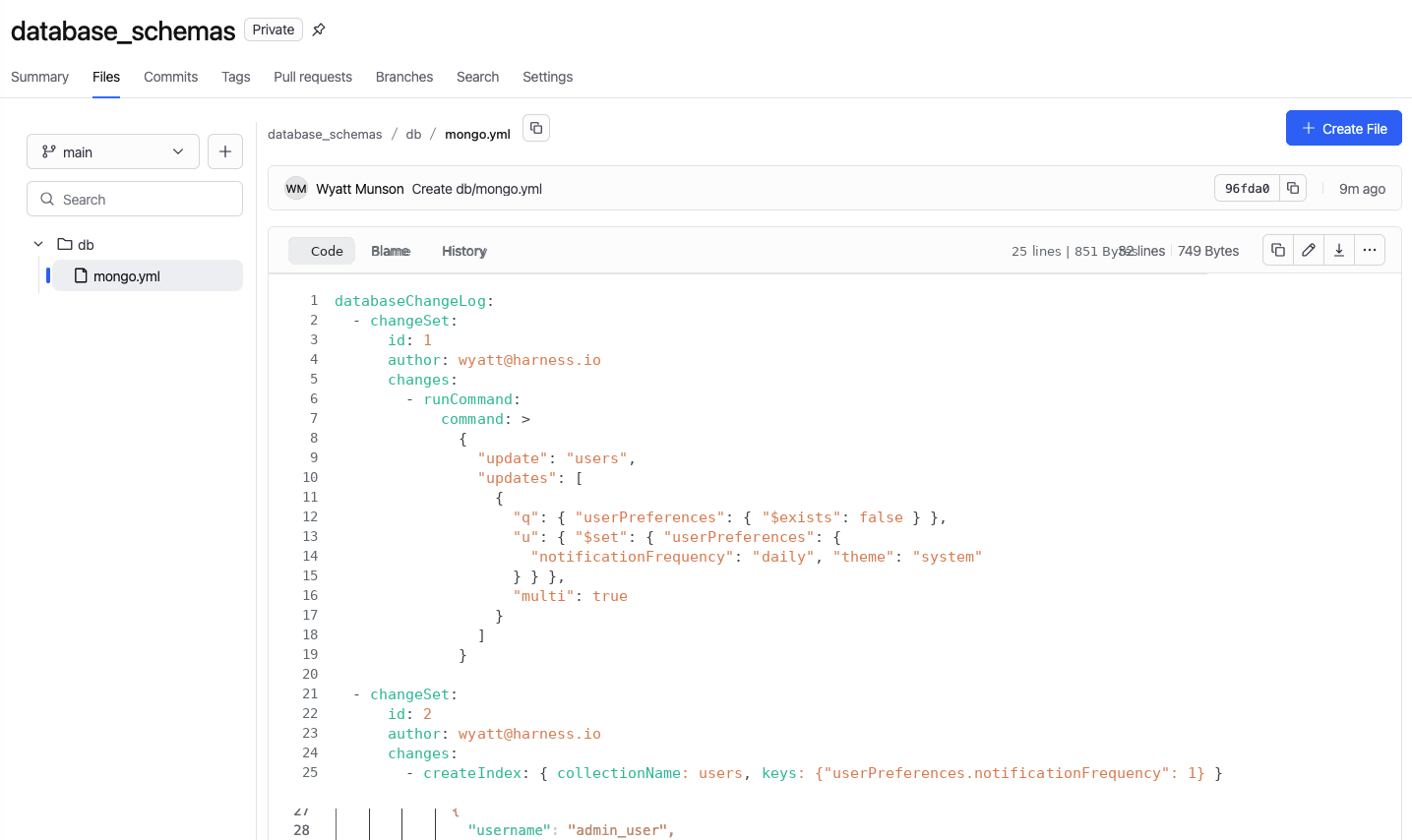

Database Changes Without the Drama

IBM DB2 Support

Database schema changes and migrations now work across all DB2 variants: DB2 LUW, DB2 for iSeries, and DB2 for z/OS. Mainframe and midrange databases now fit in the same pipeline workflow as everything else. Release notes.

Google BigQuery Support

Deploy database changes to BigQuery using the same Liquibase-based workflow used for relational databases. No separate tooling or custom scripting required.

Percona Toolkit for MySQL

Use Percona Toolkit natively in Harness Database DevOps to make MySQL schema changes safer and virtually downtime-free. Read the blog post.

ECS Support for Database Jobs (Early Access)

Database DevOps can now run deployment jobs on ECS Fargate instead of Kubernetes. For teams not running Kubernetes, this removes the requirement to stand up a cluster just to run database migrations. Contact Harness to enable. Read the docs.

Keyless Authentication for Google CloudSQL

Authenticate to CloudSQL (Postgres and MySQL variants) using the delegate's service account. No credentials to rotate, no secrets to manage. Read the docs.

OIDC Authentication for Google Cloud Databases

Authenticate to CloudSQL (Postgres and MySQL), Google Spanner, and Google BigQuery using OIDC. Works with any OIDC-compatible identity provider already in use for the rest of your Google Cloud infrastructure. Read the docs.

Engineering Metrics and Developer Portal

Environment Management

Developers can now self-serve dev, test, staging, and production environments directly from the developer portal. Platform teams configure the governance rules; developers provision within those bounds without opening a ticket. Read the blog post.

ServiceNow Integration for Engineering Metrics (Beta)

DORA metrics in the Efficiency Insights dashboard can now be calculated from ServiceNow incident and change management data. Deployment Frequency, Change Failure Rate, and MTTR all supported. Useful for teams where ServiceNow is the system of record for incidents, not a secondary tool.

Custom Dashboards in Engineering Metrics (Beta)

A new Canvas page (being renamed to Studio) lets teams build custom Insights dashboards using HQL queries across all data sources. Dashboards support Draft and Published states. Query Variables allow dashboards to adapt dynamically per team or environment.

Custom Entity Kinds in Developer Portal

Platform engineers can now define entity kinds beyond the built-in set (Component, API, Resource, Environment, System). Model domain-specific software components that don't fit existing kinds, with their own name, icon, and JSON Schema for validation. Release notes.

SonarQube Integration in Developer Portal

Harness connects to SonarQube Server (self-hosted) or SonarQube Cloud and brings projects into the developer portal catalog as catalog entities. Code quality data surfaces alongside the rest of your software catalog.

Scorecard Aggregation

Scorecard data can now be aggregated across multiple catalog entities. Roll up compliance and health metrics from individual components to systems or domains without manually combining reports.

Custom Dashboard Data Retention Extended to 12 Months

The data retention period for custom dashboards increased from 3 months to 12 months. Longer historical windows for trend analysis and compliance reporting.

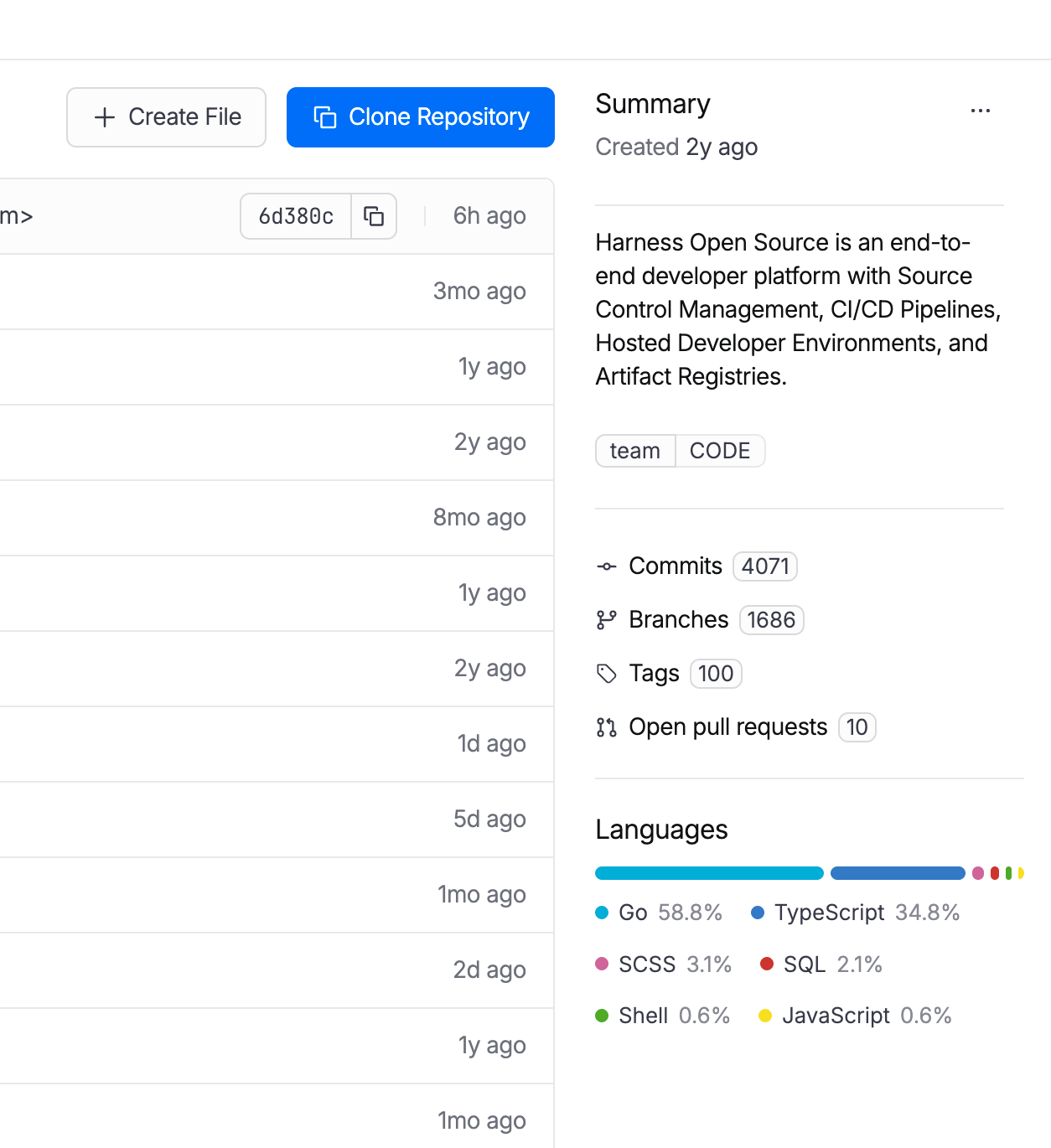

Code Repository Language Breakdown

Developers can now see the language composition of any repository directly in the list view and repo detail page. Particularly useful when migrating off other source control systems and auditing what you're moving.

Code Repository Tags Repositories can now be tagged with metadata like team, intent, or domain, consistent with how pipelines, connectors, and other Harness entities are tagged. Useful for filtering, governance, and search at scale.

AI SRE

AI-Generated Post-Mortems with Action Item Detection

When an incident closes, AI SRE automatically generates a structured six-section retrospective: Summary, Impact, Root Cause, Resolution, Insights, and Lessons Learned. The AI synthesizes the full incident context: timeline events, Slack conversations, RCA theories, and responder actions. What typically takes a lead engineer 2-4 hours to write comes out in seconds. Action items are detected in real time from Slack conversations and meeting transcripts during the incident, with each item including a description and the responsible person extracted from context. They carry forward into the post-mortem automatically, so nothing gets reconstructed from memory days later. Release notes.

ServiceNow Change Record Correlation in RCA

When an incident fires, the AI Investigator automatically pulls recent ServiceNow change records and correlates them to the incident timeline. If your organization already has a Harness ServiceNow connector configured for pipelines or approvals, change data flows into root cause analysis immediately with zero additional setup. Change records appear alongside deploy events and code changes in the AI's correlation engine, reducing manual cross-referencing between tools. Documentation.

Stakeholder Status Updates

Incident commanders can now broadcast structured status updates to subscribed stakeholders (executives, customer support, dependent teams) without flooding the war room. Stakeholders subscribe to the services they care about and receive updates triggered by the Incident Lead. The system pre-populates a branded email with incident ID, title, summary, impacted services, and current status. The sender reviews, edits if needed, and sends. Eliminates the "what's the status?" interruptions that pull responders out of active response. Release notes.

Google Chat Integration

Teams running Google Workspace can now run incident response directly from Google Chat: create incident channels, post updates, receive notifications, and collaborate in real time. Uses a Pub/Sub-based architecture for reliable message delivery. Bring incident collaboration to Google Chat on par with the existing Slack integration. One-time admin setup per organization.

Runbook Slug Commands in Slack

On-call responders can now trigger runbook automations from Slack using short slug commands: /harness run <slug>. No UI navigation during high-pressure response. Common actions like restart-pods or scale-up become muscle memory. Removes a context switch from the critical path during active incidents. Release notes.

Chaos Engineering and Resilience Testing

MCP support for Resilience Testing

MCP support for Resilience Testing improves extensibility across chaos and resilience workflows.

Pipeline Integration with Chaos Step Templates

Any experiment template can now be referenced and used from any scope in a pipeline. Makes it easier to standardize chaos execution across delivery workflows.

Probes and Observability Splunk Enterprise and Datadog APM Probes

APM probes now support Splunk Enterprise and Datadog. Teams can validate system behavior during experiments using the observability tools they already rely on.

Namespace Label Filters in ChaosGuard

ChaosGuard conditions now support namespace label filters, giving teams finer-grained control over which namespaces chaos experiments can target. Release notes.

Experiment Run Reports

Experiment run reports are now available in the UI and accessible via a new API endpoint that returns report data as JSON. Useful for integrating chaos results into external dashboards or compliance workflows.

Docker Labels-Based Chaos Injection on ECS

Added support for targeting ECS in-VM SSM chaos injection using Docker labels. Expands targeting flexibility for teams running mixed ECS workloads.

Other Updates

- Service account token notifications: Configure alerts for token creation, rotation, updates, expiration, deletion, and upcoming expiration across your notification channels.

- Cloud cost RBAC enforcement: Users with CCM Viewer (view-only) access no longer see an enabled Save Preferences button in Cost Settings. RBAC is now consistently enforced across the Recommendations and Anomalies pages. Release notes.

- Rejected and Ignored recommendations moved to main view: These lists are now in the Recommendations list itself, enabling direct export without extra navigation.

- AI test automation task nesting limit: Tasks can now nest at most two levels deep, preventing runaway task hierarchies. Enforced in both UI and backend. Release notes.

- Chaos Engineering LLM optimization: Recommendation calls are now processed in chunks instead of one-by-one, reducing latency for experiment recommendation generation.

- IDP sync and delete for integrations: Integration instances (ServiceNow, Kubernetes, SonarQube, GitHub) now include sync and delete actions directly in the UI.

- IDP separates create and edit permissions: Environment and Blueprint permissions are split into distinct Create and Edit actions for finer-grained access control.

- IDP custom user identifiers: The saveDiscoverEntities API now accepts an explicit action_identifier field when registering catalog entities.

Closing

70+ features in 30 days. The teams using AI to accelerate code generation are now running into the same reality we tracked in March: the bottleneck isn't writing code, it's everything downstream. Artifact management, security posture, deployment reliability, incident response, and AI asset governance. April's releases push the feedback loop tighter at each of those stages. Post-mortems that took 4 hours now take seconds. The change record correlation that required manual cross-referencing now happens automatically.

The velocity compounds when the whole software delivery moves together, not just the part where the AI writes code.

See you in May!

From PR to Production Without Leaving Your Cursor IDE

TLDR: Today, Harness is introducing the Harness Cursor Plugin, bringing the power of the Harness AI-native software delivery platform directly into Cursor. This integration, along with the Harness Secure AI Coding hook for Cursor, allows developers and AI agents to move from code changes to vulnerability detection, CI/CD execution, security validation, approvals, deployments, and operational insight without leaving the editor.

AI has completely changed how we write code. You can spin up functions, refactor entire files, and generate tests in seconds. The inner loop, writing and iterating on code, has never been faster. But the moment you try to ship that code, everything slows down. This is what we call the AI Velocity Paradox.

You are suddenly back to juggling pipelines, waiting on approvals, checking security scans, debugging failed runs, and bouncing between tools just to get a change into production.

That gap, between fast code and slow delivery, is what we kept running into. So we built something to fix it.

Today, we are introducing the Harness Plugin for Cursor, a way to go from PR to production without leaving your editor.

AI Made Coding Faster, But Delivery Did Not Catch Up

If you are using agentic coding tools, such as Cursor, you have probably felt this.

You can:

- Generate code instantly

- Understand unfamiliar repos faster

- Fix bugs and open PRs in minutes

But shipping still depends on everything outside your editor:

- CI/CD pipelines

- Security checks

- Approval flows

- Policy enforcement

- Deployment tooling

- Monitoring and debugging

And none of that got simpler just because AI showed up. In fact, AI makes the problem more obvious.

Now you can create changes faster than your delivery process can safely handle. And if those controls are not tight, you are introducing a whole new category of risk. Fast-moving code with fragmented governance.

AI did not break software delivery. It exposed how disconnected it already was.

What If You Could Just Ask

Instead of jumping between tools, what if you could just tell your editor what you want to happen?

Something like:

“Deploy PR #4821 to staging once the security scan passes, and Slack me if anything fails.”

That is the idea behind the Harness Cursor Plugin.

It connects Cursor directly to Harness, so you can trigger and manage your entire delivery workflow using natural language, right inside Cursor.

No tab switching. No manual orchestration. No guessing what is happening in the pipeline.

Some Sample Use Cases

Once connected, you can use Cursor to interact with your delivery system just as you do with your code.

For example, you can:

This builds on what we introduced last month, Secure AI Coding, which integrates directly with Cursor and scans code at the moment of generation rather than waiting for a PR review. Developers see inline vulnerability warnings with the option to send flagged code back to the agent for remediation, without leaving their workflow. Under the hood, it leverages Harness's Code Property Graph (CPG) to trace data flows across the entire codebase, surfacing complex vulnerabilities that simpler linting tools would miss.

The key thing is that you are no longer just interacting with code. You are interacting with the entire delivery system from the same place.

The Important Part: This Is Not Skipping Control

One of the biggest concerns with AI in delivery is obvious:

“Are we about to let agents push code to production without guardrails?”

No.

With Harness, everything runs through the controls that you can rely on:

- Granular RBAC permissions

- OPA policies

- Approval gates

- Audit logs

Instead of being manual checkpoints spread across tools, they are enforced automatically as part of the workflow while you stay in flow.

So AI can help move things faster, but it cannot bypass the governance that matters.

Why We Built It This Way

Most integrations today expose APIs or bolt AI onto existing systems. That is not what we wanted to do.

We designed the Harness Cursor Plugin specifically for how AI agents actually work:

- It is built around actions and workflows, not raw endpoints

- It spans the full delivery lifecycle, not just one step

- It gives agents enough context to reason about what to do next

Because shipping software is not a single action. It is a chain of decisions across CI, CD, security, approvals, and operations. If AI is going to help here, it needs access to that full picture. That’s where the Harness Software Delivery Knowledge Graph comes into play. It provides the necessary context for AI to take actions for you.

The knowledge graph models the relationships between services, pipelines, environments, policies, and operational signals in real time. Instead of treating each step in delivery as an isolated task, it creates a connected system of record that AI can reason over. This allows agents to understand not just what to do, but when and why to do it, based on dependencies, risk signals, and historical behavior.

In practice, this means smarter automation: deployments that adapt to context, approvals that are triggered based on policy and impact, and faster root cause analysis because the system already understands how everything is connected.

This Changes How Ideas Move To Prod

This is not just about convenience. It is a shift in how software actually moves from idea to production.

Instead of:

- Writing code in one place

- Managing delivery somewhere else

- And stitching it all together manually

You get a single, connected workflow:

- Code to pipeline to validation to deployment to operations

All accessible from your editor. Cursor accelerates the building. Harness governs the shipping. And the handoff between the two disappears.

Watch the demo:

Getting Started

If you want to try it:

- Install the Harness Cursor Plugin from the Cursor Marketplace

- Authenticate with Harness using OAuth. No API keys or setup headaches

- Start using natural language to run pipelines, debug issues, and manage deployments

For example:

“Run the CI pipeline for this branch, check if the security scan passed, and promote to staging if it did.”

That is it.

AI is not just changing how we write code. It is changing expectations for how fast we should be able to ship it. But speed without control does not work in real environments. What we are building toward is something simpler:

A world where every step, from PR to production, is:

- Fast

- Governed

- Observable

- Auditable

Without forcing developers to leave their flow. This plugin is one step in that direction.

AI writes the code. Who delivers it safely?

The question for enterprise AI in 2026 is no longer just which model. It’s which harness.

An agent harness is the system around the model. It decides what the agent remembers, what context it sees, what tools it can call, what it is allowed to do, and what happens when it is wrong.

The model provides intelligence. The harness provides control.

This is where the real engineering is happening. When Claude Code's source was accidentally exposed earlier this year, reports put it at more than half a million lines. None of that was the model. All of it was the system around the model.

The model gets you started. The harness gets you to production.

In software engineering

Software engineering is one of the first places this plays out. AI coding tools are writing and editing code. Autonomous agents are starting to deploy, operate, and respond to incidents. These are not suggestions anymore. They are changes to running software, made by agents acting on their own.

And one harness is not enough.

Two loops, two harnesses

Software engineering has two halves at the level that matters for agent harness design. Software development, where code gets written. Software delivery, where code becomes running software.

The inner loop is software development. Code gets written, edited, tested, and reviewed. Coding agents work here, close to the developer and bounded by the repository. Whether they live in an IDE, a terminal, a background session, or a web workspace doesn’t change what they do. They help one person write better code faster.

The outer loop is software delivery. Code becomes software that is built, tested, secured, deployed, verified, operated, and sometimes rolled back. That includes CI, security scans, deployments, infrastructure, feature flags, incidents, and approvals.

The two are loops different. The inner loop is about individual productivity. The outer loop is about organizational execution under risk. It crosses teams, touches production, uses secrets, enforces policy, and leaves an audit trail.

An agent delivering software can’t be a coding assistant with API access. It has to run inside a system that enforces the organization’s rules.

What goes wrong without the right harness

The stakes are easier to see by starting with what breaks.

Security. An agent with broad access to deploy, provision, and push config changes is a new attack surface. Prompt injection through a PR description, a poisoned dependency, or a malicious issue comment can turn an autonomous agent into the most privileged insider threat in the company. It acts under its own identity, with its own scoped credentials, doing exactly what it’s authorized to do. The attacker just redirects the authorization. Without an identity model and governed execution, every action the agent can take becomes a potential action path for an attacker.

Compliance. An agent that ships code without the same policy gates, approvals, and audit trails humans use creates a parallel path that regulators and auditors will challenge. A single deployment that skipped EU data residency review can trigger a finding that takes quarters to close. Cyber insurers are starting to scrutinize AI governance, and some are exploring exclusions or tighter terms for poorly governed AI. Within a year or two, “we have autonomous agents deploying code without an evidence trail” will be impossible to defend. Autonomous delivery without verification is autonomous liability.

Confident bad decisions. An agent with partial context looks like it’s working. It deploys during a change freeze. It rolls out a config change that breaks an upstream service. It enables a feature flag during an incident. Each failure is locally reasonable and globally wrong. Without the full knowledge graph, the agent keeps making the wrong call.

AI-specific failure modes. Autonomous agents fail in ways that deterministic automation doesn’t. They hallucinate actions, generating and deploying a Kubernetes manifest that doesn’t match reality. They get stuck in loops, rolling back and redeploying the same change until a human kills the process. They’re confidently wrong, proposing a fix that passes a weak policy gate and breaks production an hour later. No attacker involved. Without verification strong enough to catch them, errors reach production.

All of this has happened with deterministic automation, one mistake at a time. With autonomous agents, errors happen in parallel. A coding agent with bad context can push 10 broken PRs in 10 minutes. A delivery agent without verification can deploy 20 services before anyone notices.

Speed used to be the feature. With autonomous agents, speed is also the damage multiplier.

What a software delivery agent actually needs

A software delivery agent needs four things: memory, context, tools, and verification. The shape and stakes of each element are distinct.

Suppose a team is shipping a new version of a retailer’s checkout service on Thursday. Checkout depends on payments, inventory, fraud, and identity.

Memory: a graph of how your company ships

A Software Delivery Knowledge Graph is a connected map of services, teams, pipelines, deployments, incidents, policies, scorecards, and artifacts. Nodes and edges show how they all relate.

To answer “Is checkout safe to ship Thursday?”, the agent has to know which services checkout depends on, what their scorecards look like, whether any have open critical CVEs, whether there’s a change freeze, and who’s on call Thursday night.

Tha’is a graph query. If the agent doesn’t have the graph, it’s guessing.

Context: the live signal

Memory is the durable map. Context is the live signal. Memory tells the agent how the delivery system is connected. Context tells it what’s happening now.

Back to checkout. The agent sees that a chaos experiment last week showed payments fail when its Redis cache is unavailable. It sees that yesterday’s security scan flagged a critical CVE in a library fraud detection depends on. It sees that the new version changes the same config flag that caused an incident two weeks ago.

None of this is in the pull request. All of it matters.

Context isn’t something you assemble from scratch at runtime. It accumulates in the harness long before the agent is asked to act.

Tools: governed execution

People often assume “tools” means function calls to APIs. For a software delivery agent, it means something different. The agent can deploy to Kubernetes, run a database migration, apply a feature flag, trigger a security scan, run a chaos experiment, open and close an incident. Real actions, inside your network, using your credentials, under your policies, with full audit logging.

At Harness, every action runs through a Delegate: a lightweight worker inside your environment. Your VPC, your Kubernetes cluster, your data center. The agent issues an instruction. The Delegate executes it inside your perimeter and returns the result.

Secrets are decrypted inside the Delegate. Never in the agent’s context window, never in a model provider's memory, never in an audit log.

An agent with arbitrary production access is dangerous. An agent constrained by governed execution is governable.

Verification: proving the action was safe

This is the pillar coding and personal productivity agents don’t need at this depth. Software delivery agents do.

Three mechanisms make it concrete:

- Scorecards grade services against rules the organization defines. Test coverage, SLO compliance, library currency, critical CVEs. Every rule measurable. Every score live. Thresholds set by the organization.

- Policy gates block actions until conditions are met. “No deployment without a passing scorecard.” “No EU infrastructure change without a named EU approver.” The gate sits in the pipeline. The agent can’t route around it.

- Evidence is cryptographically signed proof that each action met its policy. When an auditor asks, “prove last Tuesday’s deployment passed security testing,” the system returns a tamper-evident record.

For checkout, the Thursday release is blocked unless the scorecard passes, no critical CVEs are open, no change freeze applies, and an EU compliance approver signs off. If any of those fail, the agent cannot deploy. If they all pass, the deployment runs through a Delegate and an evidence record is written.

The rules of the organization are enforced in the harness. The agent operates inside them.

The foundation is already built

I mentioned that an agent needs memory, context, tools, and verification. The good news: a modern software delivery platform like Harness already has the foundations, because truly automated delivery has always needed those four things.

A note on our name. We called the company Harness in 2017 because the original thesis was a safety harness for code: let developers move fast without breaking things. Pipelines, policies, approvals, rollbacks, evidence. The scaffolding that lets speed and safety coexist.

That thesis hasn’t changed. The mover has. Developers are still moving fast. AI agents are moving fast too, and faster. The harness has to hold both.

Pipelines aren’t agents. Pipelines are the harness that lets agents safely act. They’re the control plane where agent actions are evaluated, constrained, and executed under policy.

The word “pipeline” carries baggage. Many people hear “script runner.” That isn’t what we mean. Harness pipelines are production orchestration engines: loops, matrix runs, parallel stages, conditions, approvals, OPA gates, rollback, retries, and deterministic-plus-agentic step-chaining.

An agent step can run inside a loop. A deterministic step can pass output to an agent, then to a policy gate, an approval, another agent, and a deployment. The agent isn’t replacing the pipeline. The agent is one kind of step the pipeline already knows how to run.

Harness pipelines execute hundreds of millions of runs a year across enterprise production systems. That isn’t a theoretical runtime for agents. It’s a runtime already hardened at scale, on real delivery, under real policy, with real rollback. That’s the difference between a script runner and a production harness for autonomous action.

The rest of the foundation maps the same way. The Delegate is how actions reach your infrastructure. The Software Delivery Knowledge Graph is the memory. Our platform modules are the tools. Scorecards, policy gates, and signed evidence are the verification. Harness AI, the intelligence layer on top, uses all four of these elements.

We didn’t set out to build an agent harness. We set out to build a software delivery platform with AI at its core. It turns out those two things are the same.

Why coding agents are a different harness

Coding agents (IDE copilots, background agents, terminal-based assistants, cloud coding sessions) are built for a different job. They know your codebase, your style, your recent commits. That’s a real harness, bounded by the repository and the developer. A software delivery harness has different scope, memory, risks, and accountability.

A coding agent’s memory is the repository. A software delivery agent’s memory is the organization.

The context gap. Ask your coding assistant: “Is it safe to deploy this checkout change to production tonight?” It can’t answer. It doesn’t know the current scorecard, the change freeze status, last week’s chaos test results, or who’s on call. None of that lives inside the developer's workspace. A coding agent can write a change. It can’t know if the change is safe to ship.

The blast radius gap. A coding agent’s bad change usually gets caught before it hurts anything: in review, in CI, in a security scan, on a policy gate. Fifteen minutes wasted, not a production incident. A software delivery agent’s worst day is customer data exposure, a production outage, or a regulatory incident. Same agent paradigm, radically different blast radius.

The safety-net gap. Both kinds of agents are moving toward less human oversight. The difference is what catches them when they’re wrong. A coding agent mistake gets caught downstream: by CI, by security scans, by policy gates, by the delivery harness itself. A delivery agent mistake has nothing downstream. It is the downstream.

The control-plane gap. Could a coding agent call Harness as a backend? Of course. It should. But the caller isn’t the control plane. The software delivery harness decides whether the request is allowed, how it executes, and what evidence is retained.

The preference gap. Developers are going to pick their own coding agents. Most enterprises already run two or three: Cursor on some teams, Claude Code on others, Copilot on others, whatever ships next year on yet other teams. That’s healthy. Software development is distributed by design. Software delivery is the opposite: it’s centralized. One company, one delivery control plane. One set of policies, one audit trail, one source of evidence, one place where credentials are held.

The winning pattern is the two meeting cleanly: whichever coding agent the developer picks, the deployment passes through the same delivery harness.

Why model providers aren’t the delivery harness today

Managed agents. Stateful APIs. Server-side memory. Model providers are extending into harness territory, and for many use cases, that works. For software delivery specifically, the architecture runs into a different set of constraints.

The credentials problem. Every software delivery action requires production credentials: cloud admin roles, Kubernetes service accounts, database passwords, secrets manager keys. The most sensitive assets in the company. Enterprises spend years building the controls around them: vaults, rotation, scoped access, audit trails. A model-provider-hosted agent loop would require those credentials to flow through the model provider’s infrastructure on every action. Few CISOs will approve it. Few auditors will sign off. In regulated industries, it’s often a non-starter.