Harness Blog

Featured Blogs


We’ve come a long way in how we build and deliver software. Continuous Integration (CI) is automated, Continuous Delivery (CD) is fast, and teams can ship code quickly and often. But environments are still messy.

Shared staging systems break when too many teams deploy at once, while developers wait on infrastructure changes. Test environments get created and forgotten, and over time, what is running in the cloud stops matching what was written in code.

We have made deployments smooth and reliable, but managing environments still feels manual and unpredictable. That gap has quietly become one of the biggest slowdowns in modern software delivery.

This is the hidden bottleneck in platform engineering, and it's a challenge enterprise teams are actively working to solve.

As Steve Day, Enterprise Technology Executive at National Australia Bank, shared:

“As we’ve scaled our engineering focus, removing friction has been critical to delivering better outcomes for our customers and colleagues. Partnering with Harness has helped us give teams self-service access to environments directly within their workflow, so they can move faster and innovate safely, while still meeting the security and governance expectations of a regulated bank.”

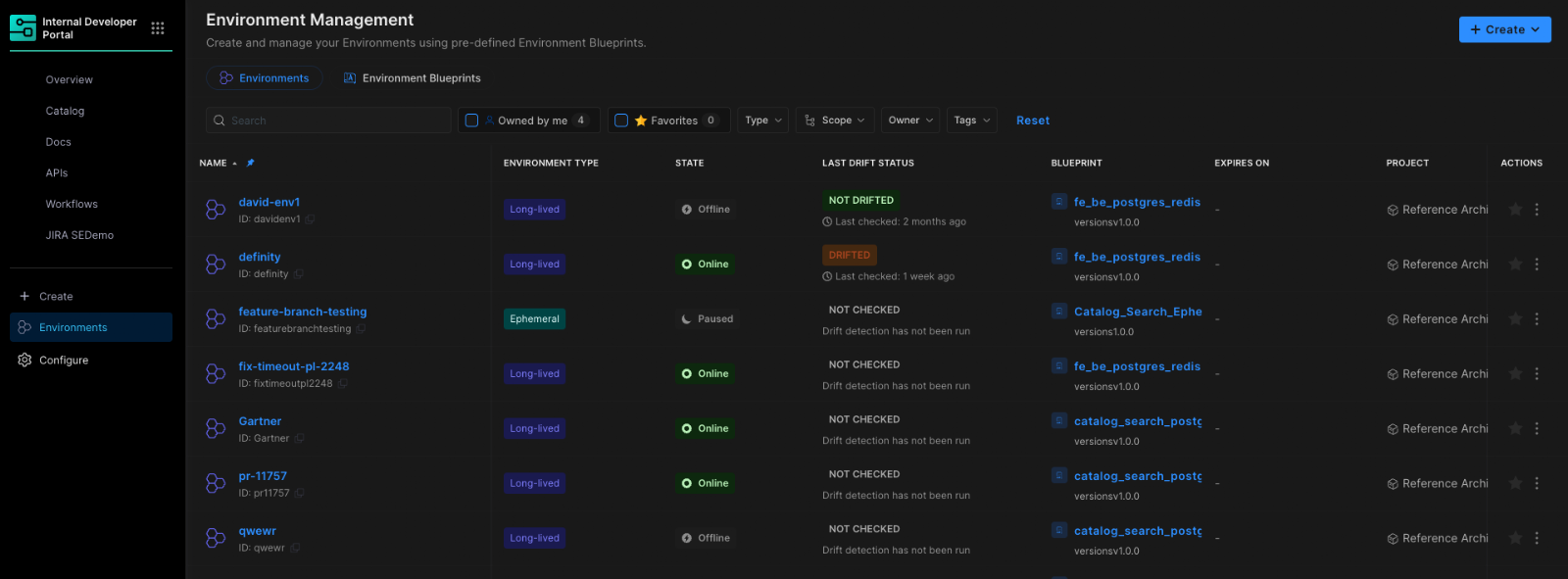

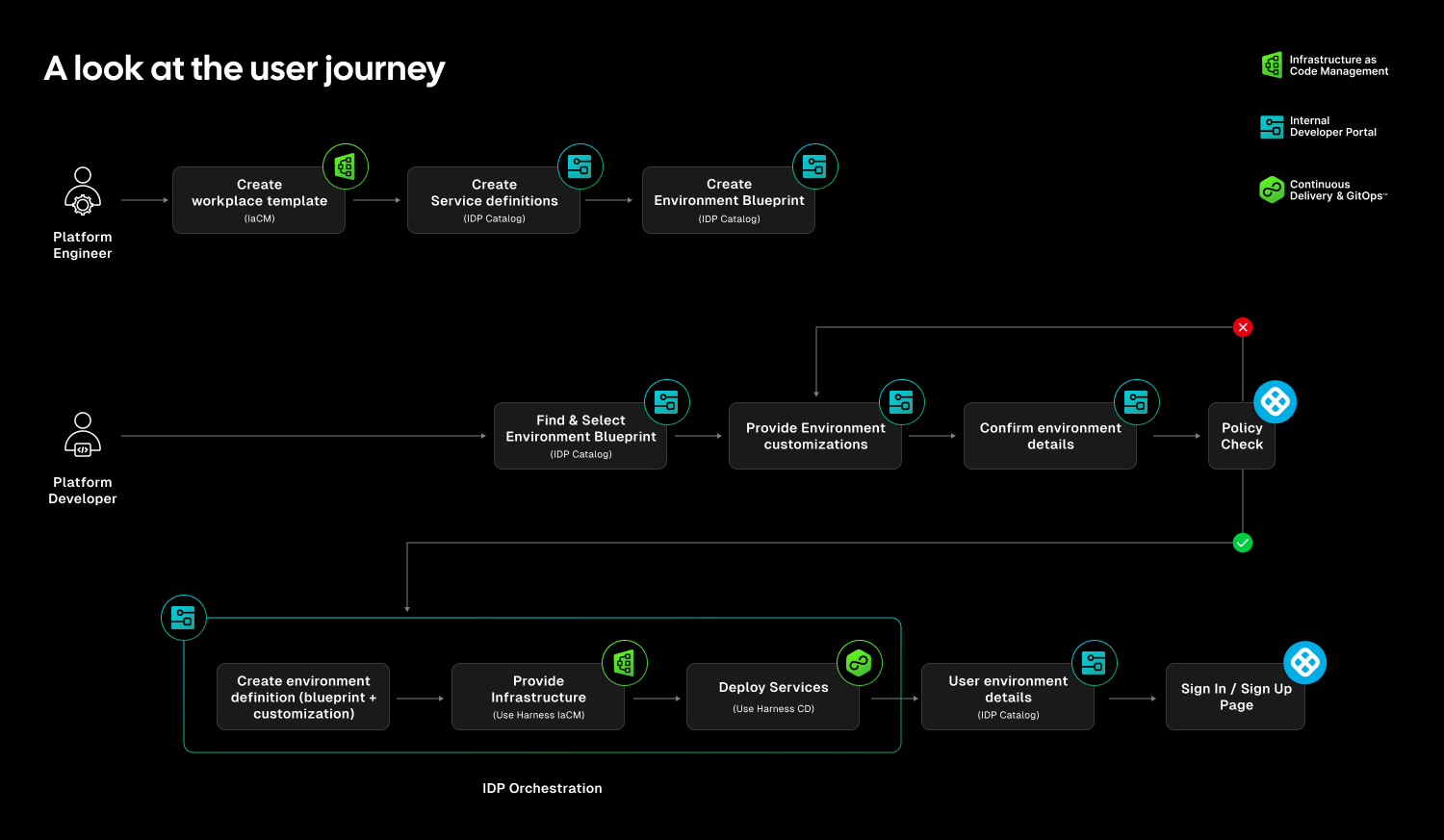

At Harness, Environment Management is a first-class capability inside our Internal Developer Portal. It transforms environments from manual, ticket-driven assets into governed, automated systems that are fully integrated with Harness Continuous Delivery and Infrastructure as Code Management (IaCM).

This is not another self-service workflow. It is environment lifecycle management built directly into the delivery platform.

The result is faster delivery, stronger governance, and lower operational overhead without forcing teams to choose between speed and control.

Closing the Gap Between CD and IaC

Continuous Delivery answers how code gets deployed. Infrastructure as Code defines what infrastructure should look like. But the lifecycle of environments has often lived between the two.

Teams stitch together Terraform projects, custom scripts, ticket queues, and informal processes just to create and update environments. Day-two operations such as resizing infrastructure, adding services, or modifying dependencies require manual coordination. Ephemeral environments multiply without cleanup. Drift accumulates unnoticed.

The outcome is familiar: slower innovation, rising cloud spend, and increased operational risk.

Environment Management closes this gap by making environments real entities within the Harness platform. Provisioning, deployment, governance, and visibility now operate within a single control plane.

Harness is the only platform that unifies environment lifecycle management, infrastructure provisioning, and application delivery under one governed system.

Blueprint-Driven by Design

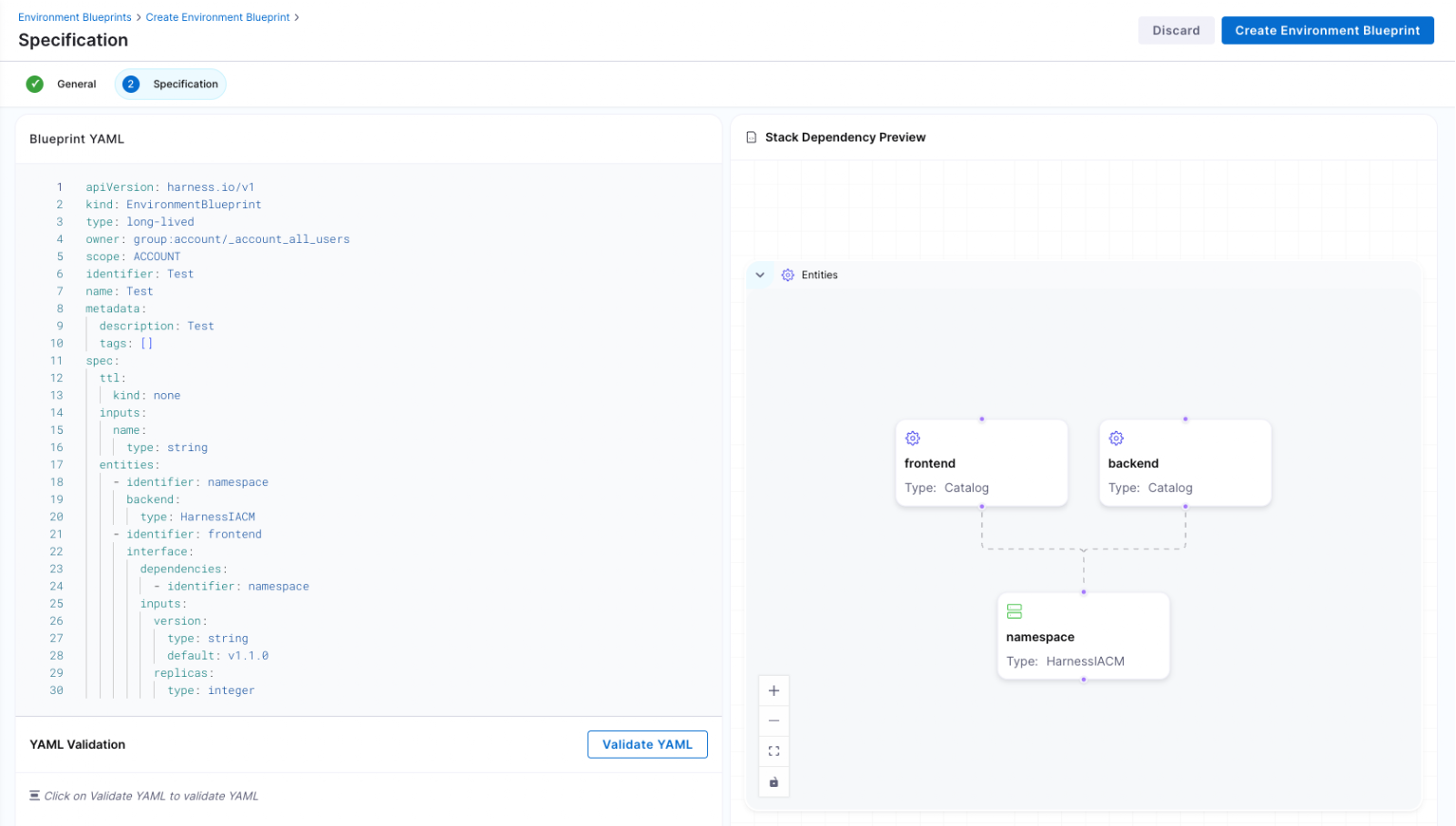

At the center of Environment Management are Environment Blueprints.

Platform teams define reusable, standardized templates that describe exactly what an environment contains. A blueprint includes infrastructure resources, application services, dependencies, and configurable inputs such as versions or replica counts. Role-based access control and versioning are embedded directly into the definition.
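As an illustration only, here is a minimal Python sketch of the kind of information a blueprint carries and how developer inputs might be validated against it. The class names, fields, sample values, and the `resolve_inputs` method are hypothetical; they do not reflect Harness's actual blueprint schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputSpec:
    """A configurable input, such as a version or replica count."""
    name: str
    default: object
    allowed: tuple = ()  # empty means any value is accepted


@dataclass(frozen=True)
class Blueprint:
    """Hypothetical blueprint model; not Harness's real schema."""
    name: str
    version: str                   # blueprints are versioned
    infrastructure: tuple          # resources provisioned via IaC
    services: tuple                # application services deployed via CD
    inputs: tuple = ()             # InputSpec entries developers may set
    allowed_roles: frozenset = frozenset()  # RBAC: who may use this blueprint

    def resolve_inputs(self, overrides: dict) -> dict:
        """Merge developer overrides with defaults, rejecting unknown or disallowed values."""
        specs = {spec.name: spec for spec in self.inputs}
        unknown = set(overrides) - set(specs)
        if unknown:
            raise ValueError(f"unknown inputs: {sorted(unknown)}")
        resolved = {}
        for name, spec in specs.items():
            value = overrides.get(name, spec.default)
            if spec.allowed and value not in spec.allowed:
                raise ValueError(f"{name}={value!r} is not one of {spec.allowed}")
            resolved[name] = value
        return resolved


checkout = Blueprint(
    name="checkout-stack",
    version="1.2.0",
    infrastructure=("vpc", "postgres"),
    services=("checkout-api", "payments-worker"),
    inputs=(InputSpec("replicas", 2, (1, 2, 3)), InputSpec("api_version", "v5")),
    allowed_roles=frozenset({"team-checkout"}),
)
print(checkout.resolve_inputs({"replicas": 3}))  # {'replicas': 3, 'api_version': 'v5'}
```

The point of the design is that developers only choose among the inputs the platform team exposed; everything else is fixed by the versioned definition.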

Developers consume these blueprints from the Internal Developer Portal and create production-like environments in minutes. No tickets. No manual stitching between infrastructure and pipelines. No bypassing governance to move faster.

Consistency becomes the default. Governance is built in from the start.

Full Lifecycle Control

Environment Management handles more than initial provisioning.

Infrastructure is provisioned through Harness IaCM. Services are deployed through Harness CD. Updates, modifications, and teardown actions are versioned, auditable, and governed within the same system.

Teams can define time-to-live policies for ephemeral environments so they are automatically destroyed when no longer needed. This reduces environment sprawl and controls cloud costs without slowing experimentation.
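A time-to-live policy reduces to a simple rule: tear down an environment once its creation time plus its TTL has passed. The Python sketch below shows only that rule; the `Environment` record, the `expired_environments` function, and the environment names are hypothetical, and the actual teardown (through IaCM and CD) is out of scope.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class Environment:
    name: str
    created_at: datetime
    ttl: Optional[timedelta] = None  # None means long-lived: never auto-destroyed


def expired_environments(envs: List[Environment], now: Optional[datetime] = None) -> List[str]:
    """Return the names of ephemeral environments whose time-to-live has elapsed."""
    now = now or datetime.now(timezone.utc)
    return [
        env.name
        for env in envs
        if env.ttl is not None and now >= env.created_at + env.ttl
    ]


t0 = datetime(2026, 1, 1, tzinfo=timezone.utc)
envs = [
    Environment("pr-1841-preview", t0, ttl=timedelta(hours=8)),
    Environment("staging", t0),  # no TTL
    Environment("load-test", t0, ttl=timedelta(days=2)),
]
print(expired_environments(envs, now=t0 + timedelta(days=1)))  # ['pr-1841-preview']
```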

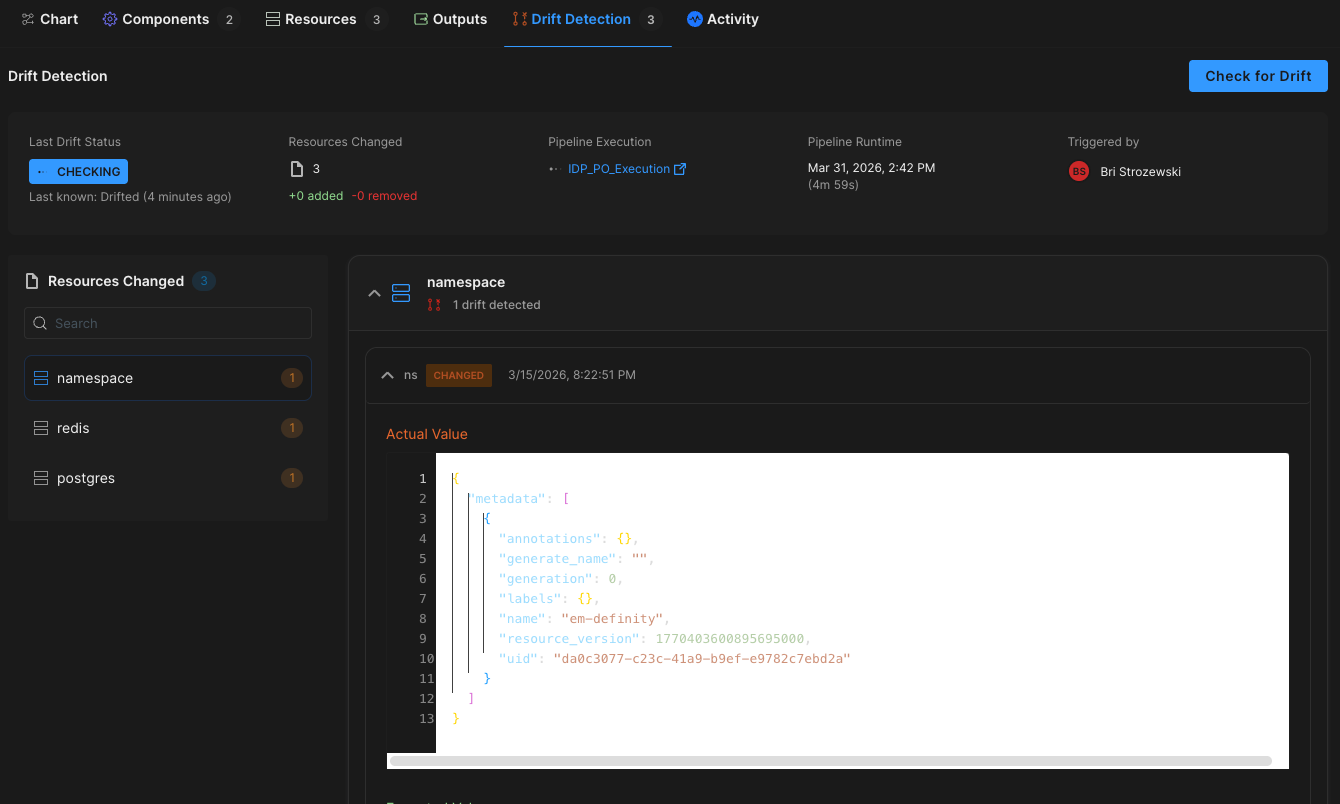

Harness EM also introduces drift detection. As environments evolve, unintended changes can occur outside declared infrastructure definitions. Drift detection provides visibility into differences between the blueprint and the running environment, allowing teams to detect issues early and respond appropriately. In regulated industries, this visibility is essential for auditability and compliance.
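Conceptually, drift detection is a comparison between declared and observed state. The sketch below assumes both can be flattened into key/value maps, which is a simplification: real IaC drift detection compares provider-reported resource state. The keys, values, and the `detect_drift` function are hypothetical.

```python
def detect_drift(declared: dict, observed: dict) -> dict:
    """Classify differences between the declared (blueprint) and observed state."""
    drift = {"changed": {}, "missing": [], "unmanaged": []}
    for key, want in declared.items():
        if key not in observed:
            drift["missing"].append(key)                    # declared but not running
        elif observed[key] != want:
            drift["changed"][key] = (want, observed[key])   # value diverged out of band
    for key in observed:
        if key not in declared:
            drift["unmanaged"].append(key)                  # running but never declared
    return drift


declared = {"db.instance_class": "db.r6g.large", "api.replicas": 3, "cache.enabled": True}
observed = {"db.instance_class": "db.r6g.xlarge", "api.replicas": 3, "debug.port": 5005}
print(detect_drift(declared, observed))
```

Separating "changed", "missing", and "unmanaged" matters for response: a changed value might be reverted, while an unmanaged resource (such as an open debug port) might need investigation before anyone touches it.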

Governance Built In

For enterprises operating at scale, self-service without control is not viable.

Environment Management leverages Harness’s existing project and organization hierarchy, role-based access control, and policy framework. Platform teams can control who creates environments, which blueprints are available to which teams, and what approvals are required for changes. Every lifecycle action is captured in an audit trail.

This balance between autonomy and oversight is critical. Environment Management delivers that balance. Developers gain speed and independence, while enterprises maintain the governance they require.
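The controls described above can be pictured as a single authorization check that runs before any lifecycle action and always writes to an audit log. This Python sketch is hypothetical: the `POLICY` table, role and user names, and the `authorize` function are illustrative, and Harness implements these controls through its own RBAC, policy framework, and audit trail rather than code like this.

```python
from dataclasses import dataclass

# Hypothetical policy: blueprint -> roles that may use it and actions that need approval.
POLICY = {
    "checkout-stack": {"roles": {"team-checkout", "platform"}, "approval": {"update", "destroy"}},
    "data-sandbox": {"roles": {"data-eng"}, "approval": set()},
}

AUDIT_LOG = []  # every decision is recorded, whether allowed or not


@dataclass
class Decision:
    allowed: bool
    needs_approval: bool
    reason: str


def authorize(user: str, roles: set, blueprint: str, action: str) -> Decision:
    """Decide whether `user` may perform `action` on an environment built from `blueprint`."""
    rule = POLICY.get(blueprint)
    if rule is None:
        decision = Decision(False, False, "unknown blueprint")
    elif not roles & rule["roles"]:
        decision = Decision(False, False, "role not permitted for this blueprint")
    else:
        decision = Decision(True, action in rule["approval"], "ok")
    AUDIT_LOG.append((user, blueprint, action, decision.allowed, decision.reason))
    return decision
```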

"Our goal is to make environment creation a simple, single action for developers so they don't have to worry about underlying parameters or pipelines. By moving away from spinning up individual services and using standardized blueprints to orchestrate complete, production-like environments, we remove significant manual effort while ensuring teams only have control over the environments they own."

— Dinesh Lakkaraju, Senior Principal Software Engineer, Boomi

From Portal to Platform

Environment Management represents a shift in how internal developer platforms are built.

Instead of focusing solely on discoverability or one-off self-service actions, it brings lifecycle control, cost governance, and compliance directly into the developer workflow.

Developers can create environments confidently. Platform engineers can encode standards once and reuse them everywhere. Engineering leaders gain visibility into cost, drift, and deployment velocity across the organization.

Environment sprawl and ticket-driven provisioning do not have to be the norm. With Environment Management, environments become governed systems, not manual processes. And with CD, IaCM, and IDP working together, Harness is turning environment control into a core platform capability instead of an afterthought.

This is what real environment management should look like.

Engineering teams are generating more shippable code than ever before — and today, Harness is shipping five new capabilities designed to help teams release confidently. AI coding assistants lowered the barrier to writing software, and the volume of changes moving through delivery pipelines has grown accordingly. But the release process itself hasn't kept pace.

The evidence shows up in the data. In our 2026 State of DevOps Modernization Report, we surveyed 700 engineering teams about what AI-assisted development is actually doing to their delivery. The finding stands out: while 35% of the most active AI coding users are already releasing daily or more, those same teams have the highest rate of deployments needing remediation (22%) and the longest MTTR at 7.6 hours.

This is the velocity paradox: the faster teams can write code, the more pressure accumulates at the release, where the process hasn't changed nearly as much as the tooling that feeds it.

The AI Delivery Gap

What changed is well understood. For years, the bottleneck in software delivery was writing code. Developers couldn't produce changes fast enough to stress the release process. AI coding assistants changed that. Teams are now generating more change across more services, more frequently than before — but the tools for releasing that change are largely the same.

In the past, DevSecOps vendors built entire separate products to coordinate multi-team, multi-service releases. That made sense when CD pipelines were simpler. It doesn't make sense now. At AI speed, a separate tool means another context switch, another approval flow, and another human-in-the-loop at exactly the moment you need the system to move on its own.

The tools that help developers write code faster have created a delivery gap that only widens as adoption grows.

What Harness Is Shipping

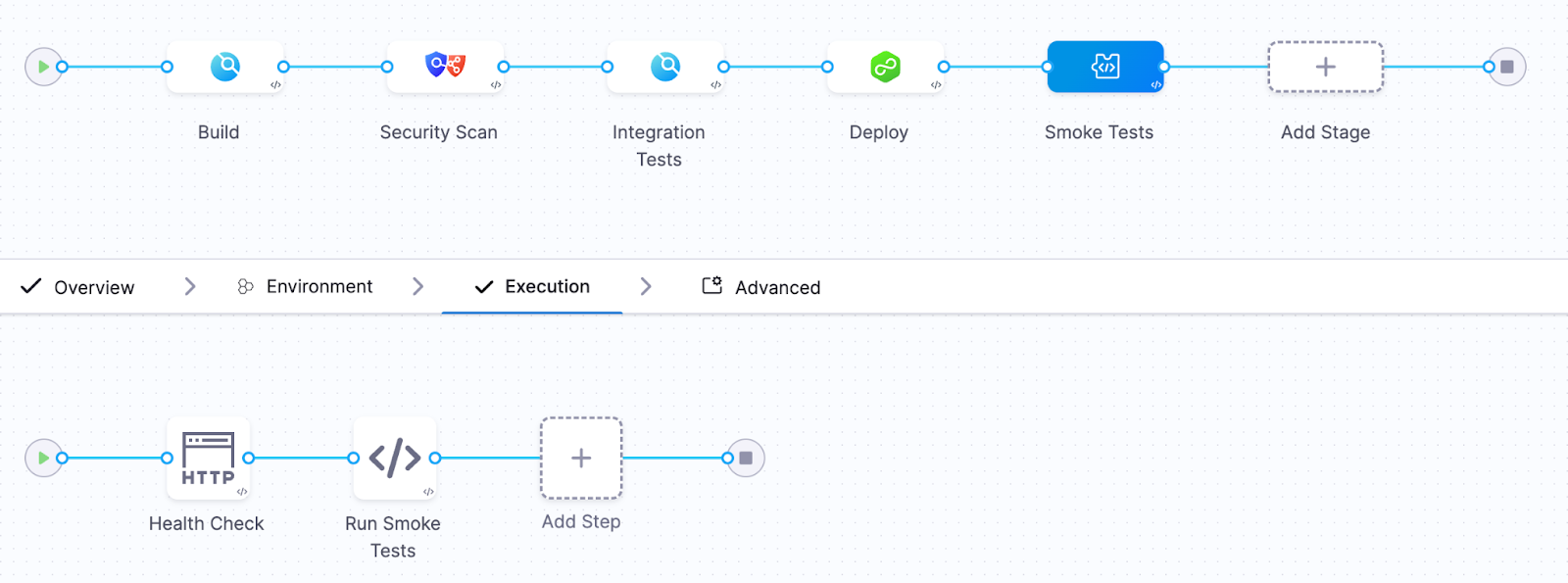

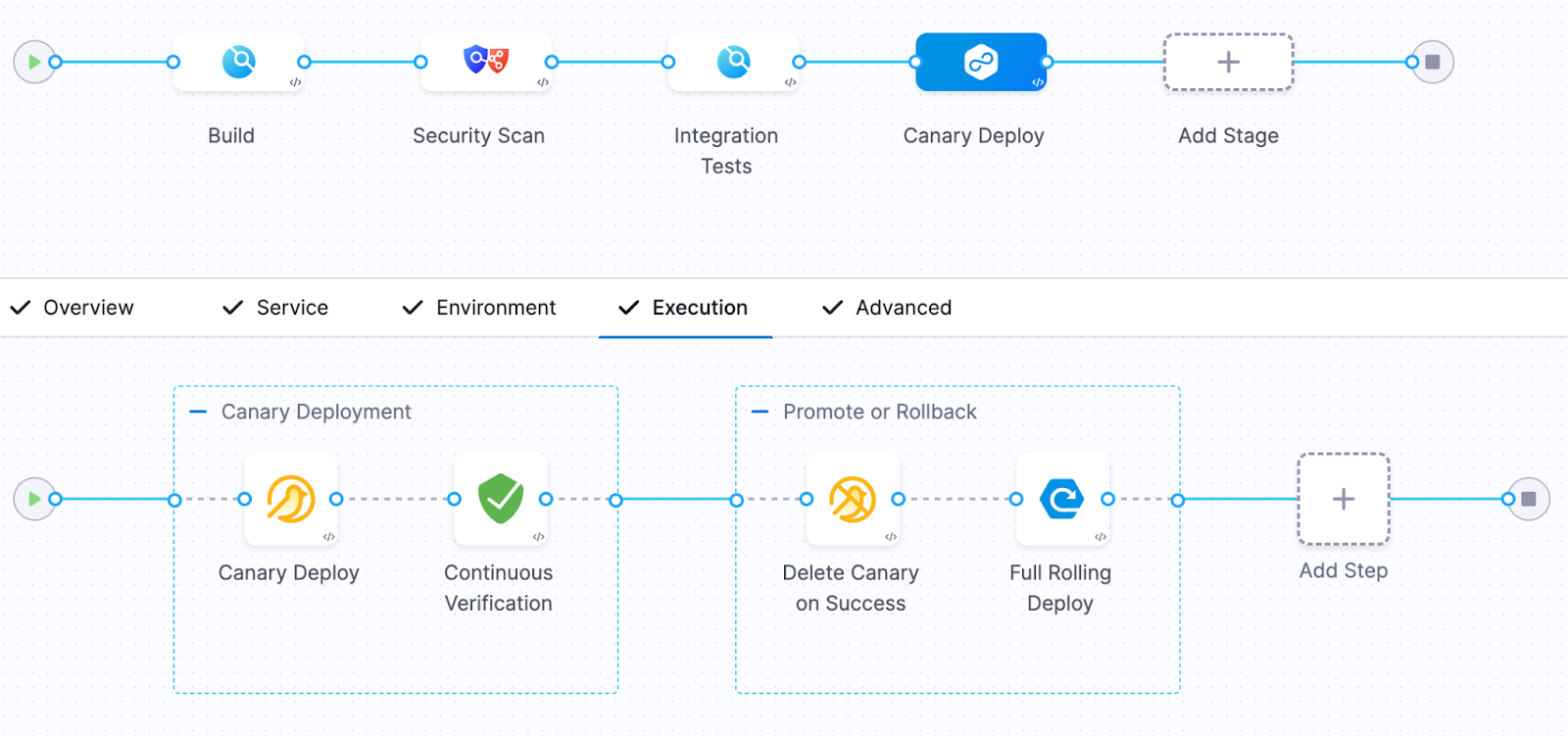

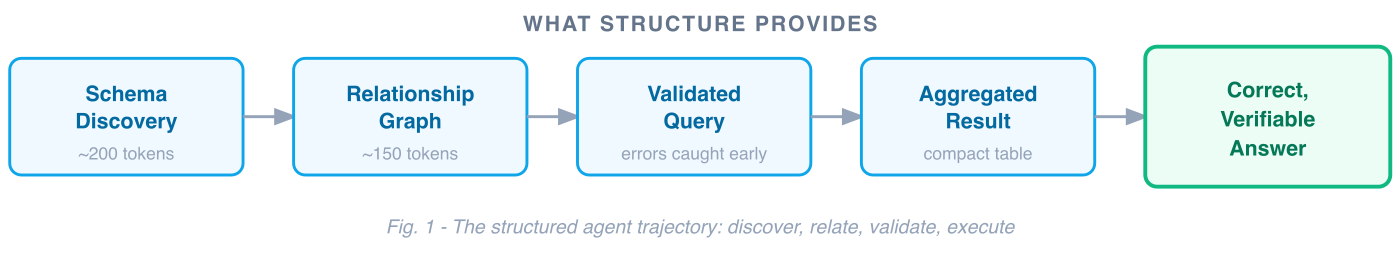

Today Harness is releasing five capabilities, all natively integrated into Continuous Delivery. Together, they cover the full arc of a modern release: coordinating changes across teams and services, verifying health in real time, managing schema changes alongside code, and progressively controlling feature exposure.

Coordinate multi-team releases without the war room

Release Orchestration replaces the Slack threads, spreadsheets, and war-room calls that still coordinate most multi-team releases. Services and the teams supporting them move through shared orchestration logic with the same controls, gates, and sequence, so a release behaves like a system rather than a series of handoffs. Everything is integrated natively with Harness Continuous Delivery rather than living in a separate tool.

Know when to stop — automatically

AI-Powered Verification and Rollback connects to your existing observability stack, automatically identifies which signals matter for each release, and determines in real time whether a rollout should proceed, pause, or roll back. Most teams have rollback capability in theory. In practice it's an emergency procedure, not a routine one. Ancestry.com made it routine and saw a 50% reduction in overall production outages, with deployment-related incidents dropping significantly.
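To make the proceed/pause/roll back idea concrete, here is a deliberately simplified Python sketch: compare each canary signal with its baseline and act on the size of the worst regression. The thresholds, metric names, and the `verify` function are assumptions; Harness's AI-Powered Verification selects signals and evaluates them with its own models, which this does not reproduce.

```python
from enum import Enum


class Verdict(Enum):
    PROCEED = "proceed"
    PAUSE = "pause"
    ROLLBACK = "rollback"


def verify(baseline: dict, canary: dict, pause_at: float = 0.10, rollback_at: float = 0.25) -> Verdict:
    """Compare canary metrics to baseline, where higher values are worse (errors, latency).

    Returns ROLLBACK if any signal regressed by at least `rollback_at` (relative),
    PAUSE if any regressed by at least `pause_at`, otherwise PROCEED.
    """
    worst = 0.0
    for name, base in baseline.items():
        if name not in canary or base <= 0:
            continue  # skip signals that cannot be compared
        regression = (canary[name] - base) / base
        worst = max(worst, regression)
    if worst >= rollback_at:
        return Verdict.ROLLBACK
    if worst >= pause_at:
        return Verdict.PAUSE
    return Verdict.PROCEED


baseline = {"error_rate": 0.010, "p95_latency_ms": 200.0}
print(verify(baseline, {"error_rate": 0.012, "p95_latency_ms": 210.0}))  # Verdict.PAUSE
```

Making rollback the output of a routine check, rather than a human decision under pressure, is what turns it from an emergency procedure into a normal step.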

Ship code and schema changes together

Database DevOps, now with Snowflake support, brings schema changes into the same pipeline as application code, so the two move together through the same controls with the same auditability. If a rollback is needed, the application and the database schema can roll back together seamlessly. This matters especially for teams building AI applications on warehouse data, where schema changes are increasingly frequent and consequential.

Roll out features gradually, measure what actually happens

Improved pipeline and policy support for feature flags and experimentation lets teams deploy safely and release progressively to the right users, even as AI-generated code increases the number of releases. Teams can quickly measure impact on technical and business metrics, and stop or roll back when results are off track, all within the familiar Harness interface they already use for CI/CD.
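A common way to implement progressive exposure is deterministic percentage bucketing: hash the flag key and user ID into one of 100 buckets and enable the feature when the bucket is below the rollout percentage. The Python sketch below illustrates that generic technique; it is not the Harness FME SDK or its hashing scheme, and the flag and user names are hypothetical.

```python
import hashlib


def bucket(flag_key: str, user_id: str) -> int:
    """Map a user deterministically to a bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(flag_key: str, user_id: str, rollout_percent: int) -> bool:
    """True if the user falls inside the current rollout percentage."""
    return bucket(flag_key, user_id) < rollout_percent


users = [f"user-{i}" for i in range(10_000)]
share = sum(is_enabled("new-checkout", u, 20) for u in users) / len(users)
print(f"{share:.1%} of users see the feature at a 20% rollout")
```

Because a user's bucket depends only on the flag key and user ID, raising the rollout from 10% to 50% keeps the first 10% of users enabled, and setting it back to 0 turns the feature off for everyone.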

Warehouse-Native Feature Management and Experimentation lets teams test features and measure business impact directly in data warehouses like Snowflake and Redshift, without ETL pipelines or shadow infrastructure. This way they can keep PII and behavioral data inside governed environments for compliance and security.

These aren't five separate features. They're one answer to one question: can we safely keep going at AI speed?

From Deployment to Verified Outcome

Traditional CD pipelines treat deployment as the finish line. The model Harness is building around treats it as one step in a longer sequence: application and database changes move through orchestrated pipelines together, verification checks real-time signals before a rollout continues, features are exposed progressively, and experiments measure actual business outcomes against governed data.

A release isn't complete when the pipeline finishes. It's complete when the system has confirmed the change is healthy, the exposure is intentional, and the outcome is understood.

That shift from deployment to verified outcome is what Harness customers say they need most. "AI has made it much easier to generate change, but that doesn't mean organizations are automatically better at releasing it," said Marc Pearce, Head of DevOps at Intelliflo. "Capabilities like these are exactly what teams need right now. The more you can standardize and automate that release motion, the more confidently you can scale."

Release Becomes a System, Not a Scramble

The real shift here is operational. The work of coordinating a release today depends heavily on human judgment, informal communication, and organizational heroics. That worked when the volume of change was lower. As AI development accelerates, it's becoming the bottleneck.

The release process needs to become more standardized, more repeatable, and less dependent on any individual's ability to hold it together at the moment of deployment. Automation doesn't just make releases faster. It makes them more consistent, and consistency is what makes scaling safe.

For Ancestry.com, implementing Harness helped them achieve 99.9% uptime by cutting outages in half while accelerating deployment velocity threefold.

At Speedway Motors, progressive delivery and 20-second rollbacks enabled a move from biweekly releases to multiple deployments per day, with enough confidence to run five to 10 feature experiments per sprint.

AI made writing code cheap. Releasing that code safely, at scale, is still the hard part.

Harness Release Orchestration, AI-Powered Verification and Rollback, Database DevOps, Warehouse-Native Feature Management and Experimentation, and Improved Pipeline and Policy Support for FME are available now. Learn more and book a demo.

On March 19th, the risks of running open execution pipelines — where what code runs in your CI/CD environment is largely uncontrolled — went from theoretical to catastrophic.

A threat actor known as TeamPCP compromised the GitHub Actions supply chain at a scale we haven't seen before (tracked as CVE-2026-33634, CVSS 9.4). They compromised Trivy, the most widely used vulnerability scanner in the cloud-native ecosystem, and turned it into a credential-harvesting tool that ran inside victims' own pipelines.

Between March 19 and March 24, 2026, organizations running affected tag-based GitHub Actions references were sending their AWS tokens, SSH keys, and Kubernetes secrets directly to the attacker. SANS Institute estimates over 10,000 CI/CD workflows were directly affected. According to multiple security research firms, the downstream exposure extends to tens of thousands of repositories and hundreds of thousands of accounts.

Five ecosystems. Five days. One stolen Personal Access Token.

This is a fundamental failure of the open execution pipeline model — where what runs in your pipeline is determined by external references to public repositories, mutable version tags, and third-party code that executes with full privileges. GitHub Actions is the most prominent implementation.

The alternative, governed execution pipelines, where what runs is controlled through policy gates, customer-owned infrastructure, scoped credentials, and immutable references, is the model we designed Harness around years ago, precisely because we saw this class of attack coming.

Part I: The Long Road to TeamPCP (2025–2026)

TeamPCP wasn't an anomaly; it was the inevitable conclusion of an eighteen-month escalation in CI/CD attack tactics.

1. The tj-actions Proof of Concept (March 2025)

CVE-2025-30066. Attackers compromised a PAT from an upstream dependency (reviewdog/action-setup) and force-pushed malicious code to every single version tag of tj-actions/changed-files. 23,000 repositories were exposed. The attack was later connected to a targeted campaign against Coinbase. CISA issued a formal advisory.

This proved that the industry's reliance on mutable tags (like @v2) was a serious structural vulnerability. According to Wiz, only 3.9% of repositories pin to immutable SHAs. The other 96% are trusting whoever owns the tag today.

2. The Shai-Hulud Worm (Sept–Nov 2025)

The first self-replicating worm in the CI/CD ecosystem. Shai-Hulud 2.0 backdoored 796 npm packages representing over 20 million weekly downloads — including packages from Zapier, PostHog, and Postman.

It used TruffleHog to harvest 800+ credential types, registered compromised machines as self-hosted GitHub runners named SHA1HULUD for persistent C2 over github.com, and built a distributed token-sharing network where compromised machines could replace each other's expired credentials.

PostHog's candid post-mortem revealed that attackers stole their GitHub bot's PAT via a pull_request_target workflow exploit, then used it to steal npm publishing tokens from CI runner secrets. Their admission that this kind of attack "simply wasn't something we'd prepared for" reflects the industry-wide gap between application security and CI/CD security maturity. CISA issued another formal advisory.

3. The Trivy Compromise (March 19, 2026)

TeamPCP went after the security tools themselves.

They exploited a misconfigured GitHub Actions workflow to steal a PAT from Aqua Security's aqua-bot service account. Aqua detected the breach and initiated credential rotation — but reporting suggests the rotation did not fully cut off attacker access. TeamPCP appears to have retained or regained access to Trivy's release infrastructure, enabling the March 19 attack weeks after initial detection.

On March 19, they force-pushed a malicious "Cloud Stealer" to 76 of 77 version tags in trivy-action and all 7 tags in setup-trivy. Simultaneously, they published an infected Trivy binary (v0.69.4) to GitHub Releases and Docker Hub. Every pipeline referencing those tags by name started executing the attacker's code on its next run. No visible change to the release page. No notification. No diff to review.

Part II: Inside the "Cloud Stealer" Tradecraft

TeamPCP's payload was purpose-built for CI/CD runner environments:

Memory Scraping. It read /proc/*/mem to extract decrypted secrets held in RAM. GitHub's log-masking can't hide what's in process memory.

Cloud Metadata Harvesting. It queried the AWS Instance Metadata Service (IMDS) at 169.254.169.254, pivoting from "build job" to full IAM role access in the cloud.

Filesystem Sweep. It searched over 50 specific paths — .env files, .aws/credentials, .kube/config, SSH keys, GPG keys, Docker configs, database connection strings, and cryptocurrency wallet keys.

Encrypted Exfiltration. All data was bundled into tpcp.tar.gz, encrypted with AES-256 and RSA-4096, and sent to typosquatted domains like scan.aquasecurtiy[.]org (note the "tiy"). These domains returned clean verdicts from threat intelligence feeds during the attack. As a fallback, the stealer created public GitHub repos named tpcp-docs under the victim's own account.

The malicious payload executed before the legitimate Trivy scan. Pipelines appeared to work normally. CrowdStrike noted: "To an operator reviewing workflow logs, the step appears to have completed successfully."

The Five-Day Cascade

Sysdig observed that the vendor-specific typosquat domains were a deliberate deception — an analyst reviewing CI/CD logs would see traffic to what appears to be the vendor's own domain.

It took Aqua five days to fully evict the attacker, during which TeamPCP pushed additional malicious Docker images (v0.69.5 and v0.69.6).

Part III: Why Open Execution Pipelines Break at Scale

Why did this work so well? Because GitHub Actions is the leading example of an open execution pipeline, where what code runs in your pipeline is determined by external references that anyone with write access to the referenced repository (including an attacker holding a stolen token) can change.

This trust problem isn't new. Jenkins had a similar issue with plugins. Third-party code ran with full process privileges. But Jenkins ran inside your firewall; exfiltrating data required getting past your network perimeter.

GitHub Actions took the same open execution approach but moved execution to cloud-hosted runners with broad internet egress, making exfiltration trivially easy. TeamPCP's Cloud Stealer just needed to make an HTTPS POST to an external domain, which runners are designed to do freely.

Here are a few reasons why open execution pipelines break at scale:

Mutable Trust. When you use @v2, you are trusting a pointer, not a piece of code. Tags can be silently redirected by anyone with write access. TeamPCP rewrote 76 tags in a single operation. With only 3.9% of repositories pinning to SHAs, roughly 96% of the ecosystem is exposed.

Flat Privileges. Third-party Actions run with the same permissions as your code. No sandbox. No permission isolation. This is why TeamPCP targeted security scanners — tools that by design have elevated access to your pipeline infrastructure. The attacker doesn't need to break in. The workflow invites them in.

Secret Sprawl. Secrets are typically injected into the runner's environment or process memory during job execution, where they remain accessible for the job's duration. TeamPCP's /proc/*/mem scraper didn't need any special privilege. It just needed to be running on the same machine.

Unbounded Credential Cascades. There is no architectural boundary that stops a credential stolen in one context from unlocking another. TeamPCP proved this definitively: Trivy → Checkmarx → LiteLLM → AI API keys across thousands of enterprises. One PAT, five ecosystems.

Part IV: Governed Execution Pipelines — Three Structural Walls

Harness CI/CD pipelines are built as governed execution pipelines — where what runs is controlled through customer-owned infrastructure, policy gates, scoped credentials, immutable references, and explicit trust boundaries. At its core is the Delegate — a lightweight worker process that runs inside your infrastructure (your VPC, your Kubernetes cluster), executes tasks locally, and communicates with the Harness control plane via outbound-only connections.

When we designed this architecture, we assumed the execution plane would become the primary target in the enterprise. If TeamPCP tried to attack a Harness-powered environment, they would hit three architectural walls.

Wall 1: The Airlock (Outbound-Only, Egress-Filtered Execution)

The Architecture.

The Delegate lives inside your VPC or cluster. It communicates with our SaaS control plane via outbound-only HTTPS/WSS. No inbound ports are opened.

The Defense.

You control the firewall. Allowlist app.harness.io and the specific endpoints your pipelines need, deny everything else. TeamPCP's exfiltration to typosquat domains would fail at the network layer — not because of a detection rule, but because the path doesn't exist. Both typosquat domains returned clean verdicts from threat intel feeds. Egress filtering by allowlist is more reliable than detection by reputation.
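To illustrate why an allowlist beats reputation, here is a minimal Python sketch of exact-hostname egress filtering. In practice this is enforced by a firewall, egress proxy, or Kubernetes NetworkPolicy rather than application code; the allowlist entries other than app.harness.io, and the `egress_permitted` function, are hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the control plane plus the endpoints this pipeline needs.
EGRESS_ALLOWLIST = {"app.harness.io", "registry.internal.example.com"}


def egress_permitted(url: str, allowlist=EGRESS_ALLOWLIST) -> bool:
    """Permit a request only if its exact hostname is on the allowlist.

    Exact matching (no substring or "looks similar" logic) means a typosquat
    such as scan.aquasecurtiy[.]org is denied even if reputation feeds call it clean.
    """
    host = (urlsplit(url).hostname or "").rstrip(".")
    return host in allowlist


print(egress_permitted("https://app.harness.io/gateway"))  # True
```

The default answer is "no": a destination nobody has ever seen before is blocked, which is exactly the case where reputation-based detection has nothing to go on.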

Wall 2: The Vault (Secret Isolation at the Source)

The Architecture.

Rather than bulk-injecting secrets as flat environment variables at job start, Harness can resolve secrets at runtime through your secret manager — HashiCorp Vault, AWS Secrets Manager, GCP Secret Manager, Azure Key Vault — via the Delegate, inside your network. Harness SaaS stores encrypted references and metadata, not plaintext secret values.

The Defense.

TeamPCP's Cloud Stealer worked because in an open execution pipeline, secrets are typically injected into the runner's process memory where they remain accessible for the job's duration. In a governed execution pipeline, this exposure is structurally reduced: secrets can be resolved from your controlled vault at the point they're needed, rather than broadcast as environment variables to every step in the pipeline.

An important caveat: Vault-based resolution alone doesn't eliminate runtime exfiltration. Once a secret is resolved and passed to a step that legitimately needs it — say, an npm token during npm publish — that secret exists in the step's runtime. If malicious code is executing in that same context (for example, a tampered package.json that exfiltrates credentials during npm run test), the secret is exposed regardless of where it came from. This is why the three walls work as a system: Wall 2 reduces the surface of secret exposure, Wall 1 blocks the exfiltration path, and (as we'll see) Wall 3 limits the blast radius to the scoped environment. No single wall is sufficient on its own.

To further strengthen how pipelines use secrets, leverage ephemeral credentials — AWS STS temporary tokens, Vault dynamic secrets, or GCP short-lived service account tokens — that auto-expire after a defined window, often minutes. Even if TeamPCP’s memory scraper extracted an ephemeral credential, it likely would have expired before the attacker could pivot to the next target.
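The value of an ephemeral credential is that its validity is bounded in time, so a copy scraped from memory stops working after a short window. This toy Python model (the `EphemeralCredential` and `issue` names and the token value are hypothetical) only illustrates that check; with real providers the expiry is enforced server-side, for example through the `DurationSeconds` parameter of AWS STS `AssumeRole`.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class EphemeralCredential:
    token: str
    expires_at: datetime

    def is_valid(self, now: datetime) -> bool:
        """A credential is usable only before its expiry."""
        return now < self.expires_at


def issue(token: str, lifetime: timedelta, now: datetime) -> EphemeralCredential:
    """Issue a credential that stops working `lifetime` after `now`."""
    return EphemeralCredential(token, now + lifetime)


t0 = datetime(2026, 3, 19, 12, 0, tzinfo=timezone.utc)
cred = issue("tmp-token", timedelta(minutes=15), t0)
print(cred.is_valid(t0 + timedelta(minutes=2)))   # True: the pipeline step uses it
print(cred.is_valid(t0 + timedelta(minutes=40)))  # False: a later replay by an attacker fails
```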

Wall 3: The Dead End (Environment-Scoped Isolation)

The Architecture.

Harness supports environment-scoped delegates as a core architecture pattern. Your "Dev" scanner delegate runs in a different cluster, with different network boundaries and different credentials, than your "Prod" deployment delegate.

The Defense.

The credential cascade that defined TeamPCP hits a dead end. Stolen Dev credentials cannot reach Production publishing gates or AI API keys, because those credentials live in a different vault, resolved by a different delegate, in a different network segment. If the Trivy compromise only yielded credentials scoped to a dev environment, the attack stops at phase one.
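The scoping rule can be sketched as a lookup that refuses to cross environments. This Python example assumes each delegate is bound to exactly one environment and can resolve secrets only from that environment's store; the delegate names, secret paths, and `resolve_secret` function are hypothetical. In Harness the separation comes from deploying separate delegates with separate networks and credentials, not from a table like this.

```python
class ScopeViolation(Exception):
    """Raised when a delegate asks for a secret outside its environment."""


# Hypothetical secret stores, one per environment.
SECRET_STORES = {
    "dev": {"scanner/api-token": "dev-token"},
    "prod": {"npm/publish-token": "prod-npm", "llm/api-key": "prod-llm"},
}

# Each delegate is bound to exactly one environment.
DELEGATE_SCOPE = {"dev-scanner-delegate": "dev", "prod-deploy-delegate": "prod"}


def resolve_secret(delegate: str, path: str) -> str:
    """Resolve `path` only from the store of the delegate's own environment."""
    env = DELEGATE_SCOPE[delegate]
    store = SECRET_STORES[env]
    if path not in store:
        # A compromised dev delegate cannot reach prod secrets: the cascade stops here.
        raise ScopeViolation(f"{delegate} ({env}) cannot resolve {path}")
    return store[path]
```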

Beyond the walls, governed execution pipelines provide additional structural controls:

- No default marketplace dependency: In GitHub Actions, the primary building block is a reference to an external Action in a public repository. In Harness, the primary building blocks are native pipeline steps that don't reference external Git repos. Harness does support running GitHub Actions as steps for teams that need compatibility, but external Actions are an optional path — not the default architecture.

- Reduced tooling and attack surface: Customers can use minimal delegate images with a significantly reduced binary footprint and least-privilege Kubernetes roles to restrict available tooling. TeamPCP's kubectl get secrets --all-namespaces would require tooling and permissions that a properly hardened delegate environment wouldn't provide.

The Comparison

What TeamPCP Actually Exploited — Mapped to Harness Defenses

Part V: The Nuance — Governed Doesn't Mean Automatically Safe

Architecture is a foundation, not a guarantee. Governed execution pipelines are materially safer against this class of attack, but you can still create avoidable risk by running unvetted containers on delegates, skipping egress filtering, using the same delegate across dev and prod, granting overly broad cloud access, exposing excessive secrets to jobs that don't need them, or using long-lived static credentials when ephemeral alternatives exist.

I am not claiming that Harness is safe and GitHub Actions is unsafe. That would be too simplistic.

What I am claiming is that governed execution pipelines — where what runs is controlled through policy gates, customer-owned infrastructure, scoped credentials, and immutable references — are a materially safer foundation than open execution pipelines. We designed Harness as our implementation of a governed execution pipeline. But architecture is a starting point — you still have to operate it well.

Part VI: The Strategic Bottom Line — From Open to Governed

As we enter the era of Agentic AI — where AI is generating pipelines, suggesting dependencies, and submitting pull requests at machine speed — we can no longer rely on human review to catch a malicious tag in an AI-generated PR.

But there's a more fundamental shift: AI agents will become the primary actors inside CI/CD pipelines. Not just generating code — autonomously executing tasks, selecting dependencies, making deployment decisions, remediating incidents.

Now imagine an AI agent in an open execution pipeline — downloaded from a public marketplace, referenced by a mutable tag, executing with full privileges, making dynamic runtime decisions you didn't define. It has access to your secrets, your cloud credentials, and your deployment infrastructure. Unlike a static script, an agent makes decisions at runtime — fetching resources, calling APIs, modifying files.

If TeamPCP showed us what happens when a static scanner is compromised, imagine what happens when an autonomous AI agent is compromised — or simply makes a decision you didn't anticipate.

This is why governed execution pipelines aren't just a security improvement — they're an architectural prerequisite for the AI era. In a governed pipeline, even an AI agent operates within structural boundaries: it runs on infrastructure you control, accesses only scoped secrets, has restricted egress, and its actions are audited. The agent may be autonomous, but the pipeline constrains what it can reach.

The questions every engineering leader should be asking:

- Is my pipeline open or governed? Do I control what code executes, or is it determined by external references I don't audit?

- Where does execution happen? In infrastructure I control, or in an environment assembled from public dependencies?

- Who controls the network boundary? My security team, or the maintainer of a third-party Action?

- Are secrets sitting in runner memory or safely in my Vault?

- What stops a credential cascade from crossing environment boundaries?

- When AI agents start running autonomously in my pipelines, what structural boundaries constrain them?

What You Should Do Right Now

If you use Trivy, Checkmarx, or LiteLLM:

- Assume compromise if you ran any of these tools between March 19 and March 25. Rotate all credentials accessible to affected CI/CD runners. Check your GitHub org for repos named tpcp-docs; their presence indicates successful exfiltration.

- Block scan.aquasecurtiy[.]org, checkmarx[.]zone, and models.litellm[.]cloud at the network level.

- Update to safe versions: check with the providers of each impacted package and update the scanner and actions.
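For the first two checks, a small triage script can help once you have exported your organization's repository names (for example, from the GitHub REST API) and your runner egress, proxy, or DNS logs as plain text. The function below is a hypothetical sketch that only does string matching on those exports, using the indicators named in this post; an empty result is not proof that you were unaffected.

```python
# Indicators named in this post, written without "[.]" defanging so they match log text.
IOC_REPO_NAMES = {"tpcp-docs"}
IOC_DOMAINS = ("scan.aquasecurtiy.org", "checkmarx.zone", "models.litellm.cloud")


def find_indicators(repo_names, log_lines):
    """Return (suspicious repository names, log lines that mention a known exfiltration domain)."""
    repos = sorted(name for name in repo_names if name.lower() in IOC_REPO_NAMES)
    hits = [line for line in log_lines if any(domain in line.lower() for domain in IOC_DOMAINS)]
    return repos, hits


repos, hits = find_indicators(
    ["payments-api", "tpcp-docs"],
    ["CONNECT scan.aquasecurtiy.org:443", "CONNECT app.harness.io:443"],
)
print(repos)  # ['tpcp-docs']
print(hits)   # ['CONNECT scan.aquasecurtiy.org:443']
```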

If you use GitHub Actions:

- Pin every Action to an immutable commit SHA. Today.

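- SHA pinning alone is not sufficient. A pinned SHA fixes the exact commit, but it does not guarantee that the commit came from the repository you intended: a commit that exists only in a fork can still be resolvable through the upstream repository's name.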

- Add provenance verification: To close the gap left by SHA pinning alone, verify the Action’s source and publisher, restrict which external Actions are allowed, and prefer artifacts with verifiable provenance or attestations.

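- Audit workflows for pull_request_target triggers, which run in the context of the base repository with access to its secrets, even for pull requests opened from forks.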

- Enforce Least Privilege on GitHub Tokens: Audit every Personal Access Token and GitHub App permission. If it’s not scoped to the specific repository and the specific task (e.g., "contents: read"), it is a liability.

- Monitor egress for unexpected destinations: Domain reputation alone is insufficient.
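Parts of this list can be automated. The Python sketch below flags `uses:` references that are not pinned to a full 40-character commit SHA, plus any `pull_request_target` trigger. It is a hypothetical, dependency-free heuristic that scans raw workflow YAML with regular expressions rather than parsing it; it skips local (`./`) and `docker://` references, and a well-formed SHA still says nothing about which repository the commit came from. The action names in the sample are made up.

```python
import re

# Matches "uses: owner/repo@ref" or "- uses: ..." at the start of a line.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s#]+)", re.MULTILINE)
SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def audit_workflow(text: str) -> list:
    """Return human-readable findings for one GitHub Actions workflow file."""
    findings = []
    for ref in USES_RE.findall(text):
        if ref.startswith(("./", "docker://")):
            continue  # local actions and container images are out of scope here
        _, _, version = ref.partition("@")
        if not SHA_RE.match(version):
            findings.append(f"unpinned: {ref}")  # tag, branch, or missing ref
    if re.search(r"\bpull_request_target\b", text):
        findings.append("trigger: pull_request_target")
    return findings


workflow = """on:
  pull_request_target:
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@0123456789abcdef0123456789abcdef01234567
      - uses: some-org/scanner-action@v2
      - uses: ./local-action
"""
for finding in audit_workflow(workflow):
    print(finding)
```

A check like this fits naturally in a pre-merge gate, which is also where the policy-based enforcement described below would run.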

For the longer term:

- Evaluate whether your CI/CD pipelines are open or governed. If production credentials flow through your pipelines, you need a governed execution pipeline where you control the infrastructure, the network boundary, the secret resolution, and the audit trail.

- Establish policies: Implement platform-wide automated governance to enforce SHA pinning and least-privilege token usage programmatically through systems like OPA.

The Responsibility We Share

I'm writing this as the CEO of a company that competes with GitHub in the CI/CD space. I want to be transparent about that.

But I'm also writing this as someone who has spent two decades building infrastructure software and who saw this threat model coming. When we designed Harness, the open execution pipeline model had already evolved from Jenkins plugins to GitHub Actions — each generation making it easier for third-party code to run with full privileges and, by moving execution further from the customer's network perimeter, making exfiltration easier. We deliberately chose to build governed execution pipelines instead.

The TeamPCP campaign didn't teach us anything new about the risk. What it did was make the difference between open and governed execution impossible for the rest of the industry to ignore.

Open source security tools are invaluable. The developers and companies who build them — including Aqua Security and Checkmarx — are doing essential work. The problem isn't the tools. The problem is running them inside open execution pipelines where third-party code has full privileges, secrets sit in memory, and exfiltration faces no structural barrier.

If you want to explore how the delegate architecture works in practice, we're here to show you. But more importantly, regardless of what platform you choose, please take these structural questions seriously. The next TeamPCP is already studying the credential graph.

Latest Blogs

Beyond the Big Bang: De-risking Cloud Migrations with Progressive Delivery

At 2 am, your migration goes live. By 2:07, error rates spike, and rollback isn’t an option. Cloud migrations, API rewrites, and architecture transformations rarely fail because of bad code. They fail because of how that code is released.

Most teams still rely on a “big bang” cutover where infrastructure, services, and user-facing changes go live at once. This concentrates risk into a single moment. When something breaks, rollback is slow, visibility is limited, and the blast radius is large.

This is not just anecdotal. According to BCG, more than half of transformation efforts fail to achieve their intended outcomes within three years.

The difference between success and failure is not the migration itself. It is the release strategy.

Cloud Migration Is Not a Single Change

“Cloud migration” sounds simple, but in practice, it is a layered transformation.

Most migrations combine several of the following:

- Monolith to microservices

- API or data pipeline rewrites

- Frontend or UI rebuilds

- On-prem to cloud infrastructure moves

- Introduction of new service layers

These rarely happen in isolation. Teams often try to ship them together in a single coordinated release. That coupling increases complexity and multiplies risk.

Before your next migration, list every system involved. If they are all released together, you are carrying unnecessary risk.

The Core Anti-Pattern: Big Bang Releases

The failure mode is consistent:

- A new service is deployed

- Infrastructure flips to the cloud

- A redesigned UI is released

- All at once

There is no safe way to validate behavior in production. There is no gradual exposure. Rollback often requires redeploying an old stack that may no longer be compatible.

Even worse, teams lack a reliable baseline. They cannot answer simple questions:

- Is performance better?

- Is the cost lower?

- Is reliability improved?

Without that, migration becomes guesswork.

Decoupling Deployment from Release

Modern teams are adopting a different model:

- Deploy code anytime. Release it gradually.

Feature flags provide a control layer that separates deployment from exposure. Code can exist in production without being active for all users.

This enables:

- Controlled rollout by percentage, region, or cohort

- Instant rollback without redeployment

- Real-time measurement tied to specific changes
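Percentage rollouts are usually built on deterministic bucketing, so a user stays in the same variation as the percentage grows. The sketch below shows the idea, assuming SHA-256 over a hypothetical `flag_key:user_id` string and 10,000 buckets; real flag SDKs use their own hashing and targeting rules.

```python
import hashlib
from typing import Optional


def bucket(flag_key: str, user_id: str, buckets: int = 10_000) -> int:
    """Map a user to a stable bucket in [0, buckets) for one flag.

    Hashing flag_key together with user_id means different flags bucket users
    independently, while the same user always lands in the same bucket for a flag.
    """
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % buckets


def is_enabled(flag_key: str, user_id: str, rollout_percent: float,
               region: Optional[str] = None,
               allowed_regions: Optional[set[str]] = None) -> bool:
    """Percentage rollout, optionally restricted to a set of regions (a cohort)."""
    if allowed_regions is not None and region not in allowed_regions:
        return False
    # 10,000 buckets gives 0.01% granularity: 25% -> buckets 0..2499.
    return bucket(flag_key, user_id) < rollout_percent * 100
```

Because users enabled at 25% keep the same buckets, they remain enabled at 50%. Rolling back is a configuration change (set `rollout_percent` to 0), not a redeploy.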

Start by putting one service behind a feature flag and releasing it to internal users first.

Progressive Delivery for Migrations

Replace Cutovers with Progressive Rollouts

Instead of switching everything at once:

- Deploy the new system alongside the old one

- Route a small percentage of traffic

- Observe behavior

- Increase exposure gradually

If something fails, you reduce traffic or revert instantly.

This shifts migration from a single high-risk event to a series of measurable steps.
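The deploy-route-observe-increase loop can be sketched as follows. We assume two hypothetical callbacks: `set_traffic` updates the flag's rollout percentage, and `is_healthy` waits out a soak period and checks your metrics. On the first unhealthy observation, all traffic goes back to the old system.

```python
from typing import Callable, Sequence


def progressive_rollout(stages: Sequence[int],
                        set_traffic: Callable[[int], None],
                        is_healthy: Callable[[int], bool]) -> int:
    """Walk through traffic percentages; revert everything to legacy on failure.

    Returns the percentage of traffic left on the new system.
    """
    for percent in stages:
        set_traffic(percent)          # route `percent`% of requests to the new system
        if not is_healthy(percent):   # observe behavior at this exposure level
            set_traffic(0)            # revert: all traffic back to the old system
            return 0
    return stages[-1] if stages else 0
```

A full revert is the simplest policy. Stepping back a single stage is often preferable, as the go/no-go example later in this post shows.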

The Strangler Fig Pattern in Practice

A common migration strategy is the strangler fig pattern.

- Build new functionality alongside the legacy system

- Gradually route traffic to the new components

- Retire legacy code over time

Feature flags make this executable in production by controlling routing and exposure. But to make this work in practice, you need a control layer that can manage traffic in real time.
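As a rough illustration of that control layer, the router below sends a request to the new service only if the flag for its route prefix is on. The route table and handler names are hypothetical. The longest matching prefix wins, so a sub-route can stay on legacy while its parent has already moved.

```python
from typing import Callable

Handler = Callable[[str], str]


def make_router(migrated_routes: dict[str, bool], new: Handler, legacy: Handler) -> Handler:
    """Strangler-fig routing: each route prefix has its own migration flag."""
    def route(path: str) -> str:
        matches = [prefix for prefix in migrated_routes if path.startswith(prefix)]
        if matches:
            prefix = max(matches, key=len)  # most specific prefix wins
            if migrated_routes[prefix]:
                return new(path)
        return legacy(path)  # unmigrated or unknown routes stay on the legacy system
    return route
```

Once every flag in the table is on and stable, the legacy handler no longer receives traffic and can be retired.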

How Progressive Migration Actually Works

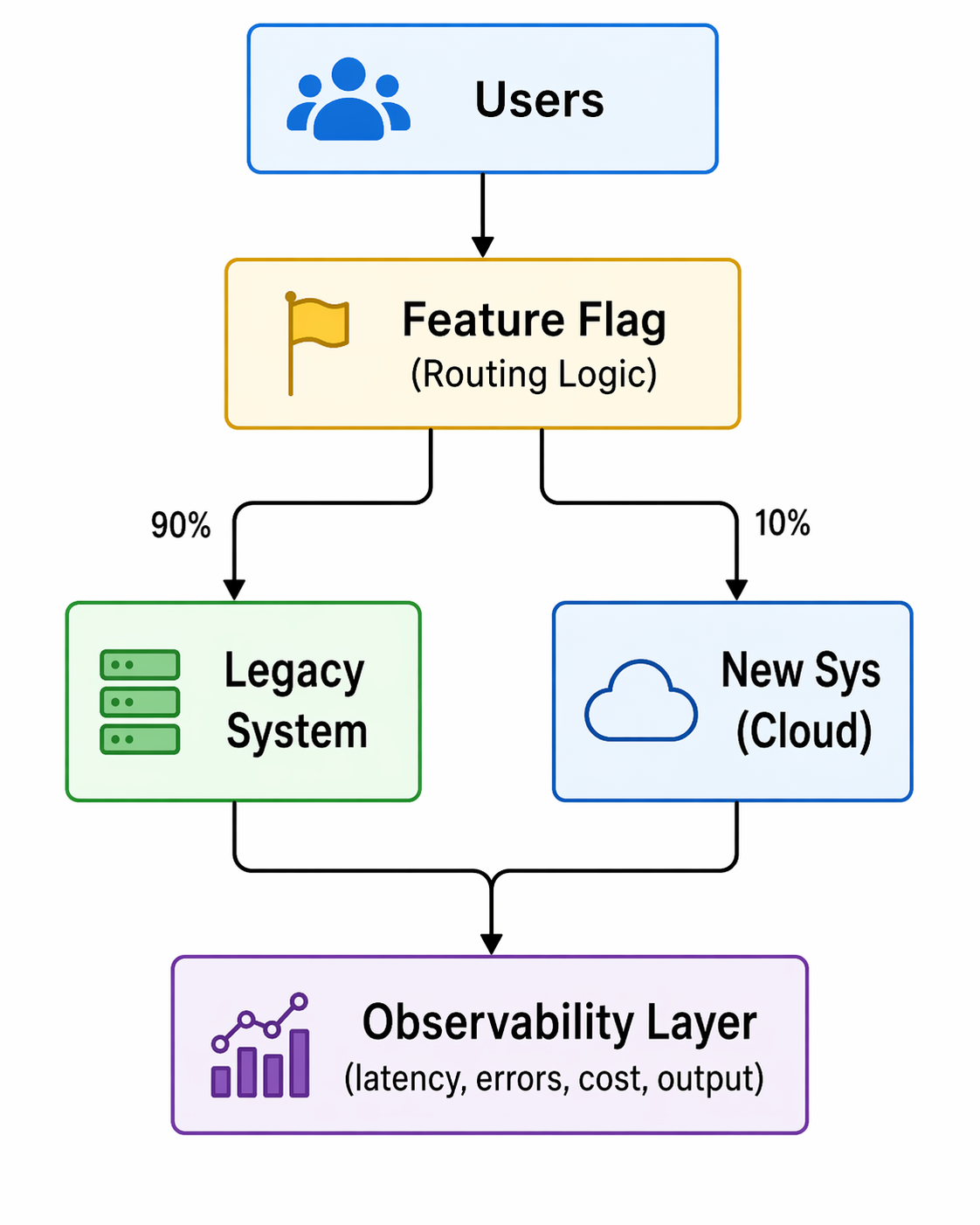

Below is a simplified view of how feature flags act as a control plane during migration:

Fig: Feature-flag–driven progressive traffic routing during migration

Two things matter here:

- Routing control: traffic can be shifted gradually

- Measurement: metrics are tied to each variation

This is not just a toggle. It is a runtime decision and an observability layer.

Measure What Actually Matters

A successful migration is not defined by deployment success. It is defined by outcomes.

Key metrics include:

- Latency and throughput

- Error rates

- Infrastructure cost

- Output correctness

These metrics are not theoretical. They are what teams use to validate migrations in real production environments.
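A minimal sketch of comparing one variation against a pre-migration baseline follows. It covers only latency (p95) and error rate; cost and output correctness need other data sources. The thresholds (10% latency regression, 0.1 percentage point error-rate increase) are illustrative assumptions, not recommendations.

```python
import statistics
from dataclasses import dataclass


@dataclass
class VariationMetrics:
    """Raw measurements collected for one flag variation (e.g. legacy vs. new)."""
    latencies_ms: list[float]
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0

    @property
    def p95_ms(self) -> float:
        # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
        return statistics.quantiles(self.latencies_ms, n=20)[-1]


def compare(baseline: VariationMetrics, candidate: VariationMetrics,
            max_latency_regression: float = 0.10,
            max_error_rate_increase: float = 0.001) -> dict[str, bool]:
    """Judge the candidate against the baseline captured before migration."""
    return {
        "latency_ok": candidate.p95_ms <= baseline.p95_ms * (1 + max_latency_regression),
        "errors_ok": candidate.error_rate <= baseline.error_rate + max_error_rate_increase,
    }
```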

Real Example: Dual Pipeline Migration

In the Beyond the Toggle ebook, a legacy Spark batch pipeline was replaced with a streaming architecture through a progressive rollout rather than a cutover.

- Both pipelines ran in production

- A feature flag routed traffic between them

- Metrics were compared in real time

The new system showed faster processing and lower costs before the full rollout.

The webinar also describes teams that go further:

- Run both systems simultaneously

- Compare outputs for correctness

- Measure performance differences per request

This allows validation of both performance and data integrity before committing.
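Dual execution can be sketched as "serve legacy, also run new, record the comparison." For simplicity this version runs both paths sequentially in the request thread; production setups typically run the new path asynchronously so it cannot add latency. The `report` list stands in for whatever metrics sink you use.

```python
import time
from typing import Any, Callable


def dual_execute(request: Any,
                 legacy: Callable[[Any], Any],
                 new: Callable[[Any], Any],
                 report: list[dict]) -> Any:
    """Serve the legacy result, also run the new path, and record the comparison."""
    start = time.perf_counter()
    legacy_result = legacy(request)
    legacy_ms = (time.perf_counter() - start) * 1000

    start = time.perf_counter()
    try:
        match = new(request) == legacy_result
    except Exception:
        match = False  # a failure in the new path must never affect the user response
    new_ms = (time.perf_counter() - start) * 1000

    report.append({"request": request, "match": match,
                   "legacy_ms": legacy_ms, "new_ms": new_ms})
    return legacy_result
```

The mismatch records give you the correctness evidence, and the per-request timings give you the performance comparison, before any user is served by the new system.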

Define your baseline metrics before migration. If you cannot measure improvement, you cannot prove success.

Staging Lies. Production Doesn’t.

Staging environments cannot replicate production conditions. They lack:

- Real traffic patterns

- Data scale

- Edge cases

Feature flags enable safe production testing through controlled exposure.

Common Patterns

- Canary releases by percentage or region

- Cohort-based rollouts (geo, customer segment)

- Dual execution for validation

Not all canary releases are percentage-based. Some teams roll out by country or user segment first, then expand globally.

Guardrails

To make this safe:

- Automated rollback based on thresholds

- Feature-level observability

- Access control and audit logs
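Automated rollback usually reduces to a threshold check plus an action. In the sketch below, the tolerance and minimum sample size are illustrative assumptions, and the flag is a plain dict rather than any particular platform's object. The minimum sample size keeps the guardrail from tripping on a handful of early errors.

```python
def should_roll_back(errors: int, requests: int, baseline_error_rate: float,
                     tolerance: float = 0.02, min_requests: int = 500) -> bool:
    """Return True when the new variation's error rate breaches the threshold."""
    if requests < min_requests:
        return False  # too little traffic to judge; avoids flapping on noise
    return errors / requests > baseline_error_rate + tolerance


def enforce_guardrail(flag: dict, errors: int, requests: int,
                      baseline_error_rate: float) -> dict:
    """Return the flag config, switched off if the guardrail trips."""
    if should_roll_back(errors, requests, baseline_error_rate):
        return {**flag, "rollout_percent": 0, "reason": "error-rate guardrail tripped"}
    return flag
```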

Decision Making: Continuous Go / No-Go

A migration is a sequence of decisions, not a single moment.

At each stage:

- Define the metric

- Measure impact

- Decide to expand or roll back

In one example from the webinar:

- A rollout reached 30% traffic

- Error rates increased

- Traffic was reduced to 20%

- The issue was isolated and fixed

- Rollout continued safely
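The decision at each checkpoint can be as small as "expand one stage if healthy, otherwise step back one." The stage ladder below is a hypothetical example; with it, an unhealthy checkpoint at 30% returns 20%, matching the webinar example above.

```python
# Hypothetical stage ladder; use whatever percentages your rollout plan defines.
STAGES = (0, 1, 5, 10, 20, 30, 50, 100)


def next_stage(current: int, healthy: bool, stages: tuple[int, ...] = STAGES) -> int:
    """Go/no-go for one checkpoint: expand one step if healthy, else step back one."""
    i = stages.index(current)  # raises ValueError if `current` is not a defined stage
    if healthy:
        return stages[min(i + 1, len(stages) - 1)]
    return stages[max(i - 1, 0)]
```

Stepping back one stage keeps the problem observable at lower exposure while you isolate it, instead of losing all production signal with a full rollback.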

This approach removes pressure from a single “launch moment” and distributes risk across stages.

Advanced Considerations for Developers

Feature Flag Performance and Reliability

Modern flag systems avoid becoming a bottleneck:

- Evaluations happen locally via SDKs

- Configurations are cached

- Systems continue operating even if the flag service is unavailable

This ensures minimal latency and high reliability.
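The local-evaluation pattern can be illustrated with a small polling client. It assumes a hypothetical `fetch_config` callable that returns `{flag_name: bool}`; real SDKs typically stream updates and refresh in the background rather than on the request path as this sketch does.

```python
import time
from typing import Callable, Optional


class CachedFlagClient:
    """Evaluates flags in-process from a cached configuration.

    If the flag service cannot be reached, it keeps serving the last good
    configuration, and falls back to the caller's default for unknown flags.
    """

    def __init__(self, fetch_config: Callable[[], dict], refresh_seconds: float = 30.0) -> None:
        self._fetch = fetch_config
        self._refresh_seconds = refresh_seconds
        self._config: dict = {}
        self._fetched_at: Optional[float] = None

    def _maybe_refresh(self) -> None:
        now = time.monotonic()
        if self._fetched_at is not None and now - self._fetched_at < self._refresh_seconds:
            return  # cache is fresh: no network call on the hot path
        try:
            self._config = self._fetch()
        except Exception:
            pass  # service unavailable: keep the last known configuration
        # Record the attempt either way so an outage does not trigger a fetch per call.
        self._fetched_at = now

    def is_enabled(self, flag: str, default: bool = False) -> bool:
        self._maybe_refresh()
        return bool(self._config.get(flag, default))
```

Choose the `default` passed at each call site deliberately: during a migration it should usually point at the legacy path, so an outage of the flag service fails safe.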

Handling Complex Systems

Not all migrations are equal.

- Data pipelines and database paths require more planning

- Read and write paths may need staged transitions

- Flags still apply, but design complexity increases

The key is incremental transition, not avoidance.
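A staged read/write transition for a data store might look like the sketch below, where the flag selects a phase. The four phases follow a common dual-write pattern; the phase names and the dict-backed stores are hypothetical stand-ins for real databases, and the backfill step is not shown.

```python
from enum import Enum


class Phase(Enum):
    LEGACY_ONLY = "legacy_only"  # read old, write old
    DUAL_WRITE = "dual_write"    # read old, write both (backfill runs meanwhile)
    READ_NEW = "read_new"        # read new, write both (old store kept as fallback)
    NEW_ONLY = "new_only"        # read new, write new; old store can be retired


class MigratingRepository:
    """Routes reads and writes between two stores based on a flag-controlled phase."""

    def __init__(self, old: dict, new: dict, phase: Phase) -> None:
        self.old, self.new, self.phase = old, new, phase

    def put(self, key: str, value: object) -> None:
        if self.phase is not Phase.NEW_ONLY:
            self.old[key] = value
        if self.phase is not Phase.LEGACY_ONLY:
            self.new[key] = value

    def get(self, key: str) -> object:
        reads_new = self.phase in (Phase.READ_NEW, Phase.NEW_ONLY)
        return (self.new if reads_new else self.old).get(key)
```

Each phase is reversible until NEW_ONLY, because the old store keeps receiving writes.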

Managing Flag Lifecycle and Tech Debt

Feature flags are temporary by design.

If left unmanaged, they accumulate and create complexity. Teams need:

- Visibility into flag state and usage

- Defined lifecycle policies

- Cleanup after full rollout

Emerging approaches include automation that detects stale flags and generates pull requests to remove them.
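Stale-flag detection can start as a simple rule over flag metadata. The criteria below are assumptions for illustration: a flag is stale if it has sat fully on or fully off for more than 30 days, or if no code references it anymore. The resulting list could feed a cleanup ticket or pull request.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Flag:
    key: str
    rollout_percent: int
    last_changed: datetime
    referenced_in_code: bool


def stale_flags(flags: list[Flag], now: datetime, max_age_days: int = 30) -> list[str]:
    """Return keys of flags that look safe to remove."""
    cutoff = now - timedelta(days=max_age_days)
    return [
        f.key
        for f in flags
        if (f.rollout_percent in (0, 100) and f.last_changed < cutoff)  # settled long ago
        or not f.referenced_in_code                                     # dead flag
    ]
```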

This Is a Delivery Strategy Change

Adopting progressive delivery is not just a tooling decision. It changes how teams release software.

Key considerations:

- Align with existing change management processes

- Integrate flags into CI/CD pipelines

- Maintain governance and auditability

Feature flags do not bypass controls. They enhance them by adding visibility and control at runtime.

What to Look for in a Feature Flag Platform

For migration use cases, a Feature Flag platform should provide:

- Tight integration with CI/CD pipelines

- Built-in experimentation and metrics

- Governance, approvals, and audit logs

- Developer-friendly SDKs and workflows

Flags should not feel like a bolt-on. They should be part of how software is built and released.

Conclusion: From Risk Event to Controlled Process

The biggest mistake teams make is treating migration as a moment.

It is not.

It is a controlled progression of changes, each validated in production under real conditions.

Feature flags enable this by:

- Decoupling deployment from release

- Enabling gradual exposure

- Providing real-time measurement

- Allowing instant rollback

The result is simple:

Migrations become reversible, observable, and data-driven.

Want a deeper breakdown of these patterns and real-world examples? Read the full ebook or see a demo.

An Introduction to Disaster Recovery Testing: What You Need to Know in 2026

Businesses today run on computers, cloud systems, and digital tools. One big failure can stop everything. A cyber attack, a power outage, or a software glitch can shut down operations for hours or days. Disaster recovery testing is how you prove you can restore critical services when the unexpected happens.

In 2026, with hybrid and multi-cloud estates, distributed data, and tighter oversight, this is not a once-a-year fire drill. It is a continuous discipline that validates plans, uncovers weak links before they cause outages, and gives leaders confidence that customer-facing and internal systems can bounce back on demand.

Disaster recovery testing is a simple way to practice getting your systems back online after something goes wrong. It checks if your backup plans actually work before a real problem hits. This blog gives you a clear, step-by-step look at what it is, why it is essential right now, and how to get started.

What Is Disaster Recovery Testing?

Disaster recovery testing is a structured way to confirm that systems, data, and services can be restored to meet defined recovery goals after a disruption. The mandate is simple: verify that recovery works as designed and within the time and data loss thresholds the business requires. Effective programs test more than technology. They exercise people, processes, communications, and third-party dependencies end to end. The goal is to prove you can bring back data, apps, and services quickly with little loss.

A strong disaster recovery test plan typically covers:

- Clear recovery time objectives (RTOs) and recovery point objectives (RPOs) for each application tier.

- A current asset and application inventory with criticality tiers and upstream/downstream dependencies.

- Documented runbooks and playbooks for failover and failback, including decision criteria.

- Data protection strategies such as backups, replication, and snapshots with defined retention and immutability.

- Communication plans for internal teams, executives, customers, and partners.

- Roles and responsibilities, escalation paths, and an incident command structure.

- Third-party and vendor recovery commitments, service level agreements, and contact procedures.

- Metrics, governance, and reporting for audits and continuous improvement.
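RTO and RPO become testable once each drill records three timestamps. In the sketch below, the field names are our own: recovery time is measured from disruption to restored service, and data loss is the gap between the disruption and the newest write present after the restore.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class RecoveryObjective:
    rto: timedelta  # maximum tolerable time to restore service
    rpo: timedelta  # maximum tolerable window of lost data


@dataclass
class DrTestResult:
    disruption_at: datetime
    restored_at: datetime
    last_recoverable_write: datetime  # newest data present after the restore


def evaluate(result: DrTestResult, objective: RecoveryObjective) -> dict:
    """Compare one test run against the tier's recovery objectives."""
    recovery_time = result.restored_at - result.disruption_at
    data_loss = result.disruption_at - result.last_recoverable_write
    return {
        "recovery_time": recovery_time,
        "data_loss_window": data_loss,
        "rto_met": recovery_time <= objective.rto,
        "rpo_met": data_loss <= objective.rpo,
    }
```

Storing these results per application tier gives you the metrics and audit evidence the last item in the list calls for.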

Without regular tests, even the best plan stays unproven. Many companies learn this the hard way when an outage lasts longer than expected.

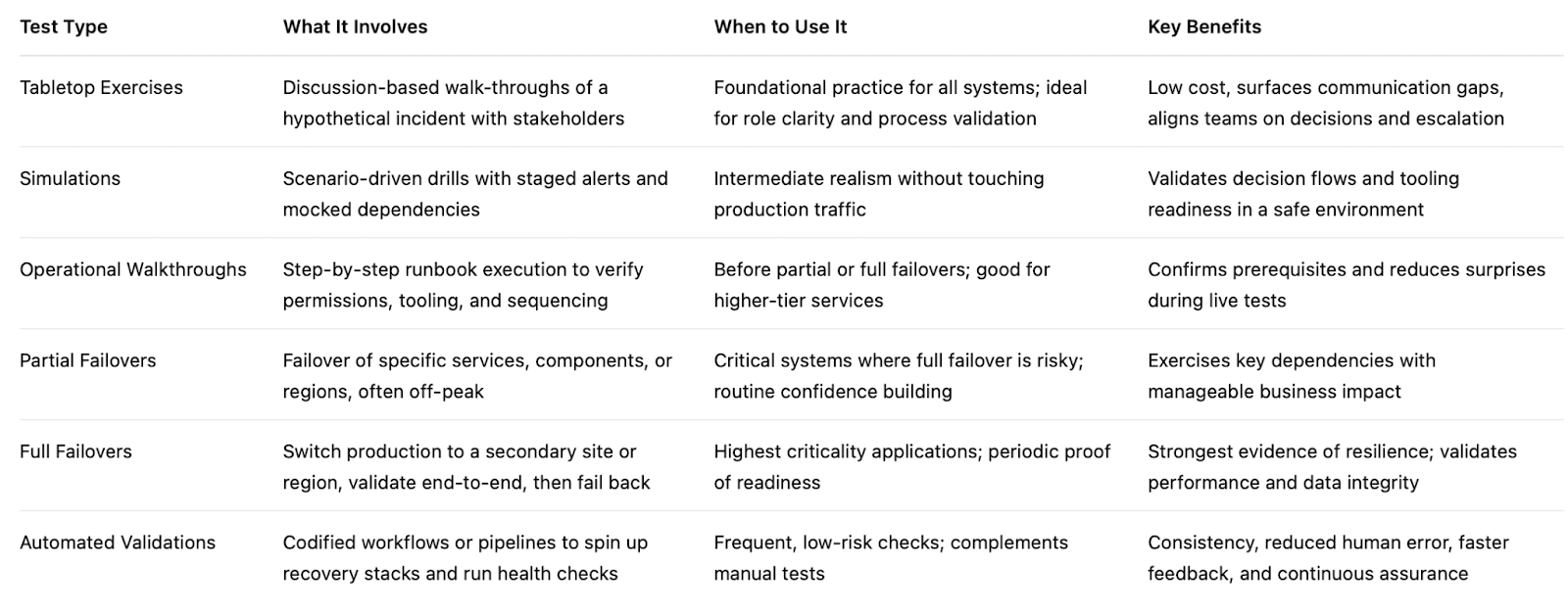

Types of Disaster Recovery Tests

Different systems require different levels of validation based on their criticality, risk, and business impact. A layered testing strategy helps teams build confidence gradually, starting with low-risk discussions and moving toward full-scale failovers.

By combining multiple types of tests, organizations can validate both technical recovery and team readiness without unnecessary disruption.

Tabletop Exercises:

Tabletop exercises are discussion-based sessions where stakeholders walk through a hypothetical disaster scenario step by step. These are typically the starting point for any disaster recovery program, as they help clarify roles, responsibilities, and decision-making processes. While they do not involve actual system changes, they are highly effective in identifying communication gaps and aligning teams on escalation paths.

Simulations:

Simulations introduce more realism by creating scenario-driven drills with staged alerts and mocked dependencies. Teams respond as if a real incident is happening, but without impacting production systems. This type of testing is useful for validating how teams react under pressure and ensuring that tools, alerts, and workflows function as expected in a controlled environment.

Operational Walkthroughs:

Operational walkthroughs involve executing recovery runbooks step by step to verify that all prerequisites such as permissions, tooling, and sequencing are in place. These tests are more hands-on than simulations and are often conducted before attempting partial or full failovers. They help reduce surprises by ensuring that recovery procedures are practical and executable.

Partial Failovers:

Partial failovers test the recovery of specific services, components, or regions, usually during off-peak hours. This approach allows teams to validate critical dependencies and recovery workflows without risking the entire system. It is especially useful for building confidence in complex environments where a full failover may be too risky or costly to perform frequently.

Full Failovers:

Full failovers are the most comprehensive form of disaster recovery testing, where production systems are completely switched to a secondary site or region. After validation, systems are failed back to the primary environment. These tests provide the strongest proof of resilience, as they validate end-to-end recovery, including performance and data integrity, but they require careful planning due to their potential impact.

Automated Validations:

Automated validations use codified workflows or pipelines to continuously test recovery processes. These tests can automatically spin up recovery environments, validate configurations, and run health checks. They are ideal for frequent, low-risk testing and help reduce human error while providing fast and consistent feedback. Over time, automation becomes a key driver for maintaining continuous assurance in disaster recovery readiness.
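A pipeline step for automated validation can be as simple as running a set of checks with retries and reporting pass/fail. The check functions are hypothetical placeholders; in practice they might query a health endpoint, run a test transaction, or verify DNS in the recovery region.

```python
import time
from typing import Callable


def run_validations(checks: dict[str, Callable[[], bool]],
                    retries: int = 3, delay_s: float = 1.0) -> dict[str, bool]:
    """Run each recovery check, retrying transient failures, and report pass/fail."""
    results: dict[str, bool] = {}
    for name, check in checks.items():
        passed = False
        for attempt in range(retries):
            try:
                passed = bool(check())
            except Exception:
                passed = False  # an exception counts as a failed attempt
            if passed:
                break
            if attempt < retries - 1:
                time.sleep(delay_s)  # give the recovered service time to settle
        results[name] = passed
    return results
```

A pipeline would then fail the stage when `not all(results.values())`, turning each recovery drill into a repeatable, recorded test.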

The table below outlines the primary types of disaster recovery testing and where they fit.

If you are building a disaster recovery testing checklist, include a mix of these types of disaster recovery testing and map each to the systems they protect. Over time, increase the frequency of automated validations and reserve full failovers for the highest-value services.

Why Disaster Recovery Testing Matters in 2026

The world is more connected than ever. Companies rely on cloud services, remote teams, and AI tools. At the same time, threats keep growing. Cyber attacks like ransomware are more common. Natural events and supply chain problems add extra risk. Cloud systems can fail without warning.

Recent studies show the cost of downtime keeps rising. For many large companies, one hour of downtime can cost more than 300,000 dollars. Some industries see losses climb into the millions per hour. Smaller businesses lose thousands per minute in lost sales and unhappy customers.

In 2026, experts note that most organizations still test their recovery plans only once or twice a year. That is not enough. Systems change fast. New software updates, new cloud setups, and new team members can break old plans.

Regular testing gives you confidence. It cuts recovery time and protects revenue. It also helps satisfy regulators in banking, healthcare, and government, who require proof of preparedness.

How Modern Tools Make Disaster Recovery Testing Easier

Traditional testing took weeks of manual work. Today, platforms combine different testing methods in one place. This approach saves time and gives better results.

For example, Harness recently released its Resilience Testing module. It brings together chaos testing (to inject real-world failures safely), load testing (to check performance under stress), and disaster recovery testing. You can run everything inside your existing pipelines. This means you can test recovery steps automatically, validate failovers, and spot risks early.

Teams using this kind of integrated platform report faster recovery times and fewer surprises. It fits right into daily development work instead of feeling like an extra project.

The Role of AI in Disaster Recovery Testing

Artificial intelligence is making disaster recovery testing much smarter in 2026. It turns testing from a once-a-year chore into something fast, ongoing, and more accurate.

AI helps teams spot problems early by analyzing system data and predicting where failures might happen, allowing issues to be fixed before they cause real damage. It also enables continuous and automated testing, running scenarios in the background without interrupting normal business operations. Instead of manually creating test plans, AI can generate and recommend the most relevant scenarios based on your actual system setup, saving time and improving coverage.

Another major advantage is how quickly AI can analyze results. It processes test outcomes in real time and clearly points out what needs to be fixed, removing the guesswork. Over time, it learns from every test run and continuously improves your disaster recovery strategy, making it more reliable with each iteration.

Overall, AI helps teams recover faster and with fewer mistakes. Rather than relying on assumptions, teams get clear, data-driven insights to strengthen their systems. Tools like the Resilience Testing module from Harness already bring these capabilities into practice by combining chaos testing, load testing, and disaster recovery testing. With AI built into the platform, it can recommend the right tests, automate execution, and provide simple, actionable steps to improve system resilience.

Conclusion

Disaster recovery testing is not a one-time task. It is an ongoing habit that protects your business in 2026 and beyond. The companies that test regularly recover faster, lose less money, and keep customer trust.

Take a moment now to review your current plan. Pick one critical system and schedule a simple test this quarter. If you want a modern way to make the process simple and powerful, look at solutions like the Resilience Testing module from Harness. It helps you combine multiple testing types and use AI so you stay ready no matter what comes next.

Your business depends on technology. Make sure that technology can bounce back when it counts. Start testing today and build the confidence your team needs for whatever 2026 brings.

What is Terragrunt and how does it simplify Terraform Workflows?

- Terragrunt significantly reduces operational complexity for enterprise teams by enabling DRY configuration, orchestrating multi-stack deployments, and standardizing remote state management across dozens of environments.

- While Terragrunt solves orchestration and consistency challenges at scale, it is best used in conjunction with a robust Continuous Delivery platform like Harness to achieve end-to-end governance, auditability, and automated release verification.

- Terragrunt is most valuable for organizations managing complex, multi-environment infrastructures, but can introduce unnecessary complexity for smaller teams or simple setups.

Managing Terraform across dozens of AWS accounts becomes a maintenance nightmare fast. Teams end up copy-pasting the same backend configurations, provider blocks, and variable definitions hundreds of times.

Terragrunt acts as an orchestrator above Terraform, eliminating this duplication through shared configuration inheritance and dependency management. When financial services teams manage 200+ microservices across multiple environments, these DRY patterns become essential for governance and consistency.

For regulated industries requiring strict audit trails and policy enforcement, understanding when Terragrunt pays off can mean the difference between scalable infrastructure and operational chaos. Harness Infrastructure as Code Management provides the enterprise control plane that orchestrates these complex, multi-stack deployments while maintaining the governance standards your organization demands.

What is Terragrunt?

Terragrunt is an open-source tool (built by Gruntwork) that runs Terraform for you, but with helpful superpowers:

- It reduces repeated Terraform code across environments (DRY).

- It makes it easier to manage remote state and locking consistently.

- It provides a clean pattern for multi-environment and multi-account setups.

- It can orchestrate running Terraform across many modules in dependency order.

At a high level, you keep writing normal Terraform modules (the .tf files you already know). Terragrunt adds a lightweight configuration file, typically terragrunt.hcl, that tells Terragrunt how to call Terraform for a given environment/module.

Think of Terraform as the engine, and Terragrunt as the driver who keeps you from taking wrong turns.

Why Terragrunt exists

Terraform is excellent at defining infrastructure, but large Terraform codebases tend to develop a few recurring issues.

1) Repeating the same config everywhere

Common examples:

- Repeating backend config (S3 bucket name, DynamoDB lock table, key path, region…)

- Repeating provider config patterns

- Repeating environment wiring (prod/staging/dev) for the same module

Terraform doesn’t let you parameterize everything the way you might want, and backend config in particular can get awkward (because backends are configured before variables load).

2) Keeping environments consistent

You want:

- Same module version pinned across environments

- Same state conventions

- Same tagging rules

- Same guardrails (like “don’t apply prod from your laptop at 2am”)

Terraform can do some of this with discipline and tooling, but Terragrunt makes consistency easier to enforce.

3) Running lots of Terraform in the right order

In real life, stacks are composed:

- Networking first (VPC/VNet)

- Then clusters/compute

- Then databases

- Then apps

Terraform can model dependencies within a root module, but if you split concerns into separate state files (common in large orgs), you need an orchestration system. Terragrunt helps you run multiple Terraform modules in dependency order.

How Terragrunt works (conceptually)

Terragrunt reads terragrunt.hcl, then:

- Creates a temporary working directory

- Pulls or references the Terraform module source you specify

- Injects inputs (variables), remote state config, and other settings

- Executes Terraform commands (init, plan, apply, etc.)

You still get standard Terraform behavior (plans, state, providers, and modules); Terragrunt just standardizes how Terraform is invoked.

A typical Terragrunt layout separates:

- Reusable Terraform modules (pure Terraform, no env-specific stuff)

- Live configuration per environment (Terragrunt files that point to modules + pass inputs)

This separation alone is often the “aha” moment for teams.

Key features that make Terragrunt attractive

1) DRY configuration with inheritance

Terragrunt supports patterns where child configs inherit from parent configs.

For example:

- A root terragrunt.hcl might define remote state + common inputs.

- Environment-level config sets region/account specifics.

- Module-level config passes module-specific inputs.

This keeps your live environment folders small and readable.
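Conceptually, the root, environment, and module layers behave like successive dictionary merges where the more specific layer wins. The Python sketch below models that idea as a recursive deep merge. It is an illustration only, not Terragrunt's implementation; Terragrunt's own `include` merge behavior has its own rules, for example around lists.

```python
from typing import Any


def deep_merge(parent: dict[str, Any], child: dict[str, Any]) -> dict[str, Any]:
    """Merge child into parent; nested maps merge, everything else is overridden."""
    merged = dict(parent)
    for key, value in child.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged


def resolve_inputs(*layers: dict[str, Any]) -> dict[str, Any]:
    """Apply layers from most general (root) to most specific (module)."""
    result: dict[str, Any] = {}
    for layer in layers:
        result = deep_merge(result, layer)
    return result
```

With a root layer setting `region` and common `tags`, an environment layer overriding `region`, and a module layer adding `instance_count`, the module ends up with all three while each file stays small.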

2) Remote state made consistent (and less painful)

Remote state is not optional if you’re serious about Terraform in teams.

Terragrunt makes it easy to define remote state once and reuse it everywhere, including consistent naming conventions for state keys.

This matters because “remote state drift” (people making small differences between stacks) is one of the fastest ways to make infra harder than it needs to be.
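A common Terragrunt convention derives each state key from the module's folder path, typically written in the root config as `key = "${path_relative_to_include()}/terraform.tfstate"`. The Python sketch below models the same convention so its results are easy to reason about. The bucket and lock-table naming scheme is a hypothetical example.

```python
from pathlib import PurePosixPath


def state_key(live_root: str, module_dir: str, filename: str = "terraform.tfstate") -> str:
    """Derive the state key from the module's path relative to the live root."""
    relative = PurePosixPath(module_dir).relative_to(live_root)
    return str(relative / filename)


def s3_backend_config(account: str, region: str, live_root: str, module_dir: str) -> dict[str, str]:
    """Hypothetical naming convention for an S3 backend with a DynamoDB lock table."""
    return {
        "bucket": f"tfstate-{account}",
        "key": state_key(live_root, module_dir),
        "region": region,
        "dynamodb_table": f"tfstate-locks-{account}",
        "encrypt": "true",
    }
```

Because the key mirrors the folder layout, two modules can only collide on state if they share a directory, which makes drift between stacks much harder to introduce.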

3) Dependency handling between modules

Terragrunt can define dependencies between modules so you can:

- Apply foundational infra first

- Reference outputs from other modules cleanly

- Run “the whole stack” safely

This is especially helpful when you intentionally split state by domain (network, security, compute, etc.).

4) run-all orchestration

Terragrunt can run Terraform across multiple modules with commands like:

- terragrunt run-all plan

- terragrunt run-all apply

It will traverse your folder structure, respect dependencies, and execute in the right order.
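Dependency-ordered execution is essentially a topological sort. The sketch below uses Python's standard `graphlib` to group hypothetical modules into layers: everything in one layer can run in parallel once the previous layer is done. It illustrates the ordering idea, not Terragrunt's internal scheduler.

```python
from graphlib import CycleError, TopologicalSorter


def apply_order(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group modules into layers; each module depends only on earlier layers.

    `dependencies` maps a module to the set of modules it depends on.
    """
    sorter = TopologicalSorter(dependencies)
    try:
        sorter.prepare()
    except CycleError as err:
        # CycleError.args[1] holds the nodes that form the cycle.
        raise ValueError(f"dependency cycle: {err.args[1]}") from err
    layers: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all dependencies already applied
        layers.append(ready)
        sorter.done(*ready)
    return layers
```

For a stack where `eks` and `rds` both depend on `vpc`, and `app` depends on both, this yields `[["vpc"], ["eks", "rds"], ["app"]]`. A destroy runs the same layers in reverse.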

Is that always the best approach? Not always (we’ll talk about that). But it’s very useful for bootstrapping or for teams that want controlled multi-module workflows.

5) Keeping Terraform modules clean

Terragrunt encourages a clean separation:

- Modules = reusable infrastructure building blocks

- Live config = environment wiring

That separation tends to improve module quality and reuse over time.

What Terragrunt is not (important expectations)

Terragrunt will not magically fix:

- Poor module design

- A lack of testing or review

- Unclear ownership of infrastructure

- Weak CI/CD practices

- Provider misconfigurations

It also doesn’t replace Terraform Cloud/Enterprise features like policy enforcement, run history UI, remote operations, etc. (though it can work alongside them depending on how you run Terraform).

Terragrunt is best thought of as a scaling tool for Terraform code organization and orchestration.

The disadvantages of Terragrunt

Before you adopt it, be honest about the costs.

1) Another tool to learn and maintain

Terragrunt uses HCL, so it feels familiar, but it’s still:

- Another CLI

- Another config layer

- Another set of conventions

New engineers must learn both Terraform and Terragrunt patterns in your repo.

2) Debugging can feel more indirect

When Terragrunt runs Terraform, it may:

- Generate temp directories

- Fetch modules dynamically

- Inject config you don’t see in your Terraform files

If someone is used to running Terraform directly inside a root module, the indirection can be confusing at first.

3) Repo structure becomes a “system”

Terragrunt shines with a consistent folder structure, but that also means your layout becomes a contract.

If teams disagree on structure or naming, Terragrunt can amplify friction.

4) run-all can be risky if you treat it like a hammer

Running everything everywhere is tempting. In large organizations, you generally want:

- Smaller blast radius

- Targeted changes

- Change windows and approvals for sensitive layers

Terragrunt can support disciplined workflows, but it can also enable “oops I applied 42 modules” if you don’t lock things down.

5) Terraform itself keeps improving

Terraform has evolved a lot, and tooling around Terraform has grown too (Terraform Cloud, wrappers, CI patterns, monorepo tooling). Some teams find that modern Terraform workflows reduce the need for Terragrunt, especially if they prefer fewer layers.

When is Terragrunt worth it

Terragrunt tends to be worth it when you check several of these boxes:

You have multiple environments and they’re getting repetitive

If you’ve got dev, staging, prod, and every change involves copying small differences across folders, Terragrunt’s DRY patterns can immediately pay off.

You manage multiple accounts/subscriptions/projects

If your infra spans:

- Multiple AWS accounts

- Multiple Azure subscriptions

- Multiple GCP projects

…Terragrunt can help standardize how each environment is wired and keep the “live” config manageable.

You want a clear split between modules and live configuration

If your Terraform code mixes reusable module logic with environment specifics, Terragrunt's recommended pattern can quickly make your repo cleaner.

You intentionally split state into multiple components

If you keep separate state for networking, IAM, compute, and apps, Terragrunt’s dependency management and orchestration become genuinely useful.

Your team wants consistent conventions enforced by structure

If you want to enforce:

- how remote state is configured

- how modules are pinned

- how inputs are organized

Terragrunt provides a “pit of success” structure that helps teams do the right thing by default.

When Terragrunt might not be worth it

Terragrunt can be overkill when:

Your Terraform setup is small or stable

If you have a handful of root modules and only one environment, you’ll probably feel more overhead than benefit.

You rely heavily on Terraform Cloud/Enterprise workflows

If your whole workflow revolves around Terraform Cloud runs, policies, and UI-driven execution, you may prefer to keep things simple with native Terraform patterns.

You prefer “one root module per environment” and it’s working

Some teams keep a single root module per environment and use standard Terraform practices (workspaces or separate state) without orchestration. If that’s clean and disciplined, you might not need Terragrunt.

Your team is already tool-fatigued

Adding Terragrunt is a cultural choice as much as a technical one. If your org struggles with consistency or onboarding already, introducing another abstraction layer can slow you down.

A practical checklist: should we adopt it?

Here’s a quick decision framework you can use with your team.

Best practices if you do adopt Terragrunt

If you go for it, these practices reduce pain and increase payoff.

1) Keep Terraform modules pure

Avoid putting environment-specific assumptions inside modules. Let Terragrunt handle env wiring and inputs.

2) Pin module versions intentionally

Terragrunt makes it easy to point to a module source. Don’t leave it floating.

- Use version tags for git sources

- Use module registry versions where possible
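A lightweight CI check can catch floating sources. The sketch below assumes remote Git sources carry a `?ref=` query and Terraform Registry sources use Terragrunt's `tfr://` form with `?version=`. It accepts semantic-version tags or full commit SHAs, and treats local paths as versioned with the repository. The example module URL in the usage note is hypothetical.

```python
import re
from urllib.parse import parse_qs

SEMVER = re.compile(r"^v?\d+\.\d+\.\d+$")
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def is_pinned(source: str) -> bool:
    """Return True if a module source points at an immutable version."""
    if source.startswith(("./", "../", "/")):
        return True  # local modules are versioned together with the repo
    _, _, query_string = source.partition("?")
    query = parse_qs(query_string)
    if source.startswith("tfr://"):
        return bool(SEMVER.match(query.get("version", [""])[0]))
    ref = query.get("ref", [""])[0]
    return bool(SEMVER.match(ref) or FULL_SHA.match(ref))
```

For example, `git::https://github.com/acme/infra-modules.git//vpc?ref=v1.4.2` passes, while the same source with `?ref=main` or no `ref` at all fails.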

3) Standardize state naming conventions early

Decide how state keys are formed and stick to it. Your future self will thank you.

4) Put guardrails around production

Examples:

- Require CI for prod applies

- Restrict who can run apply in prod directories

- Separate credentials and enforce least privilege
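Guardrails like these are often enforced by a small pre-apply check in the pipeline. The sketch assumes production modules live under a directory named exactly `prod`, that the caller reports whether it is running in CI (many CI systems set a `CI` environment variable), and that a hypothetical allowlist names who may apply production.

```python
from pathlib import PurePosixPath


def apply_allowed(module_path: str, running_in_ci: bool, actor: str,
                  prod_appliers: frozenset[str]) -> tuple[bool, str]:
    """Decide whether `actor` may run apply for `module_path`, with a reason."""
    if "prod" not in PurePosixPath(module_path).parts:
        return True, "non-production module"
    if not running_in_ci:
        return False, "production applies must run in CI"
    if actor not in prod_appliers:
        return False, f"{actor} is not allowed to apply production"
    return True, "approved production apply"
```

Returning a reason alongside the decision makes the denial self-explanatory in pipeline logs and gives auditors a record of why an apply was blocked.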

Terragrunt won’t do governance by itself, but it works well with governance.

5) Be careful with run-all apply

Use it deliberately:

- Great for bootstrapping or non-prod

- Use extra controls for prod

- Prefer targeted plans when possible
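Targeted plans start with knowing which modules a change actually affects. Terragrunt has its own include/exclude filtering options; the sketch below shows the underlying idea independently. It takes a list of changed files (for example from `git diff --name-only`), finds the modules that contain them, and then adds every module downstream via a hypothetical `dependents` map.

```python
from pathlib import PurePosixPath


def affected_modules(changed_files: list[str], module_dirs: list[str],
                     dependents: dict[str, set[str]]) -> list[str]:
    """Modules touched by a change, plus every module downstream of them.

    `dependents` maps a module to the set of modules that depend on it.
    """
    affected = {
        module
        for module in module_dirs
        for path in changed_files
        if PurePosixPath(path).is_relative_to(module)  # component-wise, not string prefix
    }
    queue = list(affected)
    while queue:
        module = queue.pop()
        for dependent in dependents.get(module, set()):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return sorted(affected)
```

Planning only this set keeps the blast radius of each change visible, instead of running every module in the repository.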

Terragrunt Frequently Asked Questions

Teams evaluating Terragrunt often have specific questions about when it adds value versus complexity. These answers focus on practical trade-offs, compliance implications, and common pitfalls that enterprise teams encounter.

What problems does Terragrunt solve that Terraform modules alone do not?

Terraform modules provide code reuse but don't address backend configuration duplication, cross-stack dependencies, or consistent CLI flag management. Terragrunt centralizes remote state configuration, orchestrates deployment order through dependency blocks, and eliminates repetitive variable files. It also enables immutable module promotion across environments using git refs, which modules alone cannot enforce.

How does Terragrunt impact security, compliance, and audit trails in regulated environments?

Terragrunt standardizes remote state backends and locking mechanisms, reducing the configuration drift that auditors flag as a compliance risk. However, it doesn't provide built-in audit trails or policy enforcement. Teams need complementary tools, such as Harness governance features for OPA policies, RBAC, and immutable audit logs. Terragrunt's dependency orchestration can also support compliance by ensuring security groups deploy before applications.

When is Terragrunt overkill, and what are the common anti-patterns to avoid?

Terragrunt adds unnecessary complexity for teams managing fewer than 10 environments or simple single-account setups. A common operational pitfall is letting .terragrunt-cache directories multiply across the repo, which causes disk bloat and operational friction. Teams also struggle with overly complex dependency chains that create deployment bottlenecks. Consider whether centralized orchestration tooling better serves your scaling needs.

Does Terragrunt replace the need for a continuous delivery platform?

No. Terragrunt handles infrastructure provisioning but lacks deployment verification, rollback automation, and release coordination across multiple services. Enterprise teams typically use Terragrunt for infrastructure provisioning within broader CD workflows. Platforms like Harness provide AI-powered verification, automated rollbacks, and release orchestration that Terragrunt cannot deliver alone.

How does Terragrunt integrate with existing GitOps workflows?

Terragrunt works well within GitOps by generating plan files and managing state through PR-driven workflows. However, teams often struggle with PR sprawl across environments and a lack of centralized visibility. Consider using an enterprise GitOps control plane to orchestrate Terragrunt execution, enforce policies, and provide unified dashboards across your entire GitOps estate while preserving your existing Argo CD investments.

Is Terragrunt Worth It? Practical Next Steps and How to Orchestrate at Scale

Terragrunt becomes worthwhile when you're managing infrastructure across dozens of environments and need standardized remote state, DRY inheritance, and cross-stack orchestration. Its benefits for automated infrastructure deployment are greatest at enterprise scale, when managing 66+ services manually takes more effort than adopting new automation patterns.

To get started, pilot in your non-production environment using a clear hierarchy for repo structure and promotion flows. Define your policies, audit controls, and SLOs before expanding across accounts. This approach reduces risk while proving value to stakeholders who need evidence before broader adoption.

Ready to orchestrate complex releases without the manual toil? Harness Infrastructure as Code Management provides the enterprise control plane that complements your Terragrunt investments with AI-powered pipelines, automated verification, and policy-driven governance across your entire software delivery lifecycle.

The Complete Guide to Feature Testing for Modern DevOps Teams

Today’s teams are challenged to ship fast without breaking things. Traditional deployment strategies tie every code change directly to user exposure, forcing teams to trade velocity for safety and live with stressful, all-or-nothing releases.

Feature testing changes that.

In modern DevOps, you don't have to cross your fingers during a big-bang rollout. Instead, you can use feature testing strategies to deploy code in the "off" state behind feature flags and then progressively make it available to real users through controlled rollouts, experiments, and real-time verification. Before going all the way, you check whether the feature works, whether it behaves as expected, and whether it demonstrably improves key metrics.

Harness Feature Management & Experimentation (FME) combines enterprise-scale feature flags, AI-driven release monitoring, and automated rollbacks into a single platform that eliminates manual toil and dramatically reduces the blast radius of every change.

Key Takeaways:

- Feature testing uses feature flags, progressive delivery, and experiments to make sure that new features work safely in real-world settings before they are fully rolled out.

- Automated guardrails, AI-driven verification, and instant rollbacks take the place of manual deployment babysitting and lower the risk of production releases.

- As your feature testing program grows, good governance, lifecycle management, and observability keep feature flags from becoming technical debt.

The Practical Benefit of Feature Testing

Feature testing is the practice of validating individual product features or changes by turning them on for specific users or segments, measuring their impact, and iterating based on real data. Instead of treating a release as a binary “on/off” event, you treat each feature as something you can test, tune, and prove in production-like conditions.

In practical terms, feature testing usually combines:

- Feature flags (toggles) that control who sees a feature and when.

- Progressive rollouts that move from a small percentage of traffic to full exposure based on guardrails.

- Experimentation and analytics to compare “feature on” vs “feature off” or different configurations of the same feature.

Compared to traditional functional testing, which answers “does this feature work according to spec?” and is well covered in Microsoft testing best practices documentation, feature testing answers broader questions: “Does this feature behave correctly under real load, in real environments, and does it actually improve user or business outcomes?”

How Feature Testing Improves Deployment Safety in CI/CD Pipelines

In many pipelines, code changes and user exposure are tightly coupled: once you deploy, everyone sees the change. That’s what creates big-bang releases, long regression cycles, and weekend war rooms, and it clashes with Google’s Site Reliability Engineering practices, which focus on balancing speed and reliability.

Modern feature testing in CI/CD improves safety through three mechanisms: safe deployments, cross-pipeline validation, and automated guardrails.

1. Deploy Code Safely in the “Off” State

With feature testing, new functionality is put behind feature flags. You deploy to production with flags disabled, so the code is present but dormant. If something goes wrong, you don’t scramble to roll back an entire deployment; you switch off a specific feature in seconds.

This pattern:

- Controls and minimizes the blast radius of each change.

- Enables safe testing in production, exposing a new feature to specific teams or selected beta users before making it generally available.

- Supports trunk-based development, where teams continuously merge small changes without exposing half-finished work.

You can reinforce these best practices with Harness CD’s ability to deploy anywhere across clusters, regions, and environments.
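The "deploy dark, then flip" pattern described above can be sketched in a few lines. `FlagStore` and `render_checkout` are hypothetical stand-ins written for illustration; they are not the Harness FME SDK, which has its own client API. The key design choice is that an unknown or missing flag defaults to off, so newly deployed code stays dormant.

```python
from dataclasses import dataclass, field


@dataclass
class FlagStore:
    """Hypothetical in-memory flag store; real SDKs fetch and cache rules."""
    enabled: dict[str, bool] = field(default_factory=dict)

    def is_on(self, flag: str) -> bool:
        # Unknown flags default to off, so freshly deployed code stays dormant.
        return self.enabled.get(flag, False)

    def kill(self, flag: str) -> None:
        # Kill switch: turn one feature off without redeploying anything.
        self.enabled[flag] = False


def render_checkout(flags: FlagStore) -> str:
    if flags.is_on("checkout_v2_rollout_2026q1"):
        return "checkout-v2"  # new code path, shipped in the "off" state
    return "checkout-v1"  # existing, known-good path


if __name__ == "__main__":
    flags = FlagStore()
    print(render_checkout(flags))  # checkout-v1 (flag not set yet)
    flags.enabled["checkout_v2_rollout_2026q1"] = True
    print(render_checkout(flags))  # checkout-v2
    flags.kill("checkout_v2_rollout_2026q1")
    print(render_checkout(flags))  # checkout-v1 again, no redeploy needed
```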

2. Validate Early in CI, Verify Live in CD

Feature testing spreads risk management across the pipeline. In CI, you run automated tests and static checks to catch regressions before code ever reaches production. In CD, you gradually enable the feature for real-world traffic and measure its impact on performance and behavior.

- CI validation ensures that the feature doesn’t break existing contracts or core flows.

- CD verification checks how the feature behaves under active real-world workloads, infrastructure, and user patterns.
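On the CI side, one way to confirm that a flagged change doesn't break existing contracts is to run the same assertions with the flag both off and on. The function `order_total_cents` and its "round up to the nearest 5 cents" behavior are hypothetical examples; the technique shown is the standard-library `unittest` `subTest` loop over flag states.

```python
import unittest


def order_total_cents(items: list[tuple[int, int]], new_rounding: bool) -> int:
    """Hypothetical function with a flagged change; items are (unit_cents, qty)."""
    subtotal = sum(unit * qty for unit, qty in items)
    if new_rounding:
        # New behavior behind the flag: round up to the nearest 5 cents.
        return -(-subtotal // 5) * 5
    return subtotal


class OrderTotalContract(unittest.TestCase):
    """Run the same contract checks with the flag off and on."""

    CART = [(199, 2), (350, 1)]

    def test_contract_holds_in_both_flag_states(self) -> None:
        subtotal = sum(unit * qty for unit, qty in self.CART)
        for flag_on in (False, True):
            with self.subTest(flag_on=flag_on):
                total = order_total_cents(self.CART, new_rounding=flag_on)
                # Contracts that must hold regardless of the flag state:
                self.assertGreaterEqual(total, subtotal)  # never undercharge
                self.assertLess(total - subtotal, 5)  # never overcharge by 5+ cents
                self.assertEqual(order_total_cents([], new_rounding=flag_on), 0)


if __name__ == "__main__":
    unittest.main()
```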

To keep feedback loops tight, teams can use Harness CI Test Intelligence and Incremental Builds so that only the tests and assets impacted by feature changes are rebuilt and run. That means faster builds and more iterations of feature tests per day.

3. Replace Manual Monitoring with Automated Guardrails

Manual deployment babysitting doesn’t scale. Engineers watch dashboards, refresh logs, and debate in chats about whether a metric “looks bad enough” to roll back. We’ve all been there. Modern feature testing replaces these outdated practices with explicit guardrails tied to each feature.

You define thresholds for:

- System metrics (error rates, p95 latency, memory, CPU)

- User behavior (conversion, click-through, drop-off, task completion)

- Business KPIs (revenue per session, subscription starts, trial activations)

When metrics drift beyond acceptable ranges for a feature test, automated systems pause the rollout or roll the feature back automatically. Harness CD’s AI-assisted deployment verification and metric alert webhooks make these guardrails part of your standard pipeline.
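The decision logic behind such guardrails can be sketched as a pure function that maps observed metrics to continue, pause, or roll back. This is an illustrative sketch, not Harness CD's verification engine or configuration format; the metric names and thresholds are made-up examples. Note the `higher_is_worse` switch, which lets business KPIs such as conversion (where a drop is bad) share the same comparison as error rates.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    PAUSE = "pause"
    ROLLBACK = "rollback"


@dataclass(frozen=True)
class Guardrail:
    metric: str
    pause_at: float  # crossing this threshold pauses the rollout
    rollback_at: float  # crossing this threshold turns the feature off
    higher_is_worse: bool = True  # False for metrics like conversion rate


def evaluate(guardrails: list[Guardrail], observed: dict[str, float]) -> Action:
    """Return the most severe action triggered by any guardrail."""
    action = Action.CONTINUE
    for g in guardrails:
        value = observed.get(g.metric)
        if value is None:
            # Missing data is treated as unsafe: hold the rollout rather than proceed.
            action = Action.PAUSE
            continue
        # Flip signs so "lower is worse" metrics reuse the same comparison.
        sign = 1 if g.higher_is_worse else -1
        if sign * value >= sign * g.rollback_at:
            return Action.ROLLBACK
        if sign * value >= sign * g.pause_at:
            action = Action.PAUSE
    return action


# Illustrative thresholds only; real values come from your own SLOs and baselines.
GUARDRAILS = [
    Guardrail("error_rate", pause_at=0.01, rollback_at=0.05),
    Guardrail("p95_latency_ms", pause_at=400, rollback_at=800),
    Guardrail("conversion_rate", pause_at=0.030, rollback_at=0.025, higher_is_worse=False),
]

if __name__ == "__main__":
    healthy = {"error_rate": 0.002, "p95_latency_ms": 310, "conversion_rate": 0.034}
    print(evaluate(GUARDRAILS, healthy))  # Action.CONTINUE
    print(evaluate(GUARDRAILS, {**healthy, "p95_latency_ms": 520}))  # Action.PAUSE
    print(evaluate(GUARDRAILS, {**healthy, "error_rate": 0.07}))  # Action.ROLLBACK
```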

Types of Feature Tests You’ll Actually Run

In practice, most teams cycle through a few common patterns of feature testing:

- Fit Validation Tests: Turn a feature on for a small audience (e.g. 1–5% of traffic) and measure these users’ key performance indicators. Compare these measurements with the KPIs of users who don’t see the feature. This answers “should we keep this feature at all?”

- Configuration and Variant Tests: Run different configurations of the same feature (layout, copy, price points, algorithm parameters) as variations. Measure which variant performs best, then roll out the winner.

- Rollout / Guardrail Tests: Use percentage-based ramps (1% → 5% → 25% → 50% → 100%) and validate guardrails at each stage. If a guardrail is breached, automatically roll the feature back.

- Performance and Reliability Tests: Turn the feature on in environments or segments that mimic worst-case scenarios (high load, specific device types, critical user journeys) to catch performance regressions before broad release.

- Long-Running Optimization Tests: Keep mature features under ongoing feature tests to continually refine configurations; for example, tuning search ranking, recommendation models, or pricing logic over time.
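For a fit validation test, the core question is whether the difference in a KPI between users who see the feature and users who don't is larger than chance would explain. One common way to check a conversion-style metric is a two-proportion z-test, sketched below with the standard library only. This is a generic statistical technique chosen for illustration, not necessarily the method Harness FME uses, and the numbers in the usage example are invented.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare conversion rates of control (a) and treatment (b).

    Returns (z, two_sided_p_value) using the pooled-variance normal approximation.
    """
    if min(n_a, n_b) <= 0:
        raise ValueError("both groups need at least one user")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Everyone (or no one) converted in both groups: no detectable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value: erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Invented numbers for illustration: 5.0% control vs 5.8% treatment conversion.
    z, p = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=580, n_b=10_000)
    print(f"z = {z:.2f}, two-sided p = {p:.4f}")
```

With a 1–5% audience, samples are small, so a non-significant result may simply mean the test has not collected enough data yet.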

Enterprise Feature Flags: Best Practices for Sustainable Feature Testing

Naming, ownership, and lifecycle policies keep feature flags an asset and an essential tool for your engineering team, rather than a source of technical debt.

Adopt these practices:

- Name flags with intent and an expiration horizon. Use descriptive patterns like checkout_v2_rollout_2026q1 and tag flags as “experiment,” “ops kill switch,” or “permanent config.” Temporary flags should have 30–90 day retirement targets.

- Assign clear ownership and document the business context. Every flag should have an owner, a purpose, and a link to the initiative or experiment it supports. When the experiment ends, the owner is accountable for the cleanup.

- Manage the entire feature flag lifecycle with pipelines. Use pipeline steps to move each flag through a standard set of stages (e.g. internal testing, pre-production, external beta, experimenting, ramping, 100% released, removed from code). Pipeline templates help ensure consistent feature testing, visibility across teams, and timely flag cleanup.

- Evaluate flags locally for performance. Use SDKs that evaluate rules in memory with typed configurations and caching, so each flag check is sub-millisecond and doesn’t depend on a remote call. This keeps feature testing safe even at billions of evaluations per day.

- Target users with rich attributes and percentage controls. Roll out by segments (customer tier, geography, device type, beta cohort) with granular percentage ramps instead of flipping everything at once.

- Wire guardrails to real business KPIs, not just system metrics. Error rates are necessary but not sufficient. Great feature testing also measures how the feature affects conversion, retention, and revenue.
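The naming, ownership, and retirement practices above can be enforced with a simple lint that runs in a pipeline step. The naming regex, the `Flag` record, and the choice of 90 days (the upper end of the 30–90 day target) are assumptions made for this sketch; adapt them to your own conventions.

```python
import re
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumed convention: <words>_<year>q<quarter>, e.g. checkout_v2_rollout_2026q1
NAME_PATTERN = re.compile(r"^[a-z0-9]+(?:_[a-z0-9]+)*_\d{4}q[1-4]$")
TEMPORARY_TAGS = {"experiment"}  # "ops kill switch" and "permanent config" are long-lived
MAX_TEMPORARY_AGE = timedelta(days=90)  # upper end of the 30-90 day retirement target


@dataclass(frozen=True)
class Flag:
    name: str
    tag: str  # "experiment", "ops kill switch", or "permanent config"
    owner: Optional[str]
    created: date


def lint_flag(flag: Flag, today: date) -> list[str]:
    """Return a list of policy problems; an empty list means the flag is compliant."""
    problems = []
    if flag.tag in TEMPORARY_TAGS and not NAME_PATTERN.match(flag.name):
        problems.append("temporary flag name lacks an expiration horizon")
    if not flag.owner:
        problems.append("no owner assigned")
    if flag.tag in TEMPORARY_TAGS and today - flag.created > MAX_TEMPORARY_AGE:
        problems.append("past the 90-day retirement target; schedule removal")
    return problems


if __name__ == "__main__":
    today = date(2026, 6, 1)
    for flag in [
        Flag("checkout_v2_rollout_2026q1", "experiment", "payments-team", date(2026, 1, 10)),
        Flag("new_checkout", "experiment", None, date(2026, 5, 20)),
        Flag("db_failover", "ops kill switch", "sre-team", date(2024, 1, 1)),
    ]:
        print(flag.name, lint_flag(flag, today) or "ok")
```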

Tools like Harness FME help enforce these policies with lifecycle management, analytics, and governance built in.
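Local evaluation and percentage targeting from the list above can be illustrated together: rules live in memory, and a stable hash of the flag name and user ID decides whether a user falls inside the rollout percentage. Hashing with SHA-256 into 10,000 buckets is an assumption for this sketch, not a description of how any particular SDK buckets users. A useful property of deterministic bucketing is that raising the percentage from 5% to 25% keeps the original 5% of users enabled.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Hypothetical targeting rule, evaluated entirely in memory."""
    flag: str
    percentage: float  # 0-100: share of eligible users who get the feature
    allowed_tiers: frozenset = frozenset()  # empty means every tier is eligible
    allowed_countries: frozenset = frozenset()  # empty means every country


def bucket(flag: str, user_id: str) -> float:
    """Stable bucket in [0.00, 99.99]: same flag and user always map to the same value."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    # 10,000 buckets give 0.01% granularity for percentage ramps.
    return (int.from_bytes(digest[:8], "big") % 10_000) / 100


def is_enabled(rule: Rule, user_id: str, attrs: dict[str, str]) -> bool:
    # Attribute targeting first: users outside the segment never see the feature.
    if rule.allowed_tiers and attrs.get("tier") not in rule.allowed_tiers:
        return False
    if rule.allowed_countries and attrs.get("country") not in rule.allowed_countries:
        return False
    # Percentage ramp: no network call, just a hash and a comparison.
    return bucket(rule.flag, user_id) < rule.percentage


if __name__ == "__main__":
    rule = Rule("checkout_v2_rollout_2026q1", percentage=5, allowed_tiers=frozenset({"beta"}))
    print(is_enabled(rule, "user-42", {"tier": "free"}))  # False: tier not targeted
```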

Progressive Delivery with AI Verification and Safe Rollbacks

Progressive delivery is the natural evolution of continuous delivery: instead of shipping a change straight to 100% of users, you roll it out gradually while continuously evaluating its impact. Feature testing is how you operationalize progressive delivery day to day.

A typical progressive feature test might look like this:

- Stage 1: 1% of traffic. Validate that the feature works end-to-end and doesn't cause obvious errors or crashes.

- Stage 2: 5–10% of traffic. Watch performance metrics (latency, error rate) and basic user behavior (clicks, drop-offs).

- Stage 3: 25–50% of traffic. Evaluate deeper KPIs such as conversion, sign-ups, and revenue per session.

- Stage 4: 100% rollout. Once guardrails are stable and the feature's impact is positive, promote to full exposure and clean up any temporary flags.

AI-driven verification makes this sustainable. Instead of manually eyeballing dashboards, you reuse the same guardrails you defined earlier and let the platform detect when a feature test is outside your risk tolerance.

Harness CD can automatically pause or roll back using AI-assisted deployment verification and your chosen rollback strategy. Combined with Harness FME, that rollback can be as simple as deactivating the flag—no new deployment required.
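The staged rollout and flag-based rollback can be sketched as a loop over a ramp schedule. The two callbacks are hypothetical placeholders: `set_percentage` stands in for updating the flag's rollout percentage, and `guardrails_healthy` stands in for automated verification during each stage's soak period. Neither is a Harness API. The schedule uses the 1% → 5% → 25% → 50% → 100% ramp from the rollout/guardrail pattern.

```python
from typing import Callable

# Ramp schedule from the rollout/guardrail pattern: 1% -> 5% -> 25% -> 50% -> 100%.
RAMP = (1, 5, 25, 50, 100)


def progressive_rollout(
    set_percentage: Callable[[int], None],
    guardrails_healthy: Callable[[int], bool],
) -> int:
    """Step through RAMP; on a guardrail breach, deactivate the flag and stop.

    Returns the final exposure percentage: 100 on success, 0 after a rollback.
    """
    for pct in RAMP:
        set_percentage(pct)
        # In practice this is a soak period watched by automated verification;
        # here it is an injected callback so the sketch stays self-contained.
        if not guardrails_healthy(pct):
            set_percentage(0)  # rollback = turn the flag off, no redeploy
            return 0
    return 100


if __name__ == "__main__":
    exposure_log: list[int] = []
    # Simulated verification: guardrails break once 25% of traffic is exposed.
    final = progressive_rollout(exposure_log.append, lambda pct: pct < 25)
    print(exposure_log, final)  # [1, 5, 25, 0] 0
```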

Feature Testing Best Practices for DevOps Teams

To get consistent results from feature testing, treat it as a disciplined practice, not just “turning on flags in prod.” You’ll see the same theme in Google SRE's reliability testing guidance, where tests are treated as a first-class component of the software development lifecycle, essential to running reliable systems.

Anchor your testing practices on these principles: