Harness Blog

Featured Blogs

Engineering teams are generating more shippable code than ever before — and today, Harness is shipping five new capabilities designed to help teams release confidently. AI coding assistants lowered the barrier to writing software, and the volume of changes moving through delivery pipelines has grown accordingly. But the release process itself hasn't kept pace.

The evidence shows up in the data. In our 2026 State of DevOps Modernization Report, we surveyed 700 engineering teams about what AI-assisted development is actually doing to their delivery. The finding stands out: while 35% of the most active AI coding users are already releasing daily or more, those same teams have the highest rate of deployments needing remediation (22%) and the longest MTTR at 7.6 hours.

This is the velocity paradox: the faster teams can write code, the more pressure accumulates at the release, where the process hasn't changed nearly as much as the tooling that feeds it.

The AI Delivery Gap

What changed is well understood. For years, the bottleneck in software delivery was writing code. Developers couldn't produce changes fast enough to stress the release process. AI coding assistants changed that. Teams are now generating more change across more services, more frequently than before — but the tools for releasing that change are largely the same.

In the past, DevSecOps vendors built entire separate products to coordinate multi-team, multi-service releases. That made sense when CD pipelines were simpler. It doesn't make sense now. At AI speed, a separate tool means another context switch, another approval flow, and another human-in-the-loop at exactly the moment you need the system to move on its own.

The tools that help developers write code faster have created a delivery gap that only widens as adoption grows.

What Harness Is Shipping

Today Harness is releasing five capabilities, all natively integrated into Continuous Delivery. Together, they cover the full arc of a modern release: coordinating changes across teams and services, verifying health in real time, managing schema changes alongside code, and progressively controlling feature exposure.

Coordinate multi-team releases without the war room

Release Orchestration replaces the Slack threads, spreadsheets, and war-room calls that still coordinate most multi-team releases. Services and the teams supporting them move through shared orchestration logic with the same controls, gates, and sequence, so a release behaves like a system rather than a series of handoffs. And it all runs natively inside Harness Continuous Delivery rather than in a separate tool.

Know when to stop — automatically

AI-Powered Verification and Rollback connects to your existing observability stack, automatically identifies which signals matter for each release, and determines in real time whether a rollout should proceed, pause, or roll back. Most teams have rollback capability in theory. In practice it's an emergency procedure, not a routine one. Ancestry.com made it routine and saw a 50% reduction in overall production outages, with deployment-related incidents dropping significantly.
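To make the proceed / pause / roll back decision concrete, here is a minimal sketch. It assumes a simple ratio-threshold rule comparing a canary against a baseline. This is not Harness's AI-powered verification, which picks the relevant signals automatically. The two signals, the thresholds, and every name below are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    PAUSE = "pause"
    ROLL_BACK = "roll_back"


@dataclass
class Signals:
    """Aggregated health signals for one deployment (hypothetical fields)."""
    error_rate: float      # fraction of failed requests, 0.0-1.0
    p95_latency_ms: float  # 95th-percentile latency in milliseconds


def verify(baseline: Signals, canary: Signals,
           pause_ratio: float = 1.2, rollback_ratio: float = 2.0) -> Decision:
    """Decide how a rollout continues by comparing canary signals to the baseline.

    Each signal becomes a canary/baseline ratio and the worst ratio drives the
    decision. The thresholds are illustrative assumptions; a production system
    would also account for noise and sample size.
    """
    eps = 1e-9  # avoid division by zero when the baseline is perfectly clean
    ratios = [
        (canary.error_rate + eps) / (baseline.error_rate + eps),
        canary.p95_latency_ms / max(baseline.p95_latency_ms, eps),
    ]
    worst = max(ratios)
    if worst >= rollback_ratio:
        return Decision.ROLL_BACK
    if worst >= pause_ratio:
        return Decision.PAUSE
    return Decision.PROCEED
```

Because rollback is one branch of an ordinary function, it runs on every release rather than only as an emergency procedure.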

Ship code and schema changes together

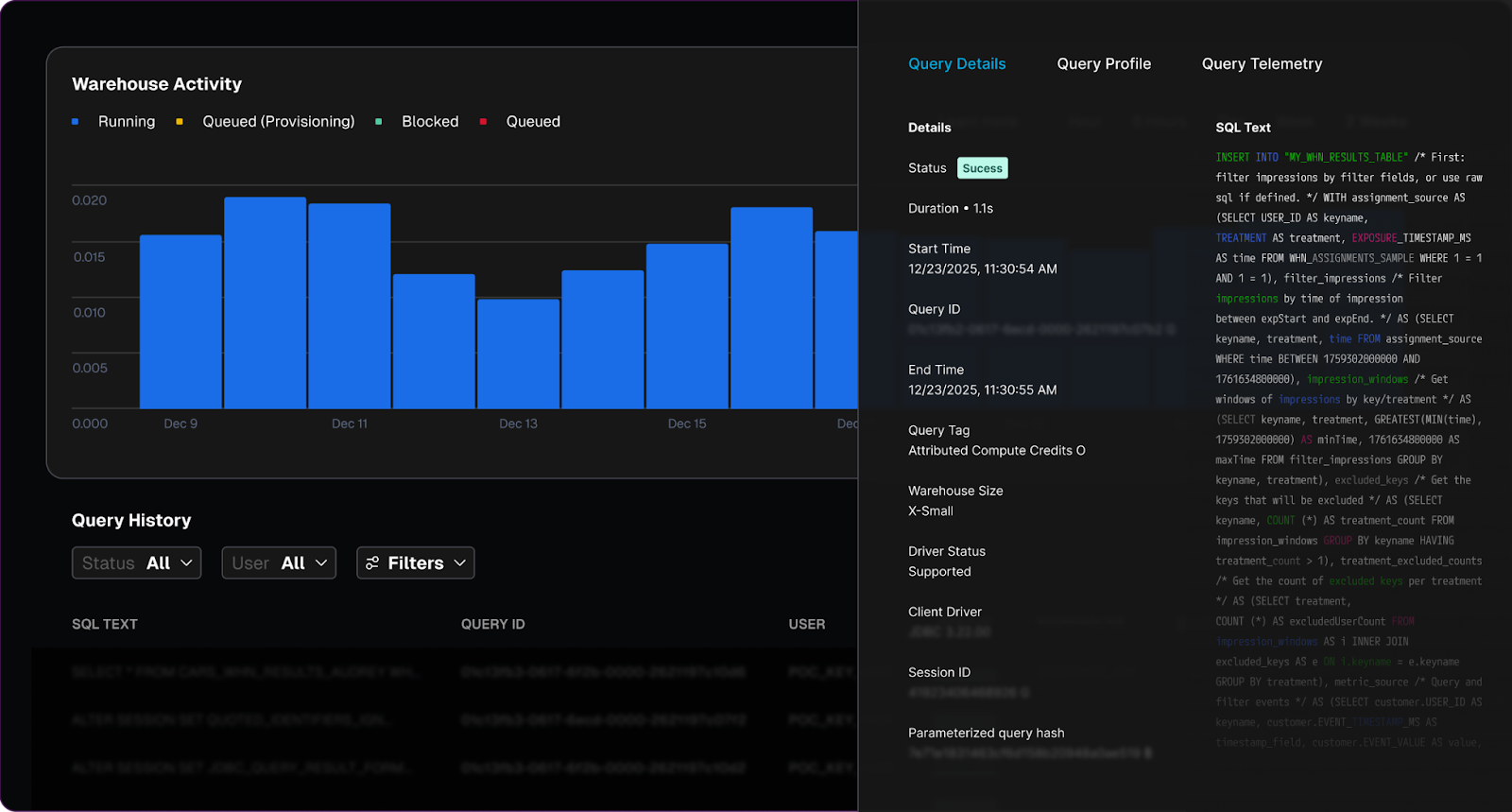

Database DevOps, now with Snowflake support, brings schema changes into the same pipeline as application code, so the two move together through the same controls with the same auditability. If a rollback is needed, the application and database schema can roll back together seamlessly. This matters especially for teams building AI applications on warehouse data, where schema changes are increasingly frequent and consequential.
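One common way to let schema and code roll back together is to ship every schema change as an up/down pair, versioned alongside the application release. The sketch below assumes that pattern. Python's built-in sqlite3 stands in for the database. The `Migration` type, the migrations themselves, and the release-to-schema mapping are all hypothetical; neither Snowflake nor Harness Database DevOps is involved.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class Migration:
    version: int
    up: str    # SQL applied when the release rolls forward
    down: str  # SQL that reverses `up` when the release rolls back


# Hypothetical migrations and the schema version each app release expects.
MIGRATIONS = [
    Migration(1, "CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)",
              "DROP TABLE orders"),
    Migration(2, "CREATE INDEX idx_orders_total ON orders (total)",
              "DROP INDEX idx_orders_total"),
]
RELEASE_SCHEMA = {"app-1.0": 1, "app-1.1": 2}


def current_version(conn: sqlite3.Connection) -> int:
    conn.execute("CREATE TABLE IF NOT EXISTS schema_version (v INTEGER)")
    row = conn.execute("SELECT MAX(v) FROM schema_version").fetchone()
    return row[0] or 0  # MAX over an empty table is NULL -> version 0


def migrate_to(conn: sqlite3.Connection, target: int) -> None:
    """Apply `up` scripts in order going forward, `down` scripts in reverse going back."""
    cur = current_version(conn)
    with conn:  # commits on success, rolls back uncommitted changes on error
        if target > cur:
            for m in MIGRATIONS:
                if cur < m.version <= target:
                    conn.execute(m.up)
                    conn.execute("INSERT INTO schema_version VALUES (?)", (m.version,))
        else:
            for m in reversed(MIGRATIONS):
                if target < m.version <= cur:
                    conn.execute(m.down)
                    conn.execute("DELETE FROM schema_version WHERE v = ?", (m.version,))


def deploy(conn: sqlite3.Connection, release: str) -> None:
    # The schema follows the application version, forward or backward.
    migrate_to(conn, RELEASE_SCHEMA[release])
```

Deploying `app-1.1` and then `app-1.0` drops the index but keeps the `orders` table. The schema ends up matching the code being rolled back to.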

Roll out features gradually, measure what actually happens

Improved pipeline and policy support for feature flags and experimentation enables teams to deploy safely and release progressively to the right users, even as AI-generated code increases the number of releases. They can quickly measure impact on technical and business metrics, and stop or roll back when results are off track. All of this happens within the same Harness user interface they already use for CI/CD.
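Progressive exposure is often implemented by hashing a stable user key into a bucket and comparing that bucket against a rollout percentage. Below is a minimal sketch, assuming SHA-256 bucketing over 10,000 buckets. It shows a generic technique, not necessarily how Harness FME assigns users, and `flag_key` and `user_id` are hypothetical names.

```python
import hashlib

BUCKETS = 10_000  # assumption: resolution of 0.01% per bucket


def bucket(flag_key: str, user_id: str) -> int:
    """Map (flag, user) to a stable bucket in [0, BUCKETS)."""
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % BUCKETS


def is_enabled(flag_key: str, user_id: str, rollout_percent: float) -> bool:
    """True if this user falls inside the current rollout percentage.

    The bucket is deterministic, so raising rollout_percent from 5 to 25 keeps
    the original 5% of users enabled and adds new ones. Setting it to 0 acts as
    a kill switch.
    """
    threshold = int(rollout_percent / 100 * BUCKETS)
    return bucket(flag_key, user_id) < threshold
```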

Warehouse-Native Feature Management and Experimentation lets teams test features and measure business impact directly with data warehouses like Snowflake and Redshift, without ETL pipelines or shadow infrastructure. This way they can keep PII and behavioral data inside governed environments for compliance and security.
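The warehouse-native idea is that experiment analysis runs as a query where the assignment and event data already live, rather than being exported through an ETL job. Below is a minimal sketch. It assumes two hypothetical tables, `assignments` and `purchases`, and uses sqlite3 as a stand-in for Snowflake or Redshift. The schema and SQL are illustrative, not Harness's implementation.

```python
import sqlite3

# The aggregation runs inside the database, so only per-variant summaries leave
# it, never row-level user or behavioral data. Table names are hypothetical.
CONVERSION_BY_VARIANT = """
SELECT a.variant,
       COUNT(*) AS users,
       SUM(CASE WHEN p.user_id IS NOT NULL THEN 1 ELSE 0 END) AS converters
FROM assignments AS a
LEFT JOIN (SELECT DISTINCT user_id FROM purchases) AS p
       ON p.user_id = a.user_id
WHERE a.experiment = ?
GROUP BY a.variant
ORDER BY a.variant
"""


def conversion_rates(conn: sqlite3.Connection, experiment: str) -> dict[str, float]:
    """Fraction of assigned users in each variant who made at least one purchase."""
    rows = conn.execute(CONVERSION_BY_VARIANT, (experiment,)).fetchall()
    return {variant: converters / users for variant, users, converters in rows}
```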

These aren't five separate features. They're one answer to one question: can we safely keep going at AI speed?

From Deployment to Verified Outcome

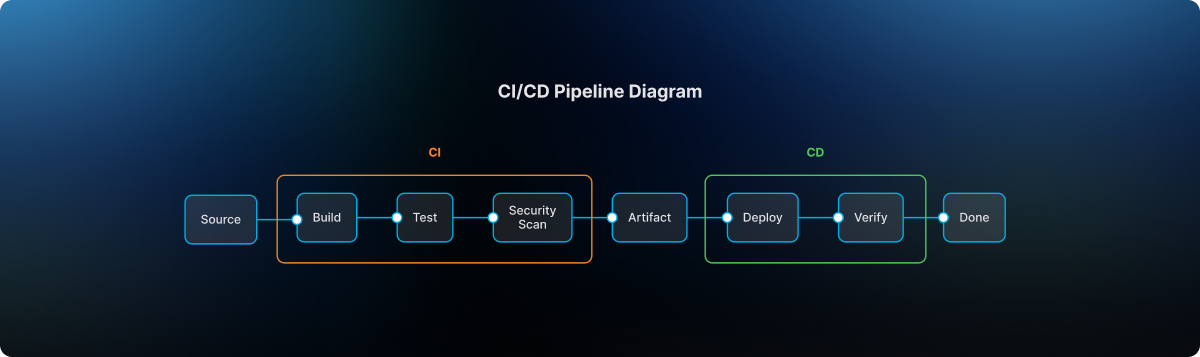

Traditional CD pipelines treat deployment as the finish line. The model Harness is building around treats it as one step in a longer sequence: application and database changes move through orchestrated pipelines together, verification checks real-time signals before a rollout continues, features are exposed progressively, and experiments measure actual business outcomes against governed data.

A release isn't complete when the pipeline finishes. It's complete when the system has confirmed the change is healthy, the exposure is intentional, and the outcome is understood.

That shift from deployment to verified outcome is what Harness customers say they need most. "AI has made it much easier to generate change, but that doesn't mean organizations are automatically better at releasing it," said Marc Pearce, Head of DevOps at Intelliflo. "Capabilities like these are exactly what teams need right now. The more you can standardize and automate that release motion, the more confidently you can scale."

Release Becomes a System, Not a Scramble

The real shift here is operational. The work of coordinating a release today depends heavily on human judgment, informal communication, and organizational heroics. That worked when the volume of change was lower. As AI development accelerates, it's becoming the bottleneck.

The release process needs to become more standardized, more repeatable, and less dependent on any individual's ability to hold it together at the moment of deployment. Automation doesn't just make releases faster. It makes them more consistent, and consistency is what makes scaling safe.

For Ancestry.com, implementing Harness helped them achieve 99.9% uptime by cutting outages in half while accelerating deployment velocity threefold.

At Speedway Motors, progressive delivery and 20-second rollbacks enabled a move from biweekly releases to multiple deployments per day, with enough confidence to run five to 10 feature experiments per sprint.

AI made writing code cheap. Releasing that code safely, at scale, is still the hard part.

Harness Release Orchestration, AI-Powered Verification and Rollback, Database DevOps, Warehouse-Native Feature Management and Experimentation, and Improved Pipeline and Policy Support for FME are available now. Learn more and book a demo.

On March 19th, the risks of running open execution pipelines — where what code runs in your CI/CD environment is largely uncontrolled — went from theoretical to catastrophic.

A threat actor known as TeamPCP compromised the GitHub Actions supply chain at a scale we haven't seen before (tracked as CVE-2026-33634, CVSS 9.4). They compromised Trivy, the most widely used vulnerability scanner in the cloud-native ecosystem, and turned it into a credential-harvesting tool that ran inside victims' own pipelines.

Between March 19 and March 24, 2026, organizations running affected tag-based GitHub Actions references were sending their AWS tokens, SSH keys, and Kubernetes secrets directly to the attacker. SANS Institute estimates over 10,000 CI/CD workflows were directly affected. According to multiple security research firms, the downstream exposure extends to tens of thousands of repositories and hundreds of thousands of accounts.

Five ecosystems. Five days. One stolen Personal Access Token.

This is a fundamental failure of the open execution pipeline model — where what runs in your pipeline is determined by external references to public repositories, mutable version tags, and third-party code that executes with full privileges. GitHub Actions is the most prominent implementation.

The alternative, governed execution pipelines, where what runs is controlled through policy gates, customer-owned infrastructure, scoped credentials, and immutable references, is the model we designed Harness around years ago, precisely because we saw this class of attack coming.

Part I: The Long Road to TeamPCP (2025–2026)

TeamPCP wasn't an anomaly; it was the inevitable conclusion of an eighteen-month escalation in CI/CD attack tactics.

1. The tj-actions Proof of Concept (March 2025)

CVE-2025-30066. Attackers compromised a PAT from an upstream dependency (reviewdog/action-setup) and force-pushed malicious code to every single version tag of tj-actions/changed-files. 23,000 repositories were exposed. The attack was later connected to a targeted campaign against Coinbase. CISA issued a formal advisory.

This proved that the industry's reliance on mutable tags (like @v2) was a serious structural vulnerability. According to Wiz, only 3.9% of repositories pin to immutable SHAs. The other 96% are trusting whoever owns the tag today.

2. The Shai-Hulud Worm (Sept–Nov 2025)

The first self-replicating worm in the CI/CD ecosystem. Shai-Hulud 2.0 backdoored 796 npm packages representing over 20 million weekly downloads — including packages from Zapier, PostHog, and Postman.

It used TruffleHog to harvest 800+ credential types, registered compromised machines as self-hosted GitHub runners named SHA1HULUD for persistent C2 over github.com, and built a distributed token-sharing network where compromised machines could replace each other's expired credentials.

PostHog's candid post-mortem revealed that attackers stole their GitHub bot's PAT via a pull_request_target workflow exploit, then used it to steal npm publishing tokens from CI runner secrets. Their admission that this kind of attack "simply wasn't something we'd prepared for" reflects the industry-wide gap between application security and CI/CD security maturity. CISA issued another formal advisory.

3. The Trivy Compromise (March 19, 2026)

TeamPCP went after the security tools themselves.

They exploited a misconfigured GitHub Actions workflow to steal a PAT from Aqua Security's aqua-bot service account. Aqua detected the breach and initiated credential rotation — but reporting suggests the rotation did not fully cut off attacker access. TeamPCP appears to have retained or regained access to Trivy's release infrastructure, enabling the March 19 attack weeks after initial detection.

On March 19, they force-pushed a malicious "Cloud Stealer" to 76 of 77 version tags in trivy-action and all 7 tags in setup-trivy. Simultaneously, they published an infected Trivy binary (v0.69.4) to GitHub Releases and Docker Hub. Every pipeline referencing those tags by name started executing the attacker's code on its next run. No visible change to the release page. No notification. No diff to review.

Part II: Inside the "Cloud Stealer" Tradecraft

TeamPCP's payload was purpose-built for CI/CD runner environments:

Memory Scraping. It read /proc/*/mem to extract decrypted secrets held in RAM. GitHub's log-masking can't hide what's in process memory.

Cloud Metadata Harvesting. It queried the AWS Instance Metadata Service (IMDS) at 169.254.169.254, pivoting from "build job" to full IAM role access in the cloud.

Filesystem Sweep. It searched over 50 specific paths — .env files, .aws/credentials, .kube/config, SSH keys, GPG keys, Docker configs, database connection strings, and cryptocurrency wallet keys.

Encrypted Exfiltration. All data was bundled into tpcp.tar.gz, encrypted with AES-256 and RSA-4096, and sent to typosquatted domains like scan.aquasecurtiy[.]org (note the "tiy"). These domains returned clean verdicts from threat intelligence feeds during the attack. As a fallback, the stealer created public GitHub repos named tpcp-docs under the victim's own account.

The malicious payload executed before the legitimate Trivy scan. Pipelines appeared to work normally. CrowdStrike noted: "To an operator reviewing workflow logs, the step appears to have completed successfully."

The Five-Day Cascade

Sysdig observed that the vendor-specific typosquat domains were a deliberate deception — an analyst reviewing CI/CD logs would see traffic to what appears to be the vendor's own domain.

It took Aqua five days to fully evict the attacker, during which TeamPCP pushed additional malicious Docker images (v0.69.5 and v0.69.6).

Part III: Why Open Execution Pipelines Break at Scale

Why did this work so well? Because GitHub Actions is the leading example of an open execution pipeline — where what code runs in your pipeline is determined by external references that anyone can modify.

This trust problem isn't new. Jenkins had a similar issue with plugins: third-party code ran with full process privileges. But Jenkins ran inside your firewall, so exfiltrating data required getting past your network perimeter.

GitHub Actions took the same open execution approach but moved execution to cloud-hosted runners with broad internet egress, making exfiltration trivially easy. TeamPCP's Cloud Stealer just needed to make an HTTPS POST to an external domain, which runners are designed to do freely.

Here are a few reasons why open execution pipelines break at scale:

Mutable Trust. When you use @v2, you are trusting a pointer, not a piece of code. Tags can be silently redirected by anyone with write access. TeamPCP rewrote 76 tags in a single operation. 96% of the ecosystem is exposed.

Flat Privileges. Third-party Actions run with the same permissions as your code. No sandbox. No permission isolation. This is why TeamPCP targeted security scanners — tools that by design have elevated access to your pipeline infrastructure. The attacker doesn't need to break in. The workflow invites them in.

Secret Sprawl. Secrets are typically injected into the runner's environment or process memory during job execution, where they remain accessible for the job's duration. TeamPCP's /proc/*/mem scraper didn't need any special privilege. It just needed to be running on the same machine.

Unbounded Credential Cascades. There is no architectural boundary that stops a credential stolen in one context from unlocking another. TeamPCP proved this definitively: Trivy → Checkmarx → LiteLLM → AI API keys across thousands of enterprises. One PAT, five ecosystems.

Part IV: Governed Execution Pipelines — Three Structural Walls

Harness CI/CD pipelines are built as governed execution pipelines — where what runs is controlled through customer-owned infrastructure, policy gates, scoped credentials, immutable references, and explicit trust boundaries. At its core is the Delegate — a lightweight worker process that runs inside your infrastructure (your VPC, your Kubernetes cluster), executes tasks locally, and communicates with the Harness control plane via outbound-only connections.

When we designed this architecture, we assumed the execution plane would become the primary target in the enterprise. If TeamPCP tried to attack a Harness-powered environment, they would hit three architectural walls.

Wall 1: The Airlock (Outbound-Only, Egress-Filtered Execution)

The Architecture.

The Delegate lives inside your VPC or cluster. It communicates with our SaaS control plane via outbound-only HTTPS/WSS. No inbound ports are opened.

The Defense.

You control the firewall. Allowlist app.harness.io and the specific endpoints your pipelines need, and deny everything else. TeamPCP's exfiltration to typosquat domains would fail at the network layer, not because of a detection rule, but because the path doesn't exist. The typosquat domains returned clean verdicts from threat intel feeds during the attack, which is why egress filtering by allowlist is more reliable than detection by reputation.
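Egress allowlisting is normally enforced in a firewall, proxy, or Kubernetes network policy rather than in application code. The decision rule itself is simple enough to sketch, though. The sketch assumes exact-hostname matching, and every allowlist entry except app.harness.io is a hypothetical placeholder. A typosquat such as scan.aquasecurtiy[.]org is denied because it is not listed, whatever its reputation score.

```python
from urllib.parse import urlsplit

# Exact hostnames the pipeline may reach; everything else is denied.
# Only app.harness.io comes from the text; the other entries are placeholders.
EGRESS_ALLOWLIST = frozenset({
    "app.harness.io",
    "registry.internal.example.com",
    "artifacts.internal.example.com",
})


def egress_allowed(url: str) -> bool:
    """Default-deny: allow a connection only if its host is explicitly listed.

    Matching is exact rather than by suffix or similarity, so lookalike domains
    and hosts that merely start with an allowed name cannot slip through.
    """
    host = (urlsplit(url).hostname or "").lower()
    return host in EGRESS_ALLOWLIST
```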

Wall 2: The Vault (Secret Isolation at the Source)

The Architecture.

Rather than bulk-injecting secrets as flat environment variables at job start, Harness can resolve secrets at runtime through your secret manager — HashiCorp Vault, AWS Secrets Manager, GCP Secret Manager, Azure Key Vault — via the Delegate, inside your network. Harness SaaS stores encrypted references and metadata, not plaintext secret values.

The Defense.

TeamPCP's Cloud Stealer worked because in an open execution pipeline, secrets are typically injected into the runner's process memory where they remain accessible for the job's duration. In a governed execution pipeline, this exposure is structurally reduced: secrets can be resolved from your controlled vault at the point they're needed, rather than broadcast as environment variables to every step in the pipeline.
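The difference between bulk injection and resolution at the point of need can be sketched as follows. `SecretResolver` is a hypothetical stand-in for a lookup against Vault or a cloud secret manager through the Delegate; it is not a Harness API. Each step declares the secret references it needs and receives only those. An unrelated step, such as a scanner, never holds them in its environment.

```python
from dataclasses import dataclass, field
from typing import Callable, Mapping

# Hypothetical resolver: takes a reference such as "vault://ci/npm-token" and
# returns the plaintext value from the customer's secret manager.
SecretResolver = Callable[[str], str]


@dataclass
class Step:
    name: str
    secret_refs: Mapping[str, str] = field(default_factory=dict)  # env var -> reference


def bulk_env(all_refs: Mapping[str, str], resolve: SecretResolver) -> dict[str, str]:
    """Open-execution style: every secret resolved up front and visible to every step."""
    return {var: resolve(ref) for var, ref in all_refs.items()}


def env_for_step(step: Step, resolve: SecretResolver) -> dict[str, str]:
    """Governed style: resolve only the secrets this step declares, just before it runs."""
    return {var: resolve(ref) for var, ref in step.secret_refs.items()}
```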

An important caveat: Vault-based resolution alone doesn't eliminate runtime exfiltration. Once a secret is resolved and passed to a step that legitimately needs it — say, an npm token during npm publish — that secret exists in the step's runtime. If malicious code is executing in that same context (for example, a tampered package.json that exfiltrates credentials during npm run test), the secret is exposed regardless of where it came from. This is why the three walls work as a system: Wall 2 reduces the surface of secret exposure, Wall 1 blocks the exfiltration path, and (as we'll see) Wall 3 limits the blast radius to the scoped environment. No single wall is sufficient on its own.

To further strengthen how pipelines use secrets, leverage ephemeral credentials — AWS STS temporary tokens, Vault dynamic secrets, or GCP short-lived service account tokens — that auto-expire after a defined window, often minutes. Even if TeamPCP’s memory scraper extracted an ephemeral credential, it likely would have expired before the attacker could pivot to the next target.
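The sketch below shows why a short lifetime limits what a scraped credential is worth. It assumes a hypothetical in-process issuer with a 15-minute default TTL. In practice the issuer would be AWS STS, Vault dynamic secrets, or GCP short-lived tokens, each with its own API and TTL limits, and none of those APIs are shown here. The `now` parameter is injectable so the expiry logic can be checked without waiting.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class EphemeralCredential:
    token: str
    expires_at: datetime

    def is_valid(self, now: datetime) -> bool:
        # The issuing service enforces this check, not the holder of the token.
        return now < self.expires_at


def issue(ttl: timedelta = timedelta(minutes=15),
          now: Optional[datetime] = None) -> EphemeralCredential:
    """Mint a random token that stops working after `ttl` (hypothetical issuer)."""
    now = now or datetime.now(timezone.utc)
    return EphemeralCredential(secrets.token_urlsafe(32), now + ttl)
```

A token scraped from memory five minutes into a job still works. The same token replayed hours later, when the attacker tries to pivot, does not.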

Wall 3: The Dead End (Environment-Scoped Isolation)

The Architecture.

Harness supports environment-scoped delegates as a core architecture pattern. Your "Dev" scanner delegate runs in a different cluster, with different network boundaries and different credentials, than your "Prod" deployment delegate.

The Defense.

The credential cascade that defined TeamPCP hits a dead end. Stolen Dev credentials cannot reach Production publishing gates or AI API keys, because those credentials live in a different vault, resolved by a different delegate, in a different network segment. If the Trivy compromise only yielded credentials scoped to a dev environment, the attack stops at phase one.
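The dead-end property can be expressed as a scoping rule: each delegate belongs to exactly one environment and can only resolve secrets from that environment's store. Below is a minimal sketch with hypothetical delegate names, environments, and secret values. In a real deployment the boundary comes from separate clusters, network segments, and vault policies, not from a lookup table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegate:
    name: str
    environment: str  # e.g. "dev" or "prod"


# Hypothetical per-environment secret stores with placeholder values.
SECRET_STORES: dict[str, dict[str, str]] = {
    "dev": {"scanner-token": "dev-scan-123"},
    "prod": {"npm-publish-token": "prod-npm-456", "llm-api-key": "prod-llm-789"},
}


class ScopeViolation(PermissionError):
    """Raised when a delegate asks for secrets outside its environment."""


def resolve(delegate: Delegate, environment: str, key: str) -> str:
    """Return a secret only if the delegate is scoped to the requested environment."""
    if delegate.environment != environment:
        raise ScopeViolation(
            f"{delegate.name} ({delegate.environment}) cannot read {environment} secrets")
    return SECRET_STORES[environment][key]
```

A compromised dev scanner can read `scanner-token` and nothing else. The production publishing token and AI API key are out of its reach.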

Beyond the walls, governed execution pipelines provide additional structural controls:

- No default marketplace dependency: In GitHub Actions, the primary building block is a reference to an external Action in a public repository. In Harness, the primary building blocks are native pipeline steps that don't reference external Git repos. Harness does support running GitHub Actions as steps for teams that need compatibility, but external Actions are an optional path — not the default architecture.

- Reduced tooling and attack surface: Customers can use minimal delegate images with a significantly reduced binary footprint and least-privilege Kubernetes roles to restrict available tooling. TeamPCP's kubectl get secrets --all-namespaces would require tooling and permissions that a properly hardened delegate environment wouldn't provide.

The Comparison

What TeamPCP Actually Exploited — Mapped to Harness Defenses

Part V: The Nuance — Governed Doesn't Mean Automatically Safe

Architecture is a foundation, not a guarantee. Governed execution pipelines are materially safer against this class of attack, but you can still create avoidable risk by running unvetted containers on delegates, skipping egress filtering, using the same delegate across dev and prod, granting overly broad cloud access, exposing excessive secrets to jobs that don't need them, or using long-lived static credentials when ephemeral alternatives exist.

I am not claiming that Harness is safe and GitHub Actions is unsafe. That would be too simplistic.

What I am claiming is that governed execution pipelines — where what runs is controlled through policy gates, customer-owned infrastructure, scoped credentials, and immutable references — are a materially safer foundation than open execution pipelines. We designed Harness as our implementation of a governed execution pipeline. But architecture is a starting point — you still have to operate it well.

Part VI: The Strategic Bottom Line — From Open to Governed

As we enter the era of Agentic AI — where AI is generating pipelines, suggesting dependencies, and submitting pull requests at machine speed — we can no longer rely on human review to catch a malicious tag in an AI-generated PR.

But there's a more fundamental shift: AI agents will become the primary actors inside CI/CD pipelines. Not just generating code — autonomously executing tasks, selecting dependencies, making deployment decisions, remediating incidents.

Now imagine an AI agent in an open execution pipeline — downloaded from a public marketplace, referenced by a mutable tag, executing with full privileges, making dynamic runtime decisions you didn't define. It has access to your secrets, your cloud credentials, and your deployment infrastructure. Unlike a static script, an agent makes decisions at runtime — fetching resources, calling APIs, modifying files.

If TeamPCP showed us what happens when a static scanner is compromised, imagine what happens when an autonomous AI agent is compromised — or simply makes a decision you didn't anticipate.

This is why governed execution pipelines aren't just a security improvement — they're an architectural prerequisite for the AI era. In a governed pipeline, even an AI agent operates within structural boundaries: it runs on infrastructure you control, accesses only scoped secrets, has restricted egress, and its actions are audited. The agent may be autonomous, but the pipeline constrains what it can reach.

The questions every engineering leader should be asking:

- Is my pipeline open or governed? Do I control what code executes, or is it determined by external references I don't audit?

- Where does execution happen? In infrastructure I control, or in an environment assembled from public dependencies?

- Who controls the network boundary? My security team, or the maintainer of a third-party Action?

- Are secrets sitting in runner memory or safely in my Vault?

- What stops a credential cascade from crossing environment boundaries?

- When AI agents start running autonomously in my pipelines, what structural boundaries constrain them?

What You Should Do Right Now

If you use Trivy, Checkmarx, or LiteLLM:

- Assume compromise if you ran any of these tools between March 19–25. Rotate all credentials accessible to affected CI/CD runners. Check your GitHub org for repos named tpcp-docs — their presence indicates successful exfiltration.

- Block scan.aquasecurtiy[.]org, checkmarx[.]zone, and models.litellm[.]cloud at the network level.

- Update to safe versions: check with the providers of each impacted package and update the scanner and actions.
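The `tpcp-docs` check can be scripted against the GitHub REST API's "list organization repositories" endpoint (`GET /orgs/{org}/repos`). The sketch below uses only the standard library. It assumes a token with read access to the org's repositories; the org name and token handling are up to you. Because the stealer created these repos under the victim's own account, personal accounts whose tokens were on affected runners deserve the same check.

```python
import json
import urllib.request

INDICATOR = "tpcp-docs"


def list_org_repo_names(org: str, token: str) -> list[str]:
    """Page through GET /orgs/{org}/repos and return every repository name."""
    names, page = [], 1
    while True:
        req = urllib.request.Request(
            f"https://api.github.com/orgs/{org}/repos?type=all&per_page=100&page={page}",
            headers={
                "Accept": "application/vnd.github+json",
                "Authorization": f"Bearer {token}",
            },
        )
        with urllib.request.urlopen(req) as resp:
            batch = json.load(resp)
        if not batch:  # an empty page means we have seen every repository
            return names
        names.extend(repo["name"] for repo in batch)
        page += 1


def suspicious(names: list[str]) -> list[str]:
    """Repository names matching the TeamPCP fallback-exfiltration indicator."""
    return [n for n in names if n.lower() == INDICATOR]


# Usage (requires network access and a token):
#   import os
#   print(suspicious(list_org_repo_names("your-org", os.environ["GITHUB_TOKEN"])))
```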

If you use GitHub Actions:

- Pin every Action to an immutable commit SHA. Today.

- Commit SHAs alone are not sufficient. A SHA pins a specific commit, but it does not guarantee that the commit comes from the repository you intended.

- Add provenance verification: To close the gap left by SHA pinning alone, verify the Action’s source and publisher, restrict which external Actions are allowed, and prefer artifacts with verifiable provenance or attestations.

- Audit workflows for pull_request_target triggers.

- Enforce Least Privilege on GitHub Tokens: Audit every Personal Access Token and GitHub App permission. If it’s not scoped to the specific repository and the specific task (e.g., "contents: read"), it is a liability.

- Monitor egress for unexpected destinations: Domain reputation alone is insufficient.
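Two of the checks above, SHA pinning and `pull_request_target` triggers, can be approximated with a small scanner over `.github/workflows/*.yml`. Below is a minimal sketch. It uses line-based regex matching rather than a full YAML parse, so unusual formatting can slip past it. Local actions (`./...`) and `docker://` references are skipped. It also cannot tell which repository a SHA belongs to, which is the gap the provenance bullet describes.

```python
import re
from pathlib import Path

# Matches `uses: owner/repo@ref` (optionally after "- " and optionally quoted).
USES = re.compile(r"^\s*-?\s*uses:\s*['\"]?([^'\"\s#]+)")
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")
PR_TARGET = re.compile(r"\bpull_request_target\b")


def audit_workflow(text: str) -> list[str]:
    """Return human-readable findings for one workflow file's contents."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        m = USES.match(line)
        if m:
            ref = m.group(1)
            if ref.startswith("./") or ref.startswith("docker://"):
                continue  # local actions and container images are out of scope here
            _, _, version = ref.partition("@")
            if not FULL_SHA.match(version):
                findings.append(f"line {lineno}: {ref} is not pinned to a full commit SHA")
        if PR_TARGET.search(line.split("#", 1)[0]):  # ignore YAML comments
            findings.append(f"line {lineno}: uses pull_request_target trigger")
    return findings


def audit_repo(root: str = ".") -> dict[str, list[str]]:
    """Audit every .yml/.yaml workflow under <root>/.github/workflows."""
    results = {}
    for path in sorted(Path(root, ".github", "workflows").glob("*.y*ml")):
        issues = audit_workflow(path.read_text())
        if issues:
            results[str(path)] = issues
    return results
```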

For the longer term:

- Evaluate whether your CI/CD pipelines are open or governed. If production credentials flow through your pipelines, you need a governed execution pipeline where you control the infrastructure, the network boundary, the secret resolution, and the audit trail.

- Establish policies: Implement platform-wide automated governance to enforce SHA pinning and least-privilege token usage programmatically through systems like OPA.

The Responsibility We Share

I'm writing this as the CEO of a company that competes with GitHub in the CI/CD space. I want to be transparent about that.

But I'm also writing this as someone who has spent two decades building infrastructure software and who saw this threat model coming. When we designed Harness, the open execution pipeline model had already evolved from Jenkins plugins to GitHub Actions — each generation making it easier for third-party code to run with full privileges and, by moving execution further from the customer's network perimeter, making exfiltration easier. We deliberately chose to build governed execution pipelines instead.

The TeamPCP campaign didn't teach us anything new about the risk. What it did was make the difference between open and governed execution impossible for the rest of the industry to ignore.

Open source security tools are invaluable. The developers and companies who build them — including Aqua Security and Checkmarx — are doing essential work. The problem isn't the tools. The problem is running them inside open execution pipelines where third-party code has full privileges, secrets sit in memory, and exfiltration faces no structural barrier.

If you want to explore how the delegate architecture works in practice, we're here to show you. But more importantly, regardless of what platform you choose, please take these structural questions seriously. The next TeamPCP is already studying the credential graph.

Over the last few years, something fundamental has changed in software development.

If the early 2020s were about adopting AI coding assistants, the next phase is about what happens after those tools accelerate development. Teams are producing code faster than ever. But what I’m hearing from engineering leaders is a different question:

What’s going to break next?

That question is exactly what led us to commission our latest research, State of DevOps Modernization 2026. The results reveal a pattern that many practitioners already sense intuitively: faster code generation is exposing weaknesses across the rest of the software delivery lifecycle.

In other words, AI is multiplying development velocity, but it’s also revealing the limits of the systems we built to ship that code safely.

The Emerging “Velocity Paradox”

One of the most striking findings in the research is what we call the AI Velocity Paradox, a term we coined in our 2025 State of Software Engineering Report.

Teams using AI coding tools most heavily are shipping code significantly faster. In fact, 45% of developers who use AI coding tools multiple times per day deploy to production daily or faster, compared to 32% of daily users and just 15% of weekly users.

At first glance, that sounds like a huge success story. Faster iteration cycles are exactly what modern software teams want.

But the data tells a more complicated story.

Among those same heavy AI users:

- 69% report frequent deployment problems when AI-generated code is involved

- Incident recovery times average 7.6 hours, longer than for teams using AI less frequently

- 47% say manual downstream work (QA, validation, remediation) has become more problematic

What this tells me is simple: AI is speeding up the front of the delivery pipeline, but the rest of the system isn’t scaling with it. It’s like running trains faster than the tracks were built for. Friction builds, the ride gets bumpy, and we could be on the edge of disaster.

The result is downstream friction: more incidents, more manual work, and more operational stress on engineering teams.

Why the Delivery System Is Straining

To understand why this is happening, you have to step back and look at how most DevOps systems actually evolved.

Over the past 15 years, delivery pipelines have grown incrementally. Teams added tools to solve specific problems: CI servers, artifact repositories, security scanners, deployment automation, and feature management. Each step made sense at the time.

But the overall system was rarely designed as a coherent whole.

In many organizations today, quality gates, verification steps, and incident recovery still rely heavily on human coordination and manual work. In fact, 77% say teams often have to wait on other teams for routine delivery tasks.

That model worked when release cycles were slower.

It doesn’t work as well when AI dramatically increases the number of code changes moving through the system.

Think of it this way: If AI doubles the number of changes engineers can produce, your pipelines must either:

- cut the risk of each change in half, or

- detect and resolve failures much faster.
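The either/or above can be made precise with a deliberately simple model; this is an assumption for illustration, not a finding from the report. Suppose N changes ship per period, each needs remediation with probability p, and each failure takes T hours to recover. Expected recovery work is then the product of the three, and doubling N leaves it unchanged only if p or T is halved:

```latex
\mathbb{E}[\text{recovery hours}] = N \, p \, T,
\qquad
(2N)\left(\tfrac{p}{2}\right)T \;=\; (2N)\,p\left(\tfrac{T}{2}\right) \;=\; N\,p\,T
```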

Otherwise, the system begins to crack under pressure. The burden often falls directly on developers to help deploy services safely, certify compliance checks, and keep rollouts continuously progressing. When failures happen, they have to jump in and remediate at whatever hour.

These manual tasks, naturally, inhibit innovation and cause developer burnout. That’s exactly what the research shows.

Across respondents, developers report spending roughly 36% of their time on repetitive manual tasks like chasing approvals, rerunning failed jobs, or copy-pasting configuration.

As delivery speed increases, the operational load increases. That burden often falls directly on developers.

What Organizations Should Do Next

The good news is that this problem isn’t mysterious. It’s a systems problem. And systems problems can be solved.

From our experience working with engineering organizations, we've identified a few principles that consistently help teams scale AI-driven development safely.

1. Standardize delivery foundations

When every team builds pipelines differently, scaling delivery becomes difficult.

Standardized templates (or “golden paths”) make it easier to deploy services safely and consistently. They also dramatically reduce the cognitive load for developers.

2. Automate quality and security checks earlier

Speed only works when feedback is fast.

Automating security, compliance, and quality checks earlier in the lifecycle ensures problems are caught before they reach production. That keeps pipelines moving without sacrificing safety.

3. Build guardrails into the release process

Feature flags, automated rollbacks, and progressive rollouts allow teams to decouple deployment from release. That flexibility reduces the blast radius of new changes and makes experimentation safer.

It also allows teams to move faster without increasing production risk.

4. Prioritize measurement, not just automation

Automation alone doesn’t solve the problem. What matters is creating a feedback loop: deploy → observe → measure → iterate.

When teams can measure the real-world impact of changes, they can learn faster and improve continuously.

The Next Phase of AI in Software Delivery

AI is already changing how software gets written. The next challenge is changing how software gets delivered.

Coding assistants have increased development teams' capacity to innovate. But to capture the full benefit, the delivery systems behind them must evolve as well.

The organizations that succeed in this new environment will be the ones that treat software delivery as a coherent system, not just a collection of tools.

Because the real goal isn’t just writing code faster. It’s learning faster, delivering safer, and turning engineering velocity into better outcomes for the business.

And that requires modernizing the entire pipeline, not just the part where code is written.

Latest Blogs

The pipeline that never reached production

How template-driven CD prevents governance drift

Modern CI/CD platforms allow engineering teams to ship software faster than ever before.

Pipelines complete in minutes. Deployments that once required carefully coordinated release windows now happen dozens of times per day. Platform engineering teams have succeeded in giving developers unprecedented autonomy, enabling them to build, test, and deploy their services with remarkable speed.

Yet in highly regulated environments, especially in the financial services sector, speed alone cannot be the objective.

Control matters. Consistency matters. And perhaps most importantly, auditability matters.

In these environments, the real measure of a successful delivery platform is not only how quickly code moves through a pipeline. It is also how reliably the platform ensures that production changes are controlled, traceable, and compliant with governance standards.

Sometimes the most successful deployment pipeline is the one that never reaches production.

This is the story of how one enterprise platform team redesigned their delivery architecture to ensure that production pipelines remained governed, auditable, and secure by design.

The subtle risk in fast CI/CD platforms

A large financial institution had successfully adopted Harness for CI and CD across multiple engineering teams.

From a delivery perspective, the transformation looked extremely successful. Developers were productive, teams could create pipelines quickly, and deployments flowed smoothly through various non-production environments used for integration testing and validation. From the outside, the platform appeared healthy and efficient.

But during a platform architecture review, a deceptively simple question surfaced:

“What prevents someone from modifying a production pipeline directly?”

There had been no incidents. No production outages had been traced back to pipeline misconfiguration. No alarms had been raised by security or audit teams.

However, when the platform engineers examined the system more closely, they realized something concerning.

Production pipelines could still be modified manually.

In practice, this meant governance relied largely on process discipline rather than platform enforcement. Engineers were expected to follow the right process, but the platform itself did not technically prevent deviations. In regulated industries, that is a risky place to be.

The architecture shift: separate authoring from execution

The platform team at the financial institution decided to rethink the delivery architecture entirely. Their redesign was guided by a simple but powerful principle:

Pipelines should be authored in a non-prod organization and executed in the production organization. If compliance required even stronger segregation, the two could be split into separate accounts.

Authoring and experimentation should happen in a safe environment. Execution should occur in a controlled one.

Instead of creating additional tenants or separate accounts, the platform team decided to go with a dedicated non-prod organization within the same Harness account. This organization effectively acted as a staging environment for pipeline design and validation.

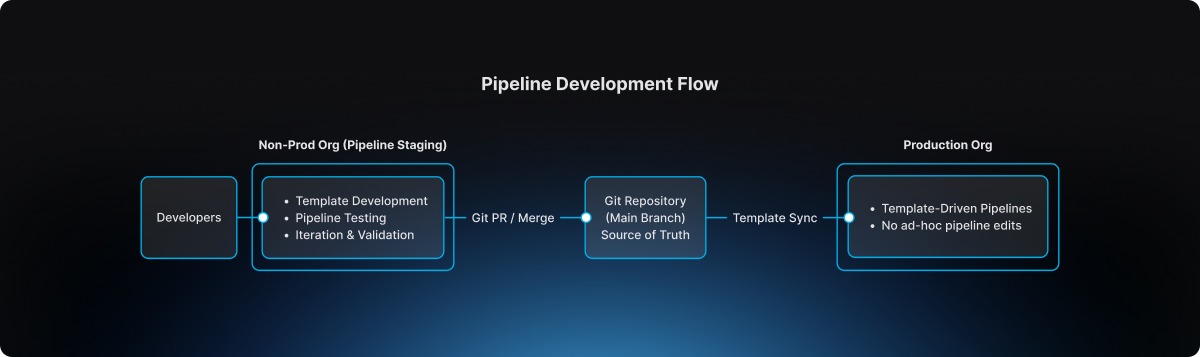

Architecture diagram

This separation introduced a clear lifecycle for pipeline evolution.

The non-prod organization became the staging environment where pipeline templates could be developed, tested, and refined. Engineers could experiment safely without impacting production governance.

The production organization, by contrast, became an execution environment. Pipelines there were not designed or modified freely. They were consumed from approved templates.

Guardrail #1: production pipelines must use templates

The first guardrail introduced by the platform team was straightforward but powerful.

Production pipelines must always be created from account-level templates.

Handcrafted pipelines were no longer allowed. Project-level template shortcuts were also prohibited, ensuring that governance could not be bypassed unintentionally.

This rule was enforced directly through OPA policies in Harness.

Example policy

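```rego
package harness.cicd.pipeline

# Reject handcrafted pipelines that were not created from any template.
deny[msg] {
    not input.pipeline.template
    msg := "pipeline can only be created from account level pipeline template"
}

# Reject pipelines created from a project- or org-level template.
deny[msg] {
    template_scope := input.pipeline.template.scope
    template_scope != "account"
    msg := "pipeline can only be created from account level pipeline template"
}
```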

This policy ensured that production pipelines were standardized by design. Engineers could not create or modify arbitrary pipelines inside the production organization. Instead, they were required to build pipelines by selecting from approved templates that had been validated by the platform team.

As a result, production pipelines ceased to be ad-hoc configurations. They became governed platform artifacts.

Guardrail #2: governance starts in the non-prod organization

Blocking unsafe pipelines in production was only part of the solution.

The platform team realized it would be even more effective to prevent non-compliant pipelines earlier in the lifecycle.

To accomplish this, they implemented structural guardrails within the non-prod organization used for pipeline staging. Templates could not even be saved unless they satisfied specific structural requirements defined by policy.

For example, templates were required to include mandatory stages, compliance checkpoints, and evidence collection steps necessary for audit traceability.

Example policy

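```rego
package harness.ci_cd

# Every template must declare a non-empty list of stages.
# (Missing, null, or empty stages all leave has_stages undefined or false.)
deny[msg] {
    template := input.templates[_]
    not has_stages(template)
    msg := "Template must have necessary stages defined"
}

# Every template must include the mandatory Evidence_Collection stage.
deny[msg] {
    template := input.templates[_]
    not has_evidence_collection(template)
    msg := "Template must include the Evidence_Collection stage"
}

has_stages(template) {
    count(template.stages) > 0
}

# Assumes each stage follows the pipeline YAML shape: stages[_].stage.identifier.
has_evidence_collection(template) {
    template.stages[_].stage.identifier == "Evidence_Collection"
}
```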

These guardrails ensured that every template contained required compliance stages such as Evidence Collection, making it impossible for teams to bypass mandatory governance steps during pipeline design.

Governance, in other words, became embedded directly into the pipeline architecture itself.

The source of truth: Git

The next question the platform team addressed was where the canonical version of pipeline templates should reside.

The answer was clear: Git must become the source of truth.

Every template intended for production usage lived inside a repository where the main branch represented the official release line.

Direct pushes to the main branch were blocked. All changes required pull requests, and pull requests themselves were subject to approval workflows that mirrored enterprise change management practices.

Governance flow


This model introduced peer review, immutable change history, and a clear traceability chain connecting pipeline changes to formal change management records.

For auditors and platform leaders alike, this was a significant improvement.

The promotion workflow

Once governance mechanisms were in place, the promotion workflow itself became predictable and repeatable.

Engineers first authored and validated templates within the non-prod organization used for pipeline staging. There they could test pipelines using real deployments in controlled non-production environments.

From there, the delivery flow followed a familiar sequence.

After validation, the template definition was committed to Git through a branch and promoted through a pull request. Required approvals ensured that platform engineers, security teams, and change management authorities could review the change before it reached the release line.
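The approval gate boils down to a simple rule: a template change merges only when every required group has signed off. This Python sketch uses hypothetical group names and assumes the pull request's approvals arrive as (reviewer, group) pairs. The rule that authors cannot approve their own change is also an assumption. A real setup would rely on your Git provider's branch-protection and code-owner features rather than custom code.

```python
# Hypothetical groups that must each approve a template change
# before it can be merged into main.
REQUIRED_GROUPS = {"platform-engineering", "security", "change-management"}


def missing_approvals(approvals, required_groups=REQUIRED_GROUPS):
    """Return the required groups that have not yet approved.

    approvals: iterable of (reviewer, group) pairs for the pull request.
    """
    approved_groups = {group for _reviewer, group in approvals}
    return required_groups - approved_groups


def can_merge(approvals, author, required_groups=REQUIRED_GROUPS):
    # Assumed rule: approvals by the PR author do not count.
    independent = [(r, g) for r, g in approvals if r != author]
    return not missing_approvals(independent, required_groups)
```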

Once merged into main, the approved template became available for pipelines running in the production organization. Platform administrators ensured that naming conventions and version identifiers remained consistent so that teams consuming the template could easily track its evolution.

Finally, product teams created their production pipelines simply by selecting the approved template. Any attempt to bypass the template mechanism was automatically rejected by policy enforcement.

The day the model proved its value

Several months after the new architecture had been implemented, an engineer attempted to modify a deployment pipeline directly inside the production organization.

Under the previous architecture, that change would have succeeded immediately.

But now the platform rejected it. The pipeline violated the OPA rule because it was not created from an approved account-level template.

Instead of modifying the pipeline directly, the engineer followed the intended process: updating the template within the non-prod organization, submitting a pull request, obtaining the necessary approvals, merging the change to Git main, and then consuming the updated template in production.

The system had behaved exactly as intended. It prevented uncontrolled change in production.

Why this model works

The architecture introduced by the large financial institution delivered several key guarantees.

Production pipelines are standardized because they originate only from platform-approved templates. Governance is preserved because Git main serves as the official release line for pipeline definitions. Auditability improves dramatically because every pipeline change can be traced back to a pull request and associated change management approval. Finally, platform administrators retain the ability to control how templates evolve and how they are consumed in production environments.

The lesson for platform teams

Pipelines are often treated as simple automation scripts.

In reality, they represent critical production infrastructure.

They define how code moves through the delivery system, how security scans are executed, how compliance evidence is collected, and ultimately how deployments reach production environments. If pipeline creation is uncontrolled, the entire delivery system becomes fragile.

The financial institution solved this problem with a remarkably simple model. Pipelines are built in the non-prod staging organization. Templates are promoted through Git governance workflows. Production pipelines consume those approved templates.

Nothing more. Nothing less.

Final takeaway

Modern CI/CD platforms have dramatically accelerated the speed of software delivery.

But in regulated environments, the true achievement lies elsewhere. It lies in building a platform where developers move quickly, security remains embedded within the delivery workflow, governance is enforced automatically, and production environments remain protected from uncontrolled change.

That is not just CI/CD. That is platform engineering done right.

Introducing Zero Trust Architecture for Software Delivery

For the world’s largest financial institutions, such as Citi and National Australia Bank, shipping code fast is just part of the job. But at that scale, speed is nothing without a rock-solid security foundation. It’s the non-negotiable starting point for every release.

Most Harness users believe they are fully covered by our fine-grained Role-Based Access Control (RBAC) and Open Policy Agent (OPA). These are critical layers, but they share a common assumption: they trust the user or the process once the initial criteria are met. If you let someone control and execute a shell script, you’ve trusted them to a great extent.

But what happens when the person with the "right" permissions decides to go rogue? Or when a compromised account attempts to inject a malicious script into a trusted pipeline?

Harness is changing the security paradigm by moving beyond Policy as Code to a true Zero Trust model for your delivery infrastructure.

The Challenge: When Permissions Aren't Enough

Traditional security models focus on the "Front Door." Once an employee is authenticated and their role is verified, the system trusts their actions. In a modern CI/CD environment, this means an engineer with "Edit" and "Execute" rights can potentially run arbitrary scripts on your infrastructure.

If that employee goes rogue or their credentials are stolen, RBAC won't stop them. OPA can control whether shell scripts are allowed at all, but it often struggles to parse the intent of a custom shell script in real time.

The reality is that verify-at-the-door is a legacy mindset. We need to verify at execution time. CI/CD platforms are frequent supply-chain targets. The recent attack against the Checkmarx GitHub Action was a painful reminder of the lesson the SolarWinds fiasco should have taught the industry.

Introducing Harness Zero Trust

Harness Zero Trust is a new architectural layer that acts as a mandatory "interruption" service at the most critical point: the Harness Delegate (our lightweight runner in your infrastructure).

Instead of the Delegate simply executing tasks authorized by the control plane, it now operates on a "Never Trust, Always Verify" basis.

How It Works: The Final Line of Defense

When Zero Trust is enabled, the Harness Delegate pauses before executing any task. It sends the full execution context to a Zero Trust Validator, a service hosted and controlled by your security team.

This context includes:

- User Identity: Who triggered the action?

- Task Specifics: Exactly what is the Delegate being asked to do?

- Script Content: The full body of any shell scripts.

- Environment Variables: The inputs and secrets being injected into the task.

The Delegate waits for the validator's verdict. Only if the validator returns a "True" signal does the task proceed. If the signal is "False," the execution is killed instantly.
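To illustrate the kind of decision a validator makes, here is a minimal Python sketch of the verdict logic only. The context keys (`user`, `script`, `env`), the allow-list, and the deny patterns are hypothetical. They are not the actual Harness Zero Trust payload format, and a real validator would run as a service that the Delegate calls. The sketch fails closed: an unknown user, or a deny-pattern match anywhere in the script or environment values, yields False.

```python
import re

# Hypothetical deny patterns a security team might maintain. A real
# validator could instead call a custom algorithm or an AI-based reviewer.
DENY_PATTERNS = [
    re.compile(r"\bdrop\s+database\b", re.IGNORECASE),  # destructive SQL
    re.compile(r"\brm\s+-rf\s+/(\s|$)"),                # wiping the root filesystem
    re.compile(r"\bcurl\b[^|\n]*\|\s*(ba)?sh\b"),       # piping a download into a shell
]

# Hypothetical identities allowed to run tasks at all.
ALLOWED_USERS = {"ci-bot@example.com", "alice@example.com"}


def validate(context):
    """Return True to let the Delegate run the task, False to kill it.

    context: dict with hypothetical keys "user", "script", and "env"
    (a dict of environment variables injected into the task).
    """
    if context.get("user") not in ALLOWED_USERS:
        return False
    # Inspect env values too, since commands can be smuggled in via inputs.
    inspected = [context.get("script", ""), *context.get("env", {}).values()]
    for text in inspected:
        if any(p.search(str(text)) for p in DENY_PATTERNS):
            return False
    return True
```

Regex matching like this is easy to evade with obfuscation, which is why the post suggests pointing the validator at more capable custom or AI-powered analysis.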

Why This Matters for Enterprise DevSecOps

By moving validation to the Delegate level, we provide a "Last Line of Defense" that hits several key enterprise requirements:

- Rogue Employee Protection: Even if a user has the rights to run a pipeline, your security service can flag suspicious patterns (like a script attempting to delete a production database or exfiltrate data) and stop it before it starts.

- Architectural Superiority: While competitors struggle with stability and baseline security, Harness is doubling down on a hardened architecture. RBAC protects the door; OPA governs the "what"; and Zero Trust validates the "how."

- Custom Judgement: Because Harness sends the complete task details, you can point this validator at a custom algorithm or an AI-powered security tool to judge the "safety" of scripts in real time. Essentially, you are peer-reviewing every line of automation at execution time.

The Takeaway

We built this capability alongside some of the world's most regulated institutions to ensure it doesn't become a bottleneck. It’s designed to be a silent guardian. It shuts down the 1% of rogue actions while the other 99% of your engineers continue to innovate at high velocity.

The bottom line: at Harness, we believe that the promise of AI-accelerated coding must be met with an equally advanced delivery safety net. We’re building out that safety net every day. Zero Trust is the next piece.

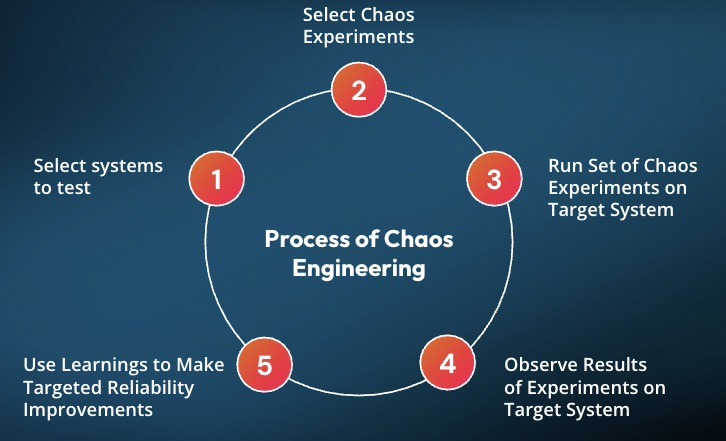

From Chaos to Confidence: Debunking the 3 Biggest Myths of Chaos Engineering

Many organizations hesitate to adopt chaos engineering because of persistent misconceptions that make it seem reckless or reserved for tech giants.

But the reality is far more practical and far more accessible.

Drawing from experience building the chaos engineering program at Target.com, Matt Schillerstrom breaks down the three biggest myths holding teams back and what is actually true.

Myth 1: Chaos Engineering Means Random Failure

The fear is understandable: engineers unplugging servers, triggering outages, and hoping for the best.

The Reality: Chaos engineering is not random. It is disciplined, which helps teams build trust and confidence in their systems.

It is built on hypothesis-driven experimentation. Every test starts with a clear expectation: what should happen if this component fails?

Instead of breaking things randomly, teams run controlled experiments. For example, stopping one out of ten servers to observe how the system adapts. These scenarios are planned, reviewed, and executed with intention.
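Writing the hypothesis down in a structured form is part of what makes an experiment controlled. The Python sketch below records the "stop one of ten servers" scenario alongside a simple, hypothetical capacity model. The peak load and per-server throughput are illustrative numbers, not figures from Target. With the model in place, the team can check on paper whether the hypothesis should hold before injecting the fault, then compare that prediction with what the system actually does.

```python
import math
from dataclasses import dataclass


@dataclass
class Experiment:
    hypothesis: str
    total_servers: int
    servers_stopped: int
    # Hypothetical steady-state definition: peak requests per second the
    # service must keep absorbing during the experiment.
    peak_rps: float
    rps_per_server: float

    def predicted_to_hold(self):
        """Do the remaining servers have enough capacity for peak traffic?"""
        remaining = self.total_servers - self.servers_stopped
        return remaining * self.rps_per_server >= self.peak_rps

    def min_servers_needed(self):
        """Smallest number of servers that can still carry peak traffic."""
        return math.ceil(self.peak_rps / self.rps_per_server)


exp = Experiment(
    hypothesis="Stopping 1 of 10 servers keeps checkout within its SLO",
    total_servers=10,
    servers_stopped=1,
    peak_rps=8000,
    rps_per_server=1000,
)
print(exp.predicted_to_hold(), exp.min_servers_needed())
```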

At Target, Matt found that teams often learned something before a test even ran. Aligning the whole team on the experiment's hypothesis required reviewing architecture diagrams, documentation, and runbooks, which often revealed issues before the test started.

The goal is not disruption. The goal is learning.

Today, teams are taking this further with AI, automatically identifying resilience risks and generating experiments before issues reach production.

Read how this works in practice: AI-Powered Resilience Testing with Harness MCP Server and Windsurf

Myth 2: It’s Only for FAANG Companies

Chaos engineering is often associated with Netflix, Google, and other hyperscalers. That makes it feel out of reach.

The Reality: You do not need massive scale to get meaningful value.

You can start small today.

A simple experiment, such as increasing memory utilization on a single service, can reveal whether your auto-scaling actually works. These small tests confirm that your resilience mechanisms will function when issues happen, instead of leaving your customers to discover that they don't.
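Before injecting memory pressure, it helps to write down what the auto-scaler should do. Assuming the service runs on Kubernetes with a HorizontalPodAutoscaler targeting memory utilization, the documented HPA rule is desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), with no scaling when the ratio is within a tolerance of 1.0 (0.1 by default). This Python sketch turns that rule into an expected value the experiment can be checked against. It deliberately ignores min/max replica bounds and stabilization windows.

```python
import math

# Default HPA tolerance: no scaling when the usage ratio is within 10% of 1.0.
DEFAULT_TOLERANCE = 0.1


def expected_replicas(current_replicas, current_utilization, target_utilization,
                      tolerance=DEFAULT_TOLERANCE):
    """Replica count the HPA algorithm should move toward:
    ceil(current_replicas * current / target), unless within tolerance."""
    ratio = current_utilization / target_utilization
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# Usage: pushing memory to 90% against a 60% target on 3 pods.
print(expected_replicas(3, 90, 60))
```

If the experiment pushes utilization up and the replica count does not move toward this number, the auto-scaling configuration, not the application, is the first thing to investigate.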

What matters is not scale. What matters is consistency and learning how your system behaves under stress.

Myth 3: Chaos Engineering Replaces Traditional Testing

Some teams worry that adopting chaos engineering means replacing QA or existing testing workflows.

The Reality: Chaos engineering strengthens what you already do.

At Target, chaos experiments were layered into monthly load testing. While simulating peak traffic, failure scenarios such as payment authorization latency were introduced to observe system behavior under real pressure.

This approach does not replace testing. It makes it more realistic and more valuable.

Build Confidence, Not Chaos

Chaos engineering is not about breaking systems. It is about understanding them.

When teams move from ad hoc testing to small, continuous, hypothesis-driven experiments, they gain something far more valuable than test results. They gain confidence.

Confidence that their systems will behave as expected.

Confidence that failures will not become outages.

Confidence that they are ready for the unexpected.

See It in Action

If you are thinking about chaos engineering, the best way to understand it is to start.

Harness helps teams safely design, run, and learn from controlled chaos experiments without putting production at risk.

Want to try your first chaos engineering test? Sign up for your free Resilience Testing account today. Prefer a hands-on demo with an expert? Click here for a personalized demo.


Get Ship Done: Everything We Shipped in March 2026

According to our AI Velocity Paradox report, many engineering teams say AI has helped them ship code faster, but quality and security issues have worsened across the SDLC. That gap is the whole story. AI coding assistants are compressing the time it takes to write and commit code, but the bottlenecks have simply moved downstream: into builds, security scans, deployment pipelines, incident response, and cost controls. In March, we shipped 55 features, most of them targeting exactly those downstream stages. This is what closed-loop AI velocity looks like.

AI Built Into Every Step

Harness MCP v2 (Early Preview)

The next version of the Harness MCP server is rolling out to early access customers. It ships with 10 unified tools, CRUD and execute support across 119+ resource types, and 26 built-in prompt templates that chain tools together for multi-step workflows such as debugging a pipeline failure, deploying an app, reviewing DORA metrics, and triaging vulnerabilities. Install it with one command: npx harness-mcp-v2. No cloning, no local setup.

Learn more about how we redesigned our MCP server to work better with agentic AI.

AI Skills for Your IDE

A new skills repository sits on top of MCP to let AI coding assistants, such as Claude Code, Cursor, and OpenAI Codex, act within Harness without the user needing to know Harness. Skills are structured instruction files. "Create a CI pipeline for my Node.js app" turns into the right tool calls automatically.

GitOps Troubleshooting via AI

The AI velocity paradox doesn't end at deployment. It continues into operations, especially in systems like GitOps, where small configuration issues can cascade quickly.

Harness AI now understands GitOps entities and can detect misconfigurations in manifests, identify missing dependencies or clusters, diagnose connectivity issues, and suggest fixes in context. With the expansion of the "Ask AI" assistant into GitOps, teams can troubleshoot issues directly where they occur, not after the fact.


AI Chat: OPA Policy Enforcement on Generated Resources

Harness AI now extends Open Policy Agent (OPA) governance to the resources it generates. AI-driven entity creation is automatically evaluated against your organization's OPA policies, so when the agent generates a Harness resource, it checks compliance in real time and surfaces validation messages directly in the chat. This means governance isn't a post-creation audit; it's baked into the moment of creation.

Security Baked Into the Pipeline

EPSS-Based Vulnerability Prioritization

Vulnerability prioritization now includes EPSS (Exploit Prediction Scoring System) scores alongside CVSS severity. EPSS predicts the probability that a CVE will be exploited in the wild within 30 days. Teams can stop triaging by theoretical severity and focus on the vulnerabilities attackers are most likely to exploit.
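This Python sketch shows one way to combine the two signals. Findings whose EPSS score meets a threshold form a top tier, and CVSS orders findings within each tier. The 0.1 threshold and the CVE labels are hypothetical, and this is not Harness's actual ranking formula.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    cve: str
    cvss: float  # severity score, 0.0 to 10.0
    epss: float  # probability of exploitation in the next 30 days, 0.0 to 1.0


def prioritize(findings, epss_threshold=0.1):
    """Order findings so likely-exploited CVEs come first.

    Findings at or above the (hypothetical) EPSS threshold form the top
    tier. Within each tier, higher CVSS sorts first, then higher EPSS.
    """
    return sorted(
        findings,
        key=lambda f: (f.epss >= epss_threshold, f.cvss, f.epss),
        reverse=True,
    )


findings = [
    Finding("CVE-A", cvss=9.8, epss=0.01),  # severe on paper, rarely exploited
    Finding("CVE-B", cvss=5.3, epss=0.62),
    Finding("CVE-C", cvss=7.5, epss=0.35),
]
print([f.cve for f in prioritize(findings)])
```

The critical-but-unlikely CVE-A drops below two medium-to-high findings that attackers are far more likely to use.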

Manual Severity Override

Security teams can now adjust scanner-assigned severity levels when the tool's rating doesn't match real-world risk in their environment. Override the score, add context, and move on.

Full OSS Dependency Visibility

Supply Chain Security now covers both direct and transitive (indirect) open source dependencies in code repositories, with vulnerability intelligence from the Qwiet database. When a vulnerable child dependency is three layers deep, you can see exactly where it was introduced and trace the path to fix it.
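Tracing where a transitive dependency was introduced is a graph search problem. This Python sketch runs a breadth-first search over a hypothetical dependency graph to find the shortest chain from the project to a vulnerable package. The package names are made up, and the sketch is not how Harness Supply Chain Security computes its paths.

```python
from collections import deque


def introduction_path(graph, root, vulnerable):
    """Breadth-first search for the shortest dependency chain from the
    project root to a vulnerable package.

    graph: dict mapping each package to the list of packages it depends on.
    Returns the chain as a list, or None if the package is not reachable.
    """
    queue = deque([[root]])
    seen = {root}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == vulnerable:
            return path
        for dep in graph.get(node, []):
            if dep not in seen:
                seen.add(dep)
                queue.append(path + [dep])
    return None


# Hypothetical graph: tiny-regex sits three layers below the app.
graph = {
    "my-app": ["web-framework", "logger"],
    "web-framework": ["http-parser"],
    "http-parser": ["tiny-regex"],
    "logger": [],
}
print(introduction_path(graph, "my-app", "tiny-regex"))
```

The second element of the chain (here `web-framework`) is the direct dependency to upgrade or patch.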

AutoFix Directly in GitHub Pull Requests

A new GitHub App delivers AI-generated security fixes from Harness SAST and SCA scanning directly inside the GitHub PR workflow. Developers get automated fix suggestions and can have a back-and-forth conversation about the remediation without leaving GitHub.

AutoFix for Harness Code Repositories

The same AutoFix capability now works in Harness Code. SAST and SCA scans automatically open pull requests with AI-generated fixes, including plain-language explanations of what was changed and why.

Dependency Firewall

The Artifact Registry Dependency Firewall now ships with a full Harness CLI, letting developers audit dependencies for npm, Python, Maven, NuGet, and Go packages before they hit a build. Maven and Gradle plugins are included. In testing against a multi-module Maven project, artifact upload time improved 10x compared to standard flows.

AI Discovery for Your AI Ecosystem

Automatically discovers AI assets across models, APIs, and MCP servers in your environment. Provides deep visibility into prompts, responses, tool usage, and data flows, with continuous posture evaluation and centralized governance controls.

AI Firewall (Beta)

Runtime protection for AI applications: detects prompt injection, model misuse, unsafe outputs, and data leakage across multi-hop AI application flows with policy-driven enforcement.

DAST AI Testing (Beta)

DAST for LLM applications covering the OWASP LLM Top 10 vulnerability categories. Runs during development, before production.

Secure AI Coding in Cursor, Windsurf, and Claude (Beta)

Real-time security scanning now runs inside AI-native development environments. The existing IDE extension handles the integration; no new tooling is required.

Deploy Faster and More Reliably

Feature Flags as First-Class Pipeline Steps

14 out-of-the-box feature flag steps are now available in the step library: create flags, manage targets, set allocations, trigger kill switches. Combine them with approvals and manual gates to coordinate releases exactly when you want them to happen.
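Percentage allocations usually rely on deterministic bucketing, so the same user gets the same answer on every request. This Python sketch shows one common approach: hash the flag key together with the user ID and compare the resulting bucket against the allocation. It is an illustration under that assumption, not Harness's actual bucketing algorithm, and the function name is hypothetical.

```python
import hashlib


def in_rollout(flag_key, user_id, percent):
    """Decide whether a user falls inside a flag's percentage allocation.

    Hashing flag_key with user_id gives each user a stable bucket in
    [0, 100) per flag. Because the bucket never changes, raising the
    percentage only ever adds users; nobody flips back out.
    """
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent
```

Including the flag key in the hash keeps allocations independent across flags, so the same 10% of users are not always the first to see every new feature.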

OPA Governance for Feature Flags

Policy as Code rules can now be enforced on feature flag saves, applying the same governance model you use for pipelines to your flag configurations.

Feature Flag Archiving

Retire feature flags without deleting them. Archived flags stop being sent to SDKs and disappear from default views, but all historical data, impressions, configurations, and audit logs are preserved for compliance and analysis.

ECS Scale Step

Scale ECS services up or down without triggering a full deployment. This is a dedicated step; it doesn't touch your service definition or redeploy anything.

ECS Scheduled Actions

Define time-based auto-scaling policies for ECS services directly in Harness, using the new EcsScheduledActionDefinition manifest type.

Helm Values Overrides in Service Hooks

Native Helm deployments can now expose Harness values overrides to service hooks before Helm runs. Use this to decrypt override files (e.g., with SOPS) in a pre-run hook.

Host Groups for WinRM Deployments

Physical data center WinRM deployments can now assign independent credentials to different groups of hosts within a single infrastructure definition. Unblocks environments running Just Enough Administration (JEA) configurations where each server group has distinct endpoint settings.

Google Cloud Storage for MIG Manifests

Managed Instance Group deployments on GCP can now pull manifests and templates from Google Cloud Storage.

Pipeline Notifications for Approval Waits

Pipelines now send notifications the moment they pause for user input, such as approvals, manual interventions, or runtime inputs.

Faster Builds

CPU and Memory Metrics in Build Execution View

Build stages now display real-time CPU and memory usage directly in the execution view. Use it to right-size infrastructure and troubleshoot memory pressure before it causes failures.

Branch-Based Build Version Counters

Build numbers now track independently per branch. Teams running parallel branches no longer share a global counter.
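Per-branch counters are simple to picture: each branch gets its own sequence instead of drawing from one global number. This in-memory Python sketch shows the behavior. The class name is hypothetical, and a real CI system would persist counters durably.

```python
from collections import defaultdict


class BranchBuildCounter:
    """Hand out build numbers that increase independently per branch."""

    def __init__(self):
        # Unseen branches start at 0, so their first build is number 1.
        self._counters = defaultdict(int)

    def next_build_number(self, branch):
        self._counters[branch] += 1
        return self._counters[branch]
```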

Real-Time Step Status for Container Step Groups

Container-based step groups report step status in real time during execution rather than waiting for the group to complete.

Cache Intelligence: Azure Blob Storage

Build caches can now be stored and retrieved from Azure Blob Storage with principal authentication and OIDC-based access.

Cache Intelligence: Go Builds on Linux

Automatic dependency caching is now available for Go projects building on Linux.

Docker Proxy Auto-Detection

The Docker Build and Push plugins now automatically detect and pass HARNESS_HTTP_PROXY, HARNESS_HTTPS_PROXY, and HARNESS_NO_PROXY as Docker build arguments. No manual proxy configuration needed.

API and Runtime Security

Traceable Now Embedded in Harness

Traceable's API security capabilities (discovery, inventory, threat detection, and runtime protection) are now accessible directly in the Harness UI as a native embedded experience, without switching tools or tabs.

Self-Service Bot and Abuse Protection Policies

Bot and abuse protection now supports self-serve policy templates. The Velocity/Aggregation template lets you write rules like "Flag all users who have logged in from more than 5 countries in the last 30 minutes" or "Flag bot IPs distributing attacks across more than 10 countries over 24 hours." Covers both fast-moving and slow distributed attack patterns.
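A velocity rule is a threshold over a sliding time window. The Python sketch below shows what the first example rule computes: it flags a user seen in more than 5 distinct countries within 30 minutes. It is not the Harness policy syntax. The class name and parameters are hypothetical, and it assumes login events arrive in timestamp order.

```python
from collections import defaultdict, deque


class CountryVelocityRule:
    """Flag a user who logs in from more than `max_countries` distinct
    countries within a sliding window of `window_seconds`."""

    def __init__(self, max_countries=5, window_seconds=30 * 60):
        self.max_countries = max_countries
        self.window_seconds = window_seconds
        # Per-user queue of (timestamp, country) logins inside the window.
        self._events = defaultdict(deque)

    def record_login(self, user, country, ts):
        """Record a login at unix time `ts` and return True if the user
        should now be flagged."""
        events = self._events[user]
        events.append((ts, country))
        # Evict logins that have fallen out of the sliding window.
        while events and events[0][0] <= ts - self.window_seconds:
            events.popleft()
        distinct = {c for _t, c in events}
        return len(distinct) > self.max_countries
```

The slow, distributed pattern in the second example works the same way with a longer window (24 hours) and a count over source IPs instead of users.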

Dynamic Payload Matching in Custom API Policies

Custom policies, such as signature, rate-limiting, DLP, enumeration, and exclusion, now support dynamic payload matching. Both sides of a comparison can reference live values from the request, response, or extracted attributes.

Incident Response, Upgraded

Native ServiceNow Actions in Runbooks

Runbooks can now create ServiceNow incidents, update records, and add comments natively, without custom webhook configuration. Fields pull dynamically from your ServiceNow instance. Previously, this required custom integrations through PagerDuty or OpsGenie; now it's first-class.

Reusable Webhook Templates

Configure a webhook once, save it as a template, and reuse it across integrations. Templates are organization-scoped and use copy-on-write semantics: changes to a template don't propagate to webhooks already created from it.
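The observable behavior is that each webhook keeps the configuration it was created with. This Python sketch gets that behavior with an eager deep copy at creation time. A true copy-on-write implementation would defer the copy until one side is modified, but the result for existing webhooks is the same. All class and function names are hypothetical.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class WebhookTemplate:
    name: str
    config: dict = field(default_factory=dict)


@dataclass
class Webhook:
    integration: str
    config: dict


def create_from_template(template, integration):
    """Create a webhook that owns a deep copy of the template's config,
    so later edits to the template leave existing webhooks untouched."""
    return Webhook(integration=integration, config=copy.deepcopy(template.config))
```

A deep copy matters here: a shallow copy would still share nested dictionaries such as headers, and template edits would leak into existing webhooks.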

Named Alert Rules

Alert rules now support custom display names. Identify and manage rules by name instead of opaque identifiers.

Active Pages View for On-Call

On-call users can now see all currently active pages from a single view: status, assigned responders, escalation progress, and acknowledgment state in one table.

Cloud Cost Visibility

Partial Savings Auto-Inference

The savings inference engine now detects partial infrastructure changes, not just fully realized ones. Track savings as they accumulate, not only after a recommendation is fully implemented.

AWS Cost Optimization Hub Integration

Recommendations now cover all major AWS resource types. The integration moves from Cost Explorer Hub to Cost Optimization Hub, and AWS costs are shown as net-amortized values pulled directly from the console.

Anomaly Whitelisting for Reserved Instances and Savings Plans

Whitelist expected RI/SP billing events (renewals, purchases, and adjustments) to reduce false-positive noise in anomaly detection.

Budgets Decoupled from Perspectives

Budgets no longer require a Perspective to exist first. They're now based on Cost Categories, making them importable into BI dashboards and usable in more governance contexts.

Cluster Orchestrator Savings Report

A read-only savings report shows projected savings before Cluster Orchestration is enabled and actual savings after. Understand the value before committing, then track realized results over time.

Node Pool Recommendations with Cloud Billing Tags

Node pool recommendations now surface AWS cost allocation and environment tags alongside Kubernetes node labels, giving recommendations more operational context.

Database Changes Without the Drama

Snowflake Support

Harness Database DevOps now supports Snowflake with OAuth, PKI, and username/password authentication for apply and rollback steps.

Online Schema Changes for MySQL with Percona Toolkit

Run online schema changes on MySQL with zero table locks. Enable it from the DB schema edit dialog.

Keyless Auth for Google Spanner

Authenticate to Cloud Spanner using Workload Identity, eliminating service account keys from Spanner deployments entirely.

Code Management

Repo Forking

Harness Code now supports repository forking. Developers can fork any repo, make changes, and open a pull request back to the upstream source, the same workflow as GitHub.

Git LFS Upload Performance

Large file uploads via Git LFS are faster. File content now streams during OID calculation instead of buffering in memory.
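Git LFS identifies each object by an OID, which is the SHA-256 hash of the file content. Hashing and copying in fixed-size chunks avoids holding the whole file in memory. This Python sketch illustrates the general technique. It is not Harness Code's implementation, and the 64 KiB chunk size is an arbitrary choice.

```python
import hashlib
import io

CHUNK_SIZE = 64 * 1024  # read 64 KiB at a time (arbitrary choice)


def stream_with_oid(source, sink, chunk_size=CHUNK_SIZE):
    """Copy file-like `source` to `sink` while computing the Git LFS OID
    (SHA-256 of the content), holding only one chunk in memory at a time.

    Returns (oid_hex, size_in_bytes).
    """
    hasher = hashlib.sha256()
    size = 0
    while True:
        chunk = source.read(chunk_size)
        if not chunk:
            break
        hasher.update(chunk)
        sink.write(chunk)
        size += len(chunk)
    return hasher.hexdigest(), size


if __name__ == "__main__":
    oid, size = stream_with_oid(io.BytesIO(b"large binary asset"), io.BytesIO())
    print(f"oid sha256:{oid} size {size}")
```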

Automated Testing

On the testing/AI Test Automation side, updates focused on handling complexity at scale: better organization with nested test tasks, improved traceability with Jira integration, more flexible AI-driven test creation, and UX improvements for navigating large test suites. Because if AI increases the volume of changes, testing systems need to become more adaptive, not more manual.

Other Updates

- Platform: Proactive email alerts at 80%, 95%, and 100% of account resource limits (users, projects, connectors, secrets, roles) with up to 5 configurable recipients.

- Build logs now display the actual machine size name (e.g., medium) instead of the internal flex label.

- Chaos Engineering: Splunk Observability probe support, user-defined variables, load test integration, native Windows network chaos faults, Linux chaos faults (network, API, JVM, process, service, DNS, disk fill), and Disaster Recovery component support added.

- AI SRE documentation restructured into separate tracks for administrators and incident responders.

Conclusion

The throughput you see here, 55 features in 31 days, reflects what happens when the AI acceleration loop closes end to end. Teams writing code faster with AI agents need pipelines, security scans, deployments, and incident response to keep pace. That's the bet we're making: engineering velocity compounds when AI works across the entire delivery chain, not just the code-generation process. What's next? Look out for our April updates.

.jpg)

.jpg)

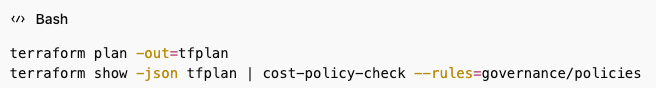

Cost Awareness in CI/CD Pipelines: A FinOps Guide

The Problem with Ignoring Cost in CI/CD

If you’ve ever pushed a feature branch that quietly triggered multiple production deployments—and only realized the impact when the AWS bill jumped 240% month-over-month—you already understand the problem.

Cost awareness in CI/CD pipelines isn’t about slowing teams down. It’s about avoiding financial surprises that lead to tense finance meetings and urgent cost-cutting exercises.

Many platform engineering teams treat cloud spend as something to review after deployment. Code ships. Infrastructure scales. Services consume resources. Weeks later, someone from finance posts a Slack screenshot of a sharply rising spend graph. By that point, the expensive workload has been running for days or weeks. Rolling it back risks disruption. And the engineers who shipped it are already focused on the next sprint.

That reactive model might work when cloud usage is stable and margins are wide. It breaks down quickly as deployment velocity increases and systems become more complex. Without pipeline cost visibility built into your workflows, teams optimize purely for speed—without seeing the financial impact of each merge.

Why CI/CD Cost Awareness Fails by Default

Traditional pipelines are designed for one purpose: delivering code to production quickly and reliably. Teams track build duration, deployment success rates, and test coverage. But cloud cost governance? That usually sits outside the CI/CD system.

This creates a structural gap.

Engineers making deployment decisions rarely see the cost implications of those decisions in real time. You can monitor CPU usage, memory, and latency—but not that your new microservice is quietly generating $400 per day in cross-region data transfer charges.

That disconnect leads to friction:

- Engineers optimize for performance and functionality.

- Finance focuses on budgets and spend controls.

- Platform teams are left reconciling the two after costs have already accumulated.

By the time a cost spike is discovered, the context behind the deployment is often lost. The feedback loop is simply too slow.

At enterprise scale, small inefficiencies compound. One team’s cost regression might be negligible. Ten teams introducing cost-heavy services every week becomes a serious budget issue. Without infrastructure cost tracking at the pipeline level, you can’t clearly attribute increases to specific deployments or commits. You see total spend rising—but not what caused it.

Building Cost Awareness into CI/CD Workflows

The goal isn’t to introduce manual approvals or slow down delivery. The goal is to make cost data visible early enough for teams to make smarter decisions before code hits production.

1. Automated Cost Feedback Loops

One of the most effective ways to enable CI/CD cost optimization is by integrating automated cost feedback loops directly into pipeline stages.

Before a deployment completes, your system should estimate the incremental cost impact and surface it alongside build and test results.

For example:

- Deploying a Kubernetes service? Estimate compute, storage, and networking costs based on the manifest.

- Provisioning infrastructure via Terraform? Calculate projected monthly run rate before applying changes.

- Scaling a service? Predict resource usage and associated cost impact.

The estimates don’t need to be perfect. Directional accuracy is enough to catch major regressions. If a deployment is projected to increase monthly spend by 30%, that’s a signal to pause and evaluate. If the cost delta is minimal, the pipeline proceeds normally.

This approach enables build pipeline cost control without adding unnecessary friction.
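A directional estimate can be quite simple. The Python sketch below prices a Kubernetes Deployment from its replica count and CPU/memory requests, then compares the current and proposed manifests. It returns "review" when the projected monthly increase exceeds 30%. The unit prices are hypothetical placeholders, not real cloud rates, and storage and networking are deliberately ignored. A real estimator would pull prices from your provider and cover more resource types.

```python
"""Directional monthly-cost estimate for a Kubernetes Deployment change.

Assumptions: prices are hypothetical placeholders; only CPU and memory
requests are priced (storage and networking are ignored); decimal memory
suffixes such as "M" or "G" are not handled in this sketch.
"""

HOURS_PER_MONTH = 730        # common approximation (24 * 365 / 12)
PRICE_PER_VCPU_HOUR = 0.04   # hypothetical
PRICE_PER_GIB_HOUR = 0.005   # hypothetical
REVIEW_THRESHOLD = 0.30      # pause for review above a 30% increase


def parse_cpu(quantity: str) -> float:
    """Convert a Kubernetes CPU quantity such as "500m" or "2" to vCPUs."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory_gib(quantity: str) -> float:
    """Convert a binary memory quantity such as "512Mi" or "2Gi" to GiB."""
    factors = {"Ki": 1 / 1024**2, "Mi": 1 / 1024, "Gi": 1.0, "Ti": 1024.0}
    for suffix, factor in factors.items():
        if quantity.endswith(suffix):
            return float(quantity[: -len(suffix)]) * factor
    return float(quantity) / 1024**3  # plain bytes


def monthly_cost(replicas: int, cpu: str, memory: str) -> float:
    """Projected monthly cost of the requested resources across replicas."""
    vcpus = parse_cpu(cpu) * replicas
    gib = parse_memory_gib(memory) * replicas
    hourly = vcpus * PRICE_PER_VCPU_HOUR + gib * PRICE_PER_GIB_HOUR
    return hourly * HOURS_PER_MONTH


def evaluate_change(current: dict, proposed: dict) -> tuple:
    """Return (relative cost change, "proceed" or "review")."""
    before = monthly_cost(**current)
    after = monthly_cost(**proposed)
    if before == 0:
        delta = 0.0 if after == 0 else float("inf")
    else:
        delta = (after - before) / before
    decision = "review" if delta > REVIEW_THRESHOLD else "proceed"
    return delta, decision


if __name__ == "__main__":
    current = {"replicas": 3, "cpu": "500m", "memory": "512Mi"}
    proposed = {"replicas": 6, "cpu": "500m", "memory": "512Mi"}
    delta, decision = evaluate_change(current, proposed)
    print(f"projected change: {delta:+.0%} -> {decision}")
```

Doubling the replica count doubles the estimate and trips the review threshold. A modest CPU bump on the same replicas stays under 30% and proceeds normally.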

2. Pipeline Cost Visibility and Budget Guardrails

Once cost data flows through your pipelines, the next step is establishing budget guardrails.

Pipeline cost visibility allows you to define thresholds—for example, triggering a review if service-level spend increases by more than 20%. This doesn’t block innovation; it simply ensures cost increases are intentional.

With this model:

- Teams deploy freely within defined cost parameters.

- Significant cost jumps trigger additional context or approval.

- Cost regressions are caught before they become financial escalations.

Infrastructure cost tracking at the pipeline level also improves attribution. Instead of reviewing spend by account or department, you can tie cost increases directly to individual pipeline runs and commits. That clarity makes DevOps cost management far more actionable.
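The guardrail and attribution ideas above fit in a few lines of Python. In this sketch, each pipeline run carries its service, a run ID, the commit SHA, and baseline versus projected daily cost. Runs with more than a 20% increase are routed to review, and cost deltas are summed per commit. The `RunCost` record, its field names, and the sample values are hypothetical, not a Harness data model.

```python
from collections import defaultdict
from dataclasses import dataclass

GUARDRAIL = 0.20  # review if service-level spend rises by more than 20%


@dataclass
class RunCost:
    """Hypothetical cost record attached to one pipeline run."""
    service: str
    pipeline_run: str
    commit: str
    baseline_daily: float
    projected_daily: float

    @property
    def change(self) -> float:
        """Relative change in daily spend introduced by this run."""
        if self.baseline_daily == 0:
            return 0.0 if self.projected_daily == 0 else float("inf")
        return (self.projected_daily - self.baseline_daily) / self.baseline_daily


def check_guardrails(runs):
    """Split runs into (within guardrail, needs review)."""
    within, needs_review = [], []
    for run in runs:
        target = needs_review if run.change > GUARDRAIL else within
        target.append(run)
    return within, needs_review


def attribute_by_commit(runs):
    """Sum the projected daily cost delta for each commit."""
    totals = defaultdict(float)
    for run in runs:
        totals[run.commit] += run.projected_daily - run.baseline_daily
    return dict(totals)


if __name__ == "__main__":
    runs = [
        RunCost("checkout", "run-101", "a1b2c3d", 100.0, 110.0),  # +10%
        RunCost("search", "run-102", "e4f5a6b", 50.0, 80.0),      # +60%
    ]
    within, review = check_guardrails(runs)
    print("needs review:", [r.service for r in review])
    print("daily delta by commit:", attribute_by_commit(runs))
```

Keying the deltas by commit, rather than by account, is what lets a spend increase be traced back to a specific change.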

3. Shifting Cost Ownership Left

True FinOps CI/CD integration means shifting cost ownership closer to the engineers making infrastructure decisions.

Cost becomes a first-class operational metric—alongside performance, reliability, and security.

When cost data lives in the same interface as builds and deployments, teams naturally factor it into trade-offs. You reduce the need for reactive enforcement because engineers can see and adjust in real time.

This alignment benefits everyone:

- Engineers maintain speed.

- Platform teams maintain control.

- Finance gains visibility and predictability.

Cloud cost governance pipelines work best when they support engineering velocity—not compete with it.

How Harness Cloud Cost Management Enables Pipeline-Level Cost Awareness

Harness Cloud Cost Management is designed to connect DevOps execution with financial accountability.

Unlike traditional tools that focus on billing-level reporting, Harness embeds pipeline cost visibility directly into CI/CD workflows. Engineers receive real-time cost feedback in the same system where they manage builds and deployments.

Key capabilities include:

- Service- and pipeline-level cost analysis

- Cost anomaly detection and trend monitoring

- Policy-based budget thresholds and governance controls

- Cost allocation, showback, and chargeback models

If a deployment exceeds predefined thresholds, the pipeline can automatically flag it or enforce policy-based controls—supporting consistent build pipeline cost control across teams.

By connecting cost allocation directly to services, teams, and pipeline runs, organizations gain granular insight into what drives spend. Conversations between finance and engineering become fact-based and collaborative rather than reactive.

For teams already using Harness CI/CD, adding cost awareness becomes a natural extension of existing workflows—no context switching required.

Learn more about Harness Cloud Cost Management and explore the cost visibility and governance capabilities available in the platform.

Embedding Cost Discipline Without Slowing Teams Down

Cloud cost management at scale can’t rely on monthly budget reviews or occasional optimization sprints. It has to be embedded where cost decisions actually happen: inside your CI/CD pipelines.

When engineers see cost impact in real time, they make smarter trade-offs.

When platform teams enforce guardrails programmatically, they prevent regressions early.

When finance has attribution tied to specific deployments, discussions become clearer and more productive.

Cost awareness in CI/CD pipelines isn’t friction—it’s context.

The teams that succeed with CI/CD cost optimization don’t treat cost as a constraint. They treat it as an operational signal that improves engineering decisions.

If your organization has struggled with unexpected cloud spend or unclear attribution, it may be time to rethink where cost visibility lives. Embedding DevOps cost management directly into your CI/CD workflows gives you the speed of modern delivery—without sacrificing financial control.

Defeating Context Rot: Mastering the Flow of AI Sessions

In Part 1, we argued that most dev teams start in the wrong place. They obsess over prompts, when the real problem is structural: agents are dropped into repositories that were never designed for them. The solution was to make the repository itself agent-native through a standardized instruction layer like AGENTS.md.

But even after you fix the environment, something still breaks.

The agent starts strong. It understands the problem, follows instructions, and seems remarkably capable.

Then, somewhere along the way, things begin to drift. The code still compiles, but the logic gets inconsistent. Small mistakes creep in. Constraints are ignored. Assumptions mutate.

Nothing fails loudly. Everything just gets slightly worse.

This is the second failure mode of AI systems: context rot.

Context Rot Is Not a Bug — It’s a Property

There is a persistent assumption in the industry that more context leads to better performance. If a model can handle large context windows, then giving it more information should improve accuracy.

In practice, the opposite is often true.

Recent research from Chroma shows that LLM performance degrades as input length increases, even when the model is operating well within its maximum context window. Similar observations appear in independent analyses of why models deteriorate over longer sessions and in practical explorations of how context mismanagement affects production systems.

This is not an edge case. It is a structural limitation.

Models do not “understand” context in a hierarchical way. They distribute attention across tokens. As context grows, signal competes with noise. Important instructions lose weight. Irrelevant details gain influence. Conflicts accumulate.

What looks like a reasoning failure is often just context degradation.
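A toy calculation makes the "signal competes with noise" point concrete. Assume, very loosely, that one key instruction gets an attention score of 3.0 and every distractor token gets 0.0. Under a single softmax, the key token's share of attention is e^3 / (e^3 + n), which shrinks as the number of distractors n grows. This is a deliberate simplification, not a model of any real LLM's attention, and the scores are arbitrary.

```python
import math


def key_token_weight(key_score: float, n_distractors: int,
                     distractor_score: float = 0.0) -> float:
    """Softmax weight on one key token competing with n equal-score distractors."""
    key = math.exp(key_score)
    noise = n_distractors * math.exp(distractor_score)
    return key / (key + noise)


if __name__ == "__main__":
    # Same instruction, same score; only the amount of surrounding context changes.
    for n in (10, 1_000, 100_000):
        print(f"{n:>7} distractors -> weight {key_token_weight(3.0, n):.4f}")
```

With these numbers, the key token drops from roughly two-thirds of the attention to a tiny fraction, even though nothing about the instruction itself changed.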

Why Long Sessions Break Down

If you’ve worked with AI coding agents for more than a few hours, you’ve already seen this pattern.

A session starts with clear instructions and aligned reasoning. Over time, it fills with partial implementations, outdated assumptions, repeated instructions, and exploratory dead-ends. The model doesn't forget earlier information; it simply can no longer prioritize it effectively.

Detailed guides on context management highlight this exact failure mode: as sessions grow, models become increasingly sensitive to irrelevant or redundant tokens, which degrade output quality. Platform-level documentation reinforces the same principle: effective systems explicitly control how context is introduced, retained, and pruned.

In practice, this shows up as inconsistency. But underneath, it’s the predictable outcome of unmanaged context growth.

The Link Between Context Rot and Hallucination

This is where teams often misdiagnose the issue.

When agents hallucinate, the instinct is to blame the model. But hallucination is often downstream of context rot.

OpenAI’s work on hallucinations explains that models are optimized to produce plausible outputs even under uncertainty. When context degrades, uncertainty increases. The model fills gaps with statistically likely answers.

So the failure chain looks like this:

Context degradation → ambiguity → confident guessing → hallucination

In other words, hallucination is not always a knowledge problem.

It is often a context management problem.

Sessions Are Not Conversations

Most developers interact with AI through chat, so they treat sessions like conversations.

That mental model breaks at scale.

A long-running AI session is not a conversation. It is a stateful system.

And like any stateful system, it degrades without control.

Letting context accumulate indefinitely is equivalent to running a system without memory management. Eventually, performance collapses—not because the system is incapable, but because it is overloaded.
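To make the memory-management analogy concrete, here is a minimal Python sketch of a session context with a fixed token budget. Pinned messages, such as system instructions or AGENTS.md content, are never evicted. When the budget is exceeded, the oldest unpinned messages are dropped first. Counting words instead of tokens and evicting in FIFO order are simplifying assumptions. Real agents may summarize or prune more selectively, and the class names here are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    role: str
    text: str
    pinned: bool = False  # e.g. system instructions or AGENTS.md content

    @property
    def tokens(self) -> int:
        # Crude stand-in for a real tokenizer: whitespace-separated words.
        return len(self.text.split())


@dataclass
class SessionContext:
    budget: int
    messages: list = field(default_factory=list)

    def total_tokens(self) -> int:
        return sum(m.tokens for m in self.messages)

    def add(self, message: Message) -> None:
        self.messages.append(message)
        self._evict()

    def _evict(self) -> None:
        # Drop the oldest unpinned messages until the context fits the budget.
        while self.total_tokens() > self.budget:
            for i, m in enumerate(self.messages):
                if not m.pinned:
                    del self.messages[i]
                    break
            else:
                # Only pinned content remains; nothing more can be evicted.
                break


if __name__ == "__main__":
    ctx = SessionContext(budget=12)
    ctx.add(Message("system", "follow AGENTS.md conventions strictly", pinned=True))
    ctx.add(Message("user", "explore option A in depth please"))
    ctx.add(Message("user", "now implement option B"))
    print([m.text for m in ctx.messages], ctx.total_tokens())
```

The exploratory message about option A is the first to go once the budget is exceeded, while the pinned instructions survive. That is the kind of deliberate control an unmanaged chat session lacks.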

The Plan → Execute → Reset Discipline

Once you accept that context degrades, the solution becomes straightforward: you don’t try to out-prompt the problem. You control how context evolves.

Across production teams, a consistent pattern emerges:

Plan → Execute → Reset

This is not a trick. It is operational discipline.

Planning before execution

The most common mistake is asking the agent to write code immediately. This forces premature decisions and locks the model into an approach before it has fully understood the problem.

Instead, enforce a planning phase.

Have the model break down the task, identify dependencies, and surface uncertainties before implementation. This aligns closely with best practices in production-grade prompt engineering, where structured reasoning is prioritized over immediate generation.

Planning reduces unnecessary context growth and prevents incorrect assumptions from propagating.
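One way to enforce a planning phase is to treat the plan as data and refuse to execute while it still has open questions. The Python sketch below models a plan as steps with dependencies and derives an execution order with the standard library's `graphlib.TopologicalSorter` (Python 3.9+). It raises an error if uncertainties remain unresolved. The `Plan` structure is a hypothetical illustration, not part of any agent framework.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class Plan:
    # Each step maps to the set of steps it depends on.
    steps: dict[str, set[str]]
    open_questions: list[str] = field(default_factory=list)

    def execution_order(self) -> list[str]:
        """Return an order that respects dependencies, or refuse to start."""
        if self.open_questions:
            raise RuntimeError(
                "Resolve open questions before executing: "
                + "; ".join(self.open_questions)
            )
        # static_order() raises graphlib.CycleError on circular dependencies.
        return list(TopologicalSorter(self.steps).static_order())


if __name__ == "__main__":
    plan = Plan(steps={
        "add schema migration": set(),
        "update repository layer": {"add schema migration"},
        "expose API endpoint": {"update repository layer"},
        "write tests": {"expose API endpoint"},
    })
    print(plan.execution_order())
```

Surfacing uncertainties as an explicit list, rather than leaving them implicit in the conversation, keeps wrong assumptions from leaking into the execution phase.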

Stepwise execution