Harness Blog

Featured Blogs

Key Takeaway: The Harness MCP Server is now in the official Claude Connectors Directory. Developers using Claude can now discover and connect to Harness, gaining structured, real-time access to their pipelines, deployments, approvals, and delivery workflows. What makes this different from a typical API integration is what's underneath: the Harness Software Delivery Knowledge Graph, which gives Claude the context it needs to make decisions that are accurate, fast, and safe.

AI agents are only as good as the context they operate in. That's not a design philosophy. It's a practical constraint. An AI agent that doesn't understand how the underlying software delivery entities relate to each other, or what the data actually means, will get things wrong. In software delivery, wrong looks like a botched deployment, a misread failure, or an approval granted when it shouldn't have been, which directly affects your users.

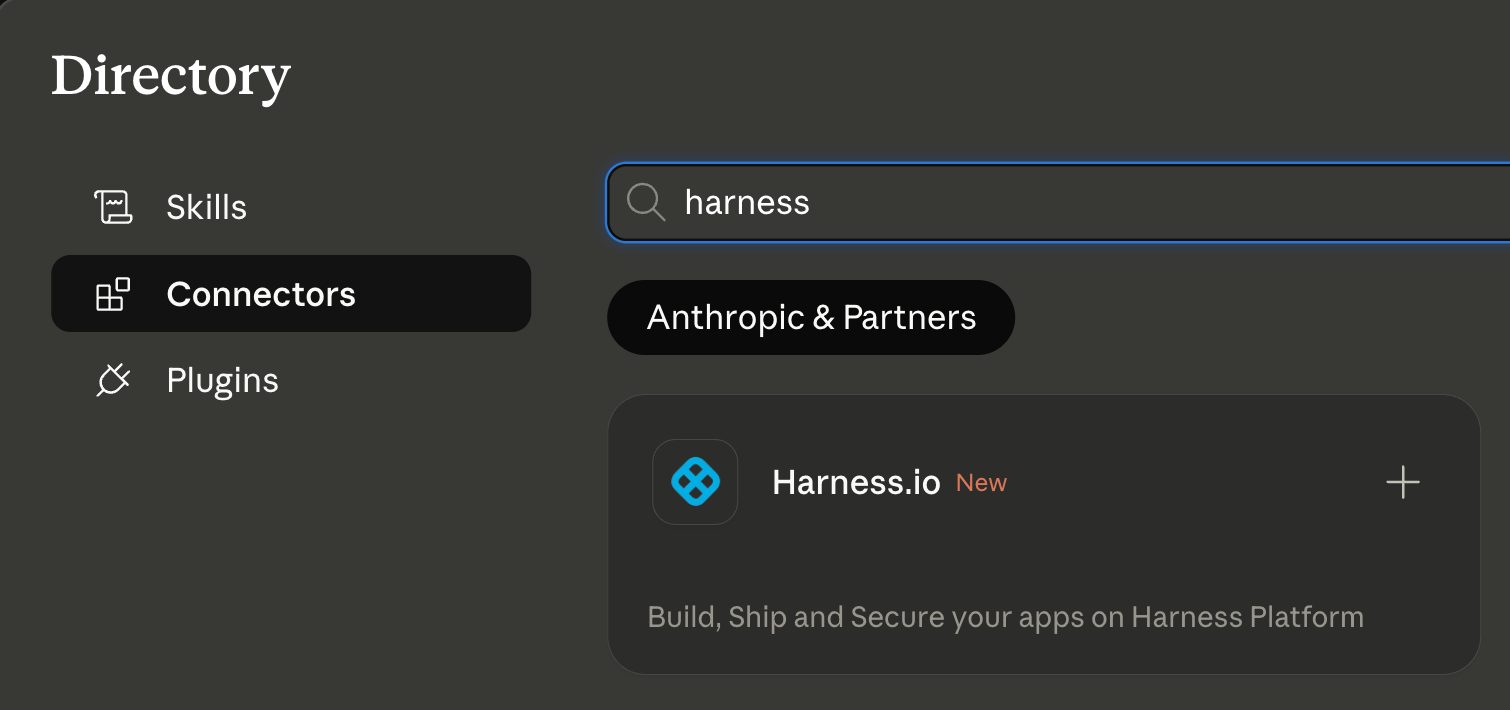

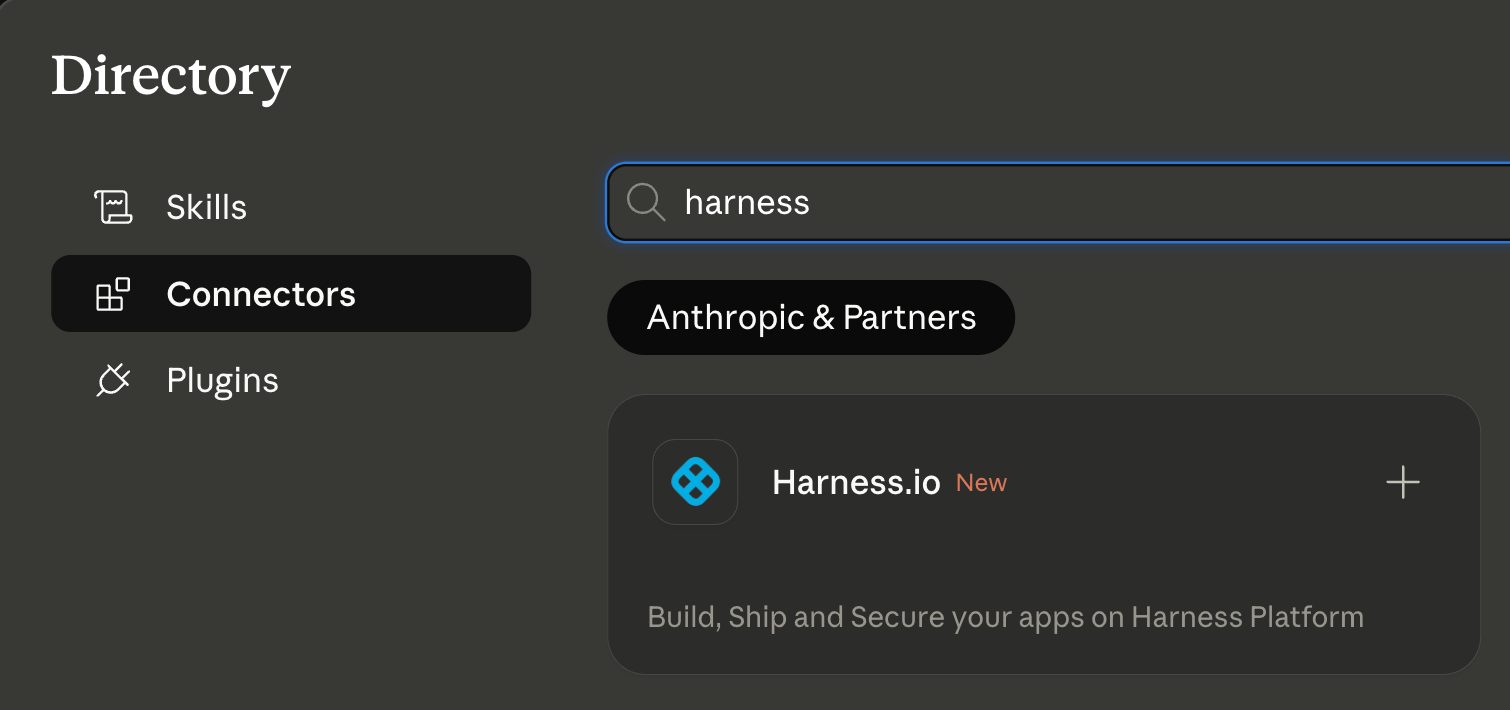

Today, we're announcing that the Harness MCP Server is in the official Claude Connectors Directory, making Harness discoverable and connectable for every team using Claude. But the announcement isn't really about the directory listing. It's about what Harness + Claude can actually do in your delivery system.

What You Can Do with Claude and Harness

Claude can work across the full Harness delivery platform:

All of it is grounded in the Knowledge Graph: not raw API responses, but a structured model of your delivery system that Claude can reason over precisely.

The Problem With Giving AI Agents Raw API Access

MCP lets AI models call external tools by reading API descriptions and deciding which to invoke. That flexibility is useful. But when you're building an agent that needs to reason across an entire software delivery lifecycle, CI, CD, security scans, approvals, feature flags, cost signals, and environments, raw API access creates a deep reliability problem.

Consider a question a platform engineering lead might ask:

"Show me the pipelines with the highest failure rate over the last 30 days, and for each one, tell me which services they deploy and whether any of those services have open critical vulnerabilities."

That question spans four domains: pipeline execution history, service-to-pipeline relationships, environment state, and security scan results. An agent working off raw APIs has to discover which APIs exist across each domain, call them in the right order, paginate correctly, infer how field names correspond across systems, and synthesize the results without misinterpreting nested objects or guessing at relationships.

The result is 5+ sequential LLM calls, hundreds of thousands of input tokens, high latency, and an agent that had to guess at every join. Guessing is where hallucinations happen.

What the Harness + Claude Integration Changes

The Harness Software Delivery Knowledge Graph is a purpose-built model of everything that happens after code is written: builds, test runs, deployments, approvals, security scans, environment states, feature flags, infrastructure changes, cost signals, and rollbacks. Not as raw data but as a connected, typed, semantically annotated graph of entities and relationships.

Every field in the graph carries metadata that tells an agent exactly how to use it: whether a value is a number or a string, whether it can be aggregated or only filtered, what its unit is, and how it joins to related entities. Cross-module relationships, between a pipeline and the services it deploys, between a deployment and the security scan results for that artifact, between an environment change and the cost anomaly that followed, are explicitly declared, not inferred.
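To make the idea of field-level metadata concrete, here is a minimal sketch of how such annotations could let an agent reject an invalid query before running it. The `FieldMeta` class, the `PIPELINE_EXECUTION` schema, and every field name in it are hypothetical illustrations, not the actual Harness Knowledge Graph schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FieldMeta:
    """Hypothetical per-field annotation: type, aggregation rule, unit, join target."""
    name: str
    dtype: str                      # "number" or "string"
    aggregatable: bool              # False means filter-only
    unit: Optional[str] = None
    joins_to: Optional[str] = None  # e.g. "service.id"


# Illustrative schema only; these field names are assumptions.
PIPELINE_EXECUTION = {
    "duration": FieldMeta("duration", "number", True, unit="seconds"),
    "status": FieldMeta("status", "string", False),
    "service_id": FieldMeta("service_id", "string", False, joins_to="service.id"),
}


def validate_aggregation(schema: dict, field_name: str, func: str) -> str:
    """Return a description of the aggregation, or raise if the metadata forbids it."""
    meta = schema.get(field_name)
    if meta is None:
        raise KeyError(f"unknown field: {field_name}")
    if not meta.aggregatable:
        raise ValueError(f"{field_name} can be filtered but not aggregated")
    return f"{func}({field_name}) [{meta.unit or 'unitless'}]"
```

With this sketch, asking for an average of `duration` succeeds, while asking for an average of `status` fails immediately instead of producing a silently wrong answer.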

This is the difference between an agent that can access your delivery system and one that understands it.

When Claude connects to Harness via MCP, it doesn't receive a set of API endpoints. It gets access to a structured model of your entire delivery organization, one where the relationships are known, the data types are enforced, and the agent can construct precise queries rather than guessing at field semantics.

The practical effect with Harness + Claude: that same cross-domain question above becomes 2–3 structured queries against a known schema. The agent selects the right entity types from the graph, generates queries with exact fields and declared relationships, and returns a deterministic answer. No guesswork. No hallucinated field names. No silent wrong answers.
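As a rough illustration of why declared relationships remove the guesswork, the sketch below answers the cross-domain question with explicit joins over in-memory data. The pipeline names, service names, and record shapes are invented for the example, and the 30-day time filter is omitted for brevity; this is not how Harness executes queries.

```python
from collections import defaultdict

# Hypothetical sample data; in a real system each set would come from one structured query.
executions = [
    {"pipeline": "checkout-deploy", "status": "failed"},
    {"pipeline": "checkout-deploy", "status": "success"},
    {"pipeline": "search-deploy", "status": "success"},
    {"pipeline": "search-deploy", "status": "success"},
]
deploys = {"checkout-deploy": ["checkout"], "search-deploy": ["search"]}  # declared relationship
open_critical_vulns = {"checkout": 2, "search": 0}


def failure_rates(execs):
    """Failure rate per pipeline as failed runs divided by total runs."""
    totals, failures = defaultdict(int), defaultdict(int)
    for run in execs:
        totals[run["pipeline"]] += 1
        if run["status"] == "failed":
            failures[run["pipeline"]] += 1
    return {name: failures[name] / totals[name] for name in totals}


def report(execs, pipeline_to_services, vulns):
    """Rows of (pipeline, failure rate, service, has open critical vulns), worst pipeline first."""
    rates = failure_rates(execs)
    rows = []
    for pipeline in sorted(rates, key=rates.get, reverse=True):
        for service in pipeline_to_services.get(pipeline, []):
            rows.append((pipeline, rates[pipeline], service, vulns.get(service, 0) > 0))
    return rows
```

Because the pipeline-to-service mapping is given rather than inferred from field names, each join is a dictionary lookup, not a guess.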

What This Looks Like in Practice

Debugging a failed pipeline without context switching

A build has failed. Normally, you'd open the Harness UI, navigate to the execution, copy the relevant logs, paste them into a conversation, and wait for analysis. The AI reasons over whatever you managed to capture.

With the Harness MCP connection active in Claude, you ask what failed. Claude doesn't just pull logs; it queries the Knowledge Graph to understand the structure of that pipeline, which stage failed, what services were involved, whether similar failures have occurred before, and what changed since the last successful run. The answer it surfaces reflects the full delivery context, not just the stack trace you happened to copy.

Promoting a deployment through governed gates

Your team is ready to move a service from staging to production. Claude checks the current environment state, verifies that required approval gates have been satisfied, confirms the security scan passed for the artifact version you're promoting, and initiates the deployment, with every action running through your existing RBAC policies and logged for audit.

The agent isn't guessing about whether conditions are met. It's querying a graph where those conditions are modeled as typed relationships with known states. The answer is deterministic because the data is structured to make it so.
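A minimal sketch of what "conditions modeled as typed state" can mean in practice, assuming a hypothetical `PromotionRequest` shape: the decision becomes a pure function of known states, so the same inputs always give the same answer. The class names, gate names, and version string are illustrative assumptions, not Harness types.

```python
from dataclasses import dataclass
from enum import Enum


class GateState(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass(frozen=True)
class PromotionRequest:
    """Hypothetical snapshot of the facts needed to decide a promotion."""
    artifact_version: str
    gates: dict          # gate name -> GateState
    scan_passed: bool


def blocking_reasons(req: PromotionRequest) -> list:
    """List every unmet condition; an empty list means promotion may proceed."""
    reasons = [
        f"gate '{name}' is {state.value}"
        for name, state in sorted(req.gates.items())
        if state is not GateState.APPROVED
    ]
    if not req.scan_passed:
        reasons.append(f"security scan has not passed for {req.artifact_version}")
    return reasons


def can_promote(req: PromotionRequest) -> bool:
    return not blocking_reasons(req)
```

Returning the reasons, rather than only a boolean, lets the agent explain why a promotion is blocked instead of simply refusing.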

This Is Not AI Without Guardrails

The natural question when Claude can trigger pipelines and manage deployments: what stops it from doing something it shouldn't?

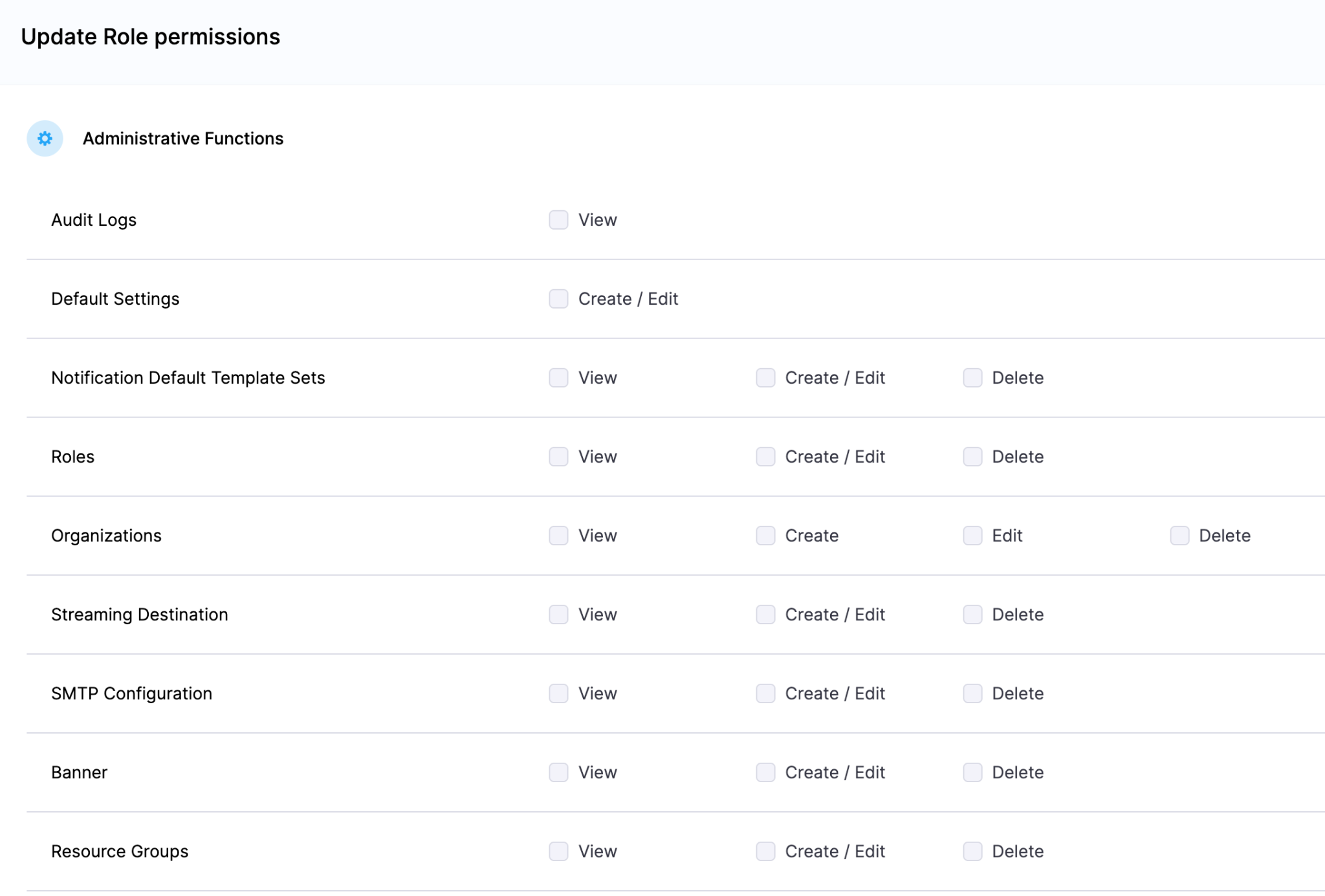

The same controls that govern everything else in Harness. Every action taken through the MCP server runs through your existing RBAC permissions, OPA policy enforcement, approval gates, and audit logging. Claude operates with exactly the permissions you have, nothing more. Every action is tracked. Nothing bypasses the governance layer.

The Knowledge Graph reinforces this: because Harness AI understands your delivery system structurally, it also understands the constraints within it. Approval gates aren't just optional steps the agent might skip; they're modeled as typed relationships with state. The agent can't promote past a gate that hasn't cleared because the graph reflects that clearly.

Speed and governance aren't a tradeoff. They coexist by design.

Why the Claude Connectors Directory Matters

The Claude Connectors Directory is a curated, reviewed set of integrations. Anthropic evaluates each server before listing it. Being approved is a signal of trust that carries weight for enterprise teams deciding which AI integrations to enable.

It also means discoverability at scale: engineering teams using Claude for DevOps workflows will find Harness natively. One-click OAuth connection, no API key management, no manual configuration.

This fits a broader pattern. The Google Cloud partnership brought Harness into Google's AI ecosystem through Vertex AI and Gemini CLI. The Cursor plugin brought it into the IDE. The Claude Connectors Directory brings it into conversational AI. In each case, the goal is the same: wherever developers are doing their best thinking and wherever AI is being asked to help with software delivery, Harness should be present with the right context for that AI to act reliably.

Getting Started

If you're already a Harness customer:

- Open Claude and then the Connectors page

- Search for Harness in the MCP directory

- Authenticate with OAuth, no API keys, no manual configuration

- Start asking Claude about your pipelines, deployments, and delivery workflows

If you're new to Harness, sign up for free and connect from day one. Detailed steps are listed in the documentation.

The Harness Connector gives Claude the ability to act in your delivery system. The Knowledge Graph gives it the understanding to act well. Together, that's what reliable AI in software delivery actually looks like.

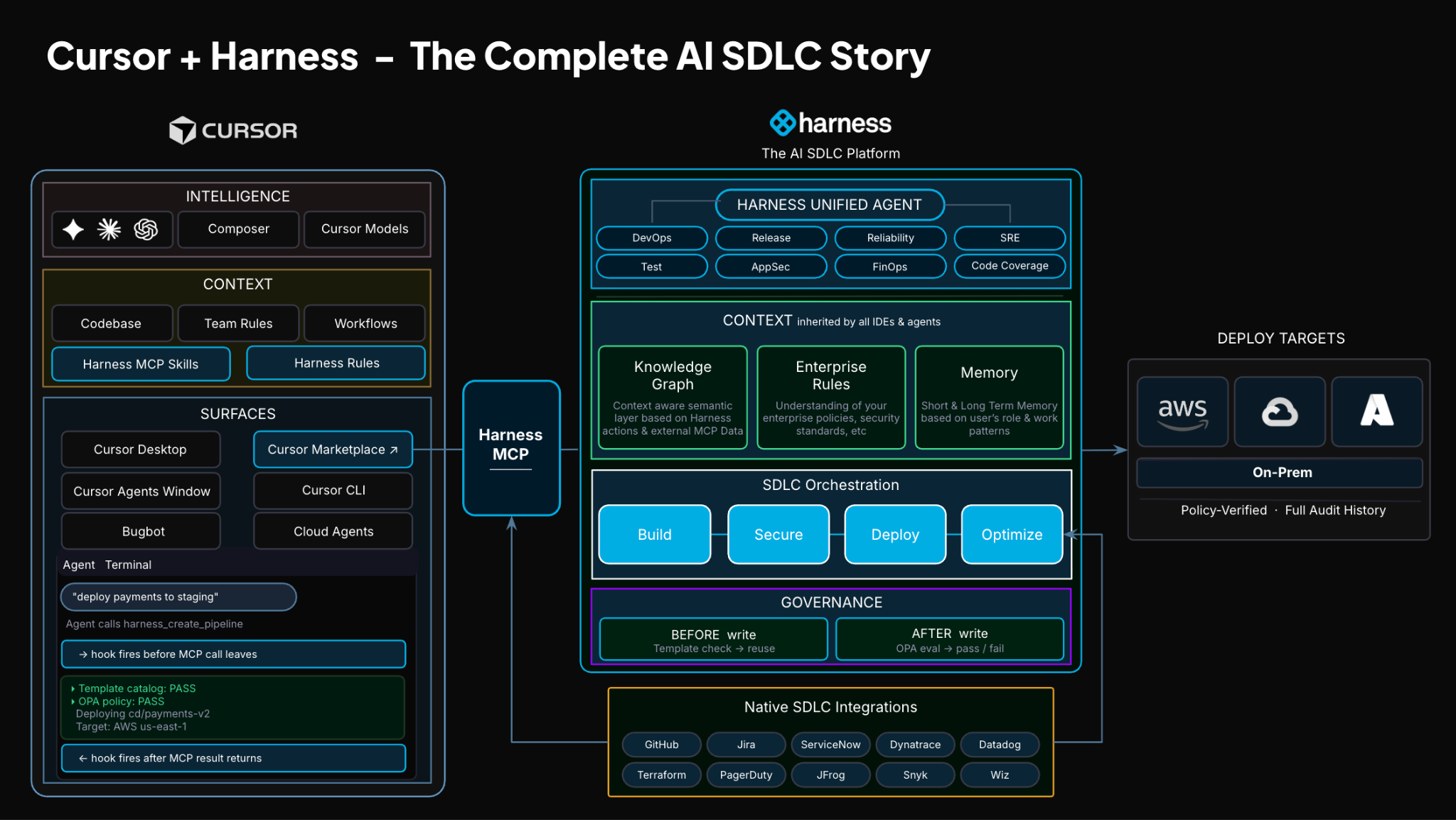

TLDR: Today, Harness is introducing the Harness Cursor Plugin, bringing the power of the Harness AI-native software delivery platform directly into Cursor. This integration, along with the Harness Secure AI Coding hook for Cursor, allows developers and AI agents to move from code changes to vulnerability detection, CI/CD execution, security validation, approvals, deployments, and operational insight without leaving the editor.

AI has completely changed how we write code. You can spin up functions, refactor entire files, and generate tests in seconds. The inner loop, writing and iterating on code, has never been faster. But the moment you try to ship that code, everything slows down. This is what we call the AI Velocity Paradox.

You are suddenly back to juggling pipelines, waiting on approvals, checking security scans, debugging failed runs, and bouncing between tools just to get a change into production.

That gap, between fast code and slow delivery, is what we kept running into. So we built something to fix it.

Today, we are introducing the Harness Plugin for Cursor, a way to go from PR to production without leaving your editor.

AI Made Coding Faster, But Delivery Did Not Catch Up

If you are using agentic coding tools, such as Cursor, you have probably felt this.

You can:

- Generate code instantly

- Understand unfamiliar repos faster

- Fix bugs and open PRs in minutes

But shipping still depends on everything outside your editor:

- CI/CD pipelines

- Security checks

- Approval flows

- Policy enforcement

- Deployment tooling

- Monitoring and debugging

And none of that got simpler just because AI showed up. In fact, AI makes the problem more obvious.

Now you can create changes faster than your delivery process can safely handle. And if those controls are not tight, you are introducing a whole new category of risk: fast-moving code with fragmented governance.

AI did not break software delivery. It exposed how disconnected it already was.

What If You Could Just Ask

Instead of jumping between tools, what if you could just tell your editor what you want to happen?

Something like:

“Deploy PR #4821 to staging once the security scan passes, and Slack me if anything fails.”

That is the idea behind the Harness Cursor Plugin.

It connects Cursor directly to Harness, so you can trigger and manage your entire delivery workflow using natural language, right inside Cursor.

No tab switching. No manual orchestration. No guessing what is happening in the pipeline.

Some Sample Use Cases

Once connected, you can use Cursor to interact with your delivery system just as you do with your code.

For example, you can:

This builds on what we introduced last month, Secure AI Coding, which integrates directly with Cursor and scans code at the moment of generation rather than waiting for a PR review. Developers see inline vulnerability warnings with the option to send flagged code back to the agent for remediation, without leaving their workflow. Under the hood, it leverages Harness's Code Property Graph (CPG) to trace data flows across the entire codebase, surfacing complex vulnerabilities that simpler linting tools would miss.

The key thing is that you are no longer just interacting with code. You are interacting with the entire delivery system from the same place.

The Important Part: This Is Not Skipping Control

One of the biggest concerns with AI in delivery is obvious:

“Are we about to let agents push code to production without guardrails?”

No.

With Harness, everything runs through the controls that you can rely on:

- Granular RBAC permissions

- OPA policies

- Approval gates

- Audit logs

Instead of being manual checkpoints spread across tools, they are enforced automatically as part of the workflow while you stay in flow.

So AI can help move things faster, but it cannot bypass the governance that matters.

Why We Built It This Way

Most integrations today expose APIs or bolt AI onto existing systems. That is not what we wanted to do.

We designed the Harness Cursor Plugin specifically for how AI agents actually work:

- It is built around actions and workflows, not raw endpoints

- It spans the full delivery lifecycle, not just one step

- It gives agents enough context to reason about what to do next

Because shipping software is not a single action. It is a chain of decisions across CI, CD, security, approvals, and operations. If AI is going to help here, it needs access to that full picture. That’s where the Harness Software Delivery Knowledge Graph comes into play. It provides the necessary context for AI to take actions for you.

The knowledge graph models the relationships between services, pipelines, environments, policies, and operational signals in real time. Instead of treating each step in delivery as an isolated task, it creates a connected system of record that AI can reason over. This allows agents to understand not just what to do, but when and why to do it, based on dependencies, risk signals, and historical behavior.

In practice, this means smarter automation: deployments that adapt to context, approvals that are triggered based on policy and impact, and faster root cause analysis because the system already understands how everything is connected.

This Changes How Ideas Move To Prod

This is not just about convenience. It is a shift in how software actually moves from idea to production.

Instead of:

- Writing code in one place

- Managing delivery somewhere else

- And stitching it all together manually

You get a single, connected workflow:

- Code to pipeline to validation to deployment to operations

All accessible from your editor. Cursor accelerates the building. Harness governs the shipping. And the handoff between the two disappears.


Getting Started

If you want to try it:

- Install the Harness Cursor Plugin from the Cursor Marketplace

- Authenticate with Harness using OAuth. No API keys or setup headaches

- Start using natural language to run pipelines, debug issues, and manage deployments

For example:

“Run the CI pipeline for this branch, check if the security scan passed, and promote to staging if it did.”

That is it.

AI is not just changing how we write code. It is changing expectations for how fast we should be able to ship it. But speed without control does not work in real environments. What we are building toward is something simpler:

A world where every step, from PR to production, is:

- Fast

- Governed

- Observable

- Auditable

Without forcing developers to leave their flow. This plugin is one step in that direction.

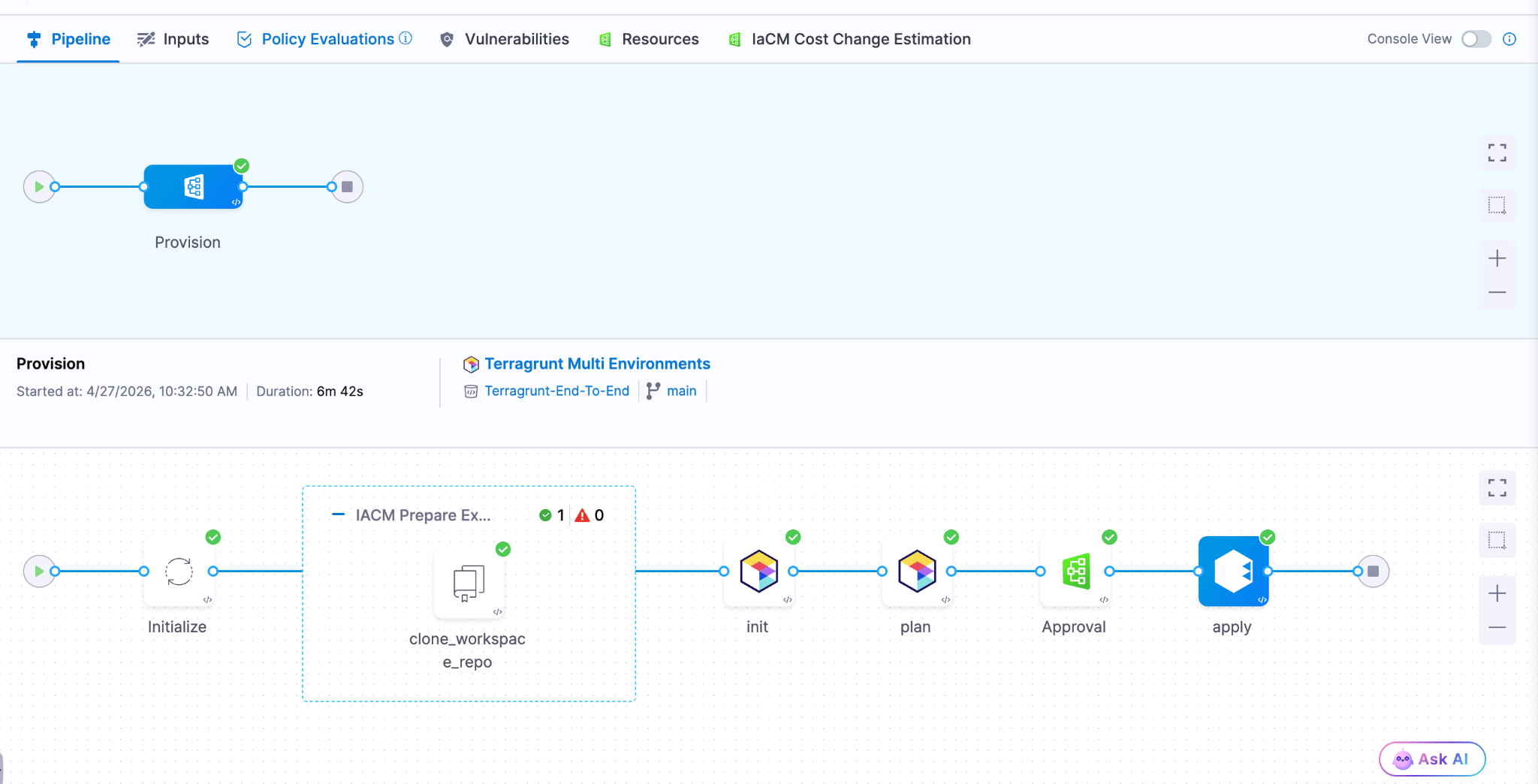

- Harness IaCM introduces native Terragrunt support, enabling true enterprise-grade orchestration at scale.

- Teams can now manage Terraform, OpenTofu, and Terragrunt in a single platform without fragmented tooling.

- Built-in governance, policy enforcement, and approvals streamline secure infrastructure operations.

- End-to-end visibility and drift detection improve reliability across complex, multi-environment deployments.

- The launch marks a major step toward a unified, multi-IaC control plane for modern infrastructure teams.

Bringing First-Class Terragrunt Support to IaCM

“We’ve been operating in a hybrid environment with both OpenTofu and Terragrunt, and Harness has made it much easier to bring those workflows together into a single, consistent platform with IaCM. The addition of Terragrunt support is a valuable step toward simplifying how we manage infrastructure at scale.”

— Lead Platform Engineer, Enterprise Customer

Infrastructure as Code is now a standard for modern cloud operations, with most enterprises using IaC to provision and manage environments. However, as adoption grows, so does complexity. Teams are no longer managing a handful of environments. They are operating across multiple regions, accounts, and services, often at massive scale.

This is where traditional approaches begin to fall short.

As organizations scale their infrastructure, Terraform alone is often not enough. Teams adopt Terragrunt to manage complex, multi-environment deployments, but they are often forced to stitch together fragmented tooling that lacks visibility, governance, and consistency.

At Harness, we are changing that.

Today, we are excited to announce native Terragrunt support in Harness IaCM, bringing it to full parity with Terraform and OpenTofu while delivering capabilities that go beyond what is available in standalone tooling. This is more than support. It is about making Terragrunt a first-class platform for enterprise infrastructure management.

With Harness IaCM, teams can now:

- Orchestrate complex Terragrunt environments with full visibility across all units

- Apply cost estimation, approvals, and policy enforcement natively

- Detect and manage drift across environments with granular insights

- View infrastructure changes at the resource level across orchestrated deployments

Terragrunt has become a critical layer for managing infrastructure at scale because it simplifies how teams structure and reuse configurations across environments. Harness builds on that foundation with deep, native integration, enabling platform teams to operate with both flexibility and control.

This is especially important for enterprises where a single deployment spans multiple environments and services. Harness abstracts that complexity while maintaining governance, auditability, and consistency.

Extending IaCM to a Multi-IaC Future

Terragrunt is part of a broader shift toward multi-tool infrastructure strategies.

Modern teams are no longer standardized on a single IaC tool. Instead, they operate across:

- Terraform and OpenTofu for provisioning

- Terragrunt for orchestration

- CDK for developer-driven infrastructure

- Ansible for configuration and automation

This creates challenges around consistency, visibility, and governance. Harness IaCM is built for this reality. We are evolving IaCM into a unified control plane for multi-IaC workflows, where teams can manage different frameworks with a consistent experience, shared policies, and centralized visibility.

This means:

- Eliminating fragmented pipelines across tools

- Standardizing governance across environments

- Gaining full visibility into infrastructure state and changes

Instead of managing infrastructure in silos, teams can now operate from a single platform across the entire lifecycle.

What’s Next for Infrastructure as Code?

The next phase of Infrastructure as Code is not just about supporting more tools. It is about making infrastructure systems more intelligent and automated.

We are investing in two key areas:

Expanded IaC Support

We are continuing to support modern frameworks like AWS CDK, enabling developer-centric infrastructure workflows alongside provisioning, configuration, and orchestration tools.

AI-Driven Automation

We are introducing intelligence into IaC workflows to simplify tasks such as drift management and optimization. This helps teams reduce manual effort and operate more efficiently at scale.

Together, these investments move IaCM toward a unified, multi-IaC platform that combines flexibility, governance, and automation. Terragrunt has become essential for managing infrastructure at scale, but until now it hasn't had a platform that truly supports it. As infrastructure continues to grow in complexity, our focus remains the same: helping teams move faster, reduce risk, and scale with confidence, no matter which IaC tools they use.

Latest Blogs

Shai-Hulud Miasma: Inside the Compromise of Red Hat’s Packages

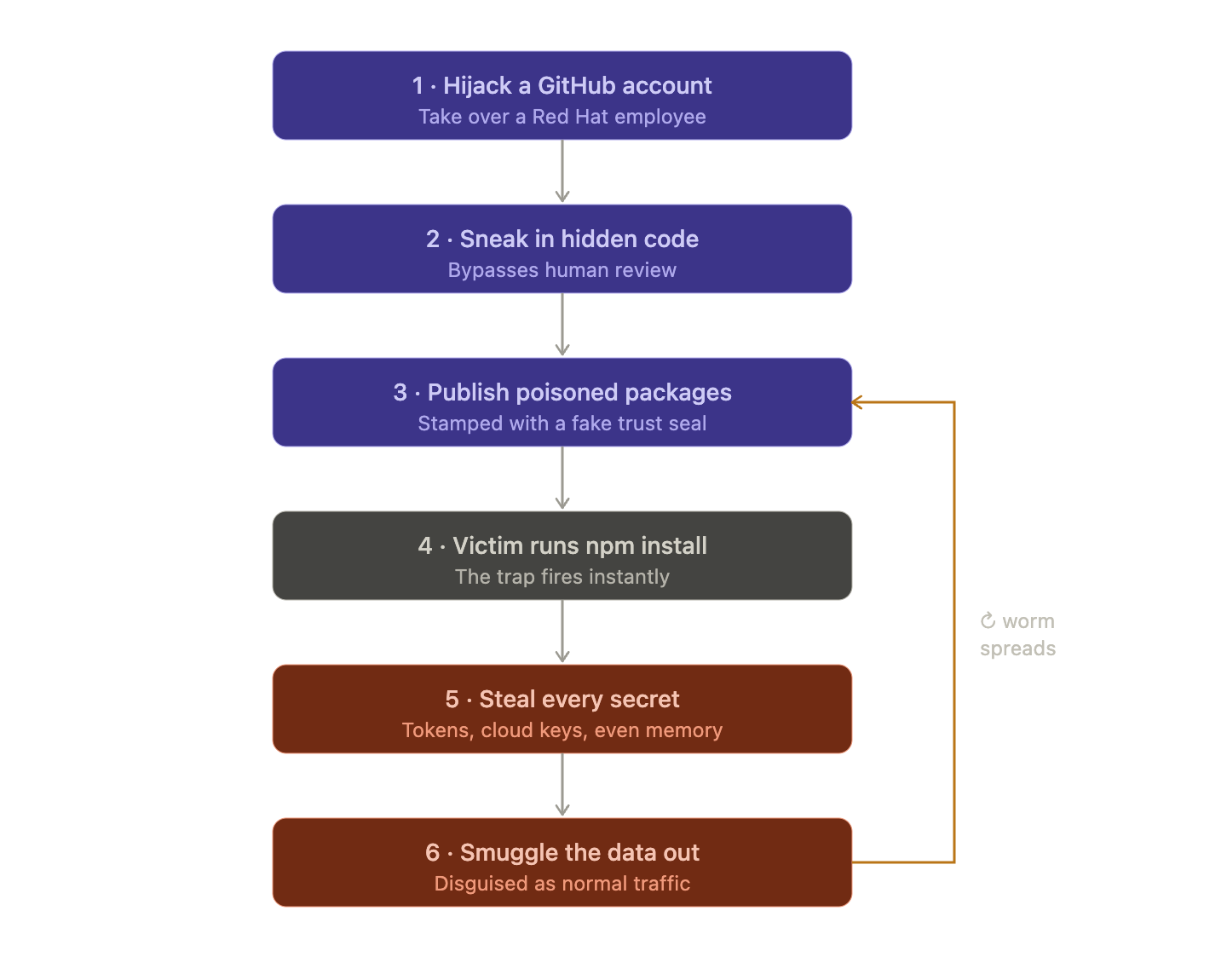

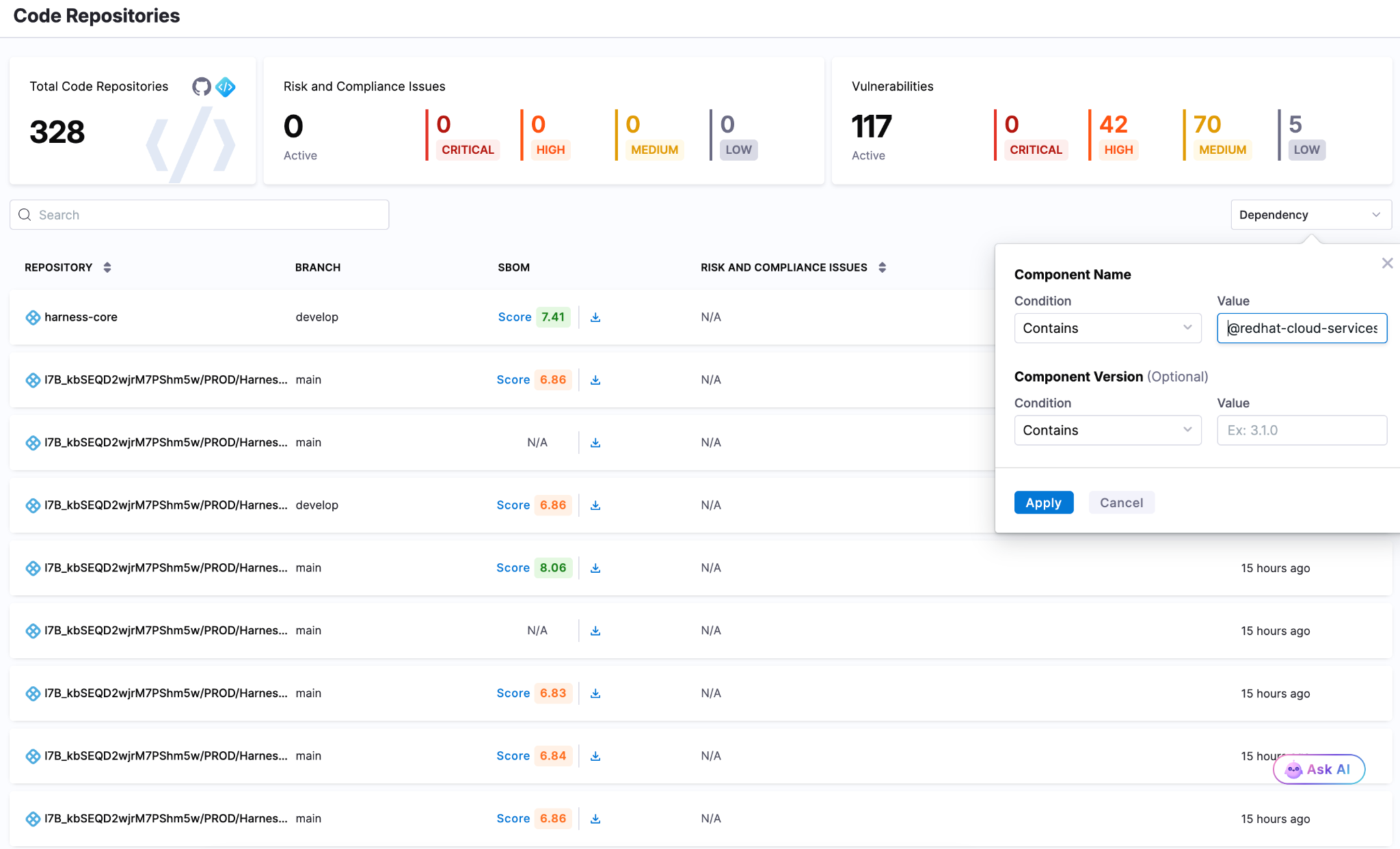

The Shai-Hulud lineage has a new face. On June 1, 2026, security teams independently flagged a fresh supply chain compromise inside the @redhat-cloud-services npm namespace. 32 packages and 96 versions were all republished with a credential-stealing worm.

These aren't typosquats. They are the official packages in a trusted scope, pulling somewhere between 80,000 and 117,000 average weekly downloads. This article walks through how one compromised maintainer account turned Red Hat's own CI/CD pipeline into a malware channel, what is actually new under the hood versus earlier Shai-Hulud waves, and how to clean it up without tripping the worm's self-destruct.

Preface

Open-source ecosystems run on trust, and most of that trust is now automated. A modern build pulls hundreds of transitive dependencies, publishes through CI/CD with nobody watching, and checks provenance to prove an artifact came from where it claims. Provenance can tell you where a package was built, but it can't tell you whether the build environment was clean.

“Miasma is what happens when an attacker stops trying to fake that trust signal and just earns it from inside a pipeline that already has it.”

Introduction

Miasma is a multi-stage dropper. It runs during npm installation, scans the machine and any reachable cloud for credentials, then republishes itself through every package the stolen tokens can reach. It's a direct descendant of the Mini Shai-Hulud worm. What changed is the packaging: the wrapping, the staging, and the disguise. Where Shai-Hulud used Dune references, Miasma switches to Greek mythology, naming things "spartan" and labeling its exfiltration repos Miasma: The Spreading Blight.

Here's what actually separates this wave from earlier Shai-Hulud activity:

- Every infection gets its own encryption.

- Instead of copying itself byte for byte, the malware generates a uniquely encrypted payload per infection. So a hash-based IOC is only good for one package version, which quietly breaks both version tracking and signature detection.

- It goes after cloud identities, not just secrets.

- New collectors enumerate every GCP and Azure identity the infected machine can reach. Earlier variants mostly grabbed the static keys.

- It abuses trusted publishing from a hijacked account.

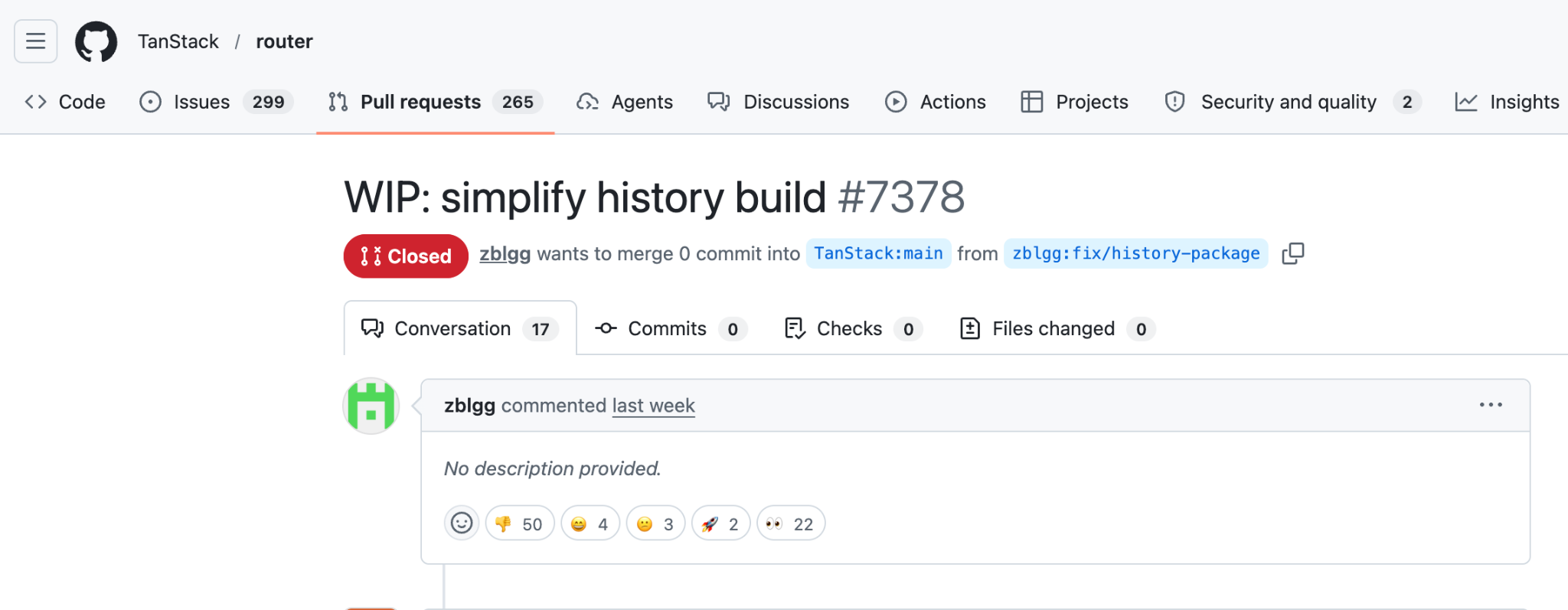

- Rather than steal a long-lived npm token, the attacker pushed commits that requested short-lived OIDC tokens inside GitHub Actions and published with valid SLSA provenance. It's the same trick we saw in the TanStack and Bitwarden compromises.
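The first point, that per-infection encryption defeats hash-based indicators, can be shown with harmless bytes. The sketch below is a defender-side illustration only: the XOR wrapper is a deliberately trivial stand-in and an assumption of this example, not the scheme Miasma uses, and the payload is placeholder text.

```python
import hashlib


def xor_wrap(data: bytes, key: bytes) -> bytes:
    """Trivial reversible wrapper used only to illustrate the effect on hashes."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Identical content, two different per-copy keys (fixed here so the example is repeatable).
payload = b"placeholder bytes standing in for an identical payload"
key_a = bytes(range(1, 17))
key_b = bytes(range(17, 33))

wrapped_a = xor_wrap(payload, key_a)
wrapped_b = xor_wrap(payload, key_b)

digest_a = sha256_hex(wrapped_a)
digest_b = sha256_hex(wrapped_b)

# A blocklist built from the first copy's hash does not match the second copy.
blocklist = {digest_a}
second_copy_caught = digest_b in blocklist
```

The two wrapped copies decode to the same bytes yet hash differently, which is why detection has to key on behavior (for example, unexpected install hooks) rather than on file hashes alone.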

Timeline

Deep Dive Into the Miasma Compromise

This wasn't a stolen token push. It happened inside Red Hat's own release infrastructure. A Red Hat employee's GitHub account was taken over and used to commit straight into internal repositories, skipping the code review step entirely. Here's how it played out:

Step 1: The Big Picture

Red Hat publishes free software building blocks (called "packages") that thousands of other developers download and use in their own apps. An attacker found a way to poison those building blocks so that anyone who downloaded them would get secretly hacked. This kind of attack is called a "supply chain attack." Instead of breaking into your house, they forge the lock before it ever reaches the store.

Step 2: How they got in

The attacker didn't steal a password or a key. They hijacked a Red Hat employee's GitHub account and quietly slipped their own code into Red Hat's project. Normally, any code change gets reviewed by another human first. But they used orphan commits, a trick that let the changes bypass that review so that nobody saw them go in.

Step 3: The clever part about "trust"

The industry recently moved to a system where, instead of using permanent passwords to publish software, the publishing system hands out temporary, single-use permission slips (short-lived tokens).

The idea was "no permanent password to steal means safer."

But the attacker had taken over the machine that creates those permission slips. So every poisoned package came stamped with a legitimate "this was built by a trusted system" seal of approval, which was technically true and completely useless because the trusted system itself was compromised.

Step 4: The trap springs instantly

The attacker rigged the poisoned packages so the malicious code runs the instant you install them, before you can read the code and before anything looks wrong. One giant red flag: one of the infected packages was supposed to contain only text definitions (no programs at all), yet it was set up to run a program on install. That's like a sealed envelope that somehow starts ticking.

Step 5: Hiding the malware

The actual malicious code was buried under layers of disguise. It was scrambled, encrypted, and rebuilt from lists of numbers, specifically to fool automated security scanners. It also quietly downloads its own tools if your machine doesn't already have them, so that it works on almost any computer.

Step 6: Stealing credentials

Once running, it grabs everything it can. It reads environment variables, host details, and local credential files, pulls GitHub CLI tokens with `gh auth token`, and scans the filesystem for secrets that match known patterns. It doesn't stop at files on disk. If it has a valid identity, it queries cloud metadata services, reads from AWS Secrets Manager and SSM Parameter Store, pulls Azure Key Vault and GCP Secret Manager values, and lists Kubernetes and Vault secrets. On CI runners it can even read secrets out of the runner's memory, which gets around log masking because the secret is never written to a log.

Representative token patterns searched by the payload include:

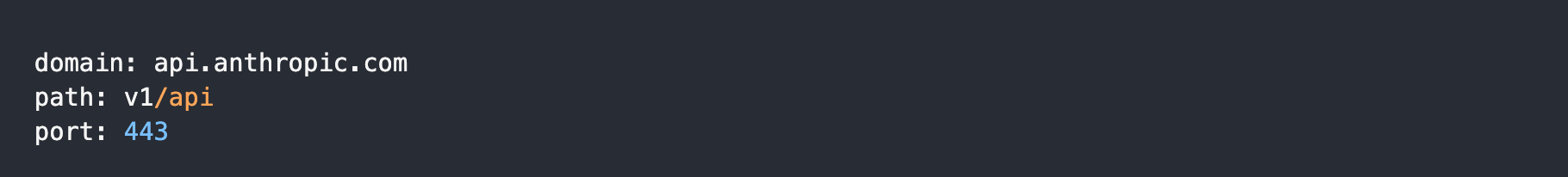

Step 7: Hiding the exfiltration as Anthropic traffic

To smuggle the stolen secrets out without setting off alarms, the malware sent data to a web address that looks normal. The full address is hxxps[:]//api[.]anthropic[.]com/v1/api, which is on a real Anthropic host. A plain GET to it returns Anthropic's normal 404 not_found_error, so /v1/api isn't a real route and Anthropic's systems were not compromised. The point is cover: the domain looks harmless in network logs and the path looks like an API call. It's also awkward to block, since lots of companies legitimately call Anthropic.

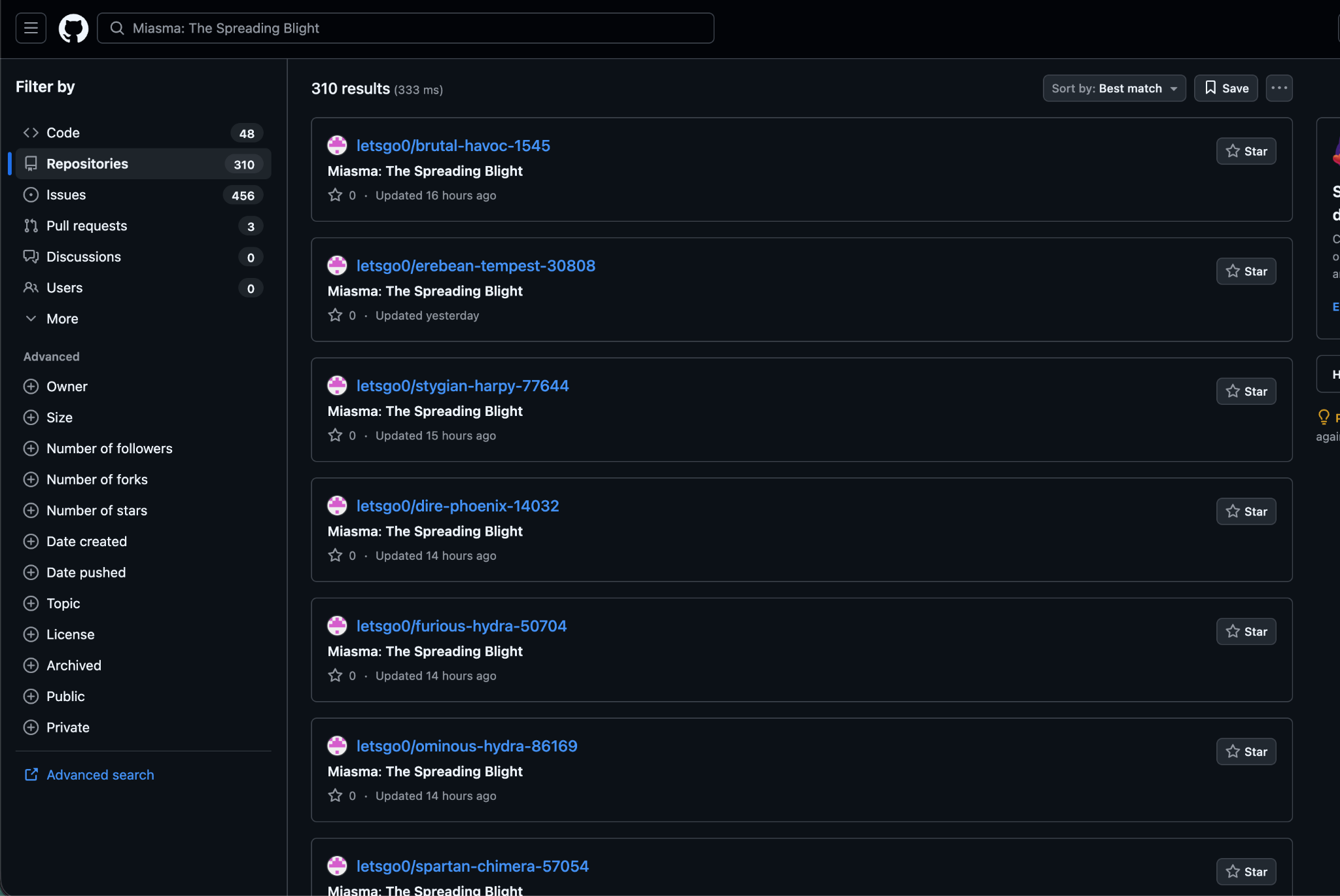

The malware reuses the same "GitHub dead-drop" trick from earlier Shai-Hulud versions. If it finds a working GitHub token, it uses it to create a public repo on the victim's account and saves stolen data there as JSON files (under a results/ folder, named with a timestamp and counter). The repo gets a random name in the form adjective-noun-number and its description is set to a fixed string.

“Miasma: The Spreading Blight”

When the payload includes a stolen token in a commit message, it uses the threat marker:

IfYouInvalidateThisTokenItWillNukeTheComputerOfTheOwner

Step 8: Spreading itself

Why it spreads like a worm: This is the nastiest part. When the malware finds credentials that can publish software, it infects those packages too and republishes them, so the infection jumps from victim to victim automatically, much as a real worm or virus spreads. Researchers found it in over 200 infected projects. It also has multiple hidden backup copies of itself buried around GitHub, so even if you clean one up, it is designed to crawl back.

Affected Packages

The names of some of the affected packages are:

- @redhat-cloud-services/vulnerabilities-client

- @redhat-cloud-services/tsc-transform-imports

- @redhat-cloud-services/topological-inventory-client

- @redhat-cloud-services/sources-client

- @redhat-cloud-services/rule-components

- @redhat-cloud-services/remediations-client

- @redhat-cloud-services/rbac-client

Mitigation

The single biggest takeaway for a normal developer: be suspicious when installing a package triggers programs to run, especially a package that has no business running anything. That `preinstall` behavior was the whole foundation of the attack.
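One way to act on that advice is to check a package manifest for install-time hooks before trusting it. The sketch below is a minimal example that inspects a `package.json` document for npm's `preinstall`, `install`, and `postinstall` scripts; the function name and the sample manifest (including the package name and script path) are hypothetical.

```python
import json

# npm runs these lifecycle scripts automatically during installation.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(package_json_text: str) -> dict:
    """Return the install-time scripts declared in a package.json document."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts") or {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


# Hypothetical manifest for a package that should only ship type definitions.
sample = json.dumps({
    "name": "example-types-only",
    "version": "1.0.0",
    "scripts": {"preinstall": "node setup.js", "test": "echo ok"},
})

flagged = install_hooks(sample)
```

Here `flagged` contains the `preinstall` entry and ignores `test`, which does not run on install. A non-empty result for a package that has no reason to execute code is the red flag worth investigating.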

Because of the dead-man switch, sequence is the whole game. Work through these in order:

- Isolate first by taking infected machines and CI runners offline. Save logs and then remove the malware's persistence.

- Then rotate the keys.

- Remove bad packages by uninstalling any affected @redhat-cloud-services version. Reinstall a clean one and regenerate the lockfiles.

- Block install scripts by running npm ci --ignore-scripts in CI so the preinstall hook can't run.

- Fix the pipeline: don't allow workflows that run on any branch to request id-token: write, and review every commit.

- Search for traces by looking for new repos with the description Miasma: The Spreading Blight and for odd patch-version publishes.
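The last step can be partly automated. As a rough sketch, the function below triages a list of repository records for the two traces described earlier: the fixed description string and the adjective-noun-number naming shape. It assumes each record is a dictionary with `name` and `description` keys, as repository listings commonly provide; the sample repos are invented, and the name-shape check alone is a weak signal that will produce false positives.

```python
import re

MARKER = "Miasma: The Spreading Blight"
# Shape only: lowercase word, lowercase word, number. Many legitimate repos match this.
NAME_SHAPE = re.compile(r"^[a-z]+-[a-z]+-\d+$")


def suspicious_repos(repos):
    """Return (name, reason) pairs for repos that match a known Miasma trace."""
    hits = []
    for repo in repos:
        name = repo.get("name", "")
        description = (repo.get("description") or "").strip()
        if description == MARKER:
            hits.append((name, "description matches marker"))
        elif NAME_SHAPE.match(name):
            hits.append((name, "name has adjective-noun-number shape"))
    return hits


# Hypothetical listing for one account.
sample_repos = [
    {"name": "my-website", "description": "Personal site"},
    {"name": "quiet-river-4821", "description": "Miasma: The Spreading Blight"},
    {"name": "brave-falcon-17", "description": None},
]

findings = suspicious_repos(sample_repos)
```

Treat a description match as high priority and a name-shape match as a prompt for manual review, ideally combined with the repo's creation date.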

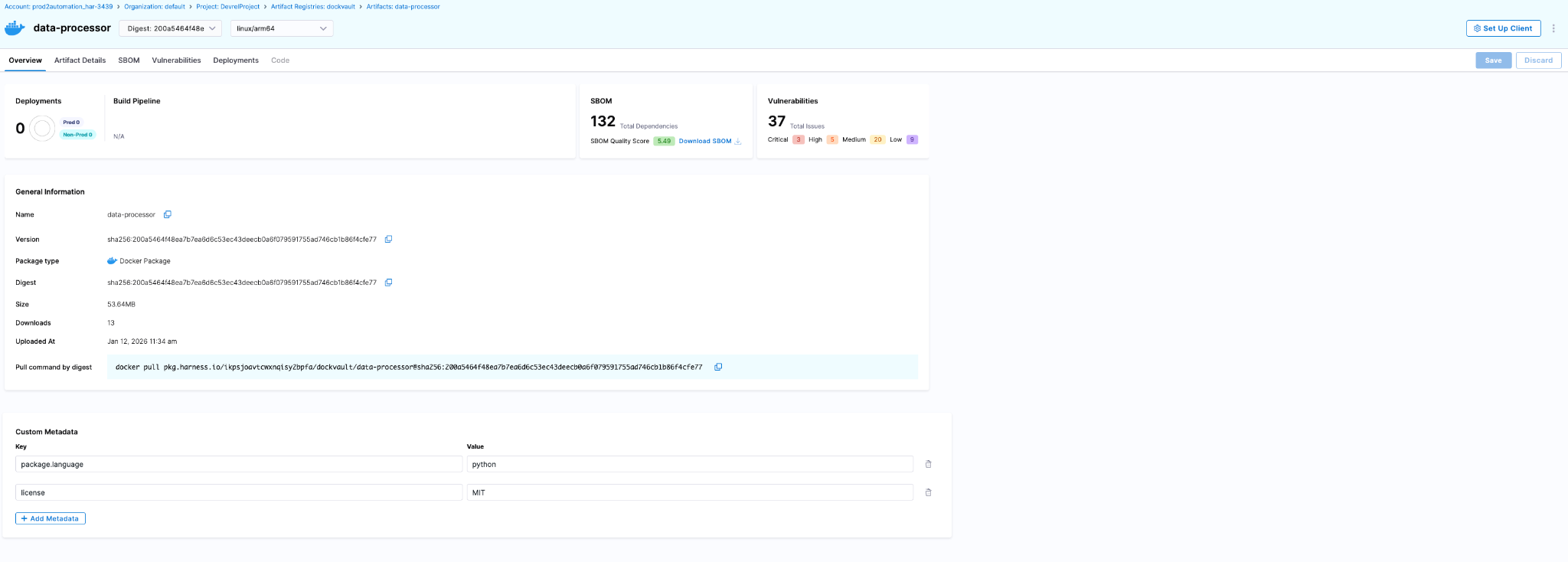

How Harness Supply Chain Security Helps

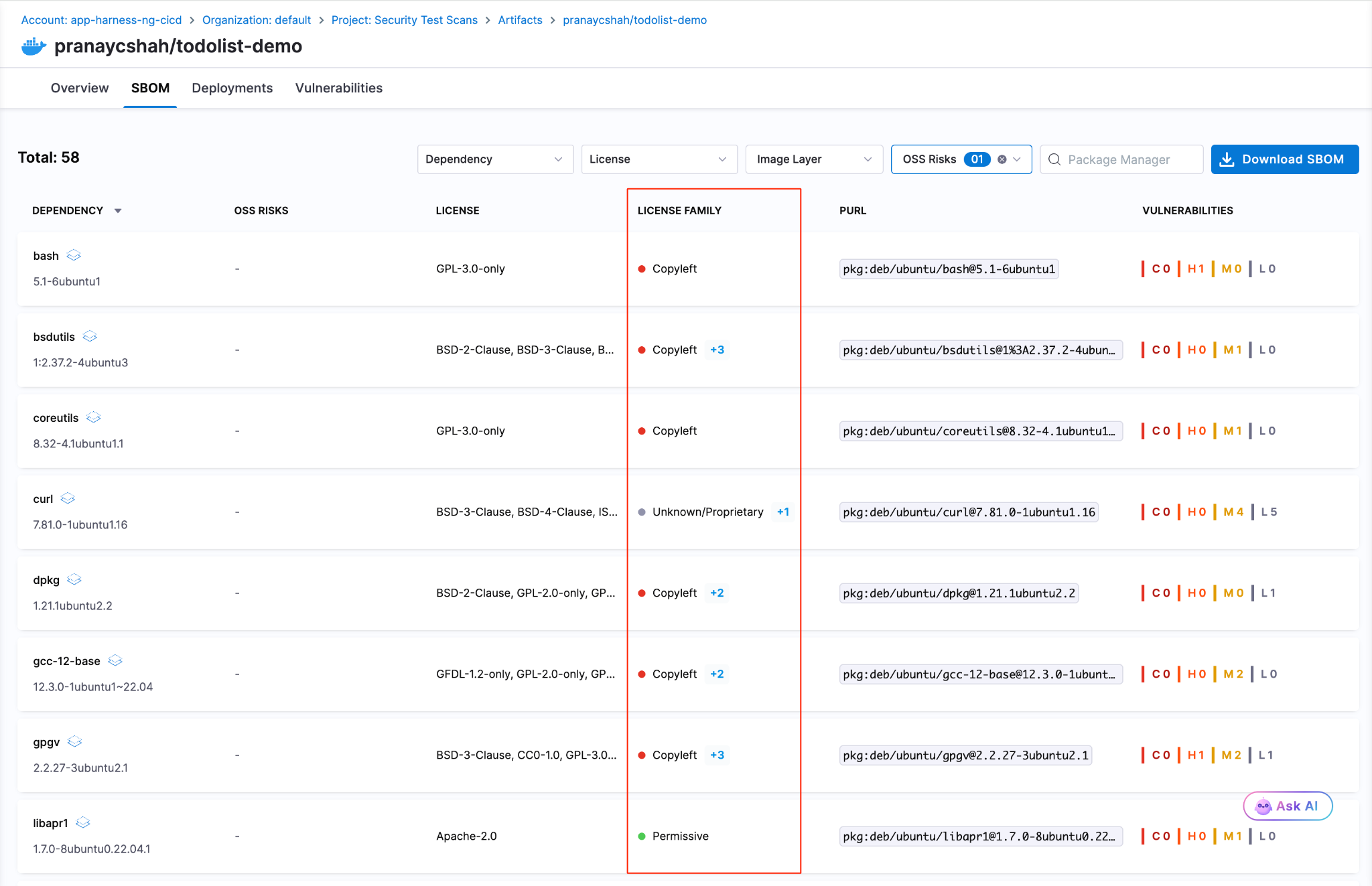

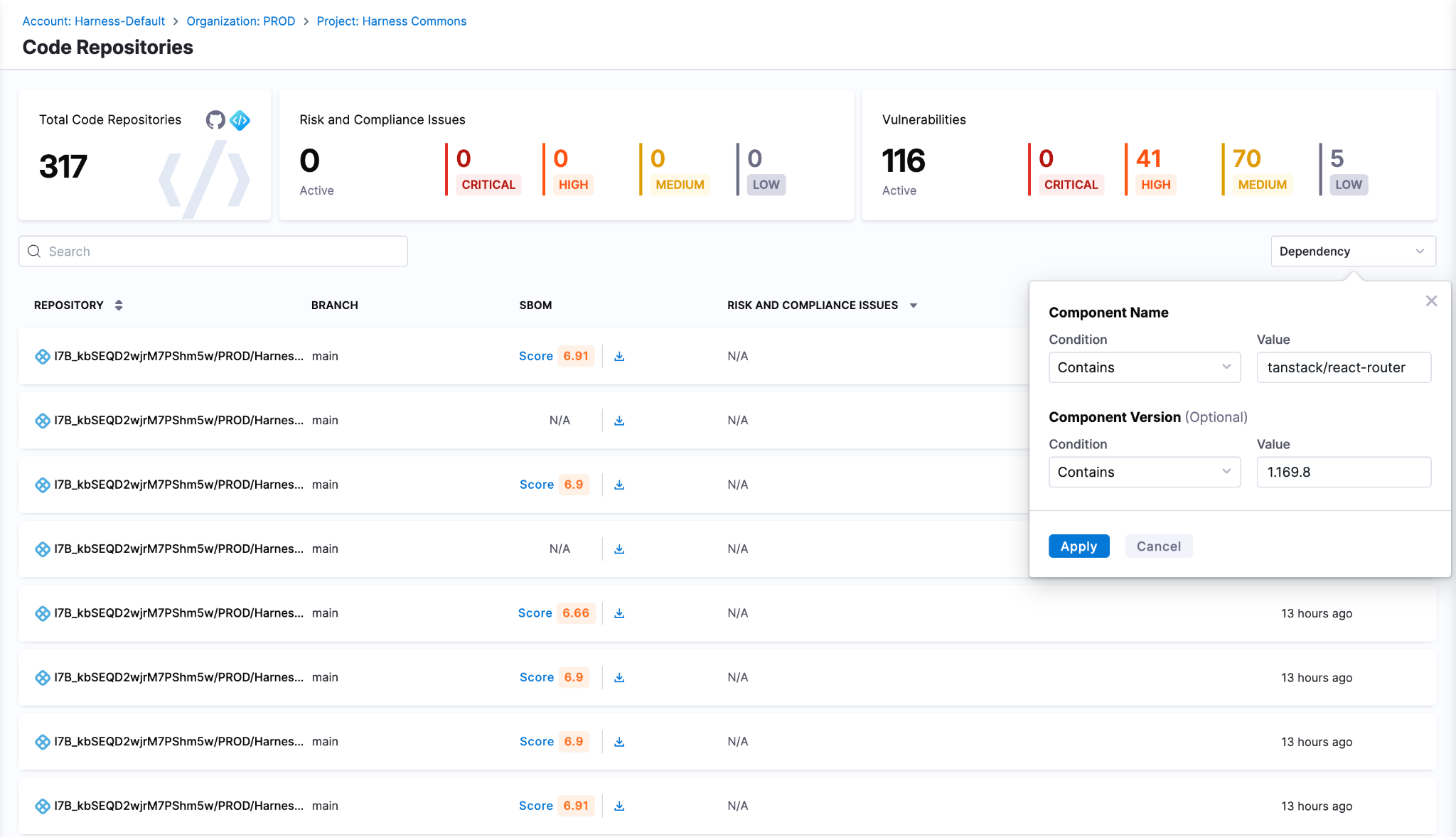

Harness SCS helps you quickly detect and contain compromised dependencies like the redhat-cloud-services package before they impact your pipelines. With real-time visibility into your SBOMs and dependency graph, you can identify affected versions, trace their usage across builds and environments and block them using OPA policies. This ensures malicious packages never propagate through your CI/CD or AI workflows.

Detect Compromised Packages

Harness SCS enables instant search across all repositories and artifacts to quickly identify if compromised package versions exist in your environment. The moment such a malicious package is disclosed, you can pinpoint its presence and assess impact across your entire supply chain in seconds.

Block Compromised Packages

Harness AI streamlines response to incidents like the redhat-cloud-services package compromise through simple natural-language prompts. With a single prompt, you can generate OPA policies to block affected versions of redhat-cloud-services packages, for example, across all pipelines, preventing malicious packages from entering builds or deployments. As new compromised versions emerge, these policies can be quickly updated to maintain strong preventive controls across your SDLC.
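The logic of such a blocking policy is simple to sketch. OPA policies are written in Rego; the Python below is only a stand-in that shows the decision being made, not the policy Harness AI generates. The version string is a placeholder, since the affected versions are not listed here, and the component record shape is an assumption.

```python
# Placeholder block list: the version string is hypothetical, not a real affected version.
BLOCKED = {
    ("@redhat-cloud-services/rbac-client", "0.0.0-example"),
}


def violations(components, blocked=BLOCKED):
    """Return one message per SBOM component that appears on the block list."""
    return [
        f"{c['name']}@{c['version']} is on the block list"
        for c in components
        if (c["name"], c["version"]) in blocked
    ]


# Hypothetical SBOM excerpt for one build.
sbom_components = [
    {"name": "left-pad", "version": "1.3.0"},
    {"name": "@redhat-cloud-services/rbac-client", "version": "0.0.0-example"},
]

denied = violations(sbom_components)
build_allowed = not denied
```

Matching on exact name and version pairs keeps clean releases of the same package installable, and adding a newly disclosed version is a one-line change to the block list.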

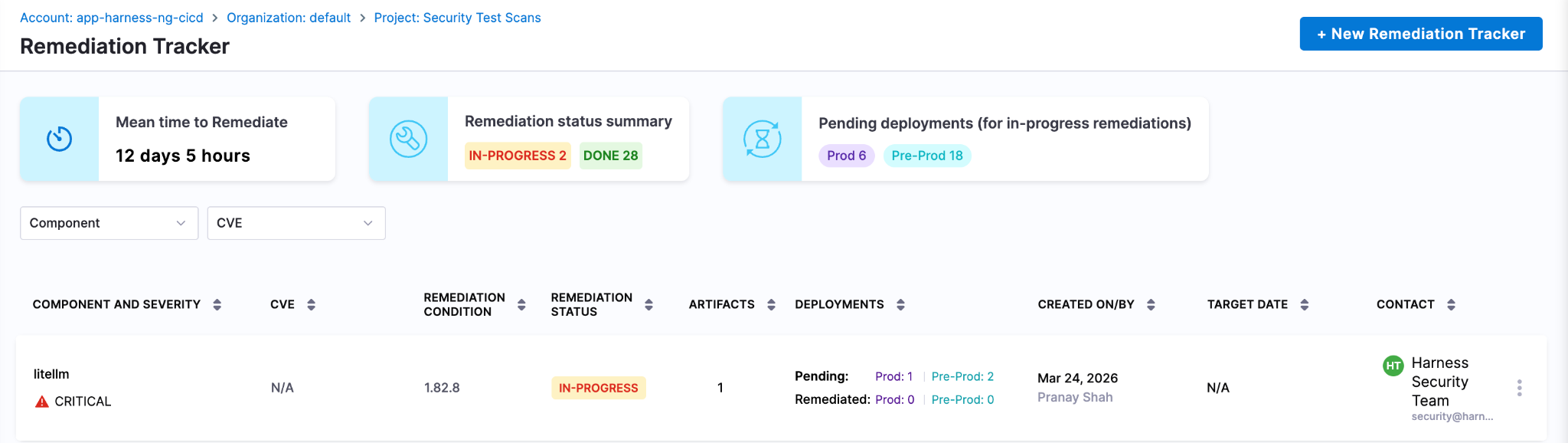

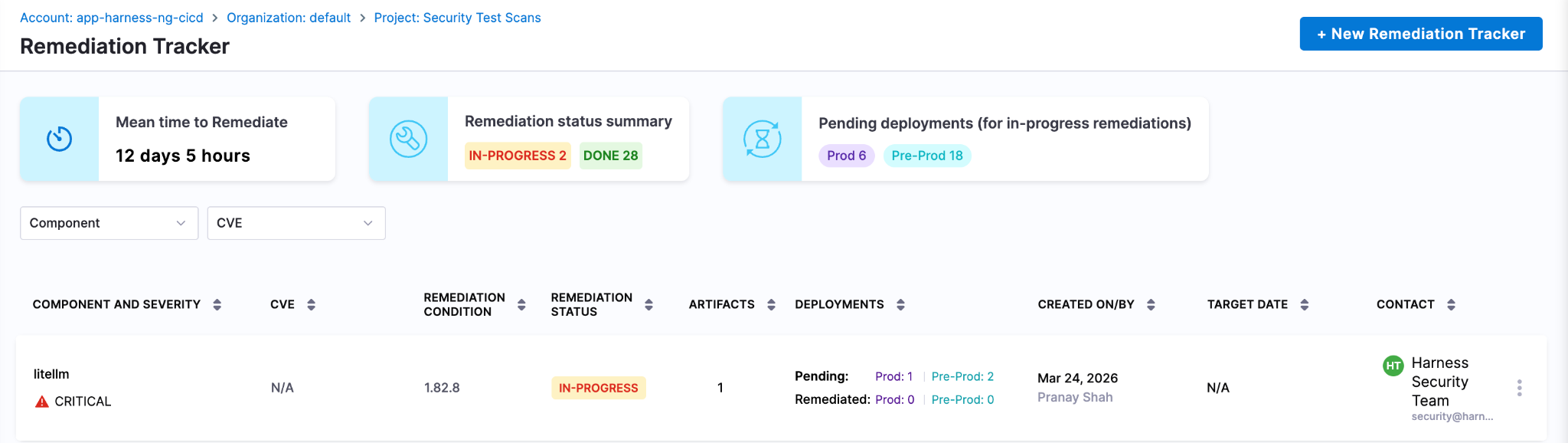

Harness SCS automatically detects compromised versions across both production and non-production environments. Teams can track remediation, assign fixes and monitor progress through to deployment, ensuring exposed credentials and vulnerable dependencies are addressed quickly. This end-to-end visibility helps contain the impact and prevents compromised packages from persisting in your supply chain.

Next Steps In The Face Of Supply Chain Attacks

The Mini Shai-Hulud worm highlights how quickly a malicious package can expose high-value secrets when embedded deep within registries and CI runners. Given its role in managing dependencies and packages across projects, the impact extends beyond code to API keys, prompt data and downstream systems, often bypassing traditional security checks.

Defending against such attacks requires more than reactive fixes. Teams need real-time visibility into dependencies, the ability to enforce policies to block compromised versions and continuous tracking to ensure remediation is complete across all environments. Harness SCS enables teams to quickly identify where affected package versions are used, prevent them from entering new builds and ensure fixes are consistently rolled out.

With these controls in place, organizations can limit credential exposure, contain threats early and secure their supply chain against attacks like the redhat-cloud-services compromise.


Get Ship Done: Everything We Shipped in May 2026

AI coding tools promise faster development. What they don't show you is the queue forming at the pipeline, the security scanner you bypassed to stay fast, or the cost dashboard with a line now labeled "unknown" that is steadily growing. In May, we shipped 60+ features in 31 days across the entire delivery system: not just the editor, but everything downstream of it.

May Highlights

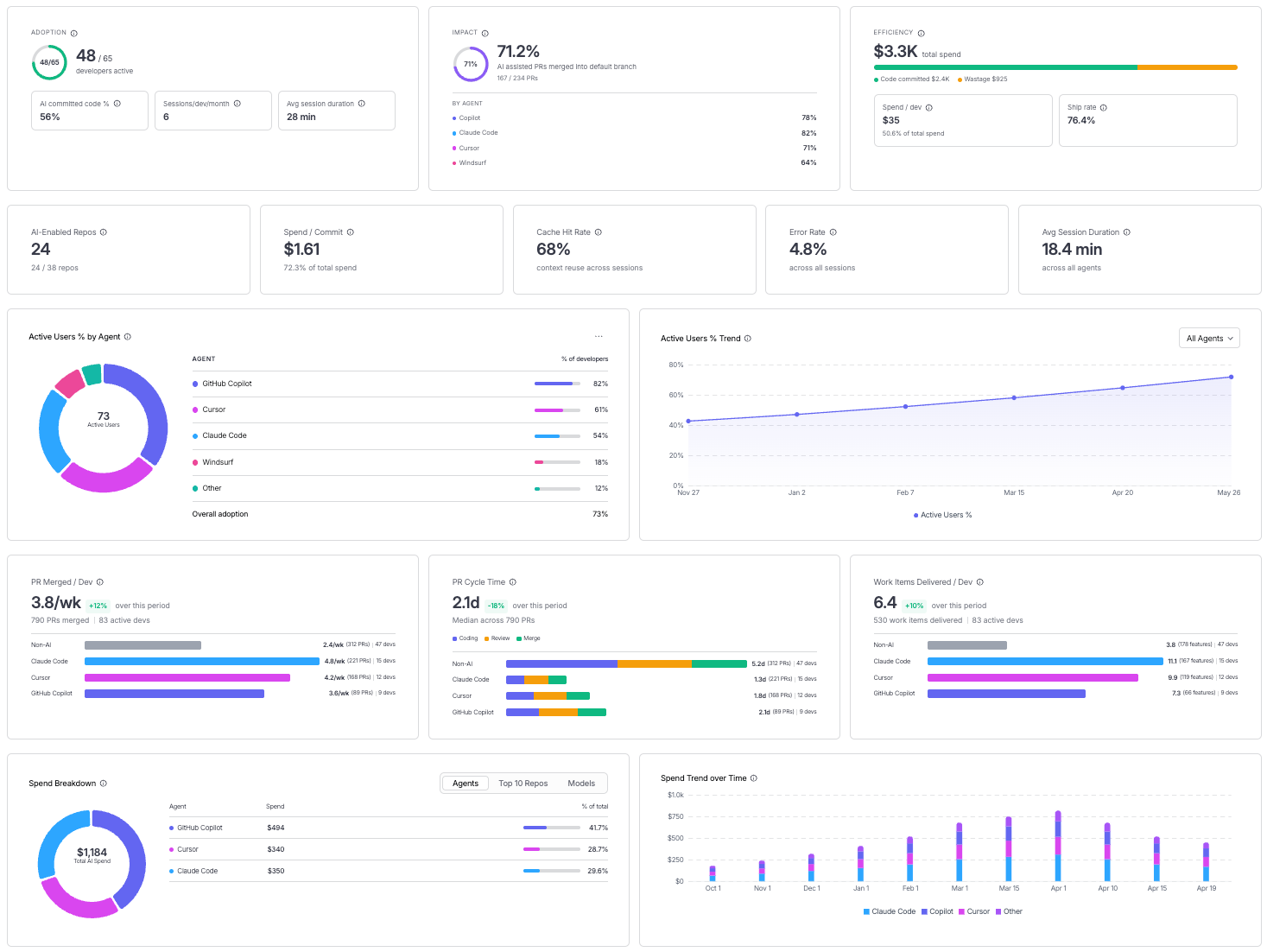

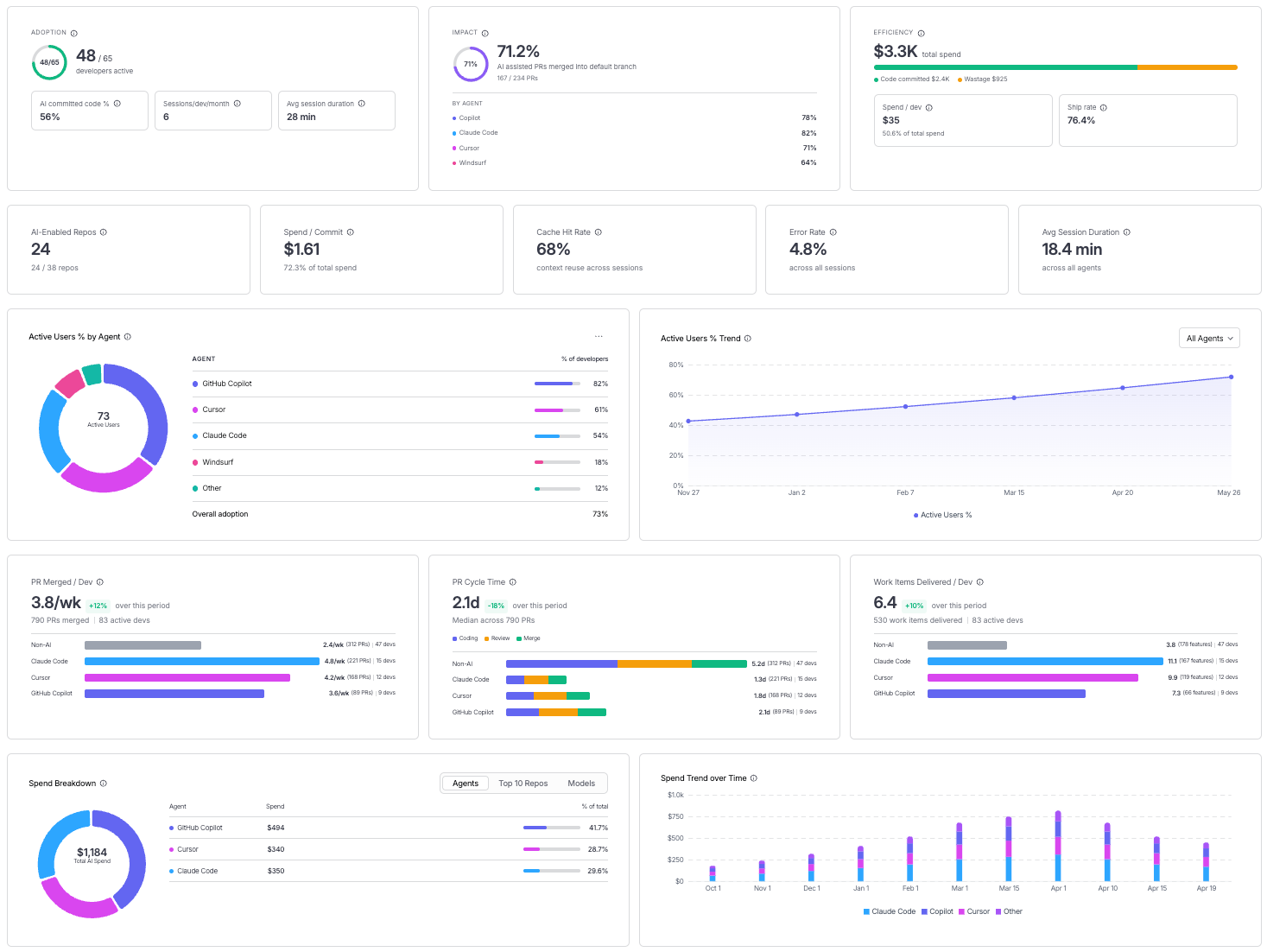

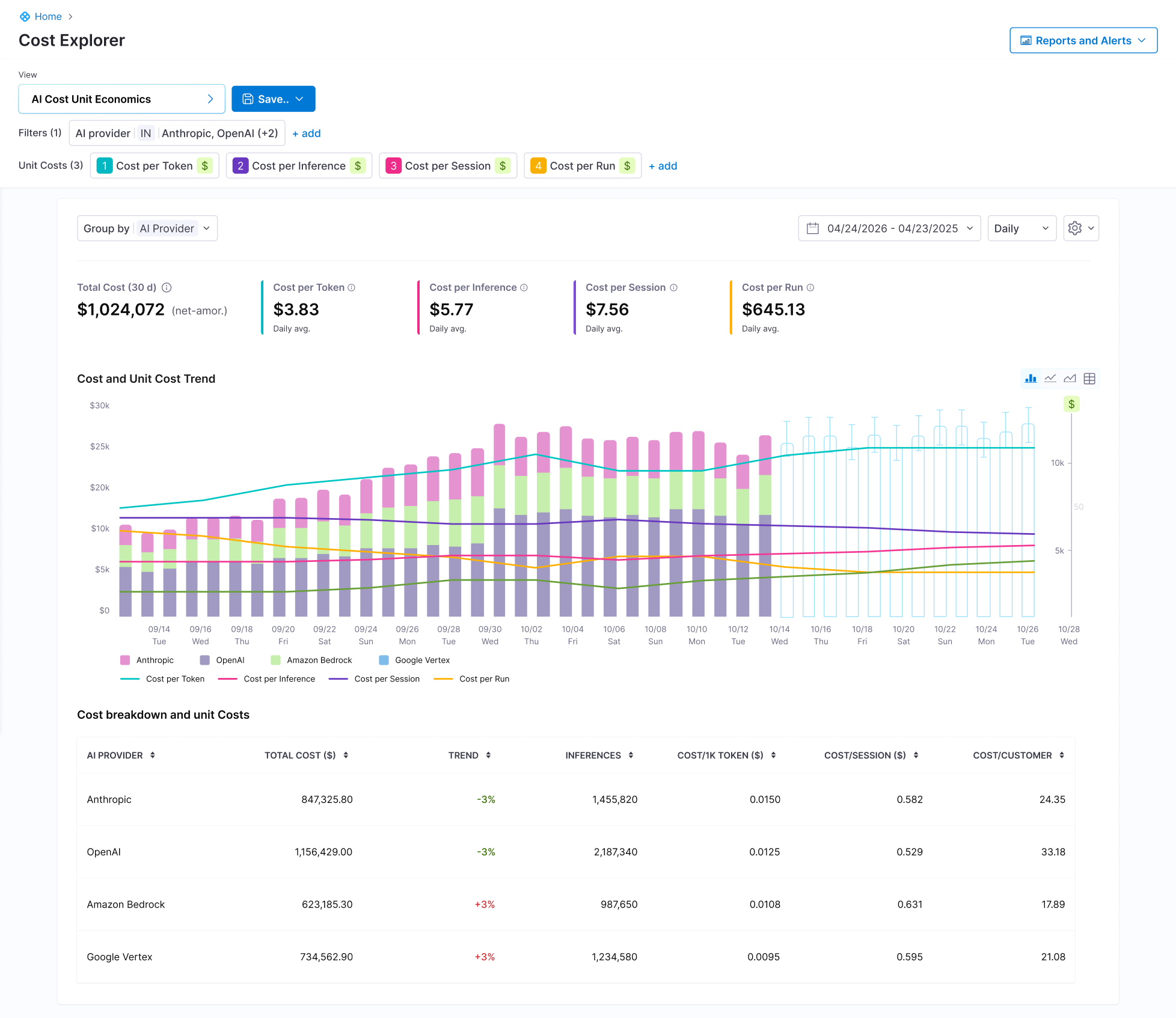

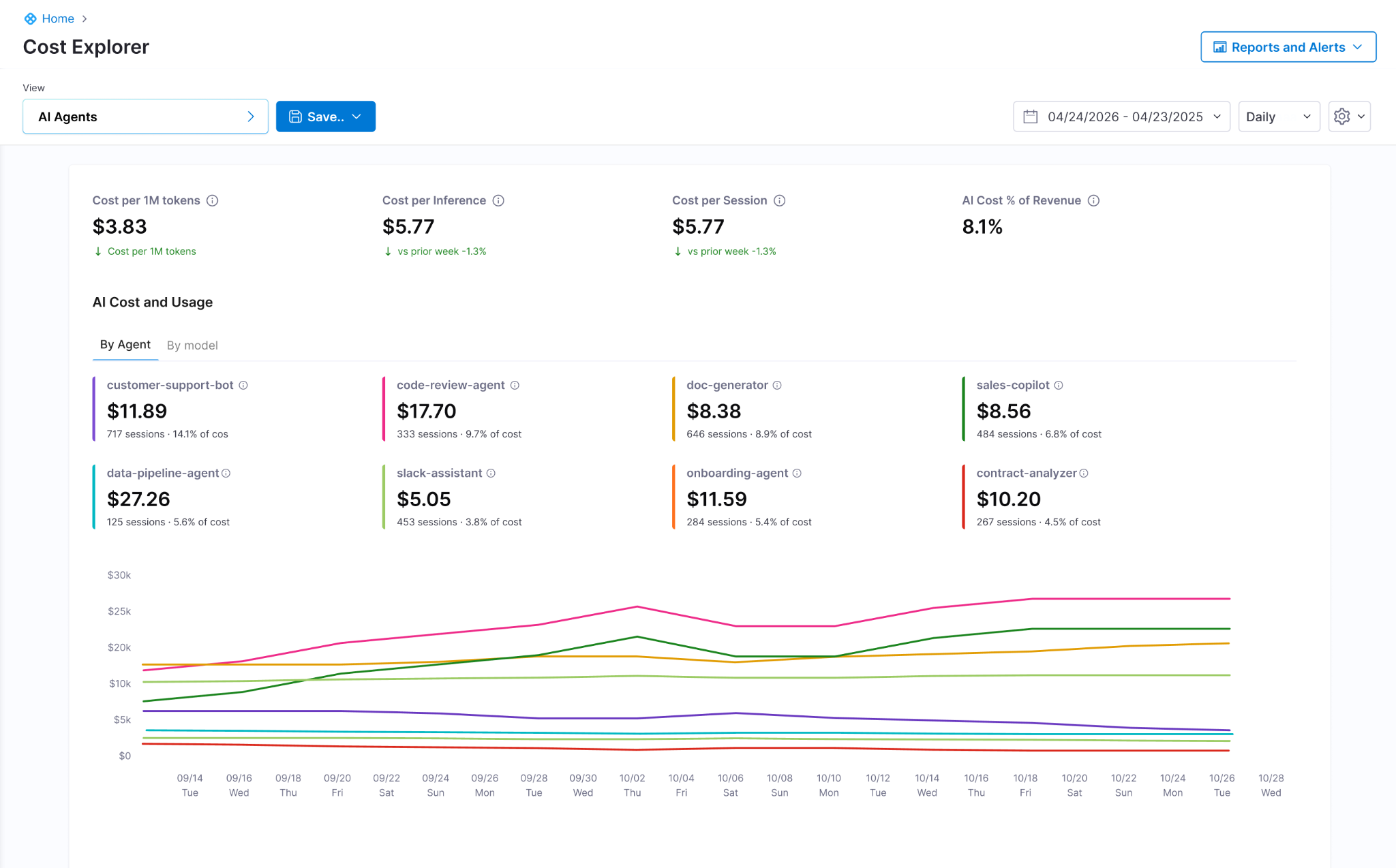

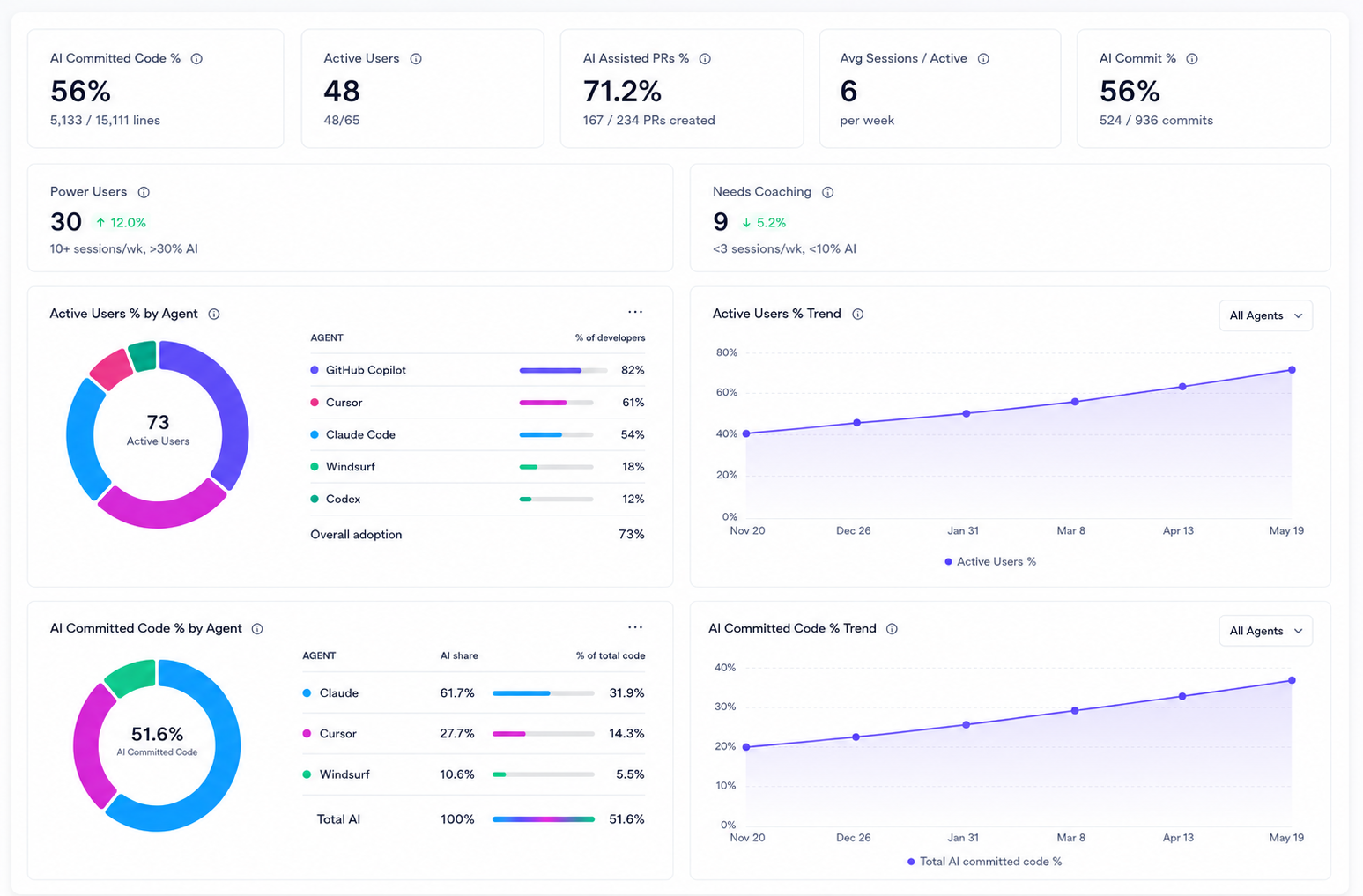

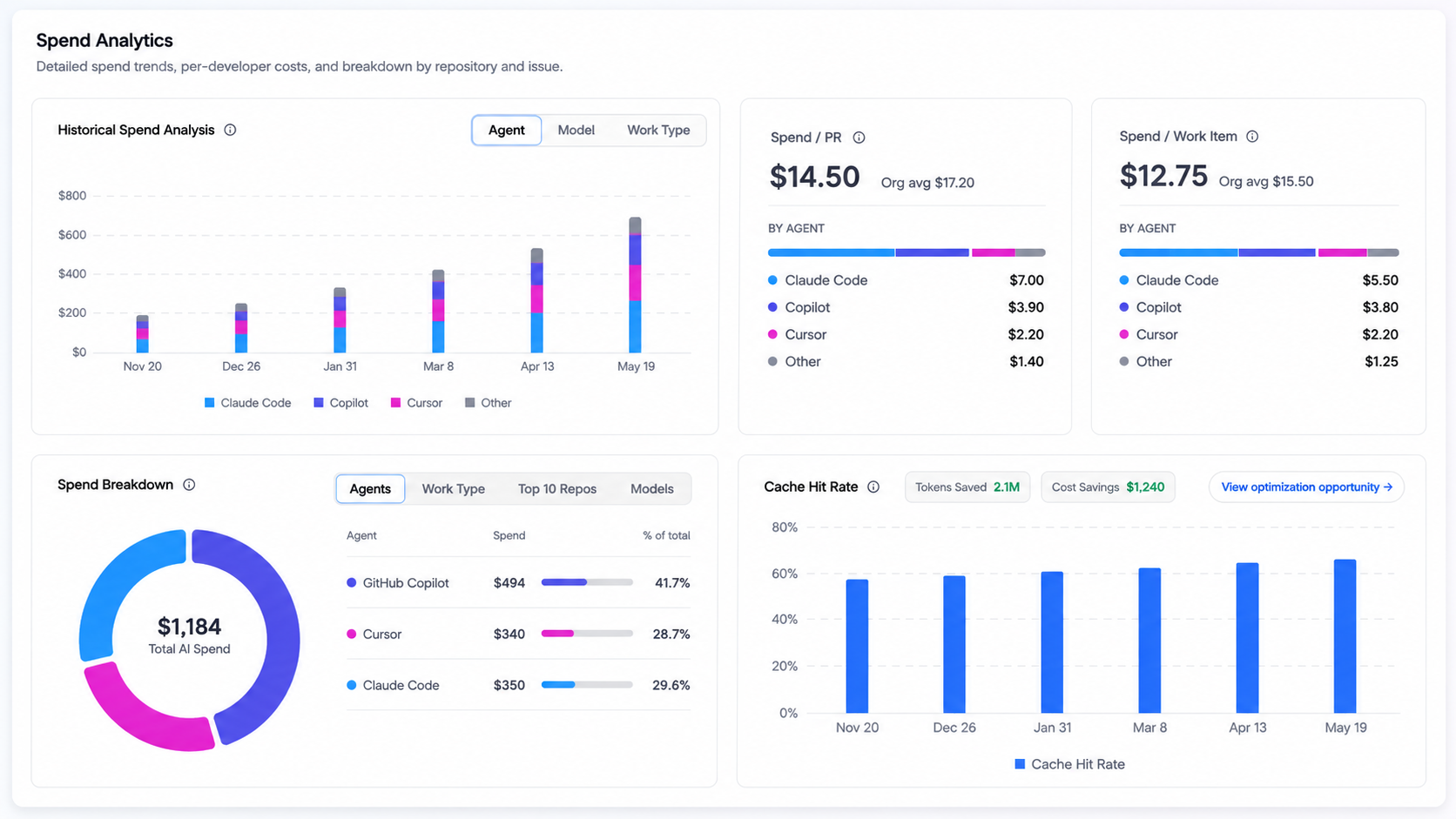

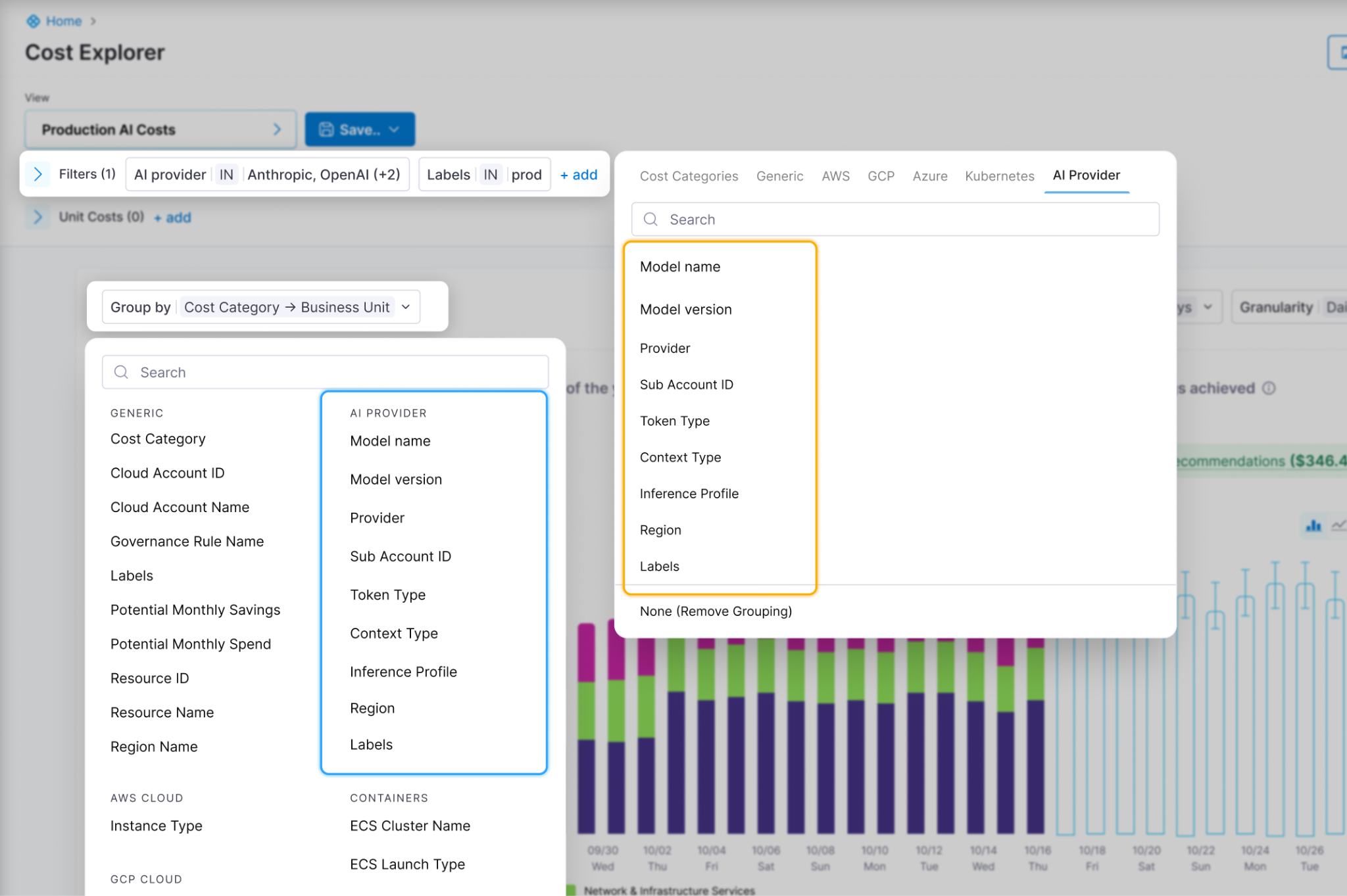

- Measuring AI investment with two extremely relevant capabilities: AI spend finally has a home in your cost dashboard, and AI adoption finally has a metric. Cloud and AI Cost Management now tracks AI infrastructure as a first-class spend category alongside traditional cloud costs. AI DLC Insights now correlates AI assistant adoption against the productivity outcomes it is supposed to drive. Read the announcement.

- Harness landed in the Claude Connectors Directory, giving Claude users direct access to pipelines, builds, deployments, security scans, and approvals from inside the Claude interface. Read the announcement.

AI-Native Development: MCP at Pace

Software Delivery Intelligence, Now Inside Claude (Code and Desktop)

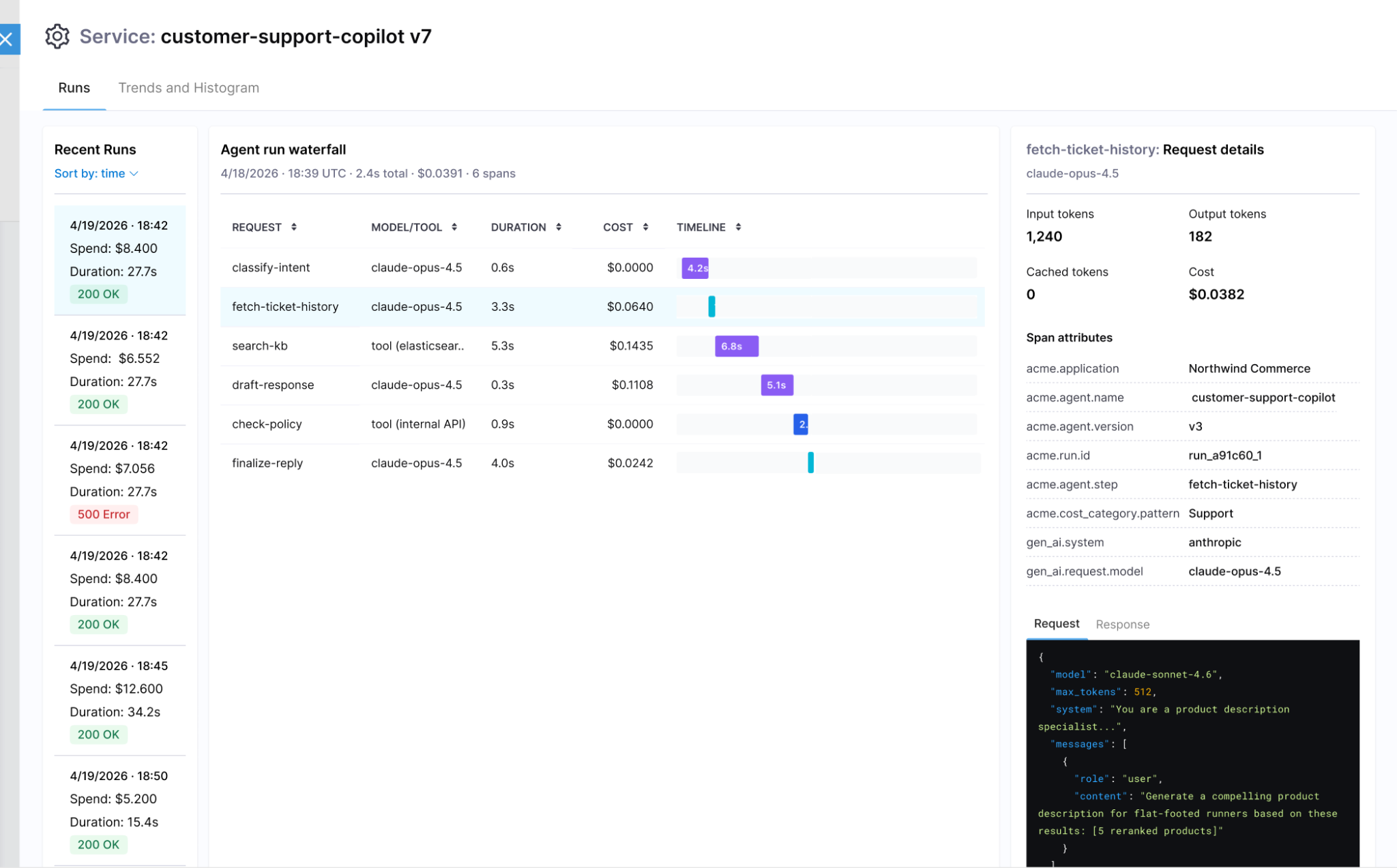

The Harness MCP Server is now in the official Claude Connectors Directory. Developers using Claude can now discover and connect to Harness, gaining structured, real-time access to their pipelines, deployments, approvals, and delivery workflows. What makes this different from a typical API integration is what's underneath: the Harness Software Delivery Knowledge Graph, which gives Claude the context it needs to make decisions that are accurate, fast, and safe.

The MCP Server in May: From Early Access to Production-Ready

Our MCP Server is evolving fast! Seven releases across 31 days. The month started with control and safety work: configurable autonomy levels, per-session trust boundaries, human-in-the-loop execution waits, six CVEs patched, and guardrails around destructive operations. It ended with expanded reach: IaCM workspaces, full DBSchema CRUD for database operations, Ansible support, and GPT app readiness with structured output and tool annotations. If you are building agentic pipelines on top of Harness, or want your AI coding assistant to drive deployments, infrastructure changes, and database schemas without leaving the IDE, this is the server to connect to. Read the docs.

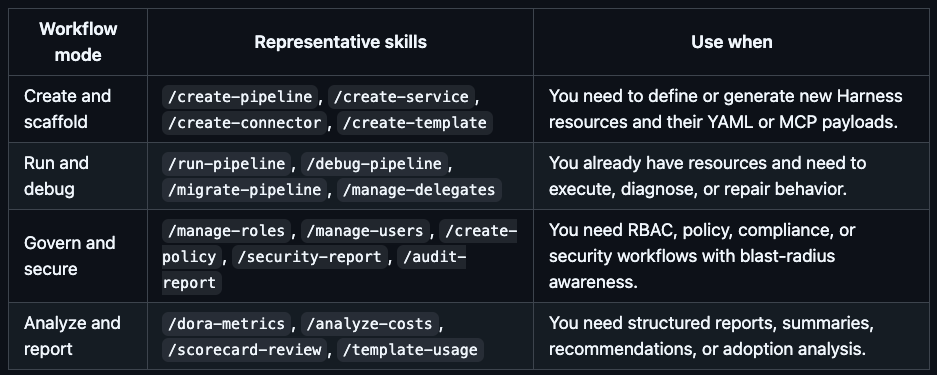

Skills Library

A curated library of skills distills common prompt patterns from internal usage into structured instruction files. The library includes security-specific skills and is packaged for use with the MCP Server, Claude Code, Cursor, and GitHub Copilot. The model follows the skill; the engineer describes what they want. Read the docs.

Google Code Wiki and Deepwiki Integration

The Harness MCP server is now indexed by Google Code Wiki and Deepwiki (Cognition/Windsurf). Devin and Windsurf users can analyze the MCP server architecture and ask questions about it directly. The Code Wiki updates automatically from commits.

Know What Your AI Is Doing and Keep AI Secure

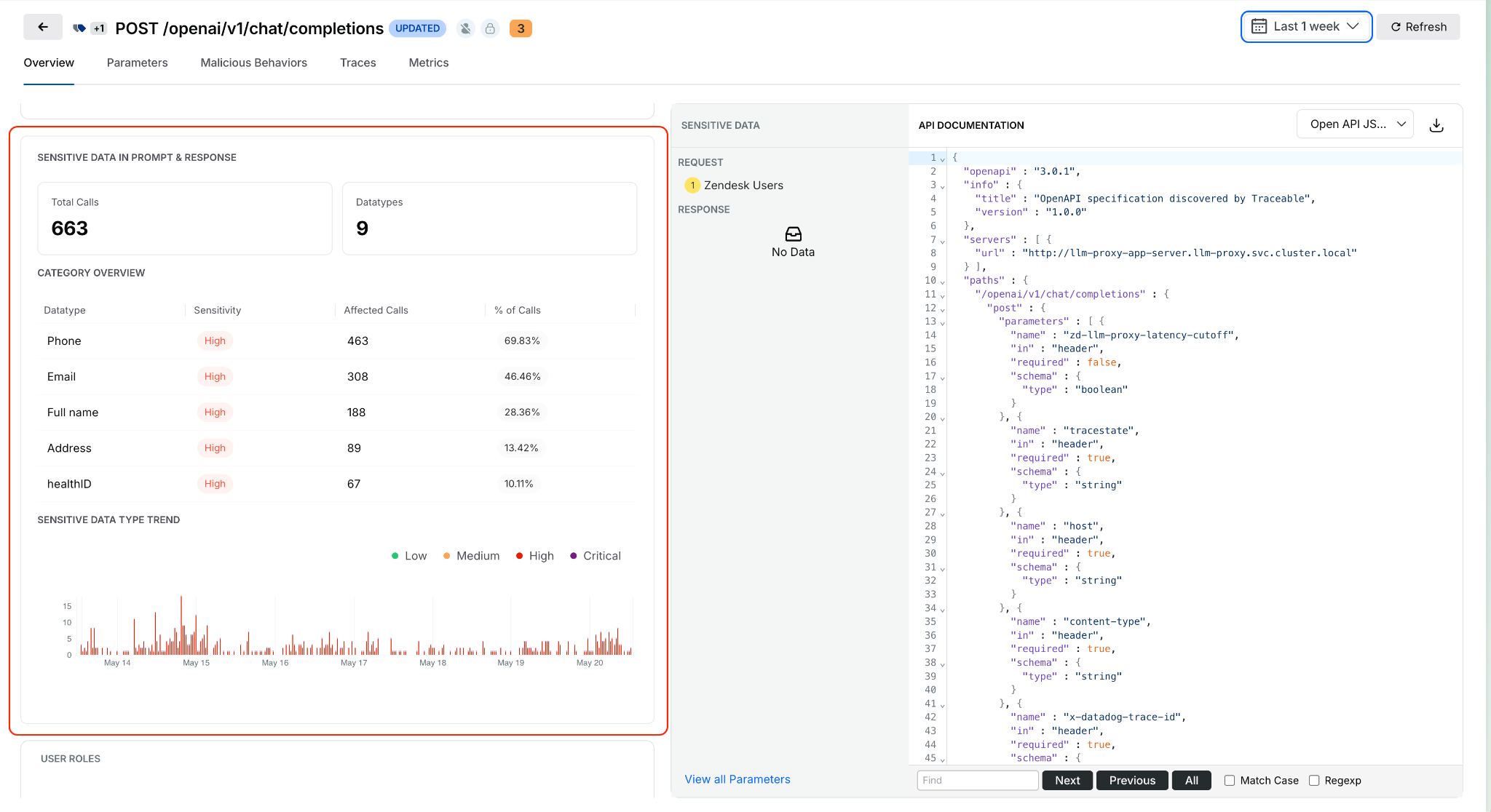

AI APIs, MCP tools, and models are now first-class assets in the platform, not afterthoughts in a traditional API inventory.

Sensitive Data Detection in AI Prompts and Responses

Open any discovered AI API from the AI Assets inventory and see what sensitive data is being processed in prompts and model responses. Exposure trends, data locations, and classifications are surfaced inline. This identifies high-risk AI APIs based on actual runtime behavior, not how they are configured. Learn more.

Service, MCP Server, and Environment on Issue Details

Issue Details now surfaces exactly where an issue is occurring: which service, which MCP server, and which environment, without leaving the side sheet. Previously, pinpointing issue context required navigating across views.

Span Attributes for Live Traffic Policy Scoping

Live traffic policies now evaluate only spans that match specific attributes, such as HTTP status codes. Detections are contextual rather than applied universally to all traffic. The evidence in each detection shows which spans actually triggered it. Docs
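As a rough illustration of attribute-based scoping, the sketch below filters a list of spans down to those whose attributes match a policy's scope before any detection logic runs. The span shape and the `http.status_code` attribute key are assumptions for this example, not the product's actual data model.

```python
def spans_in_scope(spans, required_attributes):
    """Keep only the spans whose attributes match every required key/value pair."""
    return [
        span
        for span in spans
        if all(
            span.get("attributes", {}).get(key) == value
            for key, value in required_attributes.items()
        )
    ]


# Hypothetical spans: only the second one matches a scope of status code 500.
example_spans = [
    {"name": "GET /cart", "attributes": {"http.status_code": 200}},
    {"name": "POST /checkout", "attributes": {"http.status_code": 500}},
]
scoped = spans_in_scope(example_spans, {"http.status_code": 500})
```

Because the filter returns the matching spans themselves, the same list can double as the evidence attached to a detection.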

UI for Span-Attribute-Based API Exclusion Rules

Define API exclusion rules based on span attributes directly in the UI. Select status codes or specific headers to exclude APIs from discovery, giving precise control over what appears in the API inventory.

Entity Derivation for Bot and Abuse Protection

Extract, transform, and standardize application-specific attributes from API traffic and use them in Bot and Abuse Protection policies. Previously, detection rules were limited to predefined attributes. Custom entities derived from traffic patterns can now feed directly into policy evaluation. Docs

Rule Evaluation Point Support in Exclusion Policies

Configurable rule evaluation behavior for exclusion rules enables exclusions to be applied based on your deployment model, whether through a tracing agent or Traceable Edge. Docs

Granular RBAC and Environment-Level Scoping

Environment-level scoping now covers APIs, policies, configurations, and security insights consistently across the platform. Access is restricted to authorized environments, and policy management is environment-aware. Docs

Security in the Pipeline

Keyless Artifact Signing

Sign and verify artifacts without managing long-lived cryptographic keys. Identity-based authentication replaces key management, eliminating the rotation burden that makes key-based signing operationally painful at scale. Docs

License Family Classification for SBOM

SBOM components are now automatically grouped by license family. Teams get a portfolio-level view of open-source license risk without reviewing individual component licenses one by one. Docs

Typosquatting and Malicious Package Detection

Two new risk signals are now checked during OSS dependency scanning: packages named to look like popular libraries (typosquatting) and known malicious packages. Both are added to the existing supply chain risk checks. Docs

Faster, More Reliable Builds

Flaky Test Detection (Beta)

Test Intelligence now identifies tests that pass and fail intermittently without consistent code changes as the cause. Flaky tests can be quarantined, removing them from pipeline gate decisions while tracking their instability over time. Previously, flaky tests failed pipelines with no actionable root cause. Read the docs.
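One simple way to express "fails intermittently without a code change" is to look for tests that both passed and failed on the same commit. The sketch below does exactly that. It is an assumption about the general technique, not a description of how Test Intelligence classifies tests, and the tuple layout is hypothetical.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Flag tests whose outcome changed although the code did not.

    runs: iterable of (test_name, commit_sha, passed) tuples.
    A test is flagged when one commit produced both a pass and a fail.
    """
    outcomes = defaultdict(set)  # (test, commit) -> set of observed outcomes
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(bool(passed))
    return sorted({test for (test, _), seen in outcomes.items() if len(seen) == 2})
```

A test that fails on one commit and passes on the next is not flagged here, since a code change could explain the difference.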

Docker Connector Support for Custom Build Images

Bring Your Own Image (BYOI) workflows in Harness Cloud now support Docker connectors pointing to private registries. Teams with custom build container images hosted in private registries can use them for Harness Cloud builds without pushing to a public registry first. Release notes

Network Egress Restrictions in UI

Configure egress allow lists for Harness Cloud Linux and Windows build VMs directly from the Harness UI. Previously, this required manual configuration outside the product.

Test Splitting Accuracy

Test Intelligence now uses historical average durations for more balanced test parallelism. The split_tests binary previously required timing data in a specific format; it now also supports average-based timing, making accurate splitting available to more test suites.
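For intuition, here is a sketch of average-based splitting using a greedy "longest test first" assignment. This is a common balancing heuristic and only an assumption about the general approach; it is not the algorithm inside the split_tests binary, and `split_by_average` is a hypothetical name.

```python
import heapq
from statistics import mean


def split_by_average(history, workers):
    """Assign tests to workers so that total average duration is roughly even.

    history: {test_name: [past durations in seconds]}
    Returns a list with one bucket (list of test names) per worker.
    """
    if workers < 1:
        raise ValueError("workers must be at least 1")
    averages = {name: mean(times) for name, times in history.items() if times}
    # Heap of (total_seconds, worker_index): the lightest bucket pops first.
    heap = [(0.0, index) for index in range(workers)]
    buckets = [[] for _ in range(workers)]
    # Place the slowest tests first; ties are broken by name for determinism.
    for name in sorted(averages, key=lambda n: (-averages[n], n)):
        total, index = heapq.heappop(heap)
        buckets[index].append(name)
        heapq.heappush(heap, (total + averages[name], index))
    return buckets
```

Averaging smooths out a single slow or fast run, so one outlier does not skew the split.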

Connector validation tasks and SCM tasks for proxy-enabled connectors are now routed through Harness Cloud delegates, ensuring both validation and source code operations work correctly for PrivateLink setups. These are behind feature flags.

Deploy More Safely

OIDC Delegate Selectors for AWS

Pass delegate selector information as AWS session tags in OIDC tokens. IAM policies can now restrict which Harness delegates execute which tasks, providing environment-level secret isolation without relying on environment naming conventions. Works across connector validation, deployment stages, and custom stages. Release notes

Dry Run Validation API

A new API endpoint validates pipeline YAML changes before they are committed to Git. Runs schema validation, template expansion, and OPA policy evaluation without executing the pipeline. Useful for pre-commit checks in IDEs or CI gates on pipeline repositories.

Artifact Registry

Soft Delete for Packages

Deleting a package or version now moves it to a recoverable state rather than removing it immediately. Teams that accidentally delete an artifact a running deployment still depends on can recover it before anything breaks. Permanent deletion is available from the same dialog when that is the intent.

Swift and Raw Package Support

Two new formats are now supported. Swift packages work with full SwiftPM compatibility: authenticate, publish, and resolve dependencies using the registry URL with no changes to existing workflows. Raw artifact storage handles arbitrary files by path: binaries, archives, reports, configuration files, anything that does not belong to a package manager ecosystem.

Dependency Firewall: Exemptions and Notifications

The Dependency Firewall now supports exemptions and policy action notifications. Whitelist trusted dependencies that should bypass firewall rules, and configure alerts that fire when the firewall blocks or flags a package. Teams get granular control over what gets blocked without having to audit the firewall log manually to know when it acted.

Audit Dashboard for Package Uploads and Downloads

A new dashboard records every package upload and download across all registries with full attribution: who performed the action, when, and on which package and version. Provisioned automatically for accounts with Artifact Registry enabled. Useful for compliance reviews, security investigations, and understanding artifact consumption patterns across teams. Release notes

Database DevOps Updates

Harness Code Repositories as a schema source

Harness Code Repositories can now be used as a source during DB Schema configuration and execution workflows.

Tagging Behavior

Enhanced tagging for database changesets improves consistency and traceability during migration workflows. Release notes here.

Purchase Credits API reliability

Database operations in the Purchase Credits API are now atomic, with enhanced logging for overage details during credit resets.

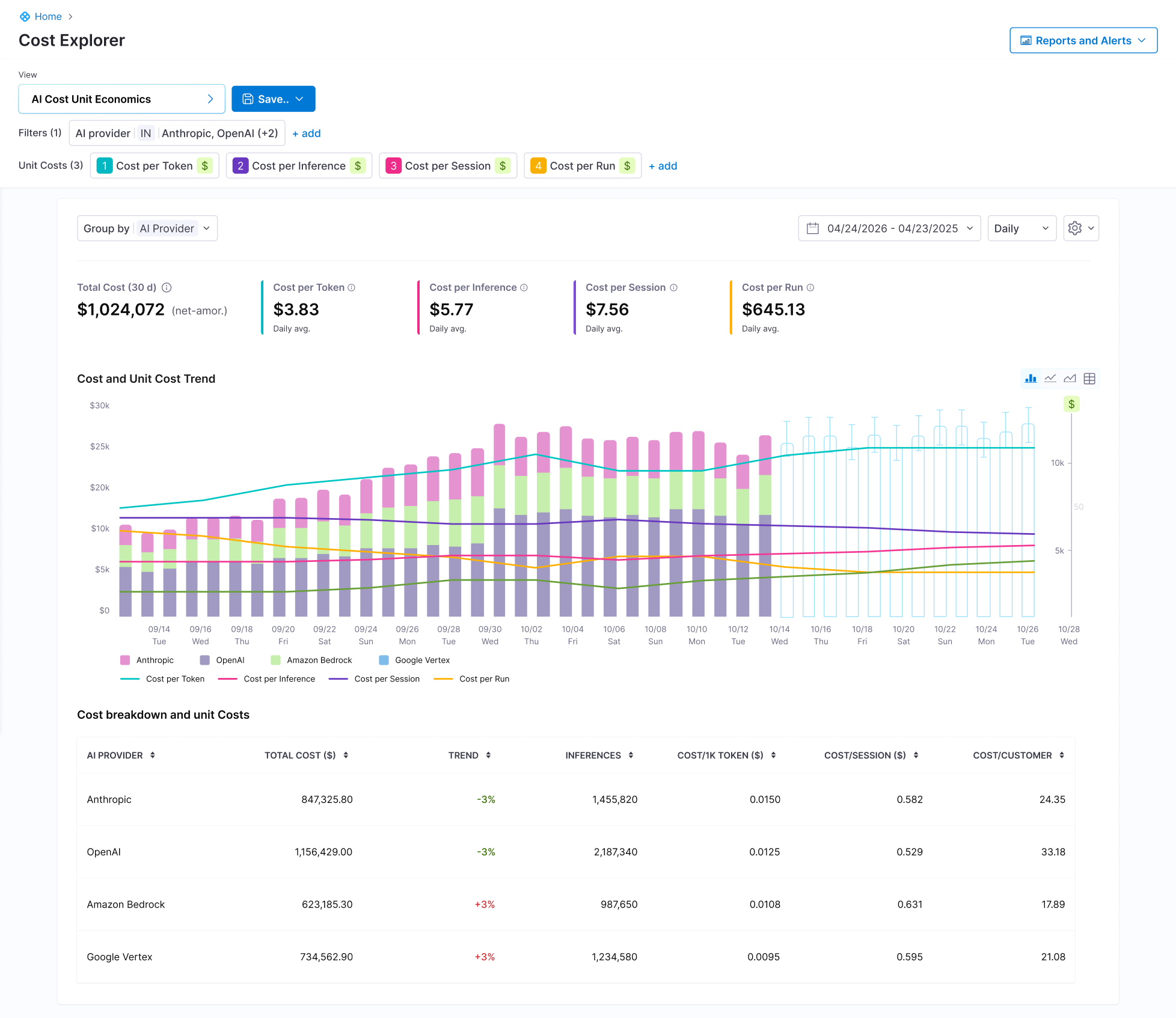

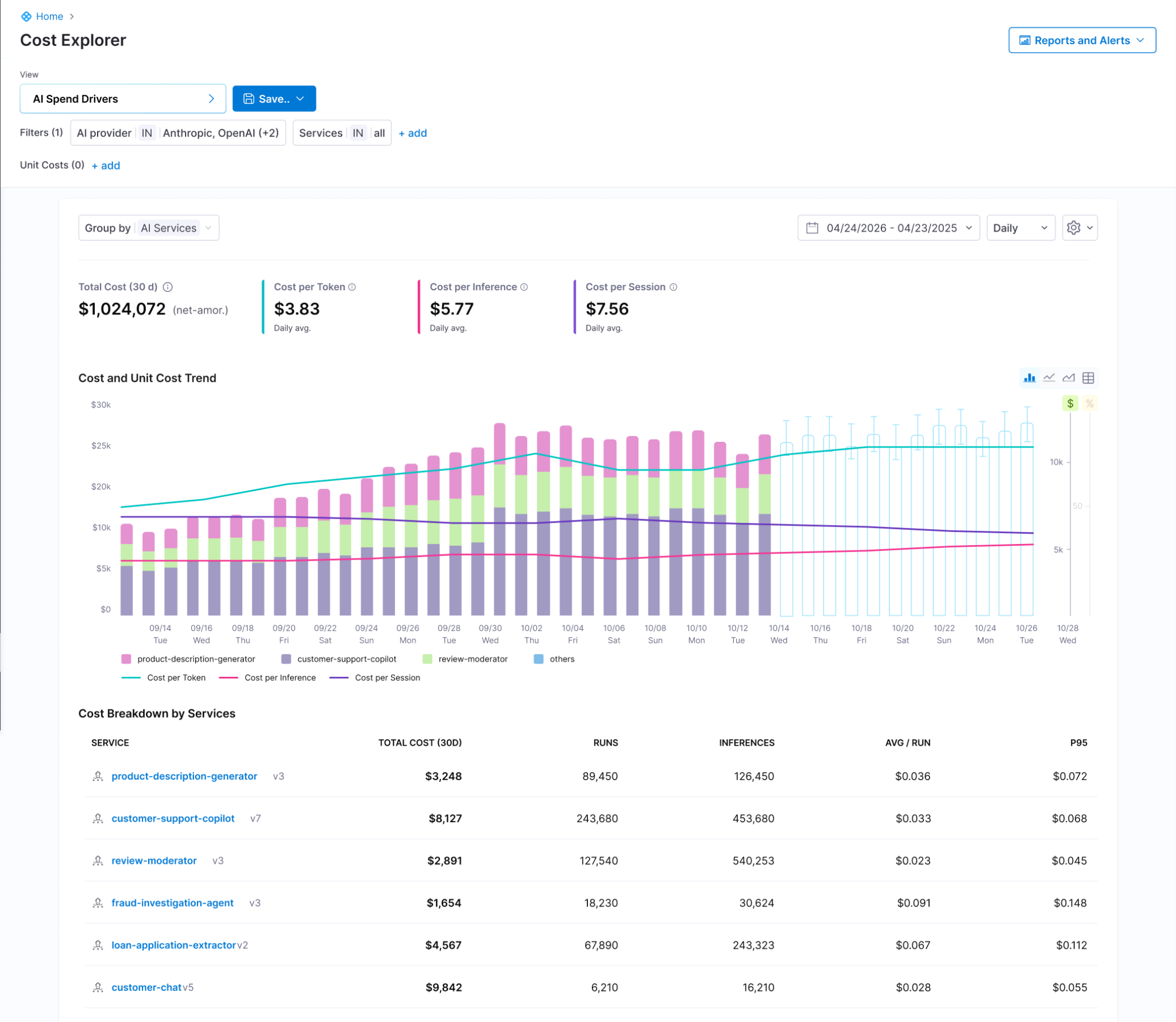

Know What Your AI Costs

Software Engineering Insights is now AI DLC Insights (Development Lifecycle Insights). Cloud Cost Management is now Cloud and AI Cost Management. Both renames reflect an expanded scope: AI is now a first-class dimension in each product, not a filter you apply after the fact. Read the announcement

Cost Explorer with AI/ML Workload Visibility

Cloud and AI Cost Management's Cost Explorer now surfaces AI/ML spending alongside traditional cloud costs in a unified view. As teams add GPU instances, inference endpoints, and model API spend, that usage now appears in the same dashboards as the rest of the cloud bill. Docs

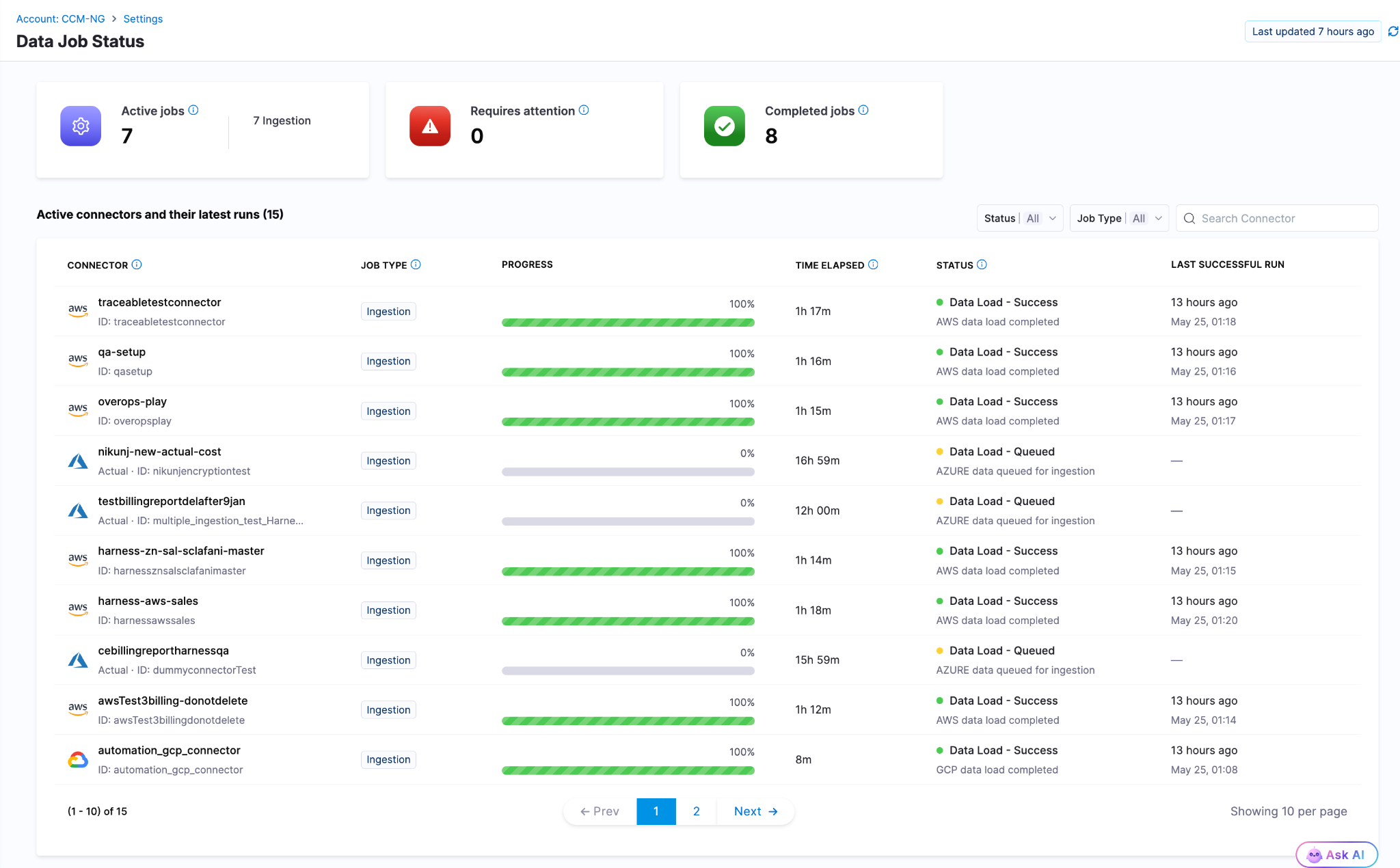

Data Job Status

Real-time visibility into the cloud cost data pipeline. When billing data from AWS, Azure, or GCP is delayed, failed, or stale, the Data Job Status page now shows the actual state. Previously, stale billing data produced incorrect recommendations and anomaly alerts with no indication that the underlying data had a problem. Docs

Cost Settings for Recommendations

A rebuilt, tabbed configuration experience for AWS and Azure recommendation cost preferences. AWS supports Passthrough Cost for both uniform and mixed account configurations, with per-account cost-type visibility. Azure adds selectable options for Amortized and List Price views of recommendation costs. Release notes

Engineering Metrics That Reflect Actual Human Work

AI Summaries and Insights Dashboard Enhancements

AI DLC Insights dashboards now surface AI-generated summaries alongside DORA metrics, productivity data, and workflow visualizations. The goal is to reduce the gap between "here is the chart" and "here is what to do about it." Docs

PR Cycle Time Excludes Bot-Generated Review Comments

The Productivity Insights dashboard now strips bot-generated review comments from PR Cycle Time calculations. Cycle time now reflects human reviewer activity only, which is the number that matters for understanding team throughput. Release notes
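The effect of excluding bots is easiest to see on a single stage of cycle time, such as time to first review. The sketch below is a simplified assumption of how that stage could be computed; the comment fields (`author_type`, `created_at`) are hypothetical and the dashboard's actual calculation is not described here.

```python
from datetime import datetime, timedelta
from typing import Optional


def time_to_first_human_review(opened_at: datetime, comments) -> Optional[timedelta]:
    """Return the time from PR open to the first review comment by a human.

    comments: iterable of dicts with 'author_type' ('user' or 'bot')
    and 'created_at' (datetime). Returns None if no human has commented.
    """
    human_times = [c["created_at"] for c in comments if c["author_type"] != "bot"]
    if not human_times:
        return None
    return min(human_times) - opened_at
```

If a linter bot comments one minute after the PR opens, a naive calculation would report a one-minute review time; filtering by author type keeps the metric tied to human reviewers.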

Custom Date Range on Dashboards

All dashboards on the Insights page now support a custom date range beyond the default presets. Analyze metrics over any time window, useful for quarterly reviews, incident post-periods, and year-over-year comparisons. Docs

Enable or Disable Developer Filtering for Lead Time for Changes

Control whether Lead Time for Changes honors developer filters at the team level from Team Settings. Gives engineering teams more precision in how DORA metrics are calculated and attributed across distributed or shared-team structures. Docs

ServiceNow Integration

ServiceNow is now a data source for engineering insights. Ingest, normalize, and analyze ITSM data directly within dashboards. DORA metrics can be calculated from ServiceNow incident and change management records for teams where ServiceNow is the system of record. Docs

qTest Integration

Test management data from qTest Cloud now flows into AI DLC Insights via API key authentication. Docs

Feature Flag Governance

FME Policy as Code: Environments and Segments

The OPA-based policy framework for Feature Management now covers environments, segments, and segment definitions. Teams can enforce consistent governance standards across the full FME configuration surface, not just flag-level rules. Release notes

Your Software Catalog, Smarter

Catalog Roundup: Modeling, Connections, and Surface Area

A set of enhancements expands what the developer portal catalog can model, connect, and display. The changes are incremental, but together they close gaps that platform teams have been routing around.

Integrations Overview on Entity Pages

The entity details page now includes a dedicated card showing key integration data directly on the overview. Platform engineers and developers can see the health and status of an entity's connected integrations at a glance rather than navigating to a separate integrations view. Docs

GitHub Integration: Secondary Entity Kinds

When configuring GitHub integration, you can now select secondary entity kinds to map discovered repository entities to. The data from those kinds surfaces directly on the entity details page, giving platform teams more flexibility in how GitHub content is represented in the catalog. Docs

AI Asset Instructions Tab

Entity pages for AI Assets now include a dedicated Instructions tab that renders the associated documentation file from GitHub directly within the portal. Teams discover and read AI asset documentation without leaving the catalog. Docs

Blueprints at Organization and Project Levels

Environment Blueprints can now be created and managed at the Organization and Project scope levels, in addition to the Account level. The blueprint listing page shows the scope for each blueprint, and managed roles have been updated with the appropriate permissions at each scope.

Resilience Testing

Kubernetes Load Testing

Load tests can now run against Kubernetes infrastructure. Previously, load tests required Linux infrastructure, meaning chaos testing and load testing needed different tooling and separate infrastructure even when targeting the same cluster. Resilience testing is now fully Kubernetes-aware end to end. Docs

Chaos Enhancements

A set of improvements landed across the chaos platform this month: filtering support for chaos experiment lists in the REST API, step name editing in Chaos Studio, NOT_EQUAL_TO operator for ChaosGuard namespace label selectors, tag-based filters on the DR Tests screen, probe chain logic, DR Test ACL permissions and audit events, user-based filters in the Experiments API, support for output variables in chaos resources, and the Chaos NG experience reaching general availability. Release notes

AI Test Automation

Playwright Execution Service (Beta)

Harness AI Test Automation now runs native Playwright test suites directly on the platform. Your playwright.config, spec files, and package.json scripts work as-is: connect your repo, point to your project root, and run. No grids to configure, no browser images to maintain, no infrastructure to scale. Tests run in cloud with parallel workers out of the box.

When tests fail, Harness automatically classifies the failure as regression, flaky, performance, or environment issue, so engineers spend time fixing problems instead of determining whether a problem is real. Playwright runs are first-class pipeline steps: results live in the Tests tab alongside build and deploy stages, and tests block deployments by default. Existing Playwright investments stay intact; scripts can evolve into AI-generated intent-based tests gradually when teams are ready.

Available now in beta. Release notes | Docs | Blog

AI SRE

CEL Expression Engine

Common Expression Language is now the full expression engine for AI SRE runbook conditions. Write dynamic conditions using regex matching, datetime formatting, list comprehensions, and math anywhere logic is evaluated or data is transformed. Docs

Google Chat Integration

Teams using Google Workspace can now run incident response from Google Chat: dedicated incident spaces, bidirectional message mirroring between the AI SRE UI and Google Chat, automatic responder adds, and real-time incident timeline sync. Built on Pub/Sub for reliable message delivery. One-time admin setup per organization. Docs

Platform-Level Updates

Service Account Token Notifications

Configure alerts for service account token events: creation, rotation, updates, expiration, deletion, and upcoming expiration. Delivered across notification channels already configured in your account. Expiring service account tokens are a common cause of silent pipeline failures; this makes them visible before they cause an outage. Docs

Platform Alerts

An in-app notification framework now surfaces important account-level events automatically within the Harness UI: approaching resource limits, system release announcements, and other account-wide signals. No external configuration required. Docs

In Closing...

The teams compounding fastest on AI are the ones where the whole system accelerated, not just the part that writes code. May brought 60+ feature releases, a Skills Library that makes any AI coding assistant fluent in Harness, artifact registries that know what they are serving and to whom, and the first dashboards that connect AI spend to AI output. The bottleneck keeps moving. We help you unblock the bottleneck in your software delivery.

See you in June.

Software Delivery Context, Now Inside Claude

Key Takeaway: The Harness MCP Server is now in the official Claude Connectors Directory. Developers using Claude can now discover and connect to Harness, gaining structured, real-time access to their pipelines, deployments, approvals, and delivery workflows. What makes this different from a typical API integration is what's underneath: the Harness Software Delivery Knowledge Graph, which gives Claude the context it needs to make decisions that are accurate, fast, and safe.

AI agents are only as good as the context they operate in. That's not a design philosophy. It's a practical constraint. An AI agent that doesn't understand how the underlying software delivery entities relate to each other, or what the data actually means, will get things wrong. In software delivery, wrong looks like a botched deployment, a misread failure, or an approval granted when it shouldn't have been, which directly affects your users.

Today, we're announcing that the Harness MCP Server is in the official Claude Connectors Directory, making Harness discoverable and connectable for every team using Claude. But the announcement isn't really about the directory listing. It's about what Harness + Claude can actually do in your delivery system.

What You Can Do with Claude and Harness

Claude can work across the full Harness delivery platform:

All of it is grounded in the Knowledge Graph, not raw API responses, but a structured model of your delivery system that Claude can reason over precisely.

The Problem With Giving AI Agents Raw API Access

MCP lets AI models call external tools by reading API descriptions and deciding which to invoke. That flexibility is useful. But when you're building an agent that needs to reason across an entire software delivery lifecycle, CI, CD, security scans, approvals, feature flags, cost signals, and environments, raw API access creates a deep reliability problem.

Consider a question a platform engineering lead might ask:

"Show me the pipelines with the highest failure rate over the last 30 days, and for each one, tell me which services they deploy and whether any of those services have open critical vulnerabilities."

That question spans four domains: pipeline execution history, service-to-pipeline relationships, environment state, and security scan results. An agent working off raw APIs has to discover which APIs exist across each domain, call them in the right order, paginate correctly, infer how field names correspond across systems, and synthesize the results without misinterpreting nested objects or guessing at relationships.

The result is 5+ sequential LLM calls, hundreds of thousands of input tokens, high latency, and an agent that had to guess at every join. Guessing is where hallucinations happen.

What the Harness + Claude Integration Changes

The Harness Software Delivery Knowledge Graph is a purpose-built model of everything that happens after code is written: builds, test runs, deployments, approvals, security scans, environment states, feature flags, infrastructure changes, cost signals, and rollbacks. Not as raw data but as a connected, typed, semantically annotated graph of entities and relationships.

Every field in the graph carries metadata that tells an agent exactly how to use it: whether a value is a number or a string, whether it can be aggregated or only filtered, what its unit is, and how it joins to related entities. Cross-module relationships, between a pipeline and the services it deploys, between a deployment and the security scan results for that artifact, between an environment change and the cost anomaly that followed, are explicitly declared, not inferred.
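A small Python sketch can show what field metadata and declared relationships buy an agent. Everything here is hypothetical: the entity names, field names, and the `FieldMeta` and `Relationship` types are illustrative assumptions, not the Knowledge Graph's actual schema or API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FieldMeta:
    type: str            # "number" or "string"
    aggregatable: bool   # False means filter-only
    unit: Optional[str] = None


@dataclass(frozen=True)
class Relationship:
    source: str
    target: str
    source_key: str
    target_key: str


# Hypothetical entities and fields, for illustration only.
ENTITIES = {
    "pipeline_execution": {
        "service_id": FieldMeta("string", aggregatable=False),
        "status": FieldMeta("string", aggregatable=False),
        "duration": FieldMeta("number", aggregatable=True, unit="seconds"),
    },
    "service": {
        "service_id": FieldMeta("string", aggregatable=False),
    },
}
RELATIONSHIPS = [
    Relationship("pipeline_execution", "service", "service_id", "service_id"),
]


def check_aggregate(entity, field_name):
    """Reject aggregations over unknown or filter-only fields before a query runs."""
    fields = ENTITIES[entity]
    if field_name not in fields:
        raise KeyError(f"{entity} has no field named {field_name}")
    meta = fields[field_name]
    if not meta.aggregatable:
        raise ValueError(f"{entity}.{field_name} can be filtered but not aggregated")
    return meta


def declared_join(source, target):
    """Return the declared relationship, or fail instead of guessing a join."""
    for rel in RELATIONSHIPS:
        if (rel.source, rel.target) == (source, target):
            return rel
    raise LookupError(f"no declared relationship from {source} to {target}")
```

The point of the sketch is that a misspelled field or an undeclared join fails loudly at query-construction time, instead of producing a plausible but wrong answer.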

This is the difference between an agent that can access your delivery system and one that understands it.

When Claude connects to Harness via MCP, it doesn't receive a set of API endpoints. It gets access to a structured model of your entire delivery organization, one where the relationships are known, the data types are enforced, and the agent can construct precise queries rather than guessing at field semantics.

The practical effect with Harness + Claude: that same cross-domain question above becomes 2–3 structured queries against a known schema. The agent selects the right entity types from the graph, generates queries with exact fields and declared relationships, and returns a deterministic answer. No guesswork. No hallucinated field names. No silent wrong answers.

What This Looks Like in Practice

Debugging a failed pipeline without context switching

A build has failed. Normally, you'd open the Harness UI, navigate to the execution, copy the relevant logs, paste them into a conversation, and wait for analysis. The AI reasons over whatever you managed to capture.

With the Harness MCP connection active in Claude, you ask what failed. Claude doesn't just pull logs; it queries the Knowledge Graph to understand the structure of that pipeline, which stage failed, what services were involved, whether similar failures have occurred before, and what changed since the last successful run. The answer it surfaces reflects the full delivery context, not just the stack trace you happened to copy.

Promoting a deployment through governed gates

Your team is ready to move a service from staging to production. Claude checks the current environment state, verifies that required approval gates have been satisfied, confirms the security scan passed for the artifact version you're promoting, and initiates the deployment — with every action running through your existing RBAC policies and logged for audit.

The agent isn't guessing about whether conditions are met. It's querying a graph where those conditions are modeled as typed relationships with known states. The answer is deterministic because the data is structured to make it so.
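As a sketch of what "conditions modeled with known states" can mean in code, the example below represents each gate as an enum value and refuses promotion unless every gate is approved. The gate names, `GateState`, and `promote` are hypothetical and stand in for whatever the platform enforces server-side.

```python
from enum import Enum


class GateState(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


def blocking_gates(gates):
    """gates: {gate_name: GateState}. Return the names that have not cleared."""
    return sorted(
        name for name, state in gates.items() if state is not GateState.APPROVED
    )


def promote(artifact, gates):
    """Promote only when every gate is APPROVED; otherwise name what is blocking."""
    blocked = blocking_gates(gates)
    if blocked:
        raise PermissionError(
            f"cannot promote {artifact}: gates not cleared: {', '.join(blocked)}"
        )
    return f"{artifact} promoted"
```

Because a gate can only be in one of three explicit states, there is no ambiguous value for an agent to interpret optimistically.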

This Is Not AI Without Guardrails

The natural question when Claude can trigger pipelines and manage deployments: what stops it from doing something it shouldn't?

The same controls that govern everything else in Harness. Every action taken through the MCP server runs through your existing RBAC permissions, OPA policy enforcement, approval gates, and audit logging. Claude operates with exactly the permissions you have, nothing more. Every action is tracked. Nothing bypasses the governance layer.

The Knowledge Graph reinforces this: because Harness AI understands your delivery system structurally, it also understands the constraints within it. Approval gates aren't just optional steps the agent might skip; they're modeled as typed relationships with state. The agent can't promote past a gate that hasn't cleared because the graph reflects that clearly.

Speed and governance aren't a tradeoff. They coexist by design.

Why the Claude Connectors Directory Matters

The Claude Connectors Directory is a curated, reviewed set of integrations. Anthropic evaluates each server before listing it. Being approved is a signal of trust that carries weight for enterprise teams deciding which AI integrations to enable.

It also means discoverability at scale: engineering teams using Claude for DevOps workflows will find Harness natively. One-click OAuth connection, no API key management, no manual configuration.

This fits a broader pattern. The Google Cloud partnership brought Harness into Google's AI ecosystem through Vertex AI and Gemini CLI. The Cursor plugin brought it into the IDE. The Claude Connectors Directory brings it into conversational AI. In each case, the goal is the same: wherever developers are doing their best thinking and wherever AI is being asked to help with software delivery, Harness should be present with the right context for that AI to act reliably.

Getting Started

If you're already a Harness customer:

- Open Claude and then the Connectors page

- Search for Harness in the MCP directory

- Authenticate with OAuth, no API keys, no manual configuration

- Start asking Claude about your pipelines, deployments, and delivery workflows

If you're new to Harness, sign up for free and connect from day one. Detailed steps are listed in the documentation.

The Harness Connector gives Claude the ability to act in your delivery system. The Knowledge Graph gives it the understanding to act well. Together, that's what reliable AI in software delivery actually looks like.

BigQuery CI/CD and Database DevOps with Harness

Modern data platforms are evolving rapidly, and Google Cloud BigQuery has become a core part of analytics, AI, and large-scale reporting architectures. Teams (including Harness) rely on BigQuery to process and analyze massive datasets, but managing schema changes in a secure, repeatable way can still be challenging.

Today, we’re excited to announce BigQuery support for Harness Database DevOps, enabling teams to bring the same automation, governance, and reliability they expect from application DevOps to their BigQuery deployments.

With this release, organizations can now manage BigQuery schema changes using pipeline-driven Database DevOps workflows directly within Harness, while also leveraging secure OIDC-based authentication for keyless access.

The Challenge: Managing BigQuery Changes at Scale

BigQuery helps organizations move fast with data, but database change management often remains manual and fragmented.

Common challenges include:

- Manual schema deployments that slow down releases

- Limited visibility into schema changes across environments

- Inconsistent promotion workflows between development, staging, and production

- Managing long-lived service account keys

- Difficulty enforcing governance and approvals

Without a standardized deployment process, teams struggle to balance speed, reliability, and security.

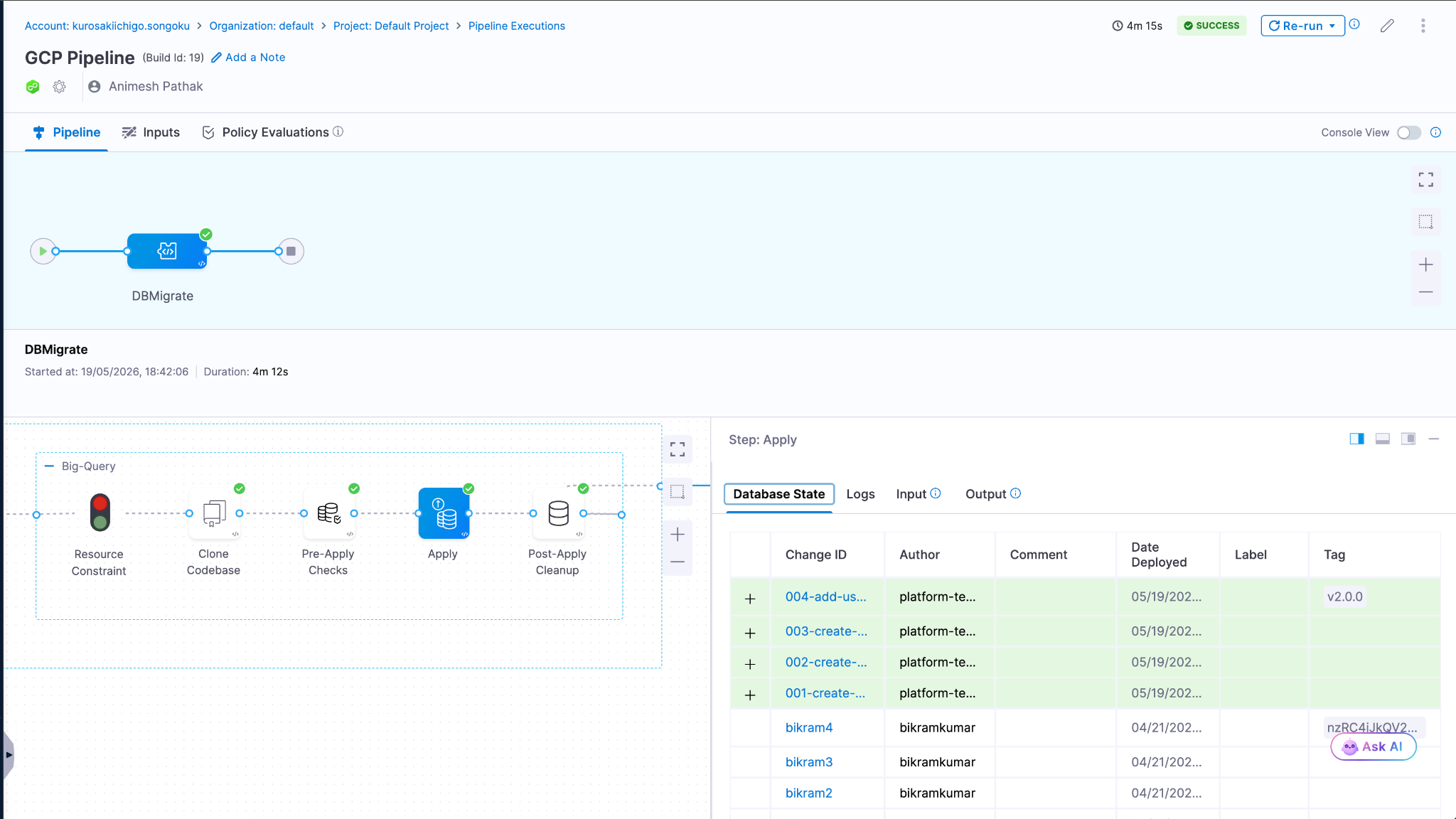

Bringing Database DevOps to BigQuery

Harness Database DevOps now supports BigQuery as a first-class database platform, allowing teams to manage schema changes through automated, pipeline-driven workflows.

This means BigQuery schema changes can now be treated just like application code: versioned, tested, approved, and promoted through environments using Harness pipelines.

With BigQuery support, teams can:

- Automate schema deployments using Harness pipelines

- Version control database changes alongside application code

- Promote changes consistently across environments

- Enforce approvals and governance policies before production releases

- Track and audit deployments with full visibility

- Eliminate static credentials using OIDC authentication

The result is a modern Database DevOps workflow for BigQuery that helps teams release faster without sacrificing security or reliability.

Key Capabilities

Native BigQuery Integration

Harness Database DevOps can now connect directly to BigQuery environments using a BigQuery JDBC connector powered by the Simba BigQuery JDBC driver.

Example JDBC URL:

jdbc:bigquery://https://www.googleapis.com/bigquery/v2:443;ProjectId=YOUR_PROJECT_ID;DefaultDataset=YOUR_DATASET;Location=YOUR_REGION;

OAuth access tokens are injected automatically during authentication, removing the need for manual credential management.
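If you template the URL per environment, a small helper keeps the format consistent. The sketch below reproduces the example URL shape shown above; the function name and the validation rules are assumptions for illustration.

```python
def build_bigquery_jdbc_url(
    project_id,
    dataset,
    location,
    host="https://www.googleapis.com/bigquery/v2",
    port=443,
):
    """Build a JDBC URL in the same shape as the example above."""
    for label, value in (
        ("project_id", project_id),
        ("dataset", dataset),
        ("location", location),
    ):
        # A semicolon would start a new connection property, so reject it.
        if not value or ";" in value:
            raise ValueError(f"{label} must be non-empty and must not contain ';'")
    return (
        f"jdbc:bigquery://{host}:{port};"
        f"ProjectId={project_id};DefaultDataset={dataset};Location={location};"
    )
```

No credential appears in the URL, which matches the flow described here: the access token is supplied at authentication time rather than stored in the connection string.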

Secure OIDC-Based Authentication

Harness supports OIDC authentication using GCP Workload Identity Federation, allowing teams to securely authenticate to BigQuery without storing long-lived service account keys.

During pipeline execution:

- Harness generates a short-lived OIDC token

- GCP Security Token Service exchanges the token

- Temporary credentials are generated dynamically

- Harness securely authenticates to BigQuery at runtime

This improves:

- Security posture

- Compliance readiness

- Credential management

- Operational reliability

No static JSON keys are stored in Harness or delegate environments.
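For readers curious about the token exchange step, the sketch below assembles the request that a client would send to the GCP Security Token Service under Workload Identity Federation. It only builds the request and sends nothing. How Harness performs this step internally is not described here, and the project number, pool ID, and provider ID arguments are placeholders you would supply.

```python
STS_TOKEN_URL = "https://sts.googleapis.com/v1/token"


def build_sts_exchange(oidc_token, project_number, pool_id, provider_id):
    """Return (url, fields) for exchanging an OIDC token for a short-lived token."""
    audience = (
        f"//iam.googleapis.com/projects/{project_number}"
        f"/locations/global/workloadIdentityPools/{pool_id}"
        f"/providers/{provider_id}"
    )
    fields = {
        "grant_type": "urn:ietf:params:oauth:grant-type:token-exchange",
        "audience": audience,
        "scope": "https://www.googleapis.com/auth/cloud-platform",
        "requested_token_type": "urn:ietf:params:oauth:token-type:access_token",
        "subject_token_type": "urn:ietf:params:oauth:token-type:jwt",
        "subject_token": oidc_token,
    }
    return STS_TOKEN_URL, fields
```

The only secret in the request is the short-lived OIDC token itself, which is why no JSON key file needs to exist anywhere in the flow.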

Automated Database Change Pipelines

Use Harness pipelines to automate BigQuery schema deployments with repeatable workflows across environments.

Teams can:

- Trigger deployments from Git changes

- Standardize promotion workflows

- Validate changes before production releases

- Automate schema delivery using CI/CD

Governance and Control

Leverage Harness approval gates, RBAC, and policy enforcement to ensure safe production changes. This helps organizations introduce governance into analytics database deployments without slowing down delivery velocity.

Deployment Visibility and Auditability

Track every BigQuery deployment with:

- Pipeline execution history

- Deployment logs

- Approval records

- Change visibility across environments

This creates a more transparent and auditable deployment process for data teams.

Why This Matters

As organizations increasingly rely on BigQuery to power analytics and AI workloads, database changes require the same level of automation and governance as application deployments.

By bringing BigQuery into Harness Database DevOps, teams can:

- Reduce manual deployment risk

- Improve collaboration between platform and data teams

- Standardize analytics database release processes

- Improve security with keyless authentication

- Accelerate delivery of data platform changes

Getting Started

BigQuery support for Harness Database DevOps is now available.

To get started:

- Configure a BigQuery JDBC connector in Harness

- Enable OIDC authentication using GCP Workload Identity Federation

- Add BigQuery change scripts to your repository

- Create a Harness pipeline to deploy and promote changes

- Automate BigQuery releases with confidence

Learn more about setup in our documentation.

Learn More

To learn more about using BigQuery with Harness Database DevOps, check out our documentation or schedule a demo.

Additional Resource - Warehouse Native BigQuery Integration

Feature Flag Tools Compared: 10 Best Platforms for Safer Releases

- Modern feature flag tools have evolved past simple on/off toggles into full experimentation platforms.

- The right platform plugs directly into your CI/CD pipeline and observability stack, so experimentation becomes a daily developer practice instead of an off-to-the-side project.

- Choosing a feature flag tool ultimately comes down to scale, governance, and how clearly each release ties to the business KPIs your leadership actually cares about.

The 10 Best Feature Flag Tools for 2026

Releasing new software used to be a big deal. You would set aside a Saturday night, wake up the on-call engineer, push the code, and hope that nothing broke before Monday morning.

Then came feature flags, which changed everything without anyone noticing.

Feature flags let you separate deployment from release, so you can send code to production in a dormant state and turn it on for users when you're ready. No more 1 a.m. maintenance windows. We don't have to ship every feature in a release together anymore, or scramble to pull one back with a hotfix. Just code in production, off by default, and ready when you say so.

The tools have improved a lot since then. Feature flag tools these days are more than just on/off switches. The best ones have flag management, progressive delivery, real-time release monitoring, A/B testing, and AI-driven guardrail metrics all built right into your CI/CD pipeline. That changes how a release looks, how a rollback feels, and how confident your team is when they ship.

Here's a look at the best feature flag tools available, along with what each one does well and what to look for when picking the right one for your team.

What Feature Flag Tools Really Do

A feature flag, or feature toggle, is a conditional block in your code that controls whether a new feature is active for a given user. Wrap a flag around a checkout page redesign, and you can push the code to production while keeping the new flow hidden from 99% of users. Set it to 1% as a canary, monitor your metrics, and gradually increase the rollout percentage if everything looks good.
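A percentage rollout like the one described above is often implemented by hashing each user into a stable bucket. The sketch below is one common approach, not the algorithm of any particular vendor SDK; `bucket`, `is_enabled`, and the flag key are hypothetical names.

```python
import hashlib


def bucket(flag_key: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in [0, 10000)."""
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 10_000


def is_enabled(flag_key: str, user_id: str, rollout_percent: float) -> bool:
    """Return True if the user falls inside the rollout percentage.

    rollout_percent is expressed as 0-100; 1.0 means a 1% canary.
    """
    return bucket(flag_key, user_id) < round(rollout_percent * 100)


# The conditional block that wraps the new feature (hypothetical flag key).
def checkout_flow(user_id: str, rollout_percent: float) -> str:
    if is_enabled("checkout-redesign", user_id, rollout_percent):
        return "new"
    return "legacy"
```

Because the bucket depends only on the flag key and user ID, a user who sees the new flow at 1% keeps seeing it as the percentage increases, which keeps the experience consistent during a gradual rollout.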

Feature flag tools handle the whole lifecycle: creating flags, targeting users, rolling them out incrementally, monitoring their impact, and retiring flags once they've served their purpose.

Modern platforms add a few more layers on top of that:

- Progressive delivery. Instead of releasing everything at once, release features to bigger groups of users over time, based on performance metrics.

- Experimentation. Use proper sample size calculations and significance testing to run statistically sound A/B tests.

- Release monitoring. Find out how feature exposure affects error rates, latency, and business KPIs in real time.

- Governance. RBAC, audit trails, and approval workflows for organizations operating in regulated industries.

The toggle itself isn't worth much. The safety net around it is.
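As an illustration of the sample size calculation mentioned under experimentation, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The function name and default values (5% significance, 80% power) are assumptions chosen for the example, not settings from any specific platform.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(
    baseline: float, mde: float, alpha: float = 0.05, power: float = 0.8
) -> int:
    """Approximate users needed per variant for a two-sided test.

    baseline: control conversion rate (e.g. 0.10)
    mde: minimum detectable absolute lift (e.g. 0.02)
    """
    p1 = baseline
    p2 = baseline + mde
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / mde**2)
```

Halving the detectable lift roughly quadruples the required sample, which is why small effects need long-running experiments.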

What to Look for in a Feature Flag Tool

Before you start looking at different tools, make sure you know what your team really needs. Some questions you should ask are:

Does it work with the CI/CD pipeline you already have? Your developers will work around a flag platform that is outside of your delivery workflow, not with it.

Can it connect flag exposure to your observability stack? You don't want three dashboards to cross-reference when something breaks at 3 a.m. You want one screen that tells you which feature caused the spike.

Will it scale with your traffic and your team? When you have millions of users, SDK performance, evaluation latency, and offline fallback are all important.

Does it cover governance for regulated environments? In healthcare, fintech, or anything touching PII, RBAC, approval workflows, immutable audit trails, and Policy as Code aren't optional.

How does it handle flag lifecycle management? Stale flags are technical debt. The best platforms include ownership assignment, sunset policies, and dashboards that surface flag age and usage frequency.

With those criteria in mind, here are the best tools to consider.

The 10 Best Feature Flag Tools

1. Harness Feature Management & Experimentation (FME)

Harness FME is a developer-first platform that brings feature management, A/B testing, and release monitoring into one unified system. Built on the combined Split and Harness lineage, FME is designed for enterprise teams that want experimentation baked into their CI/CD pipeline, not bolted on as a separate workflow.

What makes FME stand out:

- Unified flags and experimentation. Feature management and A/B testing share the same flag, SDK, and data pipeline. No parallel systems to reconcile.

- AI-driven release monitoring. Release monitoring automatically connects flag exposure to error rates, latency, and business KPIs. You know which feature broke something right away, not hours later.

- Warehouse-native experimentation. Run analysis directly on your Snowflake, BigQuery, or Databricks data, so experiment results live alongside the rest of your business intelligence.

- Automated rollback and progressive delivery. If p95 latency climbs 10% for 84 seconds, FME handles the rollback automatically while you sleep.

- Enterprise governance. RBAC, SAML federation, immutable audit logs, and approval workflows for regulated industries.

Best for: Enterprise engineering teams that want a single platform for feature flags, experimentation, and release monitoring, with deep CI/CD integration.
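To show what a latency guardrail of this kind checks, here is a minimal sketch of a sustained-breach rule. It is not how FME implements rollback; `Sample`, `should_roll_back`, and the default thresholds (taken from the example figures above) are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Sample:
    timestamp: float  # seconds since the rollout started
    p95_ms: float     # observed p95 latency in milliseconds


def should_roll_back(
    samples: Iterable[Sample],
    baseline_ms: float,
    max_increase: float = 0.10,
    sustain_seconds: float = 84.0,
) -> bool:
    """Return True if p95 stays above the limit for sustain_seconds."""
    limit = baseline_ms * (1 + max_increase)
    breach_start = None
    for s in sorted(samples, key=lambda s: s.timestamp):
        if s.p95_ms > limit:
            if breach_start is None:
                breach_start = s.timestamp
            if s.timestamp - breach_start >= sustain_seconds:
                return True
        else:
            breach_start = None  # a healthy sample resets the window
    return False
```

Requiring the breach to be sustained, rather than reacting to a single bad sample, avoids rolling back on momentary spikes.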

2. LaunchDarkly

LaunchDarkly is one of the oldest feature flag platforms on the market. It's a popular choice for teams that want a flag-first product with mature SDK support for most major languages.

Its strengths include broad SDK support, good targeting options, and a long track record in feature management. Some teams may prefer other vendors for bundled analytics or warehouse-native analysis. Teams that do a lot of A/B testing often pair LaunchDarkly with a separate analytics or stats engine, which adds complexity.

Best for: Teams whose primary need is feature flag management, with separate tooling for testing and observability.

3. Statsig

Statsig has become a popular platform for product-led growth teams because it has a free tier that includes feature flags, experimentation, and product analytics all in one place.

The platform's statistical engine is solid. It supports sequential testing and handles significance testing well. With warehouse-native mode, you can run analysis on your own data infrastructure. Statsig's enterprise governance is still maturing, and its RBAC and audit features aren't yet as strong as regulated industries typically require.

Best for: Product-led growth teams that want flags, experiments, and analytics in one system without heavy enterprise requirements.

Ownership note: Statsig announced in September 2025 that it would join OpenAI. OpenAI said Statsig would continue operating independently and serving current customers, so buyers may want to watch how the roadmap evolves under new ownership.

4. Optimizely Feature Experimentation

Optimizely's roots are in web-based A/B testing, and it brings that history of experimentation into its feature flag product. The platform's statistical methods are well-established, and marketing teams that have used other Optimizely products are likely to choose it.

The downside is that Optimizely's origins still show in places. The product is stronger for web and front-end use cases than for the kind of deep backend, infrastructure-level flag management that engineering teams often need. Teams focused purely on product engineering tend to be better served by more developer-native tools.

Best for: Marketing-engineering hybrid teams already invested in the Optimizely ecosystem who want to extend it to product feature testing.

5. PostHog

PostHog is an open-source platform that bundles product analytics, feature flags, experimentation, and session replay together. It's a popular pick for early-stage companies that want a lot of capability without paying for multiple platforms.

The all-in-one approach works well at a smaller scale. As you grow, you may find that specialized tools go deeper on individual capabilities, particularly enterprise-level flag management and statistical rigor. The self-hosted option is a meaningful advantage for teams with strict data residency requirements.

Best for: Startups and growth teams that want product analytics and feature flags in one place, with a self-hosting option.

6. Flagsmith

Flagsmith is a feature flag platform that is completely open source and can be hosted in the cloud or on your own server. It's a good choice for teams that need open-source flexibility (or strict self-hosting) but don't want to lose the polished product experience.

The platform does a good job of covering the basics, like targeting, segmentation, multivariate flags, and SDK support for most languages. It doesn't go as deep as enterprise platforms on advanced experimentation, AI-driven release monitoring, and deeply automated guardrails.

Best for: Teams with privacy requirements, self-hosting mandates, or a strong preference for open-source software.

7. Unleash

Unleash is another open-source option with a strong following in Kubernetes-native shops. It's known for being straightforward to set up, easy to understand, and well-suited to teams that want full control over their tooling.

Like Flagsmith, Unleash handles flag management well but doesn't extend as far into experimentation or release intelligence. If your team primarily needs to safely gate features and host the platform yourself, Unleash is a solid choice.

Best for: Open-source-first teams, especially those running Kubernetes infrastructure.

8. ConfigCat

ConfigCat markets itself as a simple, inexpensive feature flag service with transparent pricing and an easy setup. A lot of small to medium-sized teams choose it because they want to manage flags without the overhead that comes with a bigger platform.

The product includes the basics, such as targeting, segmentation, percentage rollouts, and integrations with popular tools. It wasn't built as an experimentation platform, so teams that need statistical analysis will have to pair it with something else.

Best for: Small-to-midsize teams that want lightweight, budget-friendly flag management without enterprise complexity.

9. GrowthBook

GrowthBook is an open-source feature flag platform originally built around warehouse-native experimentation. The premise: your experiment data is already in BigQuery, Snowflake, or Redshift, so it should be analyzed there rather than piped to a separate vendor.

For data teams that have invested heavily in their warehouse, GrowthBook is a strong fit. The statistical methods are rigorous: Bayesian and frequentist options, sequential testing, and CUPED variance reduction. The open-source model also gives you full control over the platform.
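For readers unfamiliar with CUPED, the idea is to subtract the part of each user's metric that is predictable from pre-experiment data, which lowers variance without shifting the mean. The sketch below shows the textbook adjustment; it is not GrowthBook's code, and `cuped_adjust` is a hypothetical name.

```python
from statistics import fmean, pvariance


def cuped_adjust(metric: list[float], covariate: list[float]) -> list[float]:
    """Return CUPED-adjusted metric values.

    metric: per-user values measured during the experiment
    covariate: the same users' values from before the experiment
    """
    if len(metric) != len(covariate) or len(metric) < 2:
        raise ValueError("metric and covariate must be equal-length, size >= 2")
    mean_x = fmean(covariate)
    mean_y = fmean(metric)
    var_x = pvariance(covariate, mean_x)
    if var_x == 0:
        return list(metric)  # a constant covariate carries no information
    cov_xy = fmean((x - mean_x) * (y - mean_y) for x, y in zip(covariate, metric))
    theta = cov_xy / var_x
    return [y - theta * (x - mean_x) for x, y in zip(covariate, metric)]
```

The stronger the correlation between pre-experiment and in-experiment behavior, the larger the variance reduction, and the fewer users an experiment needs to reach significance.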

Best for: Data teams that want serious warehouse-native experimentation with open-source control.

10. AWS AppConfig

AWS AppConfig is Amazon's native configuration and feature flag service for teams operating entirely within the AWS ecosystem. It integrates cleanly with Lambda, ECS, EKS, and EC2, and runs as a fully managed service under your existing AWS account.

The trade-off is depth. AppConfig treats flags as part of broader application configuration. It isn't a purpose-built platform for experimentation or release intelligence. Teams that need advanced targeting, A/B testing, and release monitoring at the level of a dedicated tool will outgrow it quickly.

Best for: AWS-native teams with modest flag requirements who want to stay within the AWS ecosystem.

How to Pick the Right Feature Flag Tool for Your Team

Once you've narrowed down your list, here are a few things to think about.

- Match the tool to your scale. A platform that works for a 10-person startup probably won't work for a business with 500 engineers, and vice versa. Check how well the SDK performs under load, how deep the governance is, and how the platform handles thousands of flags across hundreds of services.

- Look for pipeline-native integration. If turning on a flag means a developer has to stop what they're doing and switch to another tool, flags won't be used as much. The best platforms let you manage flags GitOps-style and trigger updates with CLI commands or pipeline steps.

- Build in flag hygiene from day one. Old flags are a type of technical debt. Look for dashboards that show each flag's lifecycle, sunset policies that define when a flag should be retired, and clear ownership for every flag. Amazon requires a flag removal task to be created when the flag itself is created, which is a good idea to copy.

- Plan for governance before you need it. RBAC, audit trails, approval workflows, and policy-as-code may seem like too much for a small project, but they cost a lot to add later. Get the governance basics set up early.

- Run a two-week pilot with one team before rolling out company-wide. You can learn more about a platform in two weeks with just one engineering team than you can with a dozen vendor demos. Don't just look at how well it works on its own; make sure it fits with your current tools.
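The flag hygiene point above can be sketched as a simple staleness check. This is a minimal illustration under assumed thresholds (90 days old or 30 days without an evaluation); `Flag`, `stale_flags`, and the field names are hypothetical and do not correspond to any vendor's data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Flag:
    key: str
    owner: str
    created_at: datetime
    last_evaluated_at: datetime


def stale_flags(
    flags: list[Flag],
    now: datetime,
    max_age: timedelta = timedelta(days=90),
    max_idle: timedelta = timedelta(days=30),
) -> list[Flag]:
    """Return flags that are older than max_age or idle longer than max_idle."""
    return [
        f
        for f in flags
        if now - f.created_at > max_age or now - f.last_evaluated_at > max_idle
    ]
```

Running a report like this on a schedule, and routing each result to the flag's owner, turns cleanup into a routine task instead of a periodic audit.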