
E2E Testing Has a New Bottleneck, and It's Not the Code

End-to-end (E2E) testing has always been the hardest part of a QA strategy. You're simulating real users, navigating real flows, validating real outcomes across browsers, environments, and data states that never hold still.

Traditional test automation tackled this with scripts: rigid, deterministic sequences tied to element selectors and hard-coded values. They worked until the UI changed. Or the data changed. Or a new team member touched the wrong locator. The result: flaky, expensive test maintenance cycles that teams quietly stopped trusting.

AI-driven test automation promised to fix this. And it has, but only for teams that figured out the new bottleneck. It's not the model. It's not the tooling. It's the prompt engineering.

In AI test automation, you don't write scripts anymore; you write instructions. And the quality of those instructions determines everything that follows.

What Is a Prompt in the Context of E2E Testing?

In general AI usage, a prompt is the input you give to get an output. In intelligent test automation, it's much more specific: a prompt is a natural language instruction that tells the AI testing engine what to do, what to verify, and how to handle what it finds.

A complete, well-formed test prompt for E2E automation includes five ingredients:

Goal

What business outcome is being tested? (e.g., 'User completes checkout with a promo code applied')

Context

Where does the test start? What preconditions exist? What user state or data should be assumed?

Specifics

Exact values, field names, amounts, account types, and formats, with no ambiguity about inputs or expected data.

Assertion

What does success look like? A confirmation message? A balance update? A redirect to a specific URL?

Boundaries

What should the AI NOT do? What's out of scope for this particular test step?

Miss any one of these, and you've handed the AI a half-built blueprint. It will fill in the gaps, just not necessarily the way you intended.
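One way to keep the five ingredients honest is to treat them as required fields and check for gaps before a prompt ever reaches the AI. The sketch below is a minimal illustration, not part of any testing tool: the `PromptSpec` class and its method names are hypothetical, and the example values are borrowed from the checkout scenario used in this article.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptSpec:
    """Hypothetical container for the five ingredients of a test prompt."""
    goal: str = ""
    context: str = ""
    specifics: str = ""
    assertion: str = ""
    boundaries: str = ""

    def missing_ingredients(self) -> list[str]:
        """Return the names of ingredients that are empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Join the non-empty ingredients into one natural-language prompt."""
        parts = [getattr(self, f.name).strip() for f in fields(self)]
        return " ".join(p for p in parts if p)


spec = PromptSpec(
    goal="Complete checkout with a promo code applied.",
    context="Start on the cart page as a logged-in user with one item priced at $100.00.",
    specifics="Apply promo code SAVE20.",
    assertion="Is the order total displayed as $80.00?",
)

print(spec.missing_ingredients())  # ['boundaries']
```

Here the check reports that no boundary was stated, which is exactly the kind of gap the AI would otherwise fill in on its own.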

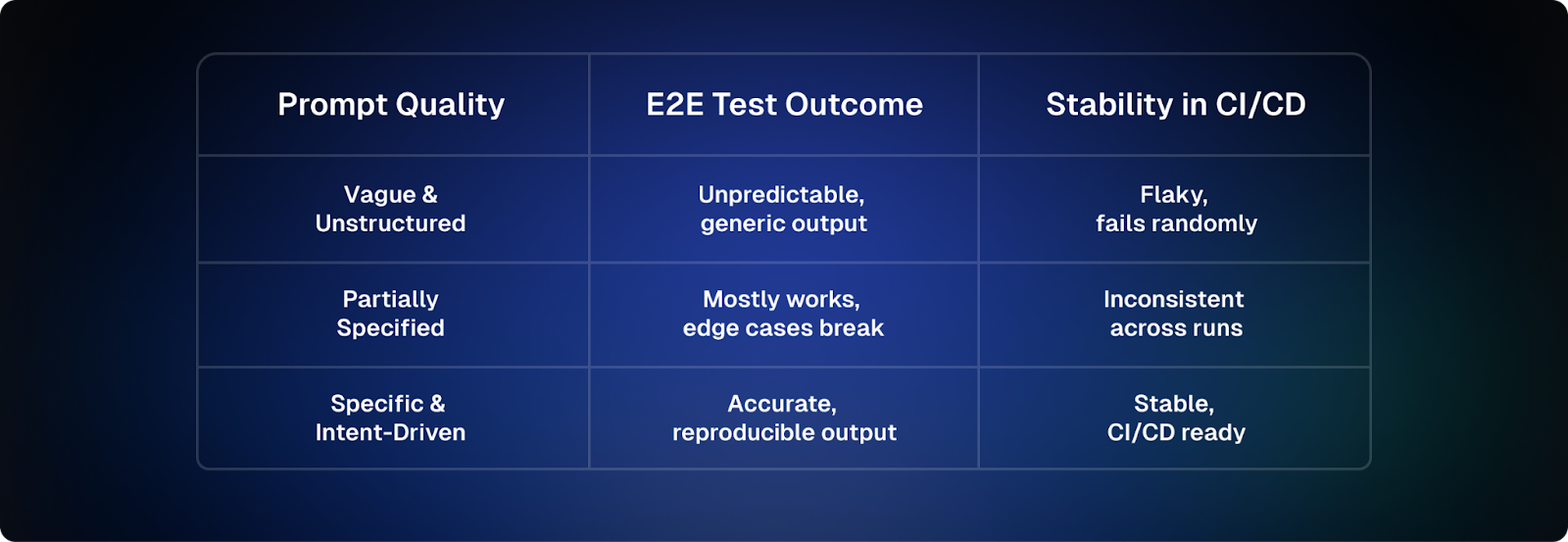

Why Prompt Quality Directly Determines Test Stability

Here's the fundamental truth of AI-driven testing: ambiguous prompts produce non-deterministic tests. And non-deterministic tests are worse than no tests at all; they create false confidence and burn engineering time chasing phantom failures.

The good news: prompt quality is entirely within your control. Unlike flaky network conditions or unpredictable UI re-renders, a badly written prompt is just a rewrite away from being a reliable one. This is also the foundation of self-healing tests: the more precisely a prompt states its intent, the more likely the test can adapt when the UI changes. Let's break down where prompts go wrong and right.

The Most Common Prompt Failures in E2E Testing

✅ EFFECTIVE PROMPT

"Navigate to the checkout page, apply promo code SAVE20, and verify the order total shows $80.00 after the discount is applied from $100.00."

❌ WEAK PROMPT

"Go to checkout and check the discount works."

✅ EFFECTIVE PROMPT

"Click on the row in the Orders table where the Status column shows 'Completed,' and the Order ID matches the ORDER_ID parameter."

❌ WEAK PROMPT

"Click on the completed order."

✅ EFFECTIVE PROMPT

"After the payment confirmation spinner disappears, assert: Is the text 'Payment Successful' visible on screen?"

❌ WEAK PROMPT

"Check that payment worked."

5 Prompt Patterns That Make E2E Tests Reliable

Pattern 1: The Intent + Outcome Pattern

Lead with the business intent, end with the verifiable outcome. This structure forces you to be clear about both what you're doing and how you'll know it worked.

"Complete a standard checkout as a guest user with item SKU-4421, shipping to postcode 90210, and verify the order confirmation page displays an order number."

Why it works: The AI knows the starting intent, the data to use, and exactly what constitutes success. No room for interpretation.

Pattern 2: The Precondition Guard

State what must be true before the test action begins. This prevents cascading failures caused by the AI attempting steps when the application isn't in the right state.

"Given the user is logged in and has at least one saved payment method, navigate to the subscription renewal page and click 'Renew Now'."

Why it works: Guards against false failures. If the precondition isn't met, the test fails meaningfully, not mysteriously.
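The difference between a meaningful and a mysterious failure can be sketched in a few lines. This is an illustration under stated assumptions, not how any AI testing engine is implemented: the `state` dictionary, the `PreconditionNotMet` exception, and `run_guarded_step` are all hypothetical names standing in for whatever the engine observes about the application.

```python
class PreconditionNotMet(Exception):
    """Raised when the application is not in the state the test requires."""


def run_guarded_step(state: dict, preconditions: dict, action) -> str:
    """Check every precondition before running the action.

    `state` is a hypothetical snapshot of application state; `preconditions`
    maps a human-readable description to a predicate over that snapshot.
    """
    for description, predicate in preconditions.items():
        if not predicate(state):
            raise PreconditionNotMet(f"Precondition not met: {description}")
    return action(state)


renewal_preconditions = {
    "user is logged in": lambda s: s.get("logged_in", False),
    "at least one saved payment method": lambda s: len(s.get("payment_methods", [])) >= 1,
}


def click_renew(state: dict) -> str:
    """Stand-in for the real UI interaction."""
    return "clicked 'Renew Now'"


ok_state = {"logged_in": True, "payment_methods": ["card-on-file"]}
print(run_guarded_step(ok_state, renewal_preconditions, click_renew))

bad_state = {"logged_in": True, "payment_methods": []}
try:
    run_guarded_step(bad_state, renewal_preconditions, click_renew)
except PreconditionNotMet as exc:
    print(exc)  # names the unmet precondition instead of failing mid-flow
```

The failure message points at the missing payment method rather than at a button that could not be found several steps later.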

Pattern 3: Content-Based References (Not Positions)

Never reference UI elements by their position on screen. Reference them by their visible content, label, or semantic role. This is the single biggest driver of self-healing tests and reduces test maintenance dramatically.

✅ EFFECTIVE PROMPT

"Select the product named 'Wireless Mouse' from the search results."

❌ WEAK PROMPT

"Select the second item in the search results."

Why it works: Lists reorder. Pages change. Content-based references survive both.
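To see why position is fragile, consider the same search results returned in a different order on two days. The snippet below is a toy model with made-up product lists; `select_by_position` and `select_by_name` are hypothetical helpers, not functions from any testing library.

```python
def select_by_position(results: list[str], index: int) -> str:
    """Positional lookup: returns whatever happens to sit at `index`."""
    return results[index]


def select_by_name(results: list[str], name: str) -> str:
    """Content-based lookup: finds the item by its visible name."""
    for item in results:
        if item == name:
            return item
    raise LookupError(f"No search result named {name!r}")


monday = ["USB Hub", "Wireless Mouse", "Laptop Stand"]
tuesday = ["Wireless Mouse", "Laptop Stand", "USB Hub"]  # same items, new ranking

# "Select the second item" picks a different product once the ranking changes.
print(select_by_position(monday, 1), "|", select_by_position(tuesday, 1))

# "Select the product named 'Wireless Mouse'" picks the same product both days.
print(select_by_name(monday, "Wireless Mouse"), "|", select_by_name(tuesday, "Wireless Mouse"))
```

The positional lookup silently selects the wrong product on the second day, while the content-based lookup either finds the right one or fails with a clear message.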

Pattern 4: Atomic Assertions

One assertion should test one condition. Compound assertions ('check that X is visible AND says Y AND the button is enabled') are harder for the AI to evaluate cleanly and produce confusing failure messages.

"Is the error message 'Invalid credentials' visible below the login form?"

Not: 'Is the error message visible and does it say Invalid credentials and is the login button still enabled?' Split these into three separate assertions.
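The payoff of splitting shows up in the failure report. The sketch below assumes a hypothetical `page` dictionary standing in for what the AI observes on screen; `evaluate_assertions` is an illustrative helper, not a real API.

```python
def evaluate_assertions(page: dict, assertions: dict) -> dict:
    """Evaluate each single-condition assertion and report a result per question."""
    return {question: bool(check(page)) for question, check in assertions.items()}


# Hypothetical observed state: the error is shown, but the button got disabled.
page = {
    "error_visible": True,
    "error_text": "Invalid credentials",
    "login_button_enabled": False,
}

atomic = {
    "Is an error message visible below the login form?":
        lambda p: p["error_visible"],
    "Does the error message read 'Invalid credentials'?":
        lambda p: p["error_text"] == "Invalid credentials",
    "Is the login button still enabled?":
        lambda p: p["login_button_enabled"],
}

results = evaluate_assertions(page, atomic)
failed = [question for question, passed in results.items() if not passed]

# A compound assertion collapses everything into one uninformative verdict.
compound_passed = all(results.values())

print(compound_passed)  # False
print(failed)           # ['Is the login button still enabled?']
```

The compound verdict says only that something is wrong; the atomic version names the one condition that failed.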

Pattern 5: The Fallback Instruction

For data that may not always exist (discounts, optional fields, conditional UI elements), always specify what the AI should do when that data is absent.

"Extract the promotional banner text into PROMO_TEXT, or set PROMO_TEXT to 'none' if no promotional banner is displayed on the page."

Why it works: Tests that handle absence are far more stable across different data states and environments.
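In code terms, the fallback instruction is the difference between a lookup that raises on missing data and one that returns a known default. The following sketch assumes, purely for illustration, that the banner appears in the page text after a `PROMO:` marker; that marker and the `extract_promo_text` function are hypothetical.

```python
import re


def extract_promo_text(page_text: str, default: str = "none") -> str:
    """Return the banner text after a hypothetical 'PROMO:' marker, or the default."""
    match = re.search(r"PROMO:\s*(.+)", page_text)
    return match.group(1).strip() if match else default


with_banner = "Welcome back\nPROMO: 20% off with SAVE20\nYour cart"
without_banner = "Welcome back\nYour cart"

print(extract_promo_text(with_banner))     # 20% off with SAVE20
print(extract_promo_text(without_banner))  # none
```

Later steps can then branch on PROMO_TEXT being 'none' instead of failing because the value was never set.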

Spotlight: Harness AI Test Automation

Harness AI Test Automation (AIT) is one of the most complete implementations of prompt-driven E2E testing available today. It replaces much of the manual scripting of Selenium/Playwright flows with an intent-driven model: you describe what a user wants to achieve, and Harness AI figures out how to test it.

The platform is built on an agentic AI testing architecture, an autonomous testing system that blends LLM reasoning with real-time application exploration, DOM analysis, and screenshot-based visual validation. What makes it especially relevant to this discussion is that Harness AIT exposes the quality of your prompts directly: write a vague intent, get an unreliable test. Write a precise one, get a test that runs stably in your CI/CD testing pipeline.

"Rather than scripting every step of 'add item to cart and checkout,' a tester writes: Verify that a user can add an item to the cart and complete checkout successfully. The AI testing tool interprets the intent and executes the full flow, including assertions."

The 4 Harness AI Command Types

Harness structures AI instructions into four command types for codeless test automation. Each has its own prompting rules; get them right and your tests become dramatically more stable.

AI Assertion - Verify application state at a specific point in execution

Write it like this:

"In the confirmation dialog, is the deposit amount displayed as $100.00?"

Avoid this:

"Is the amount correct?" The AI may have no memory of what amount was entered.

AI Command - Perform a specific, discrete UI interaction

Write it like this:

"After the loading spinner disappears, click the 'Continue' button in the payment form."

Avoid this:

"Click Continue." Which Continue? What if it's not ready yet?

AI Task - Execute a complete multi-step business workflow

Write it like this:

"Transfer $500 from Savings to Checking, confirm the transaction and verify both balances are updated correctly."

Avoid this:

"Transfer money between accounts." This is missing the values, the accounts, and the success criteria.

AI Extract Data - Capture dynamic values for use in subsequent test steps

Write it like this:

"Create parameter ORDER_ID and assign the order number from the confirmation message on this page."

Avoid this:

"Get the order number." Stored where? From which element?

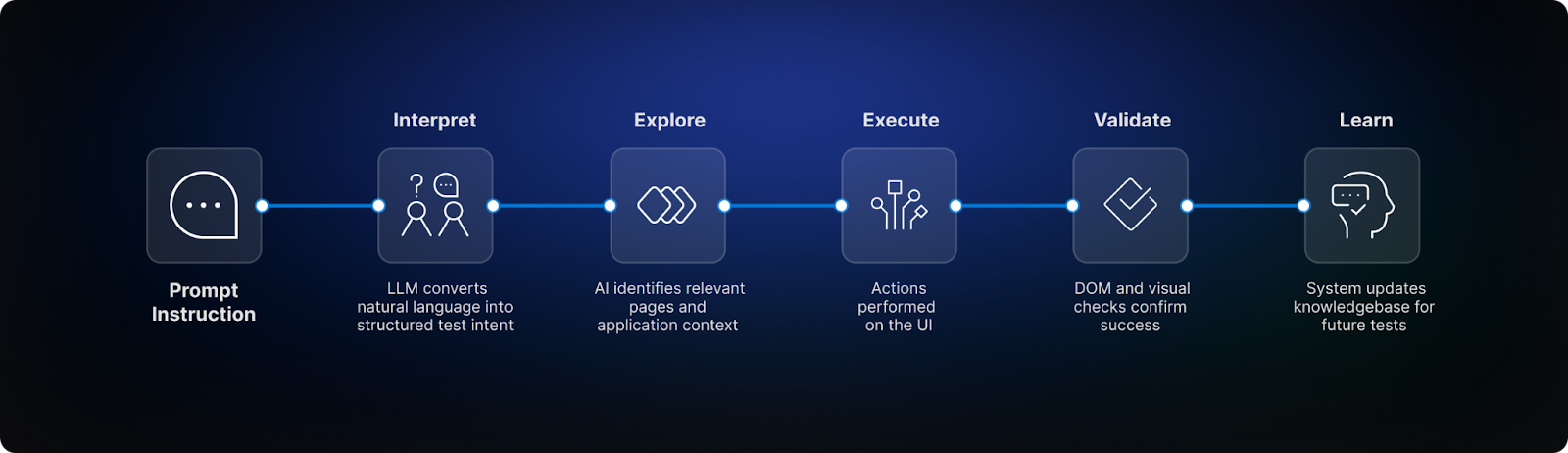

Harness AIT: How the Agentic Workflow Uses Your Prompts

When you submit an intent-driven prompt to Harness AIT, it goes through a five-stage pipeline, and the quality of your prompt shapes every stage:

1. Interpret

The LLM Interface Layer reads your natural language prompt and formulates a structured test intent. Vague prompts produce ambiguous intents.

2. Explore

The AI queries its App Knowledgebase (Application Context) to find relevant pages and flows. Specific context in your prompt narrows this search dramatically.

3. Execute

Each step is translated into an executable action. Content-based references in your prompt produce resilient steps. Positional ones produce fragile ones.

4. Validate

DOM and screenshot-based validation confirms both functional and visual state. Your assertion prompts define exactly what gets checked.

5. Learn

Each run updates the App Knowledgebase. Better prompts produce richer, more accurate knowledge, improving future test case generation and reducing test maintenance.

The Prompt Mistakes That Break E2E Tests

These are the most common prompt antipatterns seen in AI-driven E2E testing, each one a reliable way to introduce flakiness:

Positional References

Saying 'click the third row' or 'select the first option' creates tests that break every time data changes or UI reorders.

Missing Context

Assertions like 'Is the amount correct?' fail because the AI might not have any memory of previous steps. Restate the expected values in assertions. Every prompt must be self-contained.

Compound Assertions

Checking multiple conditions in one assertion makes failures ambiguous. One assertion, one condition, always.

No Success Criteria

Tasks like 'register a new user' without specifying what success looks like leave the AI guessing when to stop.

Assumed Data Formats

Not specifying 'extract the total as a number without a currency symbol' means you might get '$1,234.56' when you needed '1234.56'.

Ignoring Timing

Not accounting for loading states ('after the spinner disappears') is one of the top causes of intermittent test failures.
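Several of these antipatterns leave textual fingerprints, so a lightweight check can catch some of them before a prompt is committed. The linter below is a rough heuristic sketch, not a feature of Harness or any other tool: the word lists, the `lint_prompt` function, and the warning labels are all assumptions, and a keyword match will produce false positives (a field literally named "First name", for example).

```python
import re

# Ordinal words and suffixes that usually signal a positional reference.
POSITIONAL = re.compile(
    r"\b(first|second|third|fourth|fifth|last|\d+(?:st|nd|rd|th))\b", re.IGNORECASE
)
# Words that assert success without stating an expected value.
VAGUE = re.compile(r"\b(correct|works?|worked|properly)\b", re.IGNORECASE)
CONJUNCTION = re.compile(r"\band\b", re.IGNORECASE)


def lint_prompt(prompt: str) -> list[str]:
    """Return heuristic warnings for common prompt antipatterns."""
    warnings = []
    if POSITIONAL.search(prompt):
        warnings.append("positional reference")
    if VAGUE.search(prompt):
        warnings.append("no explicit expected value")
    # Only questions are treated as assertions; 'and' inside one suggests several checks.
    if prompt.strip().endswith("?") and CONJUNCTION.search(prompt):
        warnings.append("possible compound assertion")
    return warnings


for text in [
    "Click the third row.",
    "Is the amount correct?",
    "Is the error message visible and is the login button enabled?",
    "In the confirmation dialog, is the deposit amount displayed as $100.00?",
]:
    print(lint_prompt(text), "<-", text)
```

A check like this cannot judge whether a prompt is complete, but it can flag the cheapest mistakes early and leave the harder review to a human.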

The Takeaway: Prompts Are Your New Test Scripts

End-to-end testing has always required precision. The medium has changed, from XPath selectors and coded steps to natural language testing instructions, but the requirement for precision hasn't. If anything, the stakes are higher because a poorly written prompt now fails invisibly: the AI will attempt something, just not what you intended.

The teams getting the most out of AI test automation are not the ones with the most sophisticated models. They're the ones who've learned to write clear, specific, self-contained instructions through effective prompt engineering. They know the difference between 'click the third button' and 'click the Submit button in the payment form.' They end every assertion with a question mark and every task with a success criterion.

Platforms like Harness AI Test Automation are built to reward exactly this kind of precision, turning well-crafted prompts into stable, self-healing tests that are ready for CI/CD pipelines and survive the real world with minimal test maintenance.

"The art of prompt engineering isn't about clever wording. It's about transferring your intent, completely and unambiguously, to an autonomous testing system that will act on every word you write."

Write with that precision, and your intelligent test automation will finally be the safety net it was always meant to be.

Ready to Transform Your Testing with AI?

Harness AI Test Automation empowers teams to move faster with confidence. Key benefits include:

- Faster test creation: Write robust, intent-driven tests in minutes rather than hours

- Reduced test maintenance: Self-healing tests adapt to UI changes automatically, slashing maintenance by up to 70%

- Improved collaboration: Align developers, testers, and product managers around shared intent with natural language testing

- CI/CD ready: Seamlessly integrate with your existing pipelines and accelerate software delivery

Harness AI Test Automation turns traditional QA challenges into opportunities for smarter, more reliable automation, enabling organizations to release software faster while maintaining high quality.

If you're ready to eliminate flaky tests, simplify maintenance, and improve test reliability with intent-driven, natural-language testing, try Harness AI Test Automation today or contact our team to see how it can transform your testing experience.
