Releasing fearlessly isn't just about getting code into production safely. It's about knowing what happened after the release, trusting the answer, and acting on it without stitching together three more tools.

That is where many teams still break down.

They can deploy. They can gate features. They can even run experiments. But the moment they need trustworthy results, the workflow fragments. Event data moves into another system. Metric definitions drift from business logic. Product, engineering, and data teams start debating the numbers instead of deciding what to do next.

That's why Warehouse Native Experimentation matters.

Today, Harness is making Warehouse Native Experimentation generally available in Feature Management & Experimentation (FME). After proving the model in beta, this capability is now ready for broader production use by teams that want to run experiments directly where their data already lives.

This is an important launch on its own. It is also an important part of the broader Harness platform story.

Because “release fearlessly” is incomplete if experimentation still depends on exported datasets, shadow pipelines, and black-box analysis.

Releasing fearlessly requires more than safer deployments

The AI era changed one thing fast: the volume of change.

Teams can create, modify, and ship software faster than ever. What didn't automatically improve was the system that turns change into controlled outcomes. Release coordination, verification, experimentation, and decision-making are still too often fragmented across different tools and teams.

That's the delivery gap.

In a recent Harness webinar, Lena Sano, a Software Developer on the Harness DevRel team, and I showed why this matters. Our point was straightforward: deployment alone is not enough. As I said in the webinar, feature flags are “the logical end of the pipeline process.”

That framing matters because it moves experimentation out of the “nice to have later” category and into the release system itself.

When teams deploy code with Harness Continuous Delivery, expose functionality with Harness FME, and now analyze experiment outcomes with trusted warehouse data, the release moment becomes a closed loop. You don't just ship. You learn.

Warehouse Native Experimentation is now generally available

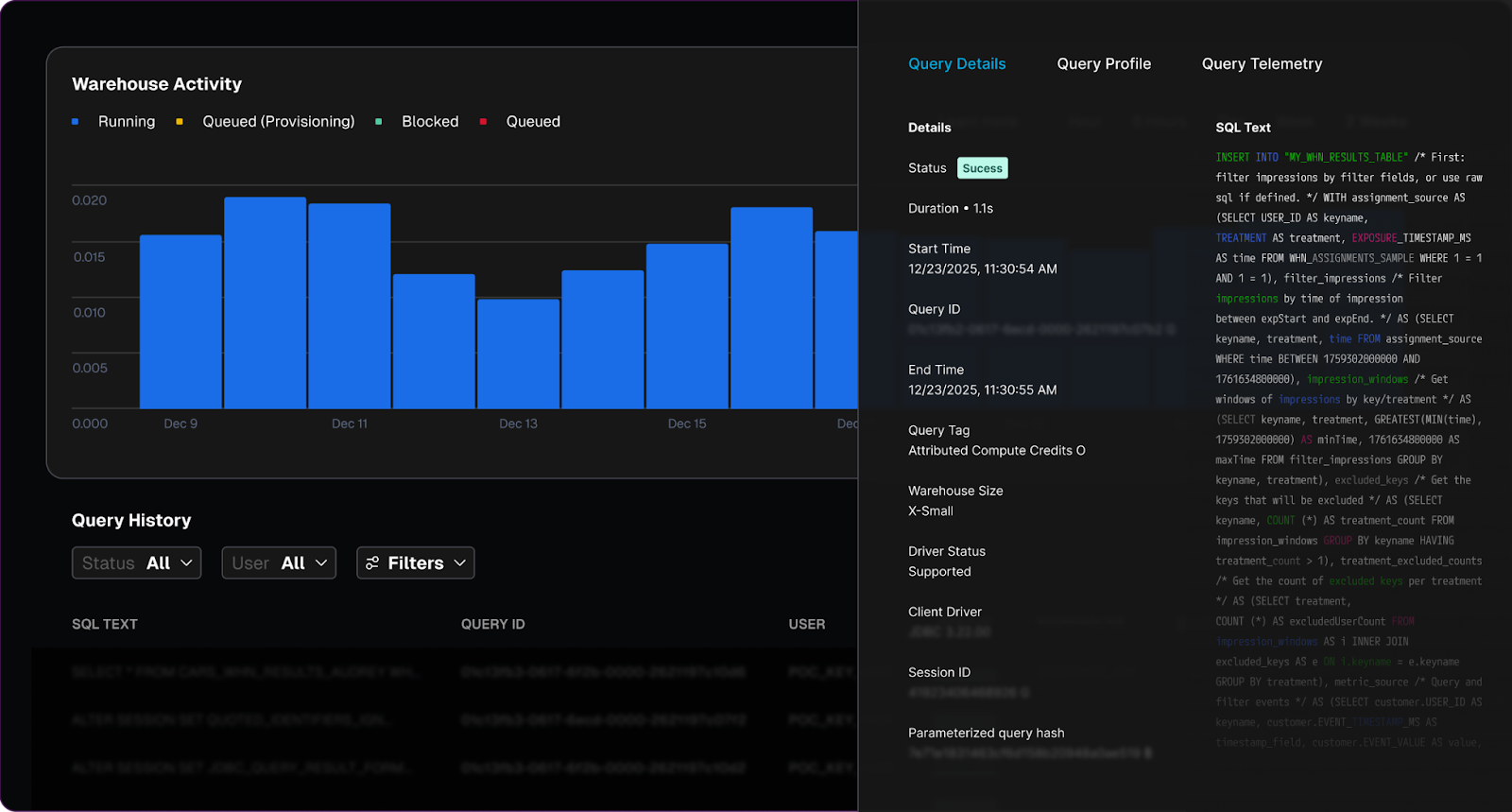

Warehouse Native Experimentation extends Harness FME with a model that keeps experiment analysis inside the data warehouse instead of forcing teams to export data into a separate analytics stack.

That matters for three reasons.

First, it keeps teams closer to the source of truth the business already trusts.

Second, it reduces operational drag. Teams do not need to build and maintain extra pipelines that move assignment and event data just to answer basic product questions.

Third, it makes experimentation more credible across functions. Product teams, engineers, and data stakeholders can work from the same governed data foundation instead of arguing over two competing systems.

General availability makes this model ready to support production experimentation programs that need more than speed. They need trust, repeatability, and platform-level consistency.

Why the old experimentation model breaks at AI speed

Traditional experimentation workflows assume that analysis can happen somewhere downstream from release. That assumption does not hold up well anymore.

When development velocity rises, so does the volume of features to evaluate. Teams need faster feedback loops, but they also need stronger confidence in the data behind the decision. If every experiment requires moving data into another system, recreating business metrics, and validating opaque calculations, the bottleneck just shifts from deployment to analysis.

That's the wrong pattern for platform teams.

Platform teams are being asked to support higher release frequency without increasing risk. They need standardized workflows, strong governance, and fewer manual handoffs. They do not need another disconnected toolchain where experimentation introduces more uncertainty than it removes.

Warehouse Native Experimentation addresses that by bringing experimentation closer to the release process and closer to trusted business data at the same time.

What Warehouse Native Experimentation does differently

This launch matters because it changes how experimentation fits into the software delivery model.

Experiment where your data lives

Warehouse Native Experimentation lets teams run analyses directly in supported data warehouses rather than exporting experiment data into an external system first.

That is a meaningful shift.

It means your experiment logic can operate where your product events, business events, and governed data models already exist. Instead of copying data out and hoping definitions stay aligned, teams can work from the warehouse as the source of truth.

For organizations already invested in platforms like Snowflake or Amazon Redshift, this reduces friction and increases confidence. It also helps avoid the shadow-data problem that shows up when experimentation becomes one more separate analytics island.
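
To make the idea concrete, here is a minimal sketch of analysis that runs as a query next to the data rather than on an exported copy. It is an illustration only: an in-memory SQLite database stands in for the warehouse, and the `assignments` and `events` tables, their columns, and the `conversion_by_treatment` helper are all hypothetical names, not the Harness schema or API.

```python
import sqlite3

# In-memory SQLite stands in for a real warehouse (assumption for illustration).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE assignments (user_id TEXT, treatment TEXT);
    CREATE TABLE events (user_id TEXT, event_type TEXT);
    INSERT INTO assignments VALUES
        ('u1', 'on'), ('u2', 'on'), ('u3', 'off'), ('u4', 'off');
    INSERT INTO events VALUES
        ('u1', 'purchase'), ('u1', 'purchase'), ('u2', 'purchase'),
        ('u3', 'purchase'), ('u4', 'page_view');
""")

# Join assignment data to event data in place. A user counts as converted
# if they have at least one 'purchase' event; repeat purchases count once.
QUERY = """
    SELECT a.treatment,
           COUNT(DISTINCT a.user_id) AS users,
           COUNT(DISTINCT e.user_id) AS converters
    FROM assignments AS a
    LEFT JOIN events AS e
           ON e.user_id = a.user_id AND e.event_type = 'purchase'
    GROUP BY a.treatment
    ORDER BY a.treatment
"""

def conversion_by_treatment(connection):
    """Return users, converters, and conversion rate per treatment."""
    rows = connection.execute(QUERY).fetchall()
    return {
        treatment: {"users": users, "converters": converters,
                    "rate": converters / users}
        for treatment, users, converters in rows
    }

results = conversion_by_treatment(conn)
```

The point of the sketch is that no data leaves the database: the only thing that moves is the aggregated result.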

Create metrics that reflect the business

Good experimentation depends on metric quality.

Warehouse Native Experimentation lets teams define metrics from the warehouse tables they already trust. That includes product success metrics as well as guardrail metrics that help teams catch regressions before they become larger incidents.

This is a bigger capability than it may appear.

Many experimentation programs fail not because teams lack ideas, but because they cannot agree on what success actually means. When conversion, latency, revenue, or engagement are defined differently across tools, the experiment result becomes negotiable.

Harness moves that discussion in the right direction. The metric should reflect the business reality, not the reporting limitations of a separate experimentation engine.
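
One way to picture a shared metric definition is as a small, reviewable object that renders to SQL over a governed table. The sketch below assumes hypothetical table and column names (`orders`, `request_logs`, `user_id`, `latency_ms`) and a hypothetical `MetricDefinition` class; it is not how Harness FME represents metrics.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MetricDefinition:
    """A metric defined once, against a table the business already trusts."""
    name: str
    table: str            # governed warehouse table (hypothetical name)
    expression: str       # SQL aggregate over that table, aliased as m
    guardrail: bool = False  # True for metrics that should not regress

    def to_sql(self, assignments_table: str = "assignments") -> str:
        # Render a per-treatment query; every team reads the same SQL.
        return (
            f"SELECT a.treatment, {self.expression} AS {self.name}\n"
            f"FROM {assignments_table} AS a\n"
            f"LEFT JOIN {self.table} AS m ON m.user_id = a.user_id\n"
            f"GROUP BY a.treatment"
        )

# A success metric and a guardrail metric, both illustrative.
revenue = MetricDefinition("revenue_per_user", "orders", "AVG(m.amount)")
latency = MetricDefinition("avg_latency_ms", "request_logs",
                           "AVG(m.latency_ms)", guardrail=True)

latency_sql = latency.to_sql()
```

Because the definition is plain data plus generated SQL, a disagreement about what "conversion" or "latency" means becomes a review of one expression rather than a comparison of two tools.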

Understand impact with transparent results

Speed matters. Trust matters more.

Warehouse Native Experimentation helps teams understand impact with results that are transparent and inspectable. That gives engineering, product, and data teams a better basis for action.

The practical benefit is simple: when a result looks surprising, teams can validate the logic instead of debating whether the tool is doing something hidden behind the scenes.
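
As an example of what "validate the logic" can look like, here is a textbook two-proportion z-test written out in full. This post does not describe the statistical method Harness uses, so treat this as a generic, inspectable calculation a team could run on per-treatment counts, not as the product's algorithm. The function name and return shape are illustrative.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of control (a) and treatment (b).

    Returns the absolute lift, the z statistic, and a two-sided p-value
    under the pooled-variance normal approximation.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    variance = pooled * (1 - pooled) * (1 / n_a + 1 / n_b)
    if variance == 0:
        raise ValueError("no variance: all users converted or none did")
    z = (p_b - p_a) / math.sqrt(variance)
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return {"lift": p_b - p_a, "z": z, "p_value": p_value}

# Illustrative counts, not real results.
result = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
```

Every intermediate value here can be recomputed by hand from the counts, which is the property that makes a surprising result debuggable.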

That transparency is a major part of the launch story. Release fear decreases when teams trust both the rollout controls and the data used to judge success.

Why this is a platform story, not a point feature

Warehouse Native Experimentation is valuable on its own. But its full value shows up when you look at how it fits into the Harness platform.

Pipelines standardize the release moment

In the webinar, Lena demonstrated a workflow where a pipeline controlled flag status, targeting, approvals, rollout progression, and even cleanup. I emphasized that “95% of it was run by a single pipeline.”

That is not just a demo detail. It is the operating model platform teams want.

Pipelines make releases consistent. They reduce team-to-team variation. They create auditability. They turn release behavior into a reusable system instead of a series of manual decisions.

FME controls exposure and learning

Harness FME gives teams the ability to decouple deployment from release, expose features gradually, target specific cohorts, and run experiments as part of a safer delivery motion.

That is already powerful.

It lets teams avoid full application rollback when one feature underperforms. It lets them isolate problems faster. It gives product teams a structured way to learn from real usage without treating every feature launch like an all-or-nothing event.
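
Gradual exposure is commonly implemented with deterministic bucketing: hash the user and flag into a stable bucket, then compare it to the rollout percentage. The sketch below shows that general technique with hypothetical function names; it is not a description of how Harness FME assigns users.

```python
import hashlib

def bucket(user_id: str, flag_name: str, buckets: int = 100) -> int:
    """Map a user to a stable bucket in [0, buckets) for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % buckets

def is_exposed(user_id: str, flag_name: str, rollout_percent: int) -> bool:
    """A user sees the feature if their bucket falls under the rollout."""
    return bucket(user_id, flag_name) < rollout_percent

# Raising the percentage only adds users; nobody already exposed is removed.
exposed_at_10 = {u for u in (f"user-{i}" for i in range(1000))
                 if is_exposed(u, "new-checkout", 10)}
exposed_at_50 = {u for u in (f"user-{i}" for i in range(1000))
                 if is_exposed(u, "new-checkout", 50)}
```

Including the flag name in the hash keeps a user's bucket independent across flags, so the same people are not always first in line for every rollout.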

Warehouse-native analysis closes the loop with trusted data

Warehouse Native Experimentation completes that model.

Now the experiment does not end at exposure control. It continues into governed analysis using the data infrastructure the business already depends on. The result is a tighter loop from release to measurement to decision.

That is why this is a platform launch.

Harness is not asking teams to choose among delivery tooling, experimentation tooling, and warehouse trust. The platform brings those motions together:

- Deploy the change safely with pipelines.

- Release it progressively with feature management.

- Measure it with experiments tied to trusted warehouse data.

- Standardize the workflow so teams can repeat it at scale.

That is what “release fearlessly” looks like when it extends beyond deployment.

The operating model: release, verify, learn, standardize

Engineering leaders should think about this launch as a better operating model for software change.

Release with control. Use pipelines and feature flags to separate deployment from feature exposure.

Verify with the right signals. Use guardrail metrics and rollout logic to contain risk before it spreads.

Learn from trusted data. Run experiments against the warehouse instead of recreating the truth somewhere else.

Standardize the process. Make approvals, measurement, and cleanup part of the same repeatable workflow.
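
The four steps above can be sketched as a simple loop: raise exposure in stages, check a guardrail at each stage, and turn the feature off if the guardrail is breached. The step percentages, the `guardrail_ok` callback, and the result shape are assumptions made for illustration; a real pipeline would also handle approvals and cleanup.

```python
from typing import Callable, Sequence

def run_progressive_rollout(
    steps: Sequence[int],
    guardrail_ok: Callable[[int], bool],
) -> dict:
    """Walk through exposure percentages, halting on a guardrail breach.

    guardrail_ok(percent) stands in for a query against guardrail metrics
    measured while the feature is exposed to `percent` of users.
    """
    exposure = 0
    for percent in steps:
        exposure = percent              # release: widen exposure
        if not guardrail_ok(percent):   # verify: check guardrail metrics
            # Contain the risk by turning the feature off entirely.
            return {"status": "halted", "exposure": 0, "failed_at": percent}
    return {"status": "completed", "exposure": exposure, "failed_at": None}

# Illustrative runs: one healthy rollout, one where the guardrail
# starts failing once half of users are exposed.
healthy = run_progressive_rollout([5, 25, 50, 100], lambda percent: True)
breached = run_progressive_rollout([5, 25, 50, 100], lambda percent: percent < 50)
```

Encoding the stages and the stop condition in one function is what makes the process repeatable: every team that reuses it gets the same rollout behavior and the same audit trail of where a release stopped.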

This is especially important for platform teams trying to keep pace with AI-assisted development. More code generation only helps the business if the release system can safely absorb more change and turn it into measurable outcomes.

Warehouse Native Experimentation helps make that possible.

Who should care now

This feature will be especially relevant for teams that:

- already store product and business event data in a warehouse

- want to reduce the overhead of separate experimentation infrastructure

- need stronger trust and governance around experiment analysis

- are standardizing progressive delivery practices across multiple teams

- want experimentation to support release operations instead of sitting outside them

Want to see it in action?

As software teams push more change through the system, trusted experimentation can no longer sit off to the side. It has to be part of the release model itself.

Harness now gives teams a stronger path to do exactly that: deploy safely, release progressively, and measure impact where trusted data already lives. That is not just better experimentation. It is a better software delivery system.

Ready to see how Harness helps teams release fearlessly with trusted, warehouse-native experimentation? Contact Harness for a demo.

FAQ

What is Warehouse Native Experimentation?

Warehouse Native Experimentation is a capability in Harness FME that lets teams analyze experiment outcomes directly in their data warehouse. That keeps experimentation closer to governed business data and reduces the need to export data into separate analysis systems.

Why does general availability matter?

GA signals that the capability is ready for broader production adoption. For platform and product teams, that means Warehouse Native Experimentation can become part of a standardized release and experimentation workflow rather than a limited beta program.

How is warehouse-native experimentation different from traditional experimentation tools?

Traditional approaches often require moving event data into a separate system for analysis. Warehouse-native experimentation keeps analysis where the data already lives, which improves trust, reduces operational overhead, and helps align experiment metrics with business definitions.

How does this support the “release fearlessly” story?

Safer releases are not only about deployment controls. They also require trusted feedback after release. Warehouse Native Experimentation helps teams learn from production changes using governed warehouse data, making release decisions more confident and more repeatable.

How does this fit with Harness pipelines and feature flags?

Harness pipelines help standardize the release workflow, while Harness FME controls rollout and experimentation. Warehouse Native Experimentation adds trusted measurement to that same motion, closing the loop from deployment to exposure to decision.

Who benefits most from this capability?

Organizations with mature data warehouses, strong governance requirements, and a need to scale experimentation across teams will benefit most. It is especially relevant for platform teams that want experimentation to be part of a consistent software delivery model.