AI is proliferating across enterprise environments faster than security teams can govern it. From third-party LLM integrations to agent tooling built on the Model Context Protocol (MCP), most organisations have limited visibility into how many AI systems are running, what data they process, or what risks they introduce.

Why AI Security Posture Management Matters Now

Three realities are driving this to the top of the security agenda:

- Rapid, ungoverned adoption: AI integrations enter production through multiple vectors simultaneously - developer experiments, third-party SaaS with embedded LLM capabilities, and MCP servers that expose tools and data sources to autonomous AI agents - often without security review.

- Systemic lack of visibility: Most CISOs cannot accurately answer how many AI APIs are running in their environment. AI integrations do not announce themselves to security teams; they surface in compliance audits and incident investigations months after deployment.

- Sensitive data exposure at the AI layer: Every AI API is a potential data exposure point. Prompts carry confidential business context and personal information. Model responses can surface training data or infer sensitive attributes. Traditional API security tools were not built to address this risk.

Example: Shadow AI in a financial services firm

A quantitative analyst team integrates an LLM into their research workflow. The integration ships as a product feature. Six months later, a compliance review finds the endpoint is externally accessible, processes client PII, and transmits data to a third-party model provider outside the scope of the firm's data processing agreements. The AI system existed, processed regulated data, and created regulatory exposure - entirely outside the security programme's awareness.

The Framework: Discover - Understand - Assess Risk - Operationalise

Effective AI security is not a single capability - it is a continuous workflow across four phases:

| 01 Discover | 02 Understand | 03 Assess Risk | 04 Operationalise |
|---|---|---|---|
| Shadow AI & MCP | Sensitive data flows | AI-specific risks | Integrate into SecOps |

01 Discover: Build the Complete AI Asset Inventory

Harness continuously discovers and classifies every AI asset from live traffic and API specifications - no manual registration required:

- AI APIs: endpoints connecting to external LLM providers (OpenAI, Google, Anthropic, Azure OpenAI)

- MCP servers and tools: discrete capabilities exposed to AI agents, including database queries and external API calls

- MCP resources: data sources exposed to AI agents, including files, documents, and structured data that provide context to AI workflows

- Non-AI APIs: APIs that interact with AI infrastructure - including calls to vector databases and RAG (Retrieval-Augmented Generation) data stores - that form part of the AI data pipeline

Shadow AI found by Harness is risk-scored, ownership-flagged, and surfaced for immediate security review. The finding moves directly into the vulnerability lifecycle with a URL, environment classification, and traffic record.
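To make the asset categories above concrete, here is a minimal sketch of how an observed call might be bucketed. It is an illustration under stated assumptions, not a description of how Harness classifies traffic: the function name `classify_call`, the category labels, and the short host list are all hypothetical. The only external facts it relies on are the public API hostnames of the listed providers and the fact that MCP messages are JSON-RPC 2.0 requests with methods such as `tools/call` and `resources/read`.

```python
import json
from urllib.parse import urlparse

# Illustrative, deliberately incomplete list of external LLM provider hosts.
LLM_PROVIDER_HOSTS = {
    "api.openai.com": "OpenAI",
    "api.anthropic.com": "Anthropic",
    "generativelanguage.googleapis.com": "Google",
}


def classify_call(url: str, body: str = "") -> str:
    """Return a coarse asset category for one observed request (hypothetical labels)."""
    host = urlparse(url).hostname or ""

    # Calls to a known provider host, or to an Azure OpenAI resource subdomain.
    if host in LLM_PROVIDER_HOSTS or host.endswith(".openai.azure.com"):
        return "ai_api"

    # MCP traffic is JSON-RPC 2.0; the method prefix distinguishes tools from resources.
    try:
        payload = json.loads(body) if body else {}
    except json.JSONDecodeError:
        payload = {}

    if isinstance(payload, dict) and payload.get("jsonrpc") == "2.0":
        method = payload.get("method")
        if isinstance(method, str):
            if method.startswith("tools/"):
                return "mcp_tool"
            if method.startswith("resources/"):
                return "mcp_resource"

    return "unclassified"
```

A production classifier would need many more signals (response shapes, SDK user agents, vector database protocols); the point here is only that classification can be driven by what is seen on the wire rather than by manual registration.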

02 Understand: Map Sensitive Data Flows Across the AI Data Plane

Harness continuously analyses AI API and MCP traffic to identify sensitive data types flowing through every discovered endpoint:

- Request payloads: prompts, user context, and input parameters carrying PII, financial data, or health information

- Response payloads: model outputs and generated content that may surface training data or infer sensitive attributes

- Third-party boundaries: every inference call to an external LLM provider is a data transfer event; Harness monitors exactly what data crosses the model provider boundary

When sensitive data appears in an AI endpoint for the first time, or is transmitted to an external provider, Harness surfaces a real-time Posture Event - giving privacy and compliance teams the window to act before an exposure becomes a breach notification obligation.
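The "first time a sensitive data type appears on an endpoint" trigger can be sketched as follows. This is a simplified illustration, not the Harness detection engine: the `DataFlowTracker` class, the event fields, and the two regular expressions are assumptions made for the example, and real classifiers use far richer detection than pattern matching.

```python
import re
from collections import defaultdict

# Hypothetical, deliberately small pattern set for illustration only.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


class DataFlowTracker:
    """Remembers which sensitive data types have been seen on each endpoint."""

    def __init__(self):
        self._seen = defaultdict(set)  # endpoint -> set of data type names

    def observe(self, endpoint: str, payload: str) -> list:
        """Scan one payload; return an event for each data type new to this endpoint."""
        found = {name for name, rx in PATTERNS.items() if rx.search(payload)}
        new_types = found - self._seen[endpoint]
        self._seen[endpoint] |= found
        return [
            {"event": "new_sensitive_data_type", "endpoint": endpoint, "data_type": t}
            for t in sorted(new_types)
        ]


tracker = DataFlowTracker()
first = tracker.observe("/v1/research/ask", "Summarise the account for jane.doe@example.com")
repeat = tracker.observe("/v1/research/ask", "Email jane.doe@example.com again")
# `first` holds one event for "email"; `repeat` is empty because nothing new appeared.
```

Emitting an event only on the first occurrence keeps the signal actionable: teams are alerted when an endpoint's data profile changes, not on every request.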

03 Assess Risk: AI-Specific Vulnerability Detection and Risk Scoring

Harness detects AI API & MCP tool vulnerabilities passively from live traffic - no active scanning, no disruption to production AI workloads. Detection covers:

- OWASP API Security Top 10: applied specifically to AI traffic patterns, including authentication and authorisation weaknesses, excessive data exposure in model responses, and missing rate limiting on LLM endpoints

- MCP-specific risks: tools exposed to the public internet without authentication

- Specification drift: shadow endpoints (traffic but no spec) and orphan endpoints (spec but no traffic) identified by continuous conformance analysis
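The shadow/orphan distinction in the last item reduces to two set differences between documented and observed endpoints. A minimal sketch, assuming endpoints are represented as hypothetical `(method, path)` tuples:

```python
def conformance_drift(spec_endpoints: set, traffic_endpoints: set) -> dict:
    """Compare documented endpoints against endpoints observed in live traffic."""
    return {
        # Traffic but no spec: undocumented endpoints.
        "shadow": sorted(traffic_endpoints - spec_endpoints),
        # Spec but no traffic: documented endpoints nobody calls.
        "orphan": sorted(spec_endpoints - traffic_endpoints),
    }


spec = {("POST", "/v1/chat"), ("GET", "/v1/legacy-summarise")}
traffic = {("POST", "/v1/chat"), ("POST", "/internal/llm-proxy")}
drift = conformance_drift(spec, traffic)
# drift["shadow"] == [("POST", "/internal/llm-proxy")]
# drift["orphan"] == [("GET", "/v1/legacy-summarise")]
```

In practice path parameters would need normalising (for example `/users/123` to `/users/{id}`) before the comparison is meaningful.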

Risk scoring applies AI-specific weighting: an unauthenticated, externally exposed LLM endpoint is simultaneously a prompt injection target, a data extraction vector, and a compute abuse surface. Scores are dynamic, recalculating as traffic patterns and sensitive data classifications change.
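One way to picture compounding, dynamic scoring is an additive model with a bonus when the risky conditions coincide. The sketch below is purely illustrative: the `EndpointPosture` fields, the weights, and the 0 to 100 scale are assumptions for the example, not the Harness scoring algorithm.

```python
from dataclasses import dataclass, field


@dataclass
class EndpointPosture:
    authenticated: bool
    externally_exposed: bool
    is_llm_endpoint: bool
    sensitive_data_types: set = field(default_factory=set)


def risk_score(p: EndpointPosture) -> int:
    """Return a 0-100 score using hypothetical weights."""
    score = 0
    if not p.authenticated:
        score += 30
    if p.externally_exposed:
        score += 25
    if p.is_llm_endpoint:
        score += 15
    # Each sensitive data type adds risk, capped so it cannot dominate the score.
    score += min(len(p.sensitive_data_types) * 10, 20)
    # Compounding bonus: an open, exposed LLM endpoint is worse than the sum of its parts.
    if not p.authenticated and p.externally_exposed and p.is_llm_endpoint:
        score += 10
    return min(score, 100)


endpoint = EndpointPosture(authenticated=False, externally_exposed=True, is_llm_endpoint=True)
before = risk_score(endpoint)              # 80
endpoint.sensitive_data_types.add("email")
after = risk_score(endpoint)               # 90: the score moves as classifications change
```

Because the score is a pure function of current posture, recalculating it whenever traffic or data classifications change is cheap, which is what makes a dynamic score practical.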

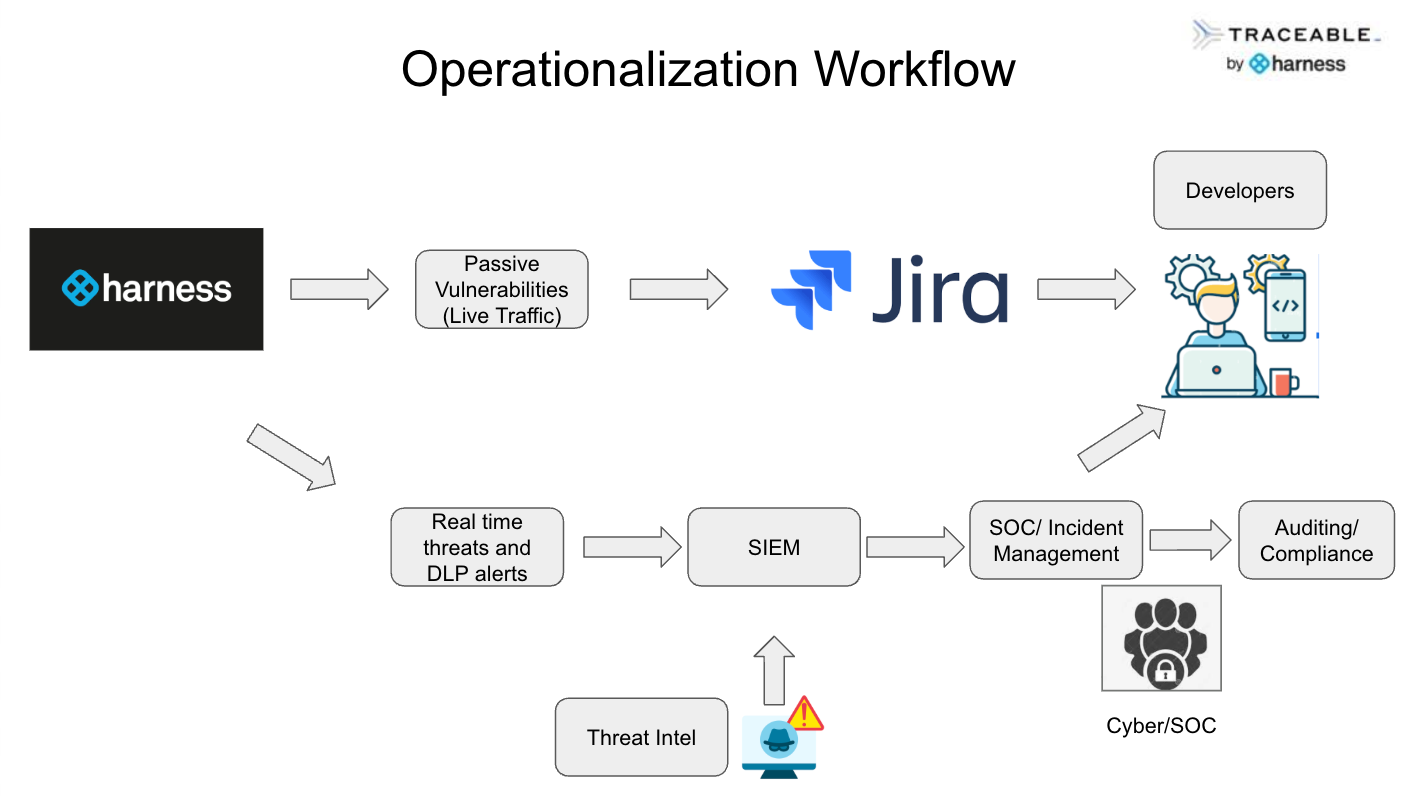

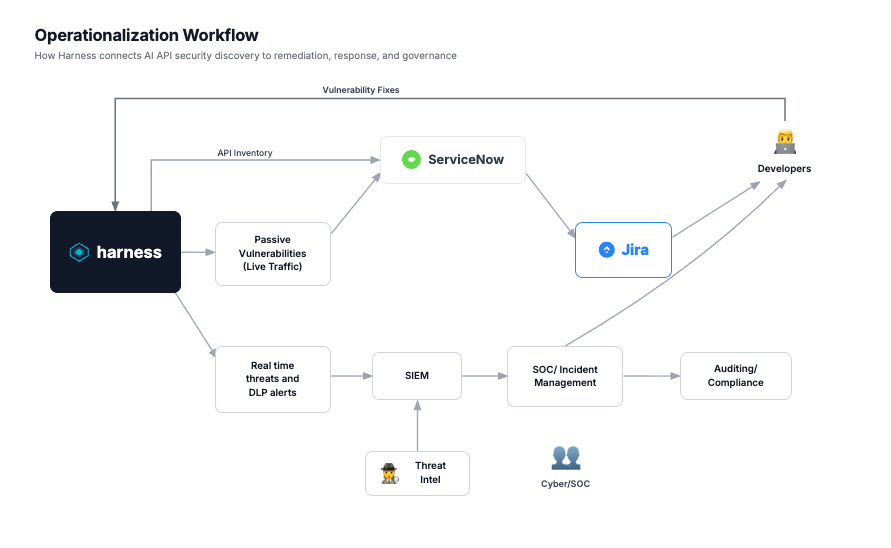

04 Operationalise: Integrate AI Posture into Security Workflows

The Harness Posture Events feed connects AI security signals to the workflows security teams already run:

- Jira: vulnerabilities auto-generate tickets pre-populated with endpoint details, CVSS score, and mitigation guidance

- SIEM / SOC: real-time AI threats - prompt injection attempts, DLP alerts, anomalous model behaviour - flow into the SIEM for correlation; Harness provides the characterised asset context before the alert reaches the analyst

- Compliance evidence: every Posture Event is timestamped, filterable, and CSV-exportable, building a continuous audit trail without manual assembly

- Custom notifications: privacy teams can alert on sensitive data sent to third parties; the SOC on risk score spikes; governance teams on new shadow AI assets
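The per-team notification rules described above amount to a small routing table over event types. The following sketch assumes a hypothetical event shape (a dict with a `type` key and an optional `delta` for score changes) and an arbitrary spike threshold of 20 points; none of these names come from the Harness product.

```python
# Each route pairs a team with a predicate over a posture event (hypothetical schema).
ROUTES = [
    ("privacy", lambda e: e["type"] == "sensitive_data_to_third_party"),
    ("soc", lambda e: e["type"] == "risk_score_change" and e.get("delta", 0) >= 20),
    ("governance", lambda e: e["type"] == "new_shadow_ai_asset"),
]


def route(event: dict) -> list:
    """Return the teams that should be notified about this event."""
    return [team for team, matches in ROUTES if matches(event)]


spike = {"type": "risk_score_change", "endpoint": "/v1/chat", "delta": 25}
recipients = route(spike)  # ["soc"]
```

Keeping the rules as data rather than code paths makes it straightforward to add a team or adjust a threshold without touching the dispatch logic.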

Maturity Model: Day 1 - Day 30 - Day 90

AI security posture management is a journey, not a deployment. Here is how organisations evolve:

| Stage | Security Focus | Outcome |
|---|---|---|
| Day 1 | Discover all AI APIs, MCP servers, MCP Tools, Vector DB, and RAG APIs across all environments | Complete AI asset inventory; shadow AI flagged for immediate review |
| Day 30 | Map sensitive data flows; apply AI-specific risk scoring; prioritise remediation | High-risk AI endpoints identified; 3rd-party data flows assessed against DPAs |
| Day 90 | Integrate AI posture into SOC, compliance reporting, and vulnerability SLAs | AI security governed continuously; audit evidence on demand; attack surface shrinking |

KEY INSIGHT

The Day 1 to Day 30 transition is the most critical: moving from 'we have a list' to 'we understand what our AI systems touch and which carry the most risk.' Most organisations stall at Day 1 because they lack the data classification and risk scoring layer to act on what they found.

Enterprise Extension: ServiceNow CMDB Integration

For organisations where CMDB governs asset lifecycle, Harness’s Service Graph Connector extends AI-SPM into ServiceNow. Key use cases:

- Change management: new AI APIs discovered by Traceable trigger a ServiceNow change request in 'Pending Review' state before they accumulate production traffic

- Vendor risk: third-party AI providers are represented as vendor CIs linked to DPAs and risk assessment records

- Incident response: SOC analysts work on AI incidents with Traceable's threat intelligence and CMDB's organisational context simultaneously

- Compliance evidence: AI compliance questions answered directly from CMDB records populated by Traceable - no manual assembly

- Decommissioning: orphaned AI endpoints identified by Traceable's conformance analysis trigger governed retirement workflows; Traceable confirms the endpoint has gone dark

The Bottom Line

Operationalising AI security is not about scanning prompts. It is about continuously discovering AI systems, understanding how they access sensitive data, assessing the risks they introduce, and integrating AI posture into the security operations that already exist.

The organisations that build this capability now will govern what others are still trying to find, detect exposures before they become incidents, and answer regulatory questions with data rather than approximation - continuously, not periodically.