On March 24, 2026, the AI open-source ecosystem was impacted by a critical supply chain attack involving the widely used Python package LiteLLM. Attackers compromised the LiteLLM PyPI distribution pipeline and published malicious versions (notably in the 1.82.7-1.82.8 range), embedding a multi-stage payload designed to steal credentials and execute remote code.

The malicious code executed during normal library usage, making it particularly dangerous for AI applications that integrate LiteLLM into inference pipelines, RAG systems, and API gateways. The attack leveraged Python’s .pth file mechanism, a lesser-known but powerful feature that allows arbitrary code execution during interpreter initialization. The malicious package dropped a file (e.g., litellm_init.pth) into the Python environment, ensuring that the payload was executed every time the Python interpreter started, regardless of whether LiteLLM was explicitly imported.

However, this was not a simple static backdoor. The package implemented a multi-stage execution chain, where initial execution triggered outbound communication to attacker-controlled infrastructure to retrieve further instructions dynamically.

Notably, the malware used blockchain-based command-and-control (C2), extracting payload locations from transaction metadata. This allowed attackers to update payload delivery dynamically without modifying the package itself, making detection and takedown significantly harder.

The attack also performed a cross-language execution pivot, downloading a Node.js runtime onto the host system and executing an obfuscated second-stage JavaScript payload. This technique enabled evasion of Python-focused security controls and introduced runtime behavior that would not typically be expected during dependency execution.

Once triggered, the payload attempted to exfiltrate sensitive environment variables and secrets, including API keys for major LLM providers (OpenAI, Anthropic), cloud credentials, and internal tokens.
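If a host may have run an affected version, a practical first step is to list which environment variables look like credentials so they can be rotated. The sketch below is a rough inventory helper, not a forensic tool: the name patterns are assumptions, it reports variable names only, and it never prints values.

```python
import os
import re

# Assumed naming patterns for credential-like variables; adjust to your conventions.
SECRET_NAME_PATTERN = re.compile(
    r"(API_?KEY|SECRET|TOKEN|PASSWORD|CREDENTIAL)", re.IGNORECASE
)


def secret_like_names(environ=None):
    """Return the sorted names of environment variables that look like secrets."""
    env = os.environ if environ is None else environ
    return sorted(name for name in env if SECRET_NAME_PATTERN.search(name))


if __name__ == "__main__":
    # Print names only, so the inventory itself does not leak anything.
    for name in secret_like_names():
        print(name)
```

Anything this turns up on an exposed machine is a candidate for rotation, whether or not exfiltration has been confirmed.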

Python .pth Files: Silent Execution Hooks at Interpreter Startup

A critical aspect of this attack is the abuse of Python’s .pth file mechanism, an often overlooked feature that can be weaponized for persistent, pre-execution code injection.

.pth files are automatically processed during Python interpreter startup via the site module. They are typically located in site-packages or user site directories and are intended to extend Python’s module search path.

However, .pth files support more than just path configuration. If a line starts with import (followed by a space or tab), the whole line is executed immediately:
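To see this behavior without touching a real site-packages directory, here is a minimal sketch. It relies on site.addsitedir(), which the standard library documents as processing the .pth files found in the directory it is given. The file name demo_sketch.pth and the PTH_DEMO_MARKER variable are made up for illustration.

```python
import os
import site
import sys
import tempfile
from pathlib import Path


def run_pth_demo():
    """Process a throwaway .pth file and report whether its import line ran."""
    marker = "PTH_DEMO_MARKER"  # hypothetical variable name used only by this demo
    os.environ.pop(marker, None)
    original_path = list(sys.path)
    with tempfile.TemporaryDirectory() as tmp:
        pth_file = Path(tmp) / "demo_sketch.pth"
        # First line: an ordinary path entry (ignored here because it does not exist).
        # Second line: starts with "import", so the site module executes it.
        pth_file.write_text(
            "some_relative_dir\n"
            f"import os; os.environ[{marker!r}] = 'executed'\n",
            encoding="utf-8",
        )
        site.addsitedir(tmp)
    # Undo the sys.path change so the deleted temp dir is not left behind.
    sys.path[:] = original_path
    return os.environ.get(marker, "not executed")


if __name__ == "__main__":
    print(run_pth_demo())
```

The same one-line pattern is all an attacker needs: anything after the import keyword on that line runs with the privileges of the Python process.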

Example: Simple Demo of Hidden Code Execution

DIY: Find your site-packages path

python3 -c "import site; print(site.getsitepackages())"

# Or

python3 -c "import site; print(site.getusersitepackages())"

# Choose the one with write access and

# write a demo1.pth file to that site packages dir

echo 'import sys; print("[PTH DEMO EXECUTED]")' > /path/to/site-packages/demo1.pth

Or

Automated script to create the demo .pth file

git clone https://github.com/aspen-labs/python_pth_autoexecution.git

cd python_pth_autoexecution

python3 demo_pth.py

Now, every time Python runs,

> python3 -c "print('Hello')"

[PTH DEMO EXECUTED]

Hello

Impact:

- Compromised PyPI versions of LiteLLM (notably 1.82.7 and 1.82.8 in the 1.82.x series) distributed malicious code to downstream users.

- Persistent execution via .pth interpreter hijacking, enabling code execution on every Python startup even if LiteLLM is never imported.

- Use of decentralized C2 (blockchain) makes traditional takedown and IOC blocking less effective.

- Execution of second-stage JavaScript payloads (e.g., i.js), enabling cross-language runtime pivoting and evasion of Python-focused detection controls.

- Exposure of sensitive credentials, including LLM API keys, cloud provider secrets, and environment variables.

- High risk to AI/LLM applications, especially RAG pipelines and backend services handling user prompts and responses.

- Potential lateral movement into CI/CD systems and production environments due to leaked credentials.
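The .pth persistence listed above can be audited locally. The following is a minimal sketch, assuming that any .pth line beginning with import deserves a manual look. It is not a malware detector: legitimate tools such as setuptools and editable installs also ship .pth files with import lines, so treat hits as leads to review. The function names are illustrative.

```python
import site
from pathlib import Path


def find_executable_pth_lines(directories):
    """Return (file, line_number, text) for each .pth line that site would execute."""
    findings = []
    for directory in directories:
        root = Path(directory)
        if not root.is_dir():
            continue
        for pth_file in sorted(root.glob("*.pth")):
            try:
                text = pth_file.read_text(encoding="utf-8", errors="replace")
            except OSError:
                continue  # unreadable file: skip it instead of aborting the audit
            for number, line in enumerate(text.splitlines(), start=1):
                # The site module executes lines starting with "import" + space or tab.
                if line.startswith(("import ", "import\t")):
                    findings.append((str(pth_file), number, line.strip()))
    return findings


def default_site_dirs():
    """Collect the global and per-user site-packages directories."""
    dirs = list(site.getsitepackages()) if hasattr(site, "getsitepackages") else []
    dirs.append(site.getusersitepackages())
    return dirs


if __name__ == "__main__":
    for path, number, line in find_executable_pth_lines(default_site_dirs()):
        print(f"{path}:{number}: {line[:120]}")
```

Run it with the same interpreter (and virtual environment) your application uses, since each environment has its own site-packages.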

This attack highlights a new class of supply chain risk centered around AI infrastructure dependencies. Unlike traditional package compromises, the blast radius extends into model access, prompt data, and downstream AI workflows. It reinforces the need for continuous dependency monitoring, real-time SBOM visibility, and strict policy enforcement to prevent compromised packages from entering build and runtime environments.

How Harness Supply Chain Security Helps

Harness SCS helps you quickly detect and contain compromised dependencies like the LiteLLM package before they impact your pipelines. With real-time visibility into your SBOMs and dependency graph, you can identify affected versions, trace their usage across builds and environments, and block them using OPA policies. This ensures malicious packages never propagate through your CI/CD or AI workflows.

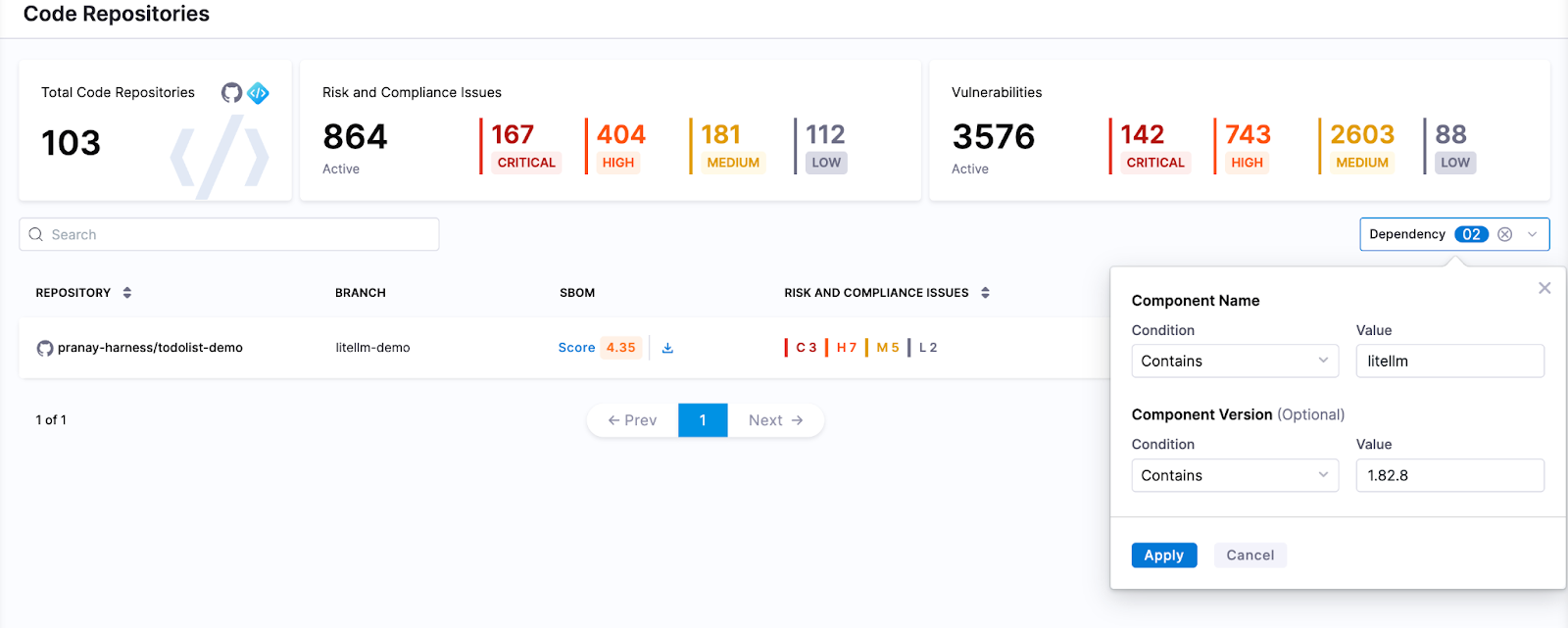

Detect Compromised LiteLLM Package

Harness SCS enables instant search across all repositories and artifacts to quickly identify if compromised LiteLLM versions exist in your environment. The moment such a malicious package is disclosed, you can pinpoint its presence and assess impact across your entire supply chain in seconds.
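For a single machine or repository, the same question can be approximated with a few lines of Python. This is a stand-alone sketch, not the Harness SCS search: the version set comes from the versions named in this article and should be extended as advisories change, and the requirements parsing only handles exact == pins.

```python
import re
from importlib import metadata

# Versions named in this article; an assumption to update as advisories evolve.
COMPROMISED_LITELLM_VERSIONS = frozenset({"1.82.7", "1.82.8"})

# Matches exact pins such as "litellm==1.82.8" or "LiteLLM[proxy]==1.82.7".
_PIN = re.compile(r"litellm(\[[^\]]*\])?\s*==\s*([^\s;,]+)", re.IGNORECASE)


def is_compromised(version, bad_versions=COMPROMISED_LITELLM_VERSIONS):
    """Return True if the given version string is in the known-bad set."""
    return version.strip() in bad_versions


def installed_litellm_version():
    """Return the installed litellm version, or None if it is not installed."""
    try:
        return metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None


def compromised_pins(requirements_text, bad_versions=COMPROMISED_LITELLM_VERSIONS):
    """Return known-bad litellm versions pinned in requirements-style text."""
    hits = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        match = _PIN.match(line)
        if match and is_compromised(match.group(2), bad_versions):
            hits.append(match.group(2))
    return hits


if __name__ == "__main__":
    version = installed_litellm_version()
    if version is None:
        print("litellm is not installed in this environment")
    else:
        status = "COMPROMISED" if is_compromised(version) else "not in known-bad set"
        print(f"litellm {version}: {status}")
```

A clean result here only covers the current environment; lockfiles, container images, and CI caches need the same check.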

Block Compromised LiteLLM Packages

Harness AI streamlines response to incidents like the LiteLLM compromise through simple natural-language prompts. With a single prompt, you can generate OPA policies to block affected LiteLLM versions across all pipelines, preventing malicious packages from entering builds or deployments. As new compromised versions emerge, these policies can be quickly updated to maintain strong preventive controls across your SDLC. SCS customers can use this OPA policy to detect and block the affected versions:

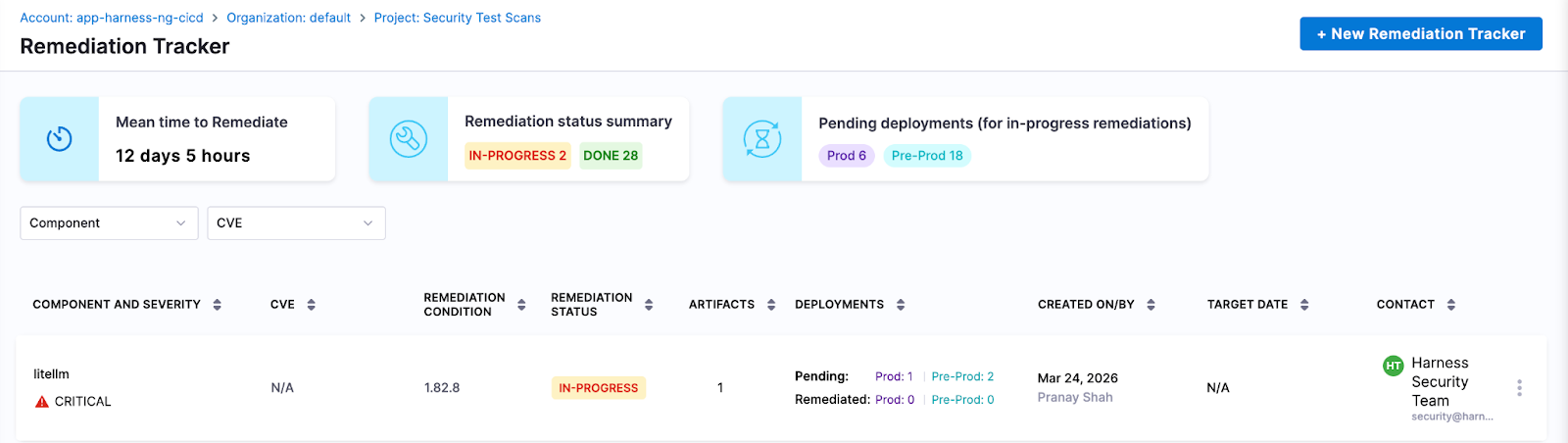

Track & Remediate Issues with Developers

Harness SCS automatically detects compromised LiteLLM versions across both production and non-production environments. Teams can track remediation, assign fixes, and monitor progress through to deployment, ensuring exposed credentials and vulnerable dependencies are addressed quickly. This end-to-end visibility helps contain the impact and prevents compromised packages from persisting in your supply chain.

Next Steps in the Face of Supply Chain Attacks

The LiteLLM compromise highlights how quickly a malicious package can expose high-value secrets when embedded deep within AI application stacks. Given its role in handling LLM requests, the impact extends beyond code to API keys, prompt data, and downstream systems, often bypassing traditional security checks.

Defending against such attacks requires more than reactive fixes. Teams need real-time visibility into dependencies, the ability to enforce policies to block compromised versions, and continuous tracking to ensure remediation is complete across all environments. Harness SCS enables teams to quickly identify where affected LiteLLM versions are used, prevent them from entering new builds, and ensure fixes are consistently rolled out.

With these controls in place, organizations can limit credential exposure, contain threats early, and secure their AI supply chain against attacks like the LiteLLM compromise.

Also, learn how Harness SCS defends against the Shai-Hulud, TJ Actions, NPM 1.0, and xz-utils supply chain attacks.