Featured Blogs

At Harness, we know developer velocity depends on everyday workflow. That is why we reimagined Harness Code with a faster, cleaner, and more intuitive experience that helps engineers stay in flow from the first clone to the final merge.

Why Developers Love the New Experience

Smarter Pull Request Reviews

Review diffs and conversations without constant context switching. Inline comments, keyboard shortcuts, and faster file rendering help you focus on the code instead of the clicks.

Faster File Tree and Change Listing

The new file browser is optimized for large repositories. You can search, jump, and scan changes instantly even when working with thousands of files.

Seamless Repo Navigation

Move between branches, commits, and repositories without losing your scroll position or comment state.

Unified Harness Design System

The entire interface now uses the same design system as the rest of the Harness platform, which reduces the learning curve and makes navigation feel natural.

Why Leaders Should Care

Every inefficiency in the developer experience is a hidden tax on velocity. Harness Code removes those blockers so your teams:

- Ship faster by reducing wasted time in code reviews.

- Collaborate better with a clear, intuitive UI that scales across teams.

- Standardize workflows with a design system that unifies the Harness platform.

All 500-plus Harness engineers are already using the new experience, proving it scales in real enterprise environments.

Seamless Rollout, Zero Migration

Adopting the new experience is effortless:

- Beginning of October 2025: Available for all users (opt-in).

- End of December 2025: Legacy UI deprecated.

- Beginning of January 2026: New experience becomes default.

There is nothing to migrate. Simply click 'Opt In', and your repositories, permissions, and integrations will continue to work as before.

What’s Next

The new Harness Code experience is only the beginning. Coming soon:

- Even faster repo load times.

- More native AI support for PR reviews and commit insights.

We’re continuing to invest in developer-first features that make Harness Code not just a repository, but the heartbeat of your software delivery pipeline.

Try It Today

If you have been looking for a modern, developer-first alternative to GitHub or GitLab that integrates directly with your CI/CD pipelines, now is the time to try it.

👉 Start your Harness Code trial today and experience a repo that helps you move faster and deliver more.

Learn more: Workflow Management, What Is a Developer Platform


Harness Cloud is a fully managed Continuous Integration (CI) platform that allows teams to run builds on Harness-managed virtual machines (VMs) pre-configured with tools, packages, and settings typically used in CI pipelines. In this blog, we'll dive into the four core pillars of Harness Cloud: Speed, Governance, Reliability, and Security. By the end of this post, you'll understand how Harness Cloud streamlines your CI process, saves time, ensures better governance, and provides reliable, secure builds for your development teams.

Faster Builds

Harness Cloud delivers blazing-fast builds on multiple platforms, including Linux, macOS, Windows, and mobile operating systems. With Harness Cloud, your builds run in isolation on pre-configured VMs managed by Harness. This means you don’t have to waste time setting up or maintaining your infrastructure. Harness handles the heavy lifting, allowing you to focus on writing code instead of waiting for builds to complete.

The speed of your CI pipeline is crucial for agile development, and Harness Cloud gives you just that—quick, efficient builds that scale according to your needs. With starter pipelines available for various programming languages, you can get up and running quickly without having to customize your environment.

Streamlined Governance

One of the most critical aspects of any enterprise CI/CD process is governance. With Harness Cloud, you can rest assured that your builds are running in a controlled environment. Harness Cloud makes it easier to manage your build infrastructure with centralized configurations and a clear, auditable process. This improves visibility and reduces the complexity of managing your CI pipelines.

Harness also gives you access to the latest features as soon as they’re rolled out. This early access enables teams to stay ahead of the curve, trying out new functionality without worrying about maintaining the underlying infrastructure. By using Harness Cloud, you're ensuring that your team is always using the latest CI innovations.

Reliable and Scalable Infrastructure

Reliability is paramount when it comes to build systems. With Harness Cloud, you can trust that your builds are running smoothly and consistently. Harness manages, maintains, and updates the virtual machines (VMs), so you don't have to worry about patching, system failures, or hardware-related issues. This hands-off approach reduces the risk of downtime and build interruptions, ensuring that your development process is as seamless as possible.

By using Harness-managed infrastructure, you gain the peace of mind that comes with a fully supported, reliable platform. Whether you're running a handful of builds or thousands, Harness ensures they’re executed with the same level of reliability and uptime.

Robust Security

Security is at the forefront of Harness Cloud. With Harness managing your build infrastructure, you don't need to worry about the complexities of securing your own build machines. Harness ensures that all the necessary security protocols are in place to protect your code and the environment in which it runs.

Harness Cloud's commitment to security includes achieving SLSA Level 3 compliance, which ensures the integrity of the software supply chain by generating and verifying provenance for build artifacts. This compliance is achieved through features like isolated build environments and strict access controls, ensuring each build runs in a secure, tamper-proof environment.

For details, read the blog An In-depth Look at Achieving SLSA Level-3 Compliance with Harness.

Harness Cloud also enables secure connectivity to on-prem services and tools, allowing teams to safely integrate with self-hosted artifact repositories, source control systems, and other critical infrastructure. By leveraging Secure Connect, Harness ensures that these connections are encrypted and controlled, eliminating the need to expose internal resources to the public internet. This provides a seamless and secure way to incorporate on-prem dependencies into your CI workflows without compromising security.

Next Steps

Harness Cloud makes it easy to run and scale your CI pipelines without the headache of managing infrastructure. By focusing on the four pillars—speed, governance, reliability, and security—Harness ensures that your development pipeline runs efficiently and securely.

Harness CI and Harness Cloud give you:

✅ Blazing-fast builds—8X faster than traditional CI solutions

✅ A unified platform—Run builds on any language, any OS, including mobile

✅ Native SCM—Harness Code Repository is free and comes packed with built-in governance & security

If you're ready to experience a fully managed, high-performance CI environment, give Harness Cloud a try today.


As software projects scale, build times often become a major bottleneck, especially when using tools like Bazel. Bazel is known for its speed and scalability, handling large codebases with ease. However, even the most optimized build tools can be slowed down by inefficient CI pipelines. In this blog, we’ll dive into how Bazel’s build capabilities can be taken to the next level with Harness CI. By leveraging features like Build Intelligence and caching, Harness CI helps maximize Bazel's performance, ensuring faster builds and a more efficient development cycle.

How Harness CI Enhances Bazel Builds

Harness CI integrates seamlessly with Bazel, taking full advantage of its strengths and enhancing performance. The best part? As a user, you don’t have to provide any additional configuration to leverage the build intelligence feature. Harness CI automatically configures the remote cache for your Bazel builds, optimizing the process from day one.

Build Intelligence: Speeding Up Bazel Builds

Harness CI’s Build Intelligence ensures that Bazel builds are as fast and efficient as possible. While Bazel has its own caching mechanisms, Harness CI takes this a step further by automatically configuring and optimizing the remote cache, reducing build times without any manual setup.

This automatic configuration means that you can benefit from faster, more efficient builds right away—without having to tweak cache settings or worry about how to handle build artifacts across multiple machines.

How It Works with Bazel

Harness CI seamlessly integrates with Bazel’s caching system, automatically handling the configuration of remote caches. So, when you run a build, Harness CI makes sure that any unchanged files are skipped, and only the necessary tasks are executed. If there are any changes, only those parts of the project are rebuilt, making the process significantly faster.

For example, when building the bazel-gazelle project, Harness CI ensures that any unchanged files are cached and reused in subsequent builds, reducing the need for unnecessary recompilation. All this happens automatically in the background without requiring any special configuration from the user.
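The idea behind remote caching can be illustrated with a toy model. The sketch below is not how Bazel or Harness CI implements it; `action_key`, `RemoteCache`, and `compile_action` are hypothetical names, and the cache is an in-memory dictionary standing in for a shared remote store. It shows only the core principle: an action is keyed by a hash of its command and input contents, so unchanged inputs produce a cache hit and changed inputs force a rebuild.

```python
import hashlib


def action_key(command: str, input_files: dict[str, bytes]) -> str:
    """Hash the command plus the path and content of every input file."""
    digest = hashlib.sha256(command.encode())
    for path in sorted(input_files):
        digest.update(path.encode())
        digest.update(hashlib.sha256(input_files[path]).digest())
    return digest.hexdigest()


class RemoteCache:
    """In-memory stand-in for a shared remote cache (hypothetical)."""

    def __init__(self) -> None:
        self.store: dict[str, bytes] = {}
        self.hits = 0
        self.misses = 0

    def build(self, command, input_files, run_action):
        key = action_key(command, input_files)
        if key in self.store:
            self.hits += 1          # inputs unchanged: reuse the stored output
            return self.store[key]
        self.misses += 1            # new or changed inputs: run the action
        output = run_action(input_files)
        self.store[key] = output
        return output


def compile_action(files: dict[str, bytes]) -> bytes:
    """Placeholder for a real compile step."""
    return b"".join(files[p] for p in sorted(files))


cache = RemoteCache()
sources = {"main.go": b"package main", "util.go": b"package util"}
cache.build("compile", sources, compile_action)  # first build: miss
cache.build("compile", sources, compile_action)  # unchanged inputs: hit
sources["util.go"] = b"package util // edited"
cache.build("compile", sources, compile_action)  # changed input: miss
print(cache.hits, cache.misses)  # prints "1 2"
```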

Benefits for Bazel Projects

- Automatic Remote Caching: No manual configuration needed—Harness CI configures the remote cache for you.

- Faster Builds: Builds run faster by reusing previously built outputs and skipping redundant tasks.

- Optimized Resource Usage: Reduces the use of CPU and memory by only running the tasks that are necessary.

Sample Pipeline: Harness CI with Bazel

Benchmarking: Harness CI vs. GitHub Actions with Bazel

We compared the performance of Bazel builds using Harness CI and GitHub Actions, and the results were clear: Harness CI, with its automatic configuration and optimized caching, delivered up to 4x faster builds than GitHub Actions. The automatic configuration of the remote cache made a significant difference, helping Bazel avoid redundant tasks and speeding up the build process.

Results:

- Harness CI with Bazel: Builds were up to 4x faster, thanks to automatic remote cache configuration and build optimization.

- GitHub Actions with Bazel: Slower builds due to less efficient caching and redundant task execution.

Next Steps

Bazel is an excellent tool for large-scale builds, but it becomes even more powerful when combined with Harness CI and Harness Cloud. By automatically configuring remote caches and applying build intelligence, Harness CI ensures that your Bazel builds are as fast and efficient as possible, without requiring any additional configuration from you.

By combining other Harness CI intelligence features like Cache Intelligence, Docker Layer Caching, and Test Intelligence, you can speed up your Bazel projects by up to 8x. With hyper-optimized build infrastructure, you can experience lightning-fast builds on Harness Cloud at reasonable costs. This seamless integration allows you to spend less time waiting for builds and more time focusing on delivering quality code.

If you're looking to speed up your Bazel builds, give Harness CI a try today and experience the difference!

Latest Blogs

Reduce CI Costs Without Slowing Down Development

Continuous integration (CI) costs can escalate quickly as engineering teams scale. While most organizations focus on cloud bills, the true cost of CI includes slow build times, developer wait time, inefficient test execution, and overprovisioned infrastructure.

CI cost optimization is the practice of reducing the total cost of CI pipelines by improving build efficiency, minimizing compute usage, and eliminating unnecessary work without slowing down development.

In this guide, you will learn how to reduce CI costs using four proven strategies: test optimization, intelligent caching, infrastructure right-sizing, and governance controls. Teams that implement these approaches often reduce build times and costs by 50 to 75 percent, while improving developer productivity and feedback cycles.

What Are CI Costs?

CI costs extend far beyond your cloud invoice. They include both direct infrastructure expenses and indirect productivity losses.

Direct costs:

- Compute resources such as build runners, containers, and virtual machines

- Storage for artifacts, caches, and logs

- Networking and data transfer

Indirect costs:

- Developer wait time during slow builds

- Context switching due to pipeline failures

- Time spent debugging flaky tests

- Engineering effort maintaining CI infrastructure

Why this matters

Research on developer productivity shows that it can take 15 to 25 minutes to regain focus after an interruption. When builds are slow or unreliable, this hidden cost compounds across teams and often exceeds infrastructure spend.

What Drives CI Costs?

CI costs are primarily driven by four factors:

- Build duration, which increases compute usage

- Test execution volume, which extends runtime

- Infrastructure inefficiency, which wastes budget on idle or oversized resources

- Pipeline design, which can create redundant work

Understanding these drivers is the first step toward meaningful cost reduction.

Strategy 1: Optimize Your Testing

Testing is typically the largest contributor to CI runtime and cost. Optimizing test execution delivers the highest return on investment.

Selective Test Execution

Most teams run their full test suite on every commit. This is inefficient, especially in large repositories.

Selective test execution runs only the tests affected by a code change.

Benefits:

- Reduces test volume by 50 to 80 percent

- Shortens feedback loops

- Lowers compute usage

For example, large engineering teams using test selection techniques have reduced build times from more than 20 minutes to under five minutes, saving significant developer time.
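As a rough illustration of selective test execution, the sketch below picks only the tests whose dependencies overlap with the changed files. The `TEST_DEPS` mapping and the file names are made up for the example; real tools typically derive this mapping from coverage data or a build graph rather than a hand-written dictionary.

```python
def select_tests(changed_files: set[str], test_deps: dict[str, set[str]]) -> list[str]:
    """Return the tests that depend on at least one changed file."""
    return sorted(
        test for test, deps in test_deps.items() if deps & changed_files
    )


# Hypothetical mapping from each test to the source files it exercises.
TEST_DEPS = {
    "test_checkout": {"cart.py", "payments.py"},
    "test_login": {"auth.py"},
    "test_search": {"search.py", "index.py"},
}

selected = select_tests({"payments.py"}, TEST_DEPS)
print(selected)  # prints "['test_checkout']"
```

In this example, a change to `payments.py` runs one test out of three. A safe implementation usually falls back to the full suite when a changed file is missing from the mapping.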

Flaky Test Management

Flaky tests are tests that fail intermittently without code changes. They introduce hidden costs:

- Trigger unnecessary reruns

- Reduce trust in CI results

- Waste developer time

Industry studies suggest flaky tests consume a measurable portion of engineering productivity.

Best practices:

- Automatically detect flaky tests

- Quarantine them so they do not block pipelines

- Track flaky test rate and aim for less than 2 percent

- Prioritize fixes based on impact
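One simple way to detect flaky tests automatically is to look for tests that both passed and failed on the same commit. The sketch below assumes run history is available as `(test_name, commit_sha, passed)` tuples, and it defines flaky test rate as the share of tests flagged this way. Both the data shape and that definition are assumptions made for illustration.

```python
from collections import defaultdict


def find_flaky(runs: list[tuple[str, str, bool]]) -> list[str]:
    """Flag tests that both passed and failed on the same commit."""
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return sorted(
        {test for (test, _), seen in outcomes.items() if seen == {True, False}}
    )


def flaky_rate(runs: list[tuple[str, str, bool]]) -> float:
    """Share of distinct tests that were flagged as flaky."""
    tests = {test for test, _, _ in runs}
    return len(find_flaky(runs)) / len(tests) if tests else 0.0


runs = [
    ("test_a", "abc", True),
    ("test_a", "abc", False),   # same commit, different result: flaky
    ("test_b", "abc", True),
    ("test_b", "def", False),   # different commit: likely a real regression
    ("test_c", "abc", True),
    ("test_c", "abc", True),
]
print(find_flaky(runs))  # prints "['test_a']"
```

Flagged tests can then be quarantined, and the rate tracked against the 2 percent target.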

Test Parallelization

Running tests sequentially is inefficient.

Parallelization distributes tests across multiple runners, reducing execution time.

Example:

- Sequential execution: 30 minutes

- Parallel execution: 5 to 10 minutes

Parallelization may not significantly reduce total compute usage, but it dramatically reduces developer wait time, which is often the larger cost.
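To make the trade-off concrete, here is a minimal sketch of one common sharding approach: assign tests longest-first to whichever runner currently has the least work. The test names and durations are invented, and real platforms may split tests differently (for example by historical timing data or by file).

```python
import heapq


def shard_tests(durations: dict[str, float], runners: int) -> list[list[str]]:
    """Greedy longest-first assignment to the least-loaded runner."""
    heap = [(0.0, i) for i in range(runners)]  # (current load, runner index)
    shards: list[list[str]] = [[] for _ in range(runners)]
    for test, secs in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        load, i = heapq.heappop(heap)
        shards[i].append(test)
        heapq.heappush(heap, (load + secs, i))
    return shards


# Hypothetical durations in seconds; 1800 seconds (30 minutes) in total.
durations = {"t1": 600, "t2": 500, "t3": 400, "t4": 200, "t5": 100}
shards = shard_tests(durations, 3)
wall = max(sum(durations[t] for t in shard) for shard in shards)
total = sum(durations.values())
print(wall, total)  # prints "600 1800"
```

With three runners, wall-clock time drops from 30 minutes to 10, while total compute stays at 30 runner-minutes.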

Strategy 2: Implement Intelligent Caching

CI pipelines often repeat the same work, such as downloading dependencies or rebuilding artifacts.

Caching reduces redundant work by reusing previous outputs.

What to Cache

High-impact caching targets include:

- Dependency packages such as npm, Maven, or Gradle

- Docker image layers

- Build artifacts

- Compiled modules

How to Cache Effectively

An effective caching strategy includes:

- Cache keys based on lockfiles or commit hashes

- Proper cache invalidation to avoid stale artifacts

- Storage optimization to balance speed and cost

- Security practices to avoid caching sensitive data
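A lockfile-based cache key can be sketched in a few lines. The `cache_key` helper and the `npm-linux` prefix are hypothetical; the point is that the key changes only when the lockfile content changes, which gives you cache invalidation for free.

```python
import hashlib


def cache_key(prefix: str, lockfile_bytes: bytes) -> str:
    """Build a cache key from a prefix and a hash of the lockfile content."""
    return f"{prefix}-{hashlib.sha256(lockfile_bytes).hexdigest()[:16]}"


key_a = cache_key("npm-linux", b'{"lodash": "4.17.21"}')
key_b = cache_key("npm-linux", b'{"lodash": "4.17.21"}')
key_c = cache_key("npm-linux", b'{"lodash": "4.17.22"}')

print(key_a == key_b)  # prints "True": same lockfile, cache is reused
print(key_a == key_c)  # prints "False": dependency bump invalidates the cache
```

Including the operating system or toolchain version in the prefix helps avoid restoring a cache built for a different environment.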

Real Impact

In controlled benchmarks, Docker layer caching and dependency reuse have shown significant improvements in build performance.

However, many teams underutilize caching by applying it inconsistently or misconfiguring cache keys.

Key insight:

There is a difference between simply enabling caching and implementing a well-optimized caching strategy.

Strategy 3: Use Cost-Effective Infrastructure

CI workloads are well-suited for cost optimization because they are stateless, short-lived, and parallelizable.

Use Spot Instances

Cloud providers offer spot instances at discounts of up to 90 percent compared to on-demand pricing.

Why they work for CI:

- Builds are short-lived

- Interruptions can be retried

- Workloads are fault-tolerant

Important nuance:

Retries are usually manageable, but frequent interruptions can impact time-sensitive pipelines.
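A back-of-the-envelope model shows why retries rarely erase the savings. The sketch below assumes each attempt is interrupted independently with a fixed probability and that an interrupted build restarts from scratch and is billed in full, which makes it a pessimistic estimate. The discount and interruption numbers are illustrative, not provider figures.

```python
def expected_spot_cost(
    on_demand_cost: float, discount: float, interruption_prob: float
) -> float:
    """Expected cost of one build on spot capacity, including full-cost retries."""
    if not 0 <= interruption_prob < 1:
        raise ValueError("interruption_prob must be in [0, 1)")
    expected_attempts = 1 / (1 - interruption_prob)  # mean of a geometric distribution
    return on_demand_cost * (1 - discount) * expected_attempts


# Illustrative numbers: $1.00 on-demand build, 70% discount, 10% interruption rate.
cost = expected_spot_cost(1.00, 0.70, 0.10)
print(f"{cost:.3f}")  # prints "0.333"
```

Even with one in ten attempts interrupted, the expected cost in this example is about a third of the on-demand price. The delay from retries, not the cost, is what matters for time-sensitive pipelines.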

Right-Size Build Runners

Many teams use oversized instances by default.

Right-sizing involves:

- Monitoring CPU and memory usage

- Matching workloads to appropriate instance types

- Eliminating overprovisioning

This reduces cost without affecting performance.
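Right-sizing can be approximated as picking the smallest instance that covers observed peak usage plus some headroom. The instance names, sizes, and the 20 percent headroom below are all assumptions for illustration; substitute your provider's catalog and your own monitoring data.

```python
# Hypothetical catalog, ordered from smallest to largest: (name, vCPUs, memory in GiB).
INSTANCE_TYPES = [("small", 2, 4), ("medium", 4, 8), ("large", 8, 16)]


def right_size(peak_cpu: float, peak_mem_gib: float, headroom: float = 1.2) -> str:
    """Return the smallest instance that fits peak usage plus headroom."""
    for name, cpus, mem_gib in INSTANCE_TYPES:
        if cpus >= peak_cpu * headroom and mem_gib >= peak_mem_gib * headroom:
            return name
    return INSTANCE_TYPES[-1][0]  # nothing fits: fall back to the largest


print(right_size(1.5, 3.0))  # prints "small"
print(right_size(1.5, 3.5))  # prints "medium" (memory needs 4.2 GiB with headroom)
```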

Enable Auto-Scaling

Static runner pools create inefficiencies:

- Idle resources during low demand

- Bottlenecks during peak demand

Auto-scaling allows:

- Scaling up during high activity

- Scaling down during idle periods
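The core of an auto-scaling decision is small. This sketch computes a target runner count from queued and running jobs and clamps it between a floor and a ceiling. The function name, parameters, and limits are hypothetical; real autoscalers usually add cooldown periods to avoid thrashing.

```python
import math


def desired_runners(
    queued_jobs: int,
    running_jobs: int,
    jobs_per_runner: int = 1,
    min_runners: int = 0,
    max_runners: int = 20,
) -> int:
    """Target runner count for the current load, clamped to the pool limits."""
    needed = math.ceil((queued_jobs + running_jobs) / jobs_per_runner)
    return max(min_runners, min(max_runners, needed))


print(desired_runners(0, 0))                      # prints "0": scale to zero when idle
print(desired_runners(35, 5))                     # prints "20": capped at max_runners
print(desired_runners(3, 2, jobs_per_runner=2))   # prints "3"
```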

Real-World Outcome

Teams that optimize infrastructure often achieve:

- 30 to 50 percent cost reduction

- Faster build times

- Better resource utilization

Strategy 4: Implement Governance and Cost Controls

Without guardrails, CI costs tend to increase over time.

Common Cost Issues

- Oversized runners in new pipelines

- Redundant workflows

- Excessive environments

- Untracked cost growth

Policy as Code

Policy as Code enables automated enforcement of cost controls.

Examples:

- Limit maximum runner size

- Restrict expensive configurations

- Enforce caching usage

- Standardize pipeline templates

Tools such as Open Policy Agent are commonly used for this purpose.
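The logic of such a policy is straightforward, whatever engine evaluates it. The sketch below expresses two of the example rules in plain Python against a made-up pipeline dictionary. The field names `runner_size` and `cache_enabled`, and the size tiers, are assumptions and do not correspond to any specific CI platform's schema.

```python
# Hypothetical runner sizes, ordered from smallest to largest.
ALLOWED_RUNNER_SIZES = ["small", "medium", "large"]
MAX_RUNNER_SIZE = "medium"


def violations(pipeline: dict) -> list[str]:
    """Return a list of policy violations for a pipeline definition."""
    problems = []
    size = pipeline.get("runner_size")
    if size not in ALLOWED_RUNNER_SIZES:
        problems.append(f"unknown runner size: {size!r}")
    elif ALLOWED_RUNNER_SIZES.index(size) > ALLOWED_RUNNER_SIZES.index(MAX_RUNNER_SIZE):
        problems.append(f"runner size {size!r} exceeds maximum {MAX_RUNNER_SIZE!r}")
    if not pipeline.get("cache_enabled", False):
        problems.append("caching must be enabled")
    return problems


print(violations({"runner_size": "small", "cache_enabled": True}))  # prints "[]"
print(len(violations({"runner_size": "large", "cache_enabled": False})))  # prints "2"
```

A pipeline is rejected when the list is non-empty, which mirrors how deny rules behave in a policy engine.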

Improve Visibility

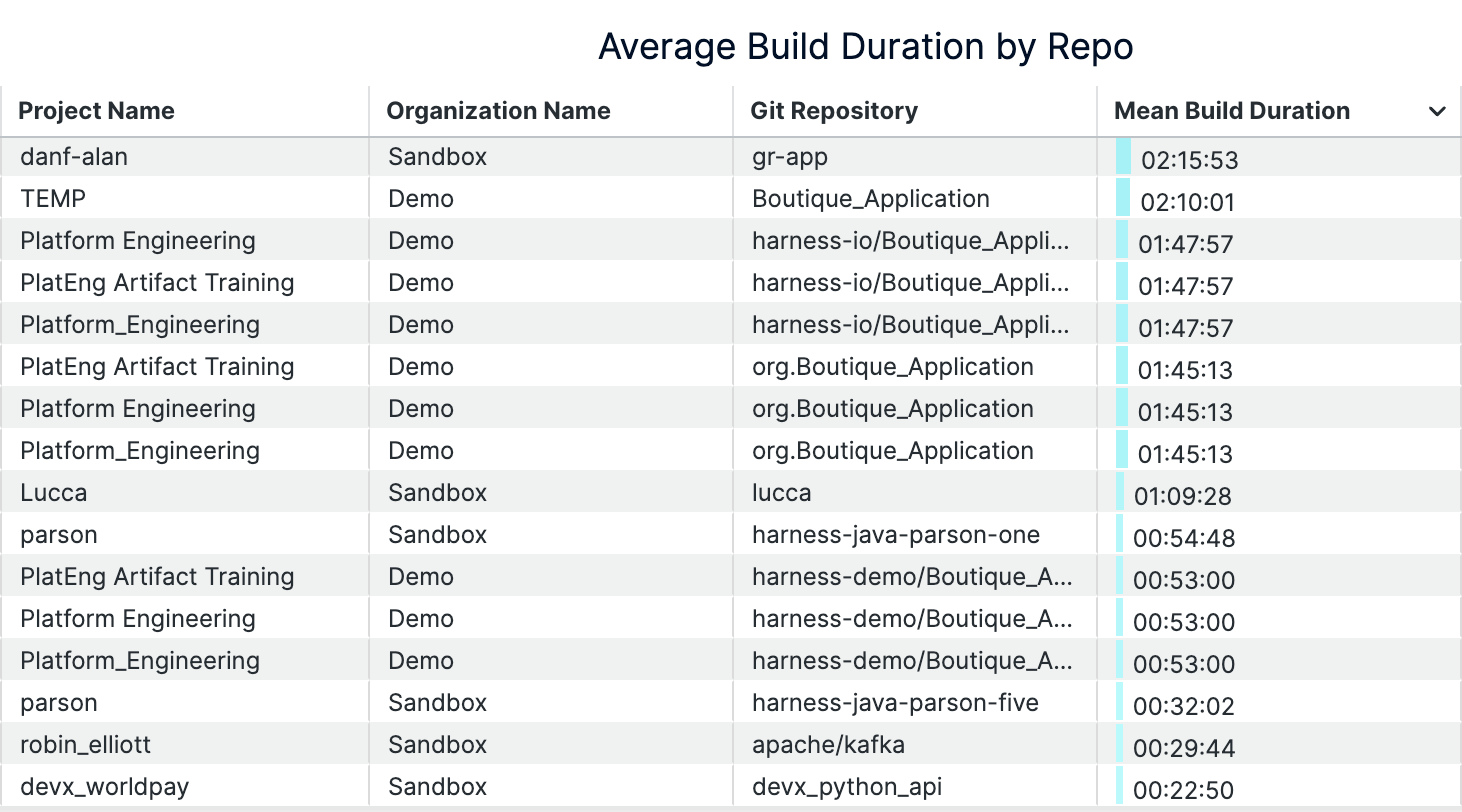

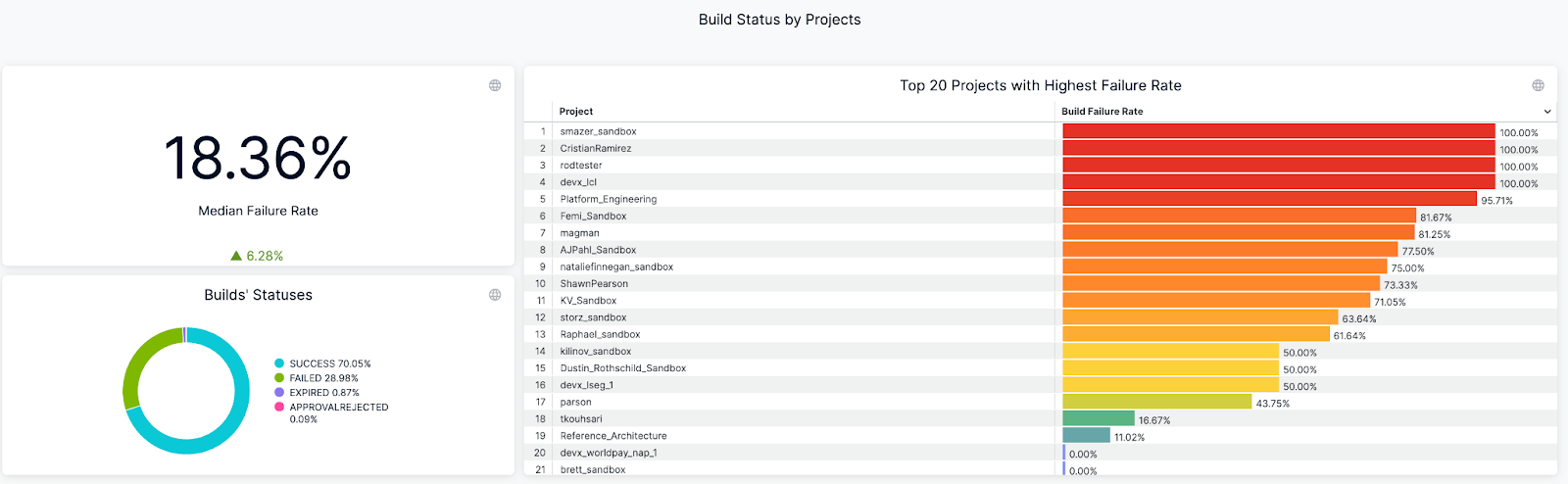

You cannot optimize what you cannot measure.

Key metrics include:

- Cost per build

- Build duration, including median and P95

- Failure rate

- Flaky test rate

- Cost by team or pipeline

Dashboards and analytics help identify inefficiencies and cost drivers.
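If your CI system can export build records, most of these metrics take only a few lines to compute. The sketch below uses invented build data and a flat, hypothetical price per build minute. P95 is computed with the nearest-rank method, which is one of several common percentile definitions.

```python
import statistics


def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer arithmetic
    return ordered[rank - 1]


# Hypothetical build records: (duration in minutes, failed?).
builds = [
    {"minutes": m, "failed": f}
    for m, f in [(4, False), (5, False), (5, False), (6, False), (6, True),
                 (7, False), (8, False), (9, False), (12, False), (30, True)]
]
COST_PER_MINUTE = 0.02  # assumed flat rate in dollars

durations = [b["minutes"] for b in builds]
median_minutes = statistics.median(durations)
p95_minutes = p95(durations)
cost_per_build = sum(durations) * COST_PER_MINUTE / len(builds)
failure_rate = sum(b["failed"] for b in builds) / len(builds)

print(median_minutes, p95_minutes)  # prints "6.5 30"
```

The gap between a 6.5-minute median and a 30-minute P95 is exactly the kind of outlier that averages hide.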

How to Measure CI Costs

To reduce CI costs effectively, start with clear metrics.

Core Metrics

- Cost per build

- Cost per developer

- Build duration

- Queue time

- Failure rate

Benchmarking Progress

Establish a baseline and track improvements:
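A baseline comparison can be as simple as the percent change per metric. The numbers below are invented to illustrate the calculation and are not measured results.

```python
def percent_change(baseline: float, current: float) -> float:
    """Percent change from baseline; negative means the metric went down."""
    return (current - baseline) / baseline * 100


# Illustrative values only.
baseline = {"median_minutes": 20.0, "cost_per_build": 0.40, "failure_rate": 0.10}
current = {"median_minutes": 5.0, "cost_per_build": 0.20, "failure_rate": 0.05}

report = {
    name: round(percent_change(baseline[name], current[name]), 1)
    for name in baseline
}
print(report["median_minutes"])  # prints "-75.0"
```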

A Practical Roadmap to Reduce CI Costs in 3 to 6 Months

A phased approach helps teams implement changes effectively.

Month 1: Baseline and Quick Wins

- Measure current performance

- Enable dependency and Docker caching

- Identify slow pipelines

The expected impact is a 30 to 50 percent improvement.

Months 2 to 3: Test Optimization

- Implement selective test execution

- Parallelize test suites

- Identify and isolate flaky tests

This phase delivers the largest improvements.

Months 4 to 6: Infrastructure and Governance

- Right-size runners

- Introduce spot instances

- Enable auto-scaling

- Implement Policy as Code

This ensures long-term cost control.

Why Modern CI Platforms Simplify Cost Optimization

These strategies can be implemented manually, but doing so requires significant effort.

Modern CI platforms provide:

- Automated test selection

- Intelligent caching

- Cloud-native execution environments

- Built-in cost visibility

This reduces operational overhead and improves consistency.

Key Takeaways

- CI costs include both infrastructure spend and developer productivity loss

- Test optimization and caching deliver the highest return

- Infrastructure right-sizing reduces waste

- Governance prevents cost increases over time

- Teams can reduce CI costs by 50 to 75 percent within months

Conclusion

CI costs do not have to scale with your team size. By focusing on efficiency, you can reduce costs while improving developer experience.

The most effective strategies are:

- Reducing unnecessary tests

- Implementing caching

- Optimizing infrastructure

- Enforcing governance

The key difference is not just tooling but intentional optimization.

Call to Action

Want to reduce CI costs without slowing development?

Explore how modern CI platforms can help optimize test execution, caching, and infrastructure, so your team can build faster while reducing spend.

Frequently Asked Questions

What is the highest hidden cost in CI?

Developer wait time. Slow builds reduce productivity and increase context switching.

How much can CI costs be reduced?

Most teams achieve 30 to 75 percent cost reduction, depending on their starting point.

Is it safe to use spot instances for CI?

Yes. CI workloads are well-suited for spot instances, though retries may occasionally occur.

Where should teams start?

Start with:

- Measuring baseline metrics

- Enabling caching

- Optimizing test execution

The pipeline that never reached production

How template-driven CD prevents governance drift

Modern CI/CD platforms allow engineering teams to ship software faster than ever before.

Pipelines complete in minutes. Deployments that once required carefully coordinated release windows now happen dozens of times per day. Platform engineering teams have succeeded in giving developers unprecedented autonomy, enabling them to build, test, and deploy their services with remarkable speed.

Yet in highly regulated environments, especially in the financial services sector, speed alone cannot be the objective.

Control matters. Consistency matters. And perhaps most importantly, auditability matters.

In these environments, the real measure of a successful delivery platform is not only how quickly code moves through a pipeline. It is also how reliably the platform ensures that production changes are controlled, traceable, and compliant with governance standards.

Sometimes the most successful deployment pipeline is the one that never reaches production.

This is the story of how one enterprise platform team redesigned their delivery architecture to ensure that production pipelines remained governed, auditable, and secure by design.

The subtle risk in fast CI/CD platforms

A large financial institution had successfully adopted Harness for CI and CD across multiple engineering teams.

From a delivery perspective, the transformation looked extremely successful. Developers were productive, teams could create pipelines quickly, and deployments flowed smoothly through various non-production environments used for integration testing and validation. From the outside, the platform appeared healthy and efficient.

But during a platform architecture review, a deceptively simple question surfaced:

“What prevents someone from modifying a production pipeline directly?”

There had been no incidents. No production outages had been traced back to pipeline misconfiguration. No alarms had been raised by security or audit teams.

However, when the platform engineers examined the system more closely, they realized something concerning.

Production pipelines could still be modified manually.

In practice this meant governance relied largely on process discipline rather than platform enforcement. Engineers were expected to follow the right process, but the platform itself did not technically prevent deviations. In regulated industries, that is a risky place to be.

The architecture shift: separate authoring from execution

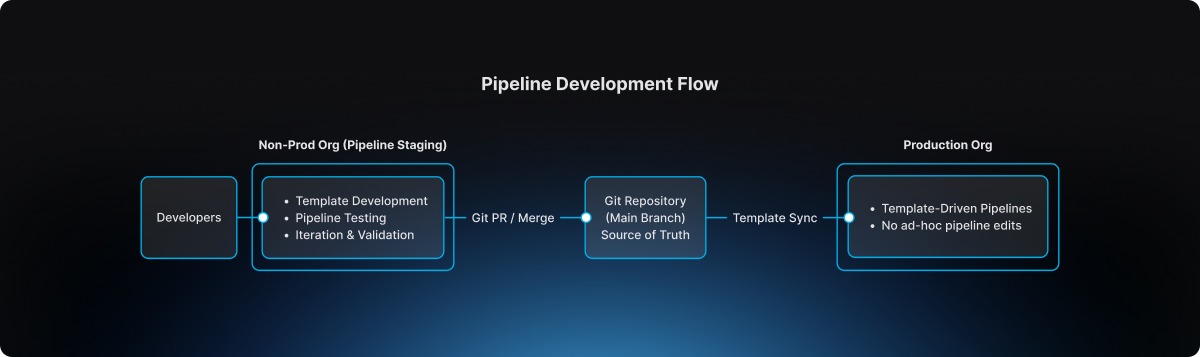

The platform team at the financial institution decided to rethink the delivery architecture entirely. Their redesign was guided by a simple but powerful principle:

Pipelines should be authored in a non-prod organization and executed in the production organization. If compliance later required stricter segregation, the two organizations could be split into separate accounts.

Authoring and experimentation should happen in a safe environment. Execution should occur in a controlled one.

Instead of creating additional tenants or separate accounts, the platform team decided to go with a dedicated non-prod organization within the same Harness account. This organization effectively acted as a staging environment for pipeline design and validation.

Architecture diagram

This separation introduced a clear lifecycle for pipeline evolution.

The non-prod organization became the staging environment where pipeline templates could be developed, tested, and refined. Engineers could experiment safely without impacting production governance.

The production organization, by contrast, became an execution environment. Pipelines there were not designed or modified freely. They were consumed from approved templates.

Guardrail #1: production pipelines must use templates

The first guardrail introduced by the platform team was straightforward but powerful.

Production pipelines must always be created from account-level templates.

Handcrafted pipelines were no longer allowed. Project-level template shortcuts were also prohibited, ensuring that governance could not be bypassed unintentionally.

This rule was enforced directly through OPA policies in Harness.

Example policy

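package harness.cicd.pipeline

# Deny any pipeline whose template is not scoped to the account level.
deny[msg] {
    template_scope := input.pipeline.template.scope
    template_scope != "account"
    msg := "pipeline can only be created from account level pipeline template"
}

# A pipeline with no template leaves the path above undefined, so the first
# rule would not fire. This rule covers handcrafted pipelines explicitly.
deny[msg] {
    not input.pipeline.template
    msg := "pipeline can only be created from account level pipeline template"
}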

This policy ensured that production pipelines were standardized by design. Engineers could not create or modify arbitrary pipelines inside the production organization. Instead, they were required to build pipelines by selecting from approved templates that had been validated by the platform team.

As a result, production pipelines ceased to be ad-hoc configurations. They became governed platform artifacts.

Guardrail #2: governance starts in the non-prod organization

Blocking unsafe pipelines in production was only part of the solution.

The platform team realized it would be even more effective to prevent non-compliant pipelines earlier in the lifecycle.

To accomplish this, they implemented structural guardrails within the non-prod organization used for pipeline staging. Templates could not even be saved unless they satisfied specific structural requirements defined by policy.

For example, templates were required to include mandatory stages, compliance checkpoints, and evidence collection steps necessary for audit traceability.

Example policy

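package harness.ci_cd

# Deny templates that define no stages at all.
deny[msg] {
    input.templates[_].stages == null
    msg := "Template must have necessary stages defined"
}

# Deny templates that lack the mandatory Evidence_Collection stage.
# Assumes stages is a list of stage names; adjust to your template schema.
deny[msg] {
    some i
    stages := input.templates[i].stages
    not has_evidence_collection(stages)
    msg := "Template must include an Evidence_Collection stage"
}

has_evidence_collection(stages) {
    stages[_] == "Evidence_Collection"
}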

These guardrails ensured that every template contained required compliance stages such as Evidence Collection, making it impossible for teams to bypass mandatory governance steps during pipeline design.

Governance, in other words, became embedded directly into the pipeline architecture itself.

The source of truth: Git

The next question the platform team addressed was where the canonical version of pipeline templates should reside.

The answer was clear: Git must become the source of truth.

Every template intended for production usage lived inside a repository where the main branch represented the official release line.

Direct pushes to the main branch were blocked. All changes required pull requests, and pull requests themselves were subject to approval workflows that mirrored enterprise change management practices.

Governance flow


This model introduced peer review, immutable change history, and a clear traceability chain connecting pipeline changes to formal change management records.

For auditors and platform leaders alike, this was a significant improvement.

The promotion workflow

Once governance mechanisms were in place, the promotion workflow itself became predictable and repeatable.

Engineers first authored and validated templates within the non-prod organization used for pipeline staging. There they could test pipelines using real deployments in controlled non-production environments.

The typical delivery flow followed a familiar sequence:

After validation, the template definition was committed to Git through a branch and promoted through a pull request. Required approvals ensured that platform engineers, security teams, and change management authorities could review the change before it reached the release line.

Once merged into main, the approved template became available for pipelines running in the production organization. Platform administrators ensured that naming conventions and version identifiers remained consistent so that teams consuming the template could easily track its evolution.

Finally, product teams created their production pipelines simply by selecting the approved template. Any attempt to bypass the template mechanism was automatically rejected by policy enforcement.

The day the model proved its value

Several months after the new architecture had been implemented, an engineer attempted to modify a deployment pipeline directly inside the production organization.

Under the previous architecture, that change would have succeeded immediately.

But now the platform rejected it. The pipeline violated the OPA rule because it was not created from an approved account-level template.

Instead of modifying the pipeline directly, the engineer followed the intended process: updating the template within the non-prod organization, submitting a pull request, obtaining the necessary approvals, merging the change to Git main, and then consuming the updated template in production.

The system had behaved exactly as intended. It prevented uncontrolled change in production.

Why this model works

The architecture introduced by the large financial institution delivered several key guarantees.

Production pipelines are standardized because they originate only from platform-approved templates. Governance is preserved because Git main serves as the official release line for pipeline definitions. Auditability improves dramatically because every pipeline change can be traced back to a pull request and associated change management approval. Finally, platform administrators retain the ability to control how templates evolve and how they are consumed in production environments.

The lesson for platform teams

Pipelines are often treated as simple automation scripts.

In reality they represent critical production infrastructure.

They define how code moves through the delivery system, how security scans are executed, how compliance evidence is collected, and ultimately how deployments reach production environments. If pipeline creation is uncontrolled, the entire delivery system becomes fragile.

The financial institution solved this problem with a remarkably simple model. Pipelines are built in the non-prod staging organization. Templates are promoted through Git governance workflows. Production pipelines consume those approved templates.

Nothing more. Nothing less.

Final takeaway

Modern CI/CD platforms have dramatically accelerated the speed of software delivery.

But in regulated environments, the true achievement lies elsewhere. It lies in building a platform where developers move quickly, security remains embedded within the delivery workflow, governance is enforced automatically, and production environments remain protected from uncontrolled change.

That is not just CI/CD. That is platform engineering done right.

Regression Testing in CI/CD: Deliver Faster Without the Fear

A financial services company ships code to production 47 times per day across 200+ microservices. Their secret isn't running fewer tests; it's running the right tests at the right time.

Modern regression testing must evolve beyond brittle test suites that break with every change. It requires intelligent test selection, process parallelization, flaky test detection, and governance that scales with your services.

Harness Continuous Integration brings these capabilities together, using machine learning to detect deployment anomalies and automatically roll back failures before they impact customers. This guide covers definitions, automation patterns, and scale strategies that turn regression testing into an operational advantage. Ready to deliver faster without fear?

What Is Regression Testing? (A Real-world Example)

Managing updates across hundreds of services makes regression testing a daily reality, not just a testing concept. Regression testing in CI/CD ensures that new code changes don’t break existing functionality as teams ship faster and more frequently. In modern microservices environments, intelligent regression testing is the difference between confident daily releases and constant production risk.

- The Simple Definition: Regression testing is the practice of re-running existing tests after code changes to ensure nothing that previously worked is unintentionally broken. Instead of validating new features, it safeguards stable functionality across your application.

- When Small Changes Create Big Problems: Even “low-risk” tweaks, like changing a payments API header, can silently break downstream jobs and critical flows like checkout. Regression tests catch these integration issues before production, protecting revenue and user experience.

- How This Fits Into Modern CI/CD: In modern CI/CD, regression tests run continuously on pull requests, main branch merges, and staged rollouts like canaries. In each case, the tests ensure the application continues to work as expected.

Regression Testing vs. Retesting

These terms often get used interchangeably, but they serve different purposes in your pipeline. Understanding the distinction helps you avoid both redundant test runs and dangerous coverage gaps.

- Retesting validates a specific fix. When a bug is found and patched, you retest that exact functionality to confirm the fix works. It's narrow and targeted.

- Regression testing protects everything else. After that fix goes in, regression tests verify the change didn't break existing functionality across dependent services.

In practice, you run them sequentially: retest the fix first, then run regression suites scoped to the affected services. For microservices environments with hundreds of interdependent services, this sequencing prevents cascade failures without creating deployment bottlenecks.

The challenge is deciding which regression tests to run. A small change to one service might affect three downstream dependencies, or even thirty. This is where governance rules help. You can set policies that automatically trigger retests on pull requests and broader regression suites at pre-production gates, scoping coverage based on change impact analysis rather than gut feel.

To summarize: regression testing checks that existing functionality still works after a change, while retesting verifies that a specific bug fix works as intended. Both are essential, but they serve different purposes in CI/CD pipelines.

Where Regression Fits in the CI/CD Pipeline

The regression testing process works best when it matches your delivery cadence and risk tolerance. Smart timing prevents bottlenecks while catching regressions before they reach users.

- Run targeted regression subsets on every pull request to catch breaking changes within developer workflows. Keep these under 10 minutes for fast feedback.

- Execute broader suites on main branch merges using parallelization and cloud resources to compress full regression cycles from hours to minutes.

- Gate pre-production deployments with end-to-end smoke tests and contract validation before progressive rollout begins.

- Monitor live metrics during canary releases and feature experiments to detect regressions under real traffic patterns that test environments can't replicate.

- Combine synthetic monitoring with AI-powered automated rollback triggers to validate actual user impact and revert within seconds when thresholds are breached.

This layered approach balances speed with safety. Developers get immediate feedback while production deployments include comprehensive verification. Next, we'll explore why this structured approach becomes even more critical in microservices environments where a single change can cascade across dozens of services.

Why Regression Testing Matters for Microservices, Risk, and Compliance

Modern enterprises managing hundreds of microservices face three critical challenges: changes that cascade across dependent systems, regulatory requirements demanding complete audit trails, and operational pressure to maintain uptime while accelerating delivery.

Microservices Amplify Blast Radius Across Dependent Services

A single API change can break dozens of downstream services you didn't know depended on it.

- Cascade failures are the norm, not the exception. A payment schema change that seems harmless in isolation can break reconciliation jobs, notification services, and reporting pipelines across 47 dependent services. Resilience or “Chaos” testing can help you assess your exposure to cascading failures.

- Loosely coupled doesn't mean independent. NIST guidance confirms that cloud-native architectures consist of multiple components where individual changes can have system-wide impact.

- Higher deployment frequency requires higher automation. Research demonstrates that microservices require automated testing integration into CD pipelines to maintain reliability at scale.

Regulated Environments Demand Complete Audit Trails

Financial services, healthcare, and government sectors require documented proof that tests were executed and passed for every promotion.

- Compliance requires traceability. The DoD Cyber DT&E Guidebook mandates traceable test evidence for continuous authorization, noting that minor software changes can significantly impact system risk posture.

- Policy-as-code turns testing into a compliance enabler. Harness governance features enforce required test gates and generate comprehensive audit logs of every approval and pipeline execution.

- Auditors expect timestamped evidence on demand. Automated frameworks with audit logging eliminate scrambling when validation requests arrive.

Pre-Production Detection Reduces Operational Costs and MTTR

Catching regressions before deployment costs far less than fixing them during peak traffic.

- The math is simple. A failed regression test costs developer time; a production incident costs customer trust, revenue, and weekend firefighting.

- Automated gates prevent breaks from reaching users. Research confirms that regression testing in pipelines stops new changes from introducing functionality failures.

- AI verification adds a final safety net. Harness detects anomalies post-deployment and triggers automated rollbacks within seconds, eliminating expensive emergency responses.

With the stakes clear, the next question is which techniques to apply.

Types of Regression Testing Techniques You'll Actually Use

Once you've established where regression testing fits in your pipeline, the next question is which techniques to apply. Modern CI/CD demands regression testing that balances thoroughness with velocity. The most effective techniques fall into three categories: selective execution, integration safety, and production validation—with a few pragmatic variants you’ll use day-to-day.

- Full regression suites rerun your critical end-to-end and high-value scenarios before major releases or architectural changes. They’re slower, but essential for high‑risk changes and compliance-heavy environments.

- Smoke and sanity regression focus on a small, fast set of tests that validate core flows (login, checkout, core APIs) on every commit or deployment. These suites act as your “always on” safety net.

- Unit-level regression runs targeted unit tests around recently changed modules. This is your fastest feedback loop, catching logic regressions before they ever hit cross-service integration or UI layers.

- Selective regression and test impact analysis run only the suites that exercise changed code paths, using dependency mapping to cut execution time without sacrificing confidence.

- Contract testing enforces backward compatibility through consumer-driven contracts like Pact, preventing integration failures between teams and services.

- API/UI regression testing locks in behavior at the interaction layer—REST, GraphQL, or UI flows—so refactors behind the scenes don’t break user-visible behavior.

- Performance and scalability regression ensure that latency, throughput, and resource usage don’t degrade between releases, especially for high-traffic or revenue-critical paths.

- Progressive delivery verification combines canary deployments with real-time metrics and error signals to surface regressions under actual traffic, with automated halt/rollback when thresholds are breached.

These approaches work because they target specific failure modes. Smart selection outperforms broad coverage when you need both reliability and rapid feedback.
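
Selective regression based on change impact can be sketched in a few lines. The example below is a minimal illustration, assuming you already have a reverse-dependency map between services; the service names, the `DEPENDENTS` map, and the suite paths are all hypothetical and are not part of any Harness API.

```python
from collections import deque

# Hypothetical reverse-dependency map: service -> services that consume it.
DEPENDENTS = {
    "payments-api": ["checkout", "reconciliation"],
    "checkout": ["notifications"],
    "reconciliation": [],
    "notifications": [],
    "search": [],
}

# Hypothetical mapping from each service to its regression suite.
SUITES = {
    "payments-api": "tests/payments",
    "checkout": "tests/checkout",
    "reconciliation": "tests/reconciliation",
    "notifications": "tests/notifications",
    "search": "tests/search",
}


def impacted_services(changed, dependents):
    """Return the changed services plus everything downstream of them."""
    seen = set(changed)
    queue = deque(changed)
    while queue:
        service = queue.popleft()
        for consumer in dependents.get(service, []):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return seen


def select_suites(changed, dependents=DEPENDENTS, suites=SUITES):
    """Pick only the suites that exercise impacted services, in stable order."""
    impacted = impacted_services(changed, dependents)
    return sorted(suites[name] for name in impacted if name in suites)
```

A change to `payments-api` selects the payments, checkout, reconciliation, and notifications suites and skips search, which is the point of the technique: coverage follows the dependency graph rather than gut feel.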

How to Automate Regression Testing Across Your Pipeline

Managing regression testing across 200+ microservices doesn't require days of bespoke pipeline creation. Harness Continuous Integration provides the building blocks to transform testing from a coordination nightmare into an intelligent safety net that scales with your architecture.

Step 1: Generate pipelines with context-aware AI. Start by letting Harness AI build your pipelines based on industry best practices and the standards within your organization. The approach is interactive, and you can refine the pipelines with Harness as your guide. Ensure that the standard scanners are run.

Step 2: Codify golden paths with reusable templates. Create Harness pipeline templates that define when and how regression tests execute across your service ecosystem. These become standardized workflows embedding testing best practices while giving developers guided autonomy. When security policies change, update a single template and watch it propagate to all pipelines automatically.

Step 3: Enforce governance with Policy as Code. Use OPA policies in Harness to enforce minimum coverage thresholds and required approvals before production promotions. This ensures every service meets your regression standards without manual oversight.
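
To make the gate logic in Step 3 concrete, here is a rough sketch of the decision such a policy encodes. In Harness this would be an OPA policy written in Rego; the Python below only illustrates the rule shape, and the field names and thresholds are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class PromotionRequest:
    service: str
    coverage_percent: float   # coverage reported by the test run
    regression_passed: bool   # outcome of the regression gate
    approvals: int            # number of recorded approvals


# Hypothetical thresholds; real values would live in the policy itself.
MIN_COVERAGE = 80.0
MIN_APPROVALS = 1


def evaluate(request: PromotionRequest) -> list[str]:
    """Return a list of policy violations; an empty list means 'allow'."""
    violations = []
    if request.coverage_percent < MIN_COVERAGE:
        violations.append(
            f"coverage {request.coverage_percent:.1f}% is below {MIN_COVERAGE:.1f}%"
        )
    if not request.regression_passed:
        violations.append("regression suite did not pass")
    if request.approvals < MIN_APPROVALS:
        violations.append("missing required approval")
    return violations
```

Returning every violation, rather than stopping at the first, gives developers one complete list to fix before they retry the promotion.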

With automation in place, the next step is avoiding the pitfalls that derail even well-designed pipelines.

Best Practices and Common Challenges (And How to Fix Them)

Regression testing breaks down when flaky tests erode trust and slow suites block every pull request. These best practices focus on governance, speed optimization, and data stability.

- Quarantine flaky tests automatically using policy enforcement and require test owners before suite re-entry. Research shows flaky tests reproduce only 17-43% of the time, making governance more effective than debugging individual failures.

- Parallelize and shard test execution across multiple agents to keep PR feedback under 5 minutes.

- Apply test impact analysis to run only tests affected by code changes, reducing unnecessary execution.

- Provision ephemeral test environments with seeded datasets to eliminate data drift between runs.

- Use contract-backed mocks for external dependencies to ensure consistent test behavior.

- Add AI-powered verification as a final backstop to catch regressions that slip past test suites.
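
Two of the practices above, quarantining flaky tests and sharding execution, are simple enough to sketch. This is an illustrative example only: it assumes you can collect pass/fail outcomes from reruns of the same commit, and the function names are hypothetical.

```python
import hashlib


def is_flaky(outcomes):
    """Treat a test as flaky if reruns of the same commit disagree."""
    return len(set(outcomes)) > 1


def split_quarantine(history):
    """Partition tests into (stable, quarantined) based on rerun history."""
    stable, quarantined = [], []
    for test, outcomes in sorted(history.items()):
        (quarantined if is_flaky(outcomes) else stable).append(test)
    return stable, quarantined


def shard_for(test_name, shard_count):
    """Assign a test to a shard deterministically.

    A content hash is used instead of Python's built-in hash(), which is
    randomized per process and would move tests between shards on each run.
    """
    digest = hashlib.sha256(test_name.encode("utf-8")).hexdigest()
    return int(digest, 16) % shard_count
```

Note that a test that fails on every rerun stays in the stable list: it is a consistent failure that should block the pipeline, not a flaky test to quarantine.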

Turn Regression Testing Into a Safety Net, Not a Speed Bump

Regression testing in CI/CD enables fast, confident delivery when it’s selective, automated, and governed by policy. Applied that way, it stops being a release bottleneck and becomes an automated protection layer: selective test prioritization, automated regression gates, and policy-backed governance create confidence without sacrificing speed.

The future belongs to organizations that make regression testing intelligent and seamless. When regression testing becomes part of your deployment workflow rather than an afterthought, shipping daily across hundreds of services becomes the norm.

Ready to see how context-aware AI, OPA policies, and automated test intelligence can accelerate your releases while maintaining enterprise governance? Explore Harness Continuous Integration and discover how leading teams turn regression testing into their competitive advantage.

FAQ: Practical Answers for Regression Testing in CI/CD

These practical answers address timing, strategy, and operational decisions platform engineers encounter when implementing regression testing at scale.

When should regression tests run in a CI/CD pipeline?

Run targeted regression subsets on every pull request for fast feedback. Execute broader suites on main branch merges with parallelization. Schedule comprehensive regression testing before production deployments, then use core end-to-end tests as synthetic testing during canary rollouts to catch issues under live traffic.

How do we differentiate regression testing from retesting in practice?

Retesting validates a specific bug fix — did the payment timeout issue get resolved? Regression testing ensures that the fix doesn’t break related functionality like order processing or inventory updates. Run retests first, then targeted regression suites scoped to affected services.

How much regression coverage is enough for production?

There's no universal number. Coverage requirements depend on risk tolerance, service criticality, and regulatory context. Focus on covering critical user paths and high-risk integration points rather than chasing percentage targets. Use policy-as-code to enforce minimum thresholds where compliance requires it, and supplement test coverage with AI-powered deployment verification to catch regressions that test suites miss.

Should we run full regression suites on every commit?

No. Full regression on every commit creates bottlenecks. Use change-based test selection to run only tests affected by code modifications. Reserve comprehensive suites for nightly runs or pre-release gates. This approach maintains confidence while preserving velocity across your enterprise delivery pipelines.

What's the best way to handle flaky tests without blocking releases?

Quarantine flaky tests immediately, rather than letting them block pipelines. Tag unstable tests, move them to separate jobs, and set clear SLAs for fixes. Use failure strategies like retry logic and conditional execution to handle intermittent issues while maintaining deployment flow.

How do we maintain regression test quality at scale?

Treat test code with the same rigor as application code. That means version control, code reviews, and regular cleanup of obsolete tests. Use policy-as-code to enforce coverage thresholds across teams, and leverage pipeline templates to standardize how regression suites execute across your service portfolio.

Build Numbers That Actually Make Sense: Branch-Scoped Sequence IDs in Harness CI

You're tagging Docker images with build numbers.

Build #47 is your latest production release on main. A developer pushes a hotfix to release-v2.1, and that run becomes build #48. Another merges to develop: build #49. A week later someone asks: "What build number are we on for production?" You check the registry. You see #47, #52, #58, #61 on main. The numbers in between? Scattered across feature branches that may never ship. Your build numbers have stopped telling a useful story.

That's the reality when your CI platform uses a single global counter. Every run, on every branch, increments the same number. For teams using GitFlow, trunk-based development, or any branching strategy, that means gaps, confusion, and versioning that doesn't match how you actually ship.

TL;DR: Harness CI now supports branch-scoped build sequence IDs via <+pipeline.branchSeqId>.

Each branch gets its own counter. No gaps. No confusion.

Why Global Build Counters Break Down

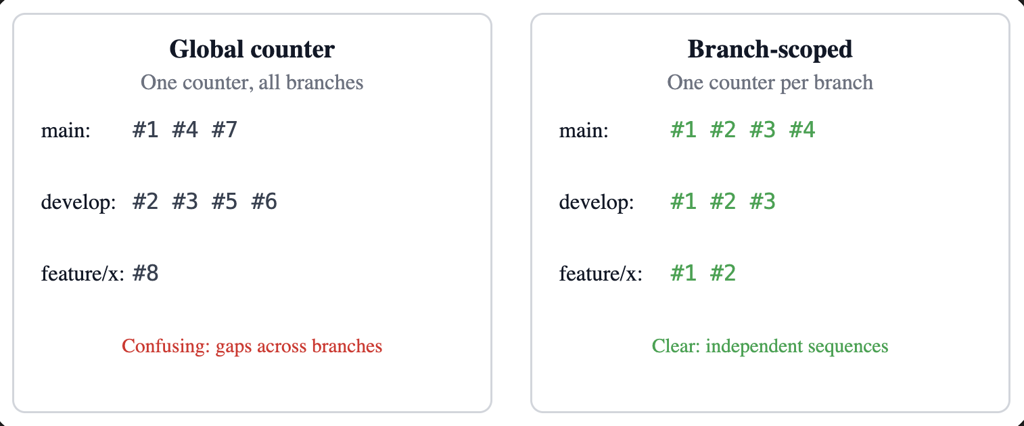

Most CI platforms give you one incrementing counter per pipeline. Push to main, push to develop, push to a feature branch, same counter. So you get:

- Gaps in the sequence for any given branch (e.g. main might have #1, #4, #7).

- No clear answer to "what's the latest build on main?"

- Semantic versioning and artifact naming that don't line up with branch reality.

- Registries and artifact stores full of numbers that don't map to how you release.

Branch-scoped sequence IDs address these problems, and they are now built directly into Harness CI as a first-class capability.

What It Feels Like in Practice

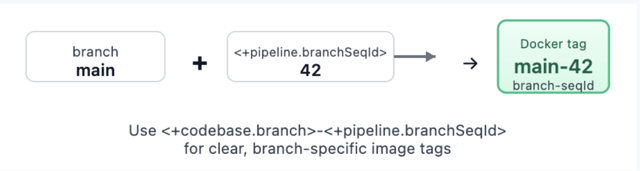

Add <+pipeline.branchSeqId> where you need the number—for example, in a Docker build-and-push step:

    tags:
      - <+pipeline.branchSeqId>
      - <+codebase.branch>-<+pipeline.branchSeqId>
      - latest

Trigger runs on main, then on develop, then on a feature branch. Each branch gets its own sequence: main might be 1, 2, 3… develop 1, 2, 3… feature/x 1, 2. Your tags become meaningful: main-42, develop-15, feature-auth-3. No more guessing which number belongs to which branch.

What You Get

- Per-branch counters – One sequence per pipeline + repo + branch, stored and incremented atomically.

- Pipeline expression – <+pipeline.branchSeqId>. Check out Harness variables documentation.

- REST API – List sequences for a pipeline, get the current value for a branch/repo, reset a branch counter, or set it to a specific value (for example, after a major release or when migrating from another CI).

- Consistent identification – Repo URLs and branch names are normalized (for example, refs/heads/main → main, different URL forms to one canonical host/owner/repo). Same logical branch and repo always share the same counter.

- Cleanup – When a pipeline is deleted, its branch-sequence data is removed so you don't leave orphaned counters.

Webhook triggers (push, PR, branch, release) and manual runs (with branch from codebase config) are supported. For tag-only or other runs without branch context, the expression returns null so you can handle that in your pipeline if needed.

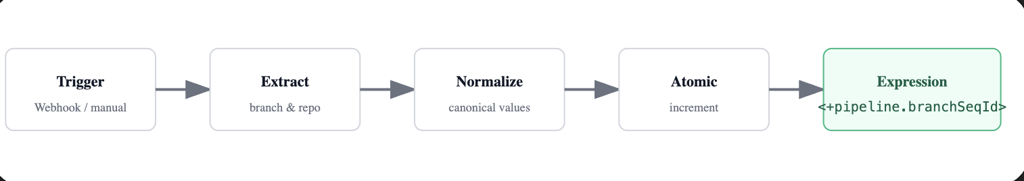

How It Works Under the Hood

Branch and repo are taken from the trigger payload when possible (webhooks) or from the pipeline's codebase configuration (for example, manual runs). We normalize them so that the same repo and branch always map to the same logical key: branch names get refs/heads/ (or similar) stripped, and repo URLs are reduced to a canonical form (for example, github.com/org/repo). That way, whether you use https://..., git@..., or different casing, you get one counter per branch.

The counter is stored and updated with an atomic increment. Parallel runs on the same branch still get distinct, sequential numbers. The value is attached to the run's metadata and exposed through the pipeline execution context so <+pipeline.branchSeqId> resolves correctly at runtime.
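
The normalization and atomic increment described above can be sketched as follows. This is not the Harness implementation: it is a minimal in-memory model, assuming a lock stands in for whatever atomic operation the real datastore provides, and the helper names are hypothetical.

```python
import re
import threading
from collections import defaultdict


def normalize_branch(ref):
    """Strip a leading refs/heads/ so 'refs/heads/main' and 'main' match."""
    prefix = "refs/heads/"
    return ref[len(prefix):] if ref.startswith(prefix) else ref


def normalize_repo(url):
    """Reduce common Git URL forms to a lowercase host/owner/repo key.

    Simplification: URLs with an explicit port are not handled here.
    """
    url = url.strip()
    url = re.sub(r"^[a-z]+://", "", url, flags=re.IGNORECASE)  # drop https://, ssh://
    url = re.sub(r"^[^@/]+@", "", url)                           # drop user@, e.g. git@
    url = url.replace(":", "/", 1)                               # host:org/repo -> host/org/repo
    url = re.sub(r"\.git$", "", url.rstrip("/"))
    return url.lower()


class BranchSequence:
    """In-memory stand-in for the atomically incremented per-branch counter."""

    def __init__(self):
        self._lock = threading.Lock()
        self._counters = defaultdict(int)

    def next_id(self, pipeline, repo_url, ref):
        key = (pipeline, normalize_repo(repo_url), normalize_branch(ref))
        with self._lock:  # read-increment-write happens as one step
            self._counters[key] += 1
            return self._counters[key]
```

Because the key is built from normalized values, a webhook run that reports `refs/heads/main` over HTTPS and a manual run that reports `main` over SSH increment the same counter, while `develop` starts its own sequence at 1.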

Putting It to Work

- Docker image tagging: use <+pipeline.branchSeqId> and optionally <+codebase.branch>-<+pipeline.branchSeqId> for clear, branch-specific tags.

- Helm chart versioning: e.g. --version 1.0.<+pipeline.branchSeqId> --app-version <+codebase.commitSha> so the chart version tracks the build number and the app version tracks the commit.

- Release notes or deployment labels: for example, "Release Build #<+pipeline.branchSeqId>" so production and staging each have a clear, branch-local build number.

For teams that need control or migration support, branch sequences are also manageable via API:

    # List all branch sequences for a pipeline
    GET /pipelines/{pipelineIdentifier}/branch-sequences

    # Reset counter for a specific branch
    DELETE /pipelines/{pipelineIdentifier}/branch-sequences/branch?branch=main&repoUrl=github.com/org/repo

    # Set counter to a specific value (e.g., after major release)
    PUT /pipelines/{pipelineIdentifier}/branch-sequences/set?branch=main&repoUrl=github.com/org/repo&sequenceId=100

All of this is gated by the same feature flag so only accounts that have adopted the feature use the APIs.
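
If you script against these endpoints, it helps to build the URLs with proper query encoding, since branch names and repo URLs contain slashes. The sketch below only constructs the method and URL; the base URL is a placeholder (the paths above are given without a host), and authentication is deliberately left out because it is not described here.

```python
from urllib.parse import quote, urlencode

# Placeholder base URL: an assumption for this example, not a real endpoint.
BASE_URL = "https://harness.example.com/api"


def list_url(pipeline_id):
    """URL for listing all branch sequences of a pipeline."""
    return f"{BASE_URL}/pipelines/{quote(pipeline_id, safe='')}/branch-sequences"


def reset_request(pipeline_id, branch, repo_url):
    """Return (method, url) for resetting one branch counter."""
    query = urlencode({"branch": branch, "repoUrl": repo_url})
    return "DELETE", f"{list_url(pipeline_id)}/branch?{query}"


def set_request(pipeline_id, branch, repo_url, sequence_id):
    """Return (method, url) for setting a branch counter to a specific value."""
    query = urlencode(
        {"branch": branch, "repoUrl": repo_url, "sequenceId": sequence_id}
    )
    return "PUT", f"{list_url(pipeline_id)}/set?{query}"
```

`urlencode` percent-encodes the slashes in values such as `feature/x`, so a branch name cannot be mistaken for an extra path or query component.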

Try It (With Smart Guardrails)

- Enable the feature – Turn on CI_ENABLE_BRANCH_SEQUENCE_ID (Account Settings → Feature Flags, or reach out to the Harness team).

- Use the expression – Add <+pipeline.branchSeqId> in steps, tags, or env vars.

- Verify – Run the pipeline on two or three branches and confirm each branch has its own 1, 2, 3…

If branch context isn't available, the expression returns null. Design your pipeline to handle that (for example, skip tagging or use a fallback) for tag builds or edge cases.
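
One way to handle the null case is to fall back to a commit-based tag. The helper below is a hypothetical sketch of that logic as it might appear in a tagging script; it assumes the branch, sequence ID, and commit SHA have already been passed in from the pipeline, with `None` standing in for a null expression value.

```python
import re


def docker_tags(branch, branch_seq_id, commit_sha):
    """Build image tags, falling back to the commit SHA without branch context."""
    if branch is None or branch_seq_id is None:
        # Tag-only runs: no branch context, so no branch-local build number.
        return [f"sha-{commit_sha[:7]}"]
    # Docker tags cannot contain '/', so 'feature/auth' becomes 'feature-auth'.
    safe_branch = re.sub(r"[^A-Za-z0-9_.-]", "-", branch)
    return [str(branch_seq_id), f"{safe_branch}-{branch_seq_id}"]
```

With `branch="feature/auth"` and `branch_seq_id=3` this produces `3` and `feature-auth-3`; with no branch context it produces a single SHA-based tag instead of an invalid or misleading build number.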

Feature availability may vary by plan. Check with your Harness account team or the Harness Developer Hub for your setup.

How Other CI Platforms Handle This (Spoiler: Most Don't)

This isn't just a Harness problem we solved—it's an industry gap. Here's how major CI platforms compare:

Most platforms treat build numbers as an afterthought. Harness CI treats them as a first-class versioning primitive. For teams migrating from Jenkins or Azure DevOps, the model will feel familiar. For teams on GitHub Actions, GitLab, or CircleCI, this fills a gap that previously required external services or custom scripts.

What's Coming

This is the first release of branch-scoped sequence IDs. The foundations are in place: per-branch counters, expression support, and APIs. We're not done.

We're listening. If you use this feature and hit rough edges, or have ideas for tag-scoped sequences, dashboard visibility, or trigger conditions, we want to hear about it. Share feedback.

AI Deployment in Production: Orchestrate LLMs, RAG, Agents

For the past few years, the narrative around Artificial Intelligence has been dominated by what I like to call the "magic box" illusion. We assumed that deploying AI simply meant passing a user’s question through an API key to a Large Language Model (LLM) and waiting for a brilliant answer.

Today, we are building systems that can reason, access private databases, utilize tools, and, hopefully, correct their own mistakes. However, the reality is that while AI code generation tools are helping us write more code than ever, we are actually getting worse at shipping it. Google's DORA research found that delivery throughput is decreasing by 1.5% and stability is worsening by 7.5%. Deploying AI is no longer a machine learning experiment; it’s one of the most complex system integration challenges in modern software engineering.

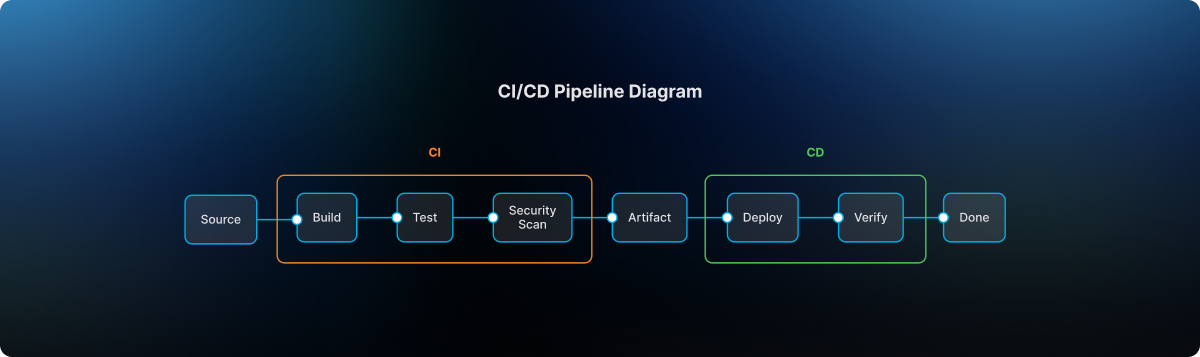

That's why integrated CI/CD is no longer optional for AI deployment—it's the foundation. As teams adopt platforms like Harness Continuous Integration and Harness Continuous Delivery, testing and release orchestration shift from isolated checkpoints to continuous safeguards that protect quality and safety at every layer of the AI stack.

What Is AI Deployment in 2026?

Most definitions of AI deployment are stuck in the "model era." They describe deployment as taking a trained model, wrapping it in an API, and integrating it into a single application to make predictions.

That description is technically accurate—but strategically wrong.

In 2026, AI deployment means:

Integrating a full AI application stack—models, prompts, data pipelines, RAG components, agents, tools, and guardrails—into your production environment so it can safely power real user workflows and business decisions.

You're not just deploying "a model." You are deploying the instructions that define the AI's behavior, the engines (LLMs and other models) that do the reasoning, the data and embeddings that feed those engines context, the RAG and orchestration code that glue everything together, the agents and tools that let AI take actions in your systems, and the guardrails and policies that keep it all safe, compliant, and affordable.

Classic "model deployment" was a single component behind a predictable API. Modern AI deployment is end‑to‑end, cross‑cutting, and deeply entangled with your existing software delivery process.

If you want a great reference for the more traditional view, IBM's overview of model deployment is a good baseline. But in this article, we're going to go beyond that to talk about the compound system you are actually shipping today.

Why AI Deployment Has Become the Bottleneck

The paradox of this moment is simple: coding has sped up, but delivery has slowed down.

AI coding assistants take mere seconds to generate the scaffolding. Platform teams spin up infrastructure on demand. Product leaders are under pressure to add "AI" to every experience. But in many organizations, the actual path from "we built it" to "it's safely in front of customers" is getting more fragile—instead of less.

There are a few reasons for this:

- The AI stack is multi‑layered and non‑deterministic. Traditional CI/CD pipelines were designed for deterministic systems: if the code compiles and tests pass, you can be reasonably confident in the behavior. With LLMs and agents, the same input might result in a range of outputs, some acceptable and some dangerous. Testing no longer has a simple pass/fail shape.

- Ownership is fractured. MLOps teams worry about training and serving models. Application teams bolt on AI features. Security teams scramble to backfill policies around data access and tool usage. Platform teams are left trying to orchestrate releases that touch all of the above, often without having clear control over any of them.

- We've created tool silos instead of integrated delivery. We now talk about MLOps, LLMOps, AgentOps, DevOps, SecOps—as if each deserved its own stack and dashboard—while the actual releases that matter to customers cut straight across those boundaries.

The result is what many teams are feeling right now: shipping AI features feels risky, brittle, and slow, even as the pressure to "move faster" keeps rising.

To fix that, we have to start with the stack itself.

Part 1: Deconstructing the Modern AI Stack

To understand how to deploy AI, you have to stop treating it as a single entity. The modern AI application is a compound system of highly distinct, interdependent layers. If any single component in this stack fails or drifts, the entire application degrades.

1. The Instructions: Prompts as Code

A prompt is no longer just a text string typed into a chat window; it is the source code that dictates the behavior and persona of your application.

- The Deployment Reality: Prompts require the same rigor as traditional code—version control, peer review, and automated testing. Because LLMs are sensitive to minute phrasing changes, updating a prompt requires running it against hundreds of baseline test cases to ensure the model doesn't experience "regression" and forget its core instructions.
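
A prompt regression check can be sketched as a small harness that runs every baseline case and compares the pass rate to a threshold. This is an illustrative example: `generate` stands for whatever function calls your model with the prompt under test, and the case format (`name`, `input`, `check`) is an assumption made for the sketch.

```python
def run_prompt_regression(generate, cases, min_pass_rate=0.95):
    """Run every baseline case through `generate` and gate on the pass rate.

    Each case is a dict with a 'name', an 'input', and a 'check' callable
    that returns True when the model output is acceptable.
    """
    failures = []
    for case in cases:
        output = generate(case["input"])
        if not case["check"](output):
            failures.append(case["name"])
    pass_rate = 1 - len(failures) / len(cases)
    return {
        "passed": pass_rate >= min_pass_rate,
        "pass_rate": pass_rate,
        "failures": failures,
    }
```

Gating on a pass rate rather than requiring every case to pass reflects the non-deterministic nature of LLM output; how strict the threshold should be is a judgment call for each application.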

2. The Engine: Large Language Models (LLMs)

The LLM is the reasoning engine. It has vast general knowledge but zero awareness of your company’s proprietary data.

- The Deployment (LLMOps) Reality: Most companies consume these via APIs or host smaller models on cloud infrastructure. The deployment challenge is routing. A sophisticated pipeline will dynamically route simple tasks to faster, cheaper models and complex reasoning tasks to massive, expensive models to optimize both latency and cloud spend, an area where many organizations currently see significant waste.
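
As a rough illustration of routing, the sketch below picks a model tier from simple signals in the prompt. Real routers typically use a classifier or a small model to make this decision; the keyword heuristic, tier names, and prices here are all assumptions for the example.

```python
# Hypothetical model tiers and prices; substitute your own catalogue.
MODELS = {
    "small": {"cost_per_1k_tokens": 0.0005},
    "large": {"cost_per_1k_tokens": 0.0150},
}

# Crude signals that a prompt needs multi-step reasoning.
REASONING_HINTS = ("why", "explain", "compare", "plan", "step by step")


def route(prompt, max_small_words=60):
    """Send short, simple prompts to the small tier and the rest to the large one."""
    text = prompt.lower()
    needs_reasoning = any(hint in text for hint in REASONING_HINTS)
    is_long = len(text.split()) > max_small_words
    return "large" if needs_reasoning or is_long else "small"
```

Keeping the routing rule in code means it can be versioned, reviewed, and regression-tested like any other part of the stack.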

3. The Fuel: Data and Vector Embeddings

An AI's output is only as reliable as the context it is given. To make an LLM useful, it needs a continuous feed of your company’s internal data.

- The Deployment Reality: This requires automated data pipelines that ingest raw information, "chunk" it, and store it in a Vector Database. If the embedding model changes, the entire database must be re-indexed. This data pipeline must be continuously deployed and synced without disrupting the live application.
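
The chunking step and the re-index rule can be sketched briefly. This example splits on characters with a fixed overlap, which is only one of several chunking strategies, and it assumes the index stores the name of the embedding model it was built with; both function names are hypothetical.

```python
def chunk(text, size=200, overlap=50):
    """Split text into overlapping character windows."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    if not text:
        return []
    step = size - overlap
    last_start = max(len(text) - overlap, 1)
    return [text[start:start + size] for start in range(0, last_start, step)]


def needs_reindex(index_metadata, embedding_model):
    """Vectors from different embedding models are not comparable,
    so any change of model forces a full rebuild of the index."""
    return index_metadata.get("embedding_model") != embedding_model
```

Recording the embedding model alongside the index lets a pipeline detect a mismatch before deployment instead of serving silently degraded retrieval results.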

4. The Architecture: Retrieval-Augmented Generation (RAG)

RAG is not a model; it is a separate software architecture deployed to act as the LLM's research assistant.

- The RAG Deployment Reality: When a user asks a question, the RAG code intercepts it, queries the Vector Database, and packages that data into a prompt. Deploying RAG means deploying the integration code that securely manages this retrieval and hand-off process.
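
The retrieve-then-package flow can be shown with a toy example. To stay self-contained, the sketch ranks documents by word overlap instead of querying a real vector database, so treat `retrieve` as a stand-in for vector search; the prompt template is likewise just an assumption.

```python
import re


def tokenize(text):
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by word overlap with the question (stand-in for vector search)."""
    query_terms = tokenize(question)
    scored = [(len(query_terms & tokenize(doc)), doc) for doc in documents]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(question, documents):
    """Package the retrieved context and the question into a single prompt."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

Only documents that share terms with the question reach the prompt, which is the hand-off the RAG layer is responsible for getting right.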

5. The Doer: AI Agents

If RAG is a researcher, an AI Agent is an employee. Agents are LLMs given access to external tools. Instead of just answering a question, an agent can formulate a plan, search the web, and execute code.

- The Deployment Reality: Moving from linear flows to "Agentic Workflows" introduces massive complexity. You are now deploying systems that iterate and loop. Deploying an agent requires monitoring its step-by-step reasoning traces and ensuring it doesn't get stuck in an infinite loop or misuse its tools.
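
A bounded agent loop with a recorded trace might look like the sketch below. It is a simplified model, not a real agent framework: `plan_next_step` stands for the LLM call that chooses the next action, and the action format (`tool`, `input`) is an assumption for the example.

```python
def run_agent(plan_next_step, tools, goal, max_steps=5):
    """Drive a plan/act loop, recording a trace and enforcing a hard step limit."""
    trace = []
    for _ in range(max_steps):
        action = plan_next_step(goal, trace)
        if action["tool"] == "finish":
            return {"status": "done", "answer": action["input"], "trace": trace}
        if action["tool"] not in tools:
            # The agent may only call tools it was explicitly given.
            return {"status": "blocked", "answer": None, "trace": trace}
        if trace and trace[-1][:2] == (action["tool"], action["input"]):
            # Repeating the exact same call is a cheap signal of a stuck agent.
            return {"status": "loop_detected", "answer": None, "trace": trace}
        observation = tools[action["tool"]](action["input"])
        trace.append((action["tool"], action["input"], observation))
    return {"status": "step_limit", "answer": None, "trace": trace}
```

Every exit path returns the trace, so a monitoring system can inspect the step-by-step reasoning whether the run finished, looped, or was blocked.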

Part 2: The Guardrails (DevSecOps for AI)

You cannot expose a raw LLM or an autonomous agent to the public, or even to internal employees, without armor. Because AI is non-deterministic, traditional software security falls short. Modern AI deployment requires distinct "Guardrails as Code".

Input Guardrails

- Prompt Injection Defenses: Malicious users will attempt to "jailbreak" the AI. Input guardrails use separate, smaller models to intercept adversarial prompts before they reach the core LLM.

- PII Scrubbing: Automated systems must redact Personally Identifiable Information (PII) to ensure sensitive data never leaves your secure environment or reaches a third-party LLM provider.

These kinds of controls are a natural fit for policy‑as‑code engines and CI/CD gates. With something like Harness Continuous Delivery & GitOps, you can enforce Open Policy Agent (OPA) rules at deployment time—ensuring that applications with missing or misconfigured input guardrails simply never make it to production.
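
As a minimal illustration of the PII scrubbing guardrail described above, the sketch below redacts a few common patterns with regular expressions. The patterns are deliberately simple assumptions for the example; production scrubbing usually relies on a dedicated PII detection service rather than hand-written regexes.

```python
import re

# Simplified patterns for illustration only; they will miss many real formats.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def scrub(text):
    """Replace each detected PII span with a bracketed label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

Running the scrubber before the prompt leaves your environment means the third-party model only ever sees placeholders such as `[EMAIL]`.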

Output Guardrails

- Hallucination Detection: These guardrails cross-reference the model’s answer against the retrieved RAG documents, checking that each key claim is supported by, or cited from, your proprietary data.

- Schema Enforcement: If your system expects the AI to return data in a strict format, output guardrails will validate the structure and automatically reject or re-prompt the LLM if it outputs unstructured text.
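
Schema enforcement with a re-prompt loop can be sketched as follows. The schema (`intent` and `confidence`) and the `call_model` callable are hypothetical; the pattern is simply validate, then retry with a stricter instruction, within a fixed attempt budget.

```python
import json

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"intent": str, "confidence": float}


def validate(raw):
    """Return the parsed object if it matches the schema, otherwise None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            return None
    return data


def generate_structured(call_model, prompt, max_attempts=3):
    """Re-prompt until the output validates or the attempt budget runs out."""
    for attempt in range(1, max_attempts + 1):
        data = validate(call_model(prompt))
        if data is not None:
            return data, attempt
        prompt = prompt + "\nReturn only valid JSON with fields: intent, confidence."
    raise ValueError("model never produced schema-valid output")
```

The attempt cap matters as much as the validation: without it, a model that never complies would turn the guardrail into an unbounded and expensive retry loop.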

Agentic and Operational Guardrails

- Blast-Radius Containment: When deploying agents that can execute actions, strict Role-Based Access Control (RBAC) must be enforced. Agents must operate on the principle of least privilege.

- FinOps and Rate Limiting: AI is computationally expensive. Guardrails must enforce strict token-usage tracking and throttle actions to prevent a runaway agent from racking up thousands of dollars in cloud compute costs.
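
A token budget is one simple way to stop a runaway agent. The class below is an illustrative sketch with hypothetical names: it assumes the caller knows, or can estimate, the token count of each model call before making it.

```python
class TokenBudget:
    """Track token spend for one agent run and refuse calls past the cap."""

    def __init__(self, max_tokens):
        self.max_tokens = max_tokens
        self.used = 0

    def charge(self, tokens):
        """Record a call's token usage and return the remaining budget."""
        if self.used + tokens > self.max_tokens:
            raise RuntimeError(
                f"token budget exceeded: {self.used + tokens} > {self.max_tokens}"
            )
        self.used += tokens
        return self.max_tokens - self.used
```

Checking the budget before the call, and raising instead of logging, turns the cost limit into a hard stop that the surrounding orchestration has to handle explicitly.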

Part 3: The Interplay and the Need for Release Orchestration

Understanding the stack reveals the ultimate challenge: The Cascade Effect. In traditional software, a database error throws a clean error code. In an AI application, a bug in the data pipeline silently ruins everything downstream. This is why deployment cannot be disjointed. It requires rigorous Release Orchestration.

- Fuzzy Integration Testing: Traditional CI/CD pipelines rely on exact-match assertions. Because LLMs return varying text, we now require "semantic evaluation"—often using a separate LLM acting as a judge to grade the output based on meaning and accuracy during the automated testing phase.

- Progressive Rollout Strategies: Because you cannot perfectly predict AI behavior, orchestration must support Canary releases—rolling out the new model to 5% of users to monitor drift before a full launch.

- Synchronizing the Moving Parts: A prompt update might require a different RAG strategy. A new embedding model demands a full database re-indexing. Release orchestration ensures that when one layer is updated, the corresponding dependencies are automatically tested and deployed in lockstep.
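
The 5% canary slice mentioned above is typically assigned deterministically so that each user stays on the same variant across requests. The sketch below shows one common way to do that with a hash; the function name is hypothetical, and real progressive delivery tools handle this assignment for you.

```python
import hashlib


def in_canary(user_id, percent=5):
    """Place a stable slice of roughly `percent` percent of users in the canary."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return bucket < percent
```

Because the bucket depends only on the user ID, raising `percent` from 5 to 25 keeps the original canary users in the canary and adds new ones, which keeps drift comparisons consistent as the rollout widens.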

How to Deploy AI in Production (A Practical Pipeline)

- Version prompts/configs/policies as code

- Build eval suite (golden set + safety tests)

- CI: semantic eval + regression thresholds

- Security gates: PII redaction + prompt injection tests

- CD: canary rollout for prompt/model/RAG changes

- Observability: quality + safety + cost signals

- Rollback rules tied to metrics

- Post-deploy review and dataset refresh cadence
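
The CI step in this pipeline can be sketched as a small gate over golden-set scores. Everything here is an assumption for illustration: scores are taken to be floats in [0, 1] produced by whatever semantic evaluator you use, and the `max_drop` and `min_score` thresholds are placeholders you would tune.

```python
from statistics import mean


def eval_gate(baseline_scores, candidate_scores, safety_passed,
              max_drop=0.02, min_score=0.8):
    """Return (ok, reason) for a CI gate over golden-set scores in [0, 1]."""
    # Safety tests are pass/fail: a single failure blocks the release.
    if not all(safety_passed):
        return False, "safety test failed"
    candidate = mean(candidate_scores)
    if candidate < min_score:
        return False, f"mean score {candidate:.2f} below floor {min_score:.2f}"
    # Regression threshold: compare against the currently deployed version.
    drop = mean(baseline_scores) - candidate
    if drop > max_drop:
        return False, f"regressed by {drop:.2f} versus baseline"
    return True, "ok"
```

Treating safety as a hard stop and quality as a tolerance band mirrors the split between "safety tests" and "regression thresholds" in the list above.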

The Bottom Line: Orchestrate or Stall

For years, we've been obsessed with specialized silos: MLOps, LLMOps, AgentOps. But a vital realization is sweeping the enterprise: the time of siloed, specialized AI operations tools is coming to an end.

The future belongs to unified release management. The organizations that succeed will not be the ones with the smartest standalone AI models, but the ones who master the orchestration required to deploy and evolve those models, alongside everything else they ship, safely, efficiently, and continuously.

If you want a platform that brings semantic testing, progressive rollouts, and coordinated AI releases into your day-to-day workflows, Harness Continuous Integration and Harness Continuous Delivery were built for this.

Key Takeaways:

- AI deployment is deploying a stack, not a model.

- Treat prompts, evals, policies, and configs as code.

- Use semantic evaluation plus standard CI tests.

- Use progressive delivery (canaries) for model/prompt/RAG changes.

- Orchestrate dependencies (prompt ↔ RAG ↔ embeddings ↔ guardrails) to prevent silent regressions.

AI Deployment: Frequently Asked Questions (FAQs)

What is AI deployment?

AI deployment is the process of integrating AI systems (models, prompts, data pipelines, RAG architectures, agents, tools, and guardrails) into production environments so they can safely power real applications and business workflows.

How is AI deployment different from traditional model deployment?

Traditional model deployment focuses on serving a single model behind an API. Modern AI deployment involves a multi‑layer stack: instructions, engines, context, retrieval, agents, and policies. Failures are more likely to be silent regressions or unsafe behaviors than obvious crashes, which is why you need semantic testing, guardrails, and release orchestration.

How do you deploy AI safely in production?

Safe AI deployment starts with treating prompts and configurations as code, embedding guardrails at input, output, and action levels, and using semantic evaluation and progressive rollout strategies. It also requires immutable logging and audit trails so you can trace decisions back to specific versions of your AI stack. Combining CI for semantic tests with CD for orchestrated releases is the practical path to safety.

What tools are used for AI deployment?

Teams typically use a mix of LLM providers or model‑serving platforms, vector databases, observability tools, and CI/CD systems for orchestrating releases. On top of that, they add policy engines and specialized evaluation frameworks. The critical shift is moving from isolated "AI tools" to integrated pipelines that tie everything together.

How do canary releases work for AI models and prompts?

With canary releases, you send a small portion of traffic to the new behavior (a new model, prompt, or RAG strategy) while most users continue on the old path. You observe semantic quality, safety signals, and performance. If the canary behaves well, you gradually increase its share. If it misbehaves, you automatically roll back to the previous version.
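
The promote-or-roll-back decision can be sketched as a single step function. The stage percentages, metric names, and limits are hypothetical, and the sketch assumes every metric is "higher is worse" (error rate, unsafe-response rate, latency).

```python
STAGES = [5, 25, 50, 100]  # hypothetical traffic percentages


def next_canary_step(current_percent, metrics, limits):
    """Decide the next traffic share; 0 means roll back to the previous version."""
    for name, limit in limits.items():
        if metrics[name] > limit:
            return 0  # any breached limit triggers rollback
    later = [s for s in STAGES if s > current_percent]
    # Already at full rollout: stay there.
    return later[0] if later else current_percent
```

For example, with `limits = {"error_rate": 0.02}`, a canary at 5% with an observed error rate of 0.01 moves to 25%, while one at 0.05 is rolled back.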


How to Plan a Successful CI/CD Migration Without Disrupting Developers

- Treat CI/CD migration like a developer platform launch: define what “no disruption” means, baseline your metrics, and set clear cutover rules.

- Migrate the foundations before the YAML: runners, networking, caching, and artifact handling determine whether feedback stays fast and reliable.

- Roll out in waves with parallel runs: start with a representative pilot, then expand using a repeatable checklist for readiness, performance, and rollback.

Modern engineering teams run on CI/CD. It’s where pull requests get validated, artifacts get produced, and releases get promoted to production. That also makes CI/CD migration very risky because you're not just moving a "tool"; you're moving the workflow that developers use dozens or hundreds of times a day.

The good news: disruption is optional. If you plan the migration like a product launch for developers, you can change platforms while keeping shipping velocity steady, often improving reliability, security, and cost along the way.

Harness CI can help you reduce migration friction by standardizing pipeline patterns and improving build performance without asking every team to rebuild their workflows from scratch.

What a CI/CD Migration Really Includes (and What to Defer)

A CI/CD migration is more than just "moving pipelines." In reality, you're moving or re-implementing four layers that work together:

- Workflow definitions: pipelines, templates, triggers, branch rules, environments, and approvals.

- Execution layer: build agents/runners, container orchestration, machine pools, concurrency, network access.

- Integrations and dependencies: source control, artifact registries, IaC tools, notifications, ticketing, scanners, and secrets.

- Governance: RBAC, SSO, approvals, audit logs, policy enforcement, and compliance evidence.

What to defer on purpose so you don’t disrupt developers:

- A full rewrite of every edge-case pipeline “to make it perfect.”

- A complete standardization effort across every language, framework, and release process.

- A platform-wide re-architecture that turns the migration into an 18‑month program.

Aim for parity first, then iterate for standardization and optimization once the new platform is stable.

CI/CD Migration Steps (A Practical Plan)

Use this step-by-step plan to migrate safely while developers keep shipping. Start with measurable guardrails, prove parity in a pilot, then scale with wave-based cutovers.

Step 1: Define “No Disruption” for Your CI/CD Migration (and Measure It)

You can’t protect developer experience if you don’t define it.

Start by writing a one-page “rules of engagement” that answers:

- What must keep working with zero/minimal downtime (for example: production deployments, security scans, release approvals)?

- What can tolerate change (for example: non-prod deploys, nightly builds)?

- What does rollback look like if a cutover fails?

- Who owns decisions, and who is on point when a pipeline breaks?

Then baseline two sets of metrics: delivery outcomes and pipeline health.

Delivery outcomes (DORA metrics)

- Deployment frequency

- Lead time for changes

- Change failure rate

- Recovery time/time to restore service (DORA has expanded the model over time)

You can use DORA’s official guide as your shared vocabulary and measurement reference.

Pipeline health

- Median and P95 pipeline duration (by pipeline type: PR checks, mainline builds, deploys)

- Queue time and agent utilization

- Failure rate (overall and by stage)

- Flake rate (tests that fail and pass without code changes)

- Cost per run (compute + licensing + developer time)

Tip: pick a small number of “must not regress” thresholds (for example: PR checks stay under your current P95, deployment approvals still work, and failure rate doesn’t spike).
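
A baseline like this can be computed from exported run history. The sketch below assumes each run is a dict with hypothetical `duration_s` and `status` keys and uses a nearest-rank P95; the 10% tolerance is a placeholder, not a recommendation.

```python
from statistics import median


def p95(values):
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def pipeline_health(runs):
    """Summarize runs given as dicts with 'duration_s' and 'status' keys."""
    durations = [r["duration_s"] for r in runs]
    failures = sum(1 for r in runs if r["status"] == "failed")
    return {
        "median_s": median(durations),
        "p95_s": p95(durations),
        "failure_rate": failures / len(runs),
    }


def regressions(baseline, candidate, tolerance=0.10):
    """Names of 'must not regress' metrics that got worse by more than tolerance."""
    return [k for k in ("p95_s", "failure_rate")
            if candidate[k] > baseline[k] * (1 + tolerance)]
```

Run `pipeline_health` separately per pipeline type (PR checks, mainline builds, deploys) so a slow deploy pipeline does not hide a regression in PR feedback time.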

Step 2: Inventory Your Current CI/CD Reality

Most migration pain comes from what you didn’t discover up front: the secret integration, the shared library, the one pipeline that deploys five services, the hardcoded credential that “nobody owns.”

Build a pipeline catalog with the minimum fields needed to plan waves and parity:

- Repo/service name and owner (team + on-call)

- Pipeline type (PR checks, mainline build, release, deploy)

- Triggers (branch rules, tags, schedules, manual)

- Environments and approvals (dev/stage/prod, gates, checks)

- Artifact outputs (container image, package, Helm chart, etc.)

- Integrations (registry, secrets manager, scanners, Slack/Jira, cloud accounts)

- Execution details (runner type, machine size, caches, custom images)

- “Break glass” notes (special cases, manual steps, tribal knowledge)

Then do two passes:

- Critical path first: production deploy pipelines, shared templates/libraries, monorepo builds, release trains.

- Representative complexity: add a few "messy but real" pipelines early so you surface edge cases before the big wave.

If you’re planning migration waves, the Azure Cloud Adoption Framework has a useful overview of "wave planning" that translates well to CI/CD moves.
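
The catalog and the two passes can be sketched as a small data structure plus a sort. The field names are a trimmed, hypothetical subset of the catalog fields above, and the rule that "deploys to prod" means critical path is an assumption you would replace with your own definition.

```python
from dataclasses import dataclass, field


@dataclass
class PipelineEntry:
    service: str
    owner: str
    pipeline_type: str  # "pr", "mainline", "release", or "deploy"
    environments: list = field(default_factory=list)
    integrations: list = field(default_factory=list)
    break_glass_notes: str = ""

    @property
    def critical_path(self) -> bool:
        # Assumption: anything that deploys to prod counts as critical path.
        return self.pipeline_type == "deploy" and "prod" in self.environments


def discovery_order(catalog):
    """Critical-path pipelines first, then the messiest (most integrations/notes)."""
    def key(entry):
        messiness = len(entry.integrations) + (1 if entry.break_glass_notes else 0)
        return (not entry.critical_path, -messiness, entry.service)
    return sorted(catalog, key=key)
```

Even a spreadsheet export loaded into a structure like this is enough to start grouping pipelines into waves.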

Step 3: Choose a CI/CD Migration Strategy That Keeps Teams Shipping

There are three common CI/CD migration strategies. The safest choice depends on your risk tolerance, your compliance constraints, and how tightly coupled your current system is.

Parallel run (recommended for most teams)

- Run old and new pipelines side-by-side until outputs match and reliability stabilizes.

- Use the new platform to build confidence before it becomes the system of record.

Strangler pattern (migrate shared steps first)

- Migrate shared templates, artifact publishing, caching, and scanning first.

- Move full pipelines once the building blocks and standards are stable.

Big bang (use only when forced)

- Sometimes required (tool EOL, hard compliance deadlines), but it needs rehearsals, rollback drills, and heavy coverage.

If you want one crisp rule: default to waves + parallel run. Avoid turning your CI/CD migration into a cliff.
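
"Outputs match" in a parallel run can be checked mechanically. Here is a minimal sketch that compares artifact digests from the old and new pipelines; the function names are hypothetical, and it assumes your builds are reproducible enough for byte-level comparison (if they are not, compare manifests or test results instead).

```python
import hashlib
from pathlib import Path


def digest_tree(root: Path) -> dict:
    """Map each file's relative path to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*")) if p.is_file()
    }


def parity_report(old: dict, new: dict) -> dict:
    """List artifacts that are missing, extra, or different on the new platform."""
    return {
        "missing": sorted(old.keys() - new.keys()),
        "extra": sorted(new.keys() - old.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }
```

An empty report across several consecutive runs is a reasonable, objective signal that a pipeline is ready for cutover.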

Step 4: Design the Execution Layer Before You Move YAML

Developers don’t experience “YAML,” they experience feedback time and pipeline reliability. Execution decisions will make or break disruption.

Use this checklist to design the execution layer intentionally:

Where do builds run?

- Managed cloud build infrastructure, Kubernetes-based runners, VMs, or a mix.

- Network placement for private dependencies (databases, internal package registries).

- Egress controls and allowlists.

How do you protect performance?

- Dependency caching (language/package caches)

- Docker layer caching (if you build images)

- Reusing build outputs when inputs haven’t changed

- Concurrency limits and resource sizing

How do you handle artifacts and promotion?

- Standard artifact naming and versioning

- Artifact retention rules

- Promotion rules between environments

This is also where you can win developer trust quickly: if the new system’s PR checks are noticeably faster (or at least not slower), adoption becomes easier.
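
Dependency caching and output reuse both rest on the same idea: derive a key from the build inputs and reuse the result when the key has not changed. The sketch below is illustrative only; the `cache_key` name, the choice of inputs, and the key format are assumptions rather than any platform's actual scheme.

```python
import hashlib


def cache_key(tool: str, lockfile_bytes: bytes, os_arch: str = "linux-amd64") -> str:
    """Derive a cache key that changes only when the build inputs change."""
    h = hashlib.sha256()
    for part in (tool.encode(), os_arch.encode(), lockfile_bytes):
        h.update(len(part).to_bytes(8, "big"))  # length prefix avoids ambiguous joins
        h.update(part)
    return f"{tool}-{os_arch}-{h.hexdigest()[:16]}"
```

Keying on the lockfile rather than the whole repository means a README change does not invalidate the dependency cache.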

Step 5: Make Identity, Secrets, and Governance “Day 1” Work

CI/CD systems are a high-value target: an attacker who can change your pipeline can change what gets deployed. The U.S. CISA and NSA have published guidance specifically on defending CI/CD environments. Use it to harden both your migration plan and your target platform.

Treat security and governance as migration requirements, not a later phase.

Lock down access with RBAC + separation of duties

- Define who can edit pipelines and templates, manage connectors and secrets, approve promotions, and override gates.

- If you have separation-of-duties requirements, document them and build them into the model.
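
Writing the access model down as data makes it reviewable before cutover. The role names and permissions below are entirely hypothetical; the point of the sketch is the separation-of-duties check, where the approver must hold the permission and must not be the change author.

```python
# Hypothetical role-to-permission map for the target platform.
ROLE_PERMISSIONS = {
    "developer": {"edit_pipeline"},
    "platform_admin": {"edit_pipeline", "edit_template", "manage_secrets"},
    "release_manager": {"approve_promotion"},
    "security_lead": {"approve_promotion", "override_gate"},
}


def allowed(roles, action: str) -> bool:
    """True if any of the user's roles grants the action."""
    return any(action in ROLE_PERMISSIONS.get(role, set()) for role in roles)


def can_approve_promotion(approver: str, approver_roles, change_author: str) -> bool:
    """Separation of duties: approver needs the permission and must not be the author."""
    return approver != change_author and allowed(approver_roles, "approve_promotion")
```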

Prefer short-lived credentials for automation

- Static credentials in pipelines are a long-term risk.

- Where possible, use OIDC-based federation or workload identity.

- AWS’s guidance is explicit about preferring temporary credentials when you can.

Centralize secrets (and plan rotation)

- When you can, use an external secrets manager.

- Minimize secret exposure by keeping secrets out of logs and environment variables whenever possible.

- Before the cutover, confirm who owns rotation for each secret and on what cadence.
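
Keeping secrets out of logs usually comes down to masking known values before a line is written. Most CI platforms do this for registered secrets; the sketch below only illustrates the idea, and `mask_secrets` is a hypothetical helper, not a platform API.

```python
def mask_secrets(line: str, secrets) -> str:
    """Replace every known secret value in a log line with a fixed mask."""
    # Longest first so a secret that contains another is masked whole.
    for value in sorted((s for s in secrets if s), key=len, reverse=True):
        line = line.replace(value, "****")
    return line
```

Masking only catches values the system knows about, which is one more reason to centralize secrets rather than scatter them through pipeline variables.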

Don’t forget compliance evidence

CI/CD migration often changes approval workflows, audit logging, and evidence retention. Validate that evidence is captured correctly during the pilot, not at the end of wave three.

Step 6: Build a Migration Starter Kit Developers Can Copy

To avoid disrupting developers, you need a migration path that feels familiar and removes decision fatigue.

Build a “starter kit” that includes:

- Golden-path templates for the top 5–10 pipeline patterns (PR checks, mainline build, container build, deploy to stage, deploy to prod).

- Standard integrations, configured once: registries, IaC, scanners, notifications, tickets.