Featured Blogs

Over the last few years, something fundamental has changed in software development.

If the early 2020s were about adopting AI coding assistants, the next phase is about what happens after those tools accelerate development. Teams are producing code faster than ever. But what I’m hearing from engineering leaders is a different question:

What’s going to break next?

That question is exactly what led us to commission our latest research, State of DevOps Modernization 2026. The results reveal a pattern that many practitioners already sense intuitively: faster code generation is exposing weaknesses across the rest of the software delivery lifecycle.

In other words, AI is multiplying development velocity, but it’s also revealing the limits of the systems we built to ship that code safely.

The Emerging “Velocity Paradox”

One of the most striking findings in the research is what we call the AI Velocity Paradox, a term we coined in our 2025 State of Software Engineering Report.

Teams using AI coding tools most heavily are shipping code significantly faster. In fact, 45% of developers who use AI coding tools multiple times per day deploy to production daily or faster, compared to 32% of daily users and just 15% of weekly users.

At first glance, that sounds like a huge success story. Faster iteration cycles are exactly what modern software teams want.

But the data tells a more complicated story.

Among those same heavy AI users:

- 69% report frequent deployment problems when AI-generated code is involved

- Incident recovery times average 7.6 hours, longer than for teams using AI less frequently

- 47% say manual downstream work (QA, validation, remediation) has become more problematic

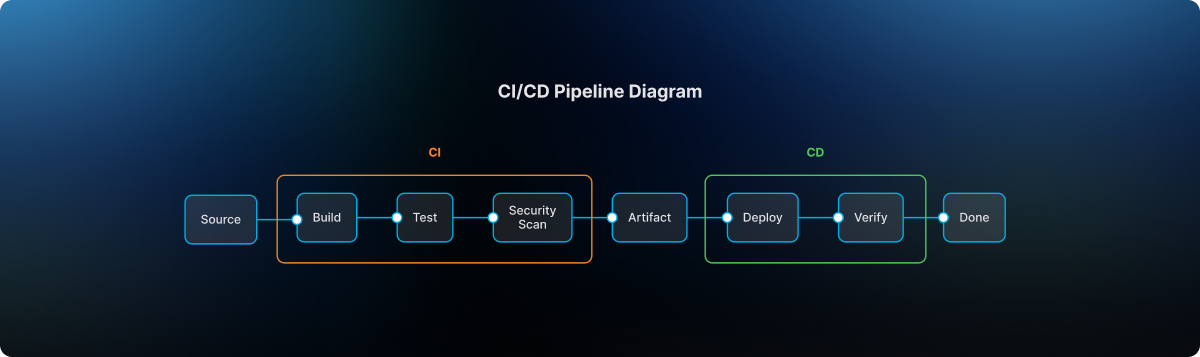

What this tells me is simple: AI is speeding up the front of the delivery pipeline, but the rest of the system isn’t scaling with it. It’s like running trains faster than the tracks were built for. Friction builds, the ride gets bumpy, and it can feel like we are on the edge of disaster.

The result is downstream friction: more incidents, more manual work, and more operational stress on engineering teams.

Why the Delivery System Is Straining

To understand why this is happening, you have to step back and look at how most DevOps systems actually evolved.

Over the past 15 years, delivery pipelines have grown incrementally. Teams added tools to solve specific problems: CI servers, artifact repositories, security scanners, deployment automation, and feature management. Each step made sense at the time.

But the overall system was rarely designed as a coherent whole.

In many organizations today, quality gates, verification steps, and incident recovery still rely heavily on human coordination and manual work. In fact, 77% of respondents say teams often have to wait on other teams for routine delivery tasks.

That model worked when release cycles were slower.

It doesn’t work as well when AI dramatically increases the number of code changes moving through the system.

Think of it this way: If AI doubles the number of changes engineers can produce, your pipelines must either:

- cut the risk of each change in half, or

- detect and resolve failures much faster.

Otherwise, the system begins to crack under pressure. The burden often falls directly on developers to help deploy services safely, certify compliance checks, and keep rollouts continuously progressing. When failures happen, they have to jump in and remediate at whatever hour.
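To make the doubling argument above concrete, here is a minimal arithmetic sketch in Java. The change volume, the 10% failure rate, and the `ChangeRiskModel` name are illustrative assumptions, not figures from the research.

```java
/** Back-of-the-envelope model: expected failed changes = changes x per-change failure rate. */
final class ChangeRiskModel {

    private ChangeRiskModel() {}

    /** Expected number of failed changes in a period. */
    static double expectedFailures(int changes, double failureRate) {
        return changes * failureRate;
    }

    /** Failure rate needed to keep expected failures at the old level after volume grows. */
    static double requiredFailureRate(int oldChanges, double oldRate, int newChanges) {
        return expectedFailures(oldChanges, oldRate) / newChanges;
    }

    public static void main(String[] args) {
        int before = 100;       // hypothetical changes per week before AI assistance
        double rate = 0.10;     // hypothetical per-change failure rate
        int after = before * 2; // AI doubles the change volume

        System.out.println(expectedFailures(before, rate));           // baseline
        System.out.println(expectedFailures(after, rate));            // same rate, doubled volume
        System.out.println(requiredFailureRate(before, rate, after)); // rate that holds failures flat
    }
}
```

With these made-up inputs, doubling the volume at a constant failure rate doubles the expected failures. Holding failures flat requires halving the per-change rate, which is the first of the two options above.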

These manual tasks, naturally, inhibit innovation and cause developer burnout. That’s exactly what the research shows.

Across respondents, developers report spending roughly 36% of their time on repetitive manual tasks like chasing approvals, rerunning failed jobs, or copy-pasting configuration.

As delivery speed increases, that operational load grows with it.

What Organizations Should Do Next

The good news is that this problem isn’t mysterious. It’s a systems problem. And systems problems can be solved.

From our experience working with engineering organizations, we've identified a few principles that consistently help teams scale AI-driven development safely.

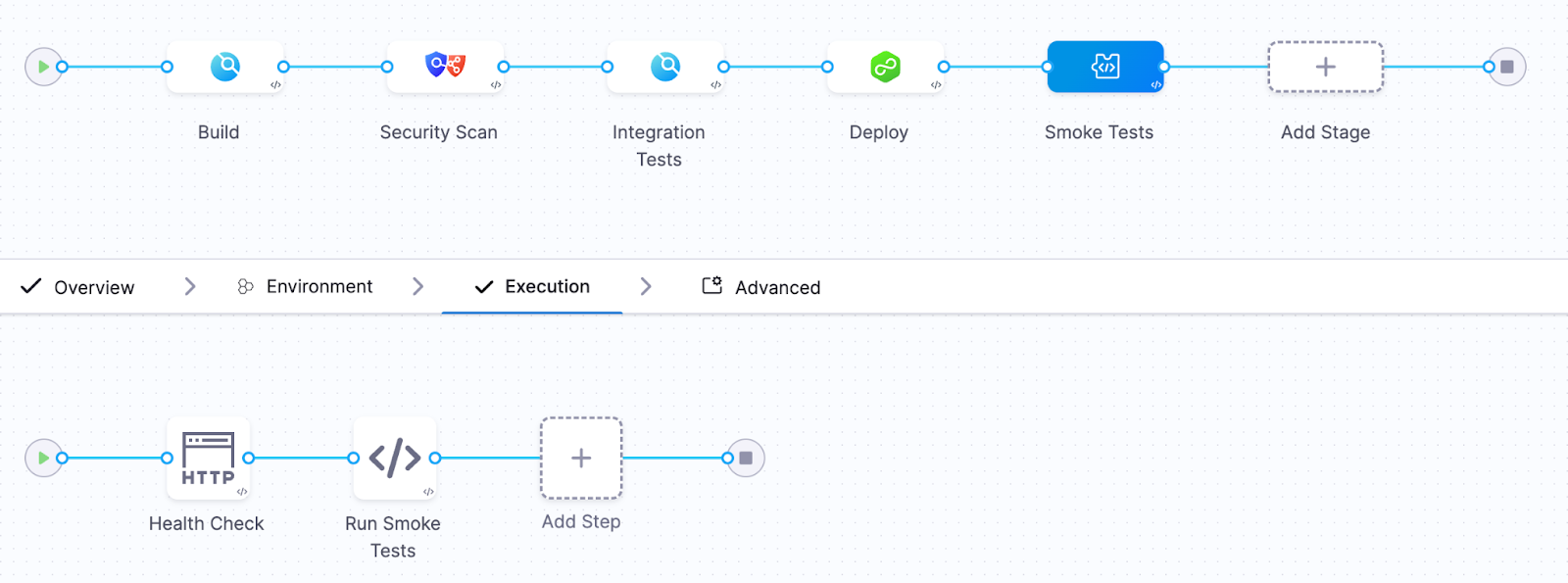

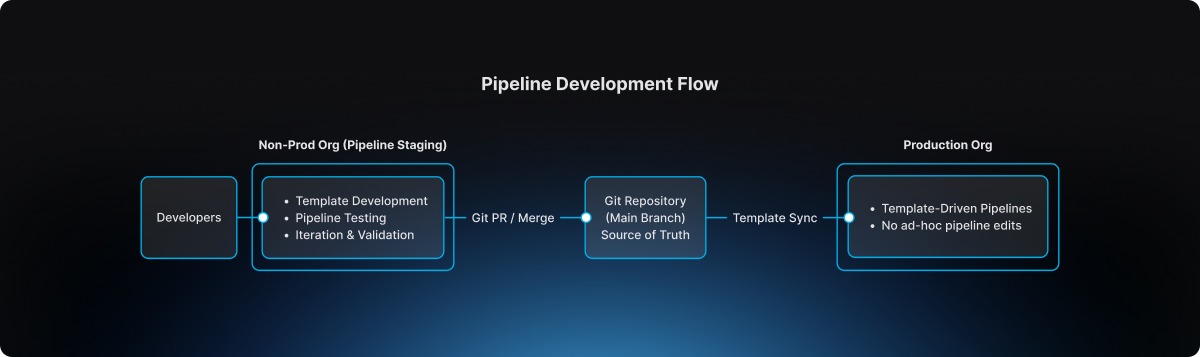

1. Standardize delivery foundations

When every team builds pipelines differently, scaling delivery becomes difficult.

Standardized templates (or “golden paths”) make it easier to deploy services safely and consistently. They also dramatically reduce the cognitive load for developers.

2. Automate quality and security checks earlier

Speed only works when feedback is fast.

Automating security, compliance, and quality checks earlier in the lifecycle ensures problems are caught before they reach production. That keeps pipelines moving without sacrificing safety.

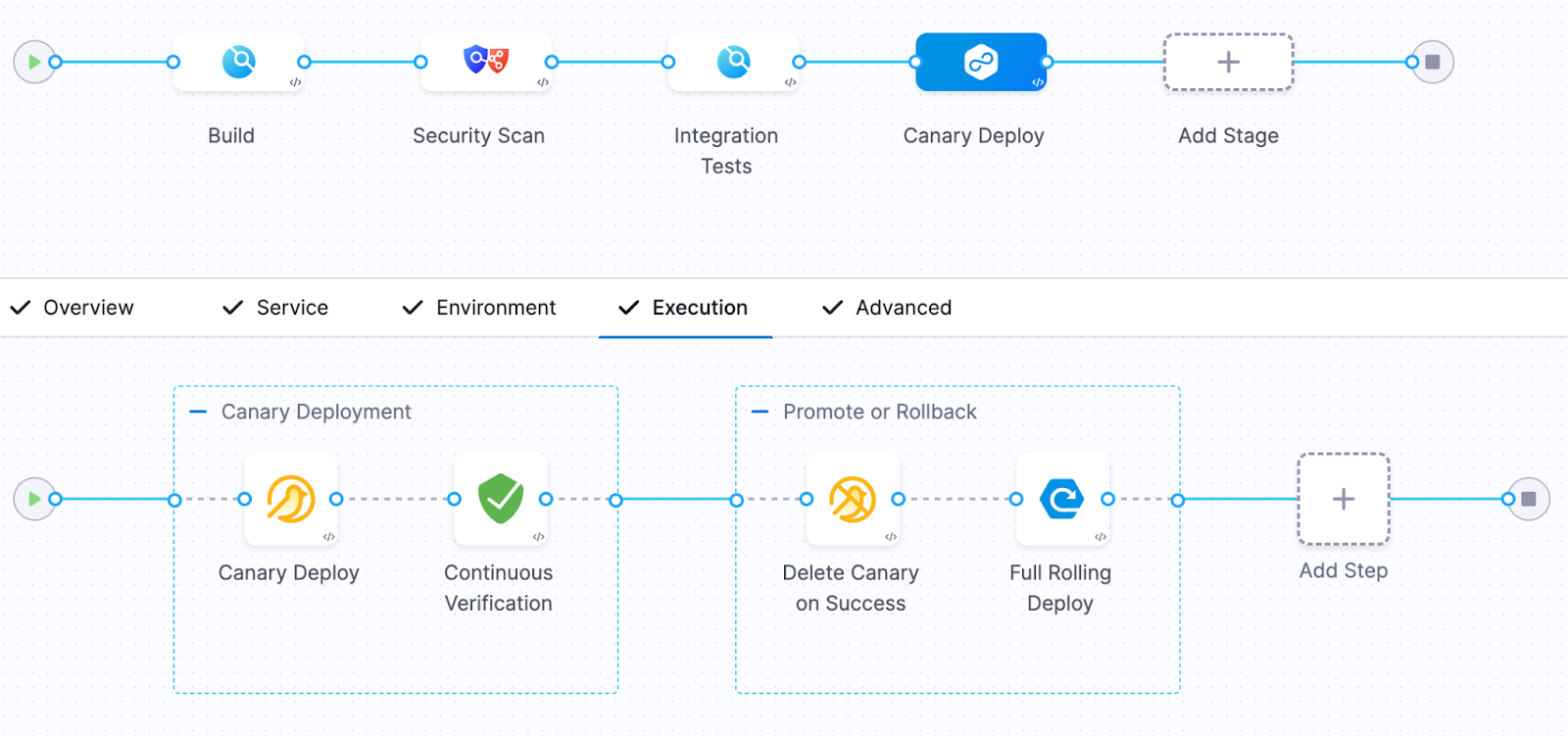

3. Build guardrails into the release process

Feature flags, automated rollbacks, and progressive rollouts allow teams to decouple deployment from release. That flexibility reduces the blast radius of new changes and makes experimentation safer.

It also allows teams to move faster without increasing production risk.
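As a rough illustration of decoupling deployment from release, here is a sketch of a percentage-based flag. `PercentageRollout`, the flag name, and the CRC32 bucketing scheme are assumptions made for this example, not how any particular feature-management product works.

```java
import java.nio.charset.StandardCharsets;
import java.util.zip.CRC32;

/** Percentage-based rollout: code is deployed everywhere but released to a slice of users. */
final class PercentageRollout {

    private final String flagName;
    private final int percentage; // share of users (0..100) who see the feature

    PercentageRollout(String flagName, int percentage) {
        if (percentage < 0 || percentage > 100) {
            throw new IllegalArgumentException("percentage must be between 0 and 100");
        }
        this.flagName = flagName;
        this.percentage = percentage;
    }

    /** Maps a user to a stable bucket in 0..99 so the same user always gets the same answer. */
    static int bucketFor(String flagName, String userId) {
        CRC32 crc = new CRC32();
        crc.update((flagName + ":" + userId).getBytes(StandardCharsets.UTF_8));
        return (int) (crc.getValue() % 100);
    }

    /** True when the user's bucket falls inside the released percentage. */
    boolean isEnabledFor(String userId) {
        return bucketFor(flagName, userId) < percentage;
    }
}
```

Raising the percentage widens the audience without redeploying, and setting it to 0 acts as an immediate rollback. Because each user's bucket is stable, anyone included at 10% stays included at 50%.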

4. Remember measurement, not just automation

Automation alone doesn’t solve the problem. What matters is creating a feedback loop: deploy → observe → measure → iterate.

When teams can measure the real-world impact of changes, they can learn faster and improve continuously.

The Next Phase of AI in Software Delivery

AI is already changing how software gets written. The next challenge is changing how software gets delivered.

Coding assistants have increased development teams' capacity to innovate. But to capture the full benefit, the delivery systems behind them must evolve as well.

The organizations that succeed in this new environment will be the ones that treat software delivery as a coherent system, not just a collection of tools.

Because the real goal isn’t just writing code faster. It’s learning faster, delivering safer, and turning engineering velocity into better outcomes for the business.

And that requires modernizing the entire pipeline, not just the part where code is written.

Road to KubeCon 2025: Smarter AI Delivery

KubeCon 2025 Atlanta is here! For the next four days, Atlanta is the undisputed center of the cloud native universe. The buzz is palpable, but this year, one question seems to be hanging over every keynote, session, and hallway track: AI.

We've all seen the impressive demos. But as developers and engineers, we have to ask the hard questions. Can AI actually help us ship code better? Can it make our complex CI/CD pipelines safer, faster, and more intelligent? Or is it just another layer of hype we have to manage?

At Harness, we believe AI is the key to solving software delivery's biggest challenges. And we're not just talking about it—we're here to show you the code with Harness AI, purpose-built to bring intelligence and automation to every step of the delivery process.

⚡ AI in Action: The Can't-Miss Talk at the Google Booth

We are thrilled to team up with Google Cloud to present a special lightning talk on Agentic AI and its practical use in CI/CD. This is where the hype stops and the engineering begins.

Join our Director of Product Marketing, Chinmay Gaikwad, for this deep-dive session.

- Talk: Creating Enterprise-ready CI/CD using Agentic AI

- Where: Google Cloud Booth 200

- When: Tuesday, November 11th, at 1:00 PM ET (1:00 – 1:15 PM)

Chinmay will be on hand to demonstrate how Agentic AI is moving from a concept to a practical, powerful tool for building and securing enterprise-grade pipelines. Be sure to stop by, ask questions, and get personalized guidance.

Your Map for KubeCon Week

AI is our big theme, but we're everywhere this week, focusing on the core problems you face. Here's where to find us.

1. Main Event: The Harness Home Base (Nov 11-13)

- 📍 Booth #522 (Solutions Showcase)

This is our command center. Come by Booth #522 to see live demos of our Agentic AI in action. You can also talk to our engineers about the full Harness platform, including how we integrate with OpenTofu, empower platform engineering teams, and help you get a handle on cloud costs. Plus, we have the best swag at the show.

2. Co-located Event: Platform Engineering Day (Nov 10)

- 📍 Booth #Z45

As a Platinum Sponsor, we're kicking off the week with a deep focus on building Internal Developer Platforms (IDPs). Stop by Booth #Z45 to chat about building "golden paths" that developers will actually love and how to prove the value of your platform.

3. Co-located Event: OpenTofu Day (Nov 10)

- 📍 Level 2, Room B203

We are incredibly proud to be a Gold Sponsor of OpenTofu Day. As one of the top contributors to the OpenTofu project, our engineers are in the trenches helping shape the future of open-source Infrastructure as Code.

The momentum is undeniable:

- 26K+ GitHub stars

- 10M+ total downloads

- 180+ engineering contributors

Our engineers have contributed major features like the AzureRM backend rewrite and the new Azure Key Provider, and we serve on the Technical Steering Committee. Come find us in Room B203 to meet the team and talk all things IaC.

Can't wait? Download the digital copy of The Practical Guide to Modernizing Infrastructure Delivery and AI-Native Software Delivery right now.

KubeCon 2025 Atlanta is about what's next. This year, "what's next" is practical AI, smarter platforms, and open collaboration. We're at the center of all three.

See you on the floor!

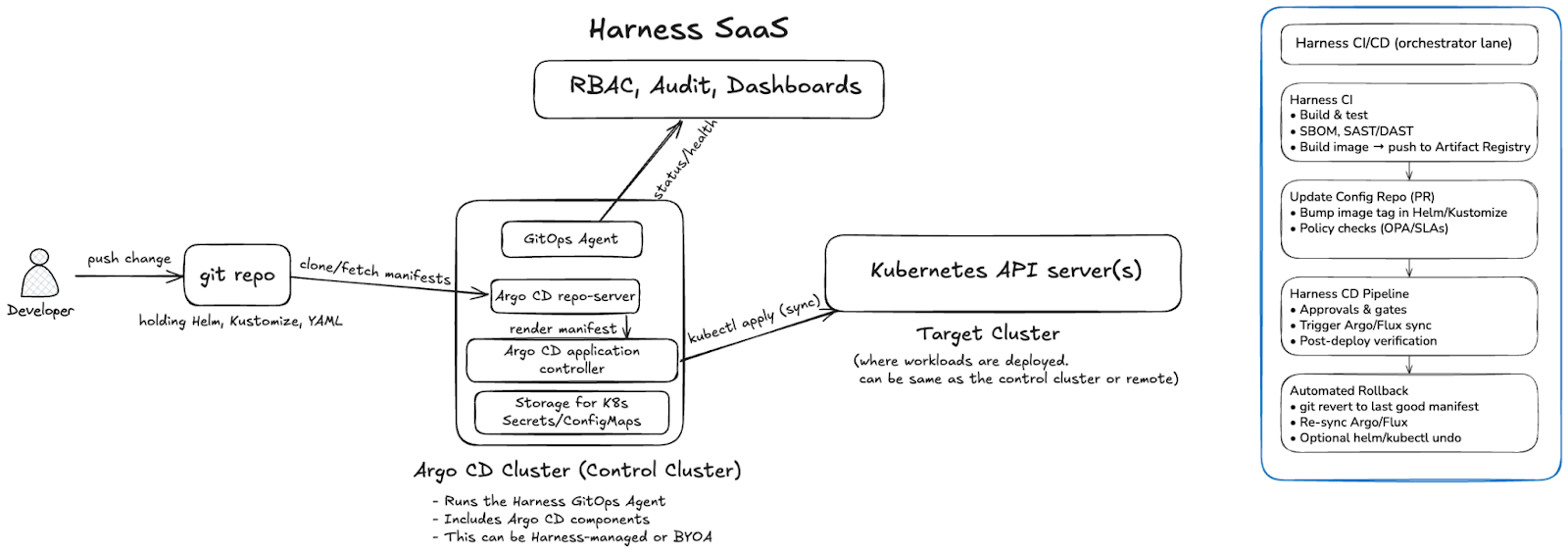

Harness GitOps builds on the Argo CD model by packaging a Harness GitOps Agent with Argo CD components and integrating them into the Harness platform. The result is a GitOps architecture that preserves the Argo reconciliation loop while adding visibility, audit, and control through Harness SaaS.

The Control Plane: Argo CD Cluster

At the center of the architecture is the Argo CD cluster, sometimes called the control cluster. This is where both the Harness GitOps Agent and Argo CD’s core components run:

- GitOps Agent: a lightweight worker installed via YAML. It establishes an outbound-only connection to Harness SaaS, executes SaaS-initiated requests locally, and continuously reports the state of Argo CD resources (Applications, ApplicationSets, Repositories, and Clusters).

- Repo-server: pulls manifests from Git repositories.

- Application Controller: compares desired state with live cluster state and applies changes through the Kubernetes API.

- ApplicationSet Controller: automates the creation and management of multiple Argo CD Applications from a single definition, using generators (for example, list, Git, or cluster) to create parameterized applications. This makes it easier to handle large-scale and dynamic deployments. Learn more in the Argo CD docs: [Generating Applications with ApplicationSet](https://argo-cd.readthedocs.io/en/latest/user-guide/application-set/).
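To give a feel for what a list generator does, here is a toy Java sketch that expands one template into several application names. It only mimics the `{{param}}` substitution idea. The class and method names are hypothetical, and real ApplicationSets are declared in YAML and rendered by the ApplicationSet Controller, not by code like this.

```java
import java.util.List;
import java.util.Map;

/** Toy model of a list generator: one template, one rendered result per parameter set. */
final class ListGeneratorSketch {

    private ListGeneratorSketch() {}

    /** Replaces every {{key}} placeholder in the template with its parameter value. */
    static String render(String template, Map<String, String> params) {
        String rendered = template;
        for (Map.Entry<String, String> entry : params.entrySet()) {
            rendered = rendered.replace("{{" + entry.getKey() + "}}", entry.getValue());
        }
        return rendered;
    }

    /** Renders the template once per element, preserving element order. */
    static List<String> generate(String template, List<Map<String, String>> elements) {
        return elements.stream()
                .map(params -> render(template, params))
                .toList();
    }
}
```

Calling `generate("{{env}}-guestbook", ...)` with one element for `dev` and one for `prod` yields one application name per environment, which is the same fan-out the controller performs for full Application definitions.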

The control cluster can be deployed in two models:

- Harness-managed: Harness provides a pre-packaged installation bundle (Kubernetes manifests or Helm configs). You apply these to your cluster, and they set up the required Argo CD components along with the GitOps Agent. This makes it easier to get started, but you still own the install action. Harness also provides upgrade bundles for the Argo CD components.

- Bring Your Own Argo (BYOA): If you already operate Argo CD, Harness only provides the GitOps Agent installation instructions. You continue managing the full lifecycle and upgrades of Argo CD yourself.

Target Clusters

The Argo CD Application Controller applies manifests to one or more target clusters by talking to their Kubernetes API servers.

- In the simplest setup, the control cluster and target cluster are the same (in-cluster).

- In a hub-and-spoke setup, a single Argo CD cluster can manage multiple remote target clusters.

- Multiple agents can be deployed if you want to isolate environments or scale out reconciliation.

Git as the Source of Truth

Developers push declarative manifests (YAML, Helm, or Kustomize) into a Git repository. The GitOps Agent and repo-server fetch these manifests. The Application Controller continuously reconciles the cluster state against the desired state. Importantly, clusters never push changes back into Git. The repository remains the single source of truth. Harness configuration, including pipeline definitions, can also be stored in Git, providing a consistent Git-based experience.

Harness SaaS Integration

While the GitOps loop runs entirely in the control cluster and target clusters, the GitOps Agent makes outbound-only connections to Harness SaaS.

Harness SaaS provides:

- User interface for GitOps operations.

- Audit logging of syncs and drifts.

- RBAC enforcement at the project, org, or account level.

All sensitive configuration data, such as repository credentials, certificates, and cluster secrets, stays in the GitOps Agent’s namespace as Kubernetes Secrets and ConfigMaps. Harness SaaS stores only a metadata snapshot of the GitOps setup (Applications, ApplicationSets, Clusters, Repositories, etc.), never the sensitive data itself. Unlike some SaaS-first approaches, Harness never requires secrets to leave your cluster.

End-to-End Flow

- A developer commits or merges a change to Git.

- The Argo CD repo-server fetches the updated manifests.

- The Application Controller compares the desired vs live state.

- If drift exists, it is reconciled by applying the manifests through the Kubernetes API.

- The GitOps Agent reports sync and health status back to Harness SaaS for visibility and governance.

In short: a developer commits, Argo fetches and reconciles, and the GitOps Agent reports status back to Harness SaaS for governance and visibility.

This is the pure GitOps architecture: Git defines the desired state, Argo CD enforces it, and Harness provides governance and observability without altering the core reconciliation model.
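The compare-and-apply step in the flow above can be sketched as a pure function. This is a simplified model, not Argo CD's implementation: the `Action` kinds and the use of one fingerprint string per resource are assumptions for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeSet;

/** Toy model of one reconciliation pass: diff desired state (Git) against live state (cluster). */
final class ReconcileSketch {

    private ReconcileSketch() {}

    /** A single corrective step; the kind names are illustrative. */
    record Action(String kind, String resource) {}

    /**
     * Both maps go from resource name to a manifest fingerprint (for example a content hash).
     * Returns the steps needed to make live match desired. The desired map is never modified,
     * mirroring the rule that clusters do not push changes back into Git.
     */
    static List<Action> plan(Map<String, String> desired, Map<String, String> live) {
        List<Action> actions = new ArrayList<>();
        for (String name : new TreeSet<>(desired.keySet())) {
            if (!live.containsKey(name)) {
                actions.add(new Action("CREATE", name));
            } else if (!desired.get(name).equals(live.get(name))) {
                actions.add(new Action("UPDATE", name)); // drift detected
            }
        }
        for (String name : new TreeSet<>(live.keySet())) {
            if (!desired.containsKey(name)) {
                // A real controller would remove extras only when pruning is enabled.
                actions.add(new Action("PRUNE", name));
            }
        }
        return actions;
    }
}
```

When desired and live states are identical, the plan is empty and nothing is applied, which is the steady state the loop converges to.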

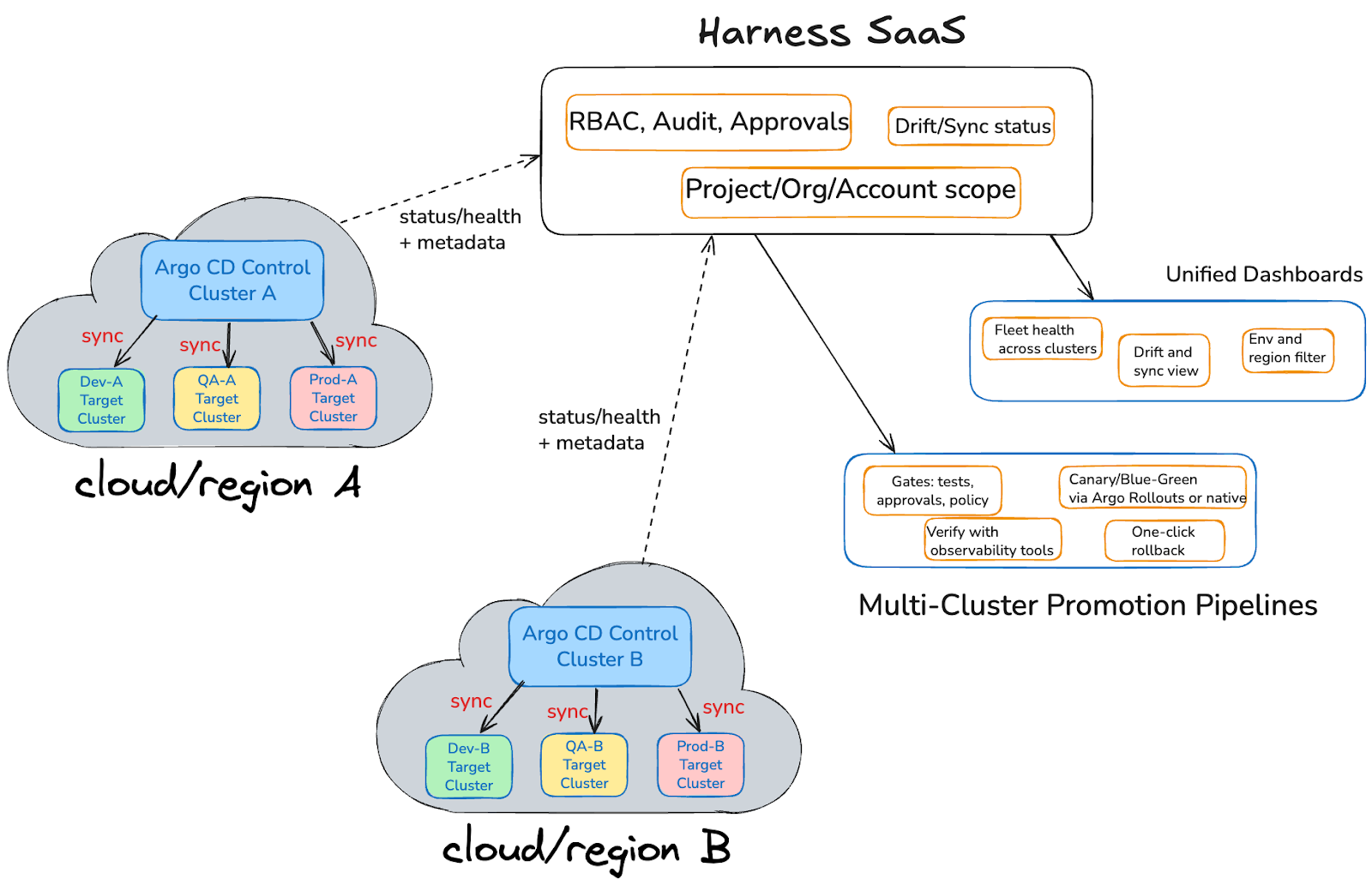

Scaling Beyond a Single Cluster

Most organizations operate more than one Kubernetes cluster, often spread across multiple environments and regions. In this model, each region has its own Argo CD control cluster. The control cluster runs the Harness GitOps Agent alongside core Argo CD components and reconciles the desired state into one or more target clusters such as dev, QA, or prod.

The flow is straightforward:

- Developers push declarative manifests into Git.

- Each control cluster fetches those manifests, compares the desired state to the live state, and applies changes to its target clusters through Kubernetes API calls (sync).

- The control cluster then reports status, health, and metadata back to Harness SaaS over outbound-only connections.

Harness SaaS aggregates data from all control clusters, giving teams a single view and a single place to drive rollouts:

- Unified Dashboards:

  - Fleet health across clusters

  - Drift and sync visibility

  - Environment and region filtering

- Multi-Cluster Promotion Pipelines:

  - Gates for tests, approvals, and policies

  - Canary or blue/green rollouts with Argo Rollouts or native strategies

  - Verification using Harness Verify with integrations to observability tools such as AppDynamics, Datadog, Prometheus, New Relic, Elasticsearch, Grafana Loki, Splunk, and Sumo Logic, enabling automated analysis of metrics and logs to gate promotions with confidence.

  - One-click rollback that restores applications, infrastructure, and cluster resources defined in Git, and database schema when migrations are stored alongside your manifests, providing a true rollback to a known good state.

This setup preserves the familiar Argo CD reconciliation loop inside each control cluster while extending it with Harness’ governance, observability, and promotion pipelines across regions.

Note: Some enterprises run multiple Argo CD control clusters per region for scale or isolation. Harness SaaS can aggregate across any number of clusters, whether you have two or two hundred.

Next Steps

Harness GitOps lets you scale from single clusters to a fleet-wide GitOps model with unified dashboards, governance, and pipelines that promote with confidence and roll back everything when needed. Ready to see it in your stack? Get started with Harness GitOps and bring enterprise-grade control to your Argo CD deployments.

Latest Blogs

Core Java vs Enterprise Java: Jakarta EE, Spring Boot & Modern Trade-offs [2026 Guide]

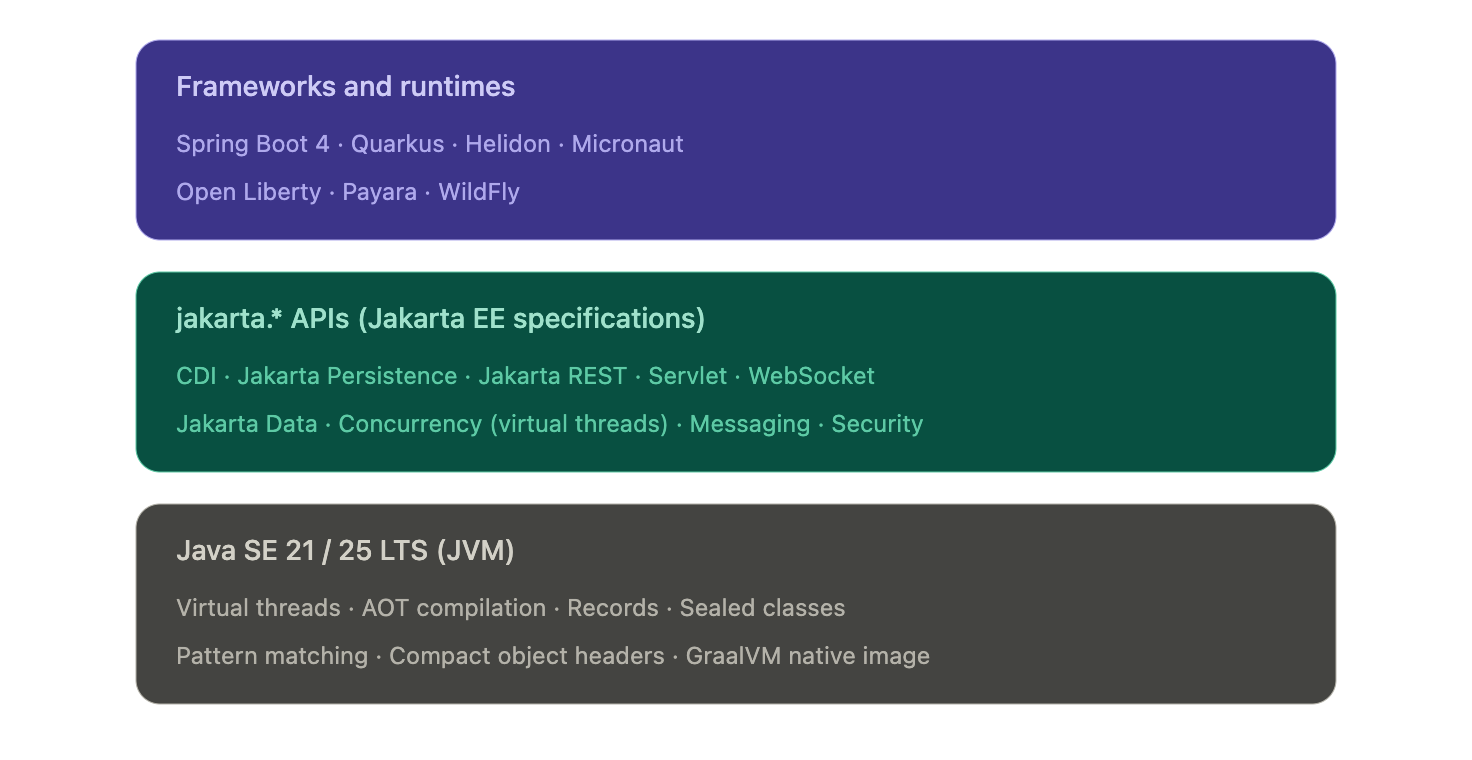

- The "Java EE vs Java SE" framing is dated. In 2026, every modern enterprise Java app runs on Java SE 21 or 25 LTS. The real decision is which framework or runtime sits on top — Spring Boot, Quarkus, Helidon, Micronaut, or vanilla Jakarta EE on Open Liberty, Payara, or WildFly.

- The javax.* → jakarta.* namespace migration is the upgrade gate most teams are still working through. Jakarta EE 9 (2020) renamed every package. Spring Boot 3 and 4 require the new namespace. Any framework or library jump in 2026 has to reckon with it.

- The "heavyweight app server" critique no longer applies to the runtimes anyone is choosing. Quarkus, Helidon, and Open Liberty's lightweight profiles compile to native images, start in tens of milliseconds, and run in under 100 MB — competitive with Go and Node on cold-start and footprint.

- Standardizing delivery velocity matters more than framework preference. Mixed Java fleets (Spring Boot + Quarkus + legacy Jakarta EE) are the norm. AI-powered CD, GitOps, and policy-as-code give platform teams a single operational model across all of them, without forcing framework consolidation.

When you're architecting an enterprise Java application, one decision quietly shapes everything downstream: runtime footprint, deployment pipelines, and how your platform team handles incidents at 3 a.m. For two decades, that decision was framed as Java SE vs Java EE. In 2026, that framing no longer holds.

Nearly every modern enterprise Java app runs on Java SE 21 or 25 LTS. The real choice now sits one layer up: which framework or runtime sits on top of the JVM. Spring Boot. Quarkus. Helidon. Micronaut. Vanilla Jakarta EE on Open Liberty, Payara, or WildFly. These options have converged on the same underlying APIs. Spring Boot 3 and 4 sit on jakarta.* packages, the same namespace Jakarta EE itself uses. But they differ sharply in startup time, memory footprint, deployment topology, and what your CI/CD pipeline has to do to ship them safely.

This guide is for the platform engineer, architect, or staff engineer who needs to make that call once and live with it across dozens of services. We'll cover what changed, where the stacks still diverge, and how to standardize delivery across a mixed Java fleet without forcing consolidation no team wants.

What is Java SE?

Java SE (Standard Edition) is the foundation of every Java application, from a five-line script to a globally distributed system. It's the language, the runtime, and the core libraries every Java program assumes is there.

But describing Java SE as just "the foundation" undersells what's happened to it in the last three years. Java SE in 2026 is not the Java SE of 2018.

What Java SE provides

At its core, Java SE includes:

- The Java language itself, including modern features like records, sealed classes, pattern matching, and switch expressions

- The JVM, which gives you platform independence and decades of mature garbage collection, JIT compilation, and observability tooling

- Core libraries for collections, concurrency, file I/O, networking, and HTTP

- Build and dev tools: javac, jshell, jpackage, and the AOT cache available in the Java 25 LTS release

These pieces form the runtime baseline that every Java framework, including Spring Boot, Quarkus, and Jakarta EE implementations, sits on top of.

What's new in Java SE that actually matters

If you've been away from the platform for a few years, four changes are worth knowing about before you make any architectural decisions:

Virtual threads (stable in Java 21). Project Loom collapsed the cost of a thread from megabytes of stack to a few hundred bytes. A single JVM can now run millions of concurrent virtual threads. This is the biggest concurrency change in Java's history and it removes the main argument for reactive frameworks like WebFlux on most workloads. Blocking code is fast again.
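Here is a minimal sketch of what that looks like in code, assuming Java 21 or later. The task count and sleep duration are arbitrary, and `Thread.sleep` stands in for a blocking I/O call.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Runs many blocking tasks, one virtual thread each (requires Java 21 or later). */
final class VirtualThreadDemo {

    private VirtualThreadDemo() {}

    /** Submits taskCount blocking tasks and returns how many completed. */
    static int runBlockingTasks(int taskCount, Duration blockFor) {
        List<Future<Integer>> futures = new ArrayList<>();
        // try-with-resources: close() waits for all submitted tasks to finish
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < taskCount; i++) {
                futures.add(executor.submit(() -> {
                    Thread.sleep(blockFor); // plain blocking call, no reactive operators
                    return 1;
                }));
            }
        }
        int completed = 0;
        for (Future<Integer> future : futures) {
            completed += future.resultNow(); // safe: every task is done after close()
        }
        return completed;
    }

    public static void main(String[] args) {
        System.out.println(runBlockingTasks(10_000, Duration.ofMillis(100)));
    }
}
```

The code is ordinary blocking Java. The only change from a classic thread pool is the executor factory, which is why existing imperative codebases can usually adopt virtual threads without restructuring.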

AOT compilation and native images. GraalVM native image and the JDK's own ahead-of-time caching turn Java apps into binaries that start in tens of milliseconds and use a fraction of the memory of a warm JVM. This used to be a Quarkus or Micronaut differentiator. It's now table stakes across the ecosystem, including Spring Boot 3+.

Records, sealed classes, and pattern matching. The boilerplate that used to push teams toward Lombok or Kotlin is mostly gone. Data-oriented programming in modern Java looks closer to Scala or Kotlin than to Java 8.
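A short sketch of that style, assuming Java 21 or later. The event types are invented for illustration.

```java
/** Data-oriented modelling with records, a sealed interface, and an exhaustive switch (Java 21+). */
final class DeploymentEvents {

    private DeploymentEvents() {}

    /** Sealed: the compiler knows these three records are the only implementations. */
    sealed interface Event permits Started, Succeeded, Failed {}

    record Started(String service) implements Event {}
    record Succeeded(String service, long millis) implements Event {}
    record Failed(String service, String reason) implements Event {}

    /** No default branch: this switch stops compiling if a new Event type is added and not handled. */
    static String describe(Event event) {
        return switch (event) {
            case Started s -> s.service() + " started";
            case Succeeded s -> s.service() + " succeeded in " + s.millis() + " ms";
            // Record pattern: destructures the components directly
            case Failed(String service, String reason) -> service + " failed: " + reason;
        };
    }
}
```

Three one-line records replace what used to be three classes with constructors, getters, `equals`, `hashCode`, and `toString`.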

Performance work in Java 25 LTS and beyond. Compact object headers can reduce heap usage by roughly 22% on heap-heavy workloads. The G1 garbage collector got a redesigned card table in Java 26 that delivers measurable throughput gains on reference-heavy code.

What Java SE doesn't give you

Plain Java SE is honest about its scope. It does not give you:

- A web server or HTTP routing layer

- Dependency injection

- Database access beyond raw JDBC

- Transaction management

- Security, authentication, or authorization frameworks

- A configuration system

You can build all of these by hand. Almost no one does. In practice, "I'm using Java SE" in 2026 means "I'm using Java SE plus a framework that supplies the missing pieces." That framework is the actual decision, which is where the rest of this guide focuses.

What is Jakarta EE? (Formerly Java EE)

Jakarta EE is the modern successor to Java EE, the standardized set of APIs and specifications for building enterprise-scale Java applications. If you wrote enterprise Java between 2000 and 2017, you wrote Java EE. Everything since 2018 is Jakarta EE.

The name change wasn't cosmetic. It came with a migration that every Java team upgrading in 2026 still has to plan for.

What changed: Java EE became Jakarta EE

Oracle transferred Java EE to the Eclipse Foundation in 2017. The platform was renamed Jakarta EE because Oracle retained the "Java" trademark. Java EE 8 (2017) was the last release under the old name. Jakarta EE 8 (2019) was the same platform under new governance.

Then came the breaking change. Starting with Jakarta EE 9 (2020), every Jakarta EE API package was renamed from javax.* to jakarta.*. An import that used to read import javax.persistence.Entity now reads import jakarta.persistence.Entity. Packages under javax.* that ship with Java SE itself, such as javax.sql, were not renamed. The change was mechanical, but it touched nearly every file in every Jakarta EE codebase, and it forced every framework that depended on those APIs to publish a major-version break.

This is why Spring Boot 3 (late 2022) was a hard upgrade. Spring Boot 3 dropped javax.* and adopted jakarta.*. Any Spring Boot 2.x application moving to 3.x or 4.x has to migrate the namespace. Tools like Eclipse Transformer and OpenRewrite automate most of it, but the migration is still the gating event for many platform upgrades happening in 2026.
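To show why the change is mechanical but not a blind find-and-replace, here is a deliberately small sketch. It is not a substitute for Eclipse Transformer or OpenRewrite: the `MOVED` list is a partial, hand-picked assumption, and only `import` lines are handled.

```java
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Illustrative javax -> jakarta import rewrite. Use a real migration tool for production code. */
final class NamespaceMigrationSketch {

    private NamespaceMigrationSketch() {}

    // Partial, illustrative list of packages that moved to jakarta.*.
    // Java SE packages such as javax.sql or javax.crypto did not move and must be left alone.
    private static final List<String> MOVED = List.of(
            "persistence", "servlet", "ws.rs", "inject", "validation", "jms");

    // Group 1: the "import " (or "import static ") prefix. Group 2: everything after "javax.".
    private static final Pattern IMPORT = Pattern.compile(
            "^(\\s*import\\s+(?:static\\s+)?)javax\\.([\\w.]+)", Pattern.MULTILINE);

    /** Rewrites only imports whose package is in MOVED; everything else is returned unchanged. */
    static String migrate(String source) {
        Matcher matcher = IMPORT.matcher(source);
        return matcher.replaceAll(match -> {
            String rest = match.group(2);
            boolean moved = MOVED.stream()
                    .anyMatch(pkg -> rest.equals(pkg) || rest.startsWith(pkg + "."));
            String namespace = moved ? "jakarta." : "javax.";
            return Matcher.quoteReplacement(match.group(1) + namespace + rest);
        });
    }
}
```

A real migration also has to cover string references, XML descriptors, service-loader files, and transitive dependencies, which is where the dedicated tools earn their keep.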

What Jakarta EE provides today

Jakarta EE 11, released in 2025, is the current stable platform. Jakarta EE 12 is in development. The headline specifications most teams interact with are:

- CDI (Contexts and Dependency Injection), the dependency injection container at the center of every modern Jakarta EE app. CDI replaced EJB as the default DI mechanism years ago. EJB still exists but is largely a legacy concern.

- Jakarta Persistence (JPA) for ORM and database access

- Jakarta REST (JAX-RS) for REST endpoints

- Jakarta Servlet and WebSocket for HTTP and bidirectional communication

- Jakarta Data, new in Jakarta EE 11. A standardized repository pattern, similar in feel to Spring Data, that simplifies persistence access

- Jakarta Concurrency, updated in Jakarta EE 11 with first-class virtual thread support

- Jakarta Messaging (JMS), Jakarta Transactions (JTA), Jakarta Security, Jakarta Validation, and Jakarta Batch for the rest of the platform

If you're a Spring developer, several of these will look familiar. That's not coincidence. Spring's annotations and patterns shaped Jakarta EE's modernization, and Jakarta EE's specifications now define the underlying APIs Spring builds on. The two ecosystems converged.

Jakarta EE Core Profile: the cloud-native subset

A common objection to Jakarta EE is that it's too heavy for microservices. Jakarta EE 10 answered this directly with the Core Profile: a minimal subset of specifications (CDI Lite, JAX-RS, JSON-P, JSON-B, Annotations, Interceptors, Dependency Injection) explicitly designed for lightweight cloud-native runtimes and AOT compilation.

The Core Profile is what runtimes like Quarkus implement when they want Jakarta EE compatibility without the full platform's footprint. It's the answer to "Jakarta EE doesn't fit in a container." It does. The original critique was about WebSphere and WebLogic, not about Jakarta EE the specification.

Modern Jakarta EE runtimes

In 2026, picking Jakarta EE doesn't mean picking a multi-gigabyte application server. The runtimes teams actually choose are:

- Quarkus (Red Hat). Compiles to GraalVM native images. Cold start under 50 ms, memory footprint under 50 MB. Built for containers, serverless, and Kubernetes from day one.

- Helidon (Oracle). Available in two flavors: Helidon SE (reactive, lightweight) and Helidon MP (full MicroProfile and Jakarta EE). Native image support.

- Open Liberty (IBM). Modular runtime where you load only the features you need. The lightweight profile is competitive with Spring Boot on memory.

- Payara Micro and Payara Server. The successor to GlassFish, with strong support for incremental modernization of legacy Java EE workloads.

- WildFly (Red Hat). The community upstream of JBoss EAP. Suitable for both traditional app server deployments and bootable JAR packaging.

The legacy "heavyweight Java EE" stereotype belongs to WebSphere full profile and WebLogic. Those are real products with real footprints, but in 2026 they're an active migration target, not a forward choice for new development.

Figure: Modern enterprise Java is a layered stack. Frameworks and runtimes pick their packaging and opinions, but they all sit on the same jakarta.* API surface and the same JVM.

Where the modern Java stacks actually differ

The honest answer to "Spring Boot vs Jakarta EE" in 2026 is that they differ less than they used to and more than the convergence story implies. The two questions worth separating are: what's actually shared now, and where does the choice still change your life as a platform engineer.

What's converged (no longer a real differentiator)

Three things used to be on every Java EE vs Spring comparison and aren't anymore:

- The API surface. Spring Boot 3 and 4 use the same jakarta.* packages Jakarta EE itself defines. A Servlet is a Servlet. A @PersistenceContext is a @PersistenceContext. The annotations and types your business logic touches are the same on both stacks.

- Concurrency model. Virtual threads (Java 21) work identically under any framework. Both Spring Boot and Jakarta EE Concurrency expose virtual-thread executors. The reactive-or-blocking debate that defined the last five years has largely collapsed for typical CRUD services.

- Native compilation. GraalVM native image works for Spring Boot (via Spring AOT), Quarkus, Helidon, Micronaut, and most Jakarta EE runtimes. Cold-start under 100 ms and memory under 100 MB are no longer Quarkus differentiators. They're available on every modern stack with varying degrees of polish.

If a comparison article tells you the choice between Spring Boot and Jakarta EE comes down to APIs, threading, or native compilation, it's working from a 2020 mental model.

Where the stacks still diverge

Four areas actually shape your platform team's day-to-day:

- Packaging and deployment. Spring Boot's fat-JAR plus embedded Tomcat or Netty is the assumed baseline across most of the industry. Quarkus and Helidon SE produce equally simple bootable JARs but lean harder on native images for cold-start-sensitive workloads. Open Liberty, Payara, and WildFly support deployable WAR or EAR archives onto a runtime, which still matters in regulated environments where the runtime is provisioned and audited separately from the application.

- Startup and memory profile. This is where the real numbers diverge. A typical Spring Boot service on the JVM starts in 2 to 5 seconds and runs in 200 to 400 MB. Quarkus on the JVM lands closer to 1 second and 150 MB. Quarkus or Helidon as a native binary starts in 30 to 80 ms and runs in 30 to 80 MB. If you're scaling to zero, running on edge nodes, or paying per-millisecond on a serverless platform, that gap is the entire reason to look beyond Spring Boot.

- Configuration philosophy. Spring Boot leans hard on auto-configuration: pull in a starter, get sane defaults, override what you need. Jakarta EE leans harder on explicit declaration through CDI and standard configuration sources. Neither is objectively better, but they shape how readable a 50-service codebase is to a new hire. Spring Boot wins on initial productivity. Jakarta EE wins on "what is this service actually doing" once the codebase has aged for three years.

- Ecosystem and hiring. Spring Boot has the larger community, the larger Stack Overflow corpus, and the deeper integration library ecosystem. For most enterprise teams, that gravity is the dominant factor. The Jakarta EE runtimes, Quarkus, Helidon, and Micronaut all have first-class documentation, but their available talent pool is meaningfully smaller. This is a delivery risk, not a technology risk, and it's worth treating it as one.

The honest framing for a platform team in 2026: pick the stack whose packaging, runtime profile, and ecosystem maturity match your actual workload. Don't pick based on philosophical preferences for "standards" or "convention over configuration." Those debates were settled in the convergence.
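To translate the per-instance memory ranges quoted above into fleet terms, here is a small arithmetic sketch. The fleet size (50 services with 3 replicas each) and the use of range midpoints are assumptions chosen for illustration.

```java
/** Rough fleet memory estimate from a per-instance footprint range. */
final class FleetFootprint {

    private FleetFootprint() {}

    /** A per-instance memory range in MB. */
    record RangeMb(int low, int high) {
        int midpoint() {
            return (low + high) / 2;
        }
    }

    /** Total memory in GB (1 GB = 1024 MB) for services x replicas instances at the range midpoint. */
    static double totalGb(RangeMb perInstance, int services, int replicas) {
        return perInstance.midpoint() * (double) services * replicas / 1024.0;
    }

    public static void main(String[] args) {
        RangeMb springBootJvm = new RangeMb(200, 400); // range quoted in the text
        RangeMb nativeBinary = new RangeMb(30, 80);    // range quoted in the text
        System.out.printf("Spring Boot on JVM: %.1f GB%n", totalGb(springBootJvm, 50, 3));
        System.out.printf("Native binary:      %.1f GB%n", totalGb(nativeBinary, 50, 3));
    }
}
```

Under these assumptions the fleet needs roughly 44 GB on the JVM versus roughly 8 GB as native binaries. Whether that difference matters depends on how the platform is billed and how often instances start.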

From convergence to choice: what actually drives the decision in 2026

By this point in the article, the framing should be obvious: Spring Boot, Quarkus, Helidon, Micronaut, and vanilla Jakarta EE on Open Liberty or Payara are not five different platforms. They're five different opinions sitting on the same jakarta.* APIs and the same JVM. So how do teams actually decide?

In practice, four signals do most of the work.

Signal 1: What does the rest of your fleet run?

The single biggest predictor of which stack a new service uses is which stack the team's other services already use. This is not laziness. It's a sound platform decision. Two services on the same framework share build tooling, base container images, observability libraries, configuration patterns, deployment templates, and on-call runbooks. A team running 40 Spring Boot services will pay a real operational tax to introduce a Quarkus service, even if Quarkus is technically the better fit for that one workload.

The exception is when the new workload has a specific profile that the existing stack genuinely can't serve well. A Spring Boot shop building one event-driven function that needs to scale to zero on AWS Lambda has a legitimate reason to reach for Quarkus or a native Spring Boot image. A Jakarta EE shop building one async data-processing service has a legitimate reason to reach for Spring Boot's mature integration ecosystem. The decision rule is not "best tool for the job in isolation," it's "best tool given what we already operate."

Signal 2: What's the deployment target?

The deployment target matters more than most architecture discussions admit. Three patterns dominate:

- Long-running services on Kubernetes or VMs. Any framework works. Spring Boot is the path of least resistance because the ecosystem assumes it. Quarkus, Helidon, and Open Liberty's lightweight profiles are competitive on the JVM and pull ahead on memory.

- Serverless and scale-to-zero. Cold start is the dominant cost. Native compilation moves from a nice-to-have to a requirement. Quarkus native and Spring Boot native are the realistic options. Helidon SE native is competitive.

- Traditional application servers. If the deployment target is an existing WebLogic or WebSphere environment, the question isn't which framework to adopt. The question is whether to keep deploying onto that runtime (Open Liberty and Payara are the modernization paths) or to refactor toward a JAR-based deployment model.

Signal 3: What's the team's reactive vs imperative bias?

Five years ago, this was a religious debate. Virtual threads have mostly settled it for new code. But existing services that are already reactive don't get a free migration, and teams that have built fluency with Project Reactor, RxJava, or Mutiny will keep getting value from those investments.

The practical guidance:

- New service, no existing reactive code, typical CRUD or RPC workload: write imperative code on virtual threads. Spring Boot or Jakarta EE either way.

- New service, high-fan-out integration or backpressure-sensitive streaming: reactive still wins. Spring WebFlux or Quarkus with Mutiny.

- Existing reactive codebase: do not migrate to imperative just because virtual threads exist. The migration cost is real. The benefit is marginal for code that already works.
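As a rough illustration of the first case above, here is a minimal sketch of imperative fan-out on virtual threads. It assumes Java 21 or later. The class name, the `lookup` method, and the simulated 50 ms delay are hypothetical stand-ins for a real blocking call such as a JDBC query or an HTTP request.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical example: names and the simulated lookup are illustrative only.
class VirtualThreadFanOut {

    // Stand-in for a blocking call. Blocking parks the virtual thread
    // without pinning an operating-system thread for the whole wait.
    static String lookup(String id) {
        try {
            Thread.sleep(50);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted", e);
        }
        return "result-" + id;
    }

    // Runs one plain blocking task per id, each on its own virtual thread,
    // and returns the results in the same order as the input ids.
    static List<String> lookupAll(List<String> ids) {
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            List<Callable<String>> tasks = ids.stream()
                    .map(id -> (Callable<String>) () -> lookup(id))
                    .toList();
            List<String> results = new ArrayList<>();
            for (Future<String> future : executor.invokeAll(tasks)) {
                results.add(future.get());
            }
            return results;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("lookup failed", e.getCause());
        }
    }

    public static void main(String[] args) {
        System.out.println(lookupAll(List.of("a", "b", "c")));
    }
}
```

The code reads as ordinary sequential Java with no reactive operators, which is the main reason this style suits typical CRUD and RPC workloads.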

Signal 4: How much governance do you need?

This is the question that quietly distinguishes Jakarta EE from Spring Boot in regulated environments. Jakarta EE is a specification with multiple compatible implementations. A regulated bank or insurer can require "any Jakarta EE 11 compatible runtime" in a procurement document and have meaningful vendor portability. Spring Boot is a single implementation, governed by VMware (now part of Broadcom). That's fine for most teams. It's a real consideration for organizations with compliance requirements around vendor lock-in.

Quarkus, Helidon, and Open Liberty all sit on the Jakarta EE side of this line because they implement Jakarta EE specifications. Spring Boot does not, despite using jakarta.* packages. The distinction matters less than it used to, but it has not gone away.

The takeaway

The convergence at the API layer means most teams can pick any of these stacks and ship perfectly good software. The choice is no longer a technology bet. It's a fit-to-fleet, fit-to-deployment-target, and fit-to-governance-model decision. The teams that get this wrong are the ones still litigating it as a technology choice.

Why your stack choice shapes reliability and AI SRE

Stack choice does not end at deployment. It shapes how your services emit telemetry, how incidents propagate, and how quickly your platform team can pin down the root cause when something breaks at 2 a.m. The convergence story makes parts of this easier (shared APIs mean shared observability standards) and parts of it harder (mixed fleets mean more surface area for incidents to hide in).

Three operational realities worth thinking through.

1. Mixed fleets are the norm, not the exception

The 2026 platform team rarely operates a single-framework fleet. Most enterprise Java estates look like this: a long tail of Spring Boot services, a growing edge of Quarkus or native-compiled services for cold-start-sensitive workloads, and a stable core of older Jakarta EE applications running on Open Liberty, Payara, or WildFly. Sometimes a few WebLogic or WebSphere systems are still in active modernization.

This mix is fine. It reflects real organizational decisions made over time. But it means your reliability strategy cannot assume framework homogeneity. Health endpoint conventions, log formats, metric names, and tracing instrumentation differ across these stacks unless you actively unify them. The teams that struggle most with incident response are the ones who let each service team pick its own conventions.

2. Observability standards have converged. Implementations have not.

OpenTelemetry has become the cross-stack standard for traces, metrics, and logs in enterprise Java. Spring Boot, Quarkus, Helidon, Micronaut, and most Jakarta EE runtimes all ship with OpenTelemetry instrumentation either built-in or one dependency away. This is genuinely good news for platform teams.

The catch: standardization at the protocol layer does not give you standardization at the convention layer. Two services emitting OpenTelemetry traces can still tag spans with completely different attribute names. Two services emitting metrics can still use different naming conventions for the same operation. AI SRE platforms perform best when the signals they ingest are semantically consistent. That consistency is a platform-engineering decision, not a framework decision.

The practical guidance: pick a single OpenTelemetry semantic convention (the OTel HTTP and database conventions are reasonable defaults) and enforce it across stacks through your shared observability libraries. The framework choice does not matter as much as whether you've made the convention choice at all.
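One way a shared observability library might enforce that convention is to normalize attribute names before export. The sketch below uses plain maps rather than the OpenTelemetry SDK, and the alias table is an assumption: the canonical names follow the stable OTel HTTP semantic conventions, while `httpStatus` is a hypothetical team-specific name.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Illustrative sketch: not part of any OpenTelemetry API.
class SpanAttributeNormalizer {

    // Assumed alias table. Keys are names a service might emit today,
    // values are the canonical names the platform team has chosen.
    static final Map<String, String> ALIASES = Map.of(
            "http.method", "http.request.method",
            "http.status_code", "http.response.status_code",
            "httpStatus", "http.response.status_code");

    // Returns a copy of the attributes with known aliases renamed.
    // Unknown attribute names pass through unchanged.
    static Map<String, Object> normalize(Map<String, Object> attributes) {
        Map<String, Object> normalized = new LinkedHashMap<>();
        attributes.forEach((name, value) ->
                normalized.put(ALIASES.getOrDefault(name, name), value));
        return normalized;
    }

    public static void main(String[] args) {
        System.out.println(normalize(Map.of("http.method", "GET")));
    }
}
```

Keeping the alias table in one shared library, rather than in each service, is what makes the convention a platform decision instead of a per-team one.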

3. Cold-start patterns differ enough to change incident behavior

A typical Spring Boot service on the JVM takes 2 to 5 seconds to start, hits steady-state CPU and memory after another 30 to 60 seconds of JIT warmup, and produces meaningful traces and metrics throughout. A Quarkus native binary starts in under 100 milliseconds and reaches steady state immediately. These are different operational profiles. They produce different incident patterns.

Spring Boot deployments tend to fail visibly during startup or warmup. Native deployments tend to fail at build time or never. Spring Boot scaling events are slower and more forgiving. Native scaling events are faster but more brittle when something is wrong with the binary itself. AI SRE platforms detect anomalies based on baselines, and your baselines should reflect the runtime profile of the service being monitored. A 3-second startup that is normal for a JVM service is a critical anomaly for a native service.
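The point about per-runtime baselines can be sketched as a simple check. The thresholds below are assumptions loosely based on the ranges quoted above (up to 5 seconds for a JVM service, under 100 milliseconds for a native binary). A real platform would learn baselines per service rather than hard-code them, and the names here are hypothetical.

```java
// Illustrative sketch: fixed thresholds stand in for learned baselines.
class StartupBaseline {

    enum RuntimeProfile { JVM, NATIVE }

    // Assumed upper bound on a normal startup for each runtime profile.
    static long maxExpectedStartupMillis(RuntimeProfile profile) {
        return switch (profile) {
            case JVM -> 5_000;
            case NATIVE -> 100;
        };
    }

    // A startup is anomalous only relative to its own profile's baseline.
    static boolean isStartupAnomalous(RuntimeProfile profile, long observedMillis) {
        return observedMillis > maxExpectedStartupMillis(profile);
    }

    public static void main(String[] args) {
        System.out.println(isStartupAnomalous(RuntimeProfile.JVM, 3_000));
        System.out.println(isStartupAnomalous(RuntimeProfile.NATIVE, 3_000));
    }
}
```

The same 3-second observation is unremarkable for the JVM profile and anomalous for the native one, which is why a single fleet-wide threshold tends to produce either noise or blind spots.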

Where AI-driven reliability earns its keep

This is where AI SRE platforms like Harness AI SRE become operationally meaningful. In a single-framework fleet, a senior SRE can mostly hold the operational model in their head. In a mixed fleet of 50 to 500 services across Spring Boot, Quarkus, and legacy Jakarta EE, no human can. The questions AI SRE answers well are exactly the questions mixed-fleet teams ask:

- Which of these 12 simultaneous alerts are symptoms of the same root cause?

- Is this latency spike on Service A correlated with the deployment of Service B 40 minutes ago?

- Has this service's startup pattern drifted from its historical baseline in a way that predicts a future outage?

- Across this fleet, which services share the dependency that just got a critical CVE?

These questions are tractable for AI when the underlying telemetry is consistent. At this fleet size, they are intractable for humans regardless of telemetry quality. That's the operational case for treating AI SRE as platform infrastructure rather than as a tool individual teams adopt.

The framework choice shapes the data. The platform decision is what you do with it.

See how Harness AI SRE correlates incidents across mixed Java fleets.

Framework decision matrix: which stack fits which workload

The honest answer to "which Java stack should we use" depends on what you're building, what you already operate, and what your deployment target looks like. The matrix below is opinionated and concrete. Use it as a starting point, not a final answer.

Spring Boot

Choose when:

- Your team already runs Spring Boot. The hiring pool, ecosystem, and shared platform tooling pay for themselves several times over.

- You need the broadest integration library coverage. Spring Data, Spring Security, Spring Cloud, and the surrounding ecosystem are deeper than any Jakarta EE alternative.

- You want a single mainstream choice that any senior Java engineer will recognize on day one.

- You need a mature reactive option for backpressure-sensitive or very high fan-out workloads (Spring WebFlux).

Avoid when:

- Cold-start time is your dominant cost and you don't want to take on Spring Boot AOT and native image build complexity.

- Procurement requires multi-vendor specification compatibility. Spring Boot is a single implementation governed by VMware (now part of Broadcom).

Current version baseline: Spring Boot 4.0 (released late 2025), running on Java 21 or 25 LTS. Spring Boot 3.x remains a reasonable choice for teams not ready to upgrade Spring Framework to 7.

Quarkus

Choose when:

- You're deploying to serverless, edge, or scale-to-zero environments where cold start and memory footprint are the dominant operational costs.

- Native image is a first-class concern, not an afterthought. Quarkus was designed around it.

- You want Jakarta EE compatibility with cloud-native packaging and a developer experience optimized for fast feedback loops (live reload, dev services).

- You're greenfield or building a clearly-bounded subset of services where the team can absorb the smaller talent pool.

Avoid when:

- The team has no existing Quarkus expertise and the workload doesn't actually need native compilation. The startup-time gains on the JVM are real but marginal compared to the hiring cost.

- You're deeply invested in Spring-specific libraries (Spring Cloud, Spring Data's full feature set). Quarkus has equivalents for most things, but the migration cost is real.

Helidon

Choose when:

- You're an Oracle-aligned shop or run on Oracle Cloud Infrastructure. Helidon is well-supported there.

- You want a clear choice between a lightweight, functional-style flavor (Helidon SE, which has run on virtual threads rather than a reactive core since Helidon 4) and a full Jakarta EE / MicroProfile flavor (Helidon MP) inside the same product family.

- You need native image support backed by an enterprise vendor.

Avoid when:

- You're not in an Oracle-leaning environment. The community and ecosystem around Helidon are smaller than Quarkus or Spring Boot, and the network effects matter.

Micronaut

Choose when:

- You want compile-time dependency injection to avoid reflection overhead and improve startup time on the JVM, without committing to a native image build.

- You're building polyglot teams across Java, Kotlin, and Groovy and want consistent ergonomics.

- You like the architectural opinions: ahead-of-time everything, no runtime classpath scanning, fast cold start without GraalVM as a hard requirement.

Avoid when:

- You need a deep ecosystem of integration libraries on day one. Micronaut's ecosystem is solid but smaller than Spring's.

Vanilla Jakarta EE on Open Liberty, Payara, or WildFly

Choose when:

- You have existing Java EE applications you're modernizing incrementally. These runtimes support the deployable WAR or EAR model and let you upgrade Java versions and Jakarta EE versions without rewriting deployment topology.

- Procurement or compliance requires multi-vendor specification compatibility. "Any Jakarta EE 11 compatible runtime" is a meaningful procurement clause. "Spring Boot 4" is not.

- You want long-term stability over framework innovation. Jakarta EE specifications evolve more slowly and with stronger backwards compatibility guarantees than Spring Boot.

- Your operations team is already proficient with one of these runtimes and the cost of switching outweighs the benefit.

Avoid when:

- You're greenfield with no Jakarta EE legacy. The other options on this list will move faster.

A note on legacy WebSphere and WebLogic

Neither of these is a forward choice in 2026. Both are real products with real production footprints, but new development on them is rare outside very specific enterprise circumstances. If you're running WebSphere full profile or WebLogic, the relevant question is the modernization path: typically Open Liberty (the IBM-supported migration target from WebSphere) or Helidon and WildFly (common WebLogic migration targets).

How to actually decide

If you've read this far and the matrix still feels like five reasonable options, default to one of two answers:

- Greenfield, no strong existing fleet bias: Spring Boot. The ecosystem advantage compounds over time, and any future "we should have picked X for cold start" pain is fixable with Spring Boot AOT or by introducing a second framework for that specific workload.

- Greenfield, cold start matters from day one: Quarkus. The investment in native image tooling and the Quarkus dev experience pays off when scale-to-zero and per-millisecond billing are real costs.

For everything else, the matrix above is a tiebreaker. The decision rule that beats every other rule is: pick the framework your platform team can operate well at 2 a.m.
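The defaults above, combined with the fleet-matching rule from Signal 1, can be written down as a small function. This is a deliberately simplified sketch: the `Workload` record and its two fields are hypothetical, and a real decision would weigh the full matrix rather than two inputs.

```java
// Illustrative sketch of the default rules, not a complete decision procedure.
class StackDefault {

    // existingFleetStack is null for a greenfield team with no fleet bias.
    record Workload(String existingFleetStack, boolean coldStartCritical) {}

    static String recommend(Workload workload) {
        boolean greenfield = workload.existingFleetStack() == null;
        if (greenfield) {
            // The two greenfield defaults from the text.
            return workload.coldStartCritical() ? "Quarkus" : "Spring Boot";
        }
        if (workload.coldStartCritical()) {
            // The exception to fleet matching: a profile the fleet stack serves poorly.
            return "Quarkus or a native Spring Boot image";
        }
        // Otherwise match what the platform team already operates.
        return workload.existingFleetStack();
    }

    public static void main(String[] args) {
        System.out.println(recommend(new Workload(null, false)));
        System.out.println(recommend(new Workload("Open Liberty", false)));
    }
}
```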

What this looks like in practice

The article has been pushing toward one conclusion: in 2026, most enterprise Java estates are mixed-framework by design, and the platform team's job is to make that mix operable rather than to force consolidation.

What that looks like concretely:

A Spring Boot core handles the long tail of CRUD services and customer-facing APIs. A handful of Quarkus or native Spring Boot services sit at the edges where cold start matters: serverless functions, event handlers, scale-to-zero workloads. A stable set of Jakarta EE applications on Open Liberty or Payara handles the deeply-integrated systems that have been running reliably for years and would cost more to rewrite than to maintain. Java 21 is the floor across all of it, with a planned migration to Java 25 LTS over the next 12 to 18 months.

This is not an architectural compromise. It is the correct answer for organizations that have grown over time and have services with genuinely different operational profiles. The mistake is treating the mix as a problem to solve rather than an environment to operate.

Four questions worth asking before any new service

When a team proposes adding a new service to the fleet, four questions separate good decisions from defaults:

- What does the rest of our fleet run, and is there a specific reason this service should differ? If yes, name the reason. If no, match the fleet.

- What's the deployment target, and does cold start materially affect cost or user experience? If yes, native compilation is on the table. If no, the JVM is fine.

- What does the service need to integrate with, and which framework's ecosystem makes that easiest? This is usually the strongest signal.

- Who's going to operate this at 2 a.m., and what do they already know? The answer almost always points back to the existing fleet.

These questions matter more than any framework comparison because they're the questions a senior platform engineer asks before writing the first line of code. The frameworks themselves have converged enough that the operational fit dominates the technical fit.

From stack choice to delivery velocity: standardize with AI-powered CD and GitOps

The four questions at the end of the previous section all point at the same operational problem. A platform team running a mixed-framework Java fleet faces the same delivery bottleneck regardless of which frameworks are in the mix: ticket-ops and pipeline sprawl that compound with every new service.

The frameworks have converged. The pipelines have not. Most enterprise Java teams still operate one CI/CD configuration for Spring Boot, a different one for Quarkus, a third for Jakarta EE on Open Liberty or Payara, and a long tail of bespoke automation for whatever legacy systems are still in flight. Every new service adds operational surface area. Every framework upgrade creates a coordination problem.

This is the layer where AI-powered continuous delivery and GitOps practices stop being aspirational and become structural. Pull-based deployments through GitOps eliminate the manual approval steps that previously gated Spring Boot rollouts but not Quarkus ones. Policy as Code guardrails enforce the same release strategies, security requirements, and resource limits across every framework in the fleet. Automated verification catches deployment anomalies against each service's own baseline, whether that baseline is a 3-second JVM startup or a 50-millisecond native cold start. Intelligent rollbacks protect production without requiring on-call engineers to remember which framework needs which recovery playbook.

The platform decision is no longer which Java framework to standardize on. It's how to operate the mix you already have without paying a coordination tax on every change.

Frequently asked questions

What is the difference between Java SE and Jakarta EE in 2026?

Java SE is the language, JVM, and core libraries every Java application runs on. Jakarta EE is a set of standardized APIs (CDI, Jakarta Persistence, Jakarta REST, Servlet, Jakarta Data, and others) that extend Java SE for enterprise applications. In 2026, the choice is rarely between Java SE and Jakarta EE directly. It's between frameworks and runtimes (Spring Boot, Quarkus, Helidon, Micronaut, Open Liberty, Payara, WildFly) that all sit on Java SE and most of which implement or interoperate with the Jakarta EE specifications.

Is Java EE the same as Jakarta EE?

Jakarta EE is the direct successor to Java EE under new governance at the Eclipse Foundation. Oracle transferred Java EE to Eclipse in 2017 and the platform was renamed because Oracle retained the "Java" trademark. Java EE 8 (2017) was the last release under the old name. Jakarta EE 8 (2019) was the same platform under the new name. Jakarta EE 11 (2025) is the current stable version.

What is the javax to jakarta namespace migration?

Starting with Jakarta EE 9 in 2020, every Jakarta EE package was renamed from javax.* to jakarta.*. An import that used to read import javax.persistence.Entity now reads import jakarta.persistence.Entity. Spring Boot 3 (late 2022) and Spring Boot 4 both require the new namespace, which means any Spring Boot 2.x application upgrading to 3.x has to migrate every affected import. Tools like Eclipse Transformer and OpenRewrite automate most of the migration, but it remains the gating event for many platform upgrades happening in 2026.
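To make the mechanics concrete, here is a minimal sketch of the import rewrite. It is not a substitute for OpenRewrite or Eclipse Transformer. The prefix list is a partial, assumed sample: `javax.*` packages that belong to Java SE itself, such as `javax.sql` or `javax.crypto`, did not move and must be left alone, and the sketch ignores static imports, string references, and configuration files.

```java
import java.util.List;

// Illustrative only: real migrations should use dedicated tooling.
class NamespaceRewrite {

    // Assumed, partial list of package prefixes that moved to jakarta.*.
    static final List<String> MOVED_PREFIXES = List.of(
            "javax.persistence", "javax.servlet", "javax.ws.rs",
            "javax.inject", "javax.validation", "javax.enterprise");

    // Rewrites a single import line if it uses one of the moved prefixes.
    // Any other line, including Java SE javax.* imports, is returned unchanged.
    static String rewriteImport(String line) {
        for (String prefix : MOVED_PREFIXES) {
            String oldStart = "import " + prefix + ".";
            if (line.startsWith(oldStart)) {
                String newPrefix = "jakarta." + prefix.substring("javax.".length());
                return "import " + newPrefix + "." + line.substring(oldStart.length());
            }
        }
        return line;
    }

    public static void main(String[] args) {
        System.out.println(rewriteImport("import javax.persistence.Entity;"));
        System.out.println(rewriteImport("import javax.sql.DataSource;"));
    }
}
```

The allow-list approach is the important design choice: a blanket replacement of `javax.` with `jakarta.` would break imports that still live in Java SE.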

Should I use Spring Boot or Jakarta EE for a new microservice in 2026?

For most greenfield services, Spring Boot is the path of least resistance because of its ecosystem and hiring advantages. Choose a Jakarta EE runtime like Quarkus when cold start time and memory footprint are your dominant operational costs, when you need native compilation as a first-class concern, or when procurement requires multi-vendor specification compatibility. The technical capabilities have largely converged. The decision is mostly about ecosystem fit, deployment target, and what your platform team already operates well.

What's the performance difference between Spring Boot and Quarkus?

On the JVM, a typical Spring Boot service starts in 2 to 5 seconds and runs in 200 to 400 MB, while a Quarkus service starts closer to 1 second and runs in 150 to 250 MB. As GraalVM native binaries, both Spring Boot (via Spring AOT) and Quarkus start in 30 to 100 milliseconds and run in 30 to 80 MB. The real performance difference shows up in cold-start-sensitive deployments like serverless and scale-to-zero workloads, where native compilation moves from a nice-to-have to a requirement.

Which Java version should I target in 2026?

Java 21 LTS is the production baseline for most enterprise Java fleets, and Java 25 LTS (released September 2025) is what platform teams are migrating to over the next 12 to 18 months. Java 17 should be treated as the floor, not the target. Avoid non-LTS releases (currently Java 26) for production unless you have a specific reason to track preview features, since support windows for non-LTS versions are six months. Both Spring Boot 4 and Jakarta EE 11 support Java 21, with first-class enhancements when running on Java 25.

Can Jakarta EE and Spring Boot services run in the same Kubernetes cluster?

Yes, and most enterprise Java fleets do exactly this. The technical compatibility is straightforward because both stacks produce standard container images and both expose health, metrics, and logs through OpenTelemetry-compatible instrumentation. The harder problem is operational consistency: enforcing the same release strategies, observability conventions, and governance policies across both stacks. Policy-as-code and unified delivery pipelines solve this regardless of which frameworks are in the mix.

Is Java EE dead?

Java EE under that name ended in 2017, but the platform is alive and actively developed under the Jakarta EE name at the Eclipse Foundation. Jakarta EE 11 shipped in 2025 with new specifications including Jakarta Data and first-class virtual thread support. Modern runtimes like Quarkus, Helidon, Open Liberty, Payara, and WildFly implement Jakarta EE specifications in cloud-native form. The "Java EE is dead" narrative was specifically about heavyweight application servers like WebLogic and WebSphere full profile, which are an active migration target rather than a forward choice.

Experience AI-powered continuous delivery and native GitOps with Harness

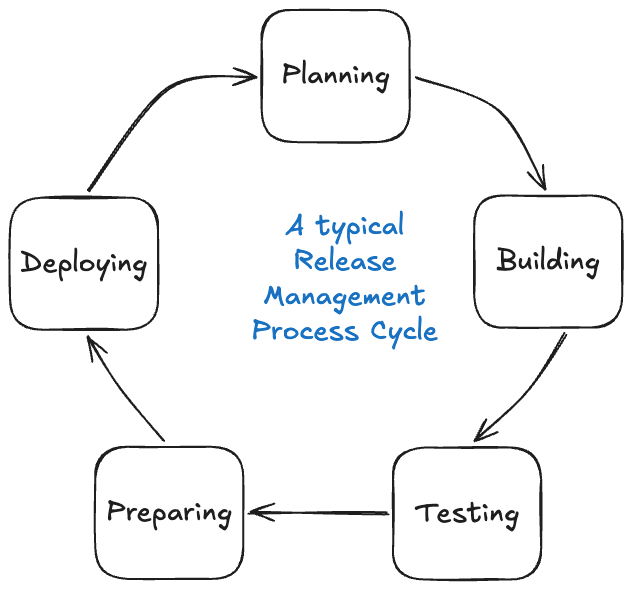

Automated Release Management: From CABs to Continuous Delivery

- CABs optimize for perceived safety, not actual risk reduction. Batched releases, surface-level reviews, and meeting-cadence latency create the very risks CABs are intended to mitigate.

- Policy as code, automated quality gates, and continuous change tracking enforce the same rules on every change, consistently and at scale.

- Speed and safety are not a trade-off if you build the right controls. Smaller, iterative releases with automated verification limit the blast radius and shorten recovery time.

The thing with Change Advisory Boards is that the intent was always good. Get smart people in a room, look at the evidence, and make sure nothing catastrophic goes out the door. In theory, that's hard to argue with.

In practice, it doesn't scale. Things happen between meetings. Teams rush to hit the window. The CAB meeting may not catch every risky deployment, but at least everyone can feel good about the process before the incident happens.

Automated release management asks a different question entirely. Not "did a human approve this?" but "has this change actually proven it's safe?" Governance moves into the pipeline itself, running the same checks on every change at whatever speed your teams ship.

That's exactly what Harness Continuous Delivery is built for: policy-driven pipelines, automated assurance, and governance that scales with your teams.

Automated Release Management: What Is It?

Automated release management replaces manual review and approval steps with automated quality gates, policy enforcement, and deployment orchestration.

Rather than routing change decisions through a central committee, automated systems evaluate each change against defined criteria like test coverage, security scans, rollback definitions and compliance checks, then approve or block it based on objective results.

That does not get rid of governance. It brings governance into the delivery pipeline and consistently applies it to all changes, not just the ones that make it onto a CAB agenda.

Automated release management paired with a continuous delivery platform allows teams to deploy frequently, recover quickly, and audit completely, with no meeting necessary.

The Traditional Model: Why CABs Can't Keep Up

The CAB model made sense when software changed slowly and release cycles were long. Cross-functional stakeholders would review evidence packets (testing results, deployment plans, security scans) and determine whether a release was safe to promote.

The problem is that the model doesn't scale well as the speed of delivery accelerates. Some patterns keep repeating themselves:

- Surface inspection. CAB members typically don't have deep, application-level context on the changes they are approving. Reviews are about whether the evidence packet looks complete, not whether the change is actually safe.

- Grouped risk. Changes build up between cycles and ship together in larger releases. The bigger the release, the bigger the blast radius when things go wrong.

- Compounding delays. Work queues up across teams and sprints while waiting for the next CAB slot. Delivery speed becomes a function of the meeting cadence, not the capability of the team.

- Big overhead for engineers. Senior engineers spend hours compiling evidence packets and presenting to committees, time that could be spent shipping.

DORA's research provides a useful gut-check here: high-performing engineering teams deploy far more frequently than their peers and have lower change failure rates, not higher. It's not approval volume that matters; it's pipeline discipline.

The fundamental problem is not that governance is bad. It is that a meeting-based governance model cannot keep up with a continuous delivery operating model.

From Approval Gates to Automated Assurance

Automated release management differs from the CAB model in the question it puts at the heart of the process.

Old model: Who approved this?

New model: What did this change prove before we shipped it?

That reframe yields a meaningfully different architecture. Governance takes place on every change, not at scheduled times. Pass/fail criteria are deterministic, not subjective. Compliance is an output of the pipeline, not a prerequisite to enter it.

Building an Automated Release Management Pipeline

1. Continuous Change Tracking

All changes must be traceable without requiring manual compilation. Version control becomes the single source of truth. CI systems automatically generate commit history, build artifacts and deployment-linked changelogs as part of normal pipeline execution. By default, the audit trail is there.

Harness GitOps takes this a step further, using Git as the single source of truth for the state of the deployment. All configuration changes are versioned, all deployments are tracked, and drift is detected automatically.

2. Automated Quality Gates

Validation moves from presentations to execution. Quality gates run on every change: unit and integration tests, end-to-end validation, security and compliance scans, and performance checks. These are not release-window activities. They are part of the standard CI/CD pipeline, running continuously on every change that moves through.

Harness Powerful Pipelines supports multi-stage pipeline orchestration across complex environments with built-in test intelligence and conditional execution logic. Quality gates run fast and don't create unnecessary bottlenecks.

3. Policy as Code

In an automated release management model, CAB rules are codified. No critical vulnerabilities before production promotion. Minimum thresholds for test coverage. Mandatory rollback procedure definitions. These policies are automatically enforced in the pipeline. If a change passes, it proceeds. If it fails, it is blocked, reliably and at scale, with no human bottleneck in the critical path.

That's what policy as code is all about: governance that's version-controlled, auditable and applied the same way every time.
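The three example rules above can be sketched as a single evaluation function. Real policy engines usually express rules in a dedicated policy language stored alongside the pipeline. The Java below is only an illustration of the idea, and the `Change` record and the 80% coverage threshold are assumptions.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative sketch: the rules mirror the examples in the text,
// but the data model and threshold are hypothetical.
class ReleasePolicy {

    record Change(int criticalVulnerabilities, double testCoverage, boolean rollbackDefined) {}

    static final double MIN_COVERAGE = 0.80; // assumed threshold

    // Returns every rule the change violates, so the pipeline can report
    // all failures at once rather than stopping at the first.
    static List<String> violations(Change change) {
        List<String> found = new ArrayList<>();
        if (change.criticalVulnerabilities() > 0) {
            found.add("critical vulnerabilities present");
        }
        if (change.testCoverage() < MIN_COVERAGE) {
            found.add("test coverage below minimum");
        }
        if (!change.rollbackDefined()) {
            found.add("rollback procedure missing");
        }
        return found;
    }

    // A change is promoted only when no rule is violated.
    static boolean allowed(Change change) {
        return violations(change).isEmpty();
    }

    public static void main(String[] args) {
        System.out.println(violations(new Change(1, 0.75, true)));
    }
}
```

Because the rules are ordinary versioned source, a change to the policy goes through the same review and audit trail as a change to the application.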

Harness DevOps Pipeline Governance lets teams define and enforce pipeline policies in one place. Compliance is not something you check at the end. It's something the pipeline enforces throughout.

4. AI-Assisted Deployment Verification

Even with strong quality gates, production deployments carry residual risk. Test environments do not always mirror what production surfaces.

Harness AI-Assisted Deployment Verification automatically analyzes deployment health using ML to compare metrics, logs and traces against baseline behavior. When something drifts, it surfaces the signal quickly, enabling rollback before an incident escalates. This closes the loop between deployment and validation, making the pipeline genuinely self-correcting, not just self-approving.
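The underlying idea of comparing a new deployment against baseline behavior can be shown with a far simpler rule than ML-based analysis. The sketch below is a plain threshold check, not how Harness implements verification. The method name and the choice of error rate as the signal are assumptions.

```java
// Illustrative sketch: a fixed threshold stands in for ML-based analysis.
class DeploymentVerifier {

    // Recommends a rollback when the new version's error rate exceeds the
    // baseline by more than the allowed absolute increase. Using an absolute
    // increase keeps the rule meaningful when the baseline is zero.
    static boolean shouldRollBack(double baselineErrorRate,
                                  double newErrorRate,
                                  double maxAbsoluteIncrease) {
        return newErrorRate - baselineErrorRate > maxAbsoluteIncrease;
    }

    public static void main(String[] args) {
        // Baseline 1% errors, new version 5%, tolerance 2 percentage points.
        System.out.println(shouldRollBack(0.01, 0.05, 0.02));
    }
}
```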

Managing Complex, Interdependent Releases

In practice, systems rarely exist in isolation. One change can affect backend services, APIs, web apps, mobile apps and edge targets all at once. In tightly coupled systems, changes to one component can cause another to break, and partial deployments can be risky without careful coordination.

Traditional coordination uses spreadsheets, emails, and war rooms. Modern automated release management means orchestration: platforms that model service dependencies, trigger pipelines in the right order, and ensure all components pass quality gates before release. Multi-team coordination becomes a single-action, end-to-end deployment.
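Triggering pipelines "in the right order" is, at its core, a topological sort over the service dependency graph. The sketch below uses Kahn's algorithm. The service names and the shape of the `dependsOn` map are hypothetical, and a real orchestrator would also run independent services in parallel.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Illustrative sketch of dependency-ordered deployment.
class ReleaseOrder {

    // dependsOn maps each service to the services that must deploy before it.
    static List<String> deploymentOrder(Map<String, List<String>> dependsOn) {
        // Number of not-yet-deployed prerequisites per service (sorted for stable output).
        Map<String, Integer> remaining = new TreeMap<>();
        // Reverse edges: prerequisite -> services waiting on it.
        Map<String, List<String>> dependents = new HashMap<>();
        for (Map.Entry<String, List<String>> entry : dependsOn.entrySet()) {
            remaining.putIfAbsent(entry.getKey(), 0);
            for (String prerequisite : entry.getValue()) {
                remaining.putIfAbsent(prerequisite, 0);
                remaining.merge(entry.getKey(), 1, Integer::sum);
                dependents.computeIfAbsent(prerequisite, k -> new ArrayList<>()).add(entry.getKey());
            }
        }
        Deque<String> ready = new ArrayDeque<>();
        remaining.forEach((service, count) -> {
            if (count == 0) ready.add(service);
        });
        List<String> order = new ArrayList<>();
        while (!ready.isEmpty()) {
            String service = ready.poll();
            order.add(service);
            for (String waiting : dependents.getOrDefault(service, List.of())) {
                if (remaining.merge(waiting, -1, Integer::sum) == 0) ready.add(waiting);
            }
        }
        if (order.size() != remaining.size()) {
            throw new IllegalStateException("dependency cycle detected");
        }
        return order;
    }

    public static void main(String[] args) {
        System.out.println(deploymentOrder(Map.of(
                "web", List.of("api"), "api", List.of("db"), "db", List.of())));
    }
}
```

The cycle check matters in practice: two services that each require the other to deploy first cannot be released by ordering alone, which is one motivation for reducing coupling.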

Harness Continuous Delivery has built-in support for orchestrated multi-service deployments with dependency mapping and conditional promotion logic. Deploy Anywhere extends this to cloud, hybrid, on-prem and edge environments without requiring separate toolchains for each target.

Harness pipelines also support canary deployments and GitOps-based progressive delivery for rollout strategies tailored to deployment risk.

Reducing Coupling Over Time

Managing interdependent releases is a good start. The longer-term goal is to reduce the coupling itself so teams can ship independently without synchronized multi-team deployments. Three practices tend to accelerate that:

- Contract testing. Services define and verify their contracts, so a change in one will not silently break others.

- Feature flags. Feature flags decouple code deployment from feature activation. Code ships continuously; features turn on when they're ready. They also act as a safety net post-deployment. If a feature causes unexpected behavior in production, it can be disabled instantly without a full rollback.

- Backward-compatible APIs. Designing for backward compatibility means downstream services do not have to be updated simultaneously with upstream changes.

Together, these patterns move teams toward the continuous delivery ideal: frequent, small, independent releases, each of which is safe on its own.
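Of the three practices, feature flags are the easiest to show in a few lines. The sketch below is an in-memory toy, assuming a hypothetical `new-checkout` flag. A production flag service would add targeting rules, persistence, and auditing.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Illustrative sketch: an in-memory flag store, not a real flag service.
class FeatureFlags {

    private final Map<String, Boolean> flags = new ConcurrentHashMap<>();

    // Flips a flag at runtime, without redeploying the code.
    void set(String name, boolean enabled) {
        flags.put(name, enabled);
    }

    // Unknown flags default to off, so unfinished code paths stay dark.
    boolean isEnabled(String name) {
        return flags.getOrDefault(name, false);
    }

    // Both code paths are deployed; the flag decides which one runs.
    String checkout(String cartId) {
        return isEnabled("new-checkout")
                ? "new flow for " + cartId
                : "legacy flow for " + cartId;
    }

    public static void main(String[] args) {
        FeatureFlags featureFlags = new FeatureFlags();
        System.out.println(featureFlags.checkout("cart-1"));
        featureFlags.set("new-checkout", true);
        System.out.println(featureFlags.checkout("cart-1"));
    }
}
```

Setting the flag back to `false` is the "disable instantly" safety net described above: the new code stays deployed but stops executing.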

What Automated Release Management Delivers

The results of replacing CAB-driven processes with policy-driven pipelines and automated assurance are measurable:

- More velocity. Releases are continuous, not fixed to a cadence. Time to production goes from weeks to hours.

- Consistent quality. All changes go through the same validation, not just the ones that make it onto a CAB agenda.

- Less risk. Smaller incremental releases mean a smaller blast radius. Automated rollback means quicker recovery when things go wrong.

- Complete auditability. All changes, gates, policy checks and deployments are automatically documented with no manual evidence collection required.

Harness CD Visualize DevOps Data surfaces deployment frequency, change failure rate, and mean time to recovery in real time, with zero instrumentation overhead. These are the DORA metrics that measure delivery health.
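For readers who want to see how these three metrics are derived, here is a minimal sketch computed from a list of deployment records. The `Deployment` record is a hypothetical data model, and the sketch assumes every failed deployment carries a non-null recovery time.

```java
import java.time.Duration;
import java.util.List;

// Illustrative sketch: metric definitions over a hypothetical record type.
class DeliveryMetrics {

    // recoveryTime is only meaningful when failed is true.
    record Deployment(boolean failed, Duration recoveryTime) {}

    // Deployment frequency: deployments per day over the observed window.
    static double deploymentsPerDay(List<Deployment> deployments, int windowDays) {
        return (double) deployments.size() / windowDays;
    }

    // Change failure rate: share of deployments that failed.
    static double changeFailureRate(List<Deployment> deployments) {
        if (deployments.isEmpty()) return 0.0;
        long failed = deployments.stream().filter(Deployment::failed).count();
        return (double) failed / deployments.size();
    }

    // Mean time to recovery: average recovery time across failed deployments.
    static Duration meanTimeToRecovery(List<Deployment> deployments) {
        List<Duration> recoveries = deployments.stream()
                .filter(Deployment::failed)
                .map(Deployment::recoveryTime)
                .toList();
        if (recoveries.isEmpty()) return Duration.ZERO;
        Duration total = recoveries.stream().reduce(Duration.ZERO, Duration::plus);
        return total.dividedBy(recoveries.size());
    }

    public static void main(String[] args) {
        List<Deployment> history = List.of(
                new Deployment(false, null),
                new Deployment(true, Duration.ofMinutes(20)),
                new Deployment(false, null),
                new Deployment(true, Duration.ofMinutes(40)));
        System.out.println(changeFailureRate(history));
        System.out.println(meanTimeToRecovery(history));
    }
}
```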

Build Controls That Don't Slow You Down

CABs were created for a slower world, where a weekly review meeting could credibly keep up with the cadence of releases. That world is long gone for most engineering organizations today.

The takeaway here is this: automated release management doesn't remove governance. It rebuilds governance as a system that is fast, consistent, auditable and embedded directly in the delivery pipeline. The teams that move fastest aren't the ones with the loosest controls. They're the ones with controls that don't slow them down.

If you're ready to move from approval bottlenecks to automated assurance, Harness Continuous Delivery is built for exactly that.

Release Management: Frequently Asked Questions (FAQs)

What is automated release management?

Automated release management is the practice of using automated quality gates, policy enforcement and deployment orchestration to replace manual approval steps in the software release process. Rather than routing changes to a committee, the pipeline evaluates each change against predefined criteria and approves or blocks it based on objective results.

What is the difference between automated release management and a CAB process?

A CAB relies on scheduled human review to approve changes before they go into production. Automated release management takes that validation and builds it into the pipeline itself, running the same checks on every change instead of batching them for periodic review. The result is faster delivery with more consistent governance.

What are quality gates in a release pipeline?

Quality gates are automated checkpoints a change must pass before moving to the next stage. Common examples include test coverage thresholds, security scan results, and performance benchmarks. A change that fails a gate is blocked automatically, without human intervention.
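As a rough illustration, a set of quality gates can be modeled as named checks over pipeline results. Everything here is hypothetical: the `Gate` type, the metric names, and the thresholds (80% coverage, zero critical vulnerabilities, 300 ms p95 latency) are assumptions, not values from any particular tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Gate:
    name: str
    metric: str
    passes: Callable[[float], bool]


# Illustrative thresholds only; real values depend on the team and service.
GATES = [
    Gate("test coverage", "coverage_pct", lambda v: v >= 80.0),
    Gate("security scan", "critical_vulns", lambda v: v == 0),
    Gate("performance", "p95_latency_ms", lambda v: v <= 300.0),
]


def evaluate_gates(results: dict[str, float], gates: list[Gate] = GATES) -> list[str]:
    """Return the names of failed gates; an empty list means the change may proceed."""
    failed = []
    for gate in gates:
        value = results.get(gate.metric)
        # A missing result counts as a failure: no evidence, no promotion.
        if value is None or not gate.passes(value):
            failed.append(gate.name)
    return failed


print(evaluate_gates({"coverage_pct": 85, "critical_vulns": 0, "p95_latency_ms": 250}))
print(evaluate_gates({"coverage_pct": 70, "critical_vulns": 0}))
```

Treating a missing metric as a failure is a design choice in this sketch: it keeps a skipped scan from silently passing a gate.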

What is policy as code?

Policy as code is the practice of expressing governance rules in version-controlled configuration files rather than documents or meeting agendas. The pipeline then automatically enforces those rules on every deployment, making compliance consistent and auditable by default.
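A small sketch of the idea, assuming a made-up JSON policy format: the rules live in a file that can be reviewed and versioned like any other change, and a function evaluates each deployment request against them. The field names (`required_approvals`, `allowed_weekdays`) are hypothetical, and production policy engines typically use a dedicated policy language instead.

```python
import json

# Hypothetical policy file contents, as they might be checked into a repository.
# Weekdays follow Python's convention: 0 is Monday, 4 is Friday.
POLICY_JSON = """
{
  "production": {"required_approvals": 2, "allowed_weekdays": [0, 1, 2, 3]},
  "staging":    {"required_approvals": 0, "allowed_weekdays": [0, 1, 2, 3, 4]}
}
"""


def check_policy(policy: dict, environment: str, approvals: int, weekday: int) -> list[str]:
    """Return policy violations for a deployment request; empty means allowed."""
    rules = policy.get(environment)
    if rules is None:
        # Environments without an explicit policy are denied by default.
        return [f"no policy defined for environment '{environment}'"]
    violations = []
    if approvals < rules["required_approvals"]:
        violations.append("not enough approvals")
    if weekday not in rules["allowed_weekdays"]:
        violations.append("deployment outside allowed weekdays")
    return violations


policy = json.loads(POLICY_JSON)
print(check_policy(policy, "production", approvals=1, weekday=4))
print(check_policy(policy, "staging", approvals=0, weekday=4))
```

Because the rules are data, changing a policy is itself a reviewable, auditable commit.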

What is the role of feature flags in automated release management?

Feature flags decouple code deployment from feature activation. Teams can ship code continuously without exposing unfinished features to users, and can disable a feature instantly if it causes issues in production, without triggering a full rollback.

What deployment strategies work best with automated release management?

Incremental strategies like canary deployments work well because they limit the blast radius of any given change. Paired with automated verification, the pipeline can catch problems early in the rollout and halt or roll back before they affect all users.
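The control loop behind a canary rollout with automated verification can be sketched as follows. The traffic steps, the 1% error-rate threshold, and the `error_rate_at` callback are assumptions chosen for illustration; a real pipeline would query live monitoring data at each step.

```python
from typing import Callable


def run_canary(
    error_rate_at: Callable[[int], float],
    steps: tuple[int, ...] = (5, 25, 50, 100),
    max_error_rate: float = 0.01,
) -> tuple[str, int]:
    """Shift traffic step by step and stop at the first unhealthy step.

    Returns (outcome, percent): for "promoted", the final traffic share;
    for "rolled_back", the traffic share at which the problem was detected.
    """
    reached = 0
    for percent in steps:
        # error_rate_at stands in for a query against real monitoring data.
        if error_rate_at(percent) > max_error_rate:
            return ("rolled_back", percent)
        reached = percent
    return ("promoted", reached)


healthy = run_canary(lambda percent: 0.001)
degraded = run_canary(lambda percent: 0.05 if percent >= 25 else 0.001)
print(healthy, degraded)
```

In the degraded case the rollout stops at 25% of traffic, so the remaining 75% of users never see the faulty version.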

How does Harness support automated release management?

Harness Continuous Delivery provides end-to-end pipeline orchestration, built-in policy governance, GitOps-based change tracking, AI-assisted deployment verification, and real-time DORA metrics. It's designed to replace manual release processes with automated systems that scale across any environment.

Introducing Harness Release Orchestration: Enterprise Release Management, Reimagined

Modern software delivery has evolved far beyond single-service deployments. Today's releases span dozens of services, multiple teams, and complex approval workflows—coordinated through spreadsheets, Slack channels, and manual checklists scattered across tools. When a production release involves deploying ten microservices across three environments, enabling five feature flags, running security scans, collecting approvals from four stakeholders, and coordinating with three different teams, the question isn't whether you can ship—it's whether you can track what shipped, when it shipped, and who approved it.

Release Orchestration solves this. It provides a unified framework for modeling, scheduling, automating, and tracking complex software releases across teams, tools, and environments—giving you end-to-end visibility from planning through production deployment and monitoring.

Why Release Orchestration Matters

Without orchestration, enterprise releases become coordination nightmares. Status lives in spreadsheets that go stale within hours. Coordination happens through email threads spanning dozens of messages. There's no single source of truth for what was deployed, when, or by whom. Manual checklists drift out of sync. Approval workflows rely on memory and goodwill. And when something goes wrong at 2 AM, reconstructing what happened requires archaeology across multiple systems.

Release Orchestration transforms this chaos into structured, auditable, repeatable processes. Model your release blueprint once—defining phases, activities, dependencies, and approval gates—then execute it repeatedly with different configurations. Automate pipeline-backed steps while retaining manual sign-offs where governance requires them. Track activity-level status, phase-level progress, and overall release health in real time. Enforce approvals, capture sign-offs, and maintain a full audit trail linking code to deployment to business outcome.

The result? Releases that used to require days of coordination now run faster with complete visibility and zero spreadsheets.

How Release Orchestration Works

Release Orchestration introduces a structured, visual approach to modeling and executing releases. Define Processes—reusable blueprints composed of Phases (Build, Testing, Deployment) and Activities (automated pipelines, manual approvals, or nested subprocesses). Release Groups define cadences and automatically generate releases. The Release Calendar provides unified visibility across all releases. The Activity Store and Input Store promote reusability—define once, execute many times with different configurations. And ad hoc releases let you execute any process on demand when you need flexibility outside your regular schedule.

At its core, Release Orchestration delivers the foundational capabilities enterprise teams need: process modeling with visual editors, scheduled and recurring releases through release groups, real-time execution tracking with dependency management, comprehensive audit trails for compliance, and AI-powered process creation that transforms natural language descriptions into structured workflows. These capabilities form the foundation for enterprise release management at scale.
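One way to picture the Process, Phase, and Activity hierarchy is as nested data structures. The sketch below is purely illustrative and is not the Harness schema: the class fields, the `ActivityKind` values, and the example activity names are all assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class ActivityKind(Enum):
    PIPELINE = "pipeline"      # automated, pipeline-backed step
    MANUAL = "manual"          # human approval or sign-off
    SUBPROCESS = "subprocess"  # nested process


@dataclass
class Activity:
    name: str
    kind: ActivityKind
    depends_on: list[str] = field(default_factory=list)


@dataclass
class Phase:
    name: str
    activities: list[Activity] = field(default_factory=list)


@dataclass
class Process:
    name: str
    phases: list[Phase] = field(default_factory=list)

    def activity_names(self) -> list[str]:
        return [a.name for p in self.phases for a in p.activities]


# A reusable blueprint: defined once, executed with different inputs.
release_process = Process(
    name="standard-release",
    phases=[
        Phase("Build", [Activity("build-services", ActivityKind.PIPELINE)]),
        Phase("Testing", [
            Activity("integration-tests", ActivityKind.PIPELINE, ["build-services"]),
            Activity("qa-sign-off", ActivityKind.MANUAL, ["integration-tests"]),
        ]),
        Phase("Deployment", [
            Activity("deploy-production", ActivityKind.PIPELINE, ["qa-sign-off"]),
        ]),
    ],
)
print(release_process.activity_names())
```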

What's New: Capabilities Launching with Release Orchestration

Release Orchestration launches with a comprehensive set of capabilities designed for enterprise release management. Here's what you can do today.

Ad Hoc Releases: Create Releases On-the-Go

Not every release fits a schedule. Customer-specific deployments, unscheduled maintenance, and process testing need one-off releases. Ad hoc releases let you create and execute releases on demand: select a process, configure timing, provide inputs, and optionally run immediately. Test new processes in isolation, handle customer deployments without disrupting your calendar, or orchestrate emergency maintenance with full tracking and audit capabilities.

Multi-Service & Multi-Environment Support

Modern releases deploy multiple services across multiple environments. Release Orchestration's input system handles this through variable mapping: define global variables like `releaseVersion` and `targetEnvironment` once, and they flow automatically to all activities. Deploy to QA with "QA Inputs" and to production with "Production Inputs": same process, different configurations. This eliminates repetitive data entry, ensures consistency, and scales from three services to thirty without growing complexity.
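Conceptually, variable mapping is a lookup from each activity's own input names to a shared set of global variables. The sketch below assumes a simple dictionary-based mapping; the activity names (`deploy-api`, `deploy-web`), their input names, and the version value are hypothetical, and only `releaseVersion` and `targetEnvironment` come from the text above.

```python
def resolve_inputs(
    global_vars: dict[str, str],
    activity_mapping: dict[str, dict[str, str]],
) -> dict[str, dict[str, str]]:
    """Map global variables onto each activity's own input names."""
    return {
        activity: {
            activity_input: global_vars[global_name]
            for activity_input, global_name in mapping.items()
        }
        for activity, mapping in activity_mapping.items()
    }


# Defined once per process: which global variable feeds which activity input.
MAPPING = {
    "deploy-api": {"version": "releaseVersion", "env": "targetEnvironment"},
    "deploy-web": {"imageTag": "releaseVersion", "env": "targetEnvironment"},
}

# Swapped per execution: same process, different configurations.
QA_INPUTS = {"releaseVersion": "1.4.0", "targetEnvironment": "qa"}
PRODUCTION_INPUTS = {"releaseVersion": "1.4.0", "targetEnvironment": "production"}

print(resolve_inputs(QA_INPUTS, MAPPING))
print(resolve_inputs(PRODUCTION_INPUTS, MAPPING))
```

Adding a service means adding one mapping entry; the input sets themselves do not change.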

Notifications: Stay Informed at Every Step

Release Orchestration integrates with Harness's centralized notification framework, delivering alerts when releases start, pause for input, complete, or fail. Route notifications to Slack, email, PagerDuty, Microsoft Teams, or webhooks. Platform teams managing multiple releases shift from reactive monitoring to proactive awareness—get notified immediately when action is required.

Reporting: Download Detailed Execution Reports

Compliance reviews and post-mortems require detailed records. Release Orchestration provides downloadable Excel reports with complete execution history—every activity, status, timestamps, approvals, and inputs used. Generate reports for individual releases (sprint retrospectives) or release groups (quarterly audits). Activity-level detail meets compliance needs; process-level overviews serve executive summaries. All execution data is captured in the audit trail, allowing you to reconstruct exactly what happened during any release.

Filters: Find What Matters Fast

As releases scale, filters help you focus. Filter by source (ad hoc vs recurring), status (in progress, completed, failed), time window (this sprint, Q1 2026), environment (production, staging), or scope (specific orgs/projects). Platform teams filter to ad hoc releases for one-off deployments. Release managers filter by status for in-progress releases. Compliance teams filter by date range for audit periods. Transform an overwhelming calendar into a focused view of exactly what you need.

Hotfix Process: Fast-Track Emergency Releases

Production incidents don't wait for your release cadence. Release Orchestration supports hotfix workflows that fast-track emergency releases while maintaining governance. Mark releases as hotfixes to distinguish them in calendars and reports. The system detects execution conflicts—if a hotfix targets an environment where a release is running, you get visibility to coordinate decisions. Hotfixes use the same process structure, ensuring that approvals and audit trails are maintained. The hotfix designation flows through reports and logs, documenting emergency procedures for post-incident reviews. Speed meets governance.
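The conflict check described here can be thought of as a query over running releases. This is a simplified sketch, not the product's detection logic: the `Release` record, the status strings, and the example release names are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Release:
    name: str
    environment: str
    status: str           # assumed values: "in_progress", "completed", "failed"
    is_hotfix: bool = False


def find_conflicts(hotfix: Release, releases: list[Release]) -> list[Release]:
    """Return running releases that target the same environment as the hotfix."""
    return [
        r for r in releases
        if r.environment == hotfix.environment
        and r.status == "in_progress"
        and r.name != hotfix.name
    ]


calendar = [
    Release("sprint-42", "production", "in_progress"),
    Release("sprint-41", "production", "completed"),
    Release("sprint-43", "staging", "in_progress"),
]
hotfix = Release("fix-login-outage", "production", "in_progress", is_hotfix=True)
conflicts = find_conflicts(hotfix, calendar)
print([r.name for r in conflicts])
```

The sketch only surfaces the conflict; deciding whether to pause the running release or wait remains a human call, in line with the visibility-first behavior described above.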

Manual Activities: Approvals and Sign-Offs in Context

Not everything can be automated. Security reviews, architectural approvals, and stakeholder sign-offs require human judgment. Release Orchestration treats manual activities as first-class citizens with the same visibility and dependency support as automated activities. Manual activities pause execution until someone provides input—an approval, verification, or checklist confirmation. Notifications alert the responsible person; they review the context and complete the activity, optionally leaving notes. Manual activities can depend on automated activities (approval after deployment) or vice versa (deployment after approval). All completions appear in audit trails and reports for compliance documentation.
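The pause-until-approved behavior can be sketched as a dependency-ordered run that stops at any manual activity without a recorded approval. This is an illustrative model only; the function name, activity names, and the shape of the `approvals` dictionary are assumptions. It uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to order activities.

```python
from graphlib import TopologicalSorter


def run_until_blocked(
    dependencies: dict[str, set[str]],
    manual: set[str],
    approvals: dict[str, str],
) -> tuple[list[str], list[str]]:
    """Run activities in dependency order and return (completed, waiting).

    Manual activities complete only if an approver is recorded; anything
    downstream of an incomplete activity does not run.
    """
    completed: list[str] = []
    waiting: list[str] = []
    for name in TopologicalSorter(dependencies).static_order():
        if not all(dep in completed for dep in dependencies.get(name, ())):
            continue  # blocked by an incomplete upstream activity
        if name in manual and name not in approvals:
            waiting.append(name)  # execution pauses here for human input
            continue
        completed.append(name)
    return completed, waiting


# Each activity maps to the set of activities it depends on.
deps = {
    "deploy-staging": set(),
    "security-review": {"deploy-staging"},
    "deploy-production": {"security-review"},
}
print(run_until_blocked(deps, manual={"security-review"}, approvals={}))
print(run_until_blocked(deps, manual={"security-review"},
                        approvals={"security-review": "alice"}))
```

The first call stops after `deploy-staging` with `security-review` waiting; once an approval is recorded, the second call runs all three activities in order.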

Building Releases That Match How You Work

Release Orchestration provides primitives—processes, phases, activities, dependencies, inputs—that compose to match how your organization ships software. Model microservice releases with parallel deployments and end-to-end tracking. Define compliance-driven releases with approval gates at critical checkpoints. Create streamlined hotfix workflows for emergencies. Coordinate feature flag enablement with deployments. Assign phase owners for multi-team coordination with notification-driven handoffs. The system scales from simple three-phase releases to complex workflows with fifty activities and nested subprocesses.

AI-Powered Process Creation

Harness AI transforms natural language descriptions into structured processes. Describe your workflow, for example "Create a multi-service release with phases for build, testing, deployment, and monitoring. Assign owners for Development, QA, and DevOps," and AI generates the complete structure with phases, activities, and dependencies. Refine the generated process by adding activities, adjusting dependencies, and configuring inputs. This reduces process modeling time from hours to minutes, making it practical to create specialized processes for different release types.

Real-Time Visibility Across the Release Lifecycle

Release Orchestration provides real-time tracking at three levels: activity (running, succeeded, failed, waiting), phase (overall progress), and process (end-to-end status). The execution graph shows phases as nodes, dependencies as arrows, and color-coded status on each activity. Drill into pipeline executions from the release view with one click. See approval history for manual activities—who approved, when, and with what notes. This unified view eliminates the need to check multiple systems. Platform teams can see at a glance which releases are progressing smoothly, which are awaiting approval, and which need attention. [Learn more →](https://developer.harness.io/docs/release-orchestration/execution/activity-execution-flow)
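The three tracking levels imply a roll-up: activity statuses determine phase status, and phase statuses determine process status. The sketch below shows one plausible roll-up rule. The precedence order (failed, then waiting, then running) and the extra `pending` status for work that has not started are assumptions, not documented product behavior.

```python
def roll_up(statuses: list[str]) -> str:
    """Collapse child statuses into one parent status (assumed precedence)."""
    for urgent in ("failed", "waiting", "running"):
        if urgent in statuses:
            return urgent
    if statuses and all(s == "succeeded" for s in statuses):
        return "succeeded"
    if "succeeded" in statuses:
        return "running"  # some work is done and the rest has not started
    return "pending"


def process_status(phases: dict[str, list[str]]) -> tuple[dict[str, str], str]:
    """Return per-phase statuses and the overall process status."""
    phase_status = {name: roll_up(acts) for name, acts in phases.items()}
    return phase_status, roll_up(list(phase_status.values()))


phase_view, overall = process_status({
    "Build": ["succeeded"],
    "Testing": ["succeeded", "waiting"],
    "Deployment": ["pending"],
})
print(phase_view, overall)
```

Here the Testing phase is waiting on a manual activity, so the whole release reports as waiting, which is the signal a platform team would need to see at a glance.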

Get Started with Release Orchestration

Release Orchestration is available now in Harness. Contact Harness Support to enable the module for your account. Once enabled, explore Processes (model release blueprints), Release Calendar (schedule and track releases), Activity Store (reusable activities), and Input Store (configuration sets). The getting started guide walks you through creating your first AI-powered process, adding activities, and executing a release.

What's Next

We're actively developing additional capabilities: deeper analytics and insights (release velocity metrics, phase duration trends, failure pattern analysis), advanced dependency modeling (cross-release dependencies, environment-level locking), enhanced collaboration (in-line comments, Slack-native monitoring), a template marketplace for common release patterns, and API/GitOps for managing processes as code. The roadmap prioritizes capabilities that help teams ship faster with greater confidence.

Transform Your Release Process

Software delivery has evolved far beyond single-service deployments, but release management tooling hasn't kept pace. Spreadsheets, email coordination, and manual checklists don't scale to modern microservice architectures, multi-team workflows, and compliance requirements. Release Orchestration provides the unified framework enterprise teams need to model, automate, and track complex releases across teams, tools, and environments.

Define reusable processes. Execute them with different inputs. Track activity-level progress. Enforce approvals and capture sign-offs. Maintain complete audit trails. All in one place, integrated with the pipelines and deployment workflows you already use.