When AI agents operate across a multi-module platform like Harness (from CI/CD to DevSecOps to FinOps), the number one goal is to give you answers that are correct, consistent, and grounded in real data. Getting there requires a deliberate architectural choice: when a question can be answered from structured platform data, the agent should use a schema-driven Knowledge Graph rather than raw API calls via MCP.

The principle is simple: if the data is modeled, retrieval should be deterministic.

The Problem with Exposing APIs Directly

MCP (Model Context Protocol) lets LLMs call external tools, including REST and gRPC APIs, by reading tool descriptions and deciding which to invoke. It's flexible and useful, but it comes with a high hidden cost when used as the default path for analytical questions.

To understand why, consider a real question a platform engineering lead might ask:

"Show me the pipelines with the highest failure rate in the last 30 days, and for each one, show which services they deploy and whether those services have any critical security vulnerabilities."

This spans four Harness modules: Pipeline, CD, STO, and SCS. Here's what happens under each approach:

MCP (Raw API) Path

1. The agent must discover which APIs exist across 4 modules → ~2,000 tokens

2. It calls the Pipeline API to list executions → full objects returned, 50+ fields each → ~100,000–150,000 tokens

3. It calls the CD API to correlate services → ~50,000–80,000 tokens

4. It calls the STO API to find vulnerabilities → ~40,000–60,000 tokens

5. It synthesizes everything in context → ~30,000–50,000 more tokens

Total: 5+ LLM calls, ~250,000–350,000 input tokens, high latency. And along the way, the agent may call APIs in the wrong order, miss pagination, misinterpret nested fields, or hallucinate field names.
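As a quick sanity check, the per-step estimates above can be added up. This is a minimal sketch that uses only the ranges listed in the numbered steps; the dictionary keys and variable names are illustrative.

```python
# Per-step input-token estimates for the MCP path, as (low, high) ranges
# taken from the numbered steps above.
mcp_steps = {
    "discover APIs": (2_000, 2_000),
    "Pipeline API": (100_000, 150_000),
    "CD API": (50_000, 80_000),
    "STO API": (40_000, 60_000),
    "synthesis": (30_000, 50_000),
}

# Sum the low ends and the high ends separately to get a total range.
low = sum(lo for lo, _ in mcp_steps.values())
high = sum(hi for _, hi in mcp_steps.values())

print(f"MCP path: {low:,}-{high:,} input tokens")
```

The itemized steps sum to about 222,000–342,000 tokens, which is in line with the rounded total quoted above.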

Knowledge Graph Path

To query the Knowledge Graph, we built Harness Query Language (HQL), a domain-specific language for querying heterogeneous data sources in the Harness Data Platform.

1. The Type Selector receives the question and picks the right entity types from the schema catalog → ~4,000 tokens total

2. The Query Builder generates 2–3 HQL queries using exact fields, known relationships, and valid aggregations

3. The Knowledge Graph executes those queries and returns structured, aggregated results → ~2,000 tokens

4. The agent summarizes the structured output → ~3,000 tokens

Total: 2–3 LLM calls, ~12,000 input tokens, low latency. Against the MCP path's ~250,000–350,000 input tokens, that's roughly a 20–30x reduction in token cost, and the answer is deterministic, not guessed.

Why Data Shape Is Everything

The Knowledge Graph stores rich metadata for every field. Take this example:

{
  "name": "duration",
  "field_type": "FIELD_TYPE_LONG",
  "display_name": "Duration",
  "description": "Pipeline execution duration in seconds",
  "unit": "UNIT_CATEGORY_TIME",
  "aggregation_functions": ["SUM", "AVERAGE", "MIN", "MAX", "PERCENTILE"],
  "searchable": true,
  "sortable": true,
  "groupable": false
}

This single definition tells the AI agent everything it needs:

- It's a number, not a string, so duration = 'fast' is invalid

- It's time-based, so display it in seconds or minutes, not as a raw integer

- You can aggregate it (SUM, P95), but you can't GROUP BY it because it's continuous

- You can filter and sort by it

Without this metadata, the LLM has to guess. And guessing is where hallucinations happen.
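To make this concrete, here is a minimal sketch of how field metadata could be used to reject an invalid usage before a query ever runs. The `DURATION` dict mirrors the field definition above; the `validate_use` helper, its parameters, and its error messages are hypothetical and not part of any Harness API.

```python
from __future__ import annotations

from typing import Any

# The field definition from the example above, as a Python dict.
DURATION = {
    "name": "duration",
    "field_type": "FIELD_TYPE_LONG",
    "display_name": "Duration",
    "description": "Pipeline execution duration in seconds",
    "unit": "UNIT_CATEGORY_TIME",
    "aggregation_functions": ["SUM", "AVERAGE", "MIN", "MAX", "PERCENTILE"],
    "searchable": True,
    "sortable": True,
    "groupable": False,
}


def validate_use(
    field: dict[str, Any],
    *,
    filter_value: Any = None,
    aggregation: str | None = None,
    group_by: bool = False,
    sort: bool = False,
) -> list[str]:
    """Return a list of problems; an empty list means the usage is valid."""
    errors: list[str] = []
    name = field["name"]
    if filter_value is not None:
        if not field["searchable"]:
            errors.append(f"{name} cannot be used in a filter")
        # Assumption: FIELD_TYPE_LONG accepts only integers (bools excluded).
        elif field["field_type"] == "FIELD_TYPE_LONG" and (
            isinstance(filter_value, bool) or not isinstance(filter_value, int)
        ):
            errors.append(f"{name} expects an integer, got {filter_value!r}")
    if aggregation is not None and aggregation not in field["aggregation_functions"]:
        errors.append(f"{name} does not support {aggregation}")
    if group_by and not field["groupable"]:
        errors.append(f"{name} is not groupable")
    if sort and not field["sortable"]:
        errors.append(f"{name} is not sortable")
    return errors


print(validate_use(DURATION, filter_value="fast"))
print(validate_use(DURATION, aggregation="PERCENTILE", sort=True))
print(validate_use(DURATION, group_by=True))
```

The first and third calls report a problem (a string filter on a numeric field, and grouping by a non-groupable field), while the second returns an empty list.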

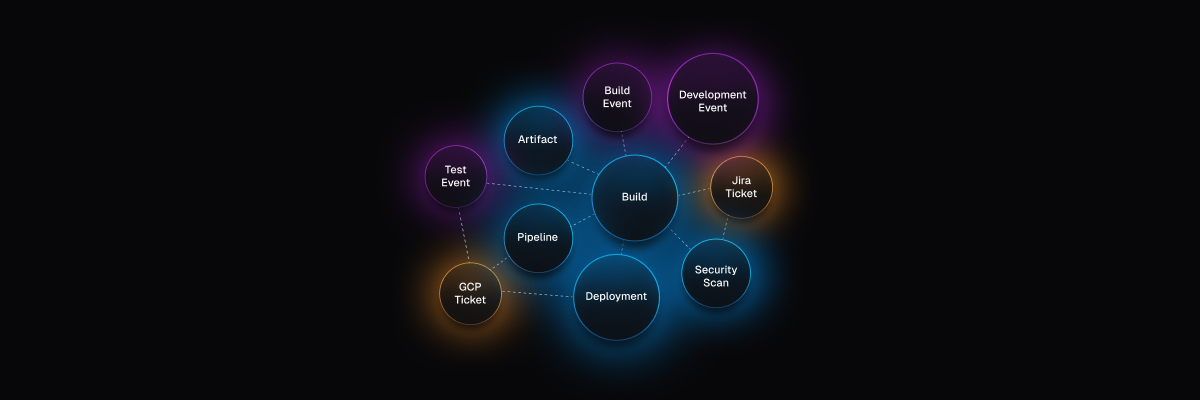

Relationships Don't Need to Be Inferred

Cross-module relationships are explicitly declared in the Knowledge Graph, including which entities connect, which fields to join on, cardinality (one-to-many, many-to-many), and human-readable traversal names. With MCP, the agent has to infer these connections from API documentation and field naming conventions, hoping that pipeline_id in the CD response matches execution_id in the Pipeline response. With the Knowledge Graph, the join is declared and reliable.
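A declared relationship can be pictured as a small record that names both ends of the join. The sketch below is an assumption about shape only: the `Relationship` class, the entity type names, and the traversal names are hypothetical, and only the `execution_id`/`pipeline_id` pairing comes from the example above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Relationship:
    name: str          # human-readable traversal name
    source_type: str   # entity type the traversal starts from
    source_field: str  # join field on the source entity
    target_type: str   # entity type the traversal ends at
    target_field: str  # join field on the target entity
    cardinality: str   # e.g. "one-to-many", "many-to-many"


# Hypothetical declarations; real entity and field names may differ.
RELATIONSHIPS = [
    Relationship("deploys", "pipeline_execution", "execution_id",
                 "service", "pipeline_id", "one-to-many"),
    Relationship("has_vulnerabilities", "service", "service_id",
                 "vulnerability", "service_id", "one-to-many"),
]


def join_keys(source_type: str, target_type: str) -> tuple[str, str]:
    """Look up the declared join fields instead of guessing from names."""
    for rel in RELATIONSHIPS:
        if rel.source_type == source_type and rel.target_type == target_type:
            return rel.source_field, rel.target_field
    raise LookupError(
        f"no declared relationship from {source_type} to {target_type}"
    )


print(join_keys("pipeline_execution", "service"))
```

An undeclared pairing raises a `LookupError` rather than silently joining on a field that merely looks similar.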

Semantic Annotations Enable Fast Routing

Type annotations act as a routing index over the Knowledge Graph:

- Cost/billing questions → types tagged ccm, cost, billing

- Pipeline/CI-CD questions → types tagged pipeline_orchestration

- Security questions → types tagged sto, security

- Compliance questions → types tagged ssca, sbom

This means the agent can select the right 1–3 types out of 80+ without scanning the full API surface of every module. The selection step runs at a temperature of 0.1 with strict JSON output, making it nearly deterministic.
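The effect of the tags can be illustrated with a plain lookup. In the article's design the selection itself is an LLM step; this sketch only shows how tags narrow the candidate set first. The catalog entries, type names, and the `candidate_types` helper are hypothetical; only the tag values come from the list above.

```python
from __future__ import annotations

# Hypothetical slice of a schema catalog: entity type -> semantic tags.
CATALOG = {
    "cloud_cost": {"ccm", "cost", "billing"},
    "pipeline_execution": {"pipeline_orchestration"},
    "security_issue": {"sto", "security"},
    "sbom_component": {"ssca", "sbom"},
}

# Question category -> tags, following the routing list above.
CATEGORY_TAGS = {
    "cost": {"ccm", "cost", "billing"},
    "pipeline": {"pipeline_orchestration"},
    "security": {"sto", "security"},
    "compliance": {"ssca", "sbom"},
}


def candidate_types(categories: list[str], max_types: int = 3) -> list[str]:
    """Return up to max_types entity types whose tags match the categories."""
    wanted: set[str] = set()
    for category in categories:
        wanted |= CATEGORY_TAGS[category]
    # Score each type by how many wanted tags it carries; skip non-matches.
    scored = [
        (len(tags & wanted), name)
        for name, tags in CATALOG.items()
        if tags & wanted
    ]
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [name for _, name in scored[:max_types]]


print(candidate_types(["pipeline", "security"]))
```

Because the lookup touches only tags, its cost depends on the size of the catalog, not on the API surface of each module.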

HQL Is Safer Than Generating API Calls

When an LLM generates an invalid field in HQL, the query fails immediately with a clear, retryable error instead of returning a silently wrong answer.
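The fail-fast behavior pairs naturally with a retry loop that feeds the error back to the generator. The following is a sketch under stated assumptions: it validates a list of field names rather than real HQL (whose syntax is not shown here), and `SCHEMA`, `InvalidFieldError`, `validate_fields`, and `build_with_retry` are all hypothetical names.

```python
from __future__ import annotations

from typing import Callable, Optional


class InvalidFieldError(ValueError):
    """Raised when a generated query references a field the schema lacks."""


# Hypothetical schema slice: entity type -> known field names.
SCHEMA = {"pipeline_execution": {"duration", "status", "pipeline_id"}}


def validate_fields(entity_type: str, fields: list[str]) -> None:
    """Fail loudly, naming the bad fields and listing the valid ones."""
    known = SCHEMA[entity_type]
    unknown = sorted(set(fields) - known)
    if unknown:
        raise InvalidFieldError(
            f"unknown field(s) on {entity_type}: {', '.join(unknown)}; "
            f"valid fields: {', '.join(sorted(known))}"
        )


def build_with_retry(
    generate: Callable[[Optional[str]], list[str]],
    entity_type: str,
    max_attempts: int = 3,
) -> list[str]:
    """Call the generator, passing the last error back in as feedback."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        fields = generate(feedback)
        try:
            validate_fields(entity_type, fields)
            return fields
        except InvalidFieldError as err:
            feedback = str(err)
    raise RuntimeError(f"no valid query after {max_attempts} attempts: {feedback}")


# Stub standing in for the LLM: the first attempt invents a field name.
attempts = iter([["duration_ms"], ["duration", "status"]])
fields = build_with_retry(lambda feedback: next(attempts), "pipeline_execution")
print(fields)
```

The key design choice is that the error message lists the valid fields, so the second attempt has what it needs to correct itself.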

A Four-Tier Model for Data Ownership

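Not all data can be fully modeled, and MCP still has a role. The right framework is a four-tier data ownership model that determines how each type of data should be accessed: the Knowledge Graph (Tier 1), schema-guided bridges (Tier 2), Harness-managed integrations (Tier 3), and external MCP (Tier 4).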

The practical guidance:

- Default to the Knowledge Graph (Tier 1) for all read, query, and analyze operations

- Use schema-guided bridges (Tier 2) for logs and traces: query the modeled event envelope via HQL, then scope content retrieval using the resulting keys

- Curate Harness-managed integrations (Tier 3) for key external systems: reshape data via API when needed, and use MCP passthrough when it's sufficient

- Enable external MCP (Tier 4) as an open extension point, but never optimize for it

- Continuously move data up the tiers: every domain modeled into Tier 1 permanently reduces cost and increases reliability

- Measure determinism, not just capability: a feature that works 95% of the time is worth more than one that "can do anything" but works only 70% of the time
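One way to read the guidance above is as a routing decision that prefers the lowest-numbered tier that applies. This is an interpretive sketch, not Harness's implementation: the `Tier` enum, the `choose_tier` function, and its three boolean parameters are hypothetical.

```python
from enum import IntEnum


class Tier(IntEnum):
    KNOWLEDGE_GRAPH = 1       # fully modeled data, queried via HQL
    SCHEMA_GUIDED_BRIDGE = 2  # modeled envelope, unmodeled content (logs, traces)
    MANAGED_INTEGRATION = 3   # Harness-managed integration with an external system
    EXTERNAL_MCP = 4          # open extension point


def choose_tier(
    *,
    modeled: bool,
    modeled_envelope: bool = False,
    managed_integration: bool = False,
) -> Tier:
    """Pick the lowest-numbered tier that can serve the data."""
    if modeled:
        return Tier.KNOWLEDGE_GRAPH
    if modeled_envelope:
        return Tier.SCHEMA_GUIDED_BRIDGE
    if managed_integration:
        return Tier.MANAGED_INTEGRATION
    return Tier.EXTERNAL_MCP


print(choose_tier(modeled=True).name)
print(choose_tier(modeled=False, modeled_envelope=True).name)
print(choose_tier(modeled=False).name)
```

Moving a domain "up the tiers" then amounts to flipping its `modeled` flag, after which every question about it takes the Tier 1 path.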

The Bottom Line

The Harness Knowledge Graph and semantic layer aren't just another abstraction; they're the foundation that makes AI orchestration viable across a multi-module platform. By modeling entity types, relationships, field metadata, and aggregation rules upfront, we give AI agents the constraints they need to be deterministic and the structure they need to be efficient.

MCP is a tool for getting things done. The Knowledge Graph is the knowledge needed to understand things. Agents need both, but they need the understanding part first.